Introduction

RAG evaluation and benchmarking tools help teams measure whether a retrieval-augmented generation system is accurate, grounded, safe, and reliable. In simple terms, these tools test whether your AI system retrieves the right context, uses that context correctly, avoids hallucinations, and responds consistently across real user queries.

This category matters because RAG systems often fail silently. A chatbot may sound confident while using weak retrieval, stale data, poor chunks, or unsupported claims. Evaluation tools help AI teams detect those issues before users are affected. They also support regression testing, model comparison, prompt testing, retrieval scoring, observability, and production monitoring.

Common use cases include:

- Testing enterprise AI assistants before rollout

- Measuring hallucination and faithfulness risk

- Comparing retrieval strategies and chunking methods

- Benchmarking models, prompts, and rerankers

- Monitoring production RAG quality over time

- Building evaluation gates into AI development workflows

What buyers should evaluate:

- RAG-specific metrics such as faithfulness and context relevance

- Retrieval evaluation and answer evaluation support

- Synthetic test data generation

- Human review workflows

- CI/CD integration for regression testing

- Observability and tracing

- Support for multiple models and frameworks

- Guardrails and safety testing

- Cost and latency tracking

- Dataset management and versioning

- Security, access control, and auditability

- Ease of adoption for engineering and product teams

Best for: AI engineers, ML teams, product teams, CTOs, and enterprises building production RAG systems.

Not ideal for: Teams only experimenting casually with AI or using simple chatbots where formal evaluation is not yet required.

What’s Changed in RAG Evaluation & Benchmarking Tools

- RAG evaluation has moved from manual review to repeatable automated testing across retrieval, generation, and user experience.

- Teams now evaluate both retrieval quality and answer faithfulness, instead of only checking final responses.

- Agentic AI has made evaluation more complex because tools must inspect multi-step reasoning, tool calls, and intermediate decisions.

- Multimodal workflows are increasing demand for evaluation across text, tables, PDFs, images, and document layouts.

- More tools now support LLM-as-a-judge workflows, where one model scores another model’s output using defined criteria.

- Evaluation is becoming part of CI/CD, allowing teams to block bad prompt, model, or retrieval changes before deployment.

- Production monitoring is now essential because RAG quality can degrade when documents, indexes, or user behavior change.

- Guardrail testing is expanding to include prompt injection, unsafe retrieval, sensitive data exposure, and unsupported answers.

- Enterprises are focusing more on auditability, dataset versioning, and explainable evaluation results.

- Cost and latency are becoming evaluation metrics because better answers are not useful if they are too slow or too expensive.

- Human feedback loops are being combined with automated metrics for more balanced quality checks.

- Benchmarking is shifting from generic public tests to private, domain-specific test sets using real business data.

Quick Buyer Checklist

- Check whether the tool supports RAG-specific metrics like faithfulness, context precision, context recall, and answer relevance.

- Confirm it can evaluate both retrieval quality and final answer quality separately.

- Look for support for synthetic test data and custom evaluation datasets.

- Make sure it integrates with your RAG framework, vector database, and LLM provider.

- Review whether it supports hosted, open-source, BYO model, or multi-model evaluation workflows.

- Check for regression testing so prompt, model, or index changes do not reduce quality.

- Prioritize observability features such as traces, latency, token usage, and cost tracking.

- Look for guardrail testing around prompt injection, hallucination, and sensitive data exposure.

- Confirm admin controls, audit logs, and access permissions if used in enterprise environments.

- Avoid vendor lock-in by choosing tools with APIs, export options, and framework flexibility.

- Check whether human review workflows are available for subjective or high-risk outputs.

- Evaluate how easy it is for both engineers and non-technical reviewers to understand results.

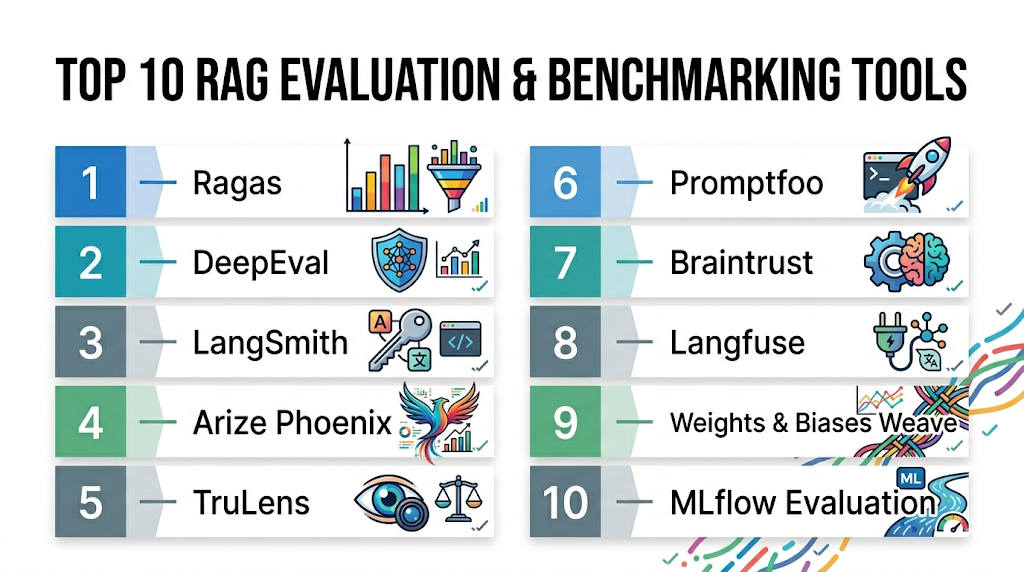

Top 10 RAG Evaluation & Benchmarking Tools

1 — Ragas

One-line verdict: Best for teams needing open-source, RAG-specific evaluation metrics for retrieval and generation quality.

Short description:

Ragas is an open-source framework focused on evaluating RAG pipelines. It helps teams measure faithfulness, answer relevance, context precision, and context recall using practical evaluation workflows.

Standout Capabilities

- Purpose-built metrics for RAG evaluation

- Measures both retrieval and response quality

- Supports reference-free evaluation workflows

- Useful for testing chunking and retrieval changes

- Can be integrated into experimentation pipelines

- Strong fit for developer-led AI teams

- Works well with broader LLM evaluation stacks

AI-Specific Depth

- Model support: BYO model / multi-model depending on configuration

- RAG / knowledge integration: Strong support for RAG pipeline evaluation

- Evaluation: Faithfulness, answer relevance, context precision, context recall, and custom workflows

- Guardrails: Limited native guardrail coverage; usually paired with other tools

- Observability: Basic; often combined with tracing platforms

Pros

- Strong RAG-specific metric coverage

- Open-source and developer-friendly

- Good for comparing retrieval and chunking strategies

Cons

- Requires technical setup and metric interpretation

- Limited native production monitoring

- Guardrail and security testing may need additional tools

Security & Compliance

Not publicly stated. Enterprise security depends on deployment environment, hosting model, and surrounding infrastructure.

Deployment & Platforms

- Python-based framework

- Self-hosted / local development

- Can be integrated into cloud workflows

Integrations & Ecosystem

Ragas is commonly used inside broader RAG and LLMOps workflows where teams need measurable quality checks.

- Python workflows

- RAG frameworks

- LLM providers through configuration

- Evaluation datasets

- Observability tools

- CI/CD pipelines through custom integration

Pricing Model

Open-source. Enterprise or managed options may vary depending on ecosystem partners.

Best-Fit Scenarios

- Evaluating retrieval quality in RAG pipelines

- Comparing prompt, chunking, and reranking changes

- Building open-source evaluation workflows

2 — DeepEval

One-line verdict: Best for developers who want unit-test-style evaluation for LLM and RAG applications.

Short description:

DeepEval is an open-source evaluation framework designed to test LLM applications with metrics, test cases, and CI/CD-friendly workflows. It is especially useful for teams treating AI quality like software testing.

Standout Capabilities

- Unit-testing style structure for AI applications

- RAG-focused metrics for retrieval and generation

- Supports custom evaluation metrics

- Useful for CI/CD regression testing

- Strong developer workflow alignment

- Supports synthetic test data workflows

- Practical for repeatable quality gates

AI-Specific Depth

- Model support: BYO model / multi-model depending on setup

- RAG / knowledge integration: Supports RAG evaluation through contextual metrics

- Evaluation: Contextual precision, recall, relevancy, faithfulness, answer quality, and custom tests

- Guardrails: Some safety evaluation possible through custom metrics

- Observability: Limited native observability; often paired with tracing tools

Pros

- Easy fit for engineering teams using test-driven workflows

- Good support for automated regression testing

- Flexible custom metric creation

Cons

- Requires engineering knowledge to configure well

- Production monitoring is not the primary focus

- Non-technical reviewers may need simplified dashboards

Security & Compliance

Not publicly stated. Security depends on implementation, model provider, and deployment setup.

Deployment & Platforms

- Python-based

- Local / CI/CD / cloud workflow integration

- Self-managed evaluation pipelines

Integrations & Ecosystem

DeepEval works well when teams want to add evaluation checks directly into development workflows.

- Python testing workflows

- CI/CD pipelines

- LLM applications

- RAG pipelines

- Custom metrics

- Synthetic datasets

Pricing Model

Open-source with possible commercial ecosystem options depending on usage.

Best-Fit Scenarios

- Adding AI evaluation to CI/CD

- Regression testing RAG pipelines

- Creating custom LLM quality tests

3 — LangSmith

One-line verdict: Best for LangChain teams needing tracing, datasets, evaluation, and debugging in one workflow.

Short description:

LangSmith helps teams debug, evaluate, and monitor LLM applications. It is especially useful for LangChain-based RAG systems where tracing and dataset-driven evaluation are important.

Standout Capabilities

- Detailed tracing for LLM application flows

- Dataset management for evaluation

- Useful debugging for chains, agents, and RAG pipelines

- Supports human feedback workflows

- Helps compare prompt and model changes

- Strong fit for LangChain users

- Production monitoring support

AI-Specific Depth

- Model support: Multi-model depending on application setup

- RAG / knowledge integration: Strong for LangChain-based RAG workflows

- Evaluation: Dataset-based evaluation, custom evaluators, human review

- Guardrails: Limited native guardrails; can integrate with external checks

- Observability: Strong tracing, latency, token, and run-level visibility

Pros

- Excellent tracing and debugging experience

- Strong fit for teams already using LangChain

- Helpful for production monitoring and evaluation loops

Cons

- Best value is within the LangChain ecosystem

- May feel less flexible for non-LangChain stacks

- Pricing and enterprise details vary

Security & Compliance

Security features may vary by plan and deployment model. Certifications and exact compliance details are not publicly stated here.

Deployment & Platforms

- Web platform

- Cloud-based workflows

- Integrates with application code and LangChain stack

Integrations & Ecosystem

LangSmith works closely with LLM application development workflows and is especially strong for tracing complex pipelines.

- LangChain

- LLM providers

- Evaluation datasets

- Human feedback workflows

- Prompt and chain debugging

- Monitoring workflows

Pricing Model

Tiered / usage-based details vary. Exact pricing should be verified directly.

Best-Fit Scenarios

- LangChain-based RAG applications

- Debugging complex agent and retrieval flows

- Tracking evaluation results across experiments

4 — Arize Phoenix

One-line verdict: Best for open-source observability and evaluation across RAG traces, embeddings, and production issues.

Short description:

Arize Phoenix is an open-source tool for LLM observability, tracing, and evaluation. It helps teams inspect RAG workflows, identify poor retrieval behavior, and analyze model performance.

Standout Capabilities

- Open-source LLM observability

- Trace inspection for RAG and agent workflows

- Embedding visualization and clustering

- Helps detect retrieval and response quality issues

- Useful for debugging production failures

- Supports evaluation workflows

- Strong visualization experience

AI-Specific Depth

- Model support: Multi-model depending on instrumentation

- RAG / knowledge integration: Strong for tracing retrieval and generation flows

- Evaluation: Supports evaluation and analysis workflows

- Guardrails: Limited native guardrail support

- Observability: Strong tracing, embeddings, latency, and debugging visibility

Pros

- Strong open-source observability

- Useful for debugging retrieval failures

- Good visualization for embeddings and traces

Cons

- Requires instrumentation and setup

- Evaluation may need pairing with metric-focused tools

- Enterprise governance details vary

Security & Compliance

Not publicly stated. Security depends on deployment model and enterprise configuration.

Deployment & Platforms

- Open-source / self-hosted options

- Cloud options may vary

- Web-based analysis interface

Integrations & Ecosystem

Phoenix fits well into modern AI observability stacks where teams need to inspect failures deeply.

- OpenTelemetry-style tracing workflows

- LLM applications

- RAG pipelines

- Evaluation datasets

- Embedding analysis

- Monitoring systems

Pricing Model

Open-source with commercial options depending on vendor offering.

Best-Fit Scenarios

- Debugging RAG retrieval failures

- Visualizing embedding quality

- Monitoring production LLM applications

5 — TruLens

One-line verdict: Best for explainable feedback functions and transparent evaluation of RAG and agent workflows.

Short description:

TruLens helps teams evaluate and trace LLM applications using feedback functions. It is useful for measuring groundedness, relevance, and quality across RAG pipelines.

Standout Capabilities

- Feedback-function-based evaluation

- Groundedness and relevance scoring

- Trace-level inspection of application behavior

- Useful for comparing application versions

- Supports RAG and agent evaluation workflows

- Transparent evaluation logic

- Good fit for research and engineering teams

AI-Specific Depth

- Model support: Multi-model depending on setup

- RAG / knowledge integration: Strong for RAG evaluation and tracing

- Evaluation: Feedback functions for groundedness, relevance, coherence, and custom metrics

- Guardrails: Limited native guardrails

- Observability: Strong tracing and leaderboard-style comparison workflows

Pros

- Transparent and explainable evaluation approach

- Useful for comparing app versions

- Strong fit for RAG quality diagnostics

Cons

- Requires technical understanding

- May need additional production monitoring tools

- Setup can be more involved than simple SaaS platforms

Security & Compliance

Not publicly stated. Security depends on deployment model and integration choices.

Deployment & Platforms

- Python-based

- Self-hosted / local workflows

- Can be used in cloud development environments

Integrations & Ecosystem

TruLens is useful in evaluation stacks where teams want interpretable quality signals.

- LLM applications

- RAG pipelines

- Feedback functions

- Experiment tracking

- Trace analysis

- Custom evaluation workflows

Pricing Model

Open-source / commercial ecosystem may vary.

Best-Fit Scenarios

- Evaluating groundedness and context relevance

- Comparing RAG application versions

- Building transparent quality scoring workflows

6 — Promptfoo

One-line verdict: Best for teams wanting prompt, model, RAG, and security tests in CI-friendly workflows.

Short description:

Promptfoo is an evaluation and testing tool for AI applications. It helps teams compare prompts, models, providers, and RAG behavior through repeatable tests.

Standout Capabilities

- Prompt and model comparison

- Test-driven AI development workflows

- Useful for CI/CD evaluation gates

- Supports red-team style testing workflows

- Can test RAG outputs using custom assertions

- Helps compare providers and configurations

- Lightweight and developer-friendly

AI-Specific Depth

- Model support: Multi-model / BYO depending on configuration

- RAG / knowledge integration: Supports RAG testing through custom test cases

- Evaluation: Prompt tests, regression tests, assertions, model comparison

- Guardrails: Stronger fit for security and adversarial testing than many basic tools

- Observability: Basic evaluation reporting; deeper observability may require external tools

Pros

- Practical for CI/CD and regression testing

- Good for comparing prompts and models

- Useful for security-oriented testing

Cons

- Less focused on deep RAG metric analysis

- Requires custom test design

- Production monitoring is not the main focus

Security & Compliance

Not publicly stated. Security depends on how tests, data, and providers are configured.

Deployment & Platforms

- CLI and developer workflow support

- Local / CI/CD / cloud pipelines

- Self-managed testing workflows

Integrations & Ecosystem

Promptfoo works well where teams want evaluation close to the development process.

- Model providers

- Prompt testing workflows

- CI/CD tools

- Custom assertions

- Red-team tests

- RAG test cases

Pricing Model

Open-source with possible hosted or commercial options depending on usage.

Best-Fit Scenarios

- Prompt regression testing

- RAG output validation

- AI security and red-team checks

7 — Braintrust

One-line verdict: Best for teams needing experiment tracking, datasets, human review, and evaluation workflows together.

Short description:

Braintrust is an AI evaluation and observability platform that helps teams run experiments, track datasets, evaluate outputs, and collect feedback for LLM applications.

Standout Capabilities

- Dataset and experiment management

- Evaluation workflows for LLM applications

- Human review support

- Prompt and model comparison

- Production feedback loops

- Useful dashboards for teams

- Supports collaboration across product and engineering

AI-Specific Depth

- Model support: Multi-model depending on configuration

- RAG / knowledge integration: Supports RAG evaluation workflows

- Evaluation: Custom evaluators, datasets, human review, regression checks

- Guardrails: Varies / N/A

- Observability: Strong for experiments, traces, and feedback loops

Pros

- Good collaboration features

- Strong dataset and experiment tracking

- Useful for human-in-the-loop evaluation

Cons

- May be more platform-heavy than simple open-source tools

- Requires structured datasets for best results

- Exact enterprise features may vary

Security & Compliance

Not publicly stated. Security and enterprise controls may vary by plan.

Deployment & Platforms

- Web platform

- Cloud-based workflows

- Integrates with AI application pipelines

Integrations & Ecosystem

Braintrust fits teams that want evaluation workflows shared across engineering, product, and QA.

- LLM providers

- Evaluation datasets

- Human review workflows

- Prompt experiments

- Production feedback

- Application traces

Pricing Model

Tiered / platform-based. Exact pricing not stated here.

Best-Fit Scenarios

- Collaborative AI evaluation

- Human feedback workflows

- Experiment tracking for RAG systems

8 — Langfuse

One-line verdict: Best for open-source LLM observability with traces, evaluations, prompts, and production monitoring.

Short description:

Langfuse is an open-source LLM engineering platform focused on observability, tracing, prompt management, and evaluation workflows. It is useful for teams monitoring RAG and agent applications.

Standout Capabilities

- Open-source observability platform

- Trace-level monitoring for LLM applications

- Prompt management workflows

- Evaluation and scoring support

- Useful for production debugging

- Tracks latency, token usage, and cost

- Supports team collaboration

AI-Specific Depth

- Model support: Multi-model depending on integration

- RAG / knowledge integration: Supports RAG observability and evaluation workflows

- Evaluation: Scoring, datasets, custom evaluation workflows

- Guardrails: Varies / N/A

- Observability: Strong traces, latency, token usage, cost, and prompt tracking

Pros

- Strong open-source observability foundation

- Useful for cost and latency tracking

- Works across different LLM stacks

Cons

- Requires setup and instrumentation

- RAG-specific metrics may need custom configuration

- Enterprise support details may vary

Security & Compliance

Not publicly stated. Enterprise security depends on deployment and configuration.

Deployment & Platforms

- Cloud / self-hosted

- Web interface

- Integrates with application code

Integrations & Ecosystem

Langfuse is suitable for teams needing broad LLM observability and evaluation across production apps.

- LLM frameworks

- Model providers

- Prompt management

- Evaluation datasets

- Tracing workflows

- Monitoring dashboards

Pricing Model

Open-source with cloud or paid options depending on usage.

Best-Fit Scenarios

- Production LLM observability

- Tracking RAG latency and cost

- Self-hosted evaluation and monitoring workflows

9 — Weights & Biases Weave

One-line verdict: Best for ML teams already using experiment tracking and wanting LLM evaluation workflows.

Short description:

Weights & Biases Weave helps teams trace, evaluate, and improve LLM applications. It is especially useful for teams already using ML experiment tracking and model development workflows.

Standout Capabilities

- Experiment tracking for AI applications

- Trace and evaluation workflows

- Dataset and model comparison support

- Helpful for ML team collaboration

- Supports debugging LLM app behavior

- Useful for iterative model and prompt improvement

- Works well in broader ML lifecycle environments

AI-Specific Depth

- Model support: Multi-model depending on setup

- RAG / knowledge integration: Supports LLM and RAG application evaluation workflows

- Evaluation: Custom evaluations, experiment comparison, human review workflows may vary

- Guardrails: Varies / N/A

- Observability: Strong tracking and experiment visibility

Pros

- Strong fit for existing ML teams

- Good experiment tracking and collaboration

- Useful for comparing model and prompt changes

Cons

- May feel heavy for small teams

- Best value if already using the ecosystem

- RAG-specific setup may require customization

Security & Compliance

Not publicly stated. Enterprise controls may vary by plan and deployment.

Deployment & Platforms

- Web platform

- Cloud-based workflows

- Integrates with model and application development stacks

Integrations & Ecosystem

Weave is useful when evaluation is part of a larger ML and AI engineering process.

- ML experiment tracking

- LLM application traces

- Datasets

- Prompt experiments

- Model comparison

- Team collaboration workflows

Pricing Model

Tiered / platform-based. Exact pricing varies.

Best-Fit Scenarios

- ML teams expanding into LLM evaluation

- Experiment-heavy RAG development

- Comparing models, prompts, and datasets

10 — MLflow Evaluation

One-line verdict: Best for teams wanting LLM and RAG evaluation inside broader MLOps workflows.

Short description:

MLflow provides experiment tracking, model management, and evaluation workflows that can support LLM and RAG application testing. It is useful for teams standardizing AI evaluation within an existing MLOps stack.

Standout Capabilities

- Strong experiment tracking foundation

- Supports model and application evaluation workflows

- Useful for reproducibility and versioning

- Can integrate with multiple evaluation approaches

- Good fit for MLOps-driven teams

- Supports comparison across experiments

- Helps standardize evaluation processes

AI-Specific Depth

- Model support: Multi-model / BYO depending on configuration

- RAG / knowledge integration: Supports RAG evaluation through custom workflows and integrations

- Evaluation: Model, prompt, and custom evaluation workflows

- Guardrails: Varies / N/A

- Observability: Strong experiment tracking; production traces may require additional tools

Pros

- Familiar to many ML engineering teams

- Strong reproducibility and tracking

- Flexible enough for custom evaluation workflows

Cons

- RAG-specific features may require setup

- Less plug-and-play than dedicated RAG eval tools

- May need additional observability tooling

Security & Compliance

Not publicly stated. Enterprise security depends on deployment and surrounding platform configuration.

Deployment & Platforms

- Open-source / self-hosted workflows

- Cloud-managed options may vary

- Works across ML and AI engineering environments

Integrations & Ecosystem

MLflow is valuable when RAG evaluation needs to align with existing model lifecycle practices.

- Experiment tracking

- Model registry workflows

- Custom evaluators

- LLM applications

- MLOps pipelines

- CI/CD and reproducibility workflows

Pricing Model

Open-source with managed options depending on platform provider.

Best-Fit Scenarios

- MLOps-driven AI teams

- Evaluation standardization across models and RAG apps

- Reproducible benchmarking workflows

Comparison Table

| Tool Name | Best For | Deployment | Model Flexibility | Strength | Watch-Out | Public Rating |

|---|---|---|---|---|---|---|

| Ragas | RAG-specific metrics | Self-hosted / Hybrid | BYO / Multi-model | Strong RAG metrics | Needs setup | N/A |

| DeepEval | Test-driven AI evaluation | Self-hosted / Hybrid | BYO / Multi-model | CI/CD-friendly tests | Engineering effort | N/A |

| LangSmith | LangChain app evaluation | Cloud | Multi-model | Tracing and debugging | Best in LangChain ecosystem | N/A |

| Arize Phoenix | Open-source observability | Self-hosted / Hybrid | Multi-model | Trace and embedding analysis | Requires instrumentation | N/A |

| TruLens | Explainable feedback functions | Self-hosted / Hybrid | Multi-model | Transparent evaluation | Technical setup | N/A |

| Promptfoo | Prompt and security testing | Self-hosted / Hybrid | BYO / Multi-model | Regression and red-team tests | Less RAG-metric depth | N/A |

| Braintrust | Collaborative evaluation workflows | Cloud | Multi-model | Datasets and human review | Platform adoption effort | N/A |

| Langfuse | LLM observability and eval | Cloud / Self-hosted | Multi-model | Cost and trace visibility | Requires instrumentation | N/A |

| W&B Weave | ML team evaluation workflows | Cloud | Multi-model | Experiment tracking | Best for ML teams | N/A |

| MLflow Evaluation | MLOps-based benchmarking | Self-hosted / Hybrid | BYO / Multi-model | Reproducibility | Needs customization | N/A |

Scoring & Evaluation

This scoring is comparative, not absolute. It reflects how each tool fits practical RAG evaluation and benchmarking needs across engineering, product, and enterprise environments. A higher score does not mean the tool is universally better; it means it performs strongly across the weighted criteria used here. Some tools are stronger for offline metrics, while others are better for tracing, human review, or production monitoring. Teams should use this table as a shortlist guide, then validate results using their own data, queries, documents, and risk requirements.

| Tool | Core | Reliability/Eval | Guardrails | Integrations | Ease | Perf/Cost | Security/Admin | Support | Weighted Total |

|---|---|---|---|---|---|---|---|---|---|

| Ragas | 9 | 9 | 6 | 8 | 7 | 8 | 6 | 7 | 7.9 |

| DeepEval | 9 | 9 | 7 | 8 | 8 | 8 | 6 | 7 | 8.1 |

| LangSmith | 8 | 8 | 6 | 9 | 8 | 8 | 8 | 8 | 8.0 |

| Arize Phoenix | 8 | 8 | 6 | 8 | 7 | 8 | 7 | 7 | 7.6 |

| TruLens | 8 | 8 | 6 | 7 | 6 | 8 | 6 | 7 | 7.2 |

| Promptfoo | 8 | 8 | 8 | 8 | 8 | 8 | 6 | 7 | 7.9 |

| Braintrust | 8 | 8 | 6 | 8 | 8 | 8 | 8 | 8 | 7.9 |

| Langfuse | 8 | 7 | 6 | 8 | 8 | 9 | 7 | 7 | 7.7 |

| W&B Weave | 8 | 8 | 6 | 8 | 7 | 8 | 8 | 8 | 7.7 |

| MLflow Evaluation | 8 | 8 | 6 | 8 | 7 | 8 | 8 | 8 | 7.7 |

Top 3 for Enterprise: LangSmith, Braintrust, W&B Weave

Top 3 for SMB: DeepEval, Ragas, Langfuse

Top 3 for Developers: DeepEval, Ragas, Promptfoo

Which RAG Evaluation & Benchmarking Tool Is Right for You?

Solo / Freelancer

If you are building small RAG prototypes, start with Ragas or DeepEval. They are flexible, developer-friendly, and strong enough to test retrieval quality, answer relevance, and basic faithfulness without requiring a heavy platform setup.

SMB

Small and mid-sized teams should focus on tools that are easy to adopt and can grow with the product. DeepEval, Langfuse, and Ragas are good options because they support repeatable testing, observability, and practical quality checks without forcing a large enterprise workflow.

Mid-Market

Mid-market teams usually need stronger collaboration, monitoring, and regression testing. LangSmith, Braintrust, and Arize Phoenix can help teams track experiments, debug production issues, and improve evaluation consistency across multiple AI applications.

Enterprise

Enterprises should prioritize auditability, role-based workflows, production monitoring, and human review. LangSmith, Braintrust, W&B Weave, and MLflow Evaluation are strong fits when evaluation must align with broader governance, engineering, and model lifecycle processes.

Regulated industries (finance/healthcare/public sector)

Regulated teams should avoid relying only on automated scores. They should combine evaluation metrics, human review, audit trails, red-team testing, and access-controlled datasets. Tools with strong workflow visibility and enterprise controls are better suited for sensitive environments.

Budget vs premium

Open-source tools like Ragas, DeepEval, TruLens, Promptfoo, Langfuse, and MLflow can reduce cost but may require more engineering ownership. Premium platforms may be better when teams need dashboards, collaboration, support, and production-grade workflows.

Build vs buy (when to DIY)

Build your own evaluation workflow when your domain requires custom scoring, private datasets, or unique compliance checks. Buy or adopt a platform when your team needs faster rollout, shared dashboards, human review, and lower maintenance effort.

Implementation Playbook (30 / 60 / 90 Days)

30 Days — Pilot & Success Metrics

- Define the main RAG use cases you want to evaluate first.

- Create a small test dataset using real user questions and representative documents.

- Select core metrics such as faithfulness, answer relevance, context precision, and context recall.

- Compare at least two retrieval or chunking strategies using the same evaluation set.

- Track baseline latency, token usage, and cost per evaluation run.

- Review failed answers manually to understand whether issues come from retrieval, generation, or data quality.

60 Days — Security, Evaluation & Rollout

- Add regression tests so prompt, model, chunking, and retrieval changes can be checked before release.

- Introduce human review for high-risk or subjective answers.

- Add red-team tests for prompt injection, unsafe retrieval, and unsupported claims.

- Connect traces and logs so teams can inspect where each answer came from.

- Create version control for prompts, datasets, evaluation criteria, and model settings.

- Start sharing evaluation dashboards with engineering, product, and business stakeholders.

90 Days — Optimization, Governance & Scale

- Expand evaluation datasets to cover edge cases, multilingual content, and domain-specific queries.

- Optimize cost and latency by adjusting retrieval depth, reranking, caching, and model choice.

- Add governance rules for dataset retention, access control, and audit review.

- Use continuous monitoring to detect production quality drift over time.

- Build incident handling workflows for hallucination reports, unsafe answers, or retrieval failures.

- Scale evaluation gates across all major RAG applications and agent workflows.

Common Mistakes & How to Avoid Them

- Only evaluating final answers: Test retrieval quality separately so you know whether the issue is bad context or bad generation.

- Skipping ground-truth datasets: Even small curated datasets can reveal quality problems that automated scoring alone may miss.

- Ignoring prompt injection exposure: Include adversarial tests that check whether malicious documents or user prompts can manipulate responses.

- Using generic benchmarks only: Public benchmarks are useful, but private domain-specific tests are more relevant for production systems.

- No regression testing: Without repeatable tests, prompt and model updates can silently reduce answer quality.

- Overtrusting LLM-as-a-judge: Judge models are helpful, but they should be calibrated with human review for important use cases.

- Not tracking cost and latency: A highly accurate evaluation setup may still be impractical if it is too slow or expensive.

- Weak observability: Without traces, teams cannot easily debug whether failure came from retrieval, reranking, prompt design, or generation.

- No dataset versioning: If test data changes without tracking, evaluation results become hard to compare.

- Over-automation without human review: Human evaluation is still important for tone, judgment, compliance, and sensitive outputs.

- Ignoring data retention: Evaluation logs may contain sensitive prompts, retrieved chunks, or user data that need governance.

- Vendor lock-in without abstraction: Keep exports, APIs, and evaluation definitions portable where possible.

- No ownership model: Assign clear owners for metrics, review workflows, failure triage, and production quality monitoring.

- Treating evaluation as one-time work: RAG systems change constantly, so evaluation should run continuously.

FAQs

1. What are RAG evaluation and benchmarking tools?

RAG evaluation tools measure how well a retrieval-augmented generation system retrieves context and generates grounded answers. Benchmarking tools compare different prompts, models, retrievers, and chunking strategies.

2. Why is RAG evaluation important?

RAG systems can produce confident but incorrect answers if retrieval quality is poor. Evaluation helps detect hallucinations, missing context, irrelevant chunks, and weak answer grounding before users are affected.

3. What metrics matter most for RAG evaluation?

Important metrics include faithfulness, answer relevance, context relevance, context precision, context recall, latency, cost, and user satisfaction. The right mix depends on your use case and risk level.

4. What is faithfulness in RAG evaluation?

Faithfulness checks whether the generated answer is supported by the retrieved context. It helps detect hallucinations where the model says something that is not grounded in the source data.

5. What is context precision?

Context precision measures whether the retrieved chunks are actually useful for answering the question. High precision means the system is not wasting context window space on irrelevant information.

6. What is context recall?

Context recall measures whether the system retrieved enough of the needed information. Low recall often means important documents, passages, or facts are missing from the retrieved context.

7. Can I use BYO models for evaluation?

Yes, many evaluation workflows allow BYO model or multi-model setups. However, exact support depends on the tool, framework, and deployment design.

8. Can RAG evaluation tools be self-hosted?

Some tools are open-source and can be self-hosted, while others are cloud platforms. Self-hosting is useful when privacy, data residency, or internal governance is a priority.

9. Do these tools store my data?

Data handling depends on the tool and configuration. For sensitive use cases, check retention settings, logging behavior, access controls, and whether prompts or retrieved chunks leave your environment.

10. How do evaluation tools help with guardrails?

They can test whether the system resists prompt injection, avoids unsupported claims, and handles unsafe requests properly. Some tools focus more on guardrail testing than others.

11. How much do RAG evaluation tools cost?

Costs vary by open-source usage, hosted platform plans, evaluation volume, model usage, and storage. Exact pricing should be verified directly because it can change by plan and usage pattern.

12. Can evaluation tools reduce hallucinations?

They do not eliminate hallucinations by themselves, but they help detect and measure them. Teams can then improve retrieval, chunking, prompts, reranking, and guardrails based on evaluation results.

13. How often should RAG evaluation run?

Run evaluation during development, before deployment, and continuously in production. At minimum, run regression tests whenever prompts, models, retrieval settings, or data indexes change.

14. What is the difference between observability and evaluation?

Evaluation scores quality against defined criteria, while observability shows what happened inside the application. Strong RAG teams usually need both scoring and traces for proper debugging.

15. Can I switch RAG evaluation tools later?

Yes, but switching is easier if your datasets, metrics, prompts, and traces are portable. Avoid designing evaluation workflows that depend entirely on one proprietary format.

16. What are alternatives to dedicated RAG evaluation tools?

Alternatives include manual review, custom scripts, spreadsheet-based test sets, general ML experiment tracking, or internal QA workflows. These can work early but become harder to scale.

Conclusion

RAG evaluation and benchmarking tools are essential for building AI systems that are accurate, grounded, measurable, and production-ready. The best tool depends on your team size, technical maturity, risk level, and whether you need lightweight metrics, deep tracing, human review, or enterprise governance. Strong teams usually combine automated evaluation, human feedback, observability, and regression testing rather than relying on one score or one platform.

Next steps:

- Shortlist tools based on your RAG quality goals.

- Run a pilot using real queries and documents.

- Verify evaluation, security, and scalability before production rollout.