Introduction

Agent Test & Replay Frameworks are tools designed to record, simulate, and replay how AI agents behave in real-world scenarios. Instead of testing prompts manually, teams capture entire workflows (prompts, tool calls, API responses, and outputs) and replay them to validate consistency and reliability.

As AI agents become more autonomous and capable of executing multi-step tasks, predictable behavior is no longer optional; it is critical. A small change in prompt design, model version, or an external API can cause unexpected outcomes. These frameworks address that risk by enabling deterministic testing, regression validation, and scenario simulation.
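The record-and-replay idea described above can be sketched in a few lines of Python. This is a minimal illustration, not how any listed product works internally: `Recorder`, `Replayer`, `get_weather`, and `run_agent` are all hypothetical names, and the "agent" is a toy function that makes one tool call.

```python
import json
from typing import Any, Callable, Dict, List


class Recorder:
    """Wraps tool functions and logs every call with its arguments and result."""

    def __init__(self) -> None:
        self.events: List[Dict[str, Any]] = []

    def wrap(self, name: str, fn: Callable[..., Any]) -> Callable[..., Any]:
        def wrapped(**kwargs: Any) -> Any:
            result = fn(**kwargs)
            self.events.append({"tool": name, "args": kwargs, "result": result})
            return result
        return wrapped


class Replayer:
    """Serves recorded results in order instead of calling the real tools."""

    def __init__(self, events: List[Dict[str, Any]]) -> None:
        self._events = list(events)
        self._cursor = 0

    def wrap(self, name: str) -> Callable[..., Any]:
        def wrapped(**kwargs: Any) -> Any:
            if self._cursor >= len(self._events):
                raise AssertionError(f"unexpected extra call to {name}")
            event = self._events[self._cursor]
            # A mismatch means the agent no longer follows the recorded path.
            if event["tool"] != name or event["args"] != kwargs:
                raise AssertionError(f"replay diverged at step {self._cursor}")
            self._cursor += 1
            return event["result"]
        return wrapped


def get_weather(city: str) -> Dict[str, Any]:
    """Stand-in for a live external API (hypothetical)."""
    return {"city": city, "temp_c": 21}


def run_agent(weather_tool: Callable[..., Any]) -> str:
    """Toy agent: one tool call, then a formatted answer."""
    report = weather_tool(city="Lisbon")
    return f"It is {report['temp_c']} C in {report['city']}."


# Record a live run.
recorder = Recorder()
live_output = run_agent(recorder.wrap("get_weather", get_weather))

# Round-trip the trace through JSON, as a stored trace would be.
trace = json.loads(json.dumps(recorder.events))

# Replay without touching the live API.
replayer = Replayer(trace)
replayed_output = run_agent(replayer.wrap("get_weather"))
```

Because the replayer returns stored results, the second run is deterministic with respect to tool responses. Any nondeterminism in the model itself would still need to be controlled separately.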

Common use cases include:

- Replaying production agent sessions to debug failures and inconsistencies

- Running regression tests after prompt or model updates

- Simulating edge cases such as incorrect tool responses or adversarial inputs

- Validating multi-step workflows and tool orchestration logic

- Comparing outputs across models or configurations

- Supporting compliance with traceable execution logs
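As a rough illustration of the edge-case use case above, the sketch below injects faults into a tool response and checks that a toy agent degrades gracefully. The tool (`lookup_order`), the fault functions, and the agent are hypothetical and exist only for this example.

```python
from typing import Any, Callable, Dict

Tool = Callable[..., Dict[str, Any]]
Fault = Callable[[Dict[str, Any]], Dict[str, Any]]


def lookup_order(order_id: str) -> Dict[str, Any]:
    """Hypothetical tool that returns an order record."""
    return {"order_id": order_id, "status": "shipped"}


def with_fault(tool: Tool, fault: Fault) -> Tool:
    """Return a tool that passes each real response through `fault`."""
    def faulty(**kwargs: Any) -> Dict[str, Any]:
        return fault(tool(**kwargs))
    return faulty


def drop_status(response: Dict[str, Any]) -> Dict[str, Any]:
    """Simulate an incorrect response that is missing a required field."""
    return {k: v for k, v in response.items() if k != "status"}


def raise_timeout(response: Dict[str, Any]) -> Dict[str, Any]:
    """Simulate the tool timing out instead of answering."""
    raise TimeoutError("simulated tool timeout")


def run_agent(order_tool: Tool) -> str:
    """Toy agent that must handle both bad data and tool failures."""
    try:
        response = order_tool(order_id="A-100")
    except TimeoutError:
        return "The order service timed out. Please try again."
    status = response.get("status")
    if status is None:
        return "I could not confirm the order status."
    return f"Order A-100 is {status}."


baseline = run_agent(lookup_order)
missing_field = run_agent(with_fault(lookup_order, drop_status))
timed_out = run_agent(with_fault(lookup_order, raise_timeout))
```

Wrapping the tool rather than editing the agent keeps the code under test unchanged, so the same scenarios can be rerun after each prompt or model update.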

Key evaluation criteria buyers should consider:

- Replay accuracy and determinism

- Ability to simulate multi-step and multi-agent workflows

- Built-in evaluation and scoring systems

- Integration with observability and tracing tools

- Guardrails for safety and adversarial testing

- Model flexibility (hosted, BYO, multi-model)

- Data privacy and retention controls

- Scalability for large test datasets

- Ease of creating and managing test cases

- CI/CD integration for automated testing pipelines

Best for: AI engineers, QA teams, ML researchers, and enterprises building production-grade AI agents across industries like fintech, SaaS, healthcare, and automation.

Not ideal for: Teams using simple, single-step AI prompts or basic chatbots where advanced replay and testing infrastructure is unnecessary.

What’s Changed in Agent Test & Replay Frameworks

- Shift from manual prompt testing to automated replay pipelines integrated into development workflows

- Native support for multi-step and multi-agent orchestration testing

- Deterministic replay to ensure consistent and reproducible outputs

- Built-in evaluation systems replacing subjective testing approaches

- Simulation of real-world failures and adversarial scenarios

- Expansion to multimodal replay (text, images, structured data)

- Integration with CI/CD pipelines for continuous validation

- Policy-aware replay for governance and compliance testing

- Cost and latency tracking during replay execution

- Model comparison workflows for A/B testing

- Improved developer tooling with visual debugging and trace inspection

- Stronger focus on security testing, including prompt injection scenarios
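Cost and latency tracking during replay, one of the items above, might look like the following sketch. The per-token price, the whitespace token estimate, and `fake_model` are assumptions made for illustration; real tokenizers and pricing differ.

```python
import time
from dataclasses import dataclass
from typing import Callable, Tuple

# Hypothetical price, used only to show the arithmetic.
PRICE_PER_1K_TOKENS_USD = 0.002


@dataclass
class StepMetrics:
    name: str
    latency_s: float
    tokens: int
    cost_usd: float


def count_tokens(text: str) -> int:
    """Crude whitespace estimate; a real tokenizer would give other counts."""
    return len(text.split())


def timed_step(
    name: str, model: Callable[[str], str], prompt: str
) -> Tuple[str, StepMetrics]:
    """Run one step and record how long it took and what it would cost."""
    start = time.perf_counter()
    output = model(prompt)
    latency = time.perf_counter() - start
    tokens = count_tokens(prompt) + count_tokens(output)
    cost = tokens / 1000 * PRICE_PER_1K_TOKENS_USD
    return output, StepMetrics(name, latency, tokens, cost)


def fake_model(prompt: str) -> str:
    """Stand-in for a model call (hypothetical)."""
    return "refund approved for order A-100"


output, metrics = timed_step("triage", fake_model, "customer asks about a refund")
```

Collecting a `StepMetrics` record per step makes it possible to compare total cost and latency between two replay runs of the same trace.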

Quick Buyer Checklist (Scan-Friendly)

- Can the tool replay full agent workflows, including tool calls and external APIs?

- Does it support deterministic and reproducible testing?

- Are there built-in evaluation and scoring systems?

- Can it simulate edge cases and failure conditions?

- Does it integrate with observability and tracing tools?

- Are guardrails and safety testing features included?

- Does it support multi-model or BYO model configurations?

- Are data privacy and retention controls configurable?

- Can it integrate into CI/CD pipelines for automation?

- Does it scale for large datasets and production workloads?

- Is there flexibility to avoid vendor lock-in?
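On the last checklist item, one common way to limit lock-in is to keep a thin interface between agent code and whichever tool stores the traces. The sketch below assumes a hypothetical `TraceSink` interface with an in-memory implementation; a vendor-backed implementation would expose the same two methods.

```python
from typing import Any, Dict, List, Protocol


class TraceSink(Protocol):
    """Minimal interface the agent code depends on (hypothetical)."""

    def log_step(self, run_id: str, step: Dict[str, Any]) -> None: ...

    def load_run(self, run_id: str) -> List[Dict[str, Any]]: ...


class InMemorySink:
    """Reference implementation, useful for local tests."""

    def __init__(self) -> None:
        self._runs: Dict[str, List[Dict[str, Any]]] = {}

    def log_step(self, run_id: str, step: Dict[str, Any]) -> None:
        self._runs.setdefault(run_id, []).append(step)

    def load_run(self, run_id: str) -> List[Dict[str, Any]]:
        # Return a copy so callers cannot mutate stored traces.
        return list(self._runs.get(run_id, []))


def record_run(sink: TraceSink, run_id: str, steps: List[Dict[str, Any]]) -> None:
    """Agent-side code only ever talks to the TraceSink interface."""
    for step in steps:
        sink.log_step(run_id, step)


sink = InMemorySink()
record_run(sink, "run-1", [{"tool": "search", "ok": True}, {"tool": "summarize", "ok": True}])
stored = sink.load_run("run-1")
```

Switching tools then means writing one new class that satisfies `TraceSink`, rather than touching every call site.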

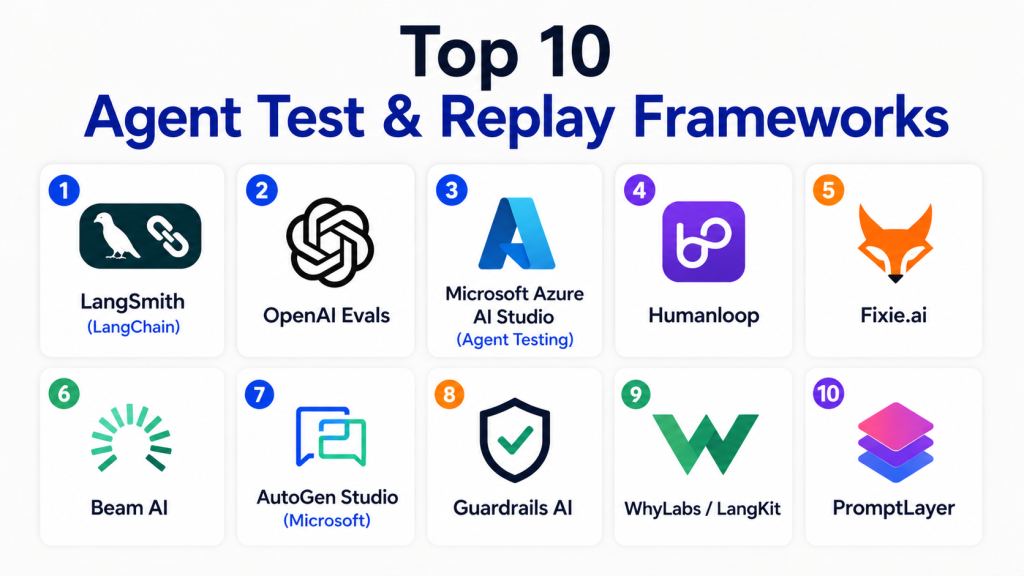

Top 10 Agent Test & Replay Frameworks

1 — LangSmith (LangChain)

One-line verdict: Best for developers needing deep replay, tracing, and evaluation within complex agent workflows.

Short description:

LangSmith is a comprehensive platform for debugging, replaying, and evaluating AI agent workflows. It captures full execution traces and allows teams to replay them for testing and optimization.

Standout Capabilities

- Full workflow replay including intermediate steps and tool calls

- Dataset-based regression testing for consistent validation

- Prompt and workflow versioning

- Visual debugging of agent execution paths

- Experiment tracking and comparison

- Integration with development pipelines

- Support for large-scale datasets

AI-Specific Depth

- Model support: Multi-model / BYO

- RAG / knowledge integration: Strong

- Evaluation: Strong

- Guardrails: Limited

- Observability: Strong

Pros

- Deep visibility into agent behavior across workflows

- Strong evaluation and testing features

- Scales well for production systems

Cons

- Best suited for technical users

- Limited built-in guardrails

- Strong dependency on ecosystem integration

Security & Compliance

- Not publicly stated

Deployment & Platforms

- Web, Cloud

Integrations & Ecosystem

LangSmith integrates tightly with modern AI development stacks and supports extensibility.

- APIs for custom integrations

- SDKs for developers

- Vector database support

- LLM frameworks

Pricing Model

- Usage-based

Best-Fit Scenarios

- Debugging complex agent systems

- Regression testing workflows

- Experimenting with prompts and models

2 — OpenAI Evals

One-line verdict: Best for structured evaluation and reproducible testing using datasets and metrics.

Short description:

OpenAI Evals focuses on benchmarking and evaluating AI outputs using structured datasets and scoring methods.

Standout Capabilities

- Dataset-driven evaluation pipelines

- Custom scoring metrics

- Reproducible test runs

- Benchmarking across models

- Flexible evaluation design

AI-Specific Depth

- Model support: Proprietary / Multi-model

- RAG / knowledge integration: Limited

- Evaluation: Strong

- Guardrails: Limited

- Observability: Limited

Pros

- Strong evaluation framework

- Highly customizable scoring

- Reliable benchmarking

Cons

- Limited replay capabilities

- Requires technical setup

- Not a full observability tool

Security & Compliance

- Not publicly stated

Deployment & Platforms

- Varies / N/A

Integrations & Ecosystem

- APIs

- SDKs

- Custom evaluation pipelines

Pricing Model

- Open-source framework; model API calls are billed by usage

Best-Fit Scenarios

- Model benchmarking

- Output validation

- Prompt testing

3 — Microsoft Azure AI Studio (Agent Testing)

One-line verdict: Best for enterprise teams needing structured agent testing and replay within cloud environments.

Short description:

Azure AI Studio provides tools for testing, simulating, and validating agent workflows with enterprise-grade infrastructure.

Standout Capabilities

- Agent workflow testing

- Replay and simulation tools

- Integration with enterprise systems

- Model evaluation pipelines

- Governance and compliance features

AI-Specific Depth

- Model support: Multi-model / Hosted

- RAG / knowledge integration: Strong

- Evaluation: Strong

- Guardrails: Moderate

- Observability: Strong

Pros

- Enterprise-ready

- Strong integrations

- Scalable infrastructure

Cons

- Complex setup

- Cloud dependency

- Pricing not transparent

Security & Compliance

- RBAC, audit logs, encryption (certifications not publicly stated)

Deployment & Platforms

- Cloud

Integrations & Ecosystem

- Azure ecosystem

- APIs

- Enterprise tools

- Data platforms

Pricing Model

- Usage-based / Tiered

Best-Fit Scenarios

- Enterprise deployments

- Compliance-driven environments

- Large-scale agent systems

4 — Humanloop

One-line verdict: Best for combining replay, evaluation, and human feedback loops for continuous improvement.

Short description:

Humanloop focuses on evaluation workflows, human-in-the-loop testing, and iterative improvement of AI systems.

Standout Capabilities

- Human feedback integration

- Replay with evaluation loops

- Dataset management

- Experiment tracking

- Continuous improvement workflows

AI-Specific Depth

- Model support: Multi-model

- RAG / knowledge integration: Moderate

- Evaluation: Strong

- Guardrails: Moderate

- Observability: Moderate

Pros

- Strong evaluation workflows

- Combines human and automated testing

- Easy experimentation

Cons

- Limited deep replay features

- Not focused on tracing

- Pricing not transparent

Security & Compliance

- Not publicly stated

Deployment & Platforms

- Cloud

Integrations & Ecosystem

- APIs

- SDKs

- AI platforms

Pricing Model

- Not publicly stated

Best-Fit Scenarios

- Human-in-the-loop testing

- Evaluation pipelines

- Continuous improvement

5 — Fixie.ai

One-line verdict: Best for testing and replaying agent workflows with tool integrations and automation focus.

Short description:

Fixie.ai enables developers to build, test, and replay agent-based systems with a focus on tool usage and orchestration.

Standout Capabilities

- Tool-based workflow testing

- Replay of agent interactions

- Automation-focused design

- Integration with external APIs

- Experimentation support

AI-Specific Depth

- Model support: Multi-model

- RAG / knowledge integration: Moderate

- Evaluation: Moderate

- Guardrails: Limited

- Observability: Moderate

Pros

- Strong tool integration

- Good for automation workflows

- Flexible experimentation

Cons

- Limited enterprise features

- Smaller ecosystem

- Documentation varies

Security & Compliance

- Not publicly stated

Deployment & Platforms

- Cloud

Integrations & Ecosystem

- APIs

- SDKs

- External tools

Pricing Model

- Not publicly stated

Best-Fit Scenarios

- Automation testing

- Tool-based agents

- Workflow validation

6 — Beam AI

One-line verdict: Best for scalable testing and replay of agent pipelines in production environments.

Short description:

Beam AI focuses on scaling AI workloads, including testing and replay of pipelines in production systems.

Standout Capabilities

- Pipeline replay

- Scalable infrastructure

- Workflow testing

- Performance tracking

- Deployment automation

AI-Specific Depth

- Model support: Multi-model

- RAG / knowledge integration: Moderate

- Evaluation: Moderate

- Guardrails: Limited

- Observability: Moderate

Pros

- Scalable

- Good for production systems

- Performance-focused

Cons

- Limited evaluation features

- Less focus on debugging

- Setup complexity

Security & Compliance

- Not publicly stated

Deployment & Platforms

- Cloud / Hybrid

Integrations & Ecosystem

- APIs

- Data pipelines

- DevOps tools

Pricing Model

- Usage-based

Best-Fit Scenarios

- Production pipelines

- Scalable workloads

- Performance testing

7 — AutoGen Studio (Microsoft)

One-line verdict: Best for simulating and replaying multi-agent conversational workflows.

Short description:

AutoGen Studio allows developers to simulate, test, and replay interactions between multiple AI agents.

Standout Capabilities

- Multi-agent simulation

- Replay of conversations

- Workflow orchestration

- Scenario testing

- Visualization tools

AI-Specific Depth

- Model support: Multi-model

- RAG / knowledge integration: Moderate

- Evaluation: Moderate

- Guardrails: Limited

- Observability: Moderate

Pros

- Strong multi-agent capabilities

- Flexible simulation

- Useful for experimentation

Cons

- Not enterprise-focused

- Setup complexity

- Evolving ecosystem

Security & Compliance

- Not publicly stated

Deployment & Platforms

- Varies / N/A

Integrations & Ecosystem

- APIs

- SDKs

- AI frameworks

Pricing Model

- Open-source

Best-Fit Scenarios

- Multi-agent testing

- Research environments

- Workflow simulation

8 — Guardrails AI

One-line verdict: Best for testing safety, policies, and guardrails during agent replay workflows.

Short description:

Guardrails AI focuses on enforcing policies and validating outputs during testing and replay.

Standout Capabilities

- Policy enforcement

- Validation rules

- Safety testing

- Output constraints

- Integration with pipelines

AI-Specific Depth

- Model support: Multi-model

- RAG / knowledge integration: N/A

- Evaluation: Moderate

- Guardrails: Strong

- Observability: Limited

Pros

- Strong safety focus

- Easy policy enforcement

- Good integration

Cons

- Limited replay features

- Not a full testing platform

- Requires integration

Security & Compliance

- Not publicly stated

Deployment & Platforms

- Cloud / Self-hosted

Integrations & Ecosystem

- APIs

- SDKs

- AI frameworks

Pricing Model

- Open-source / Tiered

Best-Fit Scenarios

- Safety validation

- Policy testing

- Guardrail enforcement

9 — WhyLabs / LangKit

One-line verdict: Best for combining replay insights with monitoring and anomaly detection.

Short description:

WhyLabs provides monitoring and analytics tools that support replay insights and anomaly detection.

Standout Capabilities

- Monitoring dashboards

- Drift detection

- Replay insights

- Analytics tools

- Production visibility

AI-Specific Depth

- Model support: Multi-model

- RAG / knowledge integration: N/A

- Evaluation: Moderate

- Guardrails: Limited

- Observability: Strong

Pros

- Strong monitoring

- Good analytics

- Production-ready

Cons

- Limited replay depth

- Not debugging-focused

- Requires integration

Security & Compliance

- Not publicly stated

Deployment & Platforms

- Cloud

Integrations & Ecosystem

- APIs

- ML pipelines

- Data platforms

Pricing Model

- Tiered

Best-Fit Scenarios

- Monitoring

- Replay insights

- Drift detection

10 — PromptLayer

One-line verdict: Best for simple replay, logging, and prompt tracking across applications.

Short description:

PromptLayer provides lightweight replay and logging capabilities for prompts and interactions.

Standout Capabilities

- Prompt replay

- Logging and analytics

- Version tracking

- Debugging tools

- Easy integration

AI-Specific Depth

- Model support: Multi-model

- RAG / knowledge integration: Limited

- Evaluation: Limited

- Guardrails: Limited

- Observability: Moderate

Pros

- Easy to use

- Quick setup

- Lightweight

Cons

- Limited advanced features

- Not enterprise-grade

- Basic evaluation

Security & Compliance

- Not publicly stated

Deployment & Platforms

- Web / Cloud

Integrations & Ecosystem

- APIs

- SDKs

- LLM tools

Pricing Model

- Tiered

Best-Fit Scenarios

- Prompt replay

- Debugging

- Small teams

Comparison Table

| Tool Name | Best For | Deployment | Model Flexibility | Strength | Watch-Out | Public Rating |
|---|---|---|---|---|---|---|
| LangSmith | Developers | Cloud | Multi-model | Deep replay | Ecosystem dependency | N/A |
| OpenAI Evals | Evaluation | N/A | Multi-model | Benchmarking | Limited replay | N/A |
| Azure AI Studio | Enterprise | Cloud | Hosted/Multi | Scalability | Complexity | N/A |
| Humanloop | Feedback | Cloud | Multi-model | Eval + feedback | Limited replay | N/A |
| Fixie.ai | Automation | Cloud | Multi-model | Tool workflows | Smaller ecosystem | N/A |
| Beam AI | Scale | Cloud / Hybrid | Multi-model | Performance | Setup complexity | N/A |
| AutoGen Studio | Multi-agent | N/A | Multi-model | Simulation | Evolving | N/A |
| Guardrails AI | Safety | Cloud / Self-hosted | Multi-model | Guardrails | Limited replay | N/A |
| WhyLabs | Monitoring | Cloud | Multi-model | Analytics | Limited replay | N/A |
| PromptLayer | Logging | Cloud | Multi-model | Simplicity | Basic features | N/A |

Scoring & Evaluation (Transparent Rubric)

Scoring is comparative and reflects how each tool performs relative to others in this category.

| Tool | Core | Reliability/Eval | Guardrails | Integrations | Ease | Perf/Cost | Security/Admin | Support | Weighted Total |
|---|---|---|---|---|---|---|---|---|---|
| LangSmith | 9 | 8 | 6 | 9 | 8 | 8 | 7 | 8 | 8.2 |
| OpenAI Evals | 8 | 9 | 6 | 7 | 7 | 7 | 7 | 7 | 7.7 |
| Azure AI Studio | 9 | 8 | 7 | 9 | 6 | 7 | 8 | 8 | 8.1 |
| Humanloop | 8 | 8 | 7 | 7 | 7 | 7 | 7 | 7 | 7.6 |
| Fixie.ai | 7 | 7 | 6 | 7 | 7 | 7 | 6 | 6 | 7.0 |
| Beam AI | 8 | 7 | 6 | 8 | 6 | 8 | 7 | 6 | 7.4 |
| AutoGen Studio | 8 | 7 | 6 | 7 | 6 | 7 | 6 | 6 | 7.0 |
| Guardrails AI | 7 | 7 | 9 | 7 | 7 | 7 | 7 | 6 | 7.4 |
| WhyLabs | 8 | 7 | 6 | 8 | 7 | 8 | 7 | 7 | 7.6 |
| PromptLayer | 6 | 6 | 5 | 7 | 8 | 7 | 6 | 6 | 6.8 |
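The table does not state the weights behind the Weighted Total column. To show how such a score is typically computed, the sketch below uses hypothetical weights that sum to 1.0. They are assumptions for illustration only, so the result will not necessarily match the published totals.

```python
from typing import Dict

# Hypothetical weights; the article does not publish its own.
WEIGHTS: Dict[str, float] = {
    "core": 0.25,
    "reliability_eval": 0.15,
    "guardrails": 0.10,
    "integrations": 0.15,
    "ease": 0.10,
    "perf_cost": 0.10,
    "security_admin": 0.10,
    "support": 0.05,
}


def weighted_total(scores: Dict[str, float]) -> float:
    """Weighted average of the per-criterion scores, rounded to one decimal."""
    missing = set(WEIGHTS) - set(scores)
    if missing:
        raise ValueError(f"missing criteria: {sorted(missing)}")
    return round(sum(WEIGHTS[name] * scores[name] for name in WEIGHTS), 1)


# Per-criterion scores for LangSmith, copied from the table above.
langsmith = {
    "core": 9,
    "reliability_eval": 8,
    "guardrails": 6,
    "integrations": 9,
    "ease": 8,
    "perf_cost": 8,
    "security_admin": 7,
    "support": 8,
}

langsmith_total = weighted_total(langsmith)
```

Re-running the calculation with your own weights is a quick way to see how sensitive the ranking is to what your team actually prioritizes.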

Top 3 for Enterprise:

- LangSmith

- Azure AI Studio

- WhyLabs

Top 3 for SMB:

- Humanloop

- WhyLabs

- PromptLayer

Top 3 for Developers:

- LangSmith

- OpenAI Evals

- Fixie.ai or PromptLayer

Which Agent Test & Replay Framework Is Right for You?

Solo / Freelancer

Choose lightweight tools like PromptLayer for simple replay and debugging.

SMB

Humanloop and WhyLabs offer a balance of usability and depth.

Mid-Market

LangSmith and Beam AI provide scalability and deeper testing features.

Enterprise

Azure AI Studio and LangSmith offer enterprise-grade capabilities.

Regulated industries

Focus on auditability, replay traceability, and compliance features.

Budget vs premium

- Budget: lightweight or open-source tools

- Premium: enterprise-grade platforms

Build vs buy

Build for customization; buy for speed and reliability.

Implementation Playbook (30 / 60 / 90 Days)

30 Days

- Identify critical workflows

- Set up replay pipelines

- Define success metrics

60 Days

- Expand test coverage

- Integrate evaluation systems

- Add guardrails

90 Days

- Optimize cost and latency

- Automate testing

- Scale across teams
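The "define success metrics" and "automate testing" steps above can be combined into a simple pipeline gate. The sketch below is a minimal example under stated assumptions: the test cases, `toy_agent`, the exact-match scorer, and the thresholds are all hypothetical.

```python
from typing import Callable, Dict, List

CASES: List[Dict[str, str]] = [
    {"input": "capital of France", "expected": "Paris"},
    {"input": "2 + 2", "expected": "4"},
    {"input": "opposite of hot", "expected": "cold"},
    {"input": "largest planet", "expected": "Jupiter"},
]


def toy_agent(prompt: str) -> str:
    """Stand-in for the agent under test; one answer is deliberately wrong."""
    answers = {
        "capital of France": "paris",
        "2 + 2": "4",
        "opposite of hot": "cold",
        "largest planet": "Saturn",
    }
    return answers.get(prompt, "")


def exact_match(expected: str, actual: str) -> bool:
    """Case-insensitive exact match; real suites often need richer scoring."""
    return expected.strip().lower() == actual.strip().lower()


def run_suite(agent: Callable[[str], str], cases: List[Dict[str, str]]) -> float:
    """Return the fraction of cases the agent passes."""
    passed = sum(exact_match(c["expected"], agent(c["input"])) for c in cases)
    return passed / len(cases)


def gate(pass_rate: float, threshold: float) -> int:
    """Return a process exit code: 0 lets the pipeline continue, 1 fails it."""
    return 0 if pass_rate >= threshold else 1


pass_rate = run_suite(toy_agent, CASES)
exit_code = gate(pass_rate, threshold=0.9)
```

In a CI job, the script would end with `sys.exit(exit_code)` so that a drop below the threshold blocks the merge.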

Common Mistakes & How to Avoid Them

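- Not replaying real production data: capture real sessions and replay them instead of relying only on synthetic prompts.

- Skipping regression testing: rerun the replay suite after every prompt or model update.

- Ignoring edge cases: simulate incorrect tool responses and adversarial inputs.

- Weak evaluation metrics: define explicit success metrics and scoring before expanding coverage.

- No cost tracking: record cost and latency during replay runs.

- Poor observability: integrate replay with tracing tools so failures can be inspected step by step.

- Missing guardrails testing: include safety and prompt injection scenarios in the test suite.

- Vendor lock-in: favor tools with multi-model or BYO model support.

- Lack of automation: wire replay tests into CI/CD pipelines.

- Ignoring privacy: configure retention and access controls for recorded data.

- No human review: pair automated scoring with periodic human review.

- Over-reliance on automation: treat automated scores as signals and spot-check the underlying outputs.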
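For the privacy item in the list above, two basic controls are redacting sensitive values before a trace is stored and pruning traces past a retention window. The sketch below is deliberately simplistic: the email pattern, the trace layout, and the 14-day window are assumptions for illustration, not a complete PII solution.

```python
import re
from datetime import datetime, timedelta, timezone
from typing import Any, Dict, List

# Simplified email pattern; production redaction needs broader coverage.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def redact(text: str) -> str:
    """Replace email addresses before the text is written to a trace."""
    return EMAIL_RE.sub("[REDACTED_EMAIL]", text)


def prune(
    traces: List[Dict[str, Any]], now: datetime, retention_days: int
) -> List[Dict[str, Any]]:
    """Keep only traces recorded within the retention window."""
    cutoff = now - timedelta(days=retention_days)
    return [t for t in traces if t["recorded_at"] >= cutoff]


now = datetime(2024, 1, 31, tzinfo=timezone.utc)
traces = [
    {"id": "old", "recorded_at": datetime(2024, 1, 1, tzinfo=timezone.utc)},
    {"id": "recent", "recorded_at": datetime(2024, 1, 25, tzinfo=timezone.utc)},
]

clean_prompt = redact("Contact jane.doe@example.com today")
kept = prune(traces, now, retention_days=14)
```

Redacting at write time matters because replayed traces are often shared more widely than the production logs they came from.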

FAQs

1. What is an agent test and replay framework?

A system that records and replays AI agent interactions to test and debug workflows.

2. Why is replay important?

It ensures reproducibility and helps diagnose failures.

3. Can I use my own models?

Yes, most tools support BYO or multi-model setups.

4. Do these tools support self-hosting?

Some tools support self-hosted or hybrid deployments.

5. Are they necessary?

Essential for complex agent systems, optional for simple apps.

6. Do they include guardrails?

Some include them; others require integration.

7. How is privacy handled?

Through configurable retention and access controls.

8. Are they expensive?

Costs vary by usage and scale.

9. Can I switch tools easily?

Switching can be complex without abstraction.

10. Do they support evaluation?

Yes, many include evaluation features.

11. Are they beginner-friendly?

Some are, but most require technical knowledge.

12. What is the main benefit?

Improved reliability and confidence in AI systems.

Conclusion

Agent test and replay frameworks have become essential for building reliable, production-ready AI systems, especially as agents grow more complex and autonomous. These tools help teams move beyond guesswork by enabling consistent testing, reproducible debugging, and structured evaluation of real-world scenarios. There is no single “best” tool for everyone, though: your choice should depend on your scale, technical expertise, and the level of control you need over testing, observability, and governance. A sensible approach is to shortlist a few relevant tools, run pilot tests on real agent workflows, validate evaluation accuracy and guardrail effectiveness, and scale once reliability and performance are proven.