Introduction

Model Governance Workflows help organizations control how AI and machine learning models are proposed, built, evaluated, approved, deployed, monitored, reviewed, and retired. In plain terms, they give responsible AI a structured operating model: who owns the model, what data it uses, how it was tested, what risks exist, who approved it, and what happens if it fails.

They matter because AI is now used in customer support, lending, hiring, healthcare operations, cybersecurity, fraud detection, marketing, product recommendations, legal workflows, and internal copilots. Without governance, teams can lose track of model versions, training data, prompts, approvals, risk reviews, monitoring evidence, and compliance obligations.

Real-world use cases include:

- Managing AI model inventory across teams

- Reviewing model risk before deployment

- Tracking model approvals, ownership, and accountability

- Documenting training data, evaluation results, and limitations

- Monitoring drift, fairness, hallucinations, and performance

- Supporting audits for regulated or high-risk AI systems

Evaluation criteria for buyers:

- Model inventory and registry workflows

- Risk assessment and approval management

- Model cards, documentation, and lineage

- Policy enforcement and control mapping

- Evaluation, monitoring, and drift evidence

- Human review and sign-off workflows

- Audit logs and change history

- RBAC, SSO, and admin controls

- Integration with MLOps, data, and monitoring tools

- Support for LLMs, RAG, agents, and traditional ML

- Reporting for compliance and executive oversight

- Deployment flexibility and vendor lock-in risk

Best for: AI governance teams, risk leaders, compliance teams, ML engineers, AI platform teams, data science leaders, security teams, enterprise architects, regulated industries, and organizations deploying production AI systems at scale.

Not ideal for: small teams running only low-risk prototypes, individual AI experiments, or casual prompt usage. In early stages, a simple model inventory, review checklist, and manual approval process may be enough before adopting a full governance workflow platform.
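For teams at that early stage, the "simple model inventory" can be as small as one record per model plus a check for what is still missing before deployment. The sketch below is only an illustration under assumptions: the `ModelRecord` class, its field names, and the rules in `missing_for_deployment` are hypothetical and do not come from any specific governance product.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional


@dataclass
class ModelRecord:
    """One row in a lightweight model inventory (all field names are illustrative)."""
    name: str
    owner: str
    business_purpose: str
    training_data: str
    risk_level: str                      # assumed values: "low", "medium", "high"
    lifecycle_stage: str = "proposed"
    approved_by: Optional[str] = None
    approved_on: Optional[date] = None
    known_limitations: list[str] = field(default_factory=list)

    def missing_for_deployment(self) -> list[str]:
        """Return the review items that still block deployment."""
        gaps = []
        if not self.owner:
            gaps.append("named owner")
        if not self.training_data:
            gaps.append("training data description")
        if self.approved_by is None:
            gaps.append("approval sign-off")
        # Assumed rule: high-risk models must document their limitations.
        if self.risk_level == "high" and not self.known_limitations:
            gaps.append("documented limitations for high-risk model")
        return gaps


record = ModelRecord(
    name="support-ticket-router",
    owner="ml-platform@example.com",
    business_purpose="Route inbound support tickets to the right queue",
    training_data="Internal ticket history",
    risk_level="high",
)
print(record.missing_for_deployment())
```

A spreadsheet with the same columns would serve the same purpose; the point is that ownership, data, risk, and approval are recorded in one place before a platform is introduced.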

What’s Changed in Model Governance Workflows

- Governance now covers LLMs, agents, and RAG systems. Traditional model governance focused on predictive models, but modern workflows also need prompts, embeddings, retrieval pipelines, model providers, guardrails, and tool-calling logic.

- Model inventory is becoming broader. Enterprises now track not only internally trained models but also hosted models, open-source models, vendor AI features, copilots, AI agents, and embedded AI inside business applications.

- Approval workflows are more risk-based. Low-risk internal models may need lightweight review, while customer-facing, regulated, or decision-impacting systems need deeper assessment.

- Evaluation evidence matters more. Governance teams increasingly want proof of testing: accuracy, bias, drift, hallucination rate, refusal behavior, robustness, latency, and cost impact.

- Human accountability is central. Organizations need named owners for model development, business approval, risk acceptance, monitoring, and incident response.

- Prompt governance is becoming part of model governance. System prompts, prompt templates, policy prompts, evaluator prompts, and agent instructions must be versioned and reviewed.

- AI monitoring is connected to governance. Drift alerts, quality failures, unsafe outputs, user complaints, and cost spikes should feed back into governance reviews.

- Third-party AI risk is harder to manage. Teams need to govern models and AI features they did not build, especially when vendors do not expose full training or evaluation details.

- Security-by-design is expected. Governance workflows now include privacy review, data retention, prompt injection risk, access control, secrets handling, and auditability.

- Cross-functional review is now normal. AI governance often involves legal, compliance, security, data science, product, engineering, and business owners.

- Model retirement is getting more attention. Governance is not only about launching models; teams also need processes to deprecate, replace, or archive models safely.

- Executive reporting is becoming practical. Leaders want dashboards showing AI risk, inventory coverage, overdue reviews, incidents, model health, and compliance posture.
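The risk-based approval idea from the list above can be sketched as a small routing function that maps a system's characteristics to a review tier and a set of required reviewers. Everything here is an assumption for illustration: the three tiers, the `SystemProfile` fields, and the reviewer lists are hypothetical, and a real program would define its own criteria.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SystemProfile:
    """Hypothetical intake answers collected when a model is proposed."""
    customer_facing: bool
    regulated_domain: bool
    affects_decisions_about_people: bool


# Assumed mapping from tier to required sign-offs; adjust to your own policy.
REVIEWERS_BY_TIER = {
    "low": ["model owner"],
    "medium": ["model owner", "security"],
    "high": ["model owner", "security", "compliance", "legal", "business owner"],
}


def risk_tier(profile: SystemProfile) -> str:
    """Assign a review tier; regulated or decision-impacting systems get the deepest review."""
    if profile.regulated_domain or profile.affects_decisions_about_people:
        return "high"
    if profile.customer_facing:
        return "medium"
    return "low"


def required_reviewers(profile: SystemProfile) -> list[str]:
    """Look up who must sign off before deployment for this profile."""
    return REVIEWERS_BY_TIER[risk_tier(profile)]


internal_tool = SystemProfile(customer_facing=False, regulated_domain=False,
                              affects_decisions_about_people=False)
lending_model = SystemProfile(customer_facing=True, regulated_domain=True,
                              affects_decisions_about_people=True)
print(risk_tier(internal_tool), required_reviewers(internal_tool))
print(risk_tier(lending_model), required_reviewers(lending_model))
```

Keeping the tier rules in one function makes the policy reviewable and versionable in the same way as the models it governs.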

Quick Buyer Checklist

Use this checklist to shortlist model governance workflow tools quickly:

- Does the platform maintain a complete AI and model inventory?

- Can it track model owners, business purpose, risk level, and lifecycle stage?

- Does it support approval workflows before deployment?

- Can it document datasets, features, prompts, model versions, and evaluation results?

- Does it support traditional ML, LLMs, RAG systems, and AI agents?

- Can it connect with model registries, MLOps tools, monitoring tools, and data catalogs?

- Does it provide audit logs and change history?

- Can it manage model cards, risk assessments, and review evidence?

- Does it support human review and sign-off workflows?

- Can it track policy controls, exceptions, and remediation actions?

- Does it support privacy, data retention, and sensitive-data governance?

- Can it surface drift, hallucination, fairness, latency, and cost signals?

- Does it provide RBAC, SSO, and admin controls?

- Can reports be used by compliance, risk, and executive teams?

- Does it reduce vendor lock-in through APIs, exports, and integrations?
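One way to apply the checklist above consistently across vendors is to record a yes/no answer per item and flag any unmet must-have. The following is a minimal sketch, not a recommended scoring method: the function name `checklist_score`, the shortened item labels, and the example answers are all hypothetical.

```python
def checklist_score(answers: dict[str, bool], must_haves: set[str]) -> tuple[float, list[str]]:
    """Return (fraction of checklist items met, sorted list of unmet must-haves)."""
    if not answers:
        raise ValueError("answers must not be empty")
    met = sum(1 for ok in answers.values() if ok)
    # A must-have that was never answered counts as unmet.
    missing = sorted(item for item in must_haves if not answers.get(item, False))
    return met / len(answers), missing


# Hypothetical answers for one vendor, using shortened checklist labels.
vendor_a = {
    "model inventory": True,
    "approval workflows": True,
    "audit logs": True,
    "LLM and RAG support": False,
    "RBAC and SSO": True,
}

score, blockers = checklist_score(vendor_a, must_haves={"audit logs", "LLM and RAG support"})
print(f"{score:.0%} of items met; unmet must-haves: {blockers}")
```

Treating must-haves separately from the overall fraction avoids shortlisting a tool that scores well overall but fails a requirement the team cannot work around.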

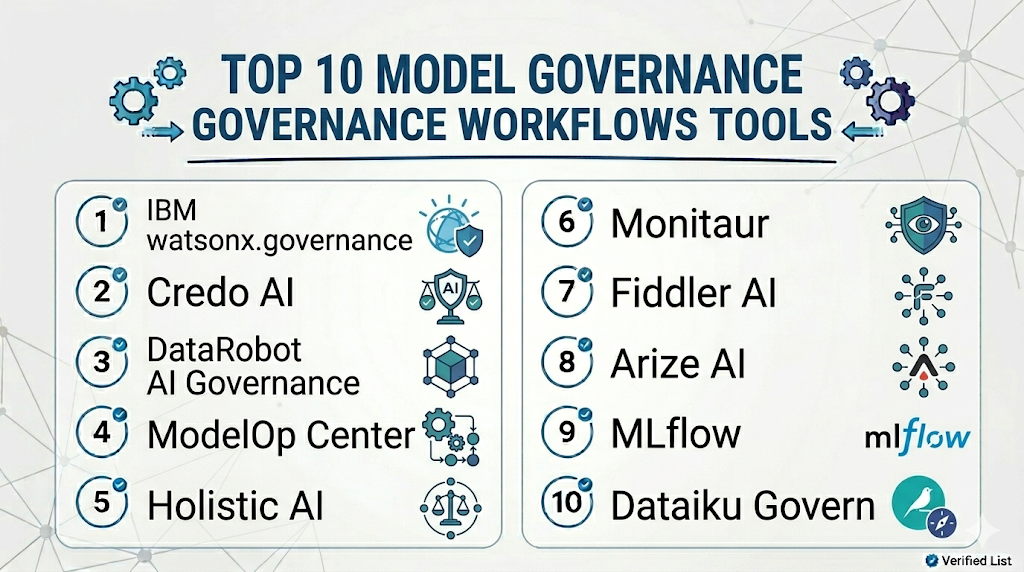

Top 10 Model Governance Workflow Tools

1 — IBM watsonx.governance

One-line verdict: Best for enterprises needing structured AI governance, risk workflows, and model lifecycle oversight.

Short description:

IBM watsonx.governance helps organizations govern AI models, manage risk, track lifecycle workflows, and support responsible AI processes. It is useful for enterprises that need governance visibility across model development, deployment, monitoring, and compliance review.

Standout Capabilities

- AI governance workflow support

- Model lifecycle documentation and oversight

- Risk and compliance management patterns

- Support for model inventory and review workflows

- Monitoring and evaluation evidence tracking depending on setup

- Useful for enterprise responsible AI programs

- Fits organizations standardizing AI governance practices

AI-Specific Depth

- Model support: Traditional ML, generative AI, and enterprise AI workflows depending on configuration

- RAG / knowledge integration: Varies / N/A, usually governed through connected application and documentation workflows

- Evaluation: Evaluation evidence, risk review, model validation, monitoring signals depending on setup

- Guardrails: Governance and policy workflows; technical guardrails may require companion tooling

- Observability: Governance dashboards, lifecycle status, risk views, audit evidence, and monitoring integrations depending on setup

Pros

- Strong enterprise governance positioning

- Useful for cross-functional risk and compliance review

- Good fit for organizations managing many AI systems

Cons

- May be more complex than smaller teams need

- Exact capabilities depend on configuration and broader IBM ecosystem usage

- Pricing and deployment details should be verified directly

Security & Compliance

Enterprise security features such as SSO, RBAC, audit logs, encryption, data controls, and admin workflows may vary by deployment and plan. Certifications are not publicly stated.

Deployment & Platforms

- Web-based enterprise platform

- Cloud and enterprise deployment options: Varies / N/A

- API and integration-based workflows

- Works with AI governance, risk, and model lifecycle processes

- Platform access depends on enterprise configuration

Integrations & Ecosystem

IBM watsonx.governance fits organizations that want governance workflows connected with model lifecycle management, risk review, and enterprise AI oversight.

- AI lifecycle workflows

- Model monitoring tools

- Data and governance systems

- Risk management processes

- Model documentation workflows

- Enterprise reporting

- MLOps integrations depending on setup

Pricing Model

Pricing is typically enterprise-oriented and depends on deployment, modules, scale, and support needs. Exact pricing is not publicly stated.

Best-Fit Scenarios

- Enterprises building formal AI governance programs

- Regulated teams needing model risk workflows

- Organizations needing executive AI governance reporting

2 — Credo AI

One-line verdict: Best for organizations needing responsible AI governance, risk assessment, and policy workflow management.

Short description:

Credo AI focuses on AI governance, risk management, policy alignment, and responsible AI workflows. It is useful for teams that need to document AI systems, assess risk, manage controls, and coordinate review across legal, compliance, security, and AI teams.

Standout Capabilities

- AI governance and risk assessment workflows

- Policy and control management

- AI system inventory and documentation

- Cross-functional review and approval patterns

- Evidence collection for AI governance processes

- Useful for responsible AI program management

- Supports governance across internal and third-party AI systems

AI-Specific Depth

- Model support: Traditional ML, generative AI, third-party AI, and AI system governance workflows

- RAG / knowledge integration: Varies / N/A, governed through system documentation and risk review

- Evaluation: Risk assessments, control evidence, review workflows, evaluation documentation depending on setup

- Guardrails: Policy and governance controls; technical guardrails may require companion tools

- Observability: Governance dashboards, risk status, control tracking, review history, and evidence management

Pros

- Strong focus on responsible AI governance

- Useful for policy-driven AI risk management

- Good fit for cross-functional governance teams

Cons

- Not a model training or serving platform

- Technical monitoring may require integrations

- Exact security, pricing, and deployment details should be verified directly

Security & Compliance

Security features such as SSO, RBAC, audit logs, encryption, retention controls, and admin workflows may vary by plan. Certifications are not publicly stated.

Deployment & Platforms

- Web-based platform

- Cloud deployment: Varies / N/A

- Self-hosted or hybrid: Varies / N/A

- Supports governance workflows across AI systems

- API and integration capabilities: Varies / N/A

Integrations & Ecosystem

Credo AI works well when model governance is driven by policy, risk, accountability, and review workflows rather than only technical model registry tasks.

- AI system inventory

- Risk assessment workflows

- Policy control frameworks

- Compliance review processes

- Evidence collection

- Third-party AI governance

- Enterprise reporting workflows

Pricing Model

Pricing is typically enterprise-oriented and depends on governance scope, users, modules, and support needs. Exact pricing is not publicly stated.

Best-Fit Scenarios

- Responsible AI governance programs

- AI risk and compliance teams

- Enterprises managing third-party and internal AI systems

3 — DataRobot AI Governance

One-line verdict: Best for DataRobot-centered teams needing model governance, monitoring, and lifecycle control.

Short description:

DataRobot AI Governance supports model management, documentation, monitoring, validation, and governance workflows within the DataRobot ecosystem. It is useful for organizations using DataRobot to build, deploy, monitor, and govern production models.

Standout Capabilities

- Model governance and lifecycle management

- Model inventory and documentation workflows

- Monitoring and performance visibility depending on setup

- Support for approval and validation processes

- Useful for governed enterprise AI deployment

- Fits teams using DataRobot for AI development

- Can connect governance with model operations

AI-Specific Depth

- Model support: Strongest within DataRobot workflows; broader model support varies by setup

- RAG / knowledge integration: Varies / N/A

- Evaluation: Model validation, monitoring, documentation, and governance evidence depending on configuration

- Guardrails: Governance and review workflows; technical guardrails vary by use case

- Observability: Model monitoring, lifecycle status, deployment visibility, risk and performance signals depending on setup

Pros

- Strong fit for teams already using DataRobot

- Connects governance with model lifecycle operations

- Useful for enterprise model oversight

Cons

- Best value appears within the DataRobot ecosystem

- May be less flexible for teams using many external stacks

- Exact features and pricing should be verified directly

Security & Compliance

Enterprise security features such as SSO, RBAC, audit logs, encryption, retention, and admin controls may vary by plan. Certifications are not publicly stated.

Deployment & Platforms

- Web-based enterprise platform

- Cloud and enterprise deployment options: Varies / N/A

- Works with DataRobot model lifecycle workflows

- API and integration support: Varies / N/A

- Platform access depends on configuration

Integrations & Ecosystem

DataRobot AI Governance fits teams that want governance tied closely to model development, deployment, and monitoring workflows inside DataRobot.

- DataRobot platform workflows

- Model deployment and monitoring

- Model documentation

- Validation workflows

- Risk review processes

- Enterprise reporting

- MLOps processes

Pricing Model

Pricing is typically enterprise-oriented and based on platform usage, modules, scale, and support needs. Exact pricing is not publicly stated.

Best-Fit Scenarios

- DataRobot customers needing governance workflows

- Enterprises managing model lifecycle risk

- Teams connecting governance with model operations

4 — ModelOp Center

One-line verdict: Best for enterprises needing model inventory, lifecycle governance, and operational risk control.

Short description:

ModelOp Center is focused on model governance and model operations across enterprise AI environments. It helps teams inventory models, manage lifecycle workflows, track risk, and coordinate governance across technical and business stakeholders.

Standout Capabilities

- Enterprise model inventory management

- Model lifecycle workflow governance

- Risk and compliance review patterns

- Approval and oversight workflows

- Model monitoring and operational visibility depending on setup

- Useful for regulated model risk programs

- Supports governance across diverse model environments

AI-Specific Depth

- Model support: Traditional ML, AI models, and enterprise model workflows depending on integration

- RAG / knowledge integration: Varies / N/A

- Evaluation: Validation evidence, model review, monitoring signals, lifecycle governance depending on setup

- Guardrails: Governance controls and policy workflows; technical guardrails require companion systems

- Observability: Model inventory, lifecycle stage, risk status, governance evidence, monitoring integrations

Pros

- Strong enterprise model governance focus

- Useful for regulated and high-control environments

- Helps centralize model inventory and lifecycle workflows

Cons

- Not a model development platform by itself

- Technical integrations may require planning

- Exact deployment and security details should be verified directly

Security & Compliance

Security features such as SSO, RBAC, audit logs, encryption, retention, and admin controls may vary by deployment and plan. Certifications are not publicly stated.

Deployment & Platforms

- Web-based enterprise platform

- Cloud, self-hosted, or hybrid: Varies / N/A

- API and integration-based workflows

- Works across model governance and operations processes

- Platform access depends on enterprise configuration

Integrations & Ecosystem

ModelOp Center fits organizations that need a central governance layer across different model development, deployment, and monitoring environments.

- Model inventories

- MLOps platforms

- Monitoring systems

- Risk workflows

- Validation processes

- Compliance reporting

- Enterprise model lifecycle workflows

Pricing Model

Pricing is typically enterprise-oriented and depends on deployment, scale, users, and governance scope. Exact pricing is not publicly stated.

Best-Fit Scenarios

- Enterprises with many models across teams

- Regulated model risk management programs

- Organizations needing centralized lifecycle governance

5 — Holistic AI

One-line verdict: Best for teams needing AI governance, risk management, and compliance workflow support.

Short description:

Holistic AI supports AI governance, risk, compliance, and assurance workflows. It is useful for organizations that need structured reviews, documentation, testing evidence, and governance processes for AI systems.

Standout Capabilities

- AI governance and risk workflow support

- AI system documentation and assessment

- Compliance and assurance process support

- Review workflows for responsible AI practices

- Risk tracking and governance reporting

- Useful for cross-functional AI oversight

- Supports governance across different AI system types

AI-Specific Depth

- Model support: Traditional AI, generative AI, and AI system governance workflows depending on setup

- RAG / knowledge integration: Varies / N/A

- Evaluation: Risk assessments, testing evidence, compliance review, governance documentation

- Guardrails: Policy and governance controls; technical guardrails may require companion tooling

- Observability: Governance status, risk dashboards, assessment records, review history depending on setup

Pros

- Strong governance and assurance orientation

- Useful for compliance-focused AI programs

- Helps coordinate non-technical and technical stakeholders

Cons

- Not an MLOps or deployment platform

- Technical monitoring may require integrations

- Exact platform details should be validated directly

Security & Compliance

Security features such as SSO, RBAC, audit logs, encryption, retention controls, and data residency may vary by plan. Certifications are not publicly stated.

Deployment & Platforms

- Web-based governance platform: Varies / N/A

- Cloud deployment: Varies / N/A

- Self-hosted or hybrid: Varies / N/A

- API and integration capabilities: Varies / N/A

- Works with AI governance and compliance workflows

Integrations & Ecosystem

Holistic AI is useful when teams need governance workflows that involve legal, compliance, risk, data science, and business owners.

- AI risk assessments

- Governance documentation

- Compliance workflows

- Review and approval processes

- Policy mapping

- Evidence collection

- Reporting workflows

Pricing Model

Pricing is not publicly stated. Buyers should verify pricing based on governance scope, users, modules, and deployment requirements.

Best-Fit Scenarios

- AI governance and assurance teams

- Compliance-led AI risk programs

- Organizations documenting and reviewing AI systems

6 — Monitaur

One-line verdict: Best for teams needing AI governance workflows, model documentation, and accountability tracking.

Short description:

Monitaur focuses on AI governance, model documentation, policy workflows, and accountability processes. It is useful for organizations that need clear records of model purpose, ownership, review, testing, and oversight.

Standout Capabilities

- AI governance workflow support

- Model documentation and evidence tracking

- Accountability and review processes

- Useful for regulated and high-risk AI workflows

- Supports model lifecycle visibility

- Helps align technical and compliance stakeholders

- Governance-focused reporting patterns

AI-Specific Depth

- Model support: Traditional ML and AI governance workflows depending on setup

- RAG / knowledge integration: Varies / N/A

- Evaluation: Documentation of testing evidence, review results, risk assessments, and monitoring signals

- Guardrails: Governance and policy workflows; technical guardrails require companion tooling

- Observability: Governance records, review history, model status, evidence artifacts, and workflow dashboards depending on setup

Pros

- Strong focus on accountability and documentation

- Useful for compliance and model risk workflows

- Helps formalize model review processes

Cons

- Not a model-serving or training platform

- Technical observability may require external integrations

- Exact feature coverage should be verified directly

Security & Compliance

Security features such as SSO, RBAC, audit logs, encryption, retention, and admin controls may vary by deployment and plan. Certifications are not publicly stated.

Deployment & Platforms

- Web-based governance platform: Varies / N/A

- Cloud deployment: Varies / N/A

- Self-hosted or hybrid: Varies / N/A

- API and integration capabilities: Varies / N/A

- Supports governance and documentation workflows

Integrations & Ecosystem

Monitaur fits teams that need evidence-driven governance for AI systems, especially when accountability and documentation are central.

- Model documentation

- Governance review workflows

- Risk assessment processes

- Compliance evidence

- Monitoring inputs through integrations

- Approval workflows

- Reporting processes

Pricing Model

Pricing is not publicly stated. Buyers should verify pricing based on governance requirements, scale, and deployment needs.

Best-Fit Scenarios

- Organizations needing model accountability records

- Regulated teams documenting AI risk

- Enterprises formalizing model governance workflows

7 — Fiddler AI

One-line verdict: Best for teams combining model governance with explainability, monitoring, and responsible AI visibility.

Short description:

Fiddler AI focuses on model monitoring, explainability, performance tracking, and responsible AI workflows. It is useful when governance teams need visibility into model behavior, drift, fairness, and decision explanations.

Standout Capabilities

- Model performance monitoring

- Drift and data quality tracking

- Explainability for model behavior

- Responsible AI and fairness visibility

- Dashboards for model health and risk

- Useful for regulated model review workflows

- Connects technical monitoring with governance evidence

AI-Specific Depth

- Model support: Multi-model monitoring workflows depending on integration

- RAG / knowledge integration: Varies / N/A

- Evaluation: Performance monitoring, explainability analysis, drift checks, fairness review patterns

- Guardrails: Varies / N/A, focused more on visibility and responsible AI monitoring

- Observability: Model health dashboards, drift metrics, explainability, alerts, and performance trends

Pros

- Strong explainability and monitoring focus

- Useful for governance evidence and model risk review

- Good fit for regulated AI workflows

Cons

- Not a full governance workflow platform by itself

- May require companion tools for approvals and model inventory

- Generative AI governance depth should be verified for specific use cases

Security & Compliance

Security controls such as SSO, RBAC, audit logs, encryption, retention, and data residency may vary by plan. Certifications are not publicly stated.

Deployment & Platforms

- Web-based platform

- Cloud deployment

- Enterprise deployment options: Varies / N/A

- API-based integrations

- Works with production AI and ML systems

Integrations & Ecosystem

Fiddler AI fits governance programs that need technical evidence about model performance, drift, fairness, and explainability.

- Model serving systems

- Data pipelines

- ML workflows

- Monitoring dashboards

- Governance workflows

- Risk review processes

- Business reporting workflows

Pricing Model

Pricing is typically enterprise or tiered, based on scale, monitoring volume, and deployment needs. Exact pricing is not publicly stated.

Best-Fit Scenarios

- Regulated teams needing explainability evidence

- Governance programs requiring model monitoring signals

- Organizations tracking fairness, drift, and performance risk

8 — Arize AI

One-line verdict: Best for AI platform teams needing observability evidence for governance and model risk reviews.

Short description:

Arize AI provides AI observability for model monitoring, drift detection, LLM monitoring, embeddings, and production performance. It is useful for governance workflows that require evidence from live model behavior.

Standout Capabilities

- Model observability and performance monitoring

- Drift detection across features, predictions, and embeddings

- LLM and RAG monitoring patterns

- Root-cause analysis for model issues

- Dashboards for model quality and risk signals

- Useful for production AI governance evidence

- Supports monitoring across model portfolios
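To make "drift detection" concrete for governance reviewers, the sketch below computes a Population Stability Index (PSI) between a baseline sample and a live sample of one numeric feature. This is a generic, commonly used drift statistic written in plain Python; it is not Arize AI's implementation or API, and the function name, bin count, and the smoothing constant `eps` are assumptions chosen for illustration.

```python
import math


def population_stability_index(expected: list[float], actual: list[float], bins: int = 10) -> float:
    """PSI between a baseline sample and a live sample, using equal-width bins over the baseline range."""
    if not expected or not actual:
        raise ValueError("both samples must be non-empty")
    lo, hi = min(expected), max(expected)
    if hi == lo:
        raise ValueError("baseline sample has no spread; cannot build bins")
    width = (hi - lo) / bins

    def proportions(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)   # clamp values outside the baseline range
            counts[idx] += 1
        eps = 1e-6                              # assumed floor so empty bins do not cause log(0)
        return [max(c / len(values), eps) for c in counts]

    e = proportions(expected)
    a = proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


baseline = [i / 100 for i in range(100)]        # training-time feature values
shifted = [x + 0.3 for x in baseline]           # live values after a shift
print(population_stability_index(baseline, baseline))
print(population_stability_index(baseline, shifted))
```

A value of zero means the binned distributions match; larger values indicate a bigger shift. Alert thresholds vary by team, so any cut-off used in a governance policy should be documented alongside the metric.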

AI-Specific Depth

- Model support: Multi-model workflows across traditional ML and generative AI systems

- RAG / knowledge integration: Supports RAG, retrieval, and embedding monitoring depending on setup

- Evaluation: Monitoring metrics, drift analysis, LLM evaluation workflows, human review patterns

- Guardrails: Varies / N/A, usually paired with governance and safety controls

- Observability: Traces, latency, embeddings, drift metrics, model performance, quality dashboards

Pros

- Strong production observability evidence

- Useful for both ML and LLM governance signals

- Helps governance teams understand model behavior over time

Cons

- Not a complete policy governance platform alone

- Requires integration with model systems

- Exact enterprise details should be verified directly

Security & Compliance

Security features such as SSO, RBAC, audit logs, encryption, retention, and data residency may vary by plan. Certifications are not publicly stated.

Deployment & Platforms

- Web-based platform

- Cloud deployment

- Enterprise deployment options: Varies / N/A

- API and SDK-based workflows

- Works with production AI systems through integrations

Integrations & Ecosystem

Arize AI can provide monitoring evidence that supports governance reviews, incident response, and model lifecycle decisions.

- ML pipelines

- Model serving platforms

- Data warehouses

- Feature stores

- LLM applications

- RAG pipelines

- Monitoring and alerting workflows

Pricing Model

Pricing is typically tiered or enterprise-oriented, depending on usage, model volume, monitoring needs, and support requirements. Exact pricing is not publicly stated.

Best-Fit Scenarios

- AI governance programs needing live monitoring evidence

- Teams tracking drift, quality, and model health

- Enterprises managing model portfolios

9 — MLflow

One-line verdict: Best for teams needing model registry, lifecycle tracking, and experiment evidence for governance.

Short description:

MLflow supports experiment tracking, model packaging, model registry workflows, and lifecycle management. It is useful for governance teams that need model lineage, version history, metrics, and promotion status.

Standout Capabilities

- Experiment tracking for parameters, metrics, and artifacts

- Model registry and lifecycle stage tracking

- Model versioning and promotion workflows

- Useful for reproducibility and lineage

- Works across many ML frameworks

- Flexible for custom governance processes

- Strong fit as a technical model record layer

AI-Specific Depth

- Model support: BYO models across many ML frameworks and workflows

- RAG / knowledge integration: Varies / N/A, can track custom RAG or embedding experiments if designed

- Evaluation: Experiment metrics, model comparison, custom evaluation tracking

- Guardrails: Varies / N/A

- Observability: Experiment history, artifacts, model registry metadata, parameters, metrics, and lineage depending on setup

Pros

- Strong technical tracking and registry foundation

- Useful for reproducibility and model lifecycle evidence

- Flexible across many model development stacks

Cons

- Not a full governance workflow system by itself

- Approval, policy, and risk workflows require companion processes or tools

- Security and governance depend on deployment and hosting setup

Security & Compliance

Security depends on deployment, identity integration, access control, artifact storage, encryption, logging, and hosting model. Certifications are not publicly stated.

Deployment & Platforms

- Open-source and managed options depending on environment

- Cloud, self-hosted, or hybrid

- Web-based tracking UI depending on setup

- Works across Windows, macOS, and Linux development environments

- Integrates with training and deployment workflows

Integrations & Ecosystem

MLflow is useful as a technical backbone for model governance because it records experiments, artifacts, versions, and model lifecycle stages.

- ML frameworks

- Model registries

- Artifact stores

- CI/CD pipelines

- Cloud ML platforms

- Experiment tracking workflows

- Deployment integrations

Pricing Model

Open-source usage is available. Managed or enterprise pricing varies by provider and deployment model.

Best-Fit Scenarios

- Teams needing model registry evidence

- MLOps teams tracking model lifecycle history

- Organizations building custom governance workflows

10 — Dataiku Govern

One-line verdict: Best for Dataiku teams needing AI governance, project oversight, and model lifecycle coordination.

Short description:

Dataiku Govern supports governance workflows for AI and analytics projects inside the Dataiku ecosystem. It is useful for organizations that want oversight across AI projects, model development, approvals, and operational lifecycle processes.

Standout Capabilities

- AI project governance workflows

- Model and analytics project oversight

- Approval and review process support

- Lifecycle visibility for AI initiatives

- Useful for Dataiku-centered organizations

- Helps align technical and business teams

- Supports governance reporting and accountability workflows

AI-Specific Depth

- Model support: Strongest within Dataiku workflows; broader support varies by integration

- RAG / knowledge integration: Varies / N/A

- Evaluation: Project review, model validation evidence, approval workflows, governance tracking depending on setup

- Guardrails: Varies / N/A, governance controls depend on configuration

- Observability: Governance status, project lifecycle visibility, review records, and reporting depending on setup

Pros

- Strong fit for Dataiku users

- Helps connect business governance with AI project workflows

- Useful for organizations managing many analytics and AI initiatives

Cons

- Best value appears inside the Dataiku ecosystem

- May be less flexible for non-Dataiku environments

- Exact feature depth should be verified directly

Security & Compliance

Security features such as SSO, RBAC, audit logs, encryption, retention, and admin controls may vary by deployment and plan. Certifications are not publicly stated.

Deployment & Platforms

- Web-based governance workflow platform

- Cloud and enterprise deployment options: Varies / N/A

- Works with Dataiku ecosystem workflows

- API and integration capabilities: Varies / N/A

- Platform access depends on configuration

Integrations & Ecosystem

Dataiku Govern fits organizations already using Dataiku for AI and analytics development. It helps connect project lifecycle governance with approval and oversight workflows.

- Dataiku project workflows

- AI project documentation

- Model lifecycle processes

- Approval workflows

- Governance reporting

- Analytics project oversight

- Business review processes

Pricing Model

Pricing is typically enterprise-oriented and depends on platform usage, modules, users, and deployment needs. Exact pricing is not publicly stated.

Best-Fit Scenarios

- Dataiku-centered AI governance teams

- Organizations governing analytics and AI projects

- Enterprises coordinating business and technical model reviews

Comparison Table

| Tool Name | Best For | Deployment (Cloud / Self-hosted / Hybrid) | Model Flexibility (Hosted / BYO / Multi-model / Open-source) | Strength | Watch-Out | Public Rating |
|---|---|---|---|---|---|---|
| IBM watsonx.governance | Enterprise AI governance | Cloud, hybrid varies | Multi-model | Governance and risk workflows | Enterprise setup needed | N/A |
| Credo AI | Responsible AI governance | Cloud, hybrid varies | Multi-system governance | Policy and risk management | Not model-serving tool | N/A |
| DataRobot AI Governance | DataRobot model governance | Cloud, hybrid varies | DataRobot-centered, multi-model varies | Lifecycle governance | Ecosystem dependency | N/A |
| ModelOp Center | Model risk operations | Cloud, self-hosted, hybrid varies | Multi-model | Centralized model inventory | Integration planning needed | N/A |
| Holistic AI | AI compliance workflows | Cloud, hybrid varies | Multi-system governance | Assurance and risk review | Technical monitoring needs integrations | N/A |
| Monitaur | Accountability workflows | Cloud, hybrid varies | Multi-system governance | Documentation and evidence | Feature depth should be verified | N/A |
| Fiddler AI | Explainability evidence | Cloud, hybrid varies | Multi-model | Monitoring and explainability | Not full governance suite alone | N/A |
| Arize AI | Observability evidence | Cloud, hybrid varies | Multi-model | Production model visibility | Needs governance companion tools | N/A |
| MLflow | Model registry evidence | Cloud, self-hosted, hybrid | BYO, multi-framework | Tracking and lineage | Not policy workflow alone | N/A |
| Dataiku Govern | Dataiku project governance | Cloud, hybrid varies | Dataiku-centered | AI project oversight | Best inside Dataiku ecosystem | N/A |

Scoring & Evaluation (Transparent Rubric)

| Tool | Core | Reliability/Eval | Guardrails | Integrations | Ease | Perf/Cost | Security/Admin | Support | Weighted Total |
|---|---|---|---|---|---|---|---|---|---|
| IBM watsonx.governance | 9 | 8 | 8 | 8 | 7 | 6 | 9 | 8 | 8.00 |
| Credo AI | 9 | 8 | 8 | 8 | 8 | 6 | 8 | 8 | 8.00 |
| DataRobot AI Governance | 8 | 8 | 7 | 8 | 8 | 7 | 8 | 8 | 7.80 |
| ModelOp Center | 9 | 8 | 7 | 8 | 7 | 6 | 8 | 8 | 7.70 |
| Holistic AI | 8 | 8 | 8 | 7 | 7 | 6 | 8 | 7 | 7.45 |
| Monitaur | 8 | 7 | 7 | 7 | 7 | 6 | 8 | 7 | 7.15 |
| Fiddler AI | 7 | 8 | 6 | 8 | 7 | 7 | 8 | 8 | 7.35 |
| Arize AI | 7 | 8 | 6 | 9 | 7 | 8 | 8 | 8 | 7.60 |
| MLflow | 7 | 7 | 4 | 8 | 8 | 7 | 6 | 8 | 6.90 |
| Dataiku Govern | 8 | 7 | 7 | 8 | 8 | 7 | 8 | 8 | 7.65 |
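
Buyers who want to re-score tools against their own priorities can compute a weighted total themselves. The sketch below shows the arithmetic only. The weights in `WEIGHTS` and the scores for the made-up "Tool X" are assumptions for illustration; the weights behind the Weighted Total column above are not stated here, so this is not expected to reproduce that column.

```python
from __future__ import annotations

# Hypothetical weights (assumption): adjust to your own priorities.
WEIGHTS = {
    "core": 0.25,
    "reliability_eval": 0.15,
    "guardrails": 0.10,
    "integrations": 0.15,
    "ease": 0.10,
    "perf_cost": 0.10,
    "security_admin": 0.10,
    "support": 0.05,
}


def weighted_total(scores: dict[str, float], weights: dict[str, float] = WEIGHTS) -> float:
    """Return the weight-normalized average of per-criterion scores."""
    if set(scores) != set(weights):
        raise ValueError("scores and weights must cover the same criteria")
    total_weight = sum(weights.values())
    raw = sum(scores[name] * weights[name] for name in weights)
    return round(raw / total_weight, 2)


# Made-up scores for a hypothetical "Tool X", on the same 1-10 scale.
tool_x = {
    "core": 8,
    "reliability_eval": 7,
    "guardrails": 6,
    "integrations": 9,
    "ease": 8,
    "perf_cost": 7,
    "security_admin": 8,
    "support": 7,
}

print(weighted_total(tool_x))
```

Dividing by the sum of the weights keeps the result on the original scale even if the chosen weights do not add up to exactly 1.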

Top 3 for Enterprise

- IBM watsonx.governance

- Credo AI

- ModelOp Center

Top 3 for SMB

- MLflow

- Fiddler AI

- Arize AI

Top 3 for Developers

- MLflow

- Arize AI

- Fiddler AI

Which Model Governance Workflows Tool Is Right for You?

Solo / Freelancer

Solo users usually do not need a full governance workflow platform. If you are building a small AI project, start with basic documentation, model version tracking, evaluation records, and clear notes about data sources and limitations.

Recommended options:

- MLflow for experiment tracking and model version history

- Arize AI if you need monitoring evidence for a live model

- Fiddler AI if explainability and performance visibility matter

For low-risk prototypes, a simple model card, test report, and manual approval checklist may be enough.

SMB

Small and midsize businesses should focus on practical governance without creating heavy bureaucracy. The best tool should help teams answer basic questions: What AI systems exist, who owns them, how were they tested, and are they still safe?

Recommended options:

- MLflow for technical lineage and registry evidence

- Arize AI for production monitoring evidence

- Fiddler AI for explainability and risk signals

- Credo AI if responsible AI governance is becoming a formal business requirement

SMBs should prioritize governance workflows that are simple enough for teams to actually use.

Mid-Market

Mid-market organizations often have multiple AI systems across product, operations, analytics, support, and risk workflows. They need model inventory, approvals, evidence collection, and monitoring signals.

Recommended options:

- Credo AI for responsible AI policy and risk workflows

- ModelOp Center for centralized model lifecycle governance

- Dataiku Govern if Dataiku is already central to AI development

- Arize AI or Fiddler AI for monitoring evidence

- MLflow as a technical registry and lineage layer

Mid-market buyers should evaluate how governance connects with existing MLOps, data governance, and monitoring processes.

Enterprise

Enterprises need formal governance workflows, auditability, policy mapping, role-based access, ownership models, risk tiers, approval workflows, monitoring evidence, and executive reporting.

Recommended options:

- IBM watsonx.governance for enterprise AI governance workflows

- Credo AI for responsible AI policy and control management

- ModelOp Center for model operations governance

- DataRobot AI Governance for DataRobot-centered organizations

- Dataiku Govern for Dataiku-centered organizations

- Fiddler AI or Arize AI for monitoring and explainability evidence

Enterprise buyers should verify RBAC, SSO, audit logs, data retention, deployment boundaries, reporting workflows, integrations, and support expectations.

Regulated industries (finance / healthcare / public sector)

Regulated teams need governance workflows that produce evidence. It is not enough to say a model was reviewed; teams need records showing who reviewed it, what was tested, what risks were accepted, and how ongoing monitoring is handled.

Important priorities:

- Model inventory and ownership

- Risk tiering and approval workflows

- Data lineage and model documentation

- Bias, fairness, and explainability evidence

- Drift, quality, and monitoring records

- Audit logs and review history

- Human sign-off for high-risk systems

- Incident handling and rollback processes

- Third-party AI vendor documentation

- Retention and residency controls

Strong-fit options may include IBM watsonx.governance, Credo AI, ModelOp Center, Holistic AI, Monitaur, Fiddler AI, and Arize AI, depending on governance scope and existing systems.

Budget vs premium

Budget-conscious teams should start with lightweight governance workflows and technical evidence before adopting a full enterprise platform.

Budget-friendly direction:

- MLflow for model registry and experiment evidence

- Manual model cards and review checklists

- Arize AI or Fiddler AI when monitoring evidence becomes necessary

- Existing data governance tools if already available internally

Premium direction:

- IBM watsonx.governance for enterprise AI governance

- Credo AI for responsible AI risk and policy workflows

- ModelOp Center for model risk operations

- DataRobot AI Governance or Dataiku Govern if aligned with existing platform investments

- Holistic AI or Monitaur for compliance and accountability workflows

The right choice depends on whether your biggest need is policy governance, model inventory, monitoring evidence, risk review, ecosystem alignment, or audit readiness.

Build vs buy (when to DIY)

DIY can work when:

- You have a small number of models

- AI systems are low risk

- Reviews are simple and infrequent

- You can maintain documentation manually

- Compliance requirements are light

- Technical teams already track model versions and evaluations

Buy or adopt a dedicated platform when:

- AI systems affect customers or regulated decisions

- Multiple teams deploy models

- You need formal approvals and audit trails

- You need risk tiering and control mapping

- You manage third-party AI systems

- You need monitoring evidence tied to governance

- Executives need governance dashboards

- You need repeatable review and incident workflows

A practical approach is to start with a model inventory and review checklist, then move to a governance platform as AI risk, scale, and regulatory pressure increase.

Implementation Playbook (30 / 60 / 90 Days)

30 Days: Pilot and success metrics

Start with one business-critical AI system. Do not try to govern every model at once before the process has worked on one.

Key tasks:

- Create an initial inventory of active AI systems

- Select one high-impact model or LLM application for the governance pilot

- Identify model owner, business owner, technical owner, and reviewer

- Document model purpose, data sources, users, outputs, and risk level

- Collect existing evaluation results and monitoring evidence

- Define required approval steps before deployment or continued use

- Create a basic model card or governance record

- Define success metrics such as completeness, review time, risk coverage, and issue resolution

- Document the rollback and incident response process

- Review privacy, retention, and access controls

AI-specific tasks:

- Build an initial evaluation evidence checklist

- Add prompt, model, and data version tracking where relevant

- Add red-team and hallucination review for LLM workflows

- Track latency, cost, and monitoring signals

- Define incident handling for unsafe or degraded AI behavior

60 Days: Harden security, evaluation, and rollout

After the pilot works, expand governance workflows and connect them with technical systems.

Key tasks:

- Add risk tiers for different AI systems

- Create approval templates by risk level

- Connect governance records to model registry or inventory tools

- Add review steps for data, security, legal, and compliance where needed

- Add monitoring evidence for production models

- Add audit logs and ownership history

- Define exception handling and remediation workflows

- Train model owners and reviewers

- Expand governance to more models or business units

- Create dashboards for overdue reviews and high-risk systems
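
Approval templates by risk level can start as a small lookup table before any platform is involved. The following is a minimal sketch; the tier names, the role names, and the `missing_signoffs` helper are all assumptions chosen for illustration, not the configuration of any tool listed above.

```python
# Hypothetical approval template (assumption): which roles must sign off per tier.
REQUIRED_SIGNOFFS = {
    "low": {"technical_owner"},
    "medium": {"technical_owner", "business_owner"},
    "high": {"technical_owner", "business_owner", "risk_reviewer", "compliance"},
}


def missing_signoffs(risk_tier: str, signoffs: list) -> list:
    """Return the roles that still need to approve, in alphabetical order."""
    if risk_tier not in REQUIRED_SIGNOFFS:
        raise ValueError(f"unknown risk tier: {risk_tier!r}")
    return sorted(REQUIRED_SIGNOFFS[risk_tier] - set(signoffs))


def is_approved(risk_tier: str, signoffs: list) -> bool:
    """A system is approved only when no required sign-off is missing."""
    return not missing_signoffs(risk_tier, signoffs)


collected = ["technical_owner", "business_owner"]
print(missing_signoffs("high", collected))
print(is_approved("medium", collected))
```

Keeping the template as data rather than logic makes it easy to review with legal and compliance teams and to change without touching code.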

AI-specific tasks:

- Add prompt injection and jailbreak review for LLM applications

- Add RAG quality and hallucination evidence where relevant

- Add guardrail failure tracking

- Track model version, prompt version, and data version together

- Convert incidents into governance review triggers

- Review sensitive data exposure in prompts, logs, and training data
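
One way to track model version, prompt version, and data version together is to treat the three as a single release and derive one identifier from them. This is a sketch under assumptions: the `ReleaseVersions` type, its field names, and the 12-character truncated SHA-256 identifier are illustrative choices, not a standard.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class ReleaseVersions:
    """Hypothetical record of the three versions that define one release."""
    model_version: str
    prompt_version: str
    data_version: str


def release_fingerprint(versions: ReleaseVersions) -> str:
    """Derive a short, stable identifier from the combined versions."""
    payload = json.dumps(asdict(versions), sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()[:12]


approved = ReleaseVersions("model-1.4.0", "prompt-v7", "dataset-2024-05")
candidate = ReleaseVersions("model-1.4.0", "prompt-v8", "dataset-2024-05")

# A prompt-only change still yields a different fingerprint, so it cannot
# silently reuse an approval granted to the earlier combination.
print(release_fingerprint(approved) != release_fingerprint(candidate))
```

Attaching the fingerprint to approvals and monitoring evidence ties each record to the exact combination that was reviewed.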

90 Days: Optimize cost, latency, governance, and scale

Once governance workflows are reliable, scale them across the organization and connect them with executive oversight.

Key tasks:

- Standardize governance templates

- Create model lifecycle policies

- Automate evidence collection where possible

- Add executive dashboards for AI inventory, risk, and review status

- Define periodic review cadence by risk tier

- Add model retirement and deprecation workflows

- Review third-party AI systems and vendor models

- Connect governance with incident management

- Review vendor lock-in and export options

- Create an internal AI governance playbook
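
A periodic review cadence by risk tier reduces to a date calculation that can feed an overdue-review dashboard. The sketch below assumes review intervals of 90, 180, and 365 days; those numbers and the tier names are placeholders, and the right values depend on your own policy and regulatory context.

```python
from datetime import date, timedelta

# Hypothetical cadence (assumption): days between reviews per risk tier.
REVIEW_INTERVAL_DAYS = {"high": 90, "medium": 180, "low": 365}


def next_review_due(last_review: date, risk_tier: str) -> date:
    """Return the date by which the next periodic review should happen."""
    return last_review + timedelta(days=REVIEW_INTERVAL_DAYS[risk_tier])


def is_overdue(last_review: date, risk_tier: str, today: date) -> bool:
    """True when the review window has already passed."""
    return today > next_review_due(last_review, risk_tier)


last = date(2024, 1, 1)
print(next_review_due(last, "high"))
print(is_overdue(last, "high", today=date(2024, 4, 1)))
print(is_overdue(last, "low", today=date(2024, 4, 1)))
```

Incidents or significant model changes would normally trigger a review earlier than the scheduled date; this sketch covers only the calendar-based part.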

AI-specific tasks:

- Add advanced red-team review for high-risk LLM applications

- Monitor hallucination, drift, latency, cost, and unsafe output trends

- Add governance gates before model, prompt, or retrieval changes

- Improve fallback and rollback governance

- Add human review for regulated outputs

- Scale evaluation, guardrails, monitoring, and governance evidence across teams
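
A governance gate before model, prompt, or retrieval changes can be sketched as a comparison between the approved configuration and the proposed one. Everything named here is an assumption for illustration: the three component keys, the `changed_components` and `gate` helpers, and the idea of passing in the set of components that have been re-approved.

```python
GOVERNED_COMPONENTS = ("model", "prompt", "retrieval")


def changed_components(approved: dict, proposed: dict) -> list:
    """List governed components whose version differs from the approved one."""
    return [
        name
        for name in GOVERNED_COMPONENTS
        if approved.get(name) != proposed.get(name)
    ]


def gate(approved: dict, proposed: dict, reapproved=frozenset()):
    """Return (allowed, blocked); a release is allowed only if every changed
    component has been re-approved."""
    blocked = [c for c in changed_components(approved, proposed) if c not in reapproved]
    return (not blocked, blocked)


approved = {"model": "1.4.0", "prompt": "v7", "retrieval": "index-2024-05"}
proposed = {"model": "1.4.0", "prompt": "v8", "retrieval": "index-2024-06"}

print(gate(approved, proposed))
print(gate(approved, proposed, reapproved={"prompt", "retrieval"}))
```

In practice a check like this would run in the release pipeline, with the re-approval set read from the governance record rather than passed by hand.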

Common Mistakes & How to Avoid Them

- Treating governance as paperwork only: Governance should connect to real model behavior, evaluation evidence, monitoring, incidents, and release decisions.

- No complete model inventory: Teams cannot govern models they do not know exist.

- Ignoring third-party AI systems: Vendor AI tools, embedded AI features, and hosted models also need governance review.

- No clear ownership: Every AI system should have business, technical, and risk owners.

- Skipping evaluation evidence: Approval should be based on testing, monitoring, and documented risk review.

- No risk tiering: Low-risk internal models and high-risk decision systems should not follow the same review process.

- Ignoring LLM-specific risks: Prompt injection, hallucinations, data leakage, unsafe outputs, and retrieval failures need governance coverage.

- No audit trail: Governance workflows should record who approved what, when, why, and based on which evidence.

- Overcomplicating early workflows: If governance is too heavy, teams will avoid it. Start practical and scale by risk.

- No monitoring connection: Governance should use drift, quality, latency, cost, and incident signals from production systems.

- No incident response process: AI failures should trigger review, remediation, rollback, and updated controls.

- Weak data governance link: Model governance depends on knowing what data was used, where it came from, and whether it is allowed.

- Vendor lock-in without export planning: Governance records, model cards, inventories, and evidence should be portable where possible.

- No retirement process: Old or unused models should be reviewed, archived, replaced, or decommissioned safely.
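
To make the audit trail point concrete, the sketch below records who approved what, when, why, and based on which evidence in an append-only log. Chaining each entry to the previous one with a hash is one possible design for detecting later edits; it is an assumption added here, not something the tools above are known to do, and the function and field names are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone


def _entry_hash(entry: dict) -> str:
    """Hash every field except the stored hash itself."""
    body = {k: v for k, v in entry.items() if k != "entry_hash"}
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_entry(log: list, approver: str, decision: str, reason: str, evidence: list) -> dict:
    """Append an approval record that links to the previous entry."""
    entry = {
        "approver": approver,      # who
        "decision": decision,      # what
        "timestamp": datetime.now(timezone.utc).isoformat(),  # when
        "reason": reason,          # why
        "evidence": list(evidence),  # based on which evidence
        "prev_hash": log[-1]["entry_hash"] if log else "",
    }
    entry["entry_hash"] = _entry_hash(entry)
    log.append(entry)
    return entry


def verify(log: list) -> bool:
    """Check that no entry was altered, removed, or reordered."""
    prev = ""
    for entry in log:
        if entry["prev_hash"] != prev or entry["entry_hash"] != _entry_hash(entry):
            return False
        prev = entry["entry_hash"]
    return True


log = []
append_entry(log, "risk.reviewer", "approve model 1.4.0", "passed fairness review", ["eval-report-12"])
append_entry(log, "business.owner", "approve rollout", "pilot metrics met", ["pilot-summary"])
print(verify(log))
```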

FAQs

1. What is a model governance workflow?

A model governance workflow is a structured process for documenting, reviewing, approving, monitoring, and retiring AI models. It defines who owns the model and what evidence is required before and after deployment.

2. Why is model governance important?

Model governance helps reduce risk by ensuring models are tested, documented, approved, monitored, and accountable. It is especially important when AI affects customers, compliance, safety, or business decisions.

3. Is model governance only for regulated industries?

No. Regulated industries need it most, but any company using production AI can benefit from model inventory, ownership, review workflows, and monitoring evidence.

4. How is model governance different from MLOps?

MLOps focuses on building, deploying, and operating models. Model governance focuses on accountability, review, documentation, approval, risk controls, and compliance evidence.

5. Do LLM applications need model governance?

Yes. LLM systems need governance for prompts, models, retrieval sources, evaluation results, hallucination risk, guardrails, monitoring, and human review workflows.

6. Can governance tools manage BYO models?

Many governance platforms can document and govern BYO models, but technical integration varies. Buyers should verify registry, monitoring, and deployment connectivity.

7. Do governance workflows support self-hosted models?

Yes, governance can apply to self-hosted models, cloud-hosted models, open-source models, and third-party vendor AI systems. Tool support varies by integration.

8. How do these tools help with privacy?

They help document data usage, ownership, access controls, retention policies, and review evidence. Technical privacy enforcement may still require data governance and security tools.

9. What should be included in a model governance record?

A strong record includes model purpose, owner, data sources, model version, training method, evaluation results, risk tier, approvals, monitoring plan, limitations, and incident process.
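
The fields listed above map naturally onto a simple data structure. This is a minimal sketch, assuming plain Python types for each field; the `GovernanceRecord` class and the `missing_fields` and `completeness` helpers are hypothetical names, and a real platform would define its own schema.

```python
from dataclasses import dataclass, field, fields


@dataclass
class GovernanceRecord:
    """One record per AI system, using the fields named in the answer above."""
    purpose: str = ""
    owner: str = ""
    data_sources: list = field(default_factory=list)
    model_version: str = ""
    training_method: str = ""
    evaluation_results: dict = field(default_factory=dict)
    risk_tier: str = ""
    approvals: list = field(default_factory=list)
    monitoring_plan: str = ""
    limitations: str = ""
    incident_process: str = ""


def missing_fields(record: GovernanceRecord) -> list:
    """Names of fields that are still empty."""
    return [f.name for f in fields(record) if not getattr(record, f.name)]


def completeness(record: GovernanceRecord) -> float:
    """Share of fields that have been filled in, from 0.0 to 1.0."""
    total = len(fields(record))
    return (total - len(missing_fields(record))) / total


draft = GovernanceRecord(
    purpose="Triage inbound support tickets",
    owner="support-platform-team",
    model_version="1.4.0",
    risk_tier="medium",
)
print(missing_fields(draft))
print(round(completeness(draft), 2))
```

A completeness figure like this can double as a pilot success metric, since it shows how much of each record is still undocumented.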

10. How often should models be reviewed?

Review frequency depends on risk level, business impact, regulation, drift, incidents, and model changes. High-risk systems should be reviewed more often than low-risk internal tools.

11. Can governance tools detect hallucinations or drift?

Some governance platforms track evidence from monitoring tools, while observability platforms may directly detect drift or quality issues. Often, governance and monitoring tools work together.

12. What are alternatives to model governance platforms?

Alternatives include spreadsheets, model cards, internal approval forms, data governance tools, model registries, ticketing systems, and custom workflows. These can work early but become harder to scale.

13. Can I switch governance tools later?

Yes, but switching is easier if model inventories, evidence, documentation, approvals, and audit records can be exported or recreated reliably.

14. What is the biggest risk without model governance?

The biggest risk is losing control over who built a model, what it uses, how it was tested, whether it is safe, and who is accountable if it fails.

15. Do governance workflows replace model monitoring?

No. Governance defines accountability and controls. Monitoring provides live evidence about model behavior. Production AI teams usually need both.

Conclusion

Model Governance Workflows help organizations move from informal AI experimentation to accountable, auditable, and risk-aware AI operations. The best tool depends on context: IBM watsonx.governance, Credo AI, ModelOp Center, Holistic AI, and Monitaur fit formal governance and risk workflows; DataRobot AI Governance and Dataiku Govern fit teams already using those ecosystems; Fiddler AI and Arize AI provide monitoring and explainability evidence; and MLflow supports technical lineage, registry, and model lifecycle records. There is no single universal winner because governance needs vary by risk level, industry, tool stack, model type, and compliance expectations. Start by shortlisting three tools, run a pilot on one real AI system, verify security, evaluation evidence, monitoring connections, approval workflows, and auditability, then scale governance across more models and AI applications.