Introduction

MLOps Lifecycle Management Platforms are software solutions designed to streamline the entire machine learning (ML) workflow, from data ingestion and feature engineering to model deployment, monitoring, and governance. They bridge the gap between data science experimentation and production-level operations, enabling teams to operationalize ML models efficiently and consistently. As AI adoption accelerates, enterprises face increasing pressure to scale ML models across multiple environments while ensuring reliability, security, and compliance.

These platforms are vital for organizations seeking to reduce time-to-market for AI initiatives, improve model reproducibility, and enforce governance standards. Real-world use cases include: deploying predictive models for customer churn, automating anomaly detection in IoT data, managing recommendation engines for e-commerce, orchestrating NLP pipelines for document analysis, monitoring real-time fraud detection models, and auditing AI models for regulatory compliance. Key evaluation criteria buyers should focus on include: deployment flexibility, scalability, model monitoring, reproducibility, security & compliance, integration with data pipelines, cost efficiency, latency optimization, guardrails, AI evaluation/testing frameworks, multi-model support, and observability.

Best for: AI engineers, ML Ops teams, data-driven enterprises, and organizations in regulated industries like finance, healthcare, and telecom.

Not ideal for: companies with very simple ML needs, low volume experimentation, or where manual model deployment and monitoring are sufficient.

What’s Changed in MLOps Lifecycle Management Platforms

- Integration with agentic workflows for automated model retraining and pipeline optimization.

- Native support for multimodal AI pipelines including text, image, audio, and tabular data.

- Advanced evaluation frameworks to detect model drift, bias, and hallucinations in real-time.

- Guardrails against prompt injection, adversarial inputs, and unsafe model outputs.

- Enhanced observability with tracing of inference, token usage, and cost per request.

- Multi-cloud and hybrid deployment options with BYO model capabilities.

- Improved governance and compliance tracking for data residency, retention, and audit.

- Cost and latency optimization features for routing workloads to the most efficient compute.

- Centralized model registry with versioning and rollback capabilities.

- Support for RAG (retrieval-augmented generation) pipelines and vector database integrations.

- End-to-end metadata tracking for reproducibility and lineage visualization.

- Native security and identity controls, including SSO, RBAC, and audit logging.

Quick Buyer Checklist (Scan-Friendly)

- Data privacy and retention policies are enforceable.

- Model choice: hosted vs BYO vs open-source support.

- Support for RAG pipelines and connectors to knowledge bases.

- Built-in evaluation frameworks for regression, drift, and bias.

- Guardrails for prompt injection, adversarial inputs, and unsafe outputs.

- Latency and cost controls for high-volume deployments.

- Observability and monitoring for inference and pipeline performance.

- Admin controls and audit logging for compliance.

- Vendor lock-in risk assessment and portability options.

- Versioning and rollback capabilities for model lifecycle management.

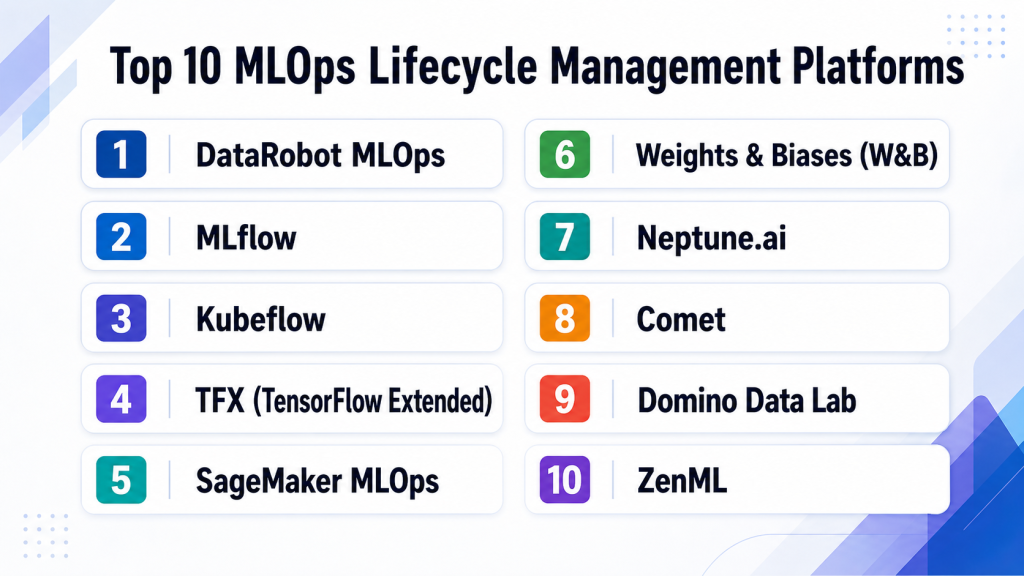

Top 10 MLOps Lifecycle Management Platforms

1 — DataRobot MLOps

One-line verdict: Best for enterprises seeking end-to-end automation and scalable ML deployment.

Short description: DataRobot MLOps centralizes model deployment, monitoring, and governance for enterprise AI teams, supporting both internal and external ML workflows.

Standout Capabilities

- Automated model deployment and retraining pipelines.

- Centralized model registry with versioning.

- Advanced monitoring for performance, drift, and bias.

- BYO model support with multi-framework integration.

- Cost and latency optimization features.

- Governance dashboards and audit-ready reporting.

- Integration with popular cloud platforms and data lakes.

AI-Specific Depth

- Model support: Proprietary + BYO models.

- RAG / knowledge integration: Varies / N/A

- Evaluation: Regression, bias, drift detection.

- Guardrails: Policy checks, prompt/adversarial input handling.

- Observability: Tracing, token/cost metrics, latency dashboards.

Pros

- Enterprise-ready with strong compliance features.

- Scalable pipelines for multiple models.

- Detailed monitoring and governance tools.

Cons

- Steeper learning curve for small teams.

- Cost may be high for smaller deployments.

- Limited open-source framework support.

Security & Compliance

- SSO/SAML, RBAC, audit logs, encryption, data residency controls.

- Certifications: Not publicly stated.

Deployment & Platforms

- Web, Cloud, Hybrid.

- Varies / N/A for on-premises.

Integrations & Ecosystem

Extensive integrations with cloud platforms, data warehouses, and ML frameworks.

- APIs and SDKs for Python and R

- Connectors for Snowflake, AWS S3, Azure Data Lake

- CI/CD integration

- Slack and Teams notifications

- Extensible for custom monitoring plugins

Pricing Model

- Usage-based tiered enterprise subscription.

- Not publicly stated.

Best-Fit Scenarios

- Large enterprises with multiple ML teams.

- Regulated industries requiring auditability.

- Organizations scaling ML models across regions.

2 — MLflow

One-line verdict: Ideal for developers and data scientists needing open-source, flexible lifecycle management.

Short description: MLflow is an open-source MLOps platform providing experiment tracking, model registry, and deployment support across frameworks.

Standout Capabilities

- Experiment tracking and version control.

- Model registry with approval workflows.

- Flexible deployment options (REST API, containers).

- Multi-framework support including PyTorch, TensorFlow, Scikit-learn.

- Open-source extensibility.

- Integration with popular CI/CD pipelines.

- Minimal vendor lock-in.

AI-Specific Depth

- Model support: Open-source + BYO models.

- RAG / knowledge integration: N/A

- Evaluation: Experiment tracking and regression testing.

- Guardrails: Varies / N/A

- Observability: Token usage tracking varies by integration.

Pros

- Free and open-source.

- Highly extensible and framework-agnostic.

- Supports multiple deployment targets.

Cons

- Lacks built-in enterprise monitoring dashboards.

- Requires setup and maintenance.

- Limited security compliance features out-of-the-box.

Security & Compliance

- Depends on deployment; Not publicly stated.

Deployment & Platforms

- Web, Cloud, Linux, Windows.

- Self-hosted or cloud-managed.

Integrations & Ecosystem

Strong community with many integrations:

- Python and R SDKs

- Docker and Kubernetes deployment

- CI/CD tools (Jenkins, GitLab)

- Cloud connectors (AWS, GCP, Azure)

Pricing Model

- Open-source; enterprise support available.

- Not publicly stated.

Best-Fit Scenarios

- Developer teams seeking flexible MLOps.

- Academic research groups.

- Organizations with BYO model frameworks.

3 — Kubeflow

One-line verdict: Best for organizations requiring scalable, Kubernetes-native MLOps pipelines.

Short description: Kubeflow enables orchestration of ML workflows in Kubernetes, including training, deployment, and monitoring for complex pipelines.

Standout Capabilities

- Kubernetes-native orchestration.

- Pipelines for training, deployment, and monitoring.

- Multi-framework model support.

- Scalable distributed training.

- Integration with cloud storage and compute.

- Open-source extensibility.

- Reproducible pipelines with versioning.

AI-Specific Depth

- Model support: Open-source + BYO.

- RAG / knowledge integration: N/A

- Evaluation: Offline evaluation and regression tests.

- Guardrails: Varies / N/A

- Observability: Traces, metrics, token logs vary by integration.

Pros

- Highly scalable for production ML.

- Strong integration with Kubernetes ecosystem.

- Open-source flexibility.

Cons

- Steep learning curve.

- Requires DevOps expertise.

- Monitoring dashboards not native.

Security & Compliance

- Depends on Kubernetes setup; Not publicly stated.

Deployment & Platforms

- Linux, Cloud, Kubernetes.

- Self-hosted.

Integrations & Ecosystem

- Python SDK

- TensorFlow, PyTorch support

- Argo workflows integration

- Cloud storage connectors

- CI/CD pipelines

Pricing Model

- Open-source; enterprise support optional.

- Not publicly stated.

Best-Fit Scenarios

- Teams with Kubernetes expertise.

- Large-scale distributed ML pipelines.

- Organizations needing reproducibility at scale.

4 — TFX (TensorFlow Extended)

One-line verdict: Suited for teams standardizing ML pipelines with TensorFlow at scale.

Short description: TFX offers production-ready ML pipelines for TensorFlow, focusing on data validation, model training, deployment, and monitoring.

Standout Capabilities

- End-to-end TensorFlow pipeline orchestration.

- Data validation and schema enforcement.

- Model analysis and monitoring.

- Serving and deployment with TF Serving.

- Integration with cloud storage.

- Scalability for large datasets.

- Open-source community support.

AI-Specific Depth

- Model support: TensorFlow; BYO limited.

- RAG / knowledge integration: N/A

- Evaluation: Offline and online evaluation, regression tests.

- Guardrails: Varies / N/A

- Observability: Metrics for latency and performance.

Pros

- Standardized TensorFlow pipeline.

- Scalable and production-ready.

- Strong community support.

Cons

- TensorFlow-centric.

- Limited multi-framework support.

- Requires engineering resources.

Security & Compliance

- Depends on deployment; Not publicly stated.

Deployment & Platforms

- Linux, Cloud; containerized deployments.

Integrations & Ecosystem

- TensorFlow models

- Apache Beam for data pipelines

- Kubernetes, Airflow integration

- Cloud connectors

Pricing Model

- Open-source; enterprise support optional.

Best-Fit Scenarios

- TensorFlow-heavy ML teams.

- Enterprise ML pipelines.

- Teams requiring strong pipeline reproducibility.

5 — SageMaker MLOps

One-line verdict: Best for AWS users seeking integrated deployment, monitoring, and governance for ML models.

Short description: SageMaker MLOps provides end-to-end model lifecycle management within AWS, with automation, monitoring, and compliance features.

Standout Capabilities

- Model deployment, monitoring, and retraining.

- Integrated CI/CD for ML.

- Built-in monitoring and drift detection.

- Governance dashboards for compliance.

- Auto-scaling and multi-region support.

- Model registry with versioning.

- Integration with AWS ecosystem.

AI-Specific Depth

- Model support: BYO + proprietary AWS models.

- RAG / knowledge integration: Connectors via AWS services.

- Evaluation: Offline and online testing frameworks.

- Guardrails: Policy checks, role-based access.

- Observability: Inference tracing, cost, and latency metrics.

Pros

- Full AWS integration.

- Enterprise-grade monitoring and governance.

- Scales automatically with demand.

Cons

- AWS lock-in.

- Can be expensive at scale.

- Learning curve for non-AWS teams.

Security & Compliance

- IAM, RBAC, SSO; audit logs; encryption.

- Certifications: Not publicly stated.

Deployment & Platforms

- Web, Cloud (AWS).

Integrations & Ecosystem

- AWS S3, Lambda, RDS

- CloudWatch for monitoring

- API integration

- SDKs for Python and Java

Pricing Model

- Usage-based, tiered by instance type and feature.

- Not publicly stated.

Best-Fit Scenarios

- Enterprises heavily on AWS.

- Teams requiring governance and monitoring.

- ML pipelines with multi-region deployment.

6 — Weights & Biases (W&B)

One-line verdict: Developer-focused tool for tracking, versioning, and collaborative model management.

Short description: W&B provides experiment tracking, dataset versioning, and model registry for data scientists and ML engineers.

Standout Capabilities

- Experiment tracking and visualization.

- Model and dataset versioning.

- Collaboration tools for teams.

- Automated logging from scripts and notebooks.

- Reports and dashboards for stakeholders.

- Integrates with CI/CD pipelines.

- Open-source friendly.

AI-Specific Depth

- Model support: BYO + multi-framework.

- RAG / knowledge integration: N/A

- Evaluation: Tracking experiments and regression.

- Guardrails: Varies / N/A

- Observability: Metrics, performance dashboards.

Pros

- Developer-friendly and lightweight.

- Strong visualization and collaboration.

- Framework agnostic.

Cons

- Limited end-to-end MLOps pipelines.

- Paid tiers required for large teams.

- Less governance control.

Security & Compliance

- SSO/RBAC, audit logs in enterprise plan.

- Certifications: Not publicly stated.

Deployment & Platforms

- Web, Cloud, Linux, Windows.

Integrations & Ecosystem

- Python SDK

- TensorFlow, PyTorch support

- Jupyter integration

- Slack notifications

- CI/CD connectors

Pricing Model

- Free tier + usage-based enterprise tiers.

- Not publicly stated.

Best-Fit Scenarios

- Developer teams and freelancers.

- Experiment-driven ML workflows.

- Collaborative research projects.

7 — Neptune.ai

One-line verdict: Flexible experiment tracking and model registry for cross-framework ML teams.

Short description: Neptune.ai centralizes ML experiment management, dataset tracking, and model registry for teams of any size.

Standout Capabilities

- Experiment tracking with metadata storage.

- Model registry and versioning.

- Dataset versioning and lineage tracking.

- Web-based dashboards for visualization.

- API and SDK integrations.

- CI/CD pipeline support.

- Customizable alerts and notifications.

AI-Specific Depth

- Model support: BYO + multi-framework.

- RAG / knowledge integration: N/A

- Evaluation: Experiment regression and metrics logging.

- Guardrails: Varies / N/A

- Observability: Traces and performance metrics.

Pros

- Flexible and framework-agnostic.

- Strong experiment tracking.

- Easy collaboration across teams.

Cons

- Not a full MLOps pipeline solution.

- Limited deployment orchestration.

- Paid tiers required for advanced features.

Security & Compliance

- SSO/RBAC; audit logging available.

- Certifications: Not publicly stated.

Deployment & Platforms

- Web, Cloud, Linux, Windows.

Integrations & Ecosystem

- Python, R SDKs

- TensorFlow, PyTorch, Scikit-learn

- CI/CD pipelines

- Slack & Teams notifications

Pricing Model

- Usage-based; free tier available.

Best-Fit Scenarios

- Cross-framework development teams.

- Academic and research labs.

- Teams requiring experiment reproducibility.

8 — Comet

One-line verdict: Comprehensive ML experiment tracking and deployment monitoring for data science teams.

Short description: Comet tracks experiments, monitors deployments, and provides collaboration tools for ML teams across industries.

Standout Capabilities

- Experiment logging and visualization.

- Model registry and deployment monitoring.

- Dataset versioning and lineage tracking.

- Real-time collaboration dashboards.

- Alerting for anomalies.

- API/SDK integration.

- Flexible deployment options.

AI-Specific Depth

- Model support: Multi-framework BYO.

- RAG / knowledge integration: N/A

- Evaluation: Metrics tracking, drift alerts.

- Guardrails: Varies / N/A

- Observability: Tracing, token metrics.

Pros

- Easy team collaboration.

- Strong tracking and visualization.

- Flexible integration options.

Cons

- Not a full pipeline orchestration tool.

- Enterprise features require paid plans.

- Limited governance capabilities.

Security & Compliance

- SSO, RBAC, audit logs; encryption.

- Certifications: Not publicly stated.

Deployment & Platforms

- Web, Cloud, Linux, Windows.

Integrations & Ecosystem

- Python SDK

- TensorFlow, PyTorch, Scikit-learn

- Slack, Teams, CI/CD pipelines

Pricing Model

- Tiered, usage-based; free tier available.

Best-Fit Scenarios

- Teams needing experiment tracking.

- Organizations with multi-framework ML pipelines.

- Developers seeking collaboration dashboards.

9 — Domino Data Lab

One-line verdict: Enterprise MLOps platform for model deployment, reproducibility, and governance at scale.

Short description: Domino offers end-to-end model lifecycle management, focusing on collaboration, governance, and enterprise compliance.

Standout Capabilities

- Model versioning and reproducibility.

- Deployment pipelines and monitoring.

- Collaborative environment for data scientists.

- Governance and audit capabilities.

- Hybrid and multi-cloud deployment.

- Experiment tracking dashboards.

- Integration with popular ML frameworks.

AI-Specific Depth

- Model support: BYO + proprietary.

- RAG / knowledge integration: Varies / N/A

- Evaluation: Offline, regression, and human review.

- Guardrails: Policy checks, injection protection.

- Observability: Metrics, traces, cost dashboards.

Pros

- Enterprise-grade features.

- Strong governance and reproducibility.

- Multi-cloud support.

Cons

- High learning curve.

- Costly for smaller teams.

- Onboarding requires training.

Security & Compliance

- SSO, RBAC, audit logs; encryption.

- Certifications: Not publicly stated.

Deployment & Platforms

- Web, Cloud, Hybrid.

Integrations & Ecosystem

- Python, R SDKs

- TensorFlow, PyTorch support

- CI/CD pipelines

- Slack & Teams integration

Pricing Model

- Usage-based enterprise tiers.

Best-Fit Scenarios

- Large enterprise ML teams.

- Regulated industries.

- Multi-cloud deployment needs.

10 — ZenML

One-line verdict: Lightweight, open-source MLOps framework for building reproducible ML pipelines quickly.

Short description: ZenML provides a framework to standardize ML pipelines, track metadata, and integrate with CI/CD, ideal for developer-driven teams.

Standout Capabilities

- Pipeline orchestration and metadata tracking.

- Experiment reproducibility.

- CI/CD integration.

- Open-source extensibility.

- Multi-framework support.

- Lightweight and developer-friendly.

- Local and cloud deployment options.

AI-Specific Depth

- Model support: BYO + open-source frameworks.

- RAG / knowledge integration: N/A

- Evaluation: Regression and experiment tracking.

- Guardrails: Varies / N/A

- Observability: Metrics and traces.

Pros

- Open-source and free to use.

- Quick pipeline setup.

- Framework agnostic.

Cons

- Limited enterprise monitoring and governance.

- Requires DevOps integration.

- Smaller community than enterprise platforms.

Security & Compliance

- Depends on deployment; Not publicly stated.

Deployment & Platforms

- Linux, Cloud, Windows.

- Self-hosted or cloud.

Integrations & Ecosystem

- Python SDK

- TensorFlow, PyTorch, Scikit-learn

- CI/CD pipelines

- Cloud storage connectors

Pricing Model

- Open-source; enterprise support optional.

Best-Fit Scenarios

- Developers needing reproducible pipelines.

- Small to medium ML teams.

- Teams experimenting with multiple frameworks.

Comparison Table

| Tool Name | Best For | Deployment | Model Flexibility | Strength | Watch-Out | Public Rating |

|---|---|---|---|---|---|---|

| DataRobot MLOps | Enterprise automation | Cloud/Hybrid | BYO/Proprietary | Scalable pipelines | Expensive | N/A |

| MLflow | Developers & researchers | Self-hosted/Cloud | Open-source/BYO | Flexible tracking | Setup required | N/A |

| Kubeflow | Kubernetes teams | Self-hosted | BYO/Open-source | Scalable pipelines | Steep learning | N/A |

| TFX | TensorFlow teams | Cloud/Self-hosted | TensorFlow | Standardized pipelines | TensorFlow-centric | N/A |

| SageMaker MLOps | AWS enterprises | Cloud | BYO + AWS | Integrated ecosystem | AWS lock-in | N/A |

| W&B | Developer teams | Cloud | Multi-framework BYO | Experiment tracking | Limited governance | N/A |

| Neptune.ai | Cross-framework teams | Cloud | Multi-framework BYO | Experiment reproducibility | Limited orchestration | N/A |

| Comet | Data science teams | Cloud | Multi-framework BYO | Collaboration dashboards | Limited pipelines | N/A |

| Domino Data Lab | Enterprise teams | Cloud/Hybrid | BYO + Proprietary | Governance & reproducibility | Costly | N/A |

| ZenML | Developers & SMB | Self-hosted/Cloud | BYO/Open-source | Lightweight & reproducible | Limited enterprise features | N/A |

Scoring & Evaluation (Transparent Rubric)

Scoring is comparative and helps buyers prioritize tools based on enterprise needs, developer focus, and SMB workflows. Scores are from 1–10, weighted as follows: Core features – 20%, AI reliability & evaluation – 15%, Guardrails & safety – 10%, Integrations & ecosystem – 15%, Ease of use – 10%, Performance & cost – 15%, Security/admin – 10%, Support/community – 5%.

| Tool | Core | Reliability/Eval | Guardrails | Integrations | Ease | Perf/Cost | Security/Admin | Support | Weighted Total |

|---|---|---|---|---|---|---|---|---|---|

| DataRobot MLOps | 9 | 9 | 8 | 8 | 7 | 8 | 8 | 7 | 8.3 |

| MLflow | 7 | 7 | 5 | 7 | 8 | 7 | 5 | 6 | 6.7 |

| Kubeflow | 8 | 8 | 6 | 7 | 6 | 8 | 6 | 5 | 7.0 |

| TFX | 8 | 8 | 6 | 7 | 7 | 7 | 6 | 5 | 7.0 |

| SageMaker MLOps | 9 | 9 | 8 | 9 | 7 | 8 | 8 | 7 | 8.4 |

| W&B | 7 | 7 | 5 | 7 | 8 | 7 | 6 | 6 | 6.7 |

| Neptune.ai | 7 | 7 | 5 | 7 | 8 | 7 | 6 | 6 | 6.7 |

| Comet | 7 | 7 | 5 | 7 | 8 | 7 | 6 | 6 | 6.7 |

| Domino Data Lab | 9 | 9 | 8 | 8 | 7 | 8 | 8 | 7 | 8.3 |

| ZenML | 7 | 7 | 5 | 7 | 8 | 7 | 6 | 6 | 6.7 |

Top 3 for Enterprise: SageMaker MLOps, DataRobot MLOps, Domino Data Lab

Top 3 for SMB: ZenML, MLflow, W&B

Top 3 for Developers: MLflow, ZenML, W&B

Which MLOps Lifecycle Management Platform Is Right for You?

Solo / Freelancer

- Lightweight platforms like ZenML or W&B are ideal for experimentation, reproducibility, and minimal overhead.

SMB

- MLflow and Neptune.ai balance flexibility, experiment tracking, and low operational complexity.

Mid-Market

- TFX and Comet offer structured pipelines, monitoring, and team collaboration without full enterprise complexity.

Enterprise

- DataRobot MLOps, SageMaker MLOps, and Domino Data Lab deliver scalable pipelines, governance, and compliance capabilities.

Regulated industries

- Choose platforms with strong audit logs, policy enforcement, and SSO/RBAC support such as DataRobot or Domino Data Lab.

Budget vs premium

- Open-source solutions (MLflow, ZenML) reduce cost but require internal maintenance.

- Enterprise platforms offer support, scalability, and compliance at a higher price.

Build vs buy

- DIY with MLflow or ZenML is suitable for teams with engineering expertise.

- Buy enterprise-grade platforms for mission-critical, scalable, and compliant deployments.

Implementation Playbook (30 / 60 / 90 Days)

- 30 days: Pilot core pipelines, define success metrics, implement basic tracking, and test BYO models.

- 60 days: Harden security, implement guardrails, integrate evaluation frameworks, expand CI/CD pipelines, and test multi-model routing.

- 90 days: Optimize cost and latency, scale pipelines, enforce governance, implement version control, and conduct red-team stress tests for AI models.

Common Mistakes & How to Avoid Them

- Ignoring prompt injection and adversarial attacks.

- Failing to evaluate models for drift, bias, or hallucinations.

- Unmanaged data retention leading to compliance issues.

- Lack of observability for pipeline performance and costs.

- Over-automation without human-in-the-loop review.

- Underestimating vendor lock-in risk.

- Insufficient CI/CD integration for ML models.

- Not versioning datasets or model artifacts.

- Ignoring latency and cost optimization.

- Limited guardrails for production AI workflows.

- Overlooking multi-cloud or hybrid deployment challenges.

- Poor documentation and team onboarding.

- Skipping regulatory and audit readiness checks.

- Not prioritizing reproducibility and collaboration.

FAQs

1. What is MLOps Lifecycle Management?

MLOps platforms manage the end-to-end lifecycle of ML models, including experimentation, deployment, monitoring, and governance.

2. Do these platforms support BYO models?

Many platforms support Bring-Your-Own models, allowing flexibility across frameworks and proprietary systems.

3. Can I host MLOps platforms on-premises?

Some tools like MLflow, Kubeflow, and ZenML support self-hosted deployments, while enterprise tools may be cloud-native.

4. How is model evaluation handled?

Platforms offer regression tests, drift detection, bias monitoring, and offline or human-in-the-loop evaluation frameworks.

5. Are guardrails included?

Advanced platforms provide policy checks, prompt injection prevention, and adversarial input defenses.

6. What about data privacy?

Enterprise platforms provide RBAC, SSO, audit logs, encryption, and data retention/residency controls.

7. How scalable are these platforms?

Platforms like DataRobot, SageMaker, and Kubeflow scale across multiple models, users, and cloud regions.

8. Are open-source platforms viable for enterprises?

Yes, open-source platforms like MLflow or ZenML are cost-effective but require internal DevOps support.

9. How do I choose between build vs buy?

Choose open-source DIY if you have engineering resources; select enterprise solutions for scalability, compliance, and support.

10. Can these platforms integrate with RAG or vector databases?

Some platforms offer connectors for knowledge bases and vector DBs to support retrieval-augmented pipelines.

11. How do I monitor performance and cost?

Observability dashboards and token/inference metrics allow tracking model latency, utilization, and cost per request.

12. What industries benefit most?

Finance, healthcare, telecom, e-commerce, and regulated enterprises gain the most from MLOps lifecycle management platforms.

Conclusion

MLOps Lifecycle Management Platforms are critical for scaling machine learning in production environments while maintaining reliability, reproducibility, and compliance. Choosing the right platform requires evaluating team size, deployment flexibility, model support, governance requirements, and cost considerations. Enterprises benefit from platforms offering full automation, governance, and monitoring, while smaller teams may prioritize open-source or lightweight frameworks for experimentation and reproducibility.