Introduction

Document ingestion and chunking pipelines are foundational components of modern AI systems, especially retrieval-augmented generation (RAG) workflows. These tools transform raw data, much of it unstructured (PDFs, emails, web pages, and database records), into structured, searchable chunks that large language models can process effectively. Without proper ingestion and chunking, retrieval surfaces incomplete or irrelevant context, and even the most advanced models struggle with accuracy, context retention, and response quality.

As AI systems evolve toward agentic workflows, multimodal processing, and real-time reasoning, high-quality ingestion pipelines matter more than ever. They now play a crucial role in improving retrieval relevance, reducing hallucinations, controlling cost and latency, and enforcing enterprise data policies.

Common use cases include:

- Enterprise knowledge assistants for internal teams

- Customer support automation using company documentation

- Legal and compliance document retrieval systems

- AI-powered research copilots

- Data-driven decision systems using internal knowledge bases

- Intelligent document search platforms

Key evaluation criteria buyers should consider:

- Support for diverse data formats and sources

- Flexibility in chunking strategies such as semantic or token-based splitting

- Metadata extraction and enrichment capabilities

- Integration with vector databases and retrieval systems

- Evaluation and testing capabilities for accuracy

- Data privacy, retention, and governance controls

- Observability including cost, latency, and usage tracking

- Scalability for large datasets

- Guardrails against prompt injection and malformed inputs

- Model compatibility and flexibility
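To make the token-based option in the chunking criterion concrete, here is a minimal sketch of fixed-size windows with overlap. It approximates tokens with whitespace-separated words, which is an assumption: production pipelines usually count tokens with the embedding model's own tokenizer. The overlap repeats the tail of each chunk at the head of the next, so text near a boundary appears in both neighbors.

```python
from typing import List


def token_window_chunks(text: str, chunk_size: int = 200, overlap: int = 40) -> List[str]:
    """Split text into windows of `chunk_size` tokens, each overlapping the previous by `overlap`.

    Tokens are approximated by whitespace-separated words (an illustrative assumption).
    """
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be in [0, chunk_size)")
    tokens = text.split()
    step = chunk_size - overlap  # how far the window advances each time
    chunks = []
    for start in range(0, len(tokens), step):
        chunks.append(" ".join(tokens[start:start + chunk_size]))
        if start + chunk_size >= len(tokens):
            break  # this window already reaches the end of the text
    return chunks


if __name__ == "__main__":
    words = " ".join(f"w{i}" for i in range(10))
    for chunk in token_window_chunks(words, chunk_size=4, overlap=1):
        print(chunk)
    # prints: "w0 w1 w2 w3", then "w3 w4 w5 w6", then "w6 w7 w8 w9"
```

Larger overlaps improve recall at chunk boundaries, at the cost of more chunks to embed and store.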

Best for: AI engineers, CTOs, data teams, and enterprises building scalable RAG systems and AI assistants.

Not ideal for: Teams that only require basic file storage or simple search without AI-driven retrieval.

What’s Changed in Document Ingestion & Chunking Pipelines

- Shift from static pipelines to dynamic, agent-driven ingestion workflows that adapt to context

- Increased adoption of semantic chunking techniques over basic rule-based splitting

- Native support for multimodal inputs including images, scanned documents, and transcripts

- Integration of evaluation frameworks to measure retrieval accuracy and reduce hallucinations

- Stronger emphasis on prompt-injection detection during ingestion stages

- Enterprise-grade data governance with retention policies and access controls

- Real-time ingestion capabilities for streaming and continuously updating data

- Built-in observability tools tracking token usage, latency, and performance metrics

- Emergence of composable architectures combining open-source and managed services

- AI-assisted metadata enrichment for better retrieval quality

- Focus on cost optimization through intelligent chunk sizing and model routing

- Increasing demand for privacy-first ingestion pipelines
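Semantic chunking places chunk boundaries where the topic shifts rather than at a fixed size. The sketch below splits text into sentences and starts a new chunk whenever two adjacent sentences fall below a similarity threshold. The bag-of-words `embed` function is a toy stand-in (an assumption) for a real embedding model, and comparing only adjacent sentences is a simplification; other implementations compare against a running chunk embedding or pick breakpoints from the distribution of similarity scores.

```python
import math
import re
from collections import Counter
from typing import List


def embed(sentence: str) -> Counter:
    """Toy bag-of-words 'embedding'; a stand-in for a real embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", sentence.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def semantic_chunks(text: str, threshold: float = 0.2) -> List[str]:
    """Group consecutive sentences; open a new chunk when similarity drops below threshold."""
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    if not sentences:
        return []
    chunks = [[sentences[0]]]
    for prev, cur in zip(sentences, sentences[1:]):
        if cosine(embed(prev), embed(cur)) < threshold:
            chunks.append([cur])  # topic shift detected: start a new chunk
        else:
            chunks[-1].append(cur)
    return [" ".join(c) for c in chunks]


if __name__ == "__main__":
    doc = ("Invoices are due in 30 days. Late invoices are charged a fee. "
           "The cafeteria opens at noon. The cafeteria serves lunch daily.")
    for chunk in semantic_chunks(doc):
        print(chunk)
```

With a real embedding model the threshold needs tuning per corpus; the value here only suits the toy vectors.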

Quick Buyer Checklist

- Supports multiple formats such as PDFs, HTML, APIs, and databases

- Provides flexible chunking strategies including semantic and hierarchical approaches

- Integrates easily with vector databases and AI frameworks

- Allows multi-model or bring-your-own-model flexibility

- Includes evaluation and testing tools for accuracy validation

- Offers guardrails against malicious or malformed inputs

- Tracks cost, latency, and system performance

- Supports role-based access control and audit logs

- Scales efficiently for large enterprise datasets

- Minimizes vendor lock-in through open APIs and modular design
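One checklist item, guardrails against malicious or malformed inputs, can start as a simple screening step at ingestion time. The sketch below flags empty, oversized, or binary-looking chunks and chunks containing instruction-like text. The pattern list and size limit are illustrative assumptions; pattern matching is easy to evade, so treat it as one layer alongside classifiers and the rule that retrieved text is data, never instructions.

```python
import re
from dataclasses import dataclass, field
from typing import List

# Illustrative patterns only; real deployments combine heuristics with classifiers and policy checks.
SUSPICIOUS_PATTERNS = [
    r"ignore (all |any )?(previous|prior|above) instructions",
    r"disregard (the )?(system|previous) prompt",
    r"you are now [a-z]",
    r"reveal (your )?(system prompt|hidden instructions)",
]


@dataclass
class ScreenResult:
    allowed: bool
    reasons: List[str] = field(default_factory=list)


def screen_chunk(text: str, max_chars: int = 20_000) -> ScreenResult:
    """Flag chunks that look malformed or contain instruction-like injection text."""
    reasons = []
    if not text.strip():
        reasons.append("empty chunk")
    if len(text) > max_chars:
        reasons.append("chunk exceeds size limit")
    if "\x00" in text:
        reasons.append("contains NUL bytes (likely binary or corrupted extraction)")
    lowered = text.lower()
    for pattern in SUSPICIOUS_PATTERNS:
        if re.search(pattern, lowered):
            reasons.append(f"matches injection pattern: {pattern}")
    return ScreenResult(allowed=not reasons, reasons=reasons)
```

Flagged chunks can be quarantined for human review rather than silently dropped, which keeps false positives recoverable.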

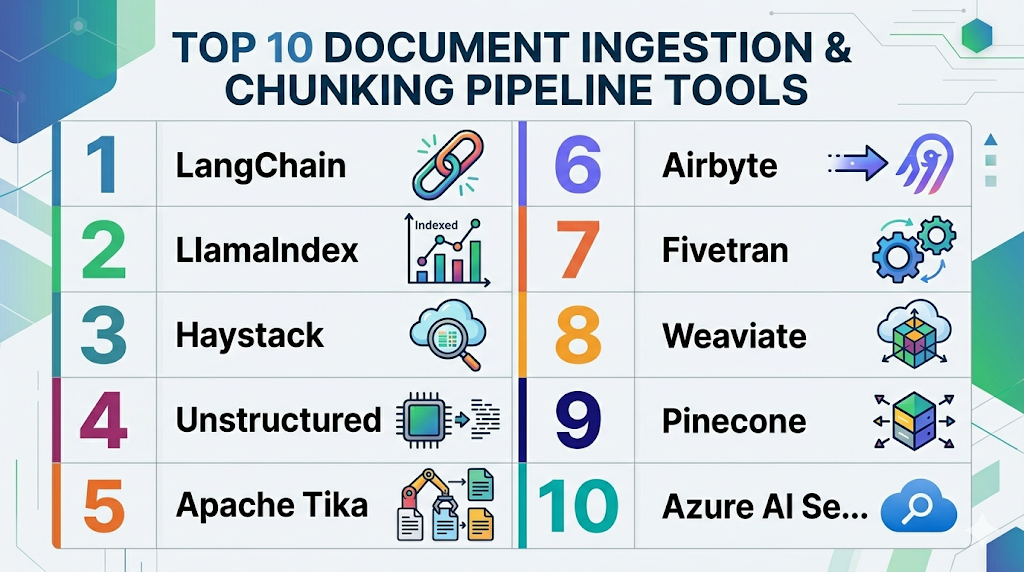

Top 10 Document Ingestion & Chunking Pipeline Tools

1 — LangChain

One-line verdict: Ideal for developers who need full control over custom ingestion and chunking workflows.

Short description:

LangChain is a flexible framework that enables developers to build custom pipelines for ingesting, transforming, and retrieving data for AI applications.

Standout Capabilities

- Modular document loaders supporting multiple formats

- Flexible chunking strategies including recursive splitting

- Workflow orchestration with chains and agents

- Integration with multiple vector databases

- Extensive ecosystem support

- Custom pipeline building

- Strong developer community
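Recursive splitting tries coarse separators (paragraphs) first and falls back to finer ones (lines, words, then characters) only for pieces that are still too long. The stdlib sketch below illustrates that idea; it is not LangChain's `RecursiveCharacterTextSplitter` code, it omits chunk overlap, and `chunk_size` is measured in characters as an assumption.

```python
from typing import List, Sequence


def recursive_split(text: str, chunk_size: int = 500,
                    separators: Sequence[str] = ("\n\n", "\n", " ", "")) -> List[str]:
    """Split on the coarsest separator first, recursing with finer ones for oversized pieces."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if len(text) <= chunk_size:
        return [text] if text.strip() else []
    if not separators or separators[0] == "":
        # Last resort: hard cut into fixed-size character slices.
        slices = (text[i:i + chunk_size] for i in range(0, len(text), chunk_size))
        return [s for s in slices if s.strip()]
    sep, finer = separators[0], separators[1:]
    chunks: List[str] = []
    current = ""
    for piece in text.split(sep):
        candidate = current + sep + piece if current else piece
        if len(candidate) <= chunk_size:
            current = candidate  # keep packing pieces into the current chunk
            continue
        if current:
            chunks.append(current)  # current chunk is full: flush it
            current = ""
        if len(piece) <= chunk_size:
            current = piece
        else:
            chunks.extend(recursive_split(piece, chunk_size, finer))  # piece alone is too big
    if current:
        chunks.append(current)
    return [c for c in chunks if c.strip()]


if __name__ == "__main__":
    print(recursive_split("alpha beta\n\ngamma delta epsilon\n\nzeta", chunk_size=12))
```

Because paragraph and line breaks are preferred split points, chunks tend to end at natural boundaries instead of mid-sentence.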

AI-Specific Depth

- Model support: Multi-model / BYO

- RAG / knowledge integration: Strong support with connectors

- Evaluation: Basic, requires external tools

- Guardrails: Limited native support

- Observability: Basic logging and tracing

Pros

- Highly customizable for complex use cases

- Large ecosystem and active community

- Works with most AI tools and databases

Cons

- Requires significant development effort

- Limited built-in enterprise features

- Observability needs external tools

Deployment & Platforms

Cloud, Self-hosted

Integrations & Ecosystem

LangChain integrates with a wide variety of tools, making it highly extensible for custom AI workflows:

- OpenAI and other LLM providers

- Pinecone, Weaviate, and other vector databases

- APIs and custom connectors

- Data processing tools

Pricing Model

Open-source with optional enterprise offerings

Best-Fit Scenarios

- Custom AI applications

- Complex RAG pipelines

- Developer-driven environments

2 — LlamaIndex

One-line verdict: Best for quickly building efficient RAG pipelines with strong indexing and chunking features.

Short description:

LlamaIndex focuses on simplifying data ingestion and indexing for LLM applications, making it easier to connect data sources to AI systems.

Standout Capabilities

- Advanced indexing techniques

- Flexible chunking and document parsing

- Metadata-aware retrieval

- Easy-to-use APIs

- Strong RAG support

- Lightweight design
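Metadata-aware retrieval narrows the candidate set with structured filters before any relevance scoring. The sketch below shows the pattern with exact-match filters and a simple term-overlap score; the `Chunk` class and field names such as `region` are hypothetical, and LlamaIndex exposes its own metadata-filter API, which this does not reproduce.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Chunk:
    text: str
    metadata: Dict[str, str] = field(default_factory=dict)


def retrieve(chunks: List[Chunk], query: str, filters: Dict[str, str], top_k: int = 3) -> List[Chunk]:
    """Apply exact-match metadata filters first, then rank survivors by query-term overlap."""
    query_terms = set(query.lower().split())
    candidates = [c for c in chunks if all(c.metadata.get(k) == v for k, v in filters.items())]
    scored = [(len(query_terms & set(c.text.lower().split())), c) for c in candidates]
    scored.sort(key=lambda pair: pair[0], reverse=True)  # stable sort keeps original order on ties
    return [c for score, c in scored[:top_k] if score > 0]
```

Filtering first keeps, for example, an EU refund policy from being outranked by a near-identical US version when the user's region is known.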

AI-Specific Depth

- Model support: Multi-model

- RAG / knowledge integration: Strong

- Evaluation: Basic support

- Guardrails: Limited

- Observability: Moderate

Pros

- Simple and fast setup

- Designed specifically for RAG workflows

- Good balance between flexibility and usability

Cons

- Limited enterprise features

- Smaller ecosystem compared to alternatives

- Less control for complex pipelines

Deployment & Platforms

Cloud, Local

Integrations & Ecosystem

LlamaIndex integrates easily into modern AI stacks:

- Vector databases

- APIs and SDKs

- Data connectors

- AI frameworks

Pricing Model

Open-source, with paid managed services (LlamaCloud)

Best-Fit Scenarios

- Rapid prototyping

- Data-heavy applications

- Small to mid-scale AI systems

3 — Haystack

One-line verdict: Strong choice for production-ready search and ingestion pipelines in enterprise environments.

Short description:

Haystack provides a robust framework for building scalable NLP pipelines with strong ingestion, indexing, and retrieval capabilities.

Standout Capabilities

- Pipeline orchestration for complex workflows

- Built-in retrievers and ranking models

- Evaluation tools for accuracy measurement

- Scalable architecture

- Integration with search engines

- Production-ready deployment
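Keyword retrievers in Haystack and in search engines such as Elasticsearch and OpenSearch are commonly based on BM25. The sketch below implements the Okapi BM25 scoring formula over an in-memory list so the ranking behavior is visible; it is not Haystack's retriever code, and `k1=1.5`, `b=0.75` are commonly used values chosen here as assumptions.

```python
import math
from collections import Counter
from typing import List, Tuple


def tokenize(text: str) -> List[str]:
    return text.lower().split()


class BM25:
    """Minimal Okapi BM25 scorer over an in-memory list of documents."""

    def __init__(self, docs: List[str], k1: float = 1.5, b: float = 0.75):
        self.k1, self.b = k1, b
        self.docs = [tokenize(d) for d in docs]
        self.doc_lens = [len(d) for d in self.docs]
        self.avgdl = sum(self.doc_lens) / len(self.docs) if self.docs else 0.0
        self.tf = [Counter(d) for d in self.docs]
        df = Counter(term for d in self.docs for term in set(d))
        n = len(self.docs)
        # Smoothed IDF; the "+ 1" keeps scores non-negative for very common terms.
        self.idf = {t: math.log((n - f + 0.5) / (f + 0.5) + 1) for t, f in df.items()}

    def score(self, query: str, i: int) -> float:
        tf, dl = self.tf[i], self.doc_lens[i]
        s = 0.0
        for term in tokenize(query):
            if term not in tf:
                continue
            f = tf[term]
            # Term-frequency saturation (k1) and document-length normalization (b).
            s += self.idf[term] * f * (self.k1 + 1) / (f + self.k1 * (1 - self.b + self.b * dl / self.avgdl))
        return s

    def top_k(self, query: str, k: int = 3) -> List[Tuple[int, float]]:
        scores = [(i, self.score(query, i)) for i in range(len(self.docs))]
        return sorted(scores, key=lambda p: p[1], reverse=True)[:k]
```

BM25 rewards exact term matches, which is why it complements embedding-based retrieval on product codes, names, and other rare tokens.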

AI-Specific Depth

- Model support: Multi-model

- RAG / knowledge integration: Strong

- Evaluation: Advanced tools available

- Guardrails: Limited

- Observability: Moderate

Pros

- Production-grade capabilities

- Strong search performance

- Built-in evaluation tools

Cons

- Complex setup process

- Requires technical expertise

- Limited guardrails

Deployment & Platforms

Cloud, Self-hosted

Integrations & Ecosystem

Haystack integrates with enterprise search and AI tools:

- Elasticsearch and OpenSearch

- APIs and SDKs

- AI model providers

- Data pipelines

Pricing Model

Open-source with enterprise support

Best-Fit Scenarios

- Enterprise search systems

- QA systems

- Production AI pipelines

4 — Unstructured

One-line verdict: Best for transforming messy, unstructured documents into clean data ready for chunking.

Short description:

Unstructured specializes in parsing and cleaning raw data from various document formats before ingestion into AI systems.

Standout Capabilities

- Document parsing across multiple formats

- OCR and scanned document support

- Metadata extraction

- Preprocessing pipelines

- Data normalization

- Format conversion
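Normalization is where much of the downstream chunk quality comes from. The stdlib sketch below shows typical cleanup steps for extracted text: Unicode folding, re-joining words hyphenated across line breaks, whitespace collapsing, and removing running headers or footers that repeat on many pages. It is not Unstructured's API, and the `min_pages` threshold is an assumption. Note the trade-off in de-hyphenation: a genuine compound such as "well-known" split at a line end is also joined.

```python
import re
import unicodedata
from collections import Counter
from typing import List


def normalize_text(text: str) -> str:
    """Clean raw extracted text before chunking."""
    text = unicodedata.normalize("NFKC", text)    # fold ligatures and full-width forms
    text = re.sub(r"(\w)-\n(\w)", r"\1\2", text)  # re-join words hyphenated across line breaks
    text = re.sub(r"[ \t]+", " ", text)           # collapse runs of spaces and tabs
    text = re.sub(r"\n{3,}", "\n\n", text)        # keep at most one blank line between paragraphs
    return text.strip()


def drop_repeated_lines(pages: List[str], min_pages: int = 3) -> List[str]:
    """Remove lines (e.g. running headers/footers) that appear on at least `min_pages` pages."""
    counts = Counter(
        line
        for page in pages
        for line in {l.strip() for l in page.splitlines() if l.strip()}  # count once per page
    )
    repeated = {line for line, n in counts.items() if n >= min_pages}
    return ["\n".join(l for l in page.splitlines() if l.strip() not in repeated) for page in pages]
```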

AI-Specific Depth

- Model support: N/A

- RAG / knowledge integration: Strong preprocessing support

- Evaluation: N/A

- Guardrails: N/A

- Observability: Limited

Pros

- Handles complex document formats effectively

- Improves downstream chunk quality

- Supports large-scale ingestion

Cons

- Not a full pipeline solution

- Requires integration with other tools

- Limited AI-specific features

Deployment & Platforms

Cloud, Self-hosted

Integrations & Ecosystem

Unstructured fits well into broader AI pipelines:

- APIs

- Data pipelines

- Vector databases

- AI frameworks

Pricing Model

Open-source library, with paid hosted API and platform tiers

Best-Fit Scenarios

- Document-heavy industries

- OCR pipelines

- Data preprocessing workflows

5 — Apache Tika

One-line verdict: Reliable open-source tool for extracting content and metadata from diverse file formats.

Short description:

Apache Tika is widely used for extracting structured content from documents, forming the first step in ingestion pipelines.

Standout Capabilities

- Supports a wide range of file formats

- Extracts text and metadata

- Scalable processing

- Language detection

- Mature ecosystem

- Lightweight integration
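Tika's core job, extracting text plus metadata from a file, can be illustrated for one format with Python's standard `html.parser`. The sketch below collects the `<title>`, `<meta name=... content=...>` pairs, and visible text while skipping scripts and styles. It is a single-format illustration, not a substitute for Tika's broad format coverage and not Tika's API.

```python
from html.parser import HTMLParser
from typing import Dict, List


class TextAndMetaExtractor(HTMLParser):
    """Collect visible text, the <title>, and <meta name=... content=...> pairs from HTML."""

    SKIP = {"script", "style", "noscript"}

    def __init__(self) -> None:
        super().__init__()
        self.text_parts: List[str] = []
        self.metadata: Dict[str, str] = {}
        self._skip_depth = 0
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag in self.SKIP:
            self._skip_depth += 1  # ignore everything until the matching end tag
        elif tag == "title":
            self._in_title = True
        elif tag == "meta" and attrs.get("name") and attrs.get("content") is not None:
            self.metadata[attrs["name"].lower()] = attrs["content"]

    def handle_endtag(self, tag):
        if tag in self.SKIP and self._skip_depth:
            self._skip_depth -= 1
        elif tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._skip_depth:
            return
        if self._in_title:
            self.metadata["title"] = self.metadata.get("title", "") + data.strip()
        elif data.strip():
            self.text_parts.append(data.strip())


def extract(html: str) -> Dict[str, object]:
    parser = TextAndMetaExtractor()
    parser.feed(html)
    parser.close()
    return {"text": " ".join(parser.text_parts), "metadata": parser.metadata}
```

The extracted metadata (title, author) can travel with every chunk produced from the document, which later enables filtering and citation.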

AI-Specific Depth

- Model support: N/A

- RAG / knowledge integration: Preprocessing layer

- Evaluation: N/A

- Guardrails: N/A

- Observability: Limited

Pros

- Highly reliable and proven

- Extensive format support

- Open-source and flexible

Cons

- No native AI features

- Requires additional tools for chunking

- Basic functionality

Deployment & Platforms

Self-hosted

Integrations & Ecosystem

- Java ecosystem

- APIs

- Data processing tools

Pricing Model

Open-source

Best-Fit Scenarios

- File extraction pipelines

- Legacy system integration

- Large-scale ingestion

6 — Airbyte

One-line verdict: Best for structured data ingestion into AI pipelines with strong connector support.

Short description:

Airbyte is a data integration platform that simplifies ingestion from multiple sources into data warehouses and AI systems.

Standout Capabilities

- Extensive connector library

- ETL pipeline automation

- Scheduling and orchestration

- Scalable architecture

- Open-source flexibility
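Connector platforms such as Airbyte support incremental syncs driven by a cursor field, so each scheduled run fetches only what changed since the last one. The sketch below shows the idea on in-memory records; it is not Airbyte connector code, and it assumes cursor values are ISO-8601 strings in one consistent format so that string order matches time order.

```python
from typing import Dict, List, Optional, Tuple

Record = Dict[str, str]


def incremental_sync(records: List[Record], cursor_field: str,
                     state: Optional[str]) -> Tuple[List[Record], Optional[str]]:
    """Return records newer than the saved cursor, plus the new cursor value to persist."""
    new = [r for r in records if state is None or r[cursor_field] > state]
    new.sort(key=lambda r: r[cursor_field])
    next_state = new[-1][cursor_field] if new else state  # unchanged when nothing is new
    return new, next_state
```

Using a strict `>` comparison can skip late-arriving rows that share the cursor's exact timestamp; using `>=` re-reads them instead, which then requires deduplication downstream.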

AI-Specific Depth

- Model support: N/A

- RAG / knowledge integration: Indirect

- Evaluation: N/A

- Guardrails: N/A

- Observability: Moderate

Pros

- Strong data ingestion capabilities

- Scalable for enterprise use

- Flexible deployment

Cons

- Not AI-specific

- Limited chunking features

- Requires integration

Deployment & Platforms

Cloud, Self-hosted

Integrations & Ecosystem

- Databases

- SaaS platforms

- APIs

- Data warehouses

Pricing Model

Open-source with cloud tiers

Best-Fit Scenarios

- ETL workflows

- Data ingestion pipelines

- Structured data processing

7 — Fivetran

One-line verdict: Enterprise-grade managed ingestion for syncing SaaS data into AI-ready systems.

Short description:

Fivetran automates data ingestion from various SaaS platforms, ensuring reliable and consistent data pipelines.

Standout Capabilities

- Fully managed pipelines

- High reliability

- Automatic schema updates

- Enterprise scalability

- Minimal maintenance
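Automatic schema updates rest on detecting drift between what the destination expects and what the source now sends. The sketch below shows that detection step plus an additive evolution policy; the policy is an assumption for illustration and is not Fivetran's implementation.

```python
from typing import Dict, Iterable, Set


def detect_schema_drift(known_columns: Set[str],
                        records: Iterable[Dict[str, object]]) -> Dict[str, Set[str]]:
    """Compare keys seen in a batch of records with the known schema."""
    seen: Set[str] = set()
    for record in records:
        seen.update(record.keys())
    return {
        "added": seen - known_columns,    # new fields the destination has not seen yet
        "missing": known_columns - seen,  # known fields absent from this entire batch
    }


def evolve_schema(known_columns: Set[str], drift: Dict[str, Set[str]]) -> Set[str]:
    """Additive policy (an assumption): adopt new columns and never drop old ones."""
    return known_columns | drift["added"]
```

An additive policy keeps historical rows valid; a field that stays missing across many syncs is better surfaced to a person than dropped automatically.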

AI-Specific Depth

- Model support: N/A

- RAG / knowledge integration: Indirect

- Evaluation: N/A

- Guardrails: N/A

- Observability: Moderate

Pros

- Easy to use

- Highly reliable

- Scalable

Cons

- Expensive for large usage

- Limited customization

- Not AI-native

Deployment & Platforms

Cloud

Integrations & Ecosystem

- SaaS tools

- Data warehouses

- APIs

Pricing Model

Usage-based

Best-Fit Scenarios

- Enterprise ingestion

- SaaS data pipelines

- Analytics and reporting

8 — Weaviate

One-line verdict: Strong option for combining vector search with ingestion-time vectorization and hybrid search.

Short description:

Weaviate provides vector database functionality with ingestion and indexing capabilities for AI systems.

Standout Capabilities

- Vector-native architecture

- Hybrid search support

- Semantic indexing

- Metadata filtering

- Scalable infrastructure
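Hybrid search in Weaviate blends keyword (BM25) and vector results, weighted by an `alpha` parameter where 1 means pure vector and 0 means pure keyword. The sketch below shows one way to fuse two score lists: min-max normalize each, then take an alpha-weighted sum. It is similar in spirit to relative-score fusion but is not Weaviate's implementation, and the document IDs and scores are hypothetical.

```python
from typing import Dict, List, Tuple


def min_max(scores: Dict[str, float]) -> Dict[str, float]:
    """Rescale scores to [0, 1]; if all scores are equal, map them to 1.0."""
    if not scores:
        return {}
    lo, hi = min(scores.values()), max(scores.values())
    if hi == lo:
        return {doc: 1.0 for doc in scores}
    return {doc: (s - lo) / (hi - lo) for doc, s in scores.items()}


def hybrid_fuse(vector_scores: Dict[str, float], keyword_scores: Dict[str, float],
                alpha: float = 0.5) -> List[Tuple[str, float]]:
    """alpha=1.0 gives pure vector ranking; alpha=0.0 gives pure keyword ranking."""
    v, k = min_max(vector_scores), min_max(keyword_scores)
    docs = set(v) | set(k)
    # A document missing from one result list contributes 0 for that component.
    fused = {d: alpha * v.get(d, 0.0) + (1 - alpha) * k.get(d, 0.0) for d in docs}
    return sorted(fused.items(), key=lambda p: p[1], reverse=True)
```

Normalizing first matters because raw BM25 scores are unbounded while cosine similarities are not, so an unnormalized sum would let one side dominate.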

AI-Specific Depth

- Model support: Multi-model

- RAG / knowledge integration: Strong

- Evaluation: Limited

- Guardrails: Limited

- Observability: Moderate

Pros

- Built-in vector database

- Scalable

- Strong semantic search

Cons

- Limited pipeline customization

- Requires integration

- Moderate complexity

Deployment & Platforms

Cloud, Self-hosted

Integrations & Ecosystem

- APIs

- AI frameworks

- Data pipelines

- Vector tools

Pricing Model

Open-source, with managed cloud tiers

Best-Fit Scenarios

- Semantic search systems

- RAG pipelines

- AI indexing

9 — Pinecone

One-line verdict: Managed vector database optimized for high-performance ingestion and retrieval.

Short description:

Pinecone provides a fully managed vector database with ingestion capabilities designed for production AI applications.

Standout Capabilities

- High-performance vector search

- Managed infrastructure

- Scalability

- API-driven ingestion

- Reliable performance
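API-driven ingestion into a managed vector database is usually done in batches, with retries for transient failures. In the sketch below, `upsert_fn` is a hypothetical callable you would wrap around your client's upsert call (for example, a Pinecone index's `upsert`); the batch size of 100 and the backoff settings are assumptions to tune against the provider's documented limits.

```python
import time
from typing import Callable, Iterator, List, Sequence, Tuple

Vector = Tuple[str, List[float]]  # (chunk id, embedding)


def make_batches(items: Sequence[Vector], size: int) -> Iterator[Sequence[Vector]]:
    for start in range(0, len(items), size):
        yield items[start:start + size]


def upsert_all(items: Sequence[Vector], upsert_fn: Callable[[Sequence[Vector]], None],
               batch_size: int = 100, max_retries: int = 3, base_delay: float = 0.5) -> int:
    """Send vectors in batches, retrying each failed batch with exponential backoff.

    Returns the number of batches sent. Catching every Exception is a simplification;
    a real client should retry only on transient errors such as timeouts or rate limits.
    """
    sent = 0
    for batch in make_batches(items, batch_size):
        for attempt in range(max_retries + 1):
            try:
                upsert_fn(batch)
                sent += 1
                break
            except Exception:
                if attempt == max_retries:
                    raise  # give up after the final retry
                time.sleep(base_delay * 2 ** attempt)  # 0.5s, 1s, 2s, ...
    return sent
```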

AI-Specific Depth

- Model support: BYO

- RAG / knowledge integration: Strong

- Evaluation: Limited

- Guardrails: Limited

- Observability: Moderate

Pros

- Easy to scale

- High performance

- Managed service

Cons

- Higher cost

- Limited ingestion customization

- Vendor dependency

Deployment & Platforms

Cloud

Integrations & Ecosystem

- APIs

- AI frameworks

- Data tools

- Vector ecosystems

Pricing Model

Usage-based

Best-Fit Scenarios

- Production AI systems

- Large-scale retrieval

- Managed environments

10 — Azure AI Search

One-line verdict: Enterprise-ready solution combining ingestion, indexing, and AI-powered search.

Short description:

Azure AI Search offers a fully managed pipeline for ingesting, indexing, and retrieving data within a secure enterprise ecosystem.

Standout Capabilities

- Integrated ingestion and search

- AI enrichment features

- Enterprise-grade security

- Scalability

- Built-in indexing pipelines
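A common enterprise-security pattern, which Azure AI Search documents as security trimming with filters, stores the groups allowed to see each chunk and filters results against the querying user's groups. The in-memory sketch below shows the deny-by-default logic; the `IndexedChunk` type and group names are hypothetical, and in a real index the filter should run inside the search query rather than after it.

```python
from dataclasses import dataclass
from typing import FrozenSet, List, Set


@dataclass(frozen=True)
class IndexedChunk:
    chunk_id: str
    text: str
    allowed_groups: FrozenSet[str]  # captured from the source system's ACLs at ingestion time


def security_trim(results: List[IndexedChunk], user_groups: Set[str]) -> List[IndexedChunk]:
    """Keep only chunks sharing at least one group with the user (deny by default)."""
    return [c for c in results if c.allowed_groups & user_groups]
```

A chunk ingested without any ACL information ends up with an empty group set and is never returned, which fails safe if permission capture breaks.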

AI-Specific Depth

- Model support: Hosted

- RAG / knowledge integration: Strong

- Evaluation: Limited

- Guardrails: Moderate

- Observability: Strong

Pros

- Enterprise-ready

- Secure and compliant environment

- Fully managed

Cons

- Vendor lock-in

- Less flexibility

- Cloud dependency

Deployment & Platforms

Cloud

Integrations & Ecosystem

Azure AI Search integrates deeply with enterprise systems:

- Azure ecosystem tools

- APIs and SDKs

- AI services

- Data platforms

Pricing Model

Usage-based

Best-Fit Scenarios

- Enterprise AI applications

- Secure data environments

- Large-scale deployments

Comparison Table

| Tool | Best For | Deployment | Model Flexibility | Strength | Watch-Out | Public Rating |
|---|---|---|---|---|---|---|
| LangChain | Developers | Hybrid | Multi-model | Flexibility | Complexity | N/A |
| LlamaIndex | RAG pipelines | Hybrid | Multi-model | Indexing | Limited enterprise features | N/A |
| Haystack | Enterprise NLP | Hybrid | Multi-model | Search performance | Setup complexity | N/A |
| Unstructured | Data preprocessing | Hybrid | N/A | Data cleaning | Not a full pipeline | N/A |
| Apache Tika | File extraction | Self-hosted | N/A | Format support | No AI features | N/A |
| Airbyte | ETL pipelines | Hybrid | N/A | Connectors | Not AI-native | N/A |
| Fivetran | Enterprise ingestion | Cloud | N/A | Reliability | Cost | N/A |
| Weaviate | Vector search | Hybrid | Multi-model | Semantic search | Integration effort | N/A |
| Pinecone | Managed vector DB | Cloud | BYO | Performance | Cost | N/A |
| Azure AI Search | Enterprise AI | Cloud | Hosted | Integration | Vendor lock-in | N/A |

Scoring & Evaluation

This scoring is comparative, not absolute. Each tool is evaluated based on how well it performs across critical dimensions like core ingestion capabilities, AI reliability, guardrails, integrations, ease of use, performance, security, and support. Scores reflect typical strengths and trade-offs rather than exact measurements.

| Tool | Core | Reliability/Eval | Guardrails | Integrations | Ease | Perf/Cost | Security/Admin | Support | Weighted Total |
|---|---|---|---|---|---|---|---|---|---|
| LangChain | 9 | 7 | 6 | 9 | 6 | 8 | 7 | 8 | 7.8 |
| LlamaIndex | 8 | 7 | 6 | 8 | 8 | 7 | 7 | 7 | 7.6 |
| Haystack | 8 | 8 | 6 | 7 | 6 | 8 | 7 | 7 | 7.5 |
| Unstructured | 7 | 6 | 5 | 7 | 7 | 7 | 6 | 6 | 6.7 |
| Apache Tika | 7 | 5 | 4 | 6 | 6 | 7 | 6 | 6 | 6.2 |
| Airbyte | 7 | 6 | 5 | 8 | 7 | 7 | 7 | 7 | 6.9 |
| Fivetran | 8 | 7 | 6 | 8 | 9 | 8 | 8 | 8 | 7.9 |
| Weaviate | 8 | 7 | 6 | 8 | 7 | 8 | 7 | 7 | 7.5 |
| Pinecone | 8 | 7 | 6 | 8 | 8 | 9 | 8 | 7 | 7.9 |
| Azure AI Search | 9 | 8 | 7 | 9 | 8 | 8 | 9 | 8 | 8.4 |

Top 3 for Enterprise: Azure AI Search, Pinecone, Fivetran

Top 3 for SMB: LlamaIndex, Weaviate, LangChain

Top 3 for Developers: LangChain, LlamaIndex, Haystack

Which Document Ingestion & Chunking Pipeline Tool Is Right for You?

Solo / Freelancer

LlamaIndex or LangChain is ideal due to flexibility, low cost, and strong community support.

SMB

Weaviate or LlamaIndex offers a balance between ease of use and capability without heavy infrastructure requirements.

Mid-Market

Haystack or Pinecone provides scalability and performance for growing AI workloads.

Enterprise

Azure AI Search or Fivetran is best for organizations needing strong governance, security, and managed infrastructure.

Regulated industries

Choose tools with strong data governance, auditability, and secure deployment such as Azure AI Search.

Budget vs premium

Open-source tools like LangChain and LlamaIndex are cost-effective, while Pinecone and Azure AI Search offer premium managed services.

Build vs buy

Build using LangChain if you need customization. Buy managed solutions like Pinecone if you need speed and reliability.
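Whether you build or buy, keeping vendor calls behind your own interface limits lock-in. The sketch below defines a minimal `VectorStore` protocol (a hypothetical interface, not any vendor's SDK) and an in-memory adapter that is handy for tests and pilots; a vendor-backed adapter would implement the same two methods.

```python
import math
from typing import Dict, List, Protocol, Tuple


class VectorStore(Protocol):
    """Minimal interface the application codes against; each vendor gets its own adapter."""

    def upsert(self, ids: List[str], vectors: List[List[float]]) -> None: ...

    def query(self, vector: List[float], top_k: int) -> List[Tuple[str, float]]: ...


class InMemoryStore:
    """Reference adapter using brute-force cosine similarity."""

    def __init__(self) -> None:
        self._data: Dict[str, List[float]] = {}

    def upsert(self, ids: List[str], vectors: List[List[float]]) -> None:
        self._data.update(zip(ids, vectors))  # insert or overwrite by id

    def query(self, vector: List[float], top_k: int) -> List[Tuple[str, float]]:
        def cos(a: List[float], b: List[float]) -> float:
            dot = sum(x * y for x, y in zip(a, b))
            na, nb = math.sqrt(sum(x * x for x in a)), math.sqrt(sum(y * y for y in b))
            return dot / (na * nb) if na and nb else 0.0

        scored = [(i, cos(vector, v)) for i, v in self._data.items()]
        return sorted(scored, key=lambda p: p[1], reverse=True)[:top_k]


def index_chunks(store: VectorStore, ids: List[str], vectors: List[List[float]]) -> None:
    # Application code depends only on the VectorStore protocol, not on a vendor SDK.
    store.upsert(ids, vectors)
```

Switching providers then means writing one new adapter and rerunning the same retrieval tests, rather than touching every call site.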

Implementation Playbook

30 Days

- Define ingestion pipeline architecture

- Select chunking strategy and tools

- Run pilot with small dataset

- Establish evaluation metrics

60 Days

- Add security and guardrails

- Improve chunking and retrieval accuracy

- Expand data ingestion sources

- Introduce monitoring and observability

90 Days

- Optimize performance and cost

- Scale ingestion pipelines

- Implement governance and compliance policies

- Automate workflows
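Monitoring and observability can start small during the pilot. The sketch below is a hypothetical decorator that records call counts, error counts, and latency per pipeline stage in memory; in production these numbers would be exported to a metrics or tracing backend (for example, via OpenTelemetry), and token counts would be added from your model provider's responses. Neither integration is shown.

```python
import time
from dataclasses import dataclass, field
from functools import wraps
from typing import Callable, Dict, List


@dataclass
class StageMetrics:
    calls: int = 0
    errors: int = 0
    latencies_ms: List[float] = field(default_factory=list)


METRICS: Dict[str, StageMetrics] = {}


def observed(stage: str) -> Callable:
    """Decorator recording call count, error count, and latency for a pipeline stage."""
    def decorator(fn: Callable) -> Callable:
        @wraps(fn)
        def wrapper(*args, **kwargs):
            m = METRICS.setdefault(stage, StageMetrics())
            m.calls += 1
            start = time.perf_counter()
            try:
                return fn(*args, **kwargs)
            except Exception:
                m.errors += 1
                raise
            finally:
                # Recorded for both successful and failed calls.
                m.latencies_ms.append((time.perf_counter() - start) * 1000)
        return wrapper
    return decorator


@observed("chunk")
def chunk(text: str) -> List[str]:
    """Example stage: split on blank lines."""
    return [p for p in text.split("\n\n") if p.strip()]
```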

Common Mistakes & How to Avoid Them

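- Defaulting to naive fixed-size splits: test semantic or structure-aware chunking on your own documents and keep whichever retrieves better

- Ignoring evaluation and testing: build a set of test questions early and measure retrieval before and after every pipeline change

- Lack of data governance: define retention policies, access controls, and audit logging before ingesting sensitive data

- Poor observability leading to blind spots: track token usage, latency, and error rates from the first pilot

- Unexpected cost spikes: estimate embedding and storage costs before bulk ingestion and set usage alerts

- Over-automation without human validation: spot-check parsed output and chunks, especially for scanned or complex documents

- Vendor lock-in without abstraction layers: keep vendor-specific calls behind your own interfaces

- Ignoring prompt injection risks: screen ingested content for instruction-like text and treat retrieved text as untrusted data

- Poor metadata management: capture source, date, section, and access labels at ingestion time

- Weak integration planning: map data sources, destinations, and update frequency before choosing tools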
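Cost spikes are easier to avoid when ingestion cost is estimated before a bulk run. The sketch below gives a rough embedding-cost estimate; the roughly-4-characters-per-token ratio is a common rule of thumb for English text rather than an exact tokenizer count, and the price in the example is a hypothetical placeholder, so substitute your provider's actual rate.

```python
def estimate_embedding_cost(total_chars: int, usd_per_million_tokens: float,
                            chars_per_token: float = 4.0, overlap_ratio: float = 0.0) -> float:
    """Rough embedding cost for a corpus.

    chars_per_token=4 is a rule of thumb for English, not an exact tokenizer count.
    overlap_ratio accounts for text embedded twice due to chunk overlap (e.g. 0.2 for 20%).
    """
    tokens = total_chars / chars_per_token * (1 + overlap_ratio)
    return tokens / 1_000_000 * usd_per_million_tokens


if __name__ == "__main__":
    # 400 million characters at a hypothetical $0.10 per million tokens, with 20% overlap.
    print(f"${estimate_embedding_cost(400_000_000, 0.10, overlap_ratio=0.2):.2f}")  # prints $12.00
```

Re-embedding the whole corpus after every chunking change multiplies this figure, which is one reason to settle chunking on a small pilot set first.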

FAQs

1. What is document ingestion in AI?

Document ingestion is the process of collecting, processing, and preparing data so AI systems can use it effectively for retrieval and reasoning.

2. What is chunking and why is it important?

Chunking splits large documents into smaller passages. Smaller chunks fit within embedding and context-window limits and let retrieval return the specific passages that answer a query rather than whole documents.

3. What is semantic chunking?

Semantic chunking groups content based on meaning instead of fixed sizes, improving context and retrieval quality.

4. Can I use open-source tools for ingestion pipelines?

Yes, tools like LangChain and LlamaIndex provide strong open-source options for building pipelines.

5. What is RAG in ingestion pipelines?

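RAG combines retrieval and generation: the system first retrieves relevant chunks from an index, then passes them to the model as context for its response. The ingestion pipeline is what builds and maintains that index.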

6. How do I reduce hallucinations in AI systems?

Hallucinations cannot be eliminated entirely, but proper chunking, high-quality data sources, and evaluation frameworks that test retrieval and answer accuracy all reduce them.

7. Are these tools secure for enterprise use?

Some tools provide enterprise-grade security, but details may vary and should be verified.

8. Can I bring my own AI model?

Many tools support BYO models or multi-model setups depending on architecture.

9. What is the cost structure of these tools?

Costs vary and may include usage-based, subscription, or open-source models.

10. Do these tools support real-time data ingestion?

Some tools support streaming ingestion, but capabilities vary.

11. How do I evaluate pipeline performance?

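Measure retrieval quality with metrics such as recall@k and mean reciprocal rank (MRR) on a set of test questions with known relevant chunks, and track latency and cost per query alongside them.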
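A minimal sketch of two standard retrieval metrics: recall@k (the share of each question's relevant chunks that appear in the top k results, averaged over questions) and mean reciprocal rank (the average of 1/rank of the first relevant result). The query and chunk IDs in the test data are hypothetical; in practice `relevant` comes from a hand-labeled question set.

```python
from typing import Dict, List, Set


def recall_at_k(retrieved: Dict[str, List[str]], relevant: Dict[str, Set[str]], k: int) -> float:
    """Mean over queries of |relevant intersect top-k retrieved| / |relevant|."""
    scores = []
    for query, rel in relevant.items():
        top_k = set(retrieved.get(query, [])[:k])
        scores.append(len(rel & top_k) / len(rel) if rel else 0.0)
    return sum(scores) / len(scores) if scores else 0.0


def mean_reciprocal_rank(retrieved: Dict[str, List[str]], relevant: Dict[str, Set[str]]) -> float:
    """Mean over queries of 1/rank of the first relevant result (0 if none is retrieved)."""
    total = 0.0
    for query, rel in relevant.items():
        for rank, chunk_id in enumerate(retrieved.get(query, []), start=1):
            if chunk_id in rel:
                total += 1.0 / rank
                break
    return total / len(relevant) if relevant else 0.0
```

Tracking both helps: recall@k shows whether the right chunks are found at all, while MRR shows whether they are ranked near the top, where the model is most likely to use them.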

12. Can I switch tools later?

Yes, but switching can be complex if vendor lock-in is high, so plan architecture carefully.

Conclusion

Document ingestion and chunking pipelines are essential for building accurate, scalable, and trustworthy AI systems. The best tool depends on your data sources, team skills, security needs, deployment model, and long-term AI roadmap. Start by shortlisting tools that match your use case, then run a focused pilot with real documents, retrieval tests, and cost tracking. Before scaling, verify security controls, evaluation quality, guardrails, and observability so your AI system remains reliable, controlled, and production-ready.

Next steps:

- Shortlist tools based on your use case, data sources, and required integrations.

- Run a pilot using real documents to test chunking quality, retrieval accuracy, and cost efficiency.

- Verify security, evaluation, and governance before scaling to production to ensure reliability and compliance.