Introduction

Agent memory stores are specialized systems that let AI agents retain, recall, and update knowledge over time. Unlike stateless AI interactions, where every request starts from scratch, these platforms let agents carry context across sessions, which matters for any real-world application that depends on prior interactions.

This category has become critical as AI shifts from simple prompt-response systems to persistent, autonomous agents capable of long-running workflows. Memory stores power everything from personalized copilots to enterprise automation systems that learn continuously.

Real-world use cases include:

- Long-term conversational agents (customer support, assistants)

- Personalized recommendation systems

- Autonomous research agents

- Workflow automation with historical context

- Multi-agent collaboration with shared memory

Key evaluation criteria:

- Memory types (short-term vs long-term)

- Retrieval accuracy and latency

- Vector + structured storage support

- Integration with RAG pipelines

- Observability and debugging tools

- Data privacy and retention controls

- Cost efficiency at scale

- Scalability and performance

- Model compatibility (BYO vs hosted)

- Governance and auditability

Best for: AI engineers, CTOs, and teams building persistent AI agents, copilots, or automation systems requiring contextual memory.

Not ideal for: Simple stateless applications, one-off prompts, or lightweight chatbots where memory adds unnecessary complexity.

What’s Changed in Agent Memory Stores

- Shift from simple vector databases to hybrid memory (vector + graph + structured)

- Native support for agentic workflows and tool-calling memory updates

- Emergence of episodic, semantic, and procedural memory layers

- Built-in evaluation tools for memory relevance and recall accuracy

- Guardrails to prevent sensitive data leakage across sessions

- Multi-agent shared memory environments becoming standard

- Fine-grained retention controls for compliance and privacy

- Cost-aware memory pruning and summarization strategies

- Observability tools for tracing memory usage and decisions

- BYO model support and multi-model compatibility

- Real-time memory updates for streaming agents
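The layered-memory and cost-aware pruning items above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not any vendor's implementation: the `MemoryItem` fields, the `retention_score` rule (importance discounted by age), and the `prune_to_budget` helper are all hypothetical names chosen for the example.

```python
from dataclasses import dataclass

# Layer names follow the episodic/semantic/procedural split listed above.
LAYERS = ("episodic", "semantic", "procedural")

@dataclass
class MemoryItem:
    text: str
    layer: str          # one of LAYERS
    importance: float   # 0.0-1.0, assigned by the application (assumption)
    age_days: float     # time since the item was last used
    tokens: int         # approximate storage/prompt cost of the item

def retention_score(item: MemoryItem, half_life_days: float = 30.0) -> float:
    """Importance discounted by exponential decay on age (illustrative rule)."""
    decay = 0.5 ** (item.age_days / half_life_days)
    return item.importance * decay

def prune_to_budget(items: list[MemoryItem], token_budget: int) -> list[MemoryItem]:
    """Keep the highest-scoring items whose total token cost fits the budget."""
    kept, used = [], 0
    for item in sorted(items, key=retention_score, reverse=True):
        if used + item.tokens <= token_budget:
            kept.append(item)
            used += item.tokens
    return kept

memories = [
    MemoryItem("User prefers email over phone", "semantic", 0.9, 10, 8),
    MemoryItem("Chatted about the weather", "episodic", 0.2, 60, 6),
    MemoryItem("Steps to reset a password", "procedural", 0.7, 5, 12),
]
kept = prune_to_budget(memories, token_budget=20)
print([m.layer for m in kept])
```

In this sketch the old, low-importance episodic item is the one dropped. A real system would more likely summarize it before deletion rather than discard it outright.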

Quick Buyer Checklist (Scan-Friendly)

- Does it support both short-term and long-term memory?

- Can it integrate with your RAG pipeline or vector database?

- Does it offer memory evaluation or testing tools?

- Are guardrails available to prevent data leakage?

- What are the latency and cost implications at scale?

- Does it support BYO models or multi-model routing?

- Are audit logs and admin controls available?

- How easy is it to debug memory-related issues?

- Does it support multi-agent shared memory?

- Is there a risk of vendor lock-in?

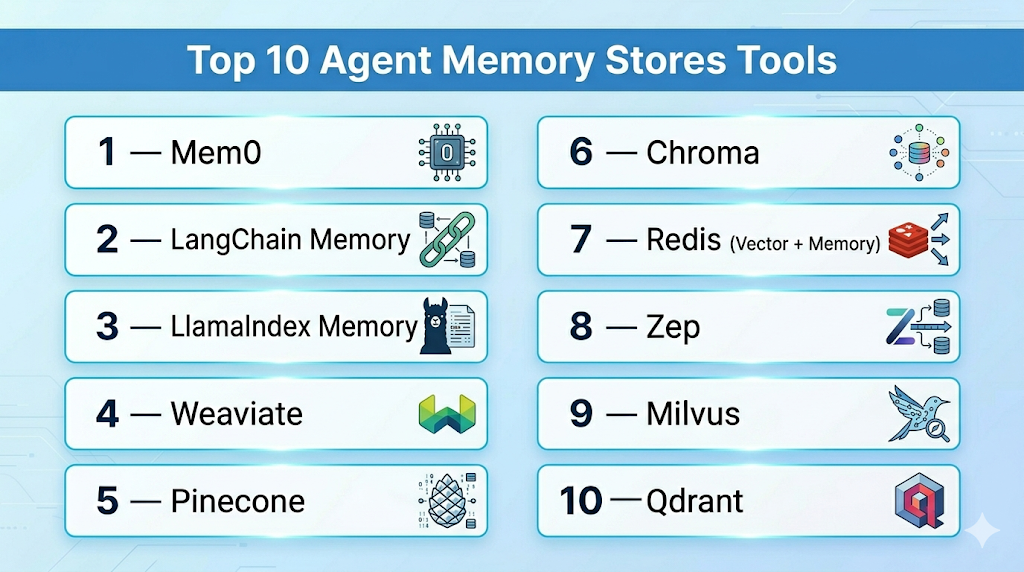

Top 10 Agent Memory Stores Tools

1 — Mem0

One-line verdict: Best for developers building persistent AI agents with structured and contextual memory layers.

Short description:

Mem0 focuses on structured memory management for AI agents, enabling long-term memory storage with retrieval optimization. It is commonly used in agent-based applications requiring contextual continuity.

Standout Capabilities

- Structured memory abstraction

- Automatic memory summarization

- Context-aware retrieval

- Lightweight integration

- Agent-specific memory isolation

- Memory scoring and prioritization

AI-Specific Depth

- Model support: BYO model

- RAG / knowledge integration: Compatible with vector databases

- Evaluation: Basic memory scoring

- Guardrails: Limited

- Observability: Basic

Pros

- Simple to integrate

- Optimized for agent workflows

- Lightweight architecture

Cons

- Limited enterprise features

- Basic observability

- Guardrails not robust

Security & Compliance

Not publicly stated

Deployment & Platforms

Cloud / Self-hosted

Integrations & Ecosystem

Provides APIs and SDKs for integration into agent frameworks.

- Python SDK

- REST APIs

- Vector DB compatibility

- Agent frameworks

Pricing Model

Not publicly stated

Best-Fit Scenarios

- Personal AI assistants

- Lightweight agent systems

- Startup prototypes

2 — LangChain Memory

One-line verdict: Best for developers already using LangChain who need flexible memory components.

Short description:

LangChain provides modular memory systems that integrate directly into agent pipelines, supporting various memory strategies.

Standout Capabilities

- Multiple memory types

- Tight agent integration

- Custom memory pipelines

- Flexible storage backends

- Community ecosystem

AI-Specific Depth

- Model support: Multi-model

- RAG / knowledge integration: Strong

- Evaluation: Limited

- Guardrails: N/A

- Observability: Basic

Pros

- Highly flexible

- Large ecosystem

- Easy to extend

Cons

- Requires engineering effort

- Limited built-in governance

- Debugging complexity

Security & Compliance

Not publicly stated

Deployment & Platforms

Cloud / Self-hosted

Integrations & Ecosystem

- Vector DBs

- APIs

- Custom storage

- Agent frameworks

Pricing Model

Open-source

Best-Fit Scenarios

- Custom AI agents

- RAG systems

- Developer experimentation

3 — LlamaIndex Memory

One-line verdict: Best for data-centric memory systems tightly integrated with retrieval pipelines.

Short description:

LlamaIndex focuses on connecting data sources with memory systems, enabling structured retrieval and persistent context.

Standout Capabilities

- Data connectors

- Index-based memory

- Retrieval optimization

- Query transformation

- Context persistence

AI-Specific Depth

- Model support: BYO / Multi-model

- RAG / knowledge integration: Strong

- Evaluation: Basic

- Guardrails: N/A

- Observability: Moderate

Pros

- Strong data integration

- Flexible indexing

- Scalable

Cons

- Requires setup effort

- Limited guardrails

- Complex tuning

Security & Compliance

Not publicly stated

Deployment & Platforms

Cloud / Self-hosted

Integrations & Ecosystem

- Data connectors

- APIs

- Vector DBs

- Storage layers

Pricing Model

Open-source + enterprise

Best-Fit Scenarios

- Knowledge-heavy agents

- Enterprise search

- Data pipelines

4 — Weaviate

One-line verdict: Best for scalable vector-based memory with built-in AI-native capabilities.

Short description:

Weaviate is a vector database with memory capabilities, supporting semantic search and contextual storage.

Standout Capabilities

- Vector-native storage

- Hybrid search

- Schema-based data

- Scalability

- Built-in modules

AI-Specific Depth

- Model support: Multi-model

- RAG / knowledge integration: Strong

- Evaluation: N/A

- Guardrails: Limited

- Observability: Moderate

Pros

- Highly scalable

- Strong performance

- Flexible schema

Cons

- Not agent-native

- Requires setup

- Limited guardrails

Security & Compliance

Not publicly stated

Deployment & Platforms

Cloud / Self-hosted

Integrations & Ecosystem

- APIs

- SDKs

- Vector tools

- ML pipelines

Pricing Model

Usage-based / Open-source

Best-Fit Scenarios

- Large-scale memory

- Semantic search

- RAG pipelines

5 — Pinecone

One-line verdict: Best for production-grade vector memory with low latency and high reliability.

Short description:

Pinecone is a managed vector database optimized for fast similarity search and scalable memory storage.

Standout Capabilities

- Managed infrastructure

- Low-latency retrieval

- Scalability

- High availability

- Indexing performance

AI-Specific Depth

- Model support: BYO

- RAG / knowledge integration: Strong

- Evaluation: N/A

- Guardrails: N/A

- Observability: Moderate

Pros

- Fully managed

- Reliable performance

- Easy scaling

Cons

- Cost at scale

- Limited native agent features

- Vendor dependency

Security & Compliance

Not publicly stated

Deployment & Platforms

Cloud

Integrations & Ecosystem

- APIs

- SDKs

- ML tools

- Data pipelines

Pricing Model

Usage-based

Best-Fit Scenarios

- Production AI systems

- Large-scale retrieval

- Enterprise apps

6 — Chroma

One-line verdict: Best for simple, open-source memory storage with quick setup.

Short description:

Chroma is an open-source vector database designed for simplicity and fast experimentation.

Standout Capabilities

- Easy setup

- Local-first approach

- Lightweight

- Open-source flexibility

- Developer-friendly

AI-Specific Depth

- Model support: BYO

- RAG / knowledge integration: Moderate

- Evaluation: N/A

- Guardrails: N/A

- Observability: Basic

Pros

- Simple to use

- Free and open-source

- Fast prototyping

Cons

- Limited scalability

- Basic features

- Not enterprise-ready

Security & Compliance

Not publicly stated

Deployment & Platforms

Local / Self-hosted

Integrations & Ecosystem

- Python SDK

- APIs

- Vector tools

Pricing Model

Open-source

Best-Fit Scenarios

- Prototyping

- Small apps

- Developer testing

7 — Redis (Vector + Memory)

One-line verdict: Best for combining traditional caching with vector-based memory for real-time agents.

Short description:

Redis extends into AI memory with vector search and real-time data storage capabilities.

Standout Capabilities

- Real-time performance

- Hybrid storage

- Scalability

- Caching + memory

- Low latency

AI-Specific Depth

- Model support: BYO

- RAG / knowledge integration: Strong

- Evaluation: N/A

- Guardrails: N/A

- Observability: Strong

Pros

- Extremely fast

- Mature ecosystem

- Flexible

Cons

- Requires expertise

- Not agent-native

- Setup complexity

Security & Compliance

Not publicly stated

Deployment & Platforms

Cloud / Self-hosted

Integrations & Ecosystem

- APIs

- SDKs

- Databases

- Cloud platforms

Pricing Model

Usage-based / Open-source

Best-Fit Scenarios

- Real-time agents

- High-frequency workloads

- Hybrid systems

8 — Zep

One-line verdict: Best for chat memory persistence with long-term conversational context.

Short description:

Zep focuses on storing and retrieving chat histories efficiently for conversational AI systems.

Standout Capabilities

- Chat history storage

- Memory summarization

- Searchable conversations

- Long-term persistence

- Lightweight API
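The combination of chat history storage and memory summarization can be pictured as a rolling window: recent turns are kept verbatim and older turns are folded into a running summary. The sketch below is a generic illustration, not Zep's API. `ChatMemory` and `naive_summarize` are hypothetical names, and the summarizer is a placeholder where a real system would call a language model.

```python
from collections import deque

def naive_summarize(previous_summary, messages):
    """Placeholder summarizer (assumption): a real system would call an LLM here."""
    joined = " | ".join(f"{m['role']}: {m['content']}" for m in messages)
    return f"{previous_summary} | {joined}" if previous_summary else joined

class ChatMemory:
    def __init__(self, window=4, summarize=naive_summarize):
        self.window = window          # number of recent turns kept verbatim
        self.summarize = summarize
        self.summary = ""
        self.recent = deque()

    def add(self, role, content):
        self.recent.append({"role": role, "content": content})
        # Once the window overflows, fold the oldest messages into the summary.
        overflow = []
        while len(self.recent) > self.window:
            overflow.append(self.recent.popleft())
        if overflow:
            self.summary = self.summarize(self.summary, overflow)

    def context(self):
        """Return what would be sent to the model: summary plus recent turns."""
        return {"summary": self.summary, "recent": list(self.recent)}

memory = ChatMemory(window=2)
turns = ["Hi", "Hello! How can I help?", "My order is late", "Let me check"]
for i, text in enumerate(turns):
    memory.add("user" if i % 2 == 0 else "assistant", text)
ctx = memory.context()
print(ctx["summary"])
print([m["content"] for m in ctx["recent"]])
```

The window size trades prompt cost against fidelity: a larger window keeps more exact wording, while a smaller one relies more heavily on the summary.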

AI-Specific Depth

- Model support: BYO

- RAG / knowledge integration: Moderate

- Evaluation: N/A

- Guardrails: Limited

- Observability: Basic

Pros

- Great for chat apps

- Simple API

- Efficient storage

Cons

- Limited beyond chat

- Basic features

- Not enterprise-grade

Security & Compliance

Not publicly stated

Deployment & Platforms

Cloud / Self-hosted

Integrations & Ecosystem

- APIs

- SDKs

- Chat frameworks

Pricing Model

Not publicly stated

Best-Fit Scenarios

- Chatbots

- Conversational agents

- Support tools

9 — Milvus

One-line verdict: Best for high-performance vector memory at massive scale.

Short description:

Milvus is an open-source vector database designed for large-scale similarity search and memory storage.

Standout Capabilities

- High scalability

- Distributed architecture

- Fast indexing

- Large datasets

- Performance optimization

AI-Specific Depth

- Model support: BYO

- RAG / knowledge integration: Strong

- Evaluation: N/A

- Guardrails: N/A

- Observability: Moderate

Pros

- Scales massively

- Open-source

- High performance

Cons

- Complex setup

- Requires expertise

- Limited agent features

Security & Compliance

Not publicly stated

Deployment & Platforms

Self-hosted / Cloud

Integrations & Ecosystem

- APIs

- SDKs

- Data tools

Pricing Model

Open-source

Best-Fit Scenarios

- Enterprise scale

- Big data AI

- Search systems

10 — Qdrant

One-line verdict: Best for flexible vector memory with strong filtering and metadata support.

Short description:

Qdrant provides vector storage with advanced filtering and structured metadata capabilities.

Standout Capabilities

- Payload filtering

- Hybrid search

- High performance

- Easy deployment

- REST APIs
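Payload filtering means restricting a similarity search to records whose metadata matches given conditions. The sketch below shows the idea in plain Python and is not Qdrant's client API. `filtered_search` and the record layout are assumptions for illustration, and a production engine would typically apply the filter inside its index rather than with a linear scan.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def filtered_search(points, query_vector, must_match, top_k=3):
    """Keep points whose payload matches every key in must_match, then rank by similarity."""
    candidates = [
        p for p in points
        if all(p["payload"].get(k) == v for k, v in must_match.items())
    ]
    ranked = sorted(candidates, key=lambda p: cosine(p["vector"], query_vector), reverse=True)
    return ranked[:top_k]

points = [
    {"id": 1, "vector": [1.0, 0.0], "payload": {"user_id": "alice", "kind": "preference"}},
    {"id": 2, "vector": [0.9, 0.1], "payload": {"user_id": "bob", "kind": "preference"}},
    {"id": 3, "vector": [0.0, 1.0], "payload": {"user_id": "alice", "kind": "event"}},
]
hits = filtered_search(points, [1.0, 0.0], {"user_id": "alice"})
print([p["id"] for p in hits])
```

For agent memory, this kind of filter is what keeps one user's or one agent's memories from surfacing in another's results, even when the vectors are close.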

AI-Specific Depth

- Model support: BYO

- RAG / knowledge integration: Strong

- Evaluation: N/A

- Guardrails: N/A

- Observability: Moderate

Pros

- Flexible filtering

- Good performance

- Easy setup

Cons

- Not agent-native

- Limited evaluation tools

- Requires integration effort

Security & Compliance

Not publicly stated

Deployment & Platforms

Cloud / Self-hosted

Integrations & Ecosystem

- APIs

- SDKs

- ML tools

Pricing Model

Open-source + managed

Best-Fit Scenarios

- Metadata-heavy memory

- RAG systems

- AI apps

Comparison Table

| Tool Name | Best For | Deployment | Model Flexibility | Strength | Watch-Out | Public Rating |
|---|---|---|---|---|---|---|
| Mem0 | Agent memory | Cloud / Self-hosted | BYO | Structured memory | Limited features | N/A |
| LangChain Memory | Developers | Self-hosted | Multi-model | Flexibility | Complexity | N/A |
| LlamaIndex | Data pipelines | Cloud / Self-hosted | Multi-model | Data integration | Setup effort | N/A |
| Weaviate | Scale | Cloud / Self-hosted | Multi-model | Vector-native | Not agent-native | N/A |
| Pinecone | Production | Cloud | BYO | Reliability | Cost | N/A |
| Chroma | Prototyping | Local | BYO | Simplicity | Limited scale | N/A |
| Redis | Real-time | Cloud / Self-hosted | BYO | Speed | Complexity | N/A |
| Zep | Chat memory | Cloud / Self-hosted | BYO | Conversation focus | Limited scope | N/A |
| Milvus | Large scale | Cloud / Self-hosted | BYO | Performance | Setup complexity | N/A |
| Qdrant | Flexible storage | Cloud / Self-hosted | BYO | Filtering | Integration effort | N/A |

Scoring & Evaluation

Scores are comparative and based on practical usability across real-world deployments, not absolute benchmarks.

| Tool | Core | Reliability/Eval | Guardrails | Integrations | Ease | Perf/Cost | Security/Admin | Support | Weighted Total |
|---|---|---|---|---|---|---|---|---|---|
| Mem0 | 8 | 6 | 5 | 7 | 8 | 7 | 6 | 6 | 7.0 |
| LangChain | 9 | 6 | 5 | 9 | 6 | 7 | 6 | 8 | 7.4 |
| LlamaIndex | 9 | 7 | 5 | 8 | 6 | 7 | 6 | 7 | 7.4 |
| Weaviate | 8 | 6 | 5 | 8 | 7 | 8 | 6 | 7 | 7.3 |
| Pinecone | 8 | 7 | 5 | 8 | 8 | 8 | 7 | 7 | 7.6 |
| Chroma | 6 | 5 | 4 | 6 | 9 | 6 | 5 | 6 | 6.1 |
| Redis | 9 | 7 | 5 | 9 | 6 | 9 | 7 | 8 | 7.9 |
| Zep | 7 | 5 | 5 | 6 | 8 | 7 | 6 | 6 | 6.6 |
| Milvus | 9 | 6 | 5 | 7 | 5 | 9 | 6 | 7 | 7.3 |
| Qdrant | 8 | 6 | 5 | 8 | 7 | 8 | 6 | 7 | 7.2 |
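If you want to re-rank the tools against your own priorities, a weighted total is the sum of each criterion score multiplied by its weight. The weights behind the Weighted Total column are not stated in this article, so the `WEIGHTS` below are purely hypothetical and the results will not match that column. Treat this as a sketch for plugging in your own values.

```python
CRITERIA = ["Core", "Reliability/Eval", "Guardrails", "Integrations",
            "Ease", "Perf/Cost", "Security/Admin", "Support"]

# Hypothetical weights (assumption): replace with your own priorities; they must sum to 1.
WEIGHTS = {
    "Core": 0.25, "Reliability/Eval": 0.15, "Guardrails": 0.10, "Integrations": 0.15,
    "Ease": 0.10, "Perf/Cost": 0.10, "Security/Admin": 0.10, "Support": 0.05,
}

def weighted_total(scores, weights=WEIGHTS):
    """Sum of score * weight over all criteria, rounded to two decimals."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1")
    return round(sum(scores[c] * weights[c] for c in weights), 2)

# Criterion scores copied from the table rows for Mem0 and Redis.
mem0 = dict(zip(CRITERIA, [8, 6, 5, 7, 8, 7, 6, 6]))
redis = dict(zip(CRITERIA, [9, 7, 5, 9, 6, 9, 7, 8]))

print("Mem0:", weighted_total(mem0))
print("Redis:", weighted_total(redis))
```

Shifting weight toward Guardrails or Security/Admin, for example, narrows the gaps between tools, since most score similarly on those criteria.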

Top 3 for Enterprise

- Pinecone

- Redis

- Milvus

Top 3 for SMB

- Qdrant

- Weaviate

- Zep

Top 3 for Developers

- LangChain Memory

- LlamaIndex

- Chroma

Which Agent Memory Store Is Right for You?

Solo / Freelancer

Use Chroma or Zep for simplicity and fast setup.

SMB

Qdrant or Weaviate offer a good balance of scalability and usability.

Mid-Market

LlamaIndex or Pinecone provide structured scaling and better integrations.

Enterprise

Redis, Pinecone, or Milvus for performance, reliability, and scale.

Regulated industries

Focus on self-hosted options with strict data control (Redis, Milvus).

Budget vs premium

- Budget: Chroma, Qdrant

- Premium: Pinecone, Redis

Build vs buy

- Build: LangChain + vector DB

- Buy: Managed solutions like Pinecone

Implementation Playbook

30 Days

- Define use cases

- Build pilot

- Set evaluation metrics

60 Days

- Add guardrails

- Improve memory accuracy

- Begin rollout

90 Days

- Optimize cost

- Add observability

- Scale deployment

Common Mistakes & How to Avoid Them

- Ignoring memory evaluation

- Over-storing irrelevant data

- No retention policy

- Poor observability

- Cost mismanagement

- Lack of guardrails

- Over-automation

- Vendor lock-in

- Weak security controls

- No testing framework

FAQs

1. What is an agent memory store?

An agent memory store is a system that allows AI agents to retain, organize, and retrieve past interactions or knowledge to improve future responses.

2. Do all AI agents need memory?

No. Stateless agents or simple tasks don’t require memory, but complex, persistent workflows benefit significantly from it.

3. Can I use open-source tools for memory storage?

Yes. Chroma, Milvus, and Qdrant are all open-source. Chroma is best suited to experimentation and prototyping, while Milvus and Qdrant are also positioned for production-scale workloads.

4. What is the difference between a vector database and a memory store?

A vector database handles similarity search, while a memory store includes logic for context management, retrieval strategies, and agent interaction.
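The split between the two roles can be made concrete with a small sketch. Everything here is an illustrative assumption: `embed` is a toy bag-of-words stand-in for a real embedding model, `VectorIndex` plays the vector-database role, and `MemoryStore` adds the memory-specific logic (user scoping, de-duplication, recency weighting) on top.

```python
import math
from collections import Counter

def embed(text):
    """Toy bag-of-words embedding (assumption): stands in for a real embedding model."""
    return Counter(text.lower().split())

def similarity(a, b):
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

class VectorIndex:
    """The vector-database role: store vectors, return nearest neighbours."""
    def __init__(self):
        self.rows = []  # list of (vector, record) pairs

    def upsert(self, vector, record):
        self.rows.append((vector, record))

    def query(self, vector, top_k):
        scored = [(similarity(vector, v), r) for v, r in self.rows]
        return sorted(scored, key=lambda s: s[0], reverse=True)[:top_k]

class MemoryStore:
    """The memory-store role: scoping, de-duplication, and recency on top of the index."""
    def __init__(self, index):
        self.index = index
        self.clock = 0  # logical time, incremented on every write

    def remember(self, user_id, text):
        self.clock += 1
        for _, record in self.index.rows:
            if record["user_id"] == user_id and record["text"] == text:
                record["last_seen"] = self.clock  # refresh instead of duplicating
                return
        self.index.upsert(embed(text), {"user_id": user_id, "text": text, "last_seen": self.clock})

    def recall(self, user_id, query, top_k=2, recency_weight=0.1):
        hits = self.index.query(embed(query), top_k=len(self.index.rows))
        scoped = [(s + recency_weight * r["last_seen"] / self.clock, r)
                  for s, r in hits if r["user_id"] == user_id and s > 0]
        ranked = sorted(scoped, key=lambda x: x[0], reverse=True)[:top_k]
        return [r["text"] for _, r in ranked]

store = MemoryStore(VectorIndex())
store.remember("alice", "prefers dark mode in the dashboard")
store.remember("bob", "prefers light mode in the dashboard")
store.remember("alice", "prefers dark mode in the dashboard")  # duplicate, only refreshed
print(store.recall("alice", "which mode does the user prefer"))
```

The index alone would have returned Bob's record as a near match. The scoping step in `recall` is what makes the result safe to feed back to Alice's agent.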

5. Is memory storage expensive?

Costs can increase at scale due to storage, retrieval, and compute usage, especially in high-frequency systems.

6. How do I evaluate memory quality?

You can measure retrieval accuracy, relevance of stored context, latency, and how well the agent uses memory in responses.
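Retrieval accuracy and latency can be measured with a small labeled test set. The sketch below uses recall@k as the accuracy metric, which is one common choice rather than the only one. The `evaluate` helper, the test cases, and the fixed-result retriever are hypothetical stand-ins for your own memory store and data.

```python
import time

def recall_at_k(retrieved_ids, relevant_ids, k):
    """Fraction of the relevant items that appear in the top-k retrieved results."""
    if not relevant_ids:
        return 0.0
    top_k = set(retrieved_ids[:k])
    return len(top_k & set(relevant_ids)) / len(relevant_ids)

def evaluate(retrieve, test_cases, k=3):
    """Run each labeled query through `retrieve` and report mean recall@k and latency."""
    recalls, latencies = [], []
    for case in test_cases:
        start = time.perf_counter()
        retrieved = retrieve(case["query"])
        latencies.append(time.perf_counter() - start)
        recalls.append(recall_at_k(retrieved, case["relevant"], k))
    return {
        "mean_recall_at_k": sum(recalls) / len(recalls),
        "mean_latency_ms": 1000 * sum(latencies) / len(latencies),
    }

# Hypothetical retriever returning fixed results, standing in for a real memory store.
fake_results = {
    "billing address": ["m1", "m7", "m3"],
    "favorite color": ["m9", "m2", "m4"],
}
test_cases = [
    {"query": "billing address", "relevant": ["m1", "m3"]},
    {"query": "favorite color", "relevant": ["m2", "m5"]},
]
report = evaluate(lambda q: fake_results[q], test_cases, k=3)
print(report)
```

Measuring how well the agent actually uses the retrieved memory in its responses needs a separate check, such as human review or a rubric-based grader, which this sketch does not cover.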

7. Are these tools secure?

Security varies by platform. Many require proper configuration for encryption, access control, and data governance.

8. Can I self-host these memory systems?

Yes, for most of them. Of the tools covered here, only Pinecone is listed as cloud-only. Self-hosting is often preferred for tighter control over data privacy.

9. Do these tools support multiple AI models?

Many support BYO models or multi-model environments, depending on the architecture.

10. How is user data handled in memory systems?

Data handling depends on configuration—retention policies, encryption, and access controls must be set properly.
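A retention policy can be as simple as a maximum age per data category, enforced by a periodic cleanup job. The sketch below assumes hypothetical category names and retention windows. Real values would come from your own compliance requirements, and a production job would also need to purge derived data such as embeddings and summaries.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

# Hypothetical retention windows per data category (assumption for illustration).
RETENTION = {
    "chat_turn": timedelta(days=30),
    "preference": timedelta(days=365),
    "pii": timedelta(days=7),
}

@dataclass
class Record:
    record_id: str
    category: str
    created_at: datetime

def expired(record, now):
    """A record expires once it is older than its category's retention window."""
    # Unknown categories get a zero window, so they expire immediately.
    window = RETENTION.get(record.category, timedelta(0))
    return now - record.created_at > window

def apply_retention(records, now):
    """Split records into those to keep and those to delete."""
    keep = [r for r in records if not expired(r, now)]
    delete = [r for r in records if expired(r, now)]
    return keep, delete

now = datetime(2025, 1, 31, tzinfo=timezone.utc)
records = [
    Record("r1", "chat_turn", now - timedelta(days=45)),
    Record("r2", "preference", now - timedelta(days=45)),
    Record("r3", "pii", now - timedelta(days=8)),
]
keep, delete = apply_retention(records, now)
print("keep:", [r.record_id for r in keep])
print("delete:", [r.record_id for r in delete])
```

Defaulting unknown categories to immediate expiry is a deliberately conservative choice in this sketch: data that nobody classified is removed rather than kept indefinitely.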

11. Can I migrate from one memory store to another?

Yes, but migration can be complex due to differences in schema, indexing, and data formats.

12. Are memory stores required for RAG systems?

Not strictly required, but they significantly enhance context retention and retrieval quality in RAG pipelines.

Conclusion

Agent memory stores let AI agents move beyond stateless interactions toward context-aware behavior. The right choice depends on whether you prioritize simplicity, scalability, or control, so test real use cases, validate memory accuracy, and confirm that privacy and cost controls are in place before scaling.