Introduction

In the current era of rapid digital transformation, data has become one of the most valuable assets an organization possesses. However, simply having data is not enough. The real challenge lies in the ability to process, analyze, and deliver that data at the speed of business. Having navigated the shift from monolithic data warehouses to distributed cloud architectures over the last two decades, I have seen that the most common point of failure is not the technology but the “data silos” and manual handoffs between teams. This is where DataOps changes the game. DataOps is a collaborative data management practice focused on improving the communication, integration, and automation of data flows between data managers and data consumers across an organization. The DataOps Certified Professional (DOCP) program is designed to turn these principles into actionable skills that drive real-world business value.

What is DataOps Certified Professional (DOCP)?

The DataOps Certified Professional (DOCP) is a specialized certification that validates an individual’s ability to implement DataOps methodologies. It focuses on breaking down the barriers between data engineering, data science, and operations to create a seamless, automated data supply chain. Unlike traditional data certifications that focus on a specific database, DOCP focuses on the process and orchestration of the entire lifecycle.

Comprehensive Certification Overview

| Track | Level | Who it’s for | Prerequisites | Skills Covered | Recommended Order |
| --- | --- | --- | --- | --- | --- |
| DataOps | Professional | Engineers, Architects, Leads | SQL/Linux Basics | Airflow, Kafka, dbt, CI/CD, IaC | 1st in DataOps Track |

Deep Dive: DataOps Certified Professional (DOCP)

What it is

The DOCP is an intensive 60-hour certification program provided by DevOpsSchool. It is built on the foundation of the DataOps Manifesto, emphasizing agile development, DevOps practices, and statistical process control. It teaches you how to treat “Data as Code,” ensuring that every change in your data pipeline is versioned, tested, and deployed automatically.
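One way to make “every change is versioned” concrete for the data itself, and not just the code, is to derive a version identifier from the content, much as Git identifies commits by hash. The sketch below is an illustration under stated assumptions: the helper names `canonical_bytes` and `dataset_version` are hypothetical, rows are assumed to be JSON-serializable dicts, and row order is treated as irrelevant.

```python
import hashlib
import json


def canonical_bytes(row: dict) -> bytes:
    """Serialize one row deterministically (sorted keys, no extra whitespace)."""
    return json.dumps(row, sort_keys=True, separators=(",", ":")).encode("utf-8")


def dataset_version(rows: list[dict]) -> str:
    """Return a short content hash that changes whenever any row changes.

    Rows are sorted by their canonical form first, so the version does not
    depend on the order in which an upstream system happened to emit them.
    """
    digest = hashlib.sha256()
    for encoded in sorted(canonical_bytes(r) for r in rows):
        digest.update(encoded)
        digest.update(b"\n")  # JSON output never contains a raw newline, so this marks row boundaries
    return digest.hexdigest()[:12]


if __name__ == "__main__":
    v1 = dataset_version([{"id": 1, "amount": 10.0}, {"id": 2, "amount": 5.5}])
    v2 = dataset_version([{"id": 2, "amount": 5.5}, {"id": 1, "amount": 10.0}])
    v3 = dataset_version([{"id": 1, "amount": 10.0}, {"id": 2, "amount": 6.0}])
    print(v1 == v2)  # True: same rows, different order
    print(v1 == v3)  # False: one value changed
```

In a pipeline, a version string like this could be stored alongside each published table, so a dashboard or model can record exactly which snapshot it consumed.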

Who should take it

- Data Engineers: Those who want to move away from manual “firefighting” and start building resilient, self-healing data pipelines.

- Software Engineers: Those looking to pivot into the data domain by applying their existing knowledge of CI/CD and automation.

- Engineering Managers: Those who need to understand the technical requirements of building a modern data team and how to measure its success.

- Data Architects: Those responsible for designing the high-level flow of data across multiple cloud and on-premises environments.

Skills you’ll gain

- End-to-End Orchestration: Use tools like Apache Airflow and Dagster to manage complex dependencies in data workflows.

- Automated Data Testing: Implement “Data Quality as Code” using tools like Great Expectations to catch schema changes or data drift before they reach the dashboard.

- Data Version Control: Manage transformations as code with dbt (data build tool) and track their history in Git.

- Cloud Infrastructure for Data: Deploy scalable data platforms using Kubernetes, Terraform, and cloud-native services (AWS, Azure, or GCP).

- Observability and Monitoring: Build real-time monitoring for your data pipelines using Prometheus, Grafana, and the ELK stack, and track pipeline health against reliability targets such as 99.9% availability.
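Orchestrators such as Airflow and Dagster start a task only after everything it depends on has finished. The sketch below does not use either tool’s API; it uses Python’s standard-library `graphlib` (Python 3.9+) to show the underlying idea: declare dependencies, derive a valid execution order, and reject cycles. The task names are hypothetical.

```python
from graphlib import CycleError, TopologicalSorter

# Hypothetical pipeline: each task maps to the set of tasks it depends on.
PIPELINE = {
    "extract_orders": set(),
    "extract_customers": set(),
    "validate_orders": {"extract_orders"},
    "join_orders_customers": {"validate_orders", "extract_customers"},
    "publish_dashboard": {"join_orders_customers"},
}


def execution_batches(dependencies: dict[str, set[str]]) -> list[list[str]]:
    """Group tasks into batches; tasks in the same batch could run in parallel.

    Raises ValueError if the dependencies contain a cycle, which an
    orchestrator would also have to reject.
    """
    sorter = TopologicalSorter(dependencies)
    try:
        sorter.prepare()
    except CycleError as exc:
        # The second element of args holds the nodes forming the cycle.
        raise ValueError(f"dependency cycle: {exc.args[1]}") from exc

    batches = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # sorted only for stable output
        batches.append(ready)
        sorter.done(*ready)  # pretend every task in the batch succeeded
    return batches


if __name__ == "__main__":
    for step, batch in enumerate(execution_batches(PIPELINE), start=1):
        print(step, batch)
```

Here `validate_orders` and `extract_customers` are independent, so a real orchestrator could run the two extracts in parallel; the join waits for both branches.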

Real-world projects you should be able to do

- Real-time Analytics Pipeline: Design and deploy a system that ingests data from IoT devices via Kafka, processes it with Spark, and stores it in a data lake.

- Zero-Downtime Data Migrations: Automate the migration of data from a legacy SQL server to a modern cloud warehouse like Snowflake or BigQuery without interrupting business operations.

- Self-Service Data Platform: Build a platform where data scientists can spin up their own isolated environments with pre-cleaned data using Docker and Kubernetes.

- Automated Governance Suite: Create a pipeline that automatically masks PII (Personally Identifiable Information) and checks for compliance with GDPR/CCPA.
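For the governance project above, masking can start as a transformation step that runs before data leaves a restricted zone. The sketch below is a simplified assumption, not a compliance-grade implementation: it masks email addresses with a deliberately simple regular expression and replaces designated identifier columns with a keyed hash (HMAC-SHA256), so tables can still be joined without exposing the raw value. The column names, key handling, and regex are illustrative. Note that pseudonymized values can still count as personal data under GDPR, so a step like this does not by itself establish compliance.

```python
import hashlib
import hmac
import re

# Deliberately simple pattern; real-world email matching is far more involved.
EMAIL_PATTERN = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def mask_emails(text: str) -> str:
    """Replace anything that looks like an email address with a fixed token."""
    return EMAIL_PATTERN.sub("[EMAIL]", text)


def pseudonymize(value: str, secret: bytes) -> str:
    """Keyed hash: the same input and key always give the same token,
    so masked tables can still be joined on this column."""
    return hmac.new(secret, value.encode("utf-8"), hashlib.sha256).hexdigest()[:16]


def mask_record(record: dict, id_columns: set[str], secret: bytes) -> dict:
    """Return a copy of the record with identifiers hashed and emails masked."""
    masked = {}
    for key, value in record.items():
        if key in id_columns and value is not None:
            masked[key] = pseudonymize(str(value), secret)
        elif isinstance(value, str):
            masked[key] = mask_emails(value)
        else:
            masked[key] = value  # numbers, None, etc. pass through unchanged
    return masked


if __name__ == "__main__":
    key = b"load-me-from-a-secret-store"  # hypothetical; never hard-code keys in practice
    row = {"customer_id": "C-1001", "note": "Contact jane.doe@example.com", "amount": 42}
    print(mask_record(row, {"customer_id"}, key))
```

Using a keyed hash rather than a plain hash means someone without the key cannot rebuild the mapping by hashing guessed customer IDs.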

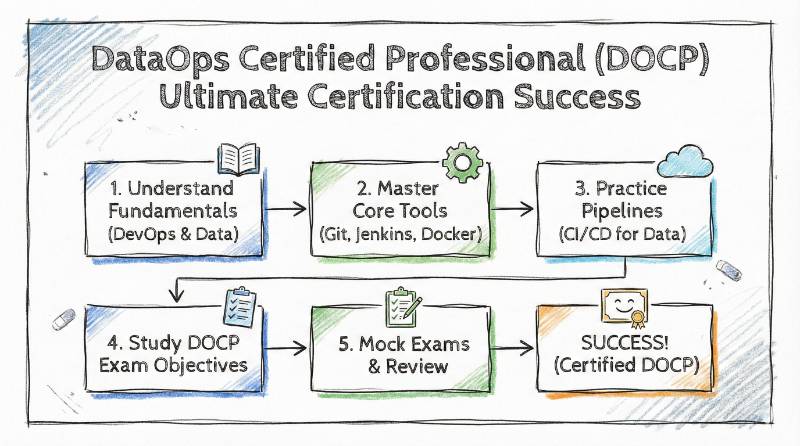

Preparation plan

- 7–14 Days (The Fast Track): Best for experienced DevOps engineers. Focus heavily on the differences between application CI/CD and Data CI/CD. Study the integration of dbt with Airflow.

- 30 Days (The Standard Path): Dedicate 2 hours daily. Spend the first two weeks on tool-specific labs (Docker, Airflow, SQL). Spend the last two weeks on orchestration and pipeline security.

- 60 Days (The Foundation Path): Recommended for those new to “Ops.” Start with Linux administration and Git. Move into Python scripting and SQL optimization, then finish with the core DOCP modules and a full-scale capstone project.

Common mistakes

- Over-Engineering: Trying to use every tool in the “Modern Data Stack” at once. Start simple and automate the biggest bottleneck first.

- Neglecting the Cultural Shift: Thinking DataOps is just about tools. If the data team and the ops team don’t talk, the tools won’t save you.

- Lack of Testing: Assuming the data is correct just because the pipeline didn’t “fail.” You must test the content of the data, not just the flow.
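To make “test the content of the data, not just the flow” concrete: a pipeline run can succeed while delivering nulls, duplicates, or impossible values. Tools like Great Expectations package such checks as reusable, declarative “expectations”; the sketch below deliberately avoids any library API and hand-rolls three checks in plain Python. The function names, the `orders` columns, and the thresholds are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class CheckResult:
    name: str
    passed: bool
    detail: str


def check_not_null(rows: list[dict], column: str) -> CheckResult:
    missing = sum(1 for r in rows if r.get(column) is None)
    return CheckResult(f"not_null({column})", missing == 0, f"{missing} null value(s)")


def check_unique(rows: list[dict], column: str) -> CheckResult:
    values = [r.get(column) for r in rows]
    duplicates = len(values) - len(set(values))
    return CheckResult(f"unique({column})", duplicates == 0, f"{duplicates} duplicate(s)")


def check_range(rows: list[dict], column: str, low: float, high: float) -> CheckResult:
    bad = [r[column] for r in rows if r.get(column) is not None and not low <= r[column] <= high]
    return CheckResult(f"range({column}, {low}, {high})", not bad, f"out of range: {bad}")


def run_checks(rows: list[dict]) -> list[CheckResult]:
    """Content checks for a hypothetical `orders` table."""
    return [
        check_not_null(rows, "order_id"),
        check_unique(rows, "order_id"),
        check_range(rows, "amount", 0, 10_000),
    ]


if __name__ == "__main__":
    batch = [
        {"order_id": 1, "amount": 120.0},
        {"order_id": 2, "amount": -5.0},  # negative amount: loads fine, but is wrong
        {"order_id": 2, "amount": 40.0},  # duplicate key
    ]
    for result in run_checks(batch):
        print("PASS" if result.passed else "FAIL", result.name, result.detail)
```

In a CI job or orchestrator task, any failed check would typically turn into a non-zero exit or a raised exception so the bad batch never reaches the dashboard.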

Best next certification after this

Once you have mastered the flow of data, the next logical step is mastering the flow of machine learning models. The MLOps Certified Professional (MLOCP) is a natural follow-up, as it applies DOCP principles to the specific challenges of model training and deployment.

Choose Your Path: 6 Learning Tracks

In my time leading engineering departments, I’ve found that career growth is most effective when you choose a “major” but stay familiar with the “minors.” Here are the six primary paths:

- DevOps: The core of modern software. Focus on the speed of delivery for applications.

- DevSecOps: Integrating security at every stage. A must-have for banking and healthcare sectors.

- SRE (Site Reliability Engineering): The bridge between development and operations, focusing on system health and availability.

- AIOps/MLOps: The cutting edge. AIOps applies AI to IT operations, while MLOps manages the lifecycle of ML models.

- DataOps: The focus of this guide. Streamlining the data supply chain for analytics and BI.

- FinOps: The business side of engineering. Optimizing cloud costs and ensuring every dollar spent on AWS/Azure is justified.

Role → Recommended Certifications

| Current Role | Recommended Certification Journey |
| --- | --- |
| DevOps Engineer | DevOps Certified Professional (DCP) → SRE → DOCP |
| SRE | SRE Certified Professional → AIOps Certified Professional |
| Platform Engineer | Certified Kubernetes Administrator (CKA) → DOCP |
| Cloud Engineer | AWS/Azure Solutions Architect → SRE Certified Professional |
| Security Engineer | DevSecOps Certified Professional (DSOCP) → DOCP |
| Data Engineer | DataOps Certified Professional (DOCP) → MLOps (MLOCP) |
| FinOps Practitioner | FinOps Certified Professional → Business Analytics |
| Engineering Manager | DOCP + SRE + Master in DevOps Engineering (MDE) |

Top Institutions for DOCP Training & Certification

- DevOpsSchool: As the primary delivery partner for the DOCP program, DevOpsSchool offers a robust, 60-hour instructor-led curriculum. They are highly regarded for their “Performance-Based” learning approach, providing students with 24/7 access to cloud-native labs and a lifetime of technical support. Their focus is on ensuring you can build real-world pipelines, not just pass a multiple-choice test.

- Cotocus: Cotocus is a leading IT consulting and training firm that specializes in large-scale digital transformations. Their DOCP training is heavily focused on corporate use cases, making it an excellent choice for teams looking to implement DataOps within existing enterprise frameworks. They emphasize project-based milestones and peer-to-peer collaboration.

- Scmgalaxy: One of the oldest and most trusted community hubs in the DevOps ecosystem, Scmgalaxy provides a wealth of documentation and community-driven tutorials for DOCP aspirants. They offer specialized bootcamps that focus on the integration of Source Code Management (SCM) with data pipelines, ensuring that “Data as Code” is more than just a buzzword.

- BestDevOps: BestDevOps focuses on career-oriented training, blending technical mastery with job-readiness. Their DOCP program includes dedicated modules on portfolio building and interview preparation specifically tailored for the high-demand DataOps market. It is a great choice for professionals looking to pivot roles quickly while gaining deep technical depth.

- DevSecOpsSchool: While their primary focus is security, DevSecOpsSchool provides critical elective training for DOCP candidates who need to focus on “Secure Data Delivery.” They teach how to automate security scanning and PII masking within your data pipelines, which is a mandatory skill for those working in highly regulated industries like FinTech or Healthcare.

- SRESchool: SRESchool bridges the gap between data flows and system reliability. For the DOCP track, they provide advanced modules on monitoring and observability, teaching you how to use Prometheus and Grafana to track pipeline health and “Data SLIs.” This is the go-to institution for learning how to keep your data platforms running with 99.9% uptime.

- AIOpsSchool: AIOpsSchool is essential for those who want to see where DataOps meets Artificial Intelligence. They offer specialized labs on using AI to manage data operations and provide the foundational knowledge needed if you plan to take the MLOps track immediately after your DOCP certification.

- DataOpsSchool: This is a niche portal dedicated entirely to the DataOps domain. It serves as a concentrated resource center for DOCP, offering deep-dives into specific tools like dbt, Airflow, and Snowflake. It’s perfect for engineers who want a focused, no-distractions environment centered solely on data orchestration and management.

- FinOpsSchool: As data storage and processing costs skyrocket in the cloud, FinOpsSchool provides DOCP students with the “Cloud Economics” perspective. They offer modules on how to monitor and optimize the costs associated with massive data pipelines, ensuring that your automated workflows remain profitable for the business.
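An uptime target such as the 99.9% figure mentioned for SRESchool is easier to plan around once it is converted into an error budget: the amount of downtime the target still allows over a window. The sketch below does that arithmetic, assuming a 30-day window; the function names and the sample 30 minutes of downtime are hypothetical.

```python
def error_budget_minutes(slo: float, window_days: int = 30) -> float:
    """Minutes of downtime allowed in the window while still meeting the SLO."""
    if not 0 < slo < 1:
        raise ValueError("slo must be a fraction between 0 and 1, e.g. 0.999")
    window_minutes = window_days * 24 * 60
    return window_minutes * (1 - slo)


def budget_remaining(slo: float, downtime_minutes: float, window_days: int = 30) -> float:
    """Fraction of the error budget still unspent (negative means the SLO was missed)."""
    budget = error_budget_minutes(slo, window_days)
    return (budget - downtime_minutes) / budget


if __name__ == "__main__":
    print(round(error_budget_minutes(0.999), 1))    # 43.2 minutes in 30 days at 99.9%
    print(round(budget_remaining(0.999, 30.0), 2))  # 0.31 of the budget left after 30 minutes down
```

The same calculation applies to a “Data SLI” such as the share of pipeline runs that land on time: 99.9% of runs still leaves room for roughly one late run in a thousand.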

Next Certifications to Take

Professional development is a continuous loop. After completing your DOCP, consider these three directions:

- Same Track (Specialization): MLOps Certified Professional (MLOCP). This allows you to handle the data needs of AI/ML teams, which is a highly lucrative niche.

- Cross-Track (Broadening): Site Reliability Engineering (SRE). This gives you the skills to ensure the data platforms you build are highly available and scalable.

- Leadership (Growth): Master in DevOps Engineering (MDE). If you aim to be a CTO or a Head of Engineering, this certification covers the architectural and managerial aspects of the entire “Ops” ecosystem.

FAQs on DataOps Certified Professional (DOCP)

- What is the passing score for the DOCP exam? The exam typically requires a 70% or higher score. It consists of both multiple-choice questions and a practical assessment where you must solve a pipeline issue.

- Does DOCP cover cloud-specific tools? Yes, while the principles are cloud-agnostic, the labs usually utilize AWS or Azure to show how these tools work in a real cloud environment.

- How long is the DOCP certification valid? The certification comes with lifetime validity. However, the technology moves fast, so attending the alumni webinars provided by DevOpsSchool is recommended.

- Can a Fresher (New Graduate) take this course? Yes, but it is highly recommended to take the “DevOps Foundation” or a basic SQL/Python course first to get the most out of the professional-level content.

- Is there a community I can join after the certification? Yes, all DOCP certified professionals get access to a private Discord/Slack community where experts share job openings and technical solutions.

- Does the course include dbt and Airflow? Yes, these are the “core” tools of the DOCP curriculum. You will spend a significant amount of time building projects with them.

- Is this a self-paced or instructor-led course? It is primarily instructor-led (Live Online) to ensure students can ask questions in real-time, but recorded sessions are provided for review.

- How does DataOps help with AI/ML projects? AI is only as good as the data it’s fed. DataOps ensures that the data reaching your ML models is clean, consistent, and timely.

- Are the lab environments provided by the institute? Yes, institutions like DevOpsSchool provide pre-configured cloud labs so you don’t have to worry about your own hardware limitations.

- What kind of jobs can I get after DOCP? Common titles include DataOps Engineer, Senior Data Engineer, Analytics Operations Lead, and Data Platform Architect.

- How does DataOps improve “Time to Value”? By automating testing and deployment, you reduce the time it takes to go from a business request to a live, data-driven insight.

- Is there any refund policy for the training? Most partner institutions have a transparent refund policy before the start of the batch. Check the specific terms on the DevOpsSchool website.
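Of “clean, consistent, and timely,” timeliness is the simplest property to automate. A freshness check compares when a dataset was last updated with an agreed maximum age and can block downstream consumers, such as a model training job, when the data is stale. The sketch below assumes the pipeline records a timezone-aware `last_updated` timestamp; the function name and the six-hour threshold are hypothetical.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional


def is_fresh(last_updated: datetime, max_age: timedelta,
             now: Optional[datetime] = None) -> bool:
    """True if the dataset was updated within `max_age` of `now` (current UTC time by default)."""
    if last_updated.tzinfo is None:
        raise ValueError("last_updated must be timezone-aware")
    if now is None:
        now = datetime.now(timezone.utc)
    return now - last_updated <= max_age


if __name__ == "__main__":
    max_age = timedelta(hours=6)  # hypothetical freshness target for a feature table
    checked_at = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
    print(is_fresh(datetime(2024, 1, 1, 8, 0, tzinfo=timezone.utc), max_age, checked_at))  # True: 4 hours old
    print(is_fresh(datetime(2024, 1, 1, 3, 0, tzinfo=timezone.utc), max_age, checked_at))  # False: 9 hours old
```

Passing `now` explicitly keeps the check deterministic in tests; in production it would default to the current time.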


1. How difficult is the DOCP exam for someone without a coding background? While the DOCP is a professional-level certification, it is designed to be accessible. You don’t need to be a software developer, but you should be comfortable with basic SQL and logic flows. The training program at institutions like DevOpsSchool includes foundational modules to bridge any technical gaps before you hit the advanced orchestration labs.

2. What is the recommended sequence: Should I do DevOps before DataOps? Ideally, yes. DataOps is an evolution of DevOps principles applied to data. Having a “DevOps Foundation” or “DevOps Certified Professional” (DCP) background makes understanding concepts like CI/CD for data, containerization, and automated testing much more intuitive.

3. How much study time should I realistically set aside? For a working professional, I recommend a 30-to-60-day window. At about 2 hours a day, 30 days covers the 60 hours of formal training, and a 50-to-60-day plan also fits an additional 40 hours of hands-on labs. Consistency, at roughly 1.5 to 2 hours a day, is far more effective than “cramming” over a weekend.

4. Are the labs based on real-world production scenarios? Yes. A key requirement of the DOCP is demonstrating you can handle “dirty data” and pipeline failures. The labs mimic production environments where you must automate the recovery of a broken Airflow DAG or fix a data mismatch in a dbt transformation without manual intervention.

5. Does this certification help in transitioning from a DBA to a Data Engineer? Absolutely. In fact, this is one of the most common career paths I see. The DOCP provides the “Ops” skills (automation, orchestration, and cloud-native architecture) that traditional Database Administrators (DBAs) need to remain relevant in the modern, cloud-first data market.

6. What makes DOCP different from a standard “Big Data” certification? Standard big data certifications usually focus on how to use a specific tool (like Hadoop or Snowflake). DOCP focuses on the process. It teaches you how to build a factory that produces data insights reliably, focusing on the collaboration between teams and the automation of the entire supply chain.

7. Is the DOCP certification recognized globally? Yes, it is widely recognized across India, North America, and Europe. Organizations following Agile and Lean methodologies specifically look for “Ops-certified” professionals because they bring a mindset of efficiency and scalability that standard engineers often lack.

8. What is the career outcome in terms of salary and roles? DataOps is currently a “high-scarcity” niche. Professionals with a DOCP certification often see a significant jump in compensation—sometimes 30% to 50%—as they qualify for roles like DataOps Engineer, Senior Data Architect, or Analytics Operations Lead.
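FAQ 4 above mentions automating the recovery of a broken Airflow DAG. The usual first line of defense is automatic retries with growing delays for transient failures; Airflow exposes this through task arguments such as `retries`, `retry_delay`, and `retry_exponential_backoff`. The sketch below reimplements the idea in plain Python rather than using Airflow; the `run_with_retries` helper, the `flaky_extract` task, and the delay values are hypothetical, and `sleep` is injectable so the example runs instantly.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def run_with_retries(task: Callable[[], T], max_attempts: int = 4,
                     base_delay: float = 1.0, max_delay: float = 60.0,
                     sleep: Callable[[float], None] = time.sleep) -> T:
    """Run `task`, retrying on any exception with exponentially growing delays.

    Delays are base_delay, 2*base_delay, 4*base_delay, ... capped at max_delay.
    The last exception is re-raised once all attempts are used, so a
    persistent failure still surfaces to monitoring instead of being hidden.
    """
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return task()
        except Exception:
            if attempt == max_attempts:
                raise
            sleep(min(base_delay * 2 ** (attempt - 1), max_delay))
    raise RuntimeError("unreachable: the loop always returns or raises")


if __name__ == "__main__":
    calls = {"n": 0}

    def flaky_extract() -> str:
        """Hypothetical task that fails twice with a transient error, then succeeds."""
        calls["n"] += 1
        if calls["n"] < 3:
            raise ConnectionError("source temporarily unavailable")
        return "extracted 1,000 rows"

    delays = []
    print(run_with_retries(flaky_extract, sleep=delays.append))  # extracted 1,000 rows
    print(delays)  # [1.0, 2.0]
```

Retries only cover transient faults such as a briefly unavailable source; a genuine data mismatch still needs a failing content check and a code fix.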

Testimonials

“Before DOCP, our data deployments were a nightmare. We had no version control for our SQL transforms. Now, we use dbt and Airflow for everything, and our deployment errors have dropped by 80%.”

— Rajesh K., Lead Data Engineer

“The transition from traditional DBA to DataOps was the best career move I’ve made. The DOCP program gave me the exact tools I needed to stay relevant in a cloud-first world.”

— Anjali S., Cloud Data Architect

Conclusion

Building a data-driven organization is not about finding a “silver bullet” tool; it’s about building a culture of automation and continuous improvement. Having spent 20 years in the industry, I can confidently say that the “DataOps” approach is the only sustainable way to manage the massive volumes of data we face today.

The DataOps Certified Professional (DOCP) is your entry point into this world. It provides the structured learning, the hands-on practice, and the industry recognition needed to lead these transformations. Whether you are an engineer looking to upskill or a manager looking to optimize your team, the time to embrace DataOps is now.