Introduction

LLM Routing & Model Gateway Platforms act as a smart layer between your application and multiple AI models. Instead of hardcoding a single model (like GPT or an open-source LLM), these platforms dynamically route requests to the best model based on cost, latency, task type, or reliability. Think of them as an “AI traffic controller” that optimizes performance while reducing costs and vendor lock-in.

This category has become critical as organizations adopt multi-model strategies, combine proprietary and open-source models, and build agentic workflows that require dynamic decision-making. Without routing, teams either overspend on premium models or risk poor outputs from cheaper ones.
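
To make the routing idea concrete, here is a minimal rules-based sketch. It picks the cheapest model that supports the task type and fits a latency budget. The model names, prices, and latencies are hypothetical placeholders, not figures from any provider, and a real gateway would typically load them from configuration or live metrics.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ModelOption:
    name: str                  # hypothetical model identifier
    cost_per_1k_tokens: float  # assumed price in USD
    avg_latency_ms: float      # assumed typical latency
    good_for: frozenset        # task types this model handles well


# Hypothetical catalog; names and numbers are illustrative only.
CATALOG = [
    ModelOption("small-fast", 0.0005, 300, frozenset({"chat", "classification"})),
    ModelOption("mid-general", 0.003, 800,
                frozenset({"chat", "summarization", "classification"})),
    ModelOption("large-reasoning", 0.03, 2500,
                frozenset({"chat", "summarization", "reasoning", "code"})),
]


def route(task_type: str, max_latency_ms: Optional[float] = None) -> ModelOption:
    """Pick the cheapest model that supports the task and meets the latency budget."""
    candidates = [
        m for m in CATALOG
        if task_type in m.good_for
        and (max_latency_ms is None or m.avg_latency_ms <= max_latency_ms)
    ]
    if not candidates:
        raise LookupError(
            f"no model satisfies task={task_type!r}, max_latency_ms={max_latency_ms}"
        )
    return min(candidates, key=lambda m: m.cost_per_1k_tokens)
```

Calling `route("classification")` selects the cheapest capable model, while `route("summarization", max_latency_ms=1000)` also filters out models that are too slow. Raising an error when nothing qualifies is a deliberate choice in this sketch, so the caller decides whether to relax the budget.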

Common use cases include:

- Cost optimization by routing simple queries to cheaper models

- Latency optimization for real-time applications

- Multi-model experimentation and A/B testing

- Fallback handling when models fail or degrade

- AI agents selecting tools and models dynamically

- Governance enforcement across model usage
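
As an illustration of the A/B testing use case above, one common approach is a deterministic, hash-based traffic split, so the same user always lands on the same model variant. The sketch below assumes string user IDs and hypothetical variant names; it is not taken from any specific platform.

```python
import hashlib


def assign_variant(user_id: str, variants: dict[str, float]) -> str:
    """Deterministically map a user to a model variant according to traffic weights."""
    total = sum(variants.values())
    if total <= 0:
        raise ValueError("weights must sum to a positive number")
    # Hash the user id into a stable number in [0, 1).
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") / 2**64
    cumulative = 0.0
    for name, weight in variants.items():
        cumulative += weight / total
        if bucket < cumulative:
            return name
    return name  # guard against floating-point rounding at the upper edge


# Hypothetical experiment: 90% of users on the current model, 10% on a candidate.
SPLIT = {"current-model": 0.9, "candidate-model": 0.1}
variant = assign_variant("user-42", SPLIT)
```

Because the assignment depends only on the user ID and the weights, no per-user state needs to be stored, and results stay comparable across sessions.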

What to evaluate:

- Model routing logic (rules-based vs AI-driven)

- Multi-model support (OpenAI, Anthropic, open-source, etc.)

- Cost and latency optimization features

- Observability (logs, traces, token usage)

- Guardrails and safety layers

- Evaluation and testing capabilities

- Deployment flexibility (cloud vs self-hosted)

- Security and data handling

- Integration ecosystem

- Vendor lock-in risk
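
When comparing observability and cost features, it helps to know what the underlying accounting looks like. The sketch below aggregates per-model requests, tokens, cost, and average latency from usage records. The record fields and the price table are assumptions for illustration; real platforms expose their own schemas and real price lists differ.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class UsageRecord:
    model: str
    prompt_tokens: int
    completion_tokens: int
    latency_ms: float


# Hypothetical USD prices per 1,000 tokens as (input, output). Not real price lists.
PRICES = {
    "small-fast": (0.0005, 0.0015),
    "large-reasoning": (0.01, 0.03),
}


def record_cost(rec: UsageRecord) -> float:
    """Cost of one request, computed from its token counts."""
    in_price, out_price = PRICES[rec.model]
    return rec.prompt_tokens / 1000 * in_price + rec.completion_tokens / 1000 * out_price


def summarize(records):
    """Aggregate requests, tokens, cost, and mean latency per model."""
    totals = defaultdict(
        lambda: {"requests": 0, "tokens": 0, "cost_usd": 0.0, "latency_sum": 0.0}
    )
    for rec in records:
        t = totals[rec.model]
        t["requests"] += 1
        t["tokens"] += rec.prompt_tokens + rec.completion_tokens
        t["cost_usd"] += record_cost(rec)
        t["latency_sum"] += rec.latency_ms
    return {
        model: {
            "requests": t["requests"],
            "tokens": t["tokens"],
            "cost_usd": round(t["cost_usd"], 6),
            "avg_latency_ms": t["latency_sum"] / t["requests"],
        }
        for model, t in totals.items()
    }
```

A gateway that reports numbers like these per model, per team, or per API key makes it much easier to verify whether routing is actually saving money.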

Best for: AI engineers, platform teams, CTOs, and enterprises building multi-model or agent-based systems at scale.

Not ideal for: Small projects using a single model with low traffic, where routing adds unnecessary complexity.

What’s Changed in LLM Routing & Model Gateway Platforms

- Shift from static APIs to dynamic multi-model routing engines

- Rise of agentic orchestration, where agents select models per task

- Built-in cost optimization policies (auto-switch to cheaper models)

- Latency-aware routing for real-time applications

- Integration with evaluation pipelines for model selection

- Stronger guardrails and prompt filtering layers

- BYO model support including private/self-hosted LLMs

- Improved observability dashboards (token usage, errors, traces)

- Enterprise focus on data residency and privacy controls

- Emergence of fallback and retry strategies for reliability

- Growth of multi-modal routing (text, image, audio models)

- Increasing demand for vendor-neutral abstraction layers
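
The fallback and retry strategies mentioned above usually follow a simple pattern: retry the current model a few times with exponential backoff, then move to the next model in a priority list. Here is a minimal sketch of that pattern. `call_model` is a hypothetical callable standing in for whatever client you use, and the retry counts and delays are arbitrary defaults.

```python
import time
from typing import Callable, Sequence


class AllModelsFailed(RuntimeError):
    """Raised when every model in the chain has exhausted its retries."""


def call_with_fallback(
    prompt: str,
    models: Sequence[str],
    call_model: Callable[[str, str], str],  # hypothetical: (model, prompt) -> text
    retries_per_model: int = 2,
    base_delay_s: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
):
    """Try each model in order; retry with exponential backoff before falling back."""
    errors = []
    for model in models:
        for attempt in range(retries_per_model):
            try:
                return model, call_model(model, prompt)
            except Exception as exc:  # a real gateway would catch narrower error types
                errors.append((model, attempt, repr(exc)))
                # Back off only if this model will be retried again.
                if attempt < retries_per_model - 1:
                    sleep(base_delay_s * 2 ** attempt)
    raise AllModelsFailed(errors)
```

The function returns both the model that answered and the response, which is useful for logging how often traffic lands on a fallback. Passing `sleep` as a parameter keeps the sketch easy to test without real delays.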

Quick Buyer Checklist (Scan-Friendly)

- Supports multiple providers (OpenAI, Anthropic, open-source)

- Offers intelligent routing (rules or AI-based)

- Provides cost tracking and optimization controls

- Includes latency monitoring and routing decisions

- Has built-in guardrails and prompt filtering

- Supports evaluation and A/B testing

- Offers strong observability (logs, traces, metrics)

- Allows BYO/self-hosted models

- Includes admin controls and audit logs

- Enables fallback and retry mechanisms

- Avoids vendor lock-in via abstraction layer

- Integrates with your existing stack (APIs, SDKs)
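
For the latency item on the checklist, it can help to picture what "latency monitoring and routing decisions" means in practice. One simple approach, sketched below, keeps a rolling window of observed latencies per model and routes to the one with the lowest median. The class and its window size are illustrative assumptions, not the mechanism of any listed platform.

```python
from collections import deque
from statistics import median


class LatencyTracker:
    """Keeps a rolling window of latencies per model and picks the fastest."""

    def __init__(self, window: int = 50):
        self._window = window
        self._samples = {}

    def observe(self, model: str, latency_ms: float) -> None:
        """Record one measured latency; old samples drop out of the window."""
        self._samples.setdefault(model, deque(maxlen=self._window)).append(latency_ms)

    def fastest(self, candidates):
        """Return the candidate with the lowest median latency.

        Models without any measurements are returned first so they get sampled.
        """
        for model in candidates:
            if not self._samples.get(model):
                return model
        return min(candidates, key=lambda m: median(self._samples[m]))
```

Using the median rather than the mean keeps a single slow outlier from pushing a model out of rotation, and the bounded window lets a model recover once its latency improves.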

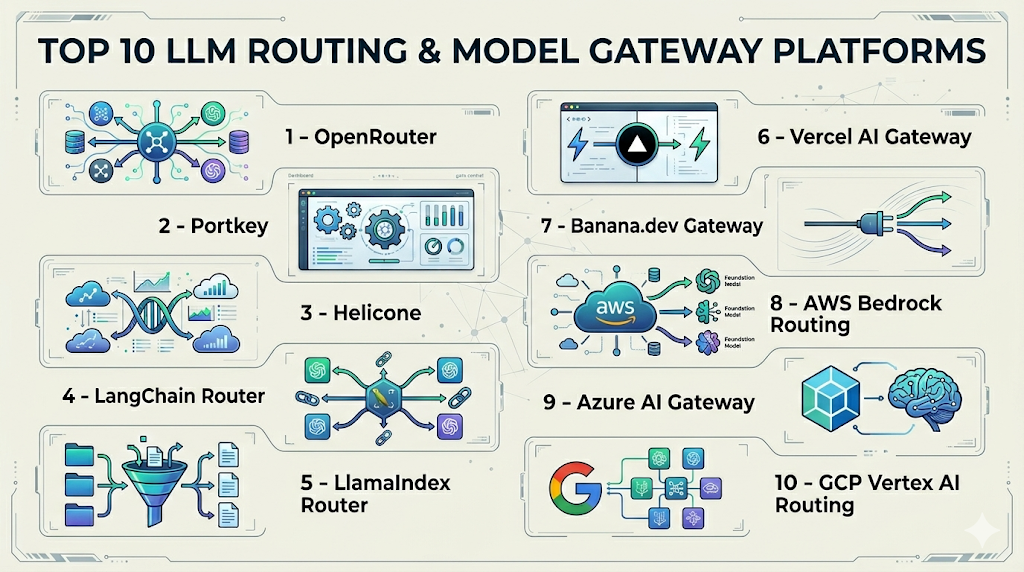

Top 10 LLM Routing & Model Gateway Platforms

1 — OpenRouter

One-line verdict: Best for developers needing simple multi-model routing with broad provider coverage.

Short description:

OpenRouter provides a unified API to access multiple LLM providers with automatic routing and fallback capabilities. It’s popular among developers who want flexibility without building infrastructure.

Standout Capabilities

- Unified API across many model providers

- Automatic fallback when models fail

- Cost-aware routing options

- Supports both proprietary and open models

- Easy integration with existing apps

- Minimal setup required
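
As a rough sketch of what the unified API looks like, the snippet below builds (but does not send) a chat completion request. It assumes OpenRouter's OpenAI-compatible endpoint at `https://openrouter.ai/api/v1/chat/completions` and a `models` field listing fallbacks; both reflect my understanding of the public docs and should be checked against the current API reference. The model slugs are placeholders, not real identifiers.

```python
import json
import urllib.request

# Assumed endpoint; verify against OpenRouter's current API reference.
OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"


def build_request(api_key: str, prompt: str, primary: str, fallbacks=()):
    """Build a chat completion request without sending it."""
    body = {
        "model": primary,
        "messages": [{"role": "user", "content": prompt}],
    }
    if fallbacks:
        # Assumption: fallback models are passed as an ordered `models` list.
        body["models"] = list(fallbacks)
    return urllib.request.Request(
        OPENROUTER_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Placeholder slugs; real ones come from the provider's model list.
req = build_request(
    "YOUR_API_KEY",
    "Summarize this ticket in one sentence.",
    primary="provider-a/small-model",
    fallbacks=["provider-b/small-model"],
)
```

Sending the request is a single `urllib.request.urlopen(req)` call once a real key and real model slugs are in place.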

AI-Specific Depth

- Model support: Multi-model / BYO

- RAG / knowledge integration: N/A

- Evaluation: Basic routing metrics

- Guardrails: Limited / N/A

- Observability: Basic usage tracking

Pros

- Very easy to adopt

- Wide model compatibility

- Reduces vendor lock-in

Cons

- Limited enterprise features

- Basic observability

- Not ideal for complex routing logic

Security & Compliance

Not publicly stated

Deployment & Platforms

- Web / API

- Cloud

Integrations & Ecosystem

Simple API-based integration with developer tools and frameworks.

- REST APIs

- SDK support

- Compatible with LangChain

- Works with agent frameworks

Pricing Model

Usage-based

Best-Fit Scenarios

- Multi-model experimentation

- Startup AI apps

- Rapid prototyping

2 — Portkey

One-line verdict: Best for teams needing observability, governance, and advanced routing in production environments.

Short description:

Portkey acts as a full LLM gateway with routing, logging, caching, and governance features designed for production-grade applications.

Standout Capabilities

- Advanced routing rules and policies

- Centralized observability dashboard

- Request caching and retries

- Multi-provider support

- Governance and access control

- Latency and cost optimization
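
Request caching is one of the easier gateway features to reason about. The sketch below shows a generic exact-match cache with a time-to-live, keyed on a hash of the model name and messages. It is not Portkey's implementation or API; `call_model` is a hypothetical callable, and the TTL is an arbitrary default.

```python
import hashlib
import json
import time


class ResponseCache:
    """Exact-match response cache with a time-to-live, keyed by model and messages."""

    def __init__(self, ttl_s: float = 300.0, clock=time.monotonic):
        self._ttl_s = ttl_s
        self._clock = clock
        self._store = {}

    @staticmethod
    def _key(model, messages) -> str:
        # Canonical JSON so that equal requests always hash to the same key.
        canonical = json.dumps({"model": model, "messages": messages}, sort_keys=True)
        return hashlib.sha256(canonical.encode("utf-8")).hexdigest()

    def get_or_call(self, model, messages, call_model):
        """Return (response, was_cache_hit); call the model only on a miss or expiry."""
        key = self._key(model, messages)
        now = self._clock()
        hit = self._store.get(key)
        if hit is not None and now - hit[0] < self._ttl_s:
            return hit[1], True
        response = call_model(model, messages)
        self._store[key] = (now, response)
        return response, False
```

Exact-match caching only helps when identical requests repeat, such as FAQ-style prompts. Returning the hit flag alongside the response makes it simple to report cache hit rates in a dashboard.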

AI-Specific Depth

- Model support: Multi-model / BYO

- RAG / knowledge integration: N/A

- Evaluation: Basic monitoring + integrations

- Guardrails: Policy-based controls

- Observability: Strong tracing + metrics

Pros

- Production-ready features

- Strong observability

- Flexible routing policies

Cons

- Setup complexity

- Learning curve

- Pricing not transparent

Security & Compliance

SSO, RBAC, encryption (certifications: Not publicly stated)

Deployment & Platforms

- Web / API

- Cloud / Hybrid

Integrations & Ecosystem

Designed to integrate into modern AI stacks.

- APIs and SDKs

- Observability tools

- Logging systems

- Agent frameworks

Pricing Model

Usage-based / enterprise tiers

Best-Fit Scenarios

- Production AI systems

- Enterprise deployments

- Multi-team environments

3 — Helicone

One-line verdict: Best for lightweight routing with strong observability and cost tracking.

Short description:

Helicone provides a proxy layer for LLM APIs, enabling logging, routing, and monitoring with minimal setup.

Standout Capabilities

- Proxy-based integration

- Real-time request tracking

- Cost monitoring dashboard

- Multi-provider support

- Easy setup
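
Proxy-based integration generally means pointing your existing client at the proxy's URL and adding an auth header, rather than changing application code. The sketch below shows that idea generically. The `ClientConfig` type, the proxy URL, and the header names are all hypothetical and are not Helicone's actual values; check the vendor's docs for the real ones.

```python
from dataclasses import dataclass, field, replace


@dataclass(frozen=True)
class ClientConfig:
    base_url: str
    headers: dict = field(default_factory=dict)


def via_proxy(cfg: ClientConfig, proxy_base_url: str, proxy_api_key: str) -> ClientConfig:
    """Return a copy of cfg that sends traffic through a logging proxy."""
    headers = {
        **cfg.headers,
        # Hypothetical header names, used here for illustration only.
        "X-Proxy-Auth": f"Bearer {proxy_api_key}",
        "X-Upstream-Base-Url": cfg.base_url,
    }
    return replace(cfg, base_url=proxy_base_url, headers=headers)


direct = ClientConfig(
    base_url="https://api.provider.example/v1",
    headers={"Authorization": "Bearer PROVIDER_KEY"},
)
proxied = via_proxy(direct, "https://proxy.example.com/v1", "PROXY_KEY")
```

Because only the configuration changes, removing the proxy later is just a matter of reverting to the direct config.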

AI-Specific Depth

- Model support: Multi-model

- RAG / knowledge integration: N/A

- Evaluation: Limited

- Guardrails: Basic

- Observability: Strong

Pros

- Simple integration

- Great visibility

- Fast setup

Cons

- Limited routing logic

- Not enterprise-grade

- Basic evaluation tools

Security & Compliance

Not publicly stated

Deployment & Platforms

- Cloud

Integrations & Ecosystem

Works well with developer tools and APIs.

- REST APIs

- Logging tools

- AI frameworks

- Monitoring tools

Pricing Model

Freemium / usage-based

Best-Fit Scenarios

- Early-stage products

- Monitoring AI usage

- Cost tracking

4 — LangChain Router

One-line verdict: Best for developers building custom routing logic inside agent workflows.

Short description:

LangChain provides routing chains that allow developers to dynamically select models or tools based on input.

Standout Capabilities

- Custom routing logic

- Agent integration

- Prompt-based routing

- Tool selection

- Flexible architecture
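
The core of custom routing logic is a list of condition and handler pairs with a default. The sketch below shows that branching pattern in plain Python. It deliberately does not use LangChain's own classes, so treat it as a picture of the idea rather than the library's API; the handlers are hypothetical stand-ins for real model calls.

```python
from typing import Callable

Handler = Callable[[str], str]
Branch = tuple[Callable[[str], bool], Handler]


def make_router(branches: list[Branch], default: Handler) -> Handler:
    """Build a function that sends input to the first handler whose condition matches."""
    def router(text: str) -> str:
        for condition, handler in branches:
            if condition(text):
                return handler(text)
        return default(text)
    return router


# Hypothetical handlers standing in for calls to different models.
def code_model(text: str) -> str:
    return f"[code-model] {text}"


def long_context_model(text: str) -> str:
    return f"[long-context-model] {text}"


def general_model(text: str) -> str:
    return f"[general-model] {text}"


router = make_router(
    [
        (lambda t: "def " in t or "traceback" in t.lower(), code_model),
        (lambda t: len(t.split()) > 200, long_context_model),
    ],
    default=general_model,
)
```

Order matters in this design: the first matching branch wins, so more specific conditions belong at the top of the list.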

AI-Specific Depth

- Model support: Multi-model / BYO

- RAG / knowledge integration: Strong

- Evaluation: Varies

- Guardrails: Custom

- Observability: Limited (needs add-ons)

Pros

- Highly flexible

- Open-source ecosystem

- Agent-native

Cons

- Requires engineering effort

- Limited built-in observability

- No managed platform

Security & Compliance

Varies / N/A

Deployment & Platforms

- Local / Cloud

Integrations & Ecosystem

Extensive ecosystem for developers.

- Vector DBs

- APIs

- Agent frameworks

- Plugins

Pricing Model

Open-source

Best-Fit Scenarios

- Custom AI agents

- Research projects

- Complex workflows

5 — LlamaIndex Router

One-line verdict: Best for routing queries across multiple data sources and models in RAG systems.

Short description:

LlamaIndex enables intelligent routing across knowledge sources and models, particularly in RAG pipelines.

Standout Capabilities

- Query routing across data sources

- RAG optimization

- Multi-index orchestration

- Model selection logic

- Flexible pipelines
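
Query routing across data sources comes down to scoring each source against the query and picking the best match. The sketch below uses simple keyword overlap with a short description of each source. That is a stand-in for the LLM-based or embedding-based selection a real RAG router would more likely use, and the source names and descriptions are hypothetical.

```python
import re

# Hypothetical sources, each described by a few keywords.
SOURCES = {
    "billing_docs": "invoices payments refunds pricing subscription",
    "api_reference": "endpoints authentication rate limits sdk errors",
    "hr_handbook": "vacation leave benefits onboarding policy",
}


def tokenize(text: str) -> set:
    """Lowercase the text and split it into a set of alphabetic words."""
    return set(re.findall(r"[a-z]+", text.lower()))


def select_source(query: str, sources=SOURCES, fallback: str = "api_reference") -> str:
    """Pick the source whose description shares the most words with the query."""
    query_words = tokenize(query)
    scores = {name: len(query_words & tokenize(desc)) for name, desc in sources.items()}
    best = max(scores, key=scores.get)
    # With no overlap at all, use the fallback rather than an arbitrary source.
    return best if scores[best] > 0 else fallback
```

Once a source is selected, the query is passed to that source's index or retriever, and the retrieved context goes to the model as usual.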

AI-Specific Depth

- Model support: Multi-model / BYO

- RAG / knowledge integration: Strong

- Evaluation: Limited

- Guardrails: Custom

- Observability: Limited

Pros

- Strong RAG support

- Flexible routing

- Developer-friendly

Cons

- Limited enterprise features

- Requires setup

- Basic observability

Security & Compliance

Varies / N/A

Deployment & Platforms

- Local / Cloud

Integrations & Ecosystem

Designed for RAG-heavy systems.

- Vector DBs

- APIs

- LLM providers

- Data connectors

Pricing Model

Open-source

Best-Fit Scenarios

- RAG applications

- Knowledge assistants

- Data-driven AI apps

6 — Vercel AI Gateway

One-line verdict: Best for frontend-heavy applications needing simple routing and performance optimization.

Short description:

Vercel AI Gateway simplifies LLM access with routing, caching, and performance improvements tailored for web apps.

Standout Capabilities

- Built-in caching

- Edge deployment support

- Multi-model routing

- Low-latency optimization

- Developer-friendly APIs

AI-Specific Depth

- Model support: Multi-model

- RAG / knowledge integration: N/A

- Evaluation: Limited

- Guardrails: Basic

- Observability: Moderate

Pros

- Great for frontend apps

- Low latency

- Easy integration

Cons

- Limited advanced routing

- Not enterprise-focused

- Basic evaluation

Security & Compliance

Not publicly stated

Deployment & Platforms

- Cloud / Edge

Integrations & Ecosystem

Works within modern web stacks.

- APIs

- Frontend frameworks

- Serverless environments

- AI SDKs

Pricing Model

Usage-based

Best-Fit Scenarios

- Web apps

- Real-time AI features

- Edge deployments

7 — Banana.dev Gateway

One-line verdict: Best for simple routing with GPU-backed inference infrastructure.

Short description:

Banana.dev offers an inference and routing layer optimized for performance and scalability.

Standout Capabilities

- GPU-backed inference

- API routing

- Scalable infrastructure

- Model deployment support

- Performance optimization

AI-Specific Depth

- Model support: Open-source / BYO

- RAG / knowledge integration: N/A

- Evaluation: Limited

- Guardrails: N/A

- Observability: Basic

Pros

- High performance

- Scalable

- Supports custom models

Cons

- Limited routing intelligence

- Basic features

- Smaller ecosystem

Security & Compliance

Not publicly stated

Deployment & Platforms

- Cloud

Integrations & Ecosystem

Focused on infrastructure-level integration.

- APIs

- ML pipelines

- Deployment tools

- Model hosting

Pricing Model

Usage-based

Best-Fit Scenarios

- Custom model deployment

- High-performance inference

- Backend systems

8 — AWS Bedrock Routing (via APIs)

One-line verdict: Best for enterprises using AWS needing managed multi-model orchestration and governance.

Short description:

Amazon Bedrock, the managed foundation-model service on AWS, provides access to multiple foundation models with built-in routing and enterprise-grade controls.

Standout Capabilities

- Multi-model access

- Enterprise security

- Integration with AWS ecosystem

- Scalable infrastructure

- Managed service

AI-Specific Depth

- Model support: Multi-model

- RAG / knowledge integration: Strong (AWS stack)

- Evaluation: Integrated tools

- Guardrails: Policy-based

- Observability: Strong

Pros

- Enterprise-grade

- Secure

- Highly scalable

Cons

- Vendor lock-in

- Complexity

- Pricing unclear

Security & Compliance

Enterprise-grade (certifications: Not publicly stated)

Deployment & Platforms

- Cloud

Integrations & Ecosystem

Deep integration within AWS ecosystem.

- S3

- Lambda

- SageMaker

- IAM

Pricing Model

Usage-based

Best-Fit Scenarios

- Enterprise AI platforms

- Regulated environments

- AWS-native teams

9 — Azure AI Gateway (via Azure OpenAI)

One-line verdict: Best for Microsoft ecosystem users needing secure and governed model routing.

Short description:

Azure provides routing and access to multiple models with enterprise-grade compliance and integration.

Standout Capabilities

- Enterprise security

- Multi-model access

- Integration with Azure services

- Governance controls

- Scalable infrastructure

AI-Specific Depth

- Model support: Multi-model

- RAG / knowledge integration: Strong

- Evaluation: Integrated

- Guardrails: Strong

- Observability: Strong

Pros

- Strong compliance

- Enterprise-ready

- Integration ecosystem

Cons

- Vendor lock-in

- Complex setup

- Limited cost visibility

Security & Compliance

Enterprise-grade (certifications: Not publicly stated)

Deployment & Platforms

- Cloud

Integrations & Ecosystem

Tightly integrated with Microsoft tools.

- Azure services

- APIs

- Data platforms

- Identity systems

Pricing Model

Usage-based

Best-Fit Scenarios

- Enterprise deployments

- Microsoft ecosystem

- Secure applications

10 — GCP Vertex AI Routing

One-line verdict: Best for data-driven teams leveraging Google Cloud for multi-model orchestration.

Short description:

Vertex AI offers model routing and orchestration with strong integration into data and ML pipelines.

Standout Capabilities

- Model orchestration

- Data integration

- Scalable infrastructure

- Multi-model support

- ML lifecycle tools

AI-Specific Depth

- Model support: Multi-model

- RAG / knowledge integration: Strong

- Evaluation: Integrated

- Guardrails: Policy-based

- Observability: Strong

Pros

- Strong data integration

- Scalable

- Enterprise-ready

Cons

- Complexity

- Vendor lock-in

- Learning curve

Security & Compliance

Enterprise-grade (certifications: Not publicly stated)

Deployment & Platforms

- Cloud

Integrations & Ecosystem

Deep integration with Google Cloud services.

- BigQuery

- APIs

- ML pipelines

- Data tools

Pricing Model

Usage-based

Best-Fit Scenarios

- Data-heavy AI systems

- ML teams

- Enterprise workflows

Comparison Table (Top 10)

| Tool | Best For | Deployment | Model Flexibility | Strength | Watch-Out | Public Rating |
|---|---|---|---|---|---|---|
| OpenRouter | Simple routing | Cloud | Multi-model | Easy API | Limited features | N/A |
| Portkey | Production routing | Cloud/Hybrid | Multi-model | Observability | Complexity | N/A |
| Helicone | Monitoring + routing | Cloud | Multi-model | Visibility | Limited routing | N/A |
| LangChain Router | Custom logic | Local/Cloud | BYO | Flexibility | Dev effort | N/A |
| LlamaIndex | RAG routing | Local/Cloud | BYO | Data routing | Limited enterprise | N/A |
| Vercel AI Gateway | Web apps | Cloud/Edge | Multi-model | Low latency | Basic features | N/A |
| Banana.dev | Infra routing | Cloud | BYO | Performance | Limited ecosystem | N/A |
| AWS Bedrock | Enterprise | Cloud | Multi-model | Security | Lock-in | N/A |
| Azure AI | Enterprise | Cloud | Multi-model | Compliance | Complexity | N/A |
| GCP Vertex AI | Data teams | Cloud | Multi-model | Data integration | Learning curve | N/A |

Scoring & Evaluation (Transparent Rubric)

| Tool | Core | Reliability/Eval | Guardrails | Integrations | Ease | Perf/Cost | Security/Admin | Support | Weighted Total |
|---|---|---|---|---|---|---|---|---|---|
| OpenRouter | 7 | 7 | 6 | 7 | 9 | 9 | 6 | 7 | 7.6 |
| Portkey | 9 | 8 | 8 | 9 | 7 | 8 | 8 | 8 | 8.3 |
| Helicone | 7 | 7 | 6 | 8 | 9 | 9 | 6 | 7 | 7.8 |
| LangChain | 8 | 7 | 6 | 9 | 6 | 7 | 6 | 8 | 7.5 |
| LlamaIndex | 8 | 7 | 6 | 8 | 7 | 7 | 6 | 7 | 7.4 |
| Vercel AI | 7 | 6 | 6 | 8 | 9 | 8 | 6 | 7 | 7.4 |
| Banana.dev | 7 | 6 | 5 | 6 | 7 | 8 | 6 | 6 | 6.8 |
| AWS Bedrock | 9 | 9 | 9 | 9 | 6 | 7 | 9 | 8 | 8.6 |
| Azure AI | 9 | 9 | 9 | 9 | 6 | 7 | 9 | 8 | 8.6 |
| GCP Vertex | 9 | 8 | 8 | 9 | 6 | 7 | 9 | 8 | 8.4 |
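
If you want to re-score the tools for your own priorities, a weighted total is just the sum of each criterion score multiplied by its weight. The sketch below shows the calculation. The weights are hypothetical, since this article does not state the ones behind the table, so results will not necessarily match the Weighted Total column.

```python
# Hypothetical weights (must sum to 1.0); adjust them to your own priorities.
WEIGHTS = {
    "core": 0.25,
    "reliability_eval": 0.15,
    "guardrails": 0.10,
    "integrations": 0.15,
    "ease": 0.10,
    "perf_cost": 0.10,
    "security_admin": 0.10,
    "support": 0.05,
}


def weighted_total(scores: dict, weights: dict = WEIGHTS) -> float:
    """Sum of score * weight over all criteria, rounded to two decimals."""
    if set(scores) != set(weights):
        raise ValueError("scores and weights must cover the same criteria")
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1.0")
    return round(sum(scores[k] * weights[k] for k in weights), 2)


# Criterion scores copied from one row of the table above (Portkey).
portkey = {
    "core": 9, "reliability_eval": 8, "guardrails": 8, "integrations": 9,
    "ease": 7, "perf_cost": 8, "security_admin": 8, "support": 8,
}
total = weighted_total(portkey)
```

A security-focused team might raise the `security_admin` and `guardrails` weights and lower `ease`, which can reorder the ranking noticeably.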

Top 3 for Enterprise

- AWS Bedrock

- Azure AI

- Portkey

Top 3 for SMB

- OpenRouter

- Helicone

- Vercel AI Gateway

Top 3 for Developers

- LangChain Router

- LlamaIndex

- OpenRouter

FAQs

1. What is LLM routing?

It’s a system that selects the best AI model dynamically based on cost, latency, or task type.

2. Why use a model gateway?

To reduce cost, improve performance, and avoid vendor lock-in.

3. Can I use multiple models together?

Yes, most platforms support multi-model orchestration.

4. Does routing reduce cost?

Yes, by sending simple tasks to cheaper models.

5. Is it needed for small apps?

Not always. Single-model apps with low traffic may not benefit, because routing adds complexity.

6. Do these tools support open-source models?

Many platforms support BYO models.

7. Are they secure?

Enterprise platforms offer strong security; others vary.

8. Can I self-host?

Some tools allow it; others are cloud-only.

9. Do they include evaluation?

Some include basic evaluation; others require external tools.

10. What about latency?

Routing can optimize for faster responses.

11. Can I switch providers easily?

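Usually. A gateway's abstraction layer lets you change providers without rewriting application code, although cloud-native options such as AWS Bedrock, Azure AI, and GCP Vertex AI carry their own lock-in.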

12. Are they expensive?

Costs vary based on usage and platform.

Conclusion

LLM routing and gateway platforms are becoming essential as teams move from single-model setups to multi-model AI systems that demand cost efficiency, reliability, and flexibility. The right tool depends on your scale, infrastructure, and need for control: developers may prefer flexible frameworks like LangChain, while enterprises often lean toward managed platforms like AWS or Azure for governance and security.

Instead of chasing a “best” solution, focus on fit with your architecture and operational maturity. Shortlist 2–3 tools, run a pilot with real workloads, and validate performance, cost savings, and observability before rolling out to production.