Introduction

Private LLM Hosting (Air-Gapped) Platforms allow organizations to deploy and operate large language models entirely within isolated environments, without relying on external APIs or internet connectivity. These systems ensure that sensitive data remains fully contained within internal infrastructure, making them essential for environments where data exposure is unacceptable.

This category has gained importance as AI moves from experimentation to mission-critical deployment. Organizations now need complete control over data flow, model behavior, and infrastructure performance. Air-gapped platforms make this possible by combining model hosting, inference serving, and governance inside controlled environments.

Real-world use cases include:

- Running intelligence analysis models inside classified defense networks

- Processing sensitive financial data within internal banking systems

- Analyzing confidential patient records inside hospital infrastructure

- Executing legal document analysis without external exposure

- Deploying AI systems in remote or disconnected industrial environments

Key evaluation criteria include:

- Deployment flexibility (on-prem, hybrid, air-gapped readiness)

- Model compatibility and customization

- Hardware optimization and inference efficiency

- Built-in evaluation and testing capabilities

- Security architecture and isolation controls

- Observability and performance monitoring

- Cost and latency optimization

- Integration with internal systems

- Governance, audit, and compliance support

- Scalability across clusters or edge environments

Best for: Enterprises, government agencies, regulated sectors, and organizations handling highly sensitive or confidential data.

Not ideal for: Teams needing fast iteration, minimal infrastructure overhead, or continuous access to hosted cutting-edge models.

What’s Changed in Private LLM Hosting Platforms

- Rise of offline AI agents capable of executing workflows without external dependencies

- Increased support for multimodal models running locally (text + image + limited audio)

- Adoption of secure internal model routing within air-gapped clusters

- Integration of offline evaluation harnesses for benchmarking and validation

- Stronger focus on prompt injection defense in isolated environments

- Growth in hardware-aware optimizations (quantization, batching, GPU scheduling)

- Expansion of BYO model strategies with fine-tuning pipelines

- Improved observability without external telemetry dependencies

- Emphasis on data sovereignty and strict residency controls

- Integration with zero-trust and enterprise IAM architectures

Quick Buyer Checklist (Scan-Friendly)

- Does the platform support fully air-gapped deployment?

- Can you run open-source and custom models locally?

- Are evaluation and benchmarking tools available offline?

- Does it include guardrails for prompt injection and misuse?

- Can you track latency, token usage, and performance metrics?

- Does it support GPU/CPU optimization and scaling?

- Are audit logs, RBAC, and access controls included?

- Can it integrate with internal data sources and APIs?

- How strong is vendor lock-in risk?

- Is model lifecycle management supported?

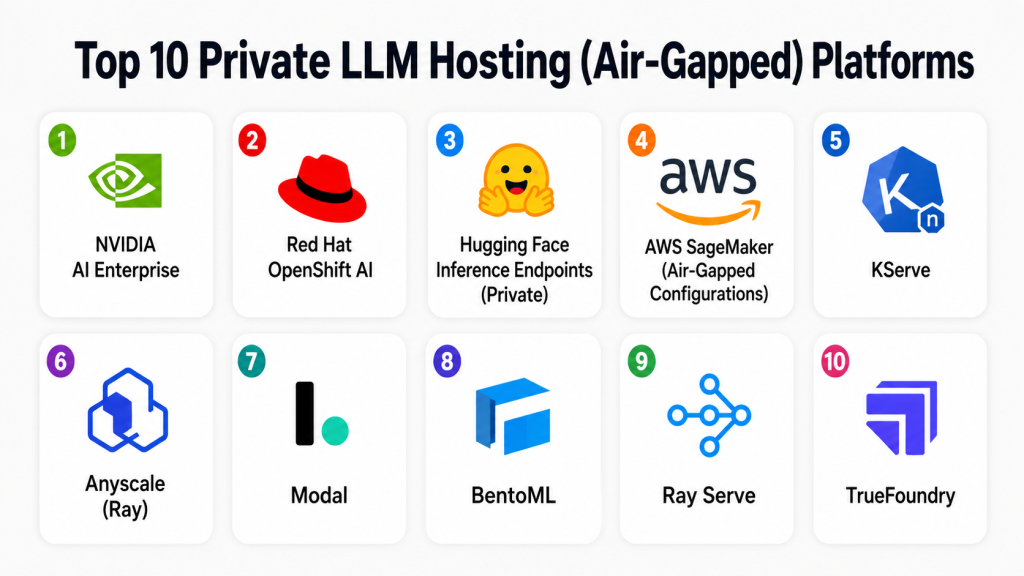

Top 10 Private LLM Hosting (Air-Gapped) Platforms

1 — NVIDIA AI Enterprise

One-line verdict: Best for GPU-optimized, large-scale enterprise AI deployments requiring high performance and secure infrastructure.

Short description:

A full-stack AI platform designed for deploying, optimizing, and managing models on NVIDIA hardware. Commonly used in enterprises and secure environments for high-performance workloads.

Standout Capabilities

- Deep GPU optimization with TensorRT acceleration

- Scalable distributed inference across clusters

- Integrated AI frameworks and pretrained models

- Enterprise lifecycle management tools

- High-throughput, low-latency inference pipelines

- Strong support for containerized deployments

- Hardware-aware performance tuning

AI-Specific Depth

- Model support: Open-source + proprietary + BYO

- RAG / knowledge integration: Supported via ecosystem tools

- Evaluation: Varies / N/A

- Guardrails: Varies / N/A

- Observability: GPU metrics, latency tracking

Pros

- Best-in-class performance optimization

- Mature enterprise ecosystem

- Highly scalable infrastructure

Cons

- Requires NVIDIA hardware

- High cost for smaller teams

- Complex setup

Security & Compliance

SSO, RBAC, encryption supported; certifications: Not publicly stated

Deployment & Platforms

Linux, On-prem, Cloud

Integrations & Ecosystem

Strong integration with enterprise AI stack and infrastructure

- CUDA, TensorRT

- Kubernetes

- ML frameworks

- Data pipelines

Pricing Model

Enterprise licensing; varies by deployment

Best-Fit Scenarios

- High-performance inference workloads

- Secure enterprise AI infrastructure

- GPU-intensive deployments

2 — Red Hat OpenShift AI

One-line verdict: Best for Kubernetes-native secure AI deployments with strong enterprise control and flexibility.

Short description:

A container-based AI platform built on Kubernetes, enabling secure, scalable, and air-gapped deployments across hybrid environments.

Standout Capabilities

- Kubernetes-native AI orchestration

- Hybrid and air-gapped deployment support

- Strong DevOps and CI/CD integration

- Containerized model serving

- Enterprise-grade security controls

- Flexible scaling across clusters

AI-Specific Depth

- Model support: BYO + open-source

- RAG / knowledge integration: Supported via integrations

- Evaluation: Varies / N/A

- Guardrails: Varies / N/A

- Observability: Built-in monitoring tools

Pros

- Highly flexible architecture

- Strong enterprise ecosystem

- Scalable deployments

Cons

- Requires Kubernetes expertise

- Setup complexity

- Operational overhead

Security & Compliance

RBAC, audit logs supported; certifications: Not publicly stated

Deployment & Platforms

Linux, Hybrid, On-prem

Integrations & Ecosystem

- Kubernetes ecosystem

- CI/CD tools

- APIs and SDKs

- Data platforms

Pricing Model

Subscription-based

Best-Fit Scenarios

- Hybrid AI infrastructure

- DevOps-driven teams

- Secure containerized deployments

3 — Hugging Face Inference Endpoints (Private)

One-line verdict: Best for flexible open-source model hosting with private deployment options and strong developer accessibility.

Short description:

Provides infrastructure to deploy open-source models in controlled environments, allowing teams to manage inference privately.

Standout Capabilities

- Large open-source model ecosystem

- Easy deployment workflows

- Support for fine-tuned models

- Flexible infrastructure options

- Developer-friendly APIs

- Strong community ecosystem

AI-Specific Depth

- Model support: Open-source + BYO

- RAG / knowledge integration: Supported

- Evaluation: Limited

- Guardrails: Limited

- Observability: Basic

Pros

- Massive model availability

- Easy to use

- Flexible deployment

Cons

- Limited enterprise controls

- Guardrails not mature

- Observability limited

Security & Compliance

Not publicly stated

Deployment & Platforms

Cloud, Private

Integrations & Ecosystem

- Transformers

- APIs

- ML pipelines

- SDKs

Pricing Model

Usage-based

Best-Fit Scenarios

- Open-source experimentation

- Private model hosting

- Research environments

4 — AWS SageMaker (Air-Gapped Configurations)

One-line verdict: Best for organizations leveraging AWS ecosystem with secure and controlled deployment configurations.

Short description:

A managed ML platform that supports secure, isolated deployments through private networking and controlled environments.

Standout Capabilities

- End-to-end ML lifecycle management

- Scalable infrastructure

- Secure VPC-based isolation

- Built-in monitoring and logging

- Integration with cloud services

- Flexible model deployment

AI-Specific Depth

- Model support: Multi-model

- RAG / knowledge integration: Supported

- Evaluation: Built-in tools

- Guardrails: Varies

- Observability: Strong

Pros

- Mature ecosystem

- Scalable infrastructure

- Strong integrations

Cons

- Vendor lock-in risk

- Complex pricing

- Requires cloud expertise

Security & Compliance

Encryption, IAM supported; certifications: Not publicly stated

Deployment & Platforms

Cloud, Hybrid

Integrations & Ecosystem

- Cloud services

- APIs

- Data lakes

- Pipelines

Pricing Model

Usage-based

Best-Fit Scenarios

- Cloud-integrated AI

- Secure ML pipelines

- Enterprise deployments

5 — KServe

One-line verdict: Best for open-source Kubernetes-based model serving in fully controlled environments.

Short description:

An open-source model serving platform built on Kubernetes, designed for scalable and flexible AI inference.

Standout Capabilities

- Serverless model inference

- Autoscaling capabilities

- Multi-framework support

- Kubernetes-native design

- Flexible deployment pipelines

- Open-source extensibility

AI-Specific Depth

- Model support: Open-source + BYO

- RAG / knowledge integration: Supported via integrations

- Evaluation: N/A

- Guardrails: N/A

- Observability: Metrics via Kubernetes

Pros

- Open-source flexibility

- Highly scalable

- Strong Kubernetes integration

Cons

- Requires DevOps expertise

- Limited built-in guardrails

- Setup complexity

Security & Compliance

Varies / N/A

Deployment & Platforms

Linux, Self-hosted

Integrations & Ecosystem

- Kubernetes

- APIs

- ML frameworks

- Monitoring tools

Pricing Model

Open-source

Best-Fit Scenarios

- Kubernetes-based deployments

- Custom AI infrastructure

- Scalable inference systems

6 — Anyscale (Ray)

One-line verdict: Best for distributed AI workloads and scalable inference pipelines using Ray ecosystem.

Short description:

Built on Ray, Anyscale enables distributed AI workloads with flexible deployment in private environments.

Standout Capabilities

- Distributed computing with Ray

- Scalable inference pipelines

- Flexible deployment

- High-performance task scheduling

- Multi-model orchestration

- Cluster-level optimization

AI-Specific Depth

- Model support: Multi-model + BYO

- RAG / knowledge integration: Supported

- Evaluation: Limited

- Guardrails: Limited

- Observability: Cluster metrics

Pros

- Scalable distributed system

- Flexible architecture

- Strong performance

Cons

- Learning curve

- Requires tuning

- Limited guardrails

Security & Compliance

Not publicly stated

Deployment & Platforms

Hybrid, On-prem

Integrations & Ecosystem

- Ray ecosystem

- APIs

- Data pipelines

- ML frameworks

Pricing Model

Usage-based / enterprise

Best-Fit Scenarios

- Distributed AI workloads

- Large-scale inference

- Custom pipelines

7 — Modal

One-line verdict: Best for lightweight, developer-friendly deployment of models in controlled environments.

Short description:

A platform for deploying models with minimal setup, focusing on developer productivity and simplicity.

Standout Capabilities

- Simple deployment workflows

- Fast iteration cycles

- Lightweight infrastructure

- Scalable execution

- Developer-focused APIs

AI-Specific Depth

- Model support: BYO

- RAG / knowledge integration: N/A

- Evaluation: N/A

- Guardrails: N/A

- Observability: Basic

Pros

- Easy to use

- Fast setup

- Developer-friendly

Cons

- Limited enterprise features

- Basic observability

- Not ideal for large-scale deployments

Security & Compliance

Not publicly stated

Deployment & Platforms

Cloud

Integrations & Ecosystem

- APIs

- SDKs

- Dev tools

Pricing Model

Usage-based

Best-Fit Scenarios

- Rapid prototyping

- Developer workflows

- Lightweight deployments

8 — BentoML

One-line verdict: Best for packaging and deploying models with flexibility in private and hybrid environments.

Short description:

An open-source platform focused on model packaging, deployment, and serving across environments.

Standout Capabilities

- Model packaging tools

- Flexible deployment options

- API-based serving

- Integration with ML workflows

- Open-source extensibility

AI-Specific Depth

- Model support: Open-source + BYO

- RAG / knowledge integration: Supported

- Evaluation: Limited

- Guardrails: Limited

- Observability: Basic

Pros

- Developer-friendly

- Flexible

- Open-source

Cons

- Requires scaling effort

- Limited built-in features

- Manual setup

Security & Compliance

Varies / N/A

Deployment & Platforms

Hybrid, Self-hosted

Integrations & Ecosystem

- APIs

- ML pipelines

- SDKs

- Dev tools

Pricing Model

Open-source

Best-Fit Scenarios

- Model packaging

- Custom deployments

- Hybrid infrastructure

9 — Ray Serve

One-line verdict: Best for scalable, high-performance model serving using distributed infrastructure.

Short description:

A scalable serving layer built on Ray, enabling efficient model deployment and inference.

Standout Capabilities

- Distributed serving

- High throughput

- Flexible routing

- Scalable infrastructure

- Integration with Ray ecosystem

AI-Specific Depth

- Model support: Multi-model

- RAG / knowledge integration: Supported

- Evaluation: Limited

- Guardrails: Limited

- Observability: Metrics

Pros

- High performance

- Scalable

- Flexible

Cons

- Complexity

- Requires expertise

- Limited guardrails

Security & Compliance

Not publicly stated

Deployment & Platforms

Hybrid

Integrations & Ecosystem

- Ray

- APIs

- ML frameworks

Pricing Model

Open-source

Best-Fit Scenarios

- High-performance serving

- Distributed systems

- Scalable AI

10 — TrueFoundry

One-line verdict: Best for simplifying AI deployment with platform abstraction and enterprise-ready workflows.

Short description:

A platform that abstracts infrastructure complexity and simplifies model deployment across environments.

Standout Capabilities

- Platform abstraction

- Easy deployment workflows

- Multi-model support

- Integrated pipelines

- Enterprise-friendly UI

AI-Specific Depth

- Model support: Multi-model + BYO

- RAG / knowledge integration: Supported

- Evaluation: Limited

- Guardrails: Limited

- Observability: Basic

Pros

- Easy to use

- Reduces complexity

- Flexible

Cons

- Platform maturity evolving

- Limited deep controls

- Less customizable

Security & Compliance

Not publicly stated

Deployment & Platforms

Hybrid

Integrations & Ecosystem

- APIs

- Pipelines

- Dev tools

Pricing Model

Subscription / enterprise

Best-Fit Scenarios

- Simplified deployments

- SMB to mid-market

- Platform abstraction

Comparison Table (Top 10)

| Tool Name | Best For | Deployment | Model Flexibility | Strength | Watch-Out | Public Rating |

|---|---|---|---|---|---|---|

| NVIDIA AI Enterprise | Enterprise GPU workloads | On-prem/Cloud | Multi-model | Performance | Hardware dependency | N/A |

| OpenShift AI | Kubernetes deployments | Hybrid | BYO | Flexibility | Complexity | N/A |

| Hugging Face | Open-source hosting | Cloud/Private | Open-source | Ecosystem | Guardrails limited | N/A |

| SageMaker | Cloud AI pipelines | Hybrid | Multi-model | Integration | Lock-in | N/A |

| KServe | Kubernetes inference | Self-hosted | Open-source | Scalability | Setup complexity | N/A |

| Anyscale | Distributed workloads | Hybrid | Multi-model | Performance | Learning curve | N/A |

| Modal | Lightweight deployment | Cloud | BYO | Simplicity | Limited enterprise features | N/A |

| BentoML | Model packaging | Hybrid | Open-source | Flexibility | Scaling effort | N/A |

| Ray Serve | Scalable serving | Hybrid | Multi-model | Throughput | Complexity | N/A |

| TrueFoundry | Platform abstraction | Hybrid | Multi-model | Ease of use | Maturity | N/A |

Scoring & Evaluation (Transparent Rubric)

Scoring is comparative, not absolute, and reflects how well each platform performs across real deployment scenarios. Scores consider both technical depth and operational practicality.

| Tool | Core | Reliability/Eval | Guardrails | Integrations | Ease | Perf/Cost | Security/Admin | Support | Weighted Total |

|---|---|---|---|---|---|---|---|---|---|

| NVIDIA AI Enterprise | 9 | 8 | 7 | 9 | 7 | 9 | 9 | 8 | 8.4 |

| OpenShift AI | 8 | 8 | 7 | 9 | 6 | 8 | 9 | 8 | 8.0 |

| Hugging Face | 7 | 7 | 5 | 8 | 9 | 7 | 6 | 8 | 7.2 |

| SageMaker | 9 | 8 | 7 | 9 | 7 | 8 | 9 | 8 | 8.3 |

| KServe | 8 | 7 | 6 | 8 | 6 | 8 | 8 | 7 | 7.6 |

| Anyscale | 8 | 8 | 6 | 8 | 7 | 8 | 7 | 7 | 7.7 |

| Modal | 7 | 7 | 5 | 7 | 9 | 7 | 6 | 6 | 7.0 |

| BentoML | 8 | 7 | 6 | 8 | 8 | 7 | 7 | 7 | 7.5 |

| Ray Serve | 8 | 8 | 6 | 8 | 6 | 9 | 7 | 7 | 7.8 |

| TrueFoundry | 8 | 7 | 6 | 8 | 8 | 7 | 7 | 7 | 7.6 |

Top 3 for Enterprise: NVIDIA AI Enterprise, SageMaker, OpenShift AI

Top 3 for SMB: TrueFoundry, BentoML, Hugging Face

Top 3 for Developers: Ray Serve, Modal, KServe

Which Private LLM Hosting Tool Is Right for You?

Solo / Freelancer

Choose lightweight tools like BentoML or Modal for simplicity and lower setup overhead.

SMB

Use TrueFoundry or Hugging Face for flexibility without heavy infrastructure complexity.

Mid-Market

Adopt OpenShift AI or Anyscale for balanced scalability and control.

Enterprise

NVIDIA AI Enterprise and SageMaker provide performance, security, and ecosystem maturity.

Regulated industries (finance/healthcare/public sector)

Prioritize fully air-gapped deployments with strict access control and audit logging.

Budget vs premium

Open-source reduces cost but increases operational burden; enterprise tools simplify management.

Build vs buy (when to DIY)

Build if you need deep customization; buy if speed and reliability matter more.

Implementation Playbook (30 / 60 / 90 Days)

30 Days

- Define use cases and success metrics

- Run pilot deployments

- Establish evaluation benchmarks

60 Days

- Add guardrails and monitoring

- Expand usage

- Conduct testing and validation

90 Days

- Optimize performance and cost

- Scale deployments

- Implement governance policies

Common Mistakes & How to Avoid Them

- Ignoring prompt injection risks

- Skipping evaluation pipelines

- Poor data isolation

- Lack of observability

- Unexpected infrastructure costs

- Over-automation without review

- Vendor lock-in

- Weak access control

- No audit logs

- Poor model versioning

- Inadequate testing

- Ignoring latency optimization

FAQs

1. What is an air-gapped AI platform?

A system that runs completely isolated from external networks to ensure maximum security.

2. Can LLMs run fully offline?

Yes, using local infrastructure and open-source models.

3. Are these platforms secure?

They are highly secure if configured correctly.

4. What models can be used?

Primarily open-source or licensed models.

5. Do they support evaluation?

Some do; others require external tools.

6. Is cloud required?

No, but hybrid setups are common.

7. How is performance optimized?

Through hardware tuning and efficient inference pipelines.

8. Are guardrails included?

Often limited; additional layers may be needed.

9. What about cost?

Depends on infrastructure and scale.

10. Can I switch platforms easily?

Depends on architecture and abstraction.

11. What skills are needed?

ML, DevOps, and infrastructure expertise.

12. Are they suitable for startups?

Generally not due to complexity and cost.

Conclusion

Private LLM Hosting (Air-Gapped) Platforms are essential for organizations that prioritize complete control over data, security, and AI behavior. While they introduce operational complexity, they unlock the ability to run advanced AI systems in highly sensitive and regulated environments without external dependencies. The right choice depends on your infrastructure maturity, performance needs, and security requirements. Start by shortlisting platforms aligned with your environment, validate them through controlled pilots with real workloads, ensure evaluation and guardrails are properly implemented, and then scale with strong