Introduction

Model compression toolkits are platforms and frameworks designed to reduce the size, complexity, and computational requirements of AI models while preserving as much performance as possible. In simple terms, they make large AI models smaller, faster, and more efficient to deploy in real-world environments.

Compression typically combines techniques such as pruning (removing unnecessary parameters), quantization (reducing numerical precision), and distillation (training smaller models to mimic larger ones). As AI systems scale, especially large language models (LLMs), multimodal systems, and agent-based workflows, compression has become essential for managing cost, latency, and infrastructure demands.
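To make two of these techniques concrete, here is a minimal sketch of magnitude pruning followed by symmetric int8 quantization on a plain list of weights. The function names and sample weights are illustrative assumptions, not taken from any toolkit covered here; real toolkits operate on tensors and typically add calibration data and per-channel scales.

```python
def magnitude_prune(weights, sparsity):
    """Zero out the smallest-magnitude fraction of weights (unstructured pruning)."""
    k = int(len(weights) * sparsity)  # number of weights to remove
    if k == 0:
        return list(weights)
    # Indices of the k weights with the smallest absolute value.
    drop = set(sorted(range(len(weights)), key=lambda i: abs(weights[i]))[:k])
    return [0.0 if i in drop else w for i, w in enumerate(weights)]


def quantize_int8(weights):
    """Symmetric per-tensor quantization: w is approximated by scale * q, q in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Map integer codes back to approximate float weights."""
    return [v * scale for v in q]


# Illustrative weights only.
weights = [0.82, -0.05, 0.33, -1.27, 0.01, 0.64, -0.48, 0.09]
pruned = magnitude_prune(weights, sparsity=0.25)  # removes the 2 smallest weights
q, scale = quantize_int8(pruned)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(pruned, restored))
```

Each stored weight shrinks from a 32-bit float to an 8-bit integer, and the round-trip error per weight stays within half a quantization step (`scale / 2`).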

Real-world use cases include:

- Deploying AI models on mobile, edge, or IoT devices

- Reducing cloud inference costs for large-scale AI applications

- Improving response time in real-time AI systems

- Enabling offline AI capabilities in low-resource environments

- Optimizing AI agents for continuous execution

- Scaling AI systems without a matching rise in infrastructure costs

What to evaluate:

- Supported compression techniques (pruning, quantization, distillation)

- Compatibility with LLMs and multimodal models

- Accuracy vs compression trade-offs

- Hardware optimization (CPU, GPU, edge devices)

- Integration with training and inference pipelines

- Evaluation and benchmarking tools

- Observability (latency, throughput, cost metrics)

- Deployment flexibility (cloud, edge, hybrid)

- Ease of implementation and automation

- Security and data handling

- Support for bring-your-own (BYO) models

- Ecosystem and community maturity
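The accuracy vs compression criterion is easier to compare across tools when it is reduced to a few numbers. The sketch below is one possible way to do that; `ModelStats`, `tradeoff_report`, the 0.01 accuracy budget, and the sample figures are all hypothetical and chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class ModelStats:
    size_mb: float      # serialized model size
    latency_ms: float   # median latency on the target hardware
    accuracy: float     # task metric in [0, 1] on a fixed evaluation set


def tradeoff_report(baseline, compressed, max_accuracy_drop=0.01):
    """Summarize what compression gained and what it cost."""
    drop = baseline.accuracy - compressed.accuracy
    return {
        "compression_ratio": baseline.size_mb / compressed.size_mb,
        "speedup": baseline.latency_ms / compressed.latency_ms,
        "accuracy_drop": drop,
        "within_budget": drop <= max_accuracy_drop,
    }


# Made-up measurements, for illustration only.
baseline = ModelStats(size_mb=440.0, latency_ms=48.0, accuracy=0.912)
compressed = ModelStats(size_mb=110.0, latency_ms=20.0, accuracy=0.905)
report = tradeoff_report(baseline, compressed)
```

Running the same report for every candidate toolkit, on the same evaluation set and hardware, keeps the comparison fair.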

Best for: AI engineers, ML teams, and organizations deploying models at scale where performance efficiency, cost control, and latency optimization are critical.

Not ideal for: Teams running small-scale experiments or use cases where maximum accuracy is required and infrastructure cost is not a concern.

What’s Changed in Model Compression Toolkits

- Increased adoption of combined compression pipelines (quantization + pruning + distillation)

- Strong focus on LLM compression for production AI agents

- Support for multimodal model compression (text, image, audio)

- Integration with real-time inference and streaming applications

- Built-in evaluation tools for accuracy vs efficiency trade-offs

- Growing emphasis on hardware-aware optimization (GPU, CPU, edge chips)

- Improved automation of compression workflows in MLOps pipelines

- Enhanced observability (latency, cost, throughput metrics)

- Integration with model routing and dynamic inference systems

- Better alignment with privacy and data governance requirements

- Support for edge and on-device AI deployments

- Use of synthetic data for compression and optimization workflows
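Of the techniques combined in these pipelines, distillation is the least obvious from its name alone. A common formulation trains the student to match the teacher's temperature-softened output distribution. The sketch below shows that loss in plain Python, assuming the widely used KL-divergence form scaled by the squared temperature; the function names and logits are illustrative.

```python
import math


def softmax(logits, temperature=1.0):
    """Numerically stable softmax with temperature scaling."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits, teacher_logits, temperature=2.0):
    """KL(teacher || student) on softened distributions, scaled by T^2."""
    p = softmax(teacher_logits, temperature)  # soft targets from the teacher
    q = softmax(student_logits, temperature)  # student predictions
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return kl * temperature ** 2


teacher = [4.0, 1.0, 0.2]
close_student = [3.5, 1.2, 0.3]  # roughly agrees with the teacher
far_student = [0.5, 2.0, 1.0]    # ranks the classes differently
loss_close = distillation_loss(close_student, teacher)
loss_far = distillation_loss(far_student, teacher)
```

In practice this term is usually mixed with the ordinary task loss on labeled data, and the temperature is a tuning choice.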

Quick Buyer Checklist (Scan-Friendly)

- Does it support multiple compression techniques (pruning, quantization, distillation)?

- Can you use your own models (BYO support)?

- Are evaluation tools available to measure accuracy loss?

- Does it provide hardware-specific optimization?

- Are latency and cost metrics visible?

- Does it integrate with RAG pipelines or vector databases?

- Are guardrails preserved after compression?

- Are data privacy and retention policies clearly defined?

- Does it support edge deployment?

- Can workflows be automated end-to-end?

- Are there APIs and SDKs for integration?

- What is the vendor lock-in risk?

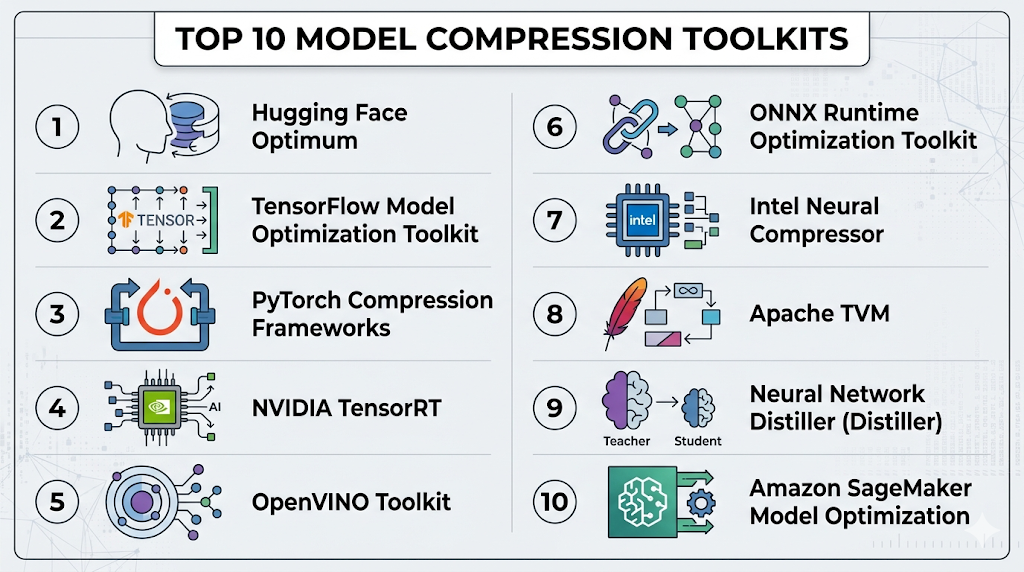

Top 10 Model Compression Toolkits

1 — Hugging Face Optimum

One-line verdict: Best for flexible, multi-technique compression across transformer models and diverse hardware backends.

Short description:

A toolkit within the Hugging Face ecosystem that supports quantization, pruning, and optimization workflows for transformer-based models.

Standout Capabilities

- Multi-technique compression support

- Hardware-aware optimization

- Integration with Transformers

- Multi-backend support (ONNX, TensorRT)

- Easy deployment workflows

- Strong developer ecosystem

AI-Specific Depth

- Model support: Open-source + BYO + multi-model

- RAG / knowledge integration: Compatible

- Evaluation: External tools required

- Guardrails: N/A

- Observability: Limited

Pros

- Flexible and extensible

- Strong ecosystem

- Multi-hardware support

Cons

- Requires expertise

- Limited built-in evaluation

- No native UI

Deployment & Platforms

Linux, macOS; Cloud + self-hosted

Integrations & Ecosystem

- Transformers

- ONNX

- TensorRT

- Accelerate

Pricing Model

Open-source

Best-Fit Scenarios

- LLM optimization

- Multi-hardware deployment

- Custom pipelines

2 — TensorFlow Model Optimization Toolkit

One-line verdict: Best for TensorFlow users needing integrated pruning, quantization, and compression workflows.

Short description:

A toolkit offering pruning, quantization, and clustering for TensorFlow models.

Standout Capabilities

- Multiple compression techniques

- TensorFlow integration

- Production-ready tools

- Performance tuning

AI-Specific Depth

- Model support: BYO

- RAG / knowledge integration: N/A

- Evaluation: Metrics

- Guardrails: N/A

- Observability: Basic

Pros

- Strong ecosystem

- Production-ready

- Flexible

Cons

- TensorFlow-only

- Requires expertise

- Limited UI

Deployment & Platforms

Cloud, self-hosted

Integrations & Ecosystem

- TensorFlow

- Keras

- ML pipelines

Pricing Model

Open-source

Best-Fit Scenarios

- TensorFlow pipelines

- Production optimization

- Model compression workflows

3 — PyTorch Compression Frameworks

One-line verdict: Best for developers needing full control over custom compression workflows and experimentation.

Short description:

A set of tools and libraries for implementing compression techniques in PyTorch.

Standout Capabilities

- Custom compression pipelines

- Flexible architectures

- Research-friendly

- Integration with ML workflows

AI-Specific Depth

- Model support: BYO + open-source

- RAG / knowledge integration: Compatible

- Evaluation: External

- Guardrails: N/A

- Observability: Metrics via tools

Pros

- Highly flexible

- Widely used

- Strong community

Cons

- Requires expertise

- No standardization

- Setup effort

Deployment & Platforms

Cloud, self-hosted

Integrations & Ecosystem

- PyTorch

- APIs

- ML frameworks

Pricing Model

Open-source

Best-Fit Scenarios

- Research

- Custom pipelines

- Advanced optimization

4 — NVIDIA TensorRT

One-line verdict: Best for GPU-optimized compression and ultra-low latency inference in production systems.

Short description:

A high-performance inference optimizer supporting quantization and compression for NVIDIA GPUs.

Standout Capabilities

- GPU acceleration

- Low-latency inference

- Model compression

- High throughput

AI-Specific Depth

- Model support: BYO

- RAG / knowledge integration: N/A

- Evaluation: Performance metrics

- Guardrails: N/A

- Observability: Latency and throughput

Pros

- High performance

- Production-ready

- GPU optimization

Cons

- GPU dependency

- Complex setup

- Limited flexibility

Deployment & Platforms

Linux; Cloud

Integrations & Ecosystem

- CUDA

- ML frameworks

- APIs

Pricing Model

Varies / N/A

Best-Fit Scenarios

- Real-time inference

- GPU workloads

- High-performance systems

5 — OpenVINO Toolkit

One-line verdict: Best for edge-focused compression and optimization on Intel hardware.

Short description:

A toolkit for optimizing and compressing models for Intel-based devices.

Standout Capabilities

- Hardware-aware optimization

- Edge deployment support

- Model compression tools

- Performance tuning

AI-Specific Depth

- Model support: BYO

- RAG / knowledge integration: N/A

- Evaluation: Metrics

- Guardrails: N/A

- Observability: Latency tracking

Pros

- Strong edge performance

- Hardware optimization

- Production-ready

Cons

- Hardware-specific

- Setup complexity

- Limited flexibility

Deployment & Platforms

Windows, Linux; Edge + cloud

Integrations & Ecosystem

- Intel ecosystem

- APIs

- ML frameworks

Pricing Model

Open-source

Best-Fit Scenarios

- Edge AI

- Real-time systems

- Hardware optimization

6 — ONNX Runtime Optimization Toolkit

One-line verdict: Best for cross-platform compression and deployment across diverse frameworks.

Short description:

A toolkit enabling model optimization, compression, and deployment across platforms.

Standout Capabilities

- Cross-platform compatibility

- Model optimization

- Performance tuning

- Interoperability

AI-Specific Depth

- Model support: BYO

- RAG / knowledge integration: N/A

- Evaluation: Metrics

- Guardrails: N/A

- Observability: Performance metrics

Pros

- Flexible

- Efficient

- Strong compatibility

Cons

- Requires conversion

- Setup complexity

- Limited UI

Deployment & Platforms

Windows, Linux; Cloud + self-hosted

Integrations & Ecosystem

- ONNX

- APIs

- ML frameworks

Pricing Model

Open-source

Best-Fit Scenarios

- Cross-platform deployment

- Model portability

- Optimization workflows

7 — Intel Neural Compressor

One-line verdict: Best for automated compression workflows combining quantization, pruning, and tuning.

Short description:

A toolkit that automates model optimization and compression.

Standout Capabilities

- Automated workflows

- Multi-technique compression

- Performance tuning

- Ease of use

AI-Specific Depth

- Model support: BYO

- RAG / knowledge integration: N/A

- Evaluation: Built-in metrics

- Guardrails: N/A

- Observability: Performance tracking

Pros

- Easy to use

- Efficient

- Good automation

Cons

- Hardware bias

- Limited customization

- Documentation varies

Deployment & Platforms

Cloud, local

Integrations & Ecosystem

- TensorFlow

- PyTorch

- APIs

Pricing Model

Open-source

Best-Fit Scenarios

- Automated optimization

- CPU workloads

- Cost reduction

8 — Apache TVM

One-line verdict: Best for advanced compiler-level compression and optimization across diverse hardware.

Short description:

An open-source deep learning compiler stack for optimizing models.

Standout Capabilities

- Compiler-level optimization

- Cross-hardware support

- Advanced compression

- Performance tuning

AI-Specific Depth

- Model support: BYO

- RAG / knowledge integration: N/A

- Evaluation: External

- Guardrails: N/A

- Observability: Limited

Pros

- Highly powerful

- Flexible

- Cross-platform

Cons

- Steep learning curve

- Complex setup

- Limited UI

Deployment & Platforms

Cloud, local

Integrations & Ecosystem

- ML frameworks

- APIs

- Compilers

Pricing Model

Open-source

Best-Fit Scenarios

- Advanced optimization

- Research

- Cross-hardware deployment

9 — Neural Network Distiller (Distiller)

One-line verdict: Best for research-focused compression experiments with detailed control over model behavior.

Short description:

A framework for experimenting with compression techniques like pruning and quantization.

Standout Capabilities

- Fine-grained control

- Research tools

- Compression techniques

- Experimentation

AI-Specific Depth

- Model support: BYO

- RAG / knowledge integration: N/A

- Evaluation: Metrics

- Guardrails: N/A

- Observability: Basic

Pros

- Flexible

- Research-friendly

- Detailed control

Cons

- Not production-ready

- Limited ecosystem

- Requires expertise

Deployment & Platforms

Local

Integrations & Ecosystem

- PyTorch

- ML frameworks

Pricing Model

Open-source

Best-Fit Scenarios

- Research

- Experimentation

- Academic use

10 — Amazon SageMaker Model Optimization

One-line verdict: Best for enterprises needing scalable, managed compression workflows within cloud ML pipelines.

Short description:

A cloud platform offering model optimization and compression capabilities within ML pipelines.

Standout Capabilities

- Managed infrastructure

- Scalable workflows

- Integration with ML pipelines

- Automation

AI-Specific Depth

- Model support: Hosted + BYO

- RAG / knowledge integration: Compatible

- Evaluation: Built-in

- Guardrails: Limited

- Observability: Strong

Pros

- Scalable

- Integrated ecosystem

- Managed services

Cons

- Vendor lock-in

- Pricing varies

- Less flexibility

Security & Compliance

Encryption, IAM controls; certifications: Not publicly stated

Deployment & Platforms

Cloud

Integrations & Ecosystem

- ML pipelines

- APIs

- Data services

Pricing Model

Usage-based

Best-Fit Scenarios

- Enterprise AI

- Cloud-native pipelines

- Scalable deployment

Comparison Table (Top 10)

| Tool Name | Best For | Deployment | Model Flexibility | Strength | Watch-Out | Public Rating |
|---|---|---|---|---|---|---|
| Hugging Face Optimum | General use | Hybrid | Multi-model | Ecosystem | Complexity | N/A |
| TensorFlow Toolkit | TF users | Hybrid | BYO | Integration | TF-only | N/A |
| PyTorch Frameworks | Custom workflows | Hybrid | BYO | Flexibility | Setup effort | N/A |
| TensorRT | GPU workloads | Cloud | BYO | Performance | GPU dependency | N/A |
| OpenVINO | Edge AI | Hybrid | BYO | Hardware optimization | Lock-in | N/A |
| ONNX Runtime | Cross-platform | Hybrid | BYO | Interoperability | Conversion needed | N/A |
| Neural Compressor | Automation | Hybrid | BYO | Ease | Limited customization | N/A |
| Apache TVM | Advanced users | Hybrid | BYO | Deep optimization | Complexity | N/A |
| Distiller | Research | Local | BYO | Control | Not production-ready | N/A |
| SageMaker | Enterprise | Cloud | Hosted + BYO | Scalability | Lock-in | N/A |

Scoring & Evaluation (Transparent Rubric)

Scores are comparative: they reflect how the tools perform relative to each other on each criterion, not absolute quality.

| Tool | Core | Reliability/Eval | Guardrails | Integrations | Ease | Perf/Cost | Security/Admin | Support | Weighted Total |
|---|---|---|---|---|---|---|---|---|---|
| Hugging Face Optimum | 9 | 7 | 5 | 9 | 7 | 8 | 7 | 9 | 7.9 |
| TensorFlow Toolkit | 8 | 7 | 5 | 8 | 6 | 8 | 7 | 7 | 7.4 |
| PyTorch | 9 | 7 | 5 | 8 | 6 | 8 | 7 | 8 | 7.7 |
| TensorRT | 8 | 7 | 5 | 7 | 5 | 10 | 7 | 7 | 7.5 |
| OpenVINO | 8 | 7 | 5 | 7 | 6 | 9 | 7 | 7 | 7.5 |
| ONNX Runtime | 8 | 7 | 5 | 9 | 6 | 9 | 7 | 7 | 7.6 |
| Neural Compressor | 7 | 7 | 5 | 7 | 8 | 9 | 6 | 6 | 7.4 |
| Apache TVM | 9 | 7 | 5 | 8 | 5 | 9 | 6 | 6 | 7.4 |
| Distiller | 7 | 6 | 4 | 6 | 6 | 7 | 6 | 6 | 6.5 |
| SageMaker | 8 | 8 | 6 | 9 | 8 | 7 | 8 | 8 | 8.0 |
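If you want to re-rank the tools for your own priorities, a weighted total is simply the sum of each score times its weight. The weights behind the table are not listed here, so the weights in this sketch are hypothetical and are not expected to reproduce the Weighted Total column; swap in values that reflect what matters to your team.

```python
CRITERIA = ["Core", "Reliability/Eval", "Guardrails", "Integrations",
            "Ease", "Perf/Cost", "Security/Admin", "Support"]

# Hypothetical weights, one per criterion, summing to 1.0.
# These are NOT the weights used for the table above.
WEIGHTS = [0.25, 0.15, 0.10, 0.15, 0.10, 0.15, 0.05, 0.05]


def weighted_total(scores, weights=WEIGHTS):
    """Return the weighted sum of per-criterion scores."""
    if len(scores) != len(weights):
        raise ValueError("one score per criterion is required")
    if abs(sum(weights) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1.0")
    return sum(s * w for s, w in zip(scores, weights))


# Per-criterion scores from the Hugging Face Optimum row.
example_scores = [9, 7, 5, 9, 7, 8, 7, 9]
total = weighted_total(example_scores)
```

Raising the weight on Perf/Cost, for example, favors the hardware-specific optimizers, while raising Ease favors the more automated tools.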

Top 3 for Enterprise: SageMaker, TensorRT, Hugging Face Optimum

Top 3 for SMB: Hugging Face Optimum, ONNX Runtime, Neural Compressor

Top 3 for Developers: PyTorch Frameworks, Apache TVM, Hugging Face Optimum

Which Model Compression Toolkit Is Right for You?

Solo / Freelancer

Use Hugging Face Optimum or PyTorch for flexibility and experimentation.

SMB

ONNX Runtime or Neural Compressor offer a balance of simplicity and performance.

Mid-Market

Combine TensorFlow or PyTorch tools with hardware-specific optimizers.

Enterprise

Use SageMaker or TensorRT for scalable, production-ready deployments.

Regulated industries (finance/healthcare/public sector)

Prefer self-hosted or hybrid deployments with strong data control.

Budget vs premium

Open-source tools reduce cost; managed platforms simplify operations.

Build vs buy (when to DIY)

Build for flexibility; buy for speed and scalability.

Implementation Playbook (30 / 60 / 90 Days)

30 Days

- Identify performance bottlenecks

- Select compression strategy

- Run pilot experiments

60 Days

- Evaluate accuracy vs efficiency

- Integrate into pipelines

- Add monitoring

90 Days

- Optimize deployment

- Scale usage

- Implement governance
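For the pilot and monitoring steps above, a small timing harness is often enough to get started. This is a minimal sketch using only the standard library; `benchmark` and `fake_model` are hypothetical names, and `fake_model` stands in for a real inference call.

```python
import statistics
import time


def benchmark(fn, *args, warmup=5, runs=50):
    """Return median and p95 latency in milliseconds for fn(*args)."""
    for _ in range(warmup):  # warm caches before timing
        fn(*args)
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        fn(*args)
        samples.append((time.perf_counter() - start) * 1000.0)
    samples.sort()
    p95_index = min(len(samples) - 1, int(0.95 * len(samples)))
    return {"median_ms": statistics.median(samples), "p95_ms": samples[p95_index]}


def fake_model(n):
    # Placeholder workload standing in for a real model call.
    return sum(i * i for i in range(n))


stats = benchmark(fake_model, 10_000)
```

Run it once against the baseline and once against the compressed model, on the hardware you will deploy to, and compare both the median and the tail.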

Common Mistakes & How to Avoid Them

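- Ignoring accuracy degradation: compare the compressed model with the baseline on a fixed evaluation set before every release

- Over-compressing models: increase compression in steps and stop when accuracy falls below your threshold

- No evaluation framework: define metrics and test sets before you start compressing

- Poor hardware alignment: benchmark on the target hardware, not only on the development machine

- Lack of observability: track latency, throughput, and cost in production

- Cost surprises: estimate inference cost under realistic load before scaling

- Weak testing: test edge cases and rare inputs, not only average-case benchmarks

- Ignoring guardrails: re-run safety evaluations after compression, since model behavior can shift

- Data leakage risks: control which calibration and training data leaves your environment

- Vendor lock-in: prefer portable model formats where possible

- Poor documentation: record the compression settings and results for each model version

- Over-automation: keep a human review step before compressed models reach production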

FAQs

1. What is model compression?

Model compression reduces a model's size and computational requirements while preserving as much performance as possible.

2. What techniques are used?

Pruning, quantization, and distillation are common.

3. Does compression affect accuracy?

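Usually, yes. The loss is often small, but it depends on the model, the technique, and how aggressively it is applied, so measure it on your own evaluation set.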
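One way to see why aggressiveness matters is to quantize the same weights at different bit widths and compare the reconstruction error. The sketch below assumes symmetric uniform quantization and uses made-up weights; it measures error in the weights themselves, which is only a proxy for task accuracy.

```python
def quantization_error(weights, bits):
    """Mean absolute error after symmetric uniform quantization to `bits` bits."""
    levels = 2 ** (bits - 1) - 1  # e.g. 127 for 8 bits, 7 for 4 bits
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / levels
    restored = [round(w / scale) * scale for w in weights]
    return sum(abs(a - b) for a, b in zip(weights, restored)) / len(weights)


# Illustrative weights only.
weights = [0.823, -0.051, 0.337, -1.274, 0.012, 0.646, -0.489, 0.094]
errors = {bits: quantization_error(weights, bits) for bits in (8, 4, 2)}
```

The error grows as the bit width shrinks, which is why lower-precision formats usually need more careful evaluation.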

4. Can I compress any model?

Most tools support BYO models.

5. Is it useful for LLMs?

Yes, especially for deployment optimization.

6. Can compressed models run on edge devices?

Yes, that’s a primary use case.

7. Are evaluation tools included?

Varies by toolkit.

8. What are guardrails?

Safety mechanisms for AI outputs.

9. How does it reduce cost?

By lowering compute and memory requirements.
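The memory side of this is simple arithmetic: the weights take roughly the parameter count times the bytes per parameter. The sketch below works that out for a hypothetical 7-billion-parameter model; it counts weights only and ignores activations, caches, and runtime overhead.

```python
def weight_memory_gb(num_params, bits_per_param):
    """Memory for the weights alone, in GB (10^9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9


params = 7_000_000_000  # hypothetical 7-billion-parameter model
footprint = {
    name: weight_memory_gb(params, bits)
    for name, bits in [("fp32", 32), ("fp16", 16), ("int8", 8), ("int4", 4)]
}
# footprint == {"fp32": 28.0, "fp16": 14.0, "int8": 7.0, "int4": 3.5}
```

Halving the precision halves the weight footprint, which can move a model onto a smaller or cheaper class of hardware.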

10. Can workflows be automated?

Yes, many tools support automation.

11. Is data privacy important?

Yes, especially during training and deployment.

12. What are alternatives?

Scaling up hardware, or choosing a smaller model architecture from the start.

Conclusion

Model compression toolkits make modern AI systems more efficient, scalable, and production-ready by reducing model size, latency, and cost. The right choice depends on your infrastructure, performance requirements, and deployment environment. Shortlist tools that fit your stack, run controlled experiments to balance efficiency against accuracy, and validate performance, security, and cost before scaling.