Introduction

Model Benchmarking Suites are specialized tools used to evaluate, compare, and validate the performance of AI models across a range of tasks, datasets, and real-world scenarios. In simple terms, they help teams answer a critical question: “Is this model actually good enough for production?” These platforms go beyond basic accuracy metrics, offering structured testing for reliability, hallucinations, bias, latency, and cost efficiency.

As AI systems become more agentic, multimodal, and business-critical, benchmarking has shifted from a one-time evaluation step to a continuous process. Teams now need to test models across evolving prompts, workflows, and edge cases, especially in high-stakes environments.

Common use cases include:

- Comparing LLMs before deployment

- Regression testing after prompt or model updates

- Evaluating hallucination rates and factual accuracy

- Measuring latency and cost across providers

- Validating AI agents and workflows
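To make the first and fourth use cases concrete, here is a minimal sketch of a side-by-side comparison that records accuracy and latency for each model. The two model functions and the two-row dataset are hypothetical stand-ins for real provider clients and a real evaluation set, and the substring scorer is deliberately crude.

```python
import time
from typing import Callable, Dict, List, Tuple

# Hypothetical stand-ins for real model clients; each maps a prompt to a completion.
def model_a(prompt: str) -> str:
    return "Paris" if "France" in prompt else "unknown"

def model_b(prompt: str) -> str:
    return "paris is the capital" if "France" in prompt else "Berlin"

# Tiny illustrative dataset of (prompt, expected substring) pairs.
DATASET: List[Tuple[str, str]] = [
    ("What is the capital of France?", "paris"),
    ("What is the capital of Germany?", "berlin"),
]

def benchmark(models: Dict[str, Callable[[str], str]],
              dataset: List[Tuple[str, str]]) -> Dict[str, Dict[str, float]]:
    """Run every model on every example and record accuracy and mean latency."""
    report: Dict[str, Dict[str, float]] = {}
    for name, call in models.items():
        correct = 0
        total_seconds = 0.0
        for prompt, expected in dataset:
            start = time.perf_counter()
            output = call(prompt)
            total_seconds += time.perf_counter() - start
            # Case-insensitive substring match: a simple scorer for illustration only.
            if expected.lower() in output.lower():
                correct += 1
        report[name] = {
            "accuracy": correct / len(dataset),
            "mean_latency_s": total_seconds / len(dataset),
        }
    return report

results = benchmark({"model_a": model_a, "model_b": model_b}, DATASET)
for name, metrics in results.items():
    print(name, metrics)
```

Running the same function after a prompt or model update and comparing the two reports is the simplest form of regression testing.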

What to evaluate:

- Evaluation depth (offline vs real-world scenarios)

- Dataset flexibility and customization

- Support for LLMs, multimodal models, and agents

- Observability and traceability

- Guardrails and safety testing

- Integration with pipelines and CI/CD

- Cost and latency benchmarking

- Human-in-the-loop review capabilities

- Version control for prompts and tests

- Reporting and auditability

Best for: AI engineers, ML teams, product leaders, and enterprises deploying LLMs or AI agents in production environments.

Not ideal for: Small teams running simple models without production-level evaluation needs, or projects where basic accuracy checks are sufficient.

What’s Changed in Model Benchmarking Suites

- Benchmarking now includes agent workflows, not just static prompts or outputs

- Rise of multimodal evaluation (text, image, audio inputs combined)

- Built-in hallucination detection and factual consistency scoring

- Strong focus on prompt injection and adversarial testing

- Support for BYO models and multi-model comparison pipelines

- Integration with RAG pipelines and knowledge base validation

- Advanced observability with trace-level debugging

- Cost and latency benchmarking across providers is now standard

- Shift toward continuous evaluation in CI/CD pipelines

- Increased demand for enterprise privacy controls and audit logs

- Emergence of human-in-the-loop evaluation workflows

- Standardization of evaluation datasets and benchmarks

Quick Buyer Checklist

- Does the tool support custom evaluation datasets?

- Can you benchmark multiple models side-by-side?

- Are hallucination and reliability metrics included?

- Does it integrate with your RAG or data pipeline?

- Are guardrails and adversarial tests supported?

- Can you track latency and cost metrics?

- Is there trace-level observability?

- Does it support BYO models or only hosted ones?

- Are there audit logs and version control?

- How easy is it to integrate into CI/CD workflows?

- Is there a risk of vendor lock-in?

- Are human review workflows available?

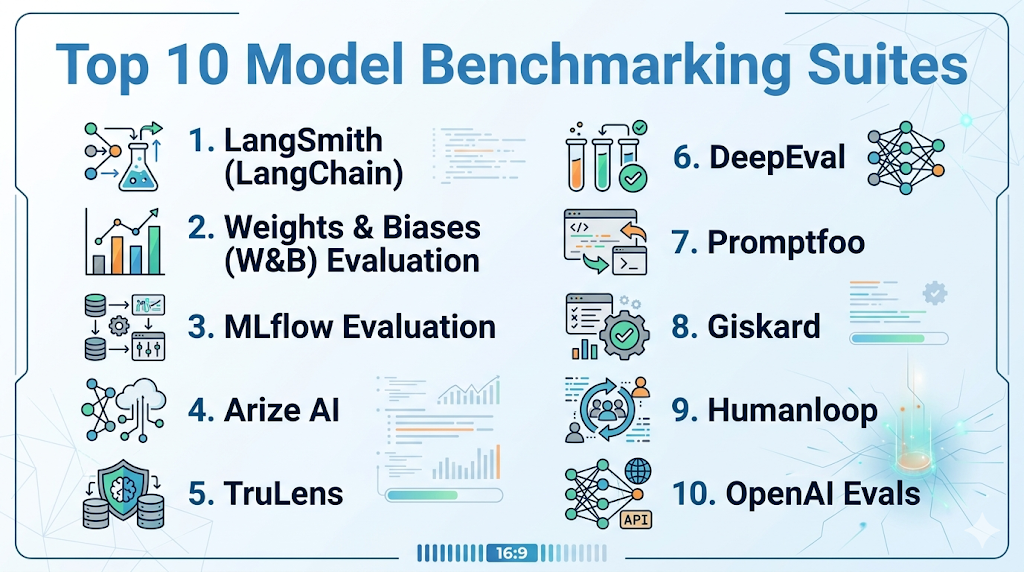

Top 10 Model Benchmarking Suites

1 — LangSmith (LangChain)

One-line verdict: Best for developers building LLM apps needing deep tracing, evaluation, and debugging workflows.

Short description:

LangSmith is an evaluation and observability platform designed for LLM applications built with LangChain or similar frameworks. It enables developers to test prompts, trace execution flows, and benchmark model outputs across different scenarios. It fits into both development and production monitoring workflows.

Standout Capabilities

- End-to-end tracing of LLM calls and workflows

- Dataset-driven evaluation pipelines

- Prompt versioning and experiment tracking

- Integration with agent workflows

- Debugging tools for complex chains

- Comparative evaluation across models

- Feedback loops for human evaluation

AI-Specific Depth

- Model support: BYO model, multi-model routing

- RAG / knowledge integration: Supports RAG evaluation via datasets

- Evaluation: Prompt testing, regression, human review

- Guardrails: Basic evaluation-based safeguards

- Observability: Deep traces, latency, token metrics

Pros

- Strong developer-focused tooling

- Excellent debugging and trace visibility

- Tight integration with LangChain ecosystem

Cons

- Best suited for LangChain users

- Learning curve for new users

- Limited standalone usage outside ecosystem

Security & Compliance

SSO/SAML, RBAC, encryption, audit logs. Certifications: Not publicly stated.

Deployment & Platforms

Web; Cloud-based.

Integrations & Ecosystem

LangSmith integrates deeply with modern LLM stacks and developer tools.

- LangChain

- APIs and SDKs

- Python/JS workflows

- Custom datasets

- CI/CD pipelines

Pricing Model

Tiered and usage-based.

Best-Fit Scenarios

- Debugging LLM pipelines

- Evaluating agent workflows

- Continuous model testing in production

2 — Weights & Biases (W&B) Evaluation

One-line verdict: Best for ML teams needing experiment tracking combined with robust model evaluation workflows.

Short description:

Weights & Biases provides experiment tracking and evaluation tools that extend into LLM benchmarking. It allows teams to compare models, track metrics, and manage evaluation datasets across training and deployment stages. It integrates well into ML pipelines.

Standout Capabilities

- Unified experiment tracking and evaluation

- Dataset versioning

- Visualization dashboards

- Model comparison tools

- Collaboration features

- Integration with training workflows

AI-Specific Depth

- Model support: Open-source, BYO

- RAG / knowledge integration: Limited / N/A

- Evaluation: Offline evaluation, regression

- Guardrails: N/A

- Observability: Metrics, experiment tracking

Pros

- Mature ML tooling ecosystem

- Strong visualization capabilities

- Widely adopted in ML workflows

Cons

- Less specialized for LLM-specific guardrails

- Setup complexity

- Some features require higher-tier plans

Security & Compliance

SSO/SAML, RBAC, encryption. Certifications: Not publicly stated.

Deployment & Platforms

Cloud / Self-hosted.

Integrations & Ecosystem

- Python SDK

- ML frameworks

- Data pipelines

- Experiment tracking APIs

- CI/CD integrations

Pricing Model

Freemium + enterprise tiers.

Best-Fit Scenarios

- Model experimentation tracking

- Comparing training runs

- Evaluating model performance over time

3 — MLflow Evaluation

One-line verdict: Best for teams already using MLflow for lifecycle management and wanting integrated evaluation.

Short description:

MLflow provides model lifecycle management with built-in evaluation capabilities for comparing models and tracking performance metrics. It helps teams manage experiments, versions, and evaluation workflows in a centralized system.

Standout Capabilities

- Model lifecycle tracking

- Experiment logging

- Evaluation metrics tracking

- Model registry

- Version control

AI-Specific Depth

- Model support: BYO model

- RAG / knowledge integration: N/A

- Evaluation: Offline evaluation

- Guardrails: N/A

- Observability: Metrics tracking

Pros

- Open-source and flexible

- Widely adopted

- Integrates with many ML tools

Cons

- Limited LLM-specific features

- Requires setup and customization

- Basic evaluation compared to newer tools

Security & Compliance

Varies / N/A.

Deployment & Platforms

Cloud / Self-hosted.

Integrations & Ecosystem

- ML pipelines

- Data tools

- APIs

- Experiment tracking

- Model registry

Pricing Model

Open-source + enterprise support.

Best-Fit Scenarios

- Traditional ML evaluation

- Lifecycle management

- Model comparison workflows

4 — Arize AI

One-line verdict: Best for production monitoring and evaluation of deployed AI models at scale.

Short description:

Arize AI focuses on monitoring and evaluating models in production environments. It helps teams detect drift, measure performance, and analyze model outputs in real-world usage scenarios.
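For readers new to drift detection, the sketch below computes the Population Stability Index (PSI), one widely used drift measure. It is a generic illustration, not Arize's implementation or API; the bin count and the small floor for empty bins are assumptions chosen for this example.

```python
import math
from typing import List, Sequence

def psi(expected: Sequence[float], actual: Sequence[float], bins: int = 10) -> float:
    """Population Stability Index between a baseline sample and a live sample."""
    lo, hi = min(expected), max(expected)
    # Equal-width bins over the baseline range; fall back to 1.0 for constant data.
    width = (hi - lo) / bins or 1.0

    def fractions(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp out-of-range values into edge bins
            counts[idx] += 1
        # A small floor keeps log() defined when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = fractions(expected), fractions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))

baseline = [i / 100 for i in range(100)]   # hypothetical training-time feature values
live = [x + 0.5 for x in baseline]         # hypothetical shifted production values
print("PSI vs itself:", psi(baseline, baseline))
print("PSI vs shifted:", psi(baseline, live))
```

A commonly cited rule of thumb treats PSI above roughly 0.25 as a significant shift, but the right threshold depends on the feature and the use case.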

Standout Capabilities

- Production monitoring

- Drift detection

- Performance analytics

- Data visualization

- Real-time evaluation

AI-Specific Depth

- Model support: BYO, multi-model

- RAG / knowledge integration: Limited

- Evaluation: Real-world evaluation

- Guardrails: N/A

- Observability: Strong monitoring and tracing

Pros

- Strong production insights

- Scalable for enterprise

- Real-time monitoring

Cons

- Less focus on pre-deployment testing

- Complex setup

- Pricing not transparent

Security & Compliance

Not publicly stated.

Deployment & Platforms

Cloud.

Integrations & Ecosystem

- APIs

- Data pipelines

- ML systems

- Monitoring tools

- Dashboards

Pricing Model

Enterprise-focused.

Best-Fit Scenarios

- Production monitoring

- Drift detection

- Real-time evaluation

5 — TruLens

One-line verdict: Best for evaluating LLM applications with feedback-based metrics and transparency.

Short description:

TruLens is designed for evaluating LLM applications with a focus on transparency and feedback-based scoring. It allows developers to define evaluation criteria and measure outputs accordingly.

Standout Capabilities

- Feedback-based evaluation

- Custom scoring metrics

- LLM app evaluation

- Transparency tools

- Integration with pipelines

AI-Specific Depth

- Model support: BYO

- RAG / knowledge integration: Supported

- Evaluation: Custom, feedback-based

- Guardrails: N/A

- Observability: Moderate

Pros

- Flexible evaluation metrics

- Transparent scoring

- Developer-friendly

Cons

- Smaller ecosystem

- Requires setup

- Limited enterprise features

Security & Compliance

Not publicly stated.

Deployment & Platforms

Varies / N/A.

Integrations & Ecosystem

- APIs

- LLM frameworks

- Evaluation pipelines

- Custom metrics

- Data tools

Pricing Model

Open-source.

Best-Fit Scenarios

- LLM evaluation

- Feedback-driven scoring

- Custom benchmarking

6 — DeepEval

One-line verdict: Best for automated LLM testing with unit-test-style evaluation workflows.

Short description:

DeepEval enables developers to write evaluation tests for LLM outputs similar to unit tests in software development. It helps ensure consistent performance and detect regressions.
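The unit-test idea can be sketched in plain Python. This example intentionally does not use DeepEval's own API; `fake_llm`, `contains_all`, and `run_all` are hypothetical helpers that only show the shape of an assertion-based evaluation.

```python
from typing import Callable, Dict, List

def fake_llm(prompt: str) -> str:
    """Hypothetical stand-in for a real model call."""
    canned = {
        "Summarize: The meeting moved to Friday.": "The meeting is now on Friday.",
        "Translate to French: hello": "bonjour",
    }
    return canned.get(prompt, "")

def contains_all(output: str, required: List[str]) -> bool:
    """True when every required term appears in the output (case-insensitive)."""
    lowered = output.lower()
    return all(term.lower() in lowered for term in required)

def test_summary_mentions_new_day() -> None:
    output = fake_llm("Summarize: The meeting moved to Friday.")
    assert contains_all(output, ["friday"]), f"missing key fact in: {output!r}"

def test_translation_is_short_and_correct() -> None:
    output = fake_llm("Translate to French: hello")
    assert output.strip().lower() == "bonjour"
    assert len(output) < 50  # guard against rambling answers

def run_all(tests: List[Callable[[], None]]) -> Dict[str, str]:
    """Run each test function and record 'pass' or the failure message."""
    results: Dict[str, str] = {}
    for test in tests:
        try:
            test()
            results[test.__name__] = "pass"
        except AssertionError as exc:
            results[test.__name__] = f"fail: {exc}"
    return results

outcome = run_all([test_summary_mentions_new_day, test_translation_is_short_and_correct])
print(outcome)
```

Because the functions follow the `test_` naming convention, a runner such as pytest could also collect them directly, which is what makes this style easy to wire into CI.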

Standout Capabilities

- Unit-test-style evaluations

- Automated testing

- Regression detection

- Custom test cases

- CI/CD integration

AI-Specific Depth

- Model support: BYO

- RAG / knowledge integration: Supported

- Evaluation: Automated testing

- Guardrails: Basic

- Observability: Limited

Pros

- Developer-friendly

- Easy automation

- CI/CD integration

Cons

- Limited visualization

- Early-stage ecosystem

- Requires manual setup

Security & Compliance

Not publicly stated.

Deployment & Platforms

Local / Cloud.

Integrations & Ecosystem

- Python

- CI/CD tools

- APIs

- Testing frameworks

- LLM pipelines

Pricing Model

Open-source.

Best-Fit Scenarios

- Automated testing

- Regression checks

- CI/CD integration

7 — Promptfoo

One-line verdict: Best for lightweight prompt testing and quick benchmarking across multiple models.

Short description:

Promptfoo is a simple yet powerful tool for testing prompts and comparing outputs across models. It is widely used for quick evaluations and experimentation workflows.

Standout Capabilities

- Prompt testing

- Model comparison

- CLI-based workflow

- Quick setup

- Lightweight evaluation

AI-Specific Depth

- Model support: Multi-model

- RAG / knowledge integration: N/A

- Evaluation: Prompt testing

- Guardrails: N/A

- Observability: Minimal

Pros

- Easy to use

- Fast setup

- Lightweight

Cons

- Limited enterprise features

- Minimal observability

- Basic evaluation

Security & Compliance

Varies / N/A.

Deployment & Platforms

Local / CLI.

Integrations & Ecosystem

- CLI

- APIs

- LLM providers

- Testing workflows

- Developer tools

Pricing Model

Open-source.

Best-Fit Scenarios

- Prompt testing

- Quick comparisons

- Lightweight workflows

8 — Giskard

One-line verdict: Best for AI testing with a focus on bias, fairness, and risk detection.

Short description:

Giskard is an AI testing platform that emphasizes model validation, bias detection, and risk analysis. It helps teams ensure responsible AI deployment.

Standout Capabilities

- Bias detection

- Risk analysis

- Model testing

- Evaluation datasets

- Reporting tools

AI-Specific Depth

- Model support: BYO

- RAG / knowledge integration: Limited

- Evaluation: Risk-based evaluation

- Guardrails: Strong

- Observability: Moderate

Pros

- Strong focus on responsible AI

- Risk detection features

- Compliance-oriented

Cons

- Less focus on performance benchmarking

- Setup complexity

- Smaller ecosystem

Security & Compliance

Not publicly stated.

Deployment & Platforms

Cloud / Self-hosted.

Integrations & Ecosystem

- APIs

- ML tools

- Data pipelines

- Testing workflows

- Reporting tools

Pricing Model

Tiered.

Best-Fit Scenarios

- Bias detection

- Risk analysis

- Responsible AI validation

9 — Humanloop

One-line verdict: Best for teams combining human feedback with structured evaluation workflows for LLMs.

Short description:

Humanloop provides tools for prompt management and evaluation with human-in-the-loop workflows. It helps teams refine models based on real feedback.

Standout Capabilities

- Human feedback integration

- Prompt management

- Evaluation workflows

- Version control

- Collaboration tools

AI-Specific Depth

- Model support: BYO

- RAG / knowledge integration: Supported

- Evaluation: Human review

- Guardrails: Moderate

- Observability: Moderate

Pros

- Strong human-in-the-loop workflows

- Collaboration features

- Prompt versioning

Cons

- Less automation

- Limited deep observability

- Pricing not transparent

Security & Compliance

Not publicly stated.

Deployment & Platforms

Cloud.

Integrations & Ecosystem

- APIs

- LLM tools

- Data pipelines

- Prompt systems

- Collaboration tools

Pricing Model

Not publicly stated.

Best-Fit Scenarios

- Human evaluation

- Prompt refinement

- Feedback loops

10 — OpenAI Evals

One-line verdict: Best for developers evaluating models using standardized benchmarks and custom datasets.

Short description:

OpenAI Evals is an open framework for evaluating language models using structured benchmarks and datasets. It allows developers to create custom evaluations and compare results.

Standout Capabilities

- Open evaluation framework

- Custom benchmarks

- Dataset-based evaluation

- Community contributions

- Flexible setup

AI-Specific Depth

- Model support: OpenAI + BYO

- RAG / knowledge integration: N/A

- Evaluation: Benchmark-based

- Guardrails: N/A

- Observability: Limited

Pros

- Flexible and open

- Community-driven

- Custom evaluations

Cons

- Requires setup

- Limited UI

- Not enterprise-focused

Security & Compliance

Varies / N/A.

Deployment & Platforms

Local / Cloud.

Integrations & Ecosystem

- APIs

- Datasets

- LLM tools

- Testing frameworks

- Developer workflows

Pricing Model

Open-source.

Best-Fit Scenarios

- Benchmark testing

- Custom evaluation

- Research workflows

Comparison Table

| Tool Name | Best For | Deployment | Model Flexibility | Strength | Watch-Out | Public Rating |
|---|---|---|---|---|---|---|
| LangSmith | LLM debugging | Cloud | Multi-model | Deep tracing | Ecosystem lock-in | N/A |
| W&B | ML tracking | Cloud/Self-hosted | BYO | Visualization | Complexity | N/A |
| MLflow | Lifecycle mgmt | Cloud/Self-hosted | BYO | Flexibility | Limited LLM focus | N/A |
| Arize | Production eval | Cloud | Multi-model | Monitoring | Setup complexity | N/A |
| TruLens | LLM eval | N/A | BYO | Custom metrics | Smaller ecosystem | N/A |
| DeepEval | Testing | Local/Cloud | BYO | Automation | Limited UI | N/A |
| Promptfoo | Prompt testing | Local | Multi-model | Simplicity | Basic features | N/A |
| Giskard | Risk eval | Cloud/Self-hosted | BYO | Bias detection | Limited perf focus | N/A |
| Humanloop | Human eval | Cloud | BYO | Feedback loops | Limited automation | N/A |
| OpenAI Evals | Benchmarks | Local/Cloud | BYO | Flexibility | Setup effort | N/A |

Scoring & Evaluation

Scoring is comparative and based on practical usability, not absolute capability. Different tools excel in different scenarios depending on team size, workflow complexity, and deployment needs.

| Tool | Core | Reliability | Guardrails | Integrations | Ease | Perf/Cost | Security | Support | Total |
|---|---|---|---|---|---|---|---|---|---|
| LangSmith | 9 | 9 | 7 | 9 | 8 | 8 | 8 | 8 | 8.4 |
| W&B | 9 | 8 | 6 | 9 | 7 | 8 | 8 | 9 | 8.1 |
| MLflow | 8 | 7 | 5 | 8 | 7 | 8 | 7 | 8 | 7.5 |
| Arize | 9 | 9 | 6 | 8 | 7 | 8 | 8 | 8 | 8.2 |
| TruLens | 7 | 8 | 5 | 7 | 7 | 7 | 6 | 7 | 7.1 |
| DeepEval | 7 | 8 | 6 | 7 | 8 | 8 | 6 | 7 | 7.4 |
| Promptfoo | 6 | 7 | 4 | 6 | 9 | 8 | 5 | 6 | 6.8 |
| Giskard | 8 | 8 | 9 | 7 | 6 | 7 | 7 | 7 | 7.6 |
| Humanloop | 8 | 8 | 7 | 7 | 7 | 7 | 7 | 7 | 7.5 |
| OpenAI Evals | 7 | 7 | 5 | 7 | 6 | 7 | 6 | 7 | 6.9 |
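If you want to re-rank these tools for your own priorities, a weighted total is one simple approach. The weights below are hypothetical: this article does not publish the weighting behind its Total column, so the sketch is not expected to reproduce those figures.

```python
from typing import Dict

# Hypothetical weights that sum to 1.0; adjust them to reflect your own priorities.
WEIGHTS: Dict[str, float] = {
    "Core": 0.25, "Reliability": 0.15, "Guardrails": 0.10, "Integrations": 0.15,
    "Ease": 0.10, "Perf/Cost": 0.10, "Security": 0.10, "Support": 0.05,
}

def weighted_total(scores: Dict[str, float],
                   weights: Dict[str, float] = WEIGHTS) -> float:
    """Weighted average of per-criterion scores, rounded to two decimals."""
    if set(scores) != set(weights):
        raise ValueError("scores and weights must cover the same criteria")
    total_weight = sum(weights.values())
    return round(sum(scores[k] * weights[k] for k in weights) / total_weight, 2)

# Per-criterion scores for LangSmith, copied from the table above.
langsmith = {"Core": 9, "Reliability": 9, "Guardrails": 7, "Integrations": 9,
             "Ease": 8, "Perf/Cost": 8, "Security": 8, "Support": 8}

langsmith_total = weighted_total(langsmith)
equal_weight_total = weighted_total(langsmith, {k: 1.0 for k in langsmith})
print(langsmith_total, equal_weight_total)
```

A team in a regulated industry might, for example, raise the Guardrails and Security weights and see a different ordering than the one shown here.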

Top 3 for Enterprise: LangSmith, Arize, Weights & Biases

Top 3 for SMB: DeepEval, Humanloop, TruLens

Top 3 for Developers: Promptfoo, OpenAI Evals, DeepEval

Which Tool Is Right for You?

Solo / Freelancer

Use lightweight tools like Promptfoo or OpenAI Evals for quick testing without heavy setup.

SMB

Choose DeepEval or Humanloop for a balance between automation and usability.

Mid-Market

LangSmith or W&B provide strong evaluation with scalability.

Enterprise

Arize, LangSmith, and W&B offer full observability and governance.

Regulated industries

Giskard is the stronger fit here because of its bias and risk analysis features.

Budget vs premium

Choose open-source tools (MLflow, DeepEval) when budget is tight, or enterprise platforms (Arize, W&B) when you can pay for a managed offering.

Build vs buy

DIY if you need flexibility; buy if you need speed and reliability.

Implementation Playbook

30 Days

- Define evaluation metrics

- Set up datasets

- Run pilot benchmarks

60 Days

- Add CI/CD evaluation

- Implement guardrails

- Expand datasets
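The "Add CI/CD evaluation" step often comes down to a gate that fails the build when quality drops. Here is a minimal sketch, assuming your evaluation run produces one pass/fail flag per test case; the `gate` function and the 90% threshold are hypothetical choices for illustration.

```python
from typing import List

def gate(pass_flags: List[bool], min_pass_rate: float = 0.9) -> int:
    """Return a process exit code: 0 if the pass rate meets the threshold, else 1."""
    if not pass_flags:
        # Treat an empty evaluation run as a failure rather than a silent pass.
        return 1
    pass_rate = sum(pass_flags) / len(pass_flags)
    print(f"pass rate: {pass_rate:.1%} (threshold {min_pass_rate:.0%})")
    return 0 if pass_rate >= min_pass_rate else 1

# Hypothetical results; in practice these would come from the evaluation run.
example_results = [True] * 19 + [False]
exit_code = gate(example_results)
# A CI script would typically end with sys.exit(exit_code) so a non-zero code fails the job.
```

Keeping the threshold in version control alongside prompts and test datasets makes it easier to audit why a given build passed or failed.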

90 Days

- Optimize cost/latency

- Add governance

- Scale evaluation workflows

Common Mistakes

- No evaluation pipeline

- Ignoring hallucinations

- No regression testing

- Poor dataset quality

- Lack of observability

- Over-automation

- Ignoring cost metrics

- No human review

- Weak guardrails

- Vendor lock-in

- No version control

- No audit logs

FAQs

1. What is a model benchmarking suite?

It is a tool used to evaluate and compare AI model performance across different metrics and scenarios.

2. Why is benchmarking important?

It ensures models are reliable, accurate, and safe before deployment.

3. Can I use open-source tools?

Yes. Several of the tools covered here, including MLflow and DeepEval, are open-source.

4. Do these tools support multiple models?

Most modern tools support multi-model evaluation.

5. What about privacy?

It varies by tool; enterprise platforms generally offer stronger privacy controls.

6. Can I self-host?

Some tools support self-hosting; others are cloud-only.

7. Are these tools expensive?

Costs vary from free open-source to enterprise pricing.

8. Do they support RAG?

Some tools support RAG evaluation workflows.

9. What metrics should I track?

Accuracy, latency, cost, and hallucination rate.

10. Can I automate evaluations?

Yes, many tools integrate with CI/CD pipelines.

11. Do I need human evaluation?

For critical use cases, yes.

12. Can I switch tools later?

Possible, but migration effort varies.
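The four metrics named in FAQ 9 can be aggregated from per-request records with very little code. The sketch below assumes a hypothetical `EvalRecord` shape and made-up token prices; how `correct` and `hallucinated` get labeled (automated checker or human reviewer) is left open.

```python
import math
from dataclasses import dataclass
from typing import Dict, List

@dataclass
class EvalRecord:
    correct: bool        # output matched the reference answer
    hallucinated: bool   # a checker or reviewer flagged unsupported content
    latency_ms: float
    input_tokens: int
    output_tokens: int

def summarize(records: List[EvalRecord],
              usd_per_1k_input: float,
              usd_per_1k_output: float) -> Dict[str, float]:
    """Aggregate accuracy, hallucination rate, p95 latency, and total cost."""
    if not records:
        raise ValueError("no records to summarize")
    n = len(records)
    latencies = sorted(r.latency_ms for r in records)
    # Nearest-rank 95th percentile: the smallest value with at least 95% of data at or below it.
    p95 = latencies[math.ceil(0.95 * n) - 1]
    cost = sum(
        r.input_tokens / 1000 * usd_per_1k_input
        + r.output_tokens / 1000 * usd_per_1k_output
        for r in records
    )
    return {
        "accuracy": sum(r.correct for r in records) / n,
        "hallucination_rate": sum(r.hallucinated for r in records) / n,
        "p95_latency_ms": p95,
        "total_cost_usd": cost,
    }

# Hypothetical records and prices, for illustration only.
records = [
    EvalRecord(True, False, 100.0, 1000, 500),
    EvalRecord(True, False, 200.0, 1000, 500),
    EvalRecord(False, True, 300.0, 1000, 500),
    EvalRecord(True, False, 400.0, 1000, 500),
]
summary = summarize(records, usd_per_1k_input=0.5, usd_per_1k_output=1.5)
print(summary)
```

Tracking a tail percentile rather than only the mean helps surface the slow requests that users actually notice.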

Conclusion

Model benchmarking suites have become essential for building reliable AI systems, especially as models grow more complex and business-critical. The right tool depends on your team’s maturity, workflow, and evaluation depth requirements; there is no one-size-fits-all solution. Start by shortlisting tools that align with your stack, run a pilot to validate real-world performance, and prioritize strong evaluation and observability before scaling.