Introduction

Retrieval-Augmented Generation (RAG) frameworks help teams build AI applications that answer questions using trusted external knowledge instead of relying only on a model’s built-in training. In simple terms, a RAG system searches your documents, databases, knowledge bases, tickets, policies, product manuals, or internal content, then sends the most relevant context to an LLM so the answer is better grounded and more useful.
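
The search-then-generate flow described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not how any particular framework implements it: the `DOCUMENTS` store, the token-overlap `score` function, and the prompt wording are all hypothetical, and the final LLM call is left out because it depends on the provider you choose.

```python
import re
from collections import Counter

# Hypothetical in-memory knowledge base; a real system would load its own sources.
DOCUMENTS = {
    "refund-policy": "Refunds are available within 30 days of purchase with a receipt.",
    "shipping": "Standard shipping takes five business days within the country.",
    "warranty": "Hardware carries a one year warranty covering manufacturing defects.",
}

def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())

def score(query: str, document: str) -> int:
    """Toy relevance score: number of distinct query tokens found in the document."""
    doc_tokens = Counter(tokenize(document))
    return sum(1 for token in set(tokenize(query)) if token in doc_tokens)

def retrieve(query: str, top_k: int = 2) -> list[tuple[str, str]]:
    """Return up to top_k documents with a non-zero score, best first."""
    ranked = sorted(DOCUMENTS.items(), key=lambda item: score(query, item[1]), reverse=True)
    return [(doc_id, text) for doc_id, text in ranked[:top_k] if score(query, text) > 0]

def build_prompt(query: str, context: list[tuple[str, str]]) -> str:
    """Assemble the retrieved context and the question into one prompt string."""
    sources = "\n".join(f"[{doc_id}] {text}" for doc_id, text in context)
    return (
        "Answer using only the sources below and cite source ids.\n\n"
        f"Sources:\n{sources}\n\nQuestion: {query}"
    )

question = "How many days do I have to request refunds?"
prompt = build_prompt(question, retrieve(question))
print(prompt)  # this string is what would be sent to the LLM of your choice
```

Production systems usually replace the token-overlap score with embeddings, keyword indexes, or both, but the shape of the flow (retrieve, assemble context, generate) stays the same.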

RAG matters because businesses want AI assistants that can answer from private, changing, or domain-specific information. A general model may not know your latest policies, customer documentation, product data, legal guidance, or support history. RAG frameworks help developers connect LLMs with real knowledge sources while improving accuracy, traceability, and control.

Real-world use cases include:

- Internal knowledge assistants for employees

- Customer support bots grounded in help-center articles

- Legal, finance, and compliance document search

- Developer documentation copilots

- Enterprise search with conversational answers

- AI agents that retrieve information before taking action

Evaluation criteria for buyers:

- Document loading and parsing support

- Chunking and indexing flexibility

- Vector database compatibility

- Hybrid search and reranking support

- Prompt orchestration and context assembly

- Evaluation and regression testing workflows

- Guardrails and prompt-injection defense

- Observability, tracing, and cost visibility

- Multi-model and BYO model support

- Deployment flexibility

- Security and access-control patterns

- Developer experience and ecosystem maturity

Best for: AI engineers, backend developers, data teams, platform teams, CTOs, product teams, enterprise AI teams, and startups building knowledge-grounded AI assistants, copilots, search systems, and agentic applications.

Not ideal for: teams that only need simple static chatbots, basic keyword search, or one-off prompt experiments. If the knowledge source is small and rarely changes, a simple prompt template or managed chatbot builder may be enough before adopting a full RAG framework.

What’s Changed in Retrieval-Augmented Generation (RAG) Frameworks

- RAG is moving from simple vector search to full retrieval pipelines. Teams now combine keyword search, semantic search, metadata filters, rerankers, query rewriting, and answer validation.

- Agentic RAG is becoming common. Modern applications no longer retrieve just once; agents can plan searches, call tools, inspect documents, retry retrieval, and refine answers.

- Multimodal RAG is growing. Teams increasingly retrieve from PDFs, tables, images, diagrams, audio transcripts, videos, screenshots, and structured records.

- Evaluation is now a core requirement. Buyers want frameworks that help test answer relevance, faithfulness, citation quality, retrieval quality, hallucination risk, and regression behavior.

- Guardrails matter more. RAG systems can be vulnerable to prompt injection, malicious documents, unsafe retrieval, and over-trusting retrieved context.

- Enterprise access control is a major concern. RAG apps must respect document permissions, tenant boundaries, user roles, and data retention rules.

- Hybrid search is becoming standard. Pure vector search is often not enough; teams combine semantic similarity with exact keyword, metadata, and graph-based retrieval.

- Chunking strategy is more important. Poor chunking leads to weak retrieval, missing context, hallucinated answers, and higher token cost.

- RAG observability is now expected. Teams need traces showing query, retrieved chunks, scores, prompt context, model output, latency, and cost.

- Model flexibility is a buyer priority. Teams want to switch between hosted models, open-source models, local embeddings, and BYO inference endpoints.

- Reranking is becoming a quality lever. Rerankers help select better context before sending it to the model, improving answer relevance.

- RAG is becoming part of governance. Teams need records of data sources, retrieval decisions, prompt versions, generated answers, and human review outcomes.
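
Several of the trends above, hybrid search in particular, come down to merging ranked lists from different retrievers. One widely used way to do that is reciprocal rank fusion (RRF). The sketch below is an illustration only: the document ids and the two input rankings are made up, and `k = 60` is a commonly used constant rather than a value any specific framework requires.

```python
from collections import defaultdict

def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Fuse several ranked lists of document ids into one ranking.

    Each document earns 1 / (k + rank) from every list it appears in,
    so documents ranked well by multiple retrievers rise to the top.
    """
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Sort by fused score (descending), breaking ties by id for determinism.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))

# Hypothetical outputs from a keyword retriever and a vector retriever.
keyword_ranking = ["doc_a", "doc_c", "doc_b"]
vector_ranking = ["doc_b", "doc_a", "doc_d"]

fused = reciprocal_rank_fusion([keyword_ranking, vector_ranking])
print(fused)
```

Here `doc_a` and `doc_b` appear in both lists, so they outrank documents that only one retriever found. A reranker or metadata filter would typically run on the fused list before the context is sent to the model.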

Quick Buyer Checklist

Use this checklist to shortlist RAG frameworks quickly:

- Does the framework support your data sources and file types?

- Can it parse PDFs, HTML, Markdown, tables, code, and structured data?

- Does it support flexible chunking and metadata enrichment?

- Can it work with your preferred vector database?

- Does it support hybrid search, filters, reranking, and query rewriting?

- Can it support hosted, BYO, and open-source models?

- Does it support RAG evaluation and regression testing?

- Can it track retrieved context, answer quality, latency, and cost?

- Does it support access control and tenant isolation?

- Can it defend against prompt injection and unsafe retrieved content?

- Does it support agents, tool calling, and multi-step workflows?

- Can it be deployed in cloud, self-hosted, or hybrid environments?

- Does it integrate with observability and governance tools?

- Can developers customize retrieval logic deeply?

- Does it avoid vendor lock-in through open interfaces and exports?
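
For the evaluation and regression-testing items on this checklist, it can help to know what a basic retrieval check looks like. The following is a minimal sketch of recall@k over a small labeled set; the `EVAL_SET` examples and the threshold are hypothetical, and real evaluation suites typically also measure answer faithfulness and citation quality.

```python
def recall_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    """Fraction of the relevant documents that appear in the top-k retrieved ids."""
    if not relevant:
        return 0.0
    hits = len(set(retrieved[:k]) & relevant)
    return hits / len(relevant)

def mean_recall(examples: list[dict], k: int) -> float:
    """Average recall@k across all labeled examples."""
    return sum(recall_at_k(e["retrieved"], e["relevant"], k) for e in examples) / len(examples)

# Hypothetical labeled examples: what the retriever returned vs. what it should find.
EVAL_SET = [
    {"retrieved": ["d1", "d4", "d2"], "relevant": {"d1", "d2"}},
    {"retrieved": ["d7", "d3", "d9"], "relevant": {"d3"}},
    {"retrieved": ["d5", "d6", "d8"], "relevant": {"d2", "d8"}},
]

score_at_2 = mean_recall(EVAL_SET, k=2)
score_at_3 = mean_recall(EVAL_SET, k=3)

# A regression gate might fail the build when recall drops below an agreed threshold.
THRESHOLD = 0.8  # assumed value for illustration
print(f"recall@2={score_at_2:.2f} recall@3={score_at_3:.2f} pass={score_at_3 >= THRESHOLD}")
```

Running a check like this on every change to chunking, embeddings, or retrieval settings is one way to catch quality regressions before users do.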

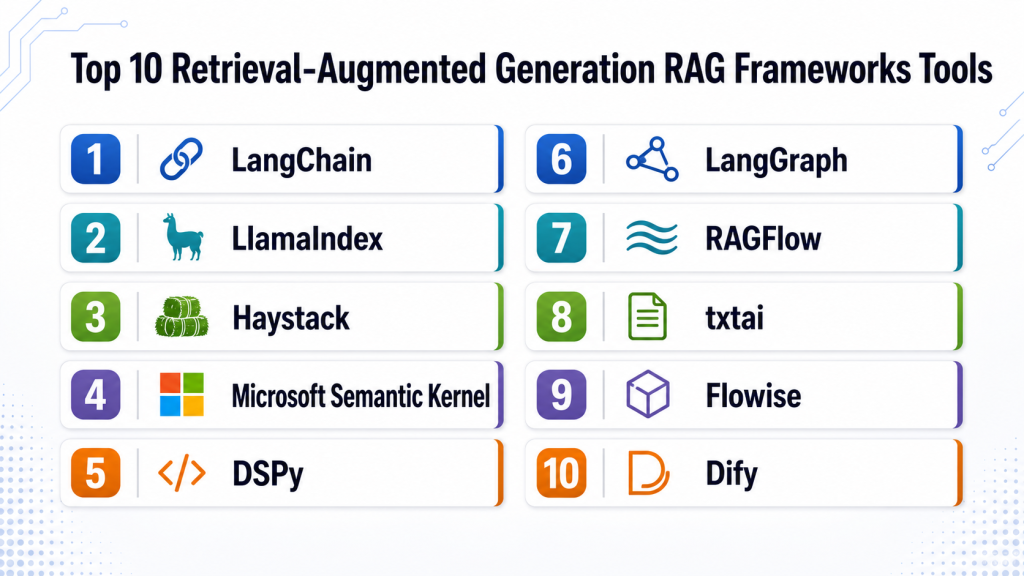

Top 10 Retrieval-Augmented Generation (RAG) Frameworks

1 — LangChain

One-line verdict: Best for developers building flexible RAG, agents, tools, and custom LLM workflows.

Short description:

LangChain is a developer framework for building LLM applications, including RAG systems, agents, chains, tools, and retrieval workflows. It is commonly used by engineering teams that need flexibility across models, vector databases, retrievers, prompts, and orchestration patterns.

Standout Capabilities

- Broad ecosystem for LLM application development

- Supports RAG pipelines, agents, chains, tools, and memory patterns

- Works with many model providers and vector databases

- Flexible retriever and document loader patterns

- Strong developer community and extension ecosystem

- Useful for custom backend and agentic RAG workflows

- Can connect with tracing and observability tools depending on setup

AI-Specific Depth

- Model support: Multi-model, hosted models, BYO model, and open-source workflows depending on integration

- RAG / knowledge integration: Strong support for document loaders, retrievers, vector databases, chunking, and tool-based retrieval

- Evaluation: Varies / N/A, can integrate with external evaluation and tracing workflows

- Guardrails: Varies / N/A, guardrails usually require companion tools or custom policies

- Observability: Traces, callbacks, latency, token usage, and run metadata depending on instrumentation

Pros

- Very flexible for custom RAG and agentic applications

- Large ecosystem of integrations and patterns

- Good fit for developers who need control over orchestration

Cons

- Can feel complex for beginners

- Production quality depends heavily on developer architecture

- Requires careful evaluation and observability setup

Security & Compliance

Security depends on how the application is built and deployed. SSO, RBAC, audit logs, encryption, data retention, and residency are usually handled by the surrounding app, infrastructure, and connected services. Certifications are not publicly stated.

Deployment & Platforms

- Python and JavaScript development workflows

- Cloud, self-hosted, or hybrid depending on application deployment

- Works across Windows, macOS, and Linux developer environments

- Web/mobile support depends on the application built with it

- Backend and API service deployment patterns

Integrations & Ecosystem

LangChain fits teams that need broad integration flexibility across LLM providers, data systems, vector stores, and custom tools.

- LLM providers

- Vector databases

- Document loaders

- Agent tools

- Embedding models

- Observability tools

- Backend frameworks and APIs

Pricing Model

Open-source usage is available. Costs depend on hosting, model providers, vector databases, infrastructure, observability tools, and engineering effort.

Best-Fit Scenarios

- Custom enterprise RAG applications

- Agentic workflows with tool calling

- Developer teams needing maximum orchestration flexibility

2 — LlamaIndex

One-line verdict: Best for data-centric RAG applications that need strong indexing and retrieval workflows.

Short description:

LlamaIndex focuses on connecting private or enterprise data to LLM applications through indexing, retrieval, and query workflows. It is useful for teams building RAG systems over documents, databases, knowledge bases, and structured or unstructured data.
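
Indexing workflows like these usually begin by splitting documents into chunks before embedding them. The sketch below shows one simple strategy, fixed-size word windows with overlap. It is a generic illustration and not LlamaIndex's own API; the function name, the word-based splitting, and the default sizes are assumptions chosen for readability.

```python
def chunk_words(text: str, chunk_size: int = 8, overlap: int = 2) -> list[dict]:
    """Split text into word-based chunks where consecutive chunks share `overlap` words."""
    if chunk_size <= 0 or not 0 <= overlap < chunk_size:
        raise ValueError("chunk_size must be positive and overlap must be smaller than chunk_size")
    words = text.split()
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(words), step):
        window = words[start:start + chunk_size]
        # Keep the starting offset as metadata so answers can point back to the source.
        chunks.append({"start_word": start, "text": " ".join(window)})
        if start + chunk_size >= len(words):
            break  # this window already reached the end of the text
    return chunks

sample = " ".join(f"w{i}" for i in range(20))
chunks = chunk_words(sample, chunk_size=8, overlap=2)
for chunk in chunks:
    print(chunk["start_word"], chunk["text"])
```

The overlap keeps sentences that straddle a boundary retrievable from either side, at the cost of some duplicated tokens. Real pipelines often split on sentences, headings, or tokens instead of raw words.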

Standout Capabilities

- Strong data ingestion and indexing focus

- Flexible retrieval and query engine patterns

- Supports document, database, and knowledge workflows

- Good fit for enterprise knowledge assistants

- Supports multiple vector stores and model providers

- Useful for RAG evaluation and retrieval experimentation depending on setup

- Developer-friendly abstractions for data-connected AI

AI-Specific Depth

- Model support: Multi-model, hosted, BYO, and open-source workflows depending on integration

- RAG / knowledge integration: Strong indexing, connectors, retrievers, query engines, and vector database compatibility

- Evaluation: Evaluation workflows and custom metrics depending on setup

- Guardrails: Varies / N/A, requires companion controls or custom policies

- Observability: Query traces, retrieval metadata, latency, and token/cost signals depending on instrumentation

Pros

- Strong fit for data-heavy RAG systems

- Good abstraction around indexing and retrieval

- Useful for teams working with many data sources

Cons

- Production behavior depends on architecture and evaluation discipline

- Access control needs careful application-level design

- Some advanced workflows may require custom engineering

Security & Compliance

Security depends on deployment, connected data sources, access-control design, encryption, logging, retention, and infrastructure. Certifications are not publicly stated.

Deployment & Platforms

- Python and developer workflows

- Cloud, self-hosted, or hybrid depending on application architecture

- Works across common developer environments

- Backend and API deployment patterns

- Web or mobile access depends on the application built with it

Integrations & Ecosystem

LlamaIndex is useful when the hardest part of RAG is preparing, indexing, retrieving, and querying private data.

- Document loaders

- Vector databases

- SQL and structured data systems

- LLM providers

- Embedding models

- Query engines

- Evaluation and observability tools through integration

Pricing Model

Open-source usage is available. Managed or enterprise options may vary. Costs depend on infrastructure, models, storage, vector databases, and support needs.

Best-Fit Scenarios

- Enterprise document intelligence

- Knowledge assistants over private data

- Teams prioritizing indexing and retrieval quality

3 — Haystack

One-line verdict: Best for production-focused teams building search, QA, and RAG pipelines with modular components.

Short description:

Haystack is an open-source framework for building search, question-answering, and RAG pipelines. It is useful for teams that want modular pipeline components for retrieval, ranking, generation, and document processing.

Standout Capabilities

- Modular pipeline architecture

- Strong search and question-answering roots

- Supports retrievers, rankers, generators, and document stores

- Useful for production-style RAG systems

- Works with different model providers and backends

- Supports custom pipelines for enterprise search

- Good fit for teams needing structured retrieval workflows

AI-Specific Depth

- Model support: Hosted, BYO, and open-source workflows depending on components and integrations

- RAG / knowledge integration: Strong support for document stores, retrievers, ranking, pipelines, and generation steps

- Evaluation: Varies / N/A, can support evaluation workflows through custom pipeline design

- Guardrails: Varies / N/A

- Observability: Pipeline logs, retrieval outputs, latency, and component-level signals depending on setup

Pros

- Strong modular design for RAG pipelines

- Good fit for search and QA-heavy applications

- Flexible for teams that want production-oriented components

Cons

- Requires engineering effort to tune and deploy well

- Ecosystem may feel narrower than broader LLM frameworks

- Guardrails and governance need companion tooling

Security & Compliance

Security depends on hosting, data storage, access controls, logging, encryption, deployment architecture, and connected systems. Certifications are not publicly stated.

Deployment & Platforms

- Python-based framework

- Cloud, self-hosted, or hybrid depending on deployment

- Works across common developer and server environments

- Backend service deployment patterns

- Web/mobile support depends on the application built with it

Integrations & Ecosystem

Haystack fits teams building serious retrieval pipelines with control over each step of the process.

- Document stores

- Search engines

- Vector databases

- LLM providers

- Embedding models

- Rankers and rerankers

- Pipeline orchestration workflows

Pricing Model

Open-source usage is available. Costs depend on infrastructure, models, document stores, vector databases, and support or managed services if used.

Best-Fit Scenarios

- Enterprise search applications

- RAG question-answering systems

- Teams needing modular retrieval and ranking pipelines

4 — Microsoft Semantic Kernel

One-line verdict: Best for teams building enterprise AI orchestration with planners, plugins, and Microsoft ecosystem alignment.

Short description:

Microsoft Semantic Kernel is an SDK for integrating AI models with application logic, plugins, memory, and orchestration workflows. It is useful for teams building RAG-enabled copilots, assistants, and enterprise applications that connect AI with business systems.

Standout Capabilities

- AI orchestration SDK for application developers

- Plugin and function-based integration patterns

- Memory and retrieval patterns for knowledge-grounded workflows

- Useful for enterprise copilots and assistants

- Works with multiple model and service patterns depending on setup

- Supports structured application integration

- Good fit for teams aligned with Microsoft development ecosystems

AI-Specific Depth

- Model support: Hosted, BYO, and multi-model workflows depending on configuration

- RAG / knowledge integration: Memory, connectors, plugins, and retrieval patterns depending on application design

- Evaluation: Varies / N/A, external evaluation and testing may be required

- Guardrails: Varies / N/A, policy checks require application-level or companion tooling

- Observability: Logging, traces, latency, and model call metadata depending on instrumentation

Pros

- Strong fit for enterprise app developers

- Good plugin and orchestration model

- Useful for building AI features into business applications

Cons

- RAG implementation depth depends on application architecture

- Less focused purely on retrieval than dedicated RAG frameworks

- Requires engineering discipline for production quality

Security & Compliance

Security depends on the surrounding Microsoft, cloud, identity, data, and application architecture. RBAC, SSO, audit logs, encryption, retention, and residency vary by deployment and services used. Certifications are not publicly stated.

Deployment & Platforms

- SDK-based development

- Cloud, self-hosted, or hybrid depending on application deployment

- Works with common developer environments

- Backend and enterprise application deployment patterns

- Web/mobile support depends on application implementation

Integrations & Ecosystem

Semantic Kernel fits teams building AI assistants that need to call business functions, retrieve context, and interact with enterprise systems.

- AI model providers

- Plugins and functions

- Application backends

- Enterprise systems

- Memory and retrieval stores

- Microsoft ecosystem workflows

- Observability through application instrumentation

Pricing Model

Open-source SDK usage is available. Costs depend on model providers, infrastructure, cloud services, storage, and engineering effort.

Best-Fit Scenarios

- Enterprise copilots and assistants

- AI apps using plugins and business functions

- Microsoft-aligned application development teams

5 — DSPy

One-line verdict: Best for advanced teams optimizing prompts, retrieval pipelines, and LLM programs systematically.

Short description:

DSPy is a framework for programming and optimizing language model pipelines using declarative signatures and optimization techniques. It is useful for teams that want to move beyond manual prompt tweaking and systematically improve RAG behavior.

Standout Capabilities

- Declarative programming style for LM pipelines

- Optimization-oriented approach to prompts and modules

- Useful for RAG pipeline tuning and evaluation loops

- Supports retriever and generator program patterns

- Strong fit for research-minded engineering teams

- Helps reduce manual prompt engineering guesswork

- Good for systematic experimentation and pipeline improvement

AI-Specific Depth

- Model support: BYO and hosted model workflows depending on integration

- RAG / knowledge integration: Supports retrieval-augmented programs and custom retriever integration

- Evaluation: Strong focus on optimization and evaluation-driven improvement

- Guardrails: Varies / N/A, requires companion policy controls

- Observability: Experiment results, program outputs, metrics, and optimization traces depending on setup

Pros

- Strong for systematic RAG optimization

- Useful when prompt engineering becomes hard to manage manually

- Good fit for teams that value evaluation-driven design

Cons

- Learning curve can be higher than simpler frameworks

- Smaller ecosystem than broad LLM orchestration tools

- Best suited for technical teams comfortable with programmatic design

Security & Compliance

Security depends on models, data sources, deployment environment, logging, access controls, and application architecture. Certifications are not publicly stated.

Deployment & Platforms

- Python-based development workflows

- Cloud, self-hosted, or hybrid depending on application deployment

- Works across common developer environments

- Backend service deployment possible

- Web/mobile access depends on the application built with it

Integrations & Ecosystem

DSPy fits teams that want RAG quality to be improved through structured evaluation and optimization rather than manual prompt iteration alone.

- Language model providers

- Custom retrievers

- Evaluation datasets

- Pipeline optimization workflows

- Python AI applications

- Research workflows

- RAG experimentation systems

Pricing Model

Open-source usage is available. Costs depend on model calls, evaluation runs, infrastructure, retrievers, vector stores, and engineering effort.

Best-Fit Scenarios

- RAG optimization experiments

- Teams improving prompt and retrieval quality

- Research-oriented AI engineering groups

6 — LangGraph

One-line verdict: Best for stateful agentic RAG workflows that need control, branching, and durable execution.

Short description:

LangGraph helps developers build stateful, graph-based agent and LLM workflows. It is useful for agentic RAG systems where retrieval, reasoning, tool calls, human review, and multi-step control flow need to be explicit.
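
To make the idea of explicit, stateful control flow concrete, here is a small plain-Python sketch of an agentic retrieval loop: retrieve, grade the result, rewrite the query if nothing was found, and escalate to a human after a fixed number of attempts. It deliberately does not use LangGraph's API; the node names, the `CORPUS`, and the toy query rewrite are all hypothetical and only illustrate the graph-style pattern.

```python
# Hypothetical mini knowledge base keyed by topic keyword.
CORPUS = {
    "vpn": "Connect to the VPN using the corporate client.",
    "expenses": "Submit expenses through the finance portal.",
}

def retrieve(state: dict) -> str:
    """Look up documents whose keyword appears in the current query."""
    query = state["query"].lower()
    state["docs"] = [text for key, text in CORPUS.items() if key in query]
    state["attempts"] += 1
    return "grade"

def grade(state: dict) -> str:
    """Decide the next node based on whether retrieval found anything."""
    if state["docs"]:
        return "answer"
    if state["attempts"] >= state["max_attempts"]:
        return "escalate"
    return "rewrite"

def rewrite(state: dict) -> str:
    """Toy query rewrite: map a known phrase to the keyword used in the corpus."""
    state["query"] = state["query"].replace("remote access", "vpn")
    return "retrieve"

def answer(state: dict) -> str:
    state["result"] = "Based on the docs: " + state["docs"][0]
    return "end"

def escalate(state: dict) -> str:
    state["result"] = "No supporting documents found; routing to human review."
    return "end"

NODES = {"retrieve": retrieve, "grade": grade, "rewrite": rewrite,
         "answer": answer, "escalate": escalate}

def run(query: str, max_attempts: int = 2) -> dict:
    """Walk the graph from 'retrieve' until a node returns 'end', recording the path."""
    state = {"query": query, "docs": [], "attempts": 0,
             "max_attempts": max_attempts, "path": [], "result": None}
    node = "retrieve"
    while node != "end":
        state["path"].append(node)
        node = NODES[node](state)
    return state

found = run("How do I set up remote access?")
missing = run("Where is the cafeteria?")
print(found["path"], found["result"])
print(missing["path"], missing["result"])
```

Because every transition is recorded in `path`, the run is easy to inspect afterward, and the attempt limit guarantees the loop terminates. A graph framework adds persistence, streaming, and interruption on top of this basic shape.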

Standout Capabilities

- Graph-based workflow orchestration

- Useful for stateful agents and multi-step RAG

- Supports branching, loops, and controlled execution

- Good fit for human-in-the-loop workflows

- Works with broader LangChain ecosystem patterns

- Helps structure complex AI agents

- Useful for durable and inspectable RAG flows

AI-Specific Depth

- Model support: Multi-model workflows depending on application and integrations

- RAG / knowledge integration: Supports agentic retrieval workflows through graph nodes and retriever integrations

- Evaluation: Varies / N/A, can be paired with tracing and evaluation tools

- Guardrails: Varies / N/A, policy steps can be implemented as workflow nodes

- Observability: State transitions, traces, node-level execution, latency, and model call metadata depending on setup

Pros

- Strong for complex agentic RAG applications

- Makes workflow control more explicit than simple chains

- Useful for multi-step, human-in-the-loop processes

Cons

- More advanced than simple RAG frameworks

- Requires careful design to avoid overly complex or unpredictable agent behavior

- Production observability and guardrails need deliberate setup

Security & Compliance

Security depends on application deployment, data access controls, model providers, logs, workflow state storage, and connected systems. Certifications are not publicly stated.

Deployment & Platforms

- Developer framework

- Cloud, self-hosted, or hybrid depending on app deployment

- Works across common Python development environments

- Backend workflow and agent deployment patterns

- Web/mobile support depends on application implementation

Integrations & Ecosystem

LangGraph is useful when RAG requires explicit workflow steps, state management, review loops, and controlled agent behavior.

- LangChain ecosystem

- LLM providers

- Retrievers and vector stores

- Tool-calling workflows

- Human review systems

- Tracing and observability tools

- Backend AI applications

Pricing Model

Open-source framework usage is available. Managed or platform costs may vary. Infrastructure, model, observability, and vector database costs depend on deployment.

Best-Fit Scenarios

- Agentic RAG workflows

- Stateful AI assistants

- Human-in-the-loop retrieval and decision systems

7 — RAGFlow

One-line verdict: Best for teams needing document-heavy RAG with parsing, knowledge base, and workflow features.

Short description:

RAGFlow is focused on building RAG applications over documents and knowledge bases. It is useful for teams that want document ingestion, parsing, retrieval, and answer generation workflows with less need to assemble every component manually.

Standout Capabilities

- Document-focused RAG workflows

- Knowledge base creation patterns

- Document parsing and ingestion support

- Retrieval and answer generation workflows

- Useful for business document assistants

- Can reduce setup effort for document-heavy RAG

- Good fit for teams prioritizing document understanding

AI-Specific Depth

- Model support: Hosted, BYO, and open-source support may vary by setup

- RAG / knowledge integration: Strong focus on document ingestion, knowledge bases, retrieval, and answer generation

- Evaluation: Varies / N/A

- Guardrails: Varies / N/A

- Observability: Retrieval behavior, document processing, query history, and system metrics depend on deployment

Pros

- Good fit for document-centric RAG use cases

- Reduces need to build every RAG component from scratch

- Useful for knowledge base and enterprise document workflows

Cons

- Flexibility may be lower than developer-first frameworks

- Enterprise controls should be verified directly

- Advanced custom retrieval may require engineering work

Security & Compliance

Security depends on deployment, access control, document storage, encryption, logging, retention, and connected model providers. Certifications are not publicly stated.

Deployment & Platforms

- Web-based and application-style workflows: Varies / N/A

- Cloud, self-hosted, or hybrid: Varies / N/A

- Developer and knowledge-base deployment patterns

- Platform support depends on setup

- Works with document and knowledge workflows

Integrations & Ecosystem

RAGFlow fits teams that want a more packaged path for document-based RAG applications.

- Document repositories

- Knowledge bases

- Vector databases

- LLM providers

- Embedding models

- Document parsing workflows

- Application APIs depending on setup

Pricing Model

Open-source and commercial options may vary depending on deployment and support. Exact pricing is not publicly stated.

Best-Fit Scenarios

- Document-heavy RAG assistants

- Internal knowledge base chat

- Teams wanting faster document RAG setup

8 — txtai

One-line verdict: Best for developers needing lightweight semantic search, embeddings, and local RAG-style workflows.

Short description:

txtai is a framework for semantic search, embeddings, similarity workflows, and AI-powered search applications. It is useful for developers building lightweight retrieval systems, local search, and RAG-style pipelines.
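
The core mechanic behind embedding-based similarity search can be shown with a toy index. The sketch below is not txtai's API: the `TinyIndex` class is hypothetical, and it uses bag-of-words counts as stand-in "embeddings" so it runs without any model. Real semantic search uses learned embeddings, which also match paraphrases that share no words.

```python
import math
import re
from collections import Counter

def embed(text: str) -> Counter:
    """Stand-in embedding: a bag-of-words count vector (real systems use learned models)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[token] * b[token] for token in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

class TinyIndex:
    """Hypothetical in-memory index: stores vectors and ranks them by similarity."""

    def __init__(self) -> None:
        self.items: list[tuple[str, Counter]] = []

    def add(self, doc_id: str, text: str) -> None:
        self.items.append((doc_id, embed(text)))

    def search(self, query: str, limit: int = 3) -> list[tuple[str, float]]:
        query_vector = embed(query)
        scored = [(doc_id, cosine(query_vector, vector)) for doc_id, vector in self.items]
        scored.sort(key=lambda pair: pair[1], reverse=True)
        return scored[:limit]

index = TinyIndex()
index.add("a", "reset your password from the account settings page")
index.add("b", "invoices are emailed at the start of each month")
index.add("c", "contact support to change the email on your account")

results = index.search("reset password on my account", limit=2)
for doc_id, similarity in results:
    print(doc_id, round(similarity, 3))
```

Swapping `embed` for a sentence-embedding model and the list scan for an approximate nearest-neighbor index gives the general shape of a lightweight semantic search service.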

Standout Capabilities

- Semantic search and embedding workflows

- Lightweight framework for search applications

- Supports local and custom retrieval patterns

- Useful for similarity search and indexing

- Can support RAG-style applications

- Developer-friendly Python workflows

- Good fit for smaller or self-contained projects

AI-Specific Depth

- Model support: BYO and open-source model workflows depending on setup

- RAG / knowledge integration: Supports embeddings, indexing, similarity search, and retrieval-style workflows

- Evaluation: Varies / N/A

- Guardrails: N/A, requires custom controls

- Observability: Varies / N/A, usually requires custom logging and monitoring

Pros

- Lightweight and developer-friendly

- Useful for local or self-hosted semantic search

- Good for custom retrieval workflows without heavy orchestration

Cons

- Less comprehensive than full RAG orchestration frameworks

- Enterprise governance and access control need custom design

- Advanced observability and evaluation require companion tooling

Security & Compliance

Security depends on how the application is deployed, where embeddings and documents are stored, and how access controls are implemented. Certifications are not publicly stated.

Deployment & Platforms

- Python-based framework

- Local, cloud, self-hosted, or hybrid depending on app design

- Works across common developer environments

- Backend application deployment patterns

- Web/mobile support depends on application implementation

Integrations & Ecosystem

txtai is useful when teams want a lightweight semantic search foundation that can be embedded into custom AI applications.

- Embedding models

- Local indexes

- Python applications

- Document processing workflows

- Similarity search

- Custom APIs

- RAG-style pipelines

Pricing Model

Open-source usage is available. Costs depend on compute, storage, model providers, hosting, and engineering effort.

Best-Fit Scenarios

- Lightweight semantic search applications

- Local or self-hosted retrieval workflows

- Developers building custom RAG prototypes

9 — Flowise

One-line verdict: Best for teams wanting visual low-code RAG and LLM application workflow building.

Short description:

Flowise is a visual builder for LLM workflows, including RAG-style applications. It is useful for teams that want to prototype and assemble AI apps using a low-code interface rather than writing every pipeline component from scratch.

Standout Capabilities

- Visual workflow builder for LLM apps

- Supports RAG-style flows with document and vector components

- Useful for rapid prototyping

- Can connect models, retrievers, tools, and memory components

- Helpful for non-specialist teams exploring RAG

- Supports developer extension patterns depending on setup

- Good fit for internal tools and proof-of-concepts

AI-Specific Depth

- Model support: Hosted, BYO, and open-source workflows may vary by integrations

- RAG / knowledge integration: Supports visual RAG pipelines, vector stores, document loaders, and retrieval components depending on setup

- Evaluation: Varies / N/A, usually requires companion evaluation workflows

- Guardrails: Varies / N/A

- Observability: Workflow logs and run details depending on deployment; advanced observability may require integrations

Pros

- Easier entry point for RAG prototyping

- Visual design helps teams understand pipeline flow

- Useful for fast internal demos and workflow assembly

Cons

- May be less suitable for highly custom production systems

- Governance and access control need careful deployment design

- Advanced testing and observability may require companion tools

Security & Compliance

Security depends on deployment, authentication, data connectors, model providers, vector stores, logging, and hosting configuration. Certifications are not publicly stated.

Deployment & Platforms

- Web-based visual interface

- Cloud, self-hosted, or hybrid: Varies / N/A

- Works with backend workflow deployments

- Developer extension support depends on setup

- Web/mobile app support depends on built application

Integrations & Ecosystem

Flowise fits teams that want to visually connect RAG components and build proof-of-concept or internal LLM workflows quickly.

- LLM providers

- Vector databases

- Document loaders

- Embedding models

- Memory components

- API workflows

- Custom tools depending on setup

Pricing Model

Open-source and hosted or commercial options may vary. Exact pricing is not publicly stated.

Best-Fit Scenarios

- Low-code RAG prototypes

- Internal knowledge assistants

- Teams learning and validating RAG workflows quickly

10 — Dify

One-line verdict: Best for teams building RAG-powered AI applications with app-building and knowledge base workflows.

Short description:

Dify is an LLM application development platform that supports building AI apps, workflows, and knowledge-based assistants. It is useful for teams that want a more application-oriented way to create RAG systems with knowledge bases and deployment workflows.

Standout Capabilities

- AI application development workflows

- Knowledge base and RAG-style features

- Supports workflow and app-building patterns

- Useful for teams building assistants and internal tools

- Can reduce engineering effort compared with fully custom frameworks

- Supports model integration patterns depending on setup

- Good fit for practical business-facing AI applications

AI-Specific Depth

- Model support: Hosted, BYO, and open-source model workflows may vary by deployment and integration

- RAG / knowledge integration: Knowledge base and retrieval workflows for app development

- Evaluation: Varies / N/A

- Guardrails: Varies / N/A, policy and moderation controls depend on setup

- Observability: App logs, workflow records, usage, latency, and cost signals may vary by setup

Pros

- Good fit for business-facing AI apps

- Combines RAG with app and workflow building

- Faster path to deployable assistants than fully custom code

Cons

- Less flexible than fully code-first frameworks for complex retrieval logic

- Enterprise security and governance should be verified directly

- Advanced evaluation may require companion tools

Security & Compliance

Security depends on deployment, identity controls, data connectors, model providers, storage, encryption, logging, retention, and administration setup. Certifications are not publicly stated.

Deployment & Platforms

- Web-based application development platform

- Cloud, self-hosted, or hybrid: Varies / N/A

- API and app deployment workflows

- Works with knowledge base and workflow patterns

- Platform support depends on deployment

Integrations & Ecosystem

Dify fits teams that want to build and deploy RAG-powered assistants and AI workflows with less custom engineering.

- LLM providers

- Knowledge bases

- Workflow tools

- APIs

- Embedding models

- Vector storage depending on setup

- Business application integrations

Pricing Model

Open-source, hosted, and commercial options may vary by deployment, number of users, and usage. Exact pricing is not publicly stated.

Best-Fit Scenarios

- Business knowledge assistants

- RAG apps with faster deployment needs

- Teams wanting application workflows plus knowledge retrieval

Comparison Table

| Tool Name | Best For | Deployment (Cloud / Self-hosted / Hybrid) | Model Flexibility (Hosted / BYO / Multi-model / Open-source) | Strength | Watch-Out | Public Rating |
|---|---|---|---|---|---|---|
| LangChain | Flexible RAG and agents | Cloud, self-hosted, hybrid | Multi-model, BYO, open-source | Broad ecosystem | Can become complex | N/A |
| LlamaIndex | Data-centric RAG | Cloud, self-hosted, hybrid | Multi-model, BYO, open-source | Indexing and retrieval | Needs careful access design | N/A |
| Haystack | Modular RAG pipelines | Cloud, self-hosted, hybrid | Hosted, BYO, open-source | Search and QA pipelines | Smaller ecosystem than broad frameworks | N/A |
| Semantic Kernel | Enterprise AI orchestration | Cloud, self-hosted, hybrid | Multi-model, BYO | Plugins and app integration | Less retrieval-specific | N/A |
| DSPy | RAG optimization | Cloud, self-hosted, hybrid | Hosted and BYO | Evaluation-driven optimization | Higher learning curve | N/A |
| LangGraph | Agentic RAG workflows | Cloud, self-hosted, hybrid | Multi-model | Stateful graph workflows | More advanced setup | N/A |
| RAGFlow | Document-heavy RAG | Cloud, self-hosted, hybrid (varies) | Hosted, BYO (varies) | Document knowledge bases | Verify enterprise controls | N/A |
| txtai | Lightweight semantic search | Local, cloud, self-hosted | BYO, open-source | Simple embedding search | Limited enterprise workflow | N/A |
| Flowise | Low-code RAG prototyping | Cloud, self-hosted, hybrid (varies) | Multi-model (varies) | Visual workflow building | Production hardening needed | N/A |
| Dify | RAG app development | Cloud, self-hosted, hybrid (varies) | Multi-model (varies) | App and knowledge workflows | Less code-level control | N/A |

Scoring & Evaluation (Transparent Rubric)

| Tool | Core | Reliability/Eval | Guardrails | Integrations | Ease | Perf/Cost | Security/Admin | Support | Weighted Total |
|---|---|---|---|---|---|---|---|---|---|
| LangChain | 9 | 7 | 5 | 10 | 7 | 8 | 6 | 9 | 7.85 |
| LlamaIndex | 9 | 8 | 5 | 9 | 8 | 8 | 6 | 8 | 7.95 |
| Haystack | 8 | 7 | 5 | 8 | 7 | 8 | 6 | 8 | 7.25 |
| Semantic Kernel | 8 | 6 | 5 | 8 | 7 | 8 | 7 | 8 | 7.20 |
| DSPy | 8 | 9 | 4 | 7 | 5 | 8 | 5 | 7 | 6.95 |
| LangGraph | 8 | 7 | 5 | 8 | 6 | 8 | 6 | 8 | 7.15 |
| RAGFlow | 8 | 6 | 4 | 7 | 8 | 7 | 6 | 7 | 6.85 |
| txtai | 7 | 5 | 3 | 6 | 8 | 8 | 4 | 7 | 6.15 |
| Flowise | 7 | 5 | 4 | 8 | 9 | 7 | 5 | 7 | 6.75 |
| Dify | 8 | 6 | 5 | 8 | 8 | 7 | 6 | 7 | 7.05 |
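A weighted total like the one in the last column is typically a sum of each category score multiplied by a category weight. This section does not restate the weights, so the sketch below uses assumed weights purely for illustration. `ASSUMED_WEIGHTS`, the key names, and `weighted_total` are hypothetical and should be replaced with whatever weighting your team agrees on.

```python
# Hypothetical category weights for illustration only; they must sum to 1.0.
ASSUMED_WEIGHTS = {
    "core": 0.25,
    "reliability_eval": 0.15,
    "guardrails": 0.10,
    "integrations": 0.15,
    "ease": 0.10,
    "perf_cost": 0.10,
    "security_admin": 0.10,
    "support": 0.05,
}


def weighted_total(scores: dict, weights: dict = ASSUMED_WEIGHTS) -> float:
    """Return the weighted sum of 0-10 category scores, rounded to 2 decimals."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1.0")
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing category scores: {sorted(missing)}")
    return round(sum(scores[name] * w for name, w in weights.items()), 2)


# Category scores copied from the LangChain row of the table above.
langchain_scores = {
    "core": 9,
    "reliability_eval": 7,
    "guardrails": 5,
    "integrations": 10,
    "ease": 7,
    "perf_cost": 8,
    "security_admin": 6,
    "support": 9,
}
print(weighted_total(langchain_scores))
```

Changing the weights to reflect your own priorities (for example, raising `security_admin` for regulated teams) will reorder the ranking, which is the main reason to keep the rubric explicit.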

Top 3 for Enterprise

- LlamaIndex

- LangChain

- Semantic Kernel

Top 3 for SMB

- Dify

- Flowise

- Haystack

Top 3 for Developers

- LangChain

- LlamaIndex

- DSPy

Which Retrieval-Augmented Generation (RAG) Framework Is Right for You?

Solo / Freelancer

Solo users should choose a framework that is easy to start with but still flexible enough to grow. If you are building a small knowledge assistant, avoid overengineering the stack.

Recommended options:

- Flowise for visual RAG prototypes

- txtai for lightweight semantic search

- LangChain for flexible coding workflows

- LlamaIndex for data-centric document retrieval

- Dify if you want app-building plus knowledge base workflows

For early experiments, focus on document loading, chunking, retrieval quality, and answer evaluation before scaling the architecture.

SMB

Small and midsize businesses usually need a balance of speed, reliability, and manageable complexity. The best framework should help the team launch useful RAG applications without requiring a large AI platform team.

Recommended options:

- Dify for business-facing AI apps and knowledge workflows

- Flowise for low-code internal prototypes

- Haystack for modular search and QA pipelines

- LlamaIndex for document and knowledge-heavy RAG

- LangChain if the team has strong developers and needs customization

SMBs should prioritize frameworks that reduce setup time, support common data sources, and allow future production hardening.

Mid-Market

Mid-market teams often need RAG across support, internal knowledge, sales enablement, engineering documentation, and operations. They need flexible retrieval, observability, evaluation, and governance.

Recommended options:

- LlamaIndex for indexing and retrieval depth

- LangChain for complex orchestration and integrations

- Haystack for modular RAG pipelines

- LangGraph for agentic RAG workflows

- Semantic Kernel for enterprise app integration

Mid-market buyers should evaluate how well each framework supports metadata filtering, access control, evaluation, and integration with existing infrastructure.

Enterprise

Enterprises need RAG frameworks that can support security, access control, governance, observability, model flexibility, and integration with business systems.

Recommended options:

- LlamaIndex for data-centric enterprise retrieval

- LangChain for broad integration and custom orchestration

- Semantic Kernel for enterprise application integration

- Haystack for production-style search and QA workflows

- LangGraph for controlled agentic RAG

Enterprise teams should verify tenant isolation, document permissions, data retention, logging controls, model provider policies, and auditability before production rollout.

Regulated industries (finance, healthcare, public sector)

Regulated teams need RAG systems that can explain where answers came from, control access to sensitive documents, log retrieval evidence, and support human review.

Important priorities:

- Permission-aware retrieval

- Source citation and traceability

- Data retention and residency controls

- Prompt injection defense

- Evaluation for hallucination and faithfulness

- Human review for high-risk answers

- Audit logs for retrieved content and generated outputs

- Versioning for prompts, indexes, embeddings, and models

- Secure vector database design

- Incident handling and rollback processes

Strong-fit options may include LlamaIndex, LangChain, Haystack, Semantic Kernel, and LangGraph, depending on internal engineering maturity and governance needs.
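Permission-aware retrieval, the first priority above, can be sketched independently of any framework. The example below assumes each chunk carries an `allowed_groups` metadata field and that the caller knows the user's groups. `Chunk`, `permission_filter`, and the sample data are hypothetical names for illustration, not part of any listed framework.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Chunk:
    doc_id: str
    text: str
    score: float  # relevance score from a retriever (higher is better)
    allowed_groups: frozenset = field(default_factory=frozenset)


def permission_filter(candidates, user_groups, top_k=3):
    """Drop chunks the user may not see, then keep the top_k by score.

    A chunk with no allowed_groups is treated as not visible (fail closed).
    """
    groups = set(user_groups)
    visible = [c for c in candidates if c.allowed_groups & groups]
    return sorted(visible, key=lambda c: c.score, reverse=True)[:top_k]


candidates = [
    Chunk("hr-salary-bands", "Salary bands by level", 0.91, frozenset({"hr"})),
    Chunk("eng-oncall", "On-call rotation guide", 0.74, frozenset({"engineering"})),
    Chunk("handbook", "General employee handbook", 0.62, frozenset({"hr", "engineering"})),
    Chunk("untagged", "Document with no access metadata", 0.99),
]

for chunk in permission_filter(candidates, user_groups={"engineering"}):
    print(chunk.doc_id, chunk.score)
```

In a production system the same filter is usually pushed down into the vector database query as a metadata filter, so restricted content is never fetched in the first place. Filtering after retrieval, as here, is the simpler pattern to reason about and test.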

Budget vs premium

Budget-conscious teams can start with open-source frameworks and pay mainly for model usage, vector databases, hosting, and observability.

Budget-friendly direction:

- txtai for lightweight semantic search

- LangChain for open-source RAG development

- LlamaIndex for open-source data-centric RAG

- Haystack for open-source modular pipelines

- Flowise for quick visual prototypes

Premium direction:

- Managed deployments, enterprise support, hosted vector databases, observability platforms, evaluation tools, and governance layers

- Dify or Flowise where faster app development matters

- Enterprise support around framework, infrastructure, and security architecture

The right choice depends on whether the main constraint is engineering time, retrieval quality, compliance, scale, or cost.

Build vs buy (when to DIY)

DIY can work when:

- You have strong engineering skills

- You need deep control over retrieval and ranking

- Your data sources are complex

- You need custom access control

- You want to avoid vendor lock-in

- Your RAG system is a strategic product capability

Buy or use a packaged platform when:

- You need fast deployment

- You have limited AI engineering resources

- Your use case is a standard knowledge assistant

- You prefer visual workflows

- You need business users to manage knowledge bases

- Your first goal is validation, not deep customization

A practical approach is to prototype with a low-code or packaged framework, then move to a code-first stack if retrieval complexity, compliance, or scale increases.

Implementation Playbook (30 / 60 / 90 Days)

30 Days: Pilot and success metrics

Start with one focused knowledge domain. Do not ingest every company document at once.

Key tasks:

- Select one clear RAG use case

- Identify trusted source documents

- Define users and expected questions

- Choose one framework and one vector database

- Build an ingestion, chunking, embedding, and retrieval pipeline

- Create a small evaluation dataset

- Define success metrics such as answer relevance, faithfulness, latency, and user satisfaction

- Add source citation or evidence display

- Review data privacy and access control needs

- Document prompt, model, index, and embedding versions

AI-specific tasks:

- Build an initial evaluation harness

- Add hallucination and faithfulness checks

- Run prompt injection tests against retrieved content

- Track token usage, latency, and cost

- Define incident handling for wrong, unsafe, or missing answers
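An initial evaluation harness can be very small. The sketch below measures a retrieval hit rate: the fraction of test questions for which the expected source document appears in the top `k` results. `EVAL_SET`, `hit_rate`, and `toy_retrieve` are hypothetical; `toy_retrieve` is a keyword stand-in that you would replace with a call into your framework's retriever.

```python
from typing import Callable

# A tiny hand-written evaluation set: each case names the document
# that should be retrieved for the question.
EVAL_SET = [
    {"question": "How many vacation days do new hires get?", "expected_doc": "hr-policy"},
    {"question": "How do I reset my VPN token?", "expected_doc": "it-vpn"},
]


def hit_rate(retrieve: Callable[[str, int], list], eval_set: list, k: int = 3) -> float:
    """Fraction of cases whose expected document is in the top-k results."""
    if not eval_set:
        return 0.0
    hits = sum(
        1 for case in eval_set
        if case["expected_doc"] in retrieve(case["question"], k)
    )
    return hits / len(eval_set)


def toy_retrieve(question: str, k: int) -> list:
    """Stand-in retriever: ranks documents with a naive keyword rule."""
    if "vacation" in question.lower():
        ranked = ["hr-policy", "it-vpn"]
    else:
        ranked = ["it-vpn", "hr-policy"]
    return ranked[:k]


print(f"hit rate @1: {hit_rate(toy_retrieve, EVAL_SET, k=1):.2f}")
```

Starting with retrieval-only metrics keeps the first harness cheap to run. Answer-level checks such as faithfulness can be layered on once the retrieved context is trustworthy.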

60 Days: Harden security, evaluation, and rollout

After the pilot works, improve quality, access control, monitoring, and user experience.

Key tasks:

- Improve chunking and metadata strategy

- Add hybrid search or reranking where needed

- Add permission-aware retrieval

- Add observability for queries, retrieved chunks, prompts, and outputs

- Add regression tests for common questions

- Add feedback capture from users

- Improve document update and reindexing workflows

- Review sensitive data handling

- Add fallback behavior when retrieval is weak

- Expand to more data sources carefully
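Fallback behavior for weak retrieval can be expressed as a simple gate in front of the LLM call. The sketch below assumes the retriever returns `(text, score)` pairs with scores in the 0 to 1 range. The function name, the `min_score` threshold of 0.35, and the fallback message are illustrative assumptions that would need tuning against your own evaluation data.

```python
def answer_or_fallback(scored_chunks, min_score=0.35, min_chunks=1):
    """Decide whether there is enough evidence to answer.

    scored_chunks: list of (text, score) pairs from a retriever.
    Returns a dict describing the next action instead of calling an LLM,
    so the gate can be tested in isolation.
    """
    strong = [(text, score) for text, score in scored_chunks if score >= min_score]
    if len(strong) < min_chunks:
        return {
            "action": "fallback",
            "message": "I could not find a reliable source for that. "
                       "Could you rephrase or add more detail?",
            "context": [],
        }
    return {"action": "answer", "message": "", "context": [text for text, _ in strong]}


weak = [("Unrelated release notes", 0.12), ("Old meeting minutes", 0.20)]
good = [("Refunds are accepted within 30 days", 0.81), ("Old meeting minutes", 0.20)]

print(answer_or_fallback(weak)["action"])
print(answer_or_fallback(good)["context"])
```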

AI-specific tasks:

- Add RAG evaluation for retrieval precision and answer faithfulness

- Add red-team tests for prompt injection and data leakage

- Track prompt, embedding, retriever, and model versions

- Monitor latency and cost by query type

- Add human review for high-risk responses

- Convert bad answers into regression tests

90 Days: Optimize cost, latency, governance, and scale

Once the RAG workflow is reliable, turn it into a production-grade system with governance and operating discipline.

Key tasks:

- Standardize ingestion and indexing workflows

- Add automated evaluation before index or prompt changes

- Build dashboards for quality, latency, cost, and usage

- Add governance for source documents and knowledge ownership

- Add versioning for prompts, indexes, and embeddings

- Add incident playbooks for bad answers or retrieval failures

- Optimize token usage and retrieved context size

- Add query routing for different domains

- Review vendor lock-in and export options

- Scale across teams or business units

AI-specific tasks:

- Add advanced prompt injection and jailbreak testing

- Monitor hallucination and citation quality trends

- Add evaluator versioning and human review workflows

- Connect RAG failures to incident management

- Improve fallback, refusal, and escalation strategies

- Scale evaluation, guardrails, retrieval, and observability across applications
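Versioning prompts, indexes, embeddings, and models is easier when every answer can be tied to a single identifier for the whole configuration. One possible approach, sketched here with hypothetical field names and values, is to hash a canonical JSON manifest and log the resulting fingerprint alongside each request.

```python
import hashlib
import json


def manifest_fingerprint(manifest: dict) -> str:
    """Return a short, stable fingerprint for a RAG configuration manifest.

    Keys are sorted before hashing, so key order does not change the result.
    """
    canonical = json.dumps(manifest, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]


# All names and versions below are placeholders for illustration.
manifest = {
    "prompt_version": "support-answer-v3",
    "embedding_model": "example-embedding-model",
    "index_build": "2024-week-18",
    "llm": "example-chat-model",
    "chunking": {"size": 400, "overlap": 50},
}

print(manifest_fingerprint(manifest))
```

Logging the fingerprint with each query makes it possible to group evaluation results and incidents by configuration, and to confirm which version was live when a bad answer was produced.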

Common Mistakes & How to Avoid Them

- Ingesting everything without curation: Bad or outdated documents create bad answers. Start with trusted sources.

- Ignoring access control: RAG systems must not retrieve documents a user is not allowed to see.

- Using only vector search: Hybrid search, filters, and reranking often improve retrieval quality.

- Poor chunking strategy: Chunks that are too small lose context, while chunks that are too large increase cost and confusion.

- No evaluation dataset: Without test questions and expected answers, teams cannot measure improvement.

- No source traceability: Users should know where the answer came from, especially in business or regulated workflows.

- No prompt injection defense: Malicious or untrusted documents can try to manipulate the model.

- Ignoring document freshness: RAG systems need reindexing and update workflows when knowledge changes.

- Overloading context: Sending too many chunks increases token cost and may reduce answer quality.

- No observability: Teams need to see retrieved chunks, scores, prompts, outputs, latency, and cost.

- No fallback behavior: If retrieval is weak, the system should say it does not know or ask for clarification.

- Treating RAG as a one-time project: RAG quality requires continuous evaluation, feedback, and tuning.

- Ignoring metadata: Metadata filters can improve relevance, permissions, and routing.

- No ownership for knowledge sources: Every source should have a business owner responsible for quality and updates.
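The "overloading context" mistake can be guarded against with an explicit budget. The sketch below greedily packs already-ranked chunks into a token budget. `pack_context` is a hypothetical helper, and the default token counter is a whitespace word count, which is only a rough stand-in for a real tokenizer.

```python
def pack_context(ranked_chunks, max_tokens, count_tokens=lambda text: len(text.split())):
    """Select chunks in rank order without exceeding max_tokens.

    Chunks that do not fit are skipped, so a smaller lower-ranked chunk
    can still be included after a large one is rejected.
    """
    selected, used = [], 0
    for chunk in ranked_chunks:
        cost = count_tokens(chunk)
        if used + cost > max_tokens:
            continue
        selected.append(chunk)
        used += cost
    return selected


ranked = [
    "Refunds are accepted within thirty days",          # 6 words
    "Shipping takes three to five business days in most regions",  # 10 words
    "Contact support by email",                          # 4 words
]

print(pack_context(ranked, max_tokens=10))
```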

FAQs

1. What is a RAG framework?

A RAG framework helps developers build applications that retrieve relevant information from external sources and pass it to an LLM to generate grounded answers.

2. Why is RAG useful?

RAG helps AI systems answer using current, private, or domain-specific information. It reduces reliance on the model’s built-in knowledge and improves answer traceability.

3. Does RAG eliminate hallucinations?

No. RAG can reduce hallucinations, but it does not eliminate them. Teams still need evaluation, source citation, guardrails, and monitoring.

4. What data sources can RAG use?

RAG can use documents, PDFs, websites, databases, tickets, wikis, transcripts, manuals, policies, code repositories, and structured records depending on the framework and connectors.

5. What is a vector database in RAG?

A vector database stores embeddings so the system can find semantically similar content. It is often used to retrieve relevant chunks for a user query.
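To make the idea concrete, here is a minimal in-memory sketch of what a vector database does at query time: compare a query embedding with stored embeddings and return the closest items. The three-dimensional vectors and document names are made up for illustration. Real embeddings have hundreds or thousands of dimensions, and real databases use approximate indexes rather than a full scan.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def top_k(query_vec, index, k=2):
    """Return the k (doc_id, similarity) pairs most similar to the query."""
    scored = [(doc_id, cosine(query_vec, vec)) for doc_id, vec in index.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)[:k]


# Toy embeddings, invented for this example.
INDEX = {
    "refund-policy": [0.9, 0.1, 0.0],
    "vpn-setup": [0.0, 0.2, 0.9],
    "returns-faq": [0.8, 0.3, 0.1],
}

query = [1.0, 0.0, 0.0]  # pretend embedding of "how do refunds work?"
for doc_id, similarity in top_k(query, INDEX):
    print(doc_id, round(similarity, 3))
```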

6. What is chunking in RAG?

Chunking splits documents into smaller sections for indexing and retrieval. Good chunking improves context quality, retrieval accuracy, and token efficiency.
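As an illustration, the sketch below implements one common strategy, fixed-size word windows with overlap, so that sentences near a boundary appear in two neighboring chunks. `chunk_words` and its default sizes are assumptions for this example. Frameworks typically offer more structure-aware splitters (by heading, sentence, or token count).

```python
def chunk_words(text, chunk_size=50, overlap=10):
    """Split text into chunks of chunk_size words, sharing overlap words
    between consecutive chunks."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be >= 0 and smaller than chunk_size")

    words = text.split()
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # the last window already reached the end of the text
    return chunks


sample = " ".join(f"w{i}" for i in range(10))
for chunk in chunk_words(sample, chunk_size=4, overlap=1):
    print(chunk)
```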

7. What is hybrid search?

Hybrid search combines semantic search with keyword search, metadata filters, or other ranking methods. It often improves retrieval accuracy compared with vector search alone.
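One widely used way to merge a keyword ranking with a vector ranking is reciprocal rank fusion (RRF), which scores each document by summing `1 / (k + rank)` across the input rankings. The sketch below is a plain-Python illustration of that technique, not the method of any specific framework. The document IDs are invented, and `k=60` is a commonly used default rather than a tuned value.

```python
def reciprocal_rank_fusion(rankings, k=60):
    """Fuse several ranked lists of document IDs into one ranking.

    rankings: list of lists, each ordered from most to least relevant.
    Ties are broken alphabetically so the output is deterministic.
    """
    scores = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


keyword_ranking = ["doc-a", "doc-b", "doc-c"]  # e.g. from keyword search
vector_ranking = ["doc-b", "doc-d", "doc-a"]   # e.g. from embedding search

print(reciprocal_rank_fusion([keyword_ranking, vector_ranking]))
```

Because RRF uses only rank positions, it avoids having to normalize keyword scores and similarity scores onto a shared scale, which is a frequent source of bugs in hand-rolled hybrid search.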

8. Can RAG frameworks support BYO models?

Yes. Many RAG frameworks can work with hosted models, BYO models, open-source models, and custom inference endpoints depending on integrations.

9. Can RAG systems be self-hosted?

Yes. Many frameworks can be deployed in self-hosted or hybrid environments. Teams must also choose self-hosted models, vector databases, and storage if full control is required.

10. How do RAG frameworks help with privacy?

They can support private data retrieval, but privacy depends on deployment, data access controls, logging, retention, model provider policies, and vector database security.

11. What is RAG evaluation?

RAG evaluation measures retrieval quality, answer relevance, faithfulness, citation accuracy, hallucination risk, latency, and cost.
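The retrieval-quality part of that list can be measured with standard ranking metrics. The sketch below shows precision@k, recall@k, and reciprocal rank for a single query, assuming you have a hand-labeled set of relevant chunk IDs. The IDs are invented for illustration, and answer-level measures such as faithfulness need separate tooling.

```python
def precision_at_k(retrieved, relevant, k):
    """Share of the top-k retrieved items that are relevant."""
    if k <= 0:
        raise ValueError("k must be positive")
    return sum(1 for item in retrieved[:k] if item in relevant) / k


def recall_at_k(retrieved, relevant, k):
    """Share of all relevant items that appear in the top-k results."""
    if not relevant:
        return 0.0
    return len(set(retrieved[:k]) & set(relevant)) / len(relevant)


def reciprocal_rank(retrieved, relevant):
    """1 / rank of the first relevant item, or 0.0 if none was retrieved."""
    for rank, item in enumerate(retrieved, start=1):
        if item in relevant:
            return 1.0 / rank
    return 0.0


retrieved = ["c2", "c7", "c1", "c9"]  # ranked retriever output
relevant = {"c1", "c4"}               # hand-labeled ground truth

print(precision_at_k(retrieved, relevant, k=3))
print(recall_at_k(retrieved, relevant, k=3))
print(reciprocal_rank(retrieved, relevant))
```

Averaging these values over an evaluation dataset gives trend lines that can be tracked across index, chunking, and embedding changes.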

12. What are alternatives to RAG frameworks?

Alternatives include simple prompt stuffing, keyword search, managed chatbot platforms, fine-tuning, search engines, knowledge graphs, and custom retrieval pipelines.

13. Should I use RAG or fine-tuning?

Use RAG when answers need current or private knowledge. Use fine-tuning when the model needs to learn style, format, task behavior, or domain patterns. Many teams use both.

14. Can I switch RAG frameworks later?

Yes, but switching is easier if documents, embeddings, prompts, indexes, metadata, and evaluation datasets are portable.

15. What is the biggest mistake in RAG projects?

The biggest mistake is focusing only on the LLM and ignoring retrieval quality. Many RAG failures come from poor data, bad chunking, weak retrieval, missing evaluation, or lack of access control.

Conclusion

Retrieval-Augmented Generation (RAG) frameworks are essential for building AI systems that answer from trusted, private, and changing knowledge sources. The best framework depends on your use case: LangChain is strong for flexible orchestration, LlamaIndex is strong for data-centric indexing and retrieval, Haystack is strong for modular search pipelines, Semantic Kernel fits enterprise app integration, DSPy supports systematic optimization, LangGraph supports agentic RAG, RAGFlow focuses on document-heavy workflows, txtai supports lightweight semantic search, Flowise enables visual prototyping, and Dify supports app-oriented knowledge assistants. There is no single universal winner because teams differ in data complexity, security needs, engineering skill, deployment strategy, and governance requirements. Start by shortlisting three tools and running a pilot on one real knowledge domain. Verify security, evaluation quality, retrieval accuracy, latency, and cost, then scale RAG carefully across more data sources and AI applications.