Introduction

Batch feature store platforms are centralized systems that store and serve precomputed features for machine learning workflows. Unlike online feature stores, which prioritize low-latency real-time access, batch feature stores focus on processing large-scale data efficiently and making it available for model training or bulk inference. They matter most to teams that work with very large datasets, maintain complex feature engineering logic, and need reproducible, consistent features across ML pipelines.
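
To make "precomputed features" concrete, here is a minimal sketch of what a single batch run might compute. It assumes a hypothetical event log of `(user_id, event_date, purchase_amount)` tuples; the names `EVENTS` and `compute_batch_features` are illustrative and do not come from any platform covered here.

```python
from collections import defaultdict
from datetime import date

# Hypothetical raw events: (user_id, event_date, purchase_amount).
EVENTS = [
    ("u1", date(2024, 1, 1), 20.0),
    ("u1", date(2024, 1, 2), 30.0),
    ("u2", date(2024, 1, 2), 5.0),
    ("u1", date(2024, 1, 3), 10.0),  # dated after the cutoff used below
]


def compute_batch_features(events, as_of):
    """Aggregate per-user features from all events dated on or before `as_of`."""
    totals = defaultdict(float)
    counts = defaultdict(int)
    for user_id, event_date, amount in events:
        if event_date <= as_of:
            totals[user_id] += amount
            counts[user_id] += 1
    return {
        user_id: {
            "purchase_count": counts[user_id],
            "purchase_total": totals[user_id],
            "purchase_mean": totals[user_id] / counts[user_id],
        }
        for user_id in counts
    }


# One "nightly" run: everything known up to and including 2 January.
features = compute_batch_features(EVENTS, as_of=date(2024, 1, 2))
```

A real platform would run the same kind of aggregation on a schedule over a distributed engine and persist the result; the explicit `as_of` cutoff is what makes a run repeatable.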

Real-world use cases include:

- Large-scale recommendation systems that update features nightly.

- Fraud detection models analyzing batches of financial transactions.

- Predictive maintenance with sensor data collected over time.

- Marketing analytics with aggregated user behavior data.

- Risk modeling in insurance or finance.

- Healthcare research requiring longitudinal data processing.

Best for: Data engineers, ML engineers, and enterprises managing high-volume batch pipelines.

Not ideal for: Organizations that require millisecond-level feature retrieval or that run primarily real-time inference pipelines.

Evaluation Criteria for Buyers

- Data volume handling: Ability to process and store large-scale feature datasets.

- Feature consistency: Reproducibility between training and inference datasets.

- Pipeline integration: Support for orchestration and batch processing frameworks.

- Latency requirements: Batch processing speed and scheduling flexibility.

- Security & governance: Encryption, access controls, audit logs.

- Observability: Logging, lineage tracking, and monitoring of feature computations.

- Scalability: Horizontal scaling for growing datasets.

- Cost efficiency: Optimized storage and compute resource usage.
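
The feature consistency criterion above usually comes down to point-in-time correctness: a training row should only see feature values that were already known at the label's timestamp. The following is a minimal sketch of that lookup using only the standard library. The data layout (`FEATURE_HISTORY` as sorted `(timestamp, value)` pairs) and the function names are assumptions for illustration, not any vendor's API.

```python
from bisect import bisect_right

# Hypothetical feature snapshots per entity, sorted by timestamp.
FEATURE_HISTORY = {
    "u1": [(1, 0.10), (5, 0.40), (9, 0.90)],
    "u2": [(3, 0.25)],
}


def feature_as_of(history, entity_id, ts):
    """Return the latest feature value recorded at or before `ts`, else None."""
    rows = history.get(entity_id, [])
    timestamps = [t for t, _ in rows]
    idx = bisect_right(timestamps, ts)  # count of snapshots with timestamp <= ts
    return rows[idx - 1][1] if idx > 0 else None


def build_training_rows(labels, history):
    """Attach to each (entity_id, label_ts, label) the feature known at label_ts."""
    return [
        (entity_id, ts, feature_as_of(history, entity_id, ts), label)
        for entity_id, ts, label in labels
    ]


LABELS = [("u1", 4, 0), ("u1", 9, 1), ("u2", 2, 0)]
training_rows = build_training_rows(LABELS, FEATURE_HISTORY)
```

Note that the `u2` row gets `None` rather than the value recorded at timestamp 3: using that later value would leak future information into training. When evaluating a platform, it is worth checking how its historical retrieval handles this case.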

What’s Changed in Batch Feature Store Platforms

- Integration with multimodal datasets including text, images, and embeddings.

- Automated feature validation and drift detection.

- Cloud-native scalability with multi-region batch processing.

- Enhanced observability with detailed metrics for latency and resource usage.

- Improved orchestration for ML pipelines including Airflow and Kubeflow connectors.

- Governance and access control with enterprise-grade RBAC.

- Optimized storage and cost management through smart caching and incremental updates.

- Support for BYO transformation logic and custom batch pipelines.

- Expanded integration with knowledge bases and RAG pipelines for feature augmentation.

- Improved compatibility with data lakes, warehouses, and streaming ingestion systems.
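
As one illustration of the drift detection mentioned in the list above, the sketch below computes a Population Stability Index (PSI) between a baseline batch and a new batch of a numeric feature. This is a common generic technique, not necessarily what any listed platform implements; the bin count, the `eps` floor, and the function name `psi` are assumptions.

```python
import math


def psi(expected, actual, bins=4, eps=1e-6):
    """Population Stability Index of `actual` against the `expected` baseline.

    Bins are equal-width over the baseline's range; values outside that range
    are clamped into the first or last bin.
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid zero width for constant baselines

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # Floor each proportion at eps so the logarithm is always defined.
        return [max(c / len(values), eps) for c in counts]

    p, q = proportions(expected), proportions(actual)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))


baseline = [i / 100 for i in range(100)]
shifted = [v + 0.5 for v in baseline]
```

Calling `psi(baseline, baseline)` returns zero, while `psi(baseline, shifted)` returns a large positive value. A frequently cited rule of thumb treats values above roughly 0.25 as significant drift, but thresholds should be tuned per feature.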

Quick Buyer Checklist

- Data privacy and retention policies.

- Batch processing and scheduling flexibility.

- Feature consistency and reproducibility.

- Pipeline orchestration integration.

- Observability and logging.

- Security and governance controls.

- Storage and compute cost management.

- Vendor lock-in assessment.

- Support for BYO transformations and open-source integration.

- Scalability and multi-region support.

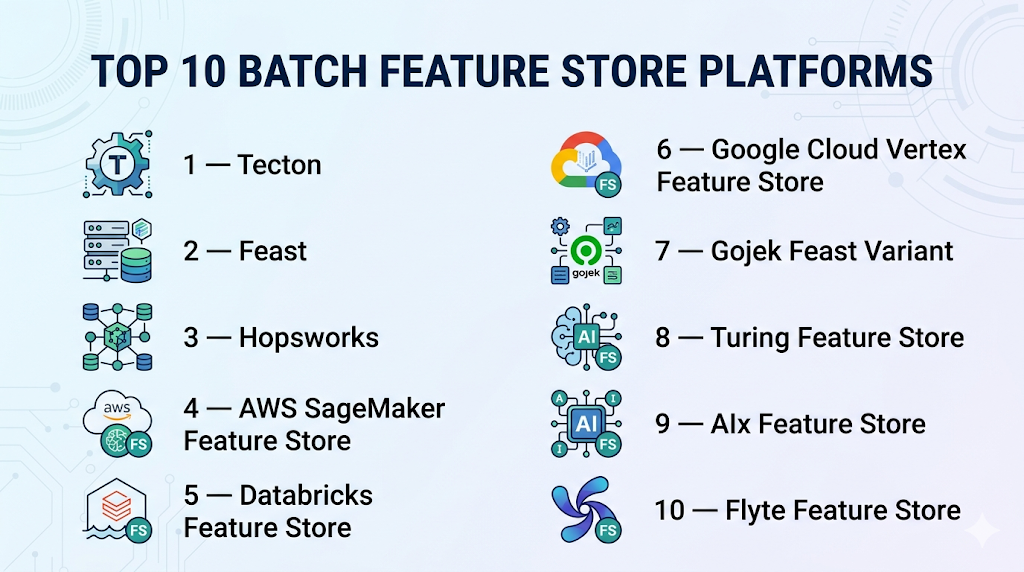

Top 10 Batch Feature Store Platforms

1 — Tecton

One-line verdict: Best for enterprises needing production-grade batch feature storage with integrated ML pipeline support.

Short description: Tecton centralizes batch feature computation, storage, and serving, ensuring feature consistency and observability for large teams.

Standout Capabilities

- Scheduled batch feature pipelines.

- Automatic versioning and lineage tracking.

- Integration with orchestration tools like Airflow.

- Multi-cloud and hybrid deployment options.

- Observability dashboards and metrics.

AI-Specific Depth

- Model support: BYO / Open-source

- RAG / knowledge integration: N/A

- Evaluation: Regression and drift detection

- Guardrails: Validation rules

- Observability: Latency, usage metrics

Pros

- Enterprise-ready for large pipelines.

- Strong governance and lineage.

- Scalable and reliable.

Cons

- High cost for small deployments.

- Requires engineering expertise.

- Complexity in hybrid environments.

Security & Compliance

- SSO/SAML, RBAC, audit logs, encryption; certifications: Not publicly stated

Deployment & Platforms

- Cloud, Hybrid

- Web interface, Python SDK

Integrations & Ecosystem

- Python SDK, REST APIs

- Airflow connectors

- Cloud storage connectors

- Monitoring dashboards

Pricing Model

Tiered, usage-based

Best-Fit Scenarios

- Large-scale model training pipelines.

- Nightly feature updates for recommendation systems.

- Enterprise ML feature governance.

2 — Feast

One-line verdict: Ideal for developers seeking open-source batch feature storage with flexibility and community support.

Short description: Feast provides batch feature computation and serving with strong integration to ML pipelines, suitable for engineering teams.

Standout Capabilities

- Open-source and flexible.

- Batch feature pipelines with scheduling.

- Integrates with Spark, Kafka, and data lakes.

- Feature versioning and validation.

- Community-driven extensibility.

AI-Specific Depth

- Model support: Open-source / BYO

- RAG / knowledge integration: N/A

- Evaluation: Offline validation

- Guardrails: Feature validation

- Observability: Usage metrics

Pros

- Free and extensible.

- Developer-friendly.

- Supports large datasets.

Cons

- Enterprise features require setup.

- Monitoring requires additional tools.

- Scaling demands engineering effort.

Security & Compliance

- Varies / N/A

Deployment & Platforms

- Cloud, Self-hosted

- Linux, Web interface

Integrations & Ecosystem

- Python SDK, REST APIs

- Spark and Kafka connectors

- Monitoring tools

Pricing Model

Open-source core; enterprise tier optional

Best-Fit Scenarios

- Developer-led ML pipelines.

- Startups with batch data processing.

- Open-source ML experimentation.

3 — Hopsworks

One-line verdict: Suited for MLOps teams needing integrated batch pipelines, governance, and feature orchestration.

Short description: Hopsworks offers batch and offline feature storage with full versioning, lineage, and orchestration support.

Standout Capabilities

- Batch feature pipelines with scheduling.

- Feature versioning and lineage.

- Pipeline integration with Airflow and Kubeflow.

- Multi-cloud support.

- Monitoring dashboards.

AI-Specific Depth

- Model support: BYO / Open-source

- RAG / knowledge integration: N/A

- Evaluation: Feature validation and drift detection

- Guardrails: Validation rules

- Observability: Latency, usage metrics

Pros

- Strong governance and MLOps integration.

- Supports large-scale pipelines.

- Multi-cloud ready.

Cons

- Setup complexity for small teams.

- Requires technical expertise.

- Cloud cost scales with volume.

Security & Compliance

- SSO, RBAC, encryption; certifications: Not publicly stated

Deployment & Platforms

- Cloud, Self-hosted, Hybrid

- Web interface, Python SDK

Integrations & Ecosystem

- ML pipelines integration (Kubeflow, Airflow)

- Kafka, Spark connectors

- Monitoring dashboards

Pricing Model

Tiered subscription

Best-Fit Scenarios

- Enterprises with batch pipelines.

- Predictive analytics for finance or retail.

- Large-scale feature governance.

4 — AWS SageMaker Feature Store

One-line verdict: Best for AWS enterprises leveraging batch features with native cloud service integration.

Short description: SageMaker Feature Store provides batch feature computation with full integration into the AWS ecosystem for ML workflows.

Standout Capabilities

- AWS-native batch pipelines.

- Feature versioning and lineage.

- Integration with SageMaker ML workflows.

- CloudWatch monitoring and logging.

- Multi-region support.

AI-Specific Depth

- Model support: Hosted / BYO

- RAG / knowledge integration: N/A

- Evaluation: Offline validation, drift detection

- Guardrails: Feature validation

- Observability: Metrics, logging

Pros

- Tight AWS integration.

- Fully managed batch pipelines.

- Enterprise-grade scalability.

Cons

- AWS lock-in.

- Limited customization outside AWS.

- Cost scales with usage.

Security & Compliance

- SSO/SAML, RBAC, encryption; certifications: Not publicly stated

Deployment & Platforms

- Cloud

- Web interface, Python SDK

Integrations & Ecosystem

- AWS Lambda, Step Functions

- S3, Redshift connectors

- Monitoring dashboards

Pricing Model

Usage-based subscription

Best-Fit Scenarios

- AWS-based enterprises.

- High-volume batch ML pipelines.

- Predictive analytics workflows.

5 — Databricks Feature Store

One-line verdict: Ideal for teams using Databricks for unified batch pipelines and collaborative feature engineering.

Short description: Databricks Feature Store centralizes batch feature computation, management, and serving with integrated ML workflow support.

Standout Capabilities

- Batch feature pipelines with scheduling.

- Collaborative workspace for feature engineering.

- Versioning and lineage tracking.

- Integration with MLflow for experiment tracking.

- Observability dashboards for metrics.

AI-Specific Depth

- Model support: BYO / Open-source

- RAG / knowledge integration: N/A

- Evaluation: Drift detection, offline validation

- Guardrails: Feature validation rules

- Observability: Latency, usage metrics

Pros

- Unified Databricks ecosystem.

- Collaborative feature engineering.

- Enterprise governance ready.

Cons

- Limited outside Databricks.

- Setup complexity for small teams.

- Learning curve for new users.

Security & Compliance

- RBAC, encryption, audit logs; certifications: Not publicly stated

Deployment & Platforms

- Cloud

- Web interface, Python SDK

Integrations & Ecosystem

- MLflow integration

- Spark and Delta Lake connectors

- Python SDK, REST API

- Monitoring dashboards

Pricing Model

Tiered subscription based on usage

Best-Fit Scenarios

- Collaborative ML pipelines.

- Batch feature computation for enterprise ML.

- Recommendation and prediction workflows.

6 — Google Cloud Vertex Feature Store

One-line verdict: Suited for Google Cloud users needing large-scale batch features with enterprise-grade support.

Short description: Vertex Feature Store provides centralized batch feature storage integrated tightly with Vertex AI pipelines.

Standout Capabilities

- Batch computation pipelines with scheduling.

- Multi-region support for high availability.

- Feature versioning and lineage.

- Integration with Vertex AI ML workflows.

- Observability dashboards and metrics.

AI-Specific Depth

- Model support: Hosted / BYO

- RAG / knowledge integration: N/A

- Evaluation: Drift detection, offline validation

- Guardrails: Feature validation policies

- Observability: Latency, usage metrics

Pros

- Cloud-native scalability.

- Strong integration with Vertex AI.

- Enterprise-grade observability.

Cons

- Cloud lock-in to Google Cloud.

- Limited on-premises support.

- Cost scales with batch data volume.

Security & Compliance

- SSO/SAML, RBAC, encryption; certifications: Not publicly stated

Deployment & Platforms

- Cloud

- Web interface, Python SDK

Integrations & Ecosystem

- Vertex AI pipelines

- BigQuery and GCS connectors

- Python SDK, REST APIs

- Monitoring dashboards

Pricing Model

Usage-based subscription

Best-Fit Scenarios

- Google Cloud-first enterprises.

- Large-scale batch ML pipelines.

- Predictive analytics for finance, retail, or IoT.

7 — Gojek Feast Variant

One-line verdict: Optimal for developers needing open-source feature pipelines with both streaming and batch support.

Short description: This Feast variant supports batch feature storage with strong community-driven flexibility and integration.

Standout Capabilities

- Batch and streaming feature pipelines.

- Open-source friendly and extensible.

- Real-time updates support.

- Integration with Kafka and Spark.

- Observability dashboards.

AI-Specific Depth

- Model support: Open-source / BYO

- RAG / knowledge integration: N/A

- Evaluation: Offline validation

- Guardrails: Feature validation

- Observability: Latency and usage metrics

Pros

- Developer-friendly and flexible.

- Strong batch and streaming support.

- Open-source extensibility.

Cons

- Limited enterprise support.

- Requires technical setup.

- Documentation may vary.

Security & Compliance

- Varies / N/A

Deployment & Platforms

- Cloud, Self-hosted

- Linux, Web interface

Integrations & Ecosystem

- Python SDK, REST APIs

- Kafka, Spark connectors

- Monitoring dashboards

Pricing Model

Open-source core; enterprise tier optional

Best-Fit Scenarios

- Developer-led ML teams.

- High-volume batch pipelines.

- Startups or prototyping projects.

8 — Turing Feature Store

One-line verdict: Best for teams needing batch computation with low-latency retrieval for large-scale ML workflows.

Short description: Turing Feature Store provides centralized batch storage with API support for ML engineers and data scientists.

Standout Capabilities

- Scheduled batch pipelines.

- Multi-cloud support.

- Versioning and lineage tracking.

- API-driven batch retrieval.

- Observability dashboards for feature usage.

AI-Specific Depth

- Model support: BYO / Open-source

- RAG / knowledge integration: N/A

- Evaluation: Offline validation tests

- Guardrails: Feature validation policies

- Observability: Latency and usage metrics

Pros

- Efficient batch processing.

- API-based access.

- Multi-cloud ready.

Cons

- Limited enterprise governance.

- Setup requires technical expertise.

- Scaling may need custom infrastructure.

Security & Compliance

- Encryption and access controls; certifications: Not publicly stated

Deployment & Platforms

- Cloud

- Web interface, Python SDK

Integrations & Ecosystem

- REST APIs, Python SDK

- Monitoring dashboards

- Spark and pipeline connectors

Pricing Model

Usage-based

Best-Fit Scenarios

- High-volume batch pipelines.

- Multi-cloud ML deployment.

- Data-intensive training pipelines.

9 — AIx Feature Store

One-line verdict: Suited for small to mid-size teams needing lightweight batch feature storage with developer-friendly APIs.

Short description: AIx Feature Store enables batch feature computation and management with quick deployment and easy integration.

Standout Capabilities

- Lightweight batch processing.

- Versioned feature storage.

- Developer-friendly API access.

- Observability dashboards.

- Integration with ML frameworks.

AI-Specific Depth

- Model support: BYO / Open-source

- RAG / knowledge integration: N/A

- Evaluation: Offline validation

- Guardrails: Basic feature validation

- Observability: Usage and latency metrics

Pros

- Quick to deploy.

- Low-latency batch retrieval.

- Developer-friendly.

Cons

- Limited enterprise features.

- Scaling may require extra setup.

- Governance controls are minimal.

Security & Compliance

- Varies / N/A

Deployment & Platforms

- Cloud

- Web interface, Python SDK

Integrations & Ecosystem

- Python SDK, REST API

- Monitoring dashboards

- Simple pipeline connectors

Pricing Model

Usage-based

Best-Fit Scenarios

- Startups and small ML teams.

- Batch ML pipelines.

- Rapid feature prototyping.

10 — Flyte Feature Store

One-line verdict: Optimal for pipeline-native ML teams needing batch feature orchestration and consistency.

Short description: Flyte Feature Store integrates with Flyte workflows for batch feature storage, versioning, and serving in production pipelines.

Standout Capabilities

- Pipeline-native batch orchestration.

- Versioning and lineage tracking.

- Multi-cloud support.

- Observability and metrics dashboards.

- API-based feature retrieval.

AI-Specific Depth

- Model support: BYO / Open-source

- RAG / knowledge integration: N/A

- Evaluation: Feature correctness tests

- Guardrails: Validation rules

- Observability: Latency, usage metrics

Pros

- Tight integration with pipelines.

- Versioned and consistent features.

- Supports large-scale ML pipelines.

Cons

- Requires Flyte expertise.

- Setup complexity for small teams.

- Enterprise support varies.

Security & Compliance

- Encryption, RBAC; certifications: Not publicly stated

Deployment & Platforms

- Cloud, Self-hosted

- Web interface, Python SDK

Integrations & Ecosystem

- Flyte workflow integration

- Python SDK, REST APIs

- Monitoring dashboards

- Multi-cloud connectors

Pricing Model

Usage-based, open-source core

Best-Fit Scenarios

- ML teams using Flyte orchestration.

- Large-scale batch feature pipelines.

- Production-grade ML workflows.

Comparison Table

| Tool Name | Best For | Deployment | Model Flexibility | Strength | Watch-Out | Public Rating |
|---|---|---|---|---|---|---|
| Tecton | Enterprise ML pipelines | Cloud / Hybrid | BYO / Open-source | Full-featured batch | Cost | N/A |
| Feast | Developer-friendly | Cloud / Self-hosted | Open-source / BYO | Flexibility | Monitoring setup | N/A |
| Hopsworks | MLOps integration | Cloud / Self-hosted / Hybrid | BYO / Open-source | Governance & pipelines | Setup complexity | N/A |
| AWS SageMaker FS | AWS-centric enterprise | Cloud | Hosted / BYO | AWS integration | Vendor lock-in | N/A |
| Databricks FS | Unified ML workflows | Cloud | BYO / Open-source | Collaborative engineering | Complexity | N/A |
| Google Vertex FS | Google Cloud optimized | Cloud | Hosted / BYO | Scale & latency | Cloud lock-in | N/A |
| Gojek Feast Variant | Developer pipelines | Cloud / Self-hosted | Open-source / BYO | Streaming support | Limited docs | N/A |
| Turing FS | Low-latency batch retrieval | Cloud | BYO / Open-source | Fast retrieval | Limited governance | N/A |
| AIx FS | Lightweight / startups | Cloud | BYO / Open-source | Quick deployment | Scaling | N/A |
| Flyte FS | Pipeline-native ML | Cloud / Self-hosted | BYO / Open-source | Orchestration | Learning curve | N/A |

Scoring & Evaluation (Transparent Rubric)

Scoring is comparative, reflecting each tool’s strength across critical criteria. Weighted totals help identify top tools for different use cases.

| Tool | Core | Reliability/Eval | Guardrails | Integrations | Ease | Perf/Cost | Security/Admin | Support | Weighted Total |
|---|---|---|---|---|---|---|---|---|---|
| Tecton | 9 | 9 | 8 | 9 | 8 | 8 | 9 | 8 | 8.5 |
| Feast | 8 | 7 | 7 | 8 | 8 | 7 | 7 | 7 | 7.5 |
| Hopsworks | 9 | 8 | 8 | 9 | 7 | 8 | 8 | 7 | 8.0 |
| AWS SageMaker FS | 8 | 8 | 8 | 9 | 8 | 8 | 8 | 8 | 8.0 |
| Databricks FS | 9 | 8 | 8 | 9 | 7 | 8 | 8 | 7 | 8.0 |
| Google Vertex FS | 8 | 8 | 7 | 8 | 8 | 8 | 7 | 7 | 7.75 |
| Gojek Feast Variant | 7 | 7 | 6 | 7 | 7 | 7 | 6 | 6 | 6.75 |
| Turing FS | 7 | 7 | 6 | 7 | 7 | 7 | 6 | 6 | 6.75 |
| AIx FS | 6 | 6 | 6 | 6 | 7 | 6 | 6 | 6 | 6.5 |
| Flyte FS | 7 | 7 | 7 | 7 | 7 | 7 | 6 | 6 | 6.95 |
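
If you want to re-weight the table for your own priorities, a weighted total is just a weighted average of the per-criterion scores. The sketch below shows the arithmetic. The weights in `HYPOTHETICAL_WEIGHTS` are an assumption made up for illustration (the weights behind the table's totals are not stated here), so results will not necessarily match the Weighted Total column.

```python
CRITERIA = (
    "core", "reliability_eval", "guardrails", "integrations",
    "ease", "perf_cost", "security_admin", "support",
)

# Assumed weights for illustration only; adjust to your own priorities.
HYPOTHETICAL_WEIGHTS = {
    "core": 0.25, "reliability_eval": 0.15, "guardrails": 0.10,
    "integrations": 0.15, "ease": 0.10, "perf_cost": 0.10,
    "security_admin": 0.10, "support": 0.05,
}


def weighted_total(scores, weights):
    """Weighted average of per-criterion scores; weights are normalized."""
    total_weight = sum(weights[c] for c in CRITERIA)
    return sum(scores[c] * weights[c] for c in CRITERIA) / total_weight


# Per-criterion scores copied from the Tecton row of the table above.
tecton = dict(zip(CRITERIA, (9, 9, 8, 9, 8, 8, 9, 8)))

equal_weights = {c: 1.0 for c in CRITERIA}
tecton_equal = weighted_total(tecton, equal_weights)
tecton_weighted = weighted_total(tecton, HYPOTHETICAL_WEIGHTS)
```

Running the same function over every row with your own weights gives a ranking tailored to, say, a security-first or cost-first evaluation.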

Top 3 for Enterprise: Tecton, Hopsworks, Databricks FS

Top 3 for SMB: Feast, AIx FS, Turing FS

Top 3 for Developers: Feast, Flyte FS, Gojek Feast Variant

Which Batch Feature Store Platform Is Right for You?

Solo / Freelancer

Use lightweight tools like Feast or AIx FS for small-scale batch feature pipelines and experimentation.

SMB

Feast, Gojek Feast Variant, or Turing FS offer manageable batch pipelines with low maintenance and cost.

Mid-Market

Hopsworks and Databricks FS provide structured pipelines, governance, and collaboration across teams.

Enterprise

Tecton, AWS SageMaker FS, and Google Vertex FS are suited for large-scale batch processing with enterprise-grade monitoring and compliance.

Regulated Industries

Focus on tools with audit logs, encryption, RBAC, and strict compliance workflows.

Budget vs Premium

Open-source tools reduce cost but require more engineering; premium platforms provide SLA-backed reliability and observability.

Build vs Buy

Build only if you need highly customized pipelines and control; buy to accelerate deployment, reduce overhead, and leverage ready integrations.

Implementation Playbook (30 / 60 / 90 Days)

30 Days: Pilot

- Identify key batch features for ML models.

- Build a small pilot batch pipeline.

- Measure latency, correctness, and reproducibility.

- Configure basic access controls and logging.
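
One way to measure reproducibility during the pilot is to fingerprint the output of each batch run and compare fingerprints across reruns on the same inputs. This is a minimal sketch assuming feature rows are small JSON-serializable dicts; the `fingerprint` helper and the sample rows are hypothetical.

```python
import hashlib
import json


def fingerprint(rows):
    """Hash a feature table so that row order and dict key order do not matter."""
    canonical = sorted(json.dumps(row, sort_keys=True) for row in rows)
    digest = hashlib.sha256()
    for line in canonical:
        digest.update(line.encode("utf-8"))
        digest.update(b"\n")
    return digest.hexdigest()


# Two runs with the same content in a different order, and one that differs.
run_a = [{"user_id": "u1", "purchase_count": 2}, {"user_id": "u2", "purchase_count": 1}]
run_b = [{"purchase_count": 1, "user_id": "u2"}, {"user_id": "u1", "purchase_count": 2}]
run_c = [{"user_id": "u1", "purchase_count": 3}, {"user_id": "u2", "purchase_count": 1}]

reproducible = fingerprint(run_a) == fingerprint(run_b)
changed = fingerprint(run_a) != fingerprint(run_c)
```

Exact hashing is strict: floating-point features computed on different engines can differ in the last digits, so in practice you may want to round values before hashing or compare with a tolerance instead.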

60 Days: Harden & Rollout

- Implement security, audit logs, and RBAC.

- Establish validation rules and drift monitoring.

- Integrate with orchestration pipelines (Airflow, Kubeflow).

- Expand pilot to additional teams or datasets.

90 Days: Optimize & Scale

- Optimize storage, caching, and compute costs.

- Add multi-region support and automated batch scheduling.

- Standardize governance, versioning, and incident handling.

- Scale pipelines enterprise-wide with observability dashboards.

Common Mistakes & How to Avoid Them

- Ignoring feature drift between training and batch inference.

- Deploying batch pipelines without proper evaluation.

- Unmanaged access controls or RBAC policies.

- Lack of monitoring and observability.

- Letting storage or compute costs grow unmonitored.

- Over-automation without human validation.

- Vendor lock-in without abstraction.

- Missing lineage and audit trails.

- Poor orchestration integration.

- Inadequate governance for regulated data.

- Ignoring scalability challenges.

- Skipping validation of new features in production.
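
Several of these mistakes (skipped validation, over-automation, missing evaluation) can be reduced by gating each batch on explicit validation rules before it is published. The following is a minimal sketch of such a gate; the rule format, the feature names, and the `validate_row` helper are assumptions for illustration rather than any platform's built-in mechanism.

```python
import math

# Hypothetical rules: feature name -> (minimum, maximum, nullable).
RULES = {
    "purchase_count": (0, 10_000, False),
    "purchase_mean": (0.0, 1_000_000.0, True),
}


def validate_row(row, rules=RULES):
    """Return a list of human-readable violations for one feature row."""
    problems = []
    for name, (lo, hi, nullable) in rules.items():
        value = row.get(name)
        if value is None:
            if not nullable:
                problems.append(f"{name}: missing value")
            continue
        if isinstance(value, float) and math.isnan(value):
            problems.append(f"{name}: NaN")
            continue
        if not lo <= value <= hi:
            problems.append(f"{name}: {value} outside [{lo}, {hi}]")
    return problems


ok = validate_row({"purchase_count": 3, "purchase_mean": 12.5})
bad = validate_row({"purchase_count": -1, "purchase_mean": None})
```

A pipeline could refuse to publish a batch when the share of rows with violations exceeds an agreed threshold, and route the failures to a human for review.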

FAQs

1. What is a batch feature store?

A centralized system that stores and serves precomputed ML features for training and bulk inference workflows.

2. How is it different from online feature stores?

Batch stores handle large-scale offline datasets efficiently, while online stores provide low-latency, real-time retrieval.

3. Can I bring my own transformations?

Yes, most platforms support BYO transformations for batch pipelines.

4. Are batch feature stores suitable for small teams?

Yes. Lightweight options like Feast or AIx FS are a good fit for small teams; enterprise platforms may be overkill.

5. How do these platforms handle feature consistency?

Through versioning, validation rules, and reproducible batch pipelines.

6. Do they integrate with orchestration tools?

Yes, most support Airflow, Kubeflow, or Spark pipelines.

7. Can they scale to large datasets?

Yes, batch stores are optimized for high-volume datasets and multi-cloud deployments.

8. How is security managed?

Platforms offer encryption, RBAC, audit logs, and compliance features; check vendor specifics.

9. What is observability in batch feature stores?

Monitoring pipeline execution, feature metrics, batch latency, and usage for quality assurance.

10. How do they reduce cost?

Optimized storage, incremental updates, caching, and efficient compute scheduling.

11. Can they integrate with ML frameworks?

Yes, most platforms support Python SDKs, Spark, MLflow, and REST APIs.

12. Are these tools suitable for regulated industries?

Yes, but verify audit, encryption, and governance features before deployment.

Conclusion

Batch Feature Store Platforms are essential for enterprises handling large-scale ML pipelines, ensuring reproducible features, governance, and optimized batch processing. The best platform depends on team size, pipeline complexity, regulatory requirements, and infrastructure. Start by shortlisting platforms, run a pilot to validate batch pipelines and observability, then scale with monitoring, security, and cost optimization.