Introduction

Online feature store platforms are specialized tools that help organizations centralize, manage, and serve machine learning features in real time. Offline feature stores hold historical feature values for training and batch scoring. Online feature stores instead provide low-latency, production-grade feature retrieval, so that models can make fast, accurate predictions at request time. These platforms are crucial for operationalizing AI at scale, keeping features consistent between training and inference, and enabling better monitoring and governance.
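At its core, the online side of a feature store is a low-latency key-value lookup from an entity key (such as a user or card ID) to that entity's latest feature values. The toy class below is a hypothetical, in-memory sketch of that idea and not any vendor's API. Real platforms back it with a distributed key-value database and add freshness, replication, and access control on top.

```python
from typing import Any, Dict, List


class InMemoryOnlineStore:
    """Hypothetical toy online store: latest feature values keyed by entity ID."""

    def __init__(self) -> None:
        self._rows: Dict[str, Dict[str, Any]] = {}

    def write(self, entity_id: str, features: Dict[str, Any]) -> None:
        # Merge new values into the entity's row; later writes overwrite earlier ones.
        self._rows.setdefault(entity_id, {}).update(features)

    def get(self, entity_id: str, names: List[str]) -> Dict[str, Any]:
        # One dictionary lookup per request; missing features come back as None.
        row = self._rows.get(entity_id, {})
        return {name: row.get(name) for name in names}


store = InMemoryOnlineStore()
store.write("user_42", {"txn_count_1h": 3, "avg_amount_7d": 52.1})
print(store.get("user_42", ["txn_count_1h", "country"]))
# {'txn_count_1h': 3, 'country': None}
```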

Real-world use cases include:

- Real-time recommendation systems in e-commerce or streaming platforms.

- Fraud detection in banking and payments.

- Predictive maintenance in manufacturing and IoT devices.

- Customer segmentation and personalization in marketing campaigns.

- Dynamic pricing and inventory optimization in logistics.

- Clinical decision support using up-to-date patient data in healthcare.

Best for: ML engineers, data scientists, and enterprises running production AI workflows that require highly available, low-latency feature serving.

Not ideal for: Small teams with minimal real-time ML needs or those using only batch processing pipelines.

Evaluation Criteria for Buyers

- Feature consistency: Alignment between the feature values used in training and those served at online inference.

- Latency & performance: Millisecond-level retrieval for real-time models.

- Integration: APIs, SDKs, and pipeline connectors.

- Model support: Compatibility with multiple model frameworks.

- Security & governance: RBAC, encryption, audit logs, and compliance controls.

- Scalability: Horizontal scaling for high request volumes.

- Observability: Monitoring, logging, and alerts.

- Cost management: Efficient storage and retrieval, optimized for real-time usage.
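Feature consistency can be checked directly during evaluation. Compute a feature offline for a sample of entities, read the same entities from the online store, and measure how often the values disagree. A minimal sketch, assuming both sides are available as plain dictionaries keyed by entity ID. The function name and tolerances are illustrative choices.

```python
import math
from typing import Dict


def skew_rate(offline: Dict[str, float], online: Dict[str, float],
              rel_tol: float = 1e-6, abs_tol: float = 1e-9) -> float:
    """Fraction of shared entity keys whose offline and online values disagree."""
    shared = offline.keys() & online.keys()
    if not shared:
        return 0.0
    mismatches = sum(
        1 for key in shared
        if not math.isclose(offline[key], online[key], rel_tol=rel_tol, abs_tol=abs_tol)
    )
    return mismatches / len(shared)


offline = {"u1": 10.0, "u2": 5.0, "u3": 7.5}
online = {"u1": 10.0, "u2": 5.5, "u3": 7.5, "u4": 1.0}  # u4 has no offline value
print(skew_rate(offline, online))  # 0.3333333333333333
```

Keys present on only one side are ignored here. In practice, missing keys are worth counting separately, since they usually point to a materialization gap rather than a computation mismatch.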

What’s Changed in Online Feature Store Platforms

- Real-time feature computation pipelines integrated with streaming data.

- Support for multimodal features including images, text embeddings, and audio.

- Enhanced evaluation for feature drift, consistency, and correctness.

- Enterprise-grade security including data residency and retention controls.

- Low-latency retrieval with caching and multi-region routing.

- Observability dashboards for feature usage, latency, and cost metrics.

- Governance workflows for approval, versioning, and feature documentation.

- Support for BYO feature transformations and open-source integrations.

- Integration with RAG and knowledge store pipelines for feature augmentation.

- Multi-cloud support and hybrid deployment options.
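Drift evaluation usually compares a feature's serving-time distribution with its training-time distribution. One widely used statistic is the population stability index (PSI), sketched below over pre-binned proportions. The platforms listed here may use other statistics, and the 0.2 alert threshold in the example is a common rule of thumb, not a standard.

```python
import math
from typing import Sequence


def psi(expected: Sequence[float], actual: Sequence[float], eps: float = 1e-6) -> float:
    """Population stability index between two binned distributions.

    Both inputs are per-bin proportions over the same bins (each summing to ~1).
    """
    if len(expected) != len(actual):
        raise ValueError("distributions must use the same bins")
    total = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, eps), max(a, eps)  # guard against log(0) for empty bins
        total += (a - e) * math.log(a / e)
    return total


training = [0.25, 0.25, 0.25, 0.25]
serving = [0.10, 0.20, 0.30, 0.40]
score = psi(training, serving)
print(round(score, 3), "drift alert" if score > 0.2 else "stable")
```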

Quick Buyer Checklist

- Data privacy and retention policies.

- Model and framework compatibility.

- Latency requirements and retrieval performance.

- Feature consistency across training and inference.

- Security and access control compliance.

- Integration with existing pipelines and orchestration tools.

- Observability and monitoring capabilities.

- Cost control for online feature computation and storage.

- Vendor lock-in risk assessment.

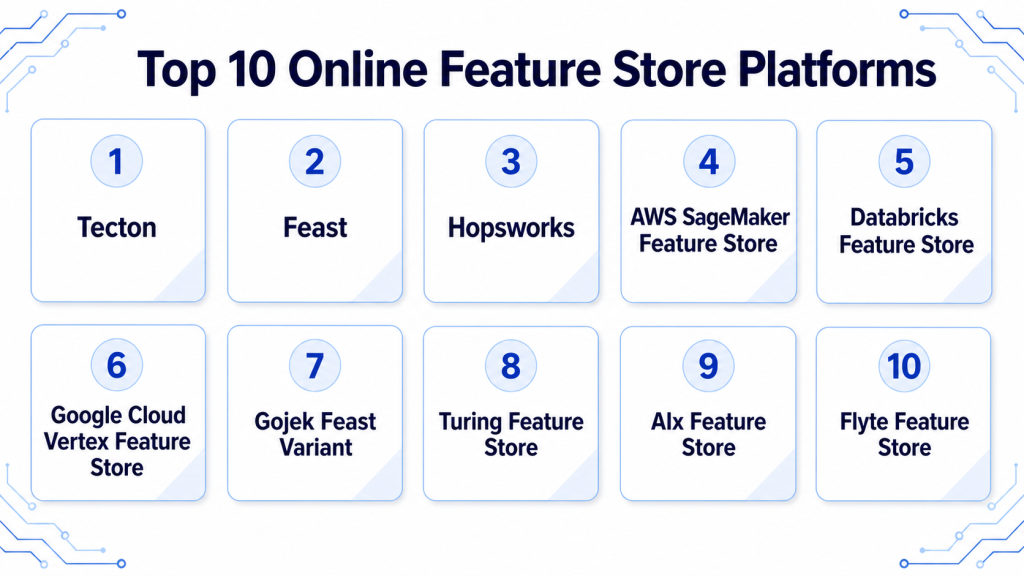

Top 10 Online Feature Store Platforms

1 — Tecton

One-line verdict: Best for enterprises needing a full-featured, production-grade online feature store with low-latency access.

Short description: Tecton offers centralized management for features, supporting both batch and real-time pipelines, ideal for large teams.

Standout Capabilities

- Real-time and batch feature pipelines.

- End-to-end feature lineage tracking.

- Multi-cloud deployment options.

- Integration with ML frameworks and orchestration tools.

- Observability dashboards and monitoring.
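A common way to keep batch and real-time pipelines consistent is to define each transformation once and evaluate the same code in both paths. The plain-Python sketch below illustrates only that pattern. It is not Tecton's SDK, and the feature, function, and field names are hypothetical.

```python
from datetime import datetime, timedelta
from typing import Dict, List


def amount_last_24h(txns: List[Dict], as_of: datetime) -> float:
    """Single (hypothetical) feature definition shared by batch and online paths."""
    window_start = as_of - timedelta(hours=24)
    return float(sum(t["amount"] for t in txns if window_start < t["ts"] <= as_of))


def build_training_values(txns: List[Dict], label_times: List[datetime]) -> List[float]:
    # Batch path: evaluate the feature as of each historical label timestamp.
    return [amount_last_24h(txns, t) for t in label_times]


def serve_online(txns: List[Dict], now: datetime) -> float:
    # Online path: the same function, evaluated at request time.
    return amount_last_24h(txns, now)


txns = [
    {"amount": 20.0, "ts": datetime(2024, 1, 1, 9)},
    {"amount": 5.0, "ts": datetime(2024, 1, 2, 8)},
]
print(build_training_values(txns, [datetime(2024, 1, 1, 12), datetime(2024, 1, 2, 10)]))
# [20.0, 5.0]
print(serve_online(txns, datetime(2024, 1, 2, 10)))  # 5.0
```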

AI-Specific Depth

- Model support: Proprietary / BYO

- RAG / knowledge integration: N/A

- Evaluation: Drift detection, regression tests

- Guardrails: Feature validation rules

- Observability: Latency, usage metrics

Pros

- Enterprise-ready features with governance.

- Low-latency feature serving.

- Strong integration ecosystem.

Cons

- Cost may be high for smaller deployments.

- Requires ML and infrastructure expertise.

- Complex setup for hybrid environments.

Security & Compliance

- SSO/SAML, RBAC, audit logs, encryption, data retention controls; certifications: Not publicly stated

Deployment & Platforms

- Cloud, Hybrid

- Web interface, Python SDK

Integrations & Ecosystem

Supports orchestration, monitoring, and storage systems:

- Python SDK

- REST APIs

- Data pipeline connectors

- MLflow / Kubeflow integration

- Cloud storage connectors

Pricing Model

Tiered subscription, usage-based

Best-Fit Scenarios

- Real-time recommendations in e-commerce.

- Fraud detection pipelines.

- Predictive maintenance with IoT data.

2 — Feast

One-line verdict: Ideal for teams seeking open-source, flexible, and developer-friendly online feature storage.

Short description: Feast enables both batch and real-time feature serving with strong community support, suited for developers and ML teams.

Standout Capabilities

- Open-source and flexible.

- Real-time feature serving with low latency.

- Integrates with cloud and on-prem storage.

- Supports multi-model workflows.

- Active community and extensibility.
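When building training data from a feature store, each training row should only see feature values that existed at its label timestamp. Feast and similar tools call this a point-in-time correct join. The sketch below shows the idea in plain Python with an assumed in-memory feature log. It is not Feast's implementation.

```python
import bisect
from datetime import datetime
from typing import Dict, List, Optional, Tuple

FeatureLog = Dict[str, List[Tuple[datetime, float]]]  # entity -> [(event_time, value)], sorted


def point_in_time_join(labels: List[Tuple[str, datetime]], log: FeatureLog) -> List[Optional[float]]:
    """For each (entity, label_time), return the latest value with event_time <= label_time."""
    out: List[Optional[float]] = []
    for entity, label_time in labels:
        history = log.get(entity, [])
        times = [ts for ts, _ in history]
        idx = bisect.bisect_right(times, label_time) - 1  # last event at or before label_time
        out.append(history[idx][1] if idx >= 0 else None)  # None: no value existed yet
    return out


log = {"u1": [(datetime(2024, 1, 1), 1.0), (datetime(2024, 1, 5), 2.0)]}
labels = [("u1", datetime(2024, 1, 3)), ("u1", datetime(2024, 1, 5)),
          ("u1", datetime(2023, 12, 31)), ("u2", datetime(2024, 1, 3))]
print(point_in_time_join(labels, log))  # [1.0, 2.0, None, None]
```

Joining on each entity's latest value instead would leak future information into the training set.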

AI-Specific Depth

- Model support: Open-source / BYO

- RAG / knowledge integration: N/A

- Evaluation: Offline evaluation, drift detection

- Guardrails: Feature validation

- Observability: Latency and usage metrics

Pros

- Free and open-source core.

- Flexible and developer-friendly.

- Supports multiple ML frameworks.

Cons

- Enterprise features require extra setup.

- Limited out-of-the-box monitoring.

- Scaling requires engineering effort.

Security & Compliance

- Varies / N/A

Deployment & Platforms

- Cloud, Self-hosted

- Linux, macOS, Web interface

Integrations & Ecosystem

- Python SDK

- REST APIs

- Kafka / Spark connectors

- Cloud storage integration

- Monitoring tools

Pricing Model

Open-source core; enterprise support optional

Best-Fit Scenarios

- Developer-led AI teams.

- Open-source ML projects.

- Startups needing low-latency feature pipelines.

3 — Hopsworks

One-line verdict: Suited for organizations needing full MLOps integration with feature stores, orchestration, and governance.

Short description: Hopsworks provides both online and offline feature stores with strong versioning, governance, and pipeline support.

Standout Capabilities

- Real-time and batch feature pipelines.

- Feature versioning and lineage.

- Integrated ML model and pipeline management.

- Multi-cloud and hybrid support.

- Observability and monitoring dashboards.
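Versioning and lineage come down to never overwriting a feature definition in place. Each change registers a new version that records its features and upstream source, so models can pin the version they were trained on. A hypothetical, append-only sketch (not the Hopsworks API):

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Tuple


@dataclass(frozen=True)
class FeatureSetVersion:
    name: str
    version: int
    features: Tuple[str, ...]
    source: str  # upstream table or stream, recorded for lineage


class Registry:
    """Hypothetical append-only registry: existing versions are never modified."""

    def __init__(self) -> None:
        self._versions: Dict[str, List[FeatureSetVersion]] = {}

    def register(self, name: str, features: Tuple[str, ...], source: str) -> FeatureSetVersion:
        history = self._versions.setdefault(name, [])
        fsv = FeatureSetVersion(name, len(history) + 1, features, source)
        history.append(fsv)
        return fsv

    def get(self, name: str, version: Optional[int] = None) -> FeatureSetVersion:
        # Latest version by default; pass a version to pin what a model was trained on.
        history = self._versions[name]
        if version is None:
            return history[-1]
        if not 1 <= version <= len(history):
            raise KeyError(f"{name} has no version {version}")
        return history[version - 1]


reg = Registry()
reg.register("user_txn", ("txn_count_1h",), "transactions_stream")
reg.register("user_txn", ("txn_count_1h", "avg_amount_7d"), "transactions_stream")
print(reg.get("user_txn").version, reg.get("user_txn", 1).features)  # 2 ('txn_count_1h',)
```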

AI-Specific Depth

- Model support: BYO / Open-source

- RAG / knowledge integration: N/A

- Evaluation: Feature correctness tests

- Guardrails: Validation rules for features

- Observability: Latency, usage metrics

Pros

- Complete MLOps integration.

- Strong governance and lineage.

- Supports complex pipelines.

Cons

- Setup involves a learning curve.

- Enterprise deployment can be complex.

- Cloud costs can grow with scale.

Security & Compliance

- SSO, RBAC, encryption; certifications: Not publicly stated

Deployment & Platforms

- Cloud, Self-hosted, Hybrid

- Web, Python SDK

Integrations & Ecosystem

- ML pipelines integration (Kubeflow, MLflow)

- Kafka, Spark connectors

- Python SDK and REST APIs

- Monitoring dashboards

Pricing Model

Tiered, enterprise subscription

Best-Fit Scenarios

- Large-scale MLOps pipelines.

- Real-time model feature serving.

- Compliance-heavy industries.

4 — AWS SageMaker Feature Store

One-line verdict: Ideal for AWS-centric enterprises seeking seamless integration with ML pipelines and cloud services.

Short description: AWS SageMaker Feature Store enables real-time and batch feature storage with strong cloud-native support for ML teams.

Standout Capabilities

- Native integration with SageMaker ML pipelines.

- Real-time and batch feature serving.

- Automatic feature versioning and lineage.

- Multi-region low-latency retrieval.

- Built-in monitoring and metrics dashboards.
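Managed online stores have to decide what happens when records arrive out of order. One common policy keeps only the record with the newest event time per record identifier. The sketch below shows that policy in plain Python rather than the SageMaker API, and the class name is hypothetical. Check the SageMaker Feature Store documentation for its exact ingestion semantics.

```python
from datetime import datetime
from typing import Any, Dict, Optional, Tuple


class LatestByEventTime:
    """Hypothetical online table that keeps only the newest record per record ID."""

    def __init__(self) -> None:
        self._rows: Dict[str, Tuple[datetime, Dict[str, Any]]] = {}

    def put(self, record_id: str, event_time: datetime, values: Dict[str, Any]) -> bool:
        current = self._rows.get(record_id)
        if current is not None and event_time < current[0]:
            return False  # late, older record: keep the newer one already stored
        self._rows[record_id] = (event_time, values)
        return True

    def get(self, record_id: str) -> Optional[Dict[str, Any]]:
        row = self._rows.get(record_id)
        return row[1] if row is not None else None


table = LatestByEventTime()
table.put("card_1", datetime(2024, 1, 2), {"risk_score": 0.9})
accepted = table.put("card_1", datetime(2024, 1, 1), {"risk_score": 0.1})  # arrives late
print(accepted, table.get("card_1"))  # False {'risk_score': 0.9}
```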

AI-Specific Depth

- Model support: Hosted / BYO

- RAG / knowledge integration: N/A

- Evaluation: Drift detection, regression tests

- Guardrails: Feature validation policies

- Observability: Latency, usage metrics

Pros

- Fully managed, minimal setup.

- Strong AWS ecosystem integration.

- Supports production-grade ML workflows.

Cons

- Vendor lock-in to AWS.

- Limited customization outside AWS ecosystem.

- Cost scales with usage and data volume.

Security & Compliance

- SSO/SAML, RBAC, encryption, audit logs, compliance controls; certifications: Not publicly stated

Deployment & Platforms

- Cloud

- Web interface, Python SDK

Integrations & Ecosystem

- Python SDK, REST APIs

- AWS Lambda and Step Functions

- S3 and Redshift connectors

- CloudWatch monitoring

Pricing Model

Usage-based, tiered enterprise plans

Best-Fit Scenarios

- Enterprises already on AWS.

- Real-time prediction pipelines.

- Large-scale ML model deployments.

5 — Databricks Feature Store

One-line verdict: Best for teams using Databricks for unified ML pipelines and collaborative feature engineering.

Short description: Databricks Feature Store provides centralized feature management for real-time and batch inference within Databricks environments.

Standout Capabilities

- Integration with MLflow for tracking experiments.

- Real-time and batch feature serving.

- Collaborative workspace for feature engineering.

- Feature versioning and lineage tracking.

- Unified governance for enterprise deployments.

AI-Specific Depth

- Model support: BYO / Open-source

- RAG / knowledge integration: N/A

- Evaluation: Offline feature validation, regression testing

- Guardrails: Feature validation rules

- Observability: Latency, usage metrics

Pros

- Unified with Databricks ecosystem.

- Collaborative feature engineering.

- Enterprise-grade governance.

Cons

- Limited usefulness outside Databricks environments.

- Complexity for small teams.

- Requires Databricks expertise.

Security & Compliance

- RBAC, encryption, audit logs; certifications: Not publicly stated

Deployment & Platforms

- Cloud

- Web interface, Python SDK

Integrations & Ecosystem

- Python SDK, REST APIs

- MLflow integration

- Spark and Delta Lake connectors

- Monitoring dashboards

Pricing Model

Tiered subscription based on usage

Best-Fit Scenarios

- Unified ML pipelines within Databricks.

- Collaborative enterprise teams.

- Real-time recommendation systems.

6 — Google Cloud Vertex Feature Store

One-line verdict: Suited for Google Cloud users needing enterprise-grade real-time feature serving and ML infrastructure.

Short description: Vertex AI Feature Store centralizes feature storage and serving and integrates tightly with Vertex AI pipelines, making it a natural fit for ML engineers on Google Cloud.

Standout Capabilities

- Real-time low-latency feature serving.

- Tight integration with Vertex AI pipelines.

- Automatic feature versioning and lineage.

- Multi-region deployment and scaling.

- Observability and metrics dashboards.

AI-Specific Depth

- Model support: Hosted / BYO

- RAG / knowledge integration: N/A

- Evaluation: Drift detection, regression tests

- Guardrails: Feature validation rules

- Observability: Latency, usage metrics

Pros

- Cloud-native with Vertex AI integration.

- Supports real-time ML workflows.

- Enterprise-grade scalability.

Cons

- Cloud lock-in to Google Cloud.

- Limited on-premises deployment options.

- Pricing complexity with high volumes.

Security & Compliance

- SSO/SAML, RBAC, encryption; certifications: Not publicly stated

Deployment & Platforms

- Cloud

- Web interface, Python SDK

Integrations & Ecosystem

- Vertex AI pipelines

- BigQuery connectors

- Cloud logging and monitoring

- Python SDK and REST APIs

Pricing Model

Usage-based subscription

Best-Fit Scenarios

- Google Cloud-first enterprises.

- Low-latency feature serving.

- Large-scale ML production pipelines.

7 — Gojek Feast Variant

One-line verdict: Optimal for developer teams needing open-source-friendly, real-time feature storage with streaming support.

Short description: This Feast variant supports both batch and streaming pipelines for production ML features, ideal for developers in high-scale environments.

Standout Capabilities

- Stream and batch feature pipelines.

- Open-source flexibility for customization.

- Real-time low-latency retrieval.

- Integration with Kafka and Spark.

- Observability dashboards.
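Streaming pipelines like the Kafka-based ones this variant supports typically maintain windowed aggregates that update on every event. Below is a minimal sketch of a per-key sliding-window count, such as card transactions in the last minute. It does not use a Kafka client, the class name is hypothetical, and it assumes timestamps arrive in non-decreasing order per key.

```python
from collections import deque
from typing import Deque, Dict


class SlidingWindowCount:
    """Hypothetical streaming feature: events per key within the last `window_s` seconds."""

    def __init__(self, window_s: float) -> None:
        self.window_s = window_s
        self._events: Dict[str, Deque[float]] = {}

    def update(self, key: str, ts: float) -> int:
        # Call once per incoming event (e.g. per consumed message).
        q = self._events.setdefault(key, deque())
        q.append(ts)
        while q and q[0] <= ts - self.window_s:
            q.popleft()  # evict events outside the (ts - window_s, ts] window
        return len(q)


counter = SlidingWindowCount(window_s=60)
print([counter.update("card_1", ts) for ts in (0, 30, 61, 120)])  # [1, 2, 2, 2]
```

The returned count is what a streaming job would write to the online store after each event, so the served feature is never more than one event behind.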

AI-Specific Depth

- Model support: Open-source / BYO

- RAG / knowledge integration: N/A

- Evaluation: Offline validation

- Guardrails: Feature validation rules

- Observability: Latency and usage metrics

Pros

- Open-source and customizable.

- Strong streaming pipeline support.

- Developer-friendly APIs.

Cons

- Limited enterprise support.

- Requires developer expertise for setup.

- Documentation quality may vary.

Security & Compliance

- Varies / N/A

Deployment & Platforms

- Cloud, Self-hosted

- Linux, Web interface

Integrations & Ecosystem

- Python SDK, REST APIs

- Kafka and Spark connectors

- Monitoring dashboards

- Custom pipelines

Pricing Model

Open-source; enterprise tier optional

Best-Fit Scenarios

- Startups and developer-led teams.

- Streaming data pipelines.

- Rapid ML prototyping.

8 — Turing Feature Store

One-line verdict: Designed for teams focusing on low-latency real-time ML feature serving in production workflows.

Short description: Turing Feature Store provides high-performance online feature storage with strong API support for ML engineers.

Standout Capabilities

- Millisecond-level feature retrieval.

- Real-time and batch pipelines.

- API-driven feature access.

- Multi-cloud support.

- Monitoring and alerting dashboards.

AI-Specific Depth

- Model support: BYO / Open-source

- RAG / knowledge integration: N/A

- Evaluation: Regression and validation tests

- Guardrails: Feature validation policies

- Observability: Latency and usage metrics

Pros

- Fast retrieval for real-time ML.

- Flexible integration with pipelines.

- Supports multi-cloud deployment.

Cons

- Limited enterprise governance features.

- Requires technical setup.

- Scaling needs engineering oversight.

Security & Compliance

- Encryption, access controls; certifications: Not publicly stated

Deployment & Platforms

- Cloud

- Web interface, Python SDK

Integrations & Ecosystem

- REST APIs, Python SDK

- Kafka, Spark connectors

- Monitoring dashboards

Pricing Model

Usage-based subscription

Best-Fit Scenarios

- Real-time recommendation systems.

- High-frequency ML pipelines.

- Multi-cloud ML deployments.

9 — AIx Feature Store

One-line verdict: Suited for startups and small teams needing lightweight, real-time feature storage with fast setup.

Short description: AIx Feature Store provides fast, developer-friendly feature serving for low-latency ML applications.

Standout Capabilities

- Real-time feature retrieval.

- Lightweight API access.

- Simple versioning and lineage.

- Supports multiple ML frameworks.

- Observability dashboards.

AI-Specific Depth

- Model support: BYO / Open-source

- RAG / knowledge integration: N/A

- Evaluation: Offline validation tests

- Guardrails: Basic feature validation

- Observability: Latency and usage metrics

Pros

- Quick to deploy.

- Low-latency feature serving.

- Developer-friendly API.

Cons

- Limited enterprise features.

- Scaling may require additional setup.

- Minimal governance controls.

Security & Compliance

- Varies / N/A

Deployment & Platforms

- Cloud

- Web interface, Python SDK

Integrations & Ecosystem

- Python SDK, REST API

- Monitoring dashboards

- Simple pipeline integration

Pricing Model

Usage-based

Best-Fit Scenarios

- Startups with small ML teams.

- Low-latency applications.

- Rapid prototyping.

10 — Flyte Feature Store

One-line verdict: Optimal for pipeline-native ML teams needing tight orchestration and feature versioning.

Short description: Flyte Feature Store integrates with Flyte workflows to provide consistent, versioned, and real-time feature serving for ML pipelines.

Standout Capabilities

- Pipeline-native feature orchestration.

- Real-time and batch pipelines.

- Versioning and lineage tracking.

- Multi-cloud support.

- Observability and metrics dashboards.

AI-Specific Depth

- Model support: BYO / Open-source

- RAG / knowledge integration: N/A

- Evaluation: Feature correctness tests

- Guardrails: Validation rules

- Observability: Latency, usage metrics

Pros

- Tight integration with ML pipelines.

- Versioned and consistent features.

- Supports production-grade ML workflows.

Cons

- Requires familiarity with Flyte.

- Setup complexity for small teams.

- Enterprise support varies.

Security & Compliance

- Encryption, RBAC; certifications: Not publicly stated

Deployment & Platforms

- Cloud, Self-hosted

- Web interface, Python SDK

Integrations & Ecosystem

- Flyte workflows integration

- Python SDK, REST APIs

- Monitoring dashboards

- Multi-cloud connectors

Pricing Model

Usage-based, open-source core

Best-Fit Scenarios

- ML teams using Flyte orchestration.

- Real-time low-latency features.

- Production-grade ML pipelines.

Comparison Table

| Tool Name | Best For | Deployment | Model Flexibility | Strength | Watch-Out | Public Rating |
|---|---|---|---|---|---|---|
| Tecton | Enterprise real-time ML | Cloud / Hybrid | Proprietary / BYO | Full-featured | Cost | N/A |
| Feast | Developer-friendly | Cloud / Self-hosted | Open-source / BYO | Flexibility | Monitoring setup | N/A |
| Hopsworks | MLOps integration | Cloud / Self-hosted / Hybrid | BYO / Open-source | Governance | Learning curve | N/A |
| SageMaker Feature Store | AWS-centric enterprise | Cloud | Hosted / BYO | AWS integration | Vendor lock-in | N/A |
| Databricks Feature Store | Unified ML workflow | Cloud | BYO / Open-source | Pipeline integration | Complexity | N/A |
| Vertex Feature Store | Google Cloud optimized | Cloud | Hosted / BYO | Latency and scale | Cloud lock-in | N/A |
| Turing Feature Store | Real-time features for AI | Cloud | BYO / Open-source | Streaming support | Limited docs | N/A |
| Gojek Feast variant | Developer-scale | Cloud / Self-hosted | Open-source / BYO | Community | Enterprise support | N/A |
| AIx Feature Store | ML startups | Cloud | BYO / Open-source | Lightweight and fast | Limited integrations | N/A |
| Flyte Feature Store | Pipeline-native | Cloud / Self-hosted | BYO / Open-source | Orchestration | Learning curve | N/A |

Scoring & Evaluation

This scoring is comparative, showing strengths across criteria rather than absolute values.

Scores consider feature completeness, real-time reliability, safety/guardrails, integrations, ease of use, performance, security, and support.

| Tool | Core | Reliability/Eval | Guardrails | Integrations | Ease | Perf/Cost | Security/Admin | Support | Weighted Total |
|---|---|---|---|---|---|---|---|---|---|
| Tecton | 9 | 9 | 8 | 9 | 8 | 8 | 9 | 8 | 8.5 |
| Feast | 8 | 7 | 7 | 8 | 8 | 7 | 7 | 7 | 7.5 |
| Hopsworks | 9 | 8 | 8 | 9 | 7 | 8 | 8 | 7 | 8.0 |
| SageMaker FS | 8 | 8 | 8 | 9 | 8 | 8 | 8 | 8 | 8.0 |
| Databricks FS | 9 | 8 | 8 | 9 | 7 | 8 | 8 | 7 | 8.0 |
| Vertex FS | 8 | 8 | 7 | 8 | 8 | 8 | 7 | 7 | 7.75 |
| Turing FS | 7 | 7 | 6 | 7 | 7 | 7 | 6 | 6 | 6.75 |
| Gojek Feast | 7 | 7 | 6 | 7 | 7 | 7 | 6 | 6 | 6.75 |
| AIx FS | 6 | 6 | 6 | 6 | 7 | 6 | 6 | 6 | 6.5 |
| Flyte FS | 7 | 7 | 7 | 7 | 7 | 7 | 6 | 6 | 6.95 |
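The weights behind the Weighted Total column are not published. The sketch below shows how a buyer could compute their own weighted total from the per-criterion scores. With equal weights, Tecton's row averages to 8.5, matching the table. Other rows do not all match a plain average, so treat the published totals as the author's weighting. The "regulated" weights are a hypothetical example that doubles Security/Admin.

```python
from typing import Sequence

CRITERIA = ["Core", "Reliability/Eval", "Guardrails", "Integrations",
            "Ease", "Perf/Cost", "Security/Admin", "Support"]


def weighted_total(scores: Sequence[float], weights: Sequence[float]) -> float:
    """Weighted mean of per-criterion scores; weights need not sum to 1."""
    if len(scores) != len(weights) or sum(weights) <= 0:
        raise ValueError("need one weight per criterion and a positive total weight")
    return sum(s * w for s, w in zip(scores, weights)) / sum(weights)


tecton = [9, 9, 8, 9, 8, 8, 9, 8]
equal = [1.0] * len(CRITERIA)
regulated = [2.0 if c == "Security/Admin" else 1.0 for c in CRITERIA]  # hypothetical
print(weighted_total(tecton, equal))                # 8.5
print(round(weighted_total(tecton, regulated), 2))  # 8.56
```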

Top 3 for Enterprise: Tecton, Hopsworks, Databricks FS

Top 3 for SMB: Feast, AIx FS, Turing FS

Top 3 for Developers: Feast, Flyte FS, Gojek Feast

Which Online Feature Store Platform Is Right for You?

Solo / Freelancer

Lightweight or open-source platforms like Feast or AIx FS allow fast integration without heavy infrastructure overhead.

SMB

Feast, Turing FS, and Flyte FS provide manageable pipelines with low-latency retrieval for small to mid-size teams.

Mid-Market

Hopsworks and Databricks FS are ideal for structured MLOps pipelines, governance, and multi-team collaboration.

Enterprise

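Tecton, SageMaker FS, and Vertex FS provide full-scale feature serving with enterprise-grade security and monitoring. Tecton also offers multi-cloud deployment, while SageMaker FS and Vertex FS best suit organizations standardized on AWS or Google Cloud, respectively.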

Regulated Industries

Prioritize platforms with audit logs, encryption, compliance certifications, and strict RBAC.

Budget vs Premium

Open-source platforms reduce licensing costs but require more engineering effort; premium enterprise platforms provide SLA-backed reliability and built-in observability.

Build vs Buy

Build when highly customized feature computation is needed; buy to accelerate deployment, reduce engineering overhead, and leverage pre-built pipelines.

Implementation Playbook

30 Days

- Identify key features and models.

- Set up a small pilot for latency and correctness metrics.

- Configure basic access controls and logging.
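For the pilot, latency targets are easier to track as percentiles than as averages, because a few slow lookups dominate tail latency. A small sketch using the nearest-rank percentile method. The 10 ms p99 budget and the sample timings are placeholders, not recommendations.

```python
import math
from typing import Sequence


def percentile(samples_ms: Sequence[float], p: float) -> float:
    """Nearest-rank percentile of latency samples (0 < p <= 100)."""
    if not samples_ms or not 0 < p <= 100:
        raise ValueError("need at least one sample and 0 < p <= 100")
    ordered = sorted(samples_ms)
    rank = math.ceil(p * len(ordered) / 100)  # 1-based rank; multiply first to avoid float rounding
    return ordered[rank - 1]


def meets_budget(samples_ms: Sequence[float], p99_budget_ms: float) -> bool:
    return percentile(samples_ms, 99) <= p99_budget_ms


samples = [4.0] * 98 + [9.0, 40.0]  # e.g. timings from a pilot load test
print(percentile(samples, 50), percentile(samples, 99), meets_budget(samples, 10.0))
# 4.0 9.0 True
```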

60 Days

- Harden security, evaluation pipelines, and guardrails.

- Integrate monitoring, dashboards, and observability tools.

- Expand pilot to additional business units.
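Hardening guardrails usually means applying validation rules before feature values are written to the online store. A minimal sketch with hypothetical feature names and rules. Each platform expresses such rules in its own format.

```python
import math
from typing import Any, Callable, Dict, List

# Hypothetical rules: feature name -> predicate a valid value must satisfy.
Rule = Callable[[Any], bool]
RULES: Dict[str, Rule] = {
    "txn_count_1h": lambda v: isinstance(v, int) and v >= 0,
    "avg_amount_7d": lambda v: isinstance(v, float) and math.isfinite(v) and v >= 0,
    "country": lambda v: isinstance(v, str) and len(v) == 2,
}


def validate(row: Dict[str, Any], rules: Dict[str, Rule] = RULES) -> List[str]:
    """Return the names of features that are missing or violate their rule."""
    return [name for name, ok in rules.items() if name not in row or not ok(row[name])]


print(validate({"txn_count_1h": 3, "avg_amount_7d": 52.1, "country": "DE"}))  # []
print(validate({"txn_count_1h": -1, "avg_amount_7d": float("nan")}))
# ['txn_count_1h', 'avg_amount_7d', 'country']
```

Rows that fail can be quarantined and alerted on instead of silently overwriting the last good values.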

90 Days

- Optimize latency, caching, and multi-region routing.

- Standardize governance, versioning, and incident handling.

- Scale across all ML workflows with consistent monitoring.

Common Mistakes & How to Avoid Them

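- Ignoring feature drift and training/inference consistency: schedule drift and skew checks alongside model monitoring.

- Deploying without evaluation and monitoring pipelines: set them up during the pilot, not after launch.

- Leaving access control unmanaged: define RBAC policies before onboarding additional teams.

- Overlooking real-time latency and performance: measure tail latency against an explicit budget during the pilot.

- Letting storage or compute costs grow unchecked: cache hot features, retire unused ones, and review cost metrics regularly.

- Automating pipelines without human oversight: keep review and approval steps for new or changed features.

- Accepting vendor lock-in without abstraction: wrap feature retrieval behind an internal interface.

- Missing data lineage and audit trails: enable lineage tracking and audit logs from the start.

- Poor integration with ML pipelines: connect the feature store to existing orchestration tools early.

- Inadequate governance for regulated data: apply retention, encryption, and approval workflows to sensitive features.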

FAQs

1. What is an Online Feature Store?

A centralized platform that serves ML features with low latency at inference time, using the same feature definitions as training so that models see consistent values.

2. Why do I need online feature serving?

It reduces latency for real-time predictions and ensures features are always up-to-date for accurate model outputs.

3. Can I bring my own models?

Yes. Many platforms support BYO and open-source models as well as custom feature transformations.

4. Are these platforms suitable for small teams?

Lightweight or open-source options like Feast or AIx FS are suitable; full enterprise platforms may be overkill.

5. How is feature consistency ensured?

Through feature versioning, shared transformation logic for batch and online pipelines, validation rules, and integration with ML workflow orchestration.

6. What is the difference between online and offline feature stores?

Online stores serve the latest feature values with low latency for real-time inference, whereas offline stores hold historical feature values used to build training datasets and run batch scoring.

7. Do these platforms support multimodal features?

Many support text embeddings, numeric, categorical, and some image/audio features depending on vendor capabilities.

8. How do I monitor feature usage?

Observability dashboards report retrieval latency, request volume, and compute usage, and raise alerts on anomalies.

9. Can I self-host an online feature store?

Yes, open-source platforms like Feast and Flyte FS support self-hosting; enterprise platforms may also offer hybrid deployment.

10. How do I reduce cost?

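Cache frequently read features, use batch computation where freshness requirements allow, and monitor retrieval metrics regularly. Multi-region routing mainly lowers latency and can add replication cost, so enable it only where latency requirements justify it.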
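Caching is one of the simpler cost levers: a short-lived cache in front of the online store absorbs repeated reads of hot keys. Below is a minimal read-through TTL cache. It assumes a hypothetical `fetch` function that performs the actual online-store read. The TTL bounds how stale a cached value can get, so it should stay below the feature's freshness requirement.

```python
import time
from typing import Any, Callable, Dict, Tuple


class TTLCache:
    """Hypothetical read-through cache in front of an online-store lookup."""

    def __init__(self, fetch: Callable[[str], Any], ttl_s: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._fetch = fetch      # the slower, billed online-store read
        self._ttl_s = ttl_s
        self._clock = clock      # injectable for testing
        self._entries: Dict[str, Tuple[float, Any]] = {}
        self.misses = 0

    def get(self, key: str) -> Any:
        now = self._clock()
        hit = self._entries.get(key)
        if hit is not None and now - hit[0] < self._ttl_s:
            return hit[1]        # fresh enough: no store read
        self.misses += 1
        value = self._fetch(key)
        self._entries[key] = (now, value)
        return value


fake_now = [0.0]
cache = TTLCache(fetch=lambda k: k.upper(), ttl_s=10, clock=lambda: fake_now[0])
cache.get("user_42"); cache.get("user_42")
fake_now[0] = 11.0
cache.get("user_42")
print(cache.misses)  # 2
```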

11. Are they secure for sensitive data?

Platforms offer encryption, RBAC, audit logs, and compliance features, but always verify vendor capabilities.

12. What integrations are common?

Python SDKs, REST APIs, orchestration tools like Kubeflow, Spark, Kafka, and cloud storage services.

Conclusion

Online Feature Store Platforms are essential for production-grade ML deployments, enabling real-time predictions, consistent features, and operational governance. The best platform depends on your team size, latency needs, regulatory environment, and infrastructure preferences. Start by shortlisting suitable platforms, run a pilot to validate latency, correctness, and governance, then scale with monitoring, security, and cost optimization in mind.