Introduction

Model Serving Platforms are specialized software solutions that allow organizations to deploy, manage, and monitor machine learning models in production environments. They act as the bridge between model development and real-world application, ensuring models operate reliably, securely, and at scale. These platforms are essential for companies that want consistent, high-performance AI outputs, observability, and governance while mitigating risks like model drift, unsafe outputs, or bias.

Real-world use cases include:

- Deploying NLP and multimodal AI for chatbots, virtual assistants, and customer support.

- Real-time fraud detection in finance and e-commerce platforms.

- Predictive maintenance and anomaly detection in industrial IoT systems.

- Personalized recommendations in retail, streaming, and content platforms.

- Clinical decision support in healthcare systems.

- Dynamic pricing, logistics optimization, and inventory forecasting.

Best for: AI engineers, data scientists, ML teams, and enterprises of all sizes looking to operationalize models safely.

Not ideal for: Organizations with minimal AI needs, or those relying solely on pre-built SaaS AI services without customization requirements.

Evaluation Criteria Buyers

- Model flexibility: Hosted, BYO, open-source, and multi-model support.

- Deployment options: Cloud, self-hosted, hybrid, or private deployment.

- Performance: Low latency, high throughput, and reliable scaling.

- Observability: Logs, traces, token usage, cost tracking, and latency metrics.

- Evaluation: Prompt testing, regression testing, human review, and quality checks.

- Guardrails: Prompt injection defense, content filtering, and policy controls.

- Security: SSO, RBAC, encryption, audit logs, and data retention controls.

- Integrations: APIs, SDKs, vector databases, CI/CD, and cloud tools.

What’s Changed in Model Serving Platforms

- Support for agentic workflows and tool calling.

- Integration of multimodal model inputs (text, image, video, audio).

- Enhanced evaluation frameworks for hallucination detection and output reliability.

- Advanced guardrails for prompt injection and unsafe content prevention.

- Enterprise-grade privacy with data residency and retention controls.

- Cost and latency optimization with model routing and multi-cloud support.

- Observability enhancements including tracing, token usage, and latency metrics.

- Expanded governance and compliance expectations.

- Better support for BYO (Bring Your Own) models alongside hosted options.

- Improved multi-tenancy and role-based access controls.

- Enhanced CI/CD integration for continuous model updates.

- Support for real-time and batch inference pipelines.

Quick Buyer Checklist

- Data privacy and retention policies.

- Model choice: hosted, BYO, or open-source.

- RAG / knowledge base integration capabilities.

- Model evaluation and testing frameworks.

- Guardrails for safe outputs and policy enforcement.

- Latency and cost controls.

- Auditability and admin controls.

- Vendor lock-in risk assessment.

- Multi-cloud and hybrid deployment options.

- Observability: logging, tracing, token/cost metrics.

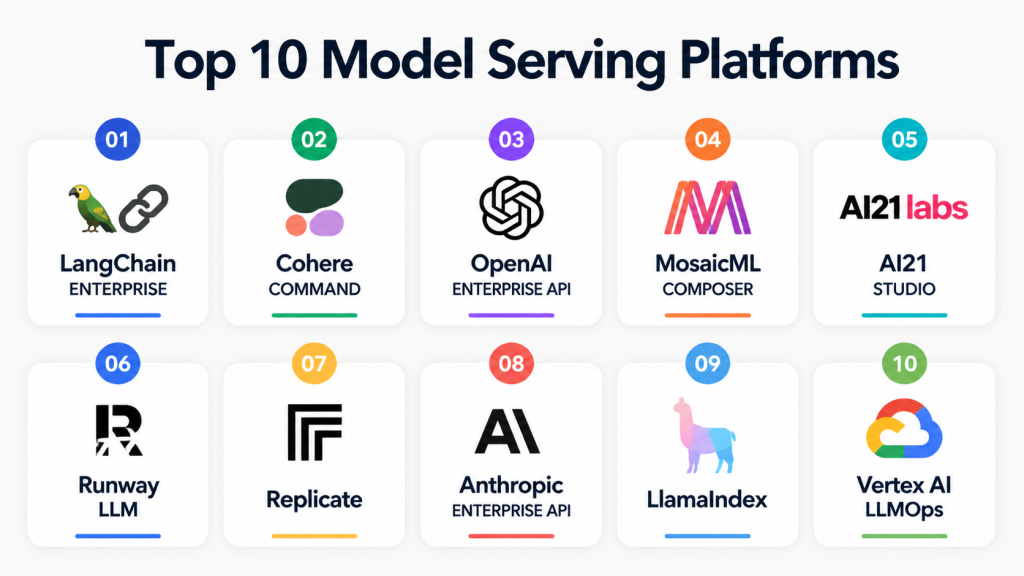

Top 10 Model Serving Platforms

1 — LangChain Enterprise

One-line verdict: Ideal for organizations seeking enterprise-grade orchestration for large language models and AI agents.

Short description: LangChain Enterprise provides robust model deployment, orchestration, and monitoring features, suitable for ML teams and AI developers.

Standout Capabilities

- Multi-model routing and orchestration.

- API-driven inference pipelines.

- End-to-end logging and observability.

- Model versioning and rollback.

- Enterprise-grade authentication and access control.

AI-Specific Depth

- Model support: BYO / Open-source

- RAG / knowledge integration: Vector DB connectors

- Evaluation: Regression tests, prompt-based evaluations

- Guardrails: Policy enforcement, prompt injection defense

- Observability: Traces, latency, token usage

Pros

- Scalable across multi-cloud deployments.

- Strong observability and monitoring tools.

- Flexible for both developers and enterprise teams.

Cons

- Steeper learning curve for non-technical users.

- Requires configuration for optimal performance.

- Advanced features may increase deployment complexity.

Security & Compliance

- SSO/SAML, RBAC, audit logs, encryption, data residency controls.

- Certifications: Not publicly stated

Deployment & Platforms

- Web, Linux, Windows, macOS

- Cloud, Self-hosted

Integrations & Ecosystem

Integrates with common ML frameworks and APIs for extensibility.

- Python SDK

- REST APIs

- Vector databases

- Monitoring tools

- Cloud storage connectors

Pricing Model

Tiered enterprise pricing, usage-based for API calls.

Best-Fit Scenarios

- Deploying LLMs with knowledge integrations.

- Enterprises needing multi-model orchestration.

- Teams requiring deep observability and monitoring.

2 — Cohere Command

One-line verdict: Suitable for teams seeking managed model serving with fine-tuned NLP capabilities.

Short description: Cohere Command enables enterprise-ready deployment of NLP models with support for custom fine-tuning and monitoring.

Standout Capabilities

- Managed hosting for NLP models.

- Fine-tuning support for domain-specific language models.

- Token-level usage tracking.

- Multi-region deployment options.

- Enterprise authentication integration.

AI-Specific Depth

- Model support: Proprietary / BYO

- RAG / knowledge integration: N/A

- Evaluation: Offline evaluation, regression tests

- Guardrails: Output policy checks

- Observability: Token usage, latency metrics

Pros

- Easy to integrate with existing ML pipelines.

- Strong NLP-specific performance.

- Secure and compliant enterprise deployments.

Cons

- Limited multimodal support.

- Less flexible than open-source alternatives.

- Some advanced features require enterprise tier.

Security & Compliance

- RBAC, SSO, encryption, audit logs

- Certifications: Not publicly stated

Deployment & Platforms

- Web-based

- Cloud

Integrations & Ecosystem

- REST APIs

- Python SDK

- ML monitoring tools

- Enterprise authentication

- Logging and analytics platforms

Pricing Model

Usage-based with enterprise plans for larger workloads.

Best-Fit Scenarios

- Domain-specific NLP deployments.

- Teams wanting managed services.

- Enterprise-grade monitoring requirements.

3 — OpenAI Enterprise API

One-line verdict: Perfect for developers seeking hosted access to OpenAI models with enterprise governance.

Short description: OpenAI Enterprise API provides robust API access to LLMs with features for model monitoring, safety, and compliance.

Standout Capabilities

- Access to proprietary models with updates.

- Built-in safety and content moderation.

- Multi-region latency optimization.

- Audit logs for usage tracking.

- API-based integration with internal systems.

AI-Specific Depth

- Model support: Proprietary

- RAG / knowledge integration: Connectors via embeddings

- Evaluation: Prompt tests, regression evaluations

- Guardrails: Policy checks, prompt injection defense

- Observability: Usage and latency metrics

Pros

- Reliable, high-quality LLMs.

- Fully managed infrastructure.

- Strong security and compliance controls.

Cons

- Cost may scale with heavy usage.

- Limited flexibility for custom model modifications.

- Dependent on vendor availability.

Security & Compliance

- Encryption, SSO/SAML, audit logs, RBAC

- Certifications: Not publicly stated

Deployment & Platforms

- Cloud only

- Web, Python, Java, Node.js SDKs

Integrations & Ecosystem

- Embedding connectors

- Third-party analytics

- Enterprise API integrations

- Monitoring dashboards

Pricing Model

Tiered, usage-based subscription.

Best-Fit Scenarios

- Quick deployment of enterprise LLMs.

- Teams needing high availability.

- AI initiatives requiring compliance monitoring.

4 — MosaicML Composer

One-line verdict: Designed for ML engineers looking to deploy large-scale models with open-source flexibility.

Short description: Composer offers tools for model training, deployment, and orchestration with a focus on custom and open-source model support.

Standout Capabilities

- Support for BYO and open-source models.

- Fine-tuning pipelines included.

- Scalable orchestration for multi-cloud environments.

- Token and latency observability.

- Integration with experiment tracking tools.

AI-Specific Depth

- Model support: BYO / Open-source

- RAG / knowledge integration: N/A

- Evaluation: Regression tests, offline eval

- Guardrails: N/A

- Observability: Traces, latency metrics

Pros

- Highly flexible for developers.

- Open-source friendly.

- Strong orchestration and observability.

Cons

- Requires ML expertise to deploy.

- Less turnkey for non-technical users.

- Enterprise support varies.

Security & Compliance

- RBAC, encryption, logging; certifications: Not publicly stated

Deployment & Platforms

- Cloud, Self-hosted

- Linux, macOS, Windows

Integrations & Ecosystem

- Python SDKs

- ML frameworks (PyTorch, TensorFlow)

- Monitoring dashboards

- CI/CD pipelines

- Data connectors

Pricing Model

Open-source core; enterprise tier available.

Best-Fit Scenarios

- Custom ML deployment pipelines.

- BYO large language models.

- Multi-cloud scalable orchestration.

5 — AI21 Studio

One-line verdict: Ideal for teams focusing on generative NLP and high-throughput API deployments.

Short description: AI21 Studio enables rapid deployment of text generation and comprehension models with enterprise-grade APIs.

Standout Capabilities

- High-throughput API for generative AI.

- Fine-tuning and prompt engineering tools.

- Observability dashboards for latency and usage.

- Multi-region support.

- Policy and guardrail integration.

AI-Specific Depth

- Model support: Proprietary / BYO

- RAG / knowledge integration: Vector DB connectors

- Evaluation: Prompt testing, regression

- Guardrails: Policy checks, prompt injection defense

- Observability: Token and latency metrics

Pros

- Excellent for NLP-centric apps.

- Easy API integration.

- Observability built-in.

Cons

- Limited multimodal support.

- Enterprise features require higher tiers.

- Less suited for custom open-source models.

Security & Compliance

- SSO, RBAC, encryption; certifications: Not publicly stated

Deployment & Platforms

- Cloud

- Web and Python SDK

Integrations & Ecosystem

- REST API

- SDKs

- Embedding connectors

- Analytics dashboards

- Monitoring tools

Pricing Model

Usage-based; enterprise tiers available

Best-Fit Scenarios

- NLP applications and chatbots.

- Teams needing high API throughput.

- Enterprise deployments with compliance needs.

6 — Runway LLM

One-line verdict: Best for creative teams deploying multimodal models for content generation and media workflows.

Short description: Runway LLM allows seamless deployment of text, image, and video generation models in production.

Standout Capabilities

- Multimodal support (text, image, video).

- Managed inference and deployment pipelines.

- Real-time monitoring dashboards.

- Model versioning and rollback.

- API access with secure endpoints.

AI-Specific Depth

- Model support: Proprietary / BYO

- RAG / knowledge integration: N/A

- Evaluation: Offline evaluation, regression

- Guardrails: Output policies, safe content filters

- Observability: Latency, token, and usage metrics

Pros

- Strong multimodal capabilities.

- Creative content pipelines supported.

- Easy API integration.

Cons

- Focused on creative workflows, less on general ML.

- Enterprise features limited.

- May require technical expertise for large-scale deployments.

Security & Compliance

- RBAC, SSO, encryption; certifications: Not publicly stated

Deployment & Platforms

- Cloud

- Web-based, Windows, macOS

Integrations & Ecosystem

- REST APIs

- SDKs for Python/Node.js

- Workflow connectors

- Monitoring tools

Pricing Model

Usage-based subscription

Best-Fit Scenarios

- Generative media applications.

- Teams using multimodal AI.

- Content automation workflows.

7 — Replicate

One-line verdict: Suited for developers needing open-source-friendly model deployment with flexible inference.

Short description: Replicate provides a platform to host, run, and share machine learning models with community-driven support.

Standout Capabilities

- Hosting for open-source models.

- Version control for model updates.

- API and SDK access.

- Observability dashboards.

- Community model sharing.

AI-Specific Depth

- Model support: Open-source / BYO

- RAG / knowledge integration: N/A

- Evaluation: User-driven tests

- Guardrails: Varies / N/A

- Observability: Latency and usage

Pros

- Open-source friendly.

- Flexible deployment options.

- Community-driven ecosystem.

Cons

- Less enterprise-grade support.

- Limited guardrails.

- Requires developer expertise.

Security & Compliance

- Encryption, access controls; certifications: Not publicly stated

Deployment & Platforms

- Cloud, self-hosted

- Web, Linux, Windows, macOS

Integrations & Ecosystem

- Python SDK

- REST API

- Community model connectors

- Monitoring integrations

Pricing Model

Tiered usage; open-source models free

Best-Fit Scenarios

- Open-source ML deployment.

- Developer experimentation and prototyping.

- BYO models in production.

8 — Anthropic Enterprise API

One-line verdict: Tailored for enterprises needing safe and reliable LLM inference with strong AI guardrails.

Short description: Anthropic Enterprise API focuses on model safety, observability, and compliance for large-scale deployments.

Standout Capabilities

- Safety-first LLM deployment.

- Prompt injection protection.

- Token and latency observability.

- Multi-region deployment.

- Enterprise authentication and access control.

AI-Specific Depth

- Model support: Proprietary / Hosted

- RAG / knowledge integration: Connectors available

- Evaluation: Regression tests, prompt validation

- Guardrails: Strong policy enforcement

- Observability: Usage metrics, latency

Pros

- Strong AI safety and compliance.

- Reliable enterprise infrastructure.

- Built-in monitoring and observability.

Cons

- Proprietary models only.

- Cost may be high for large workloads.

- Limited flexibility for customization.

Security & Compliance

- SSO, RBAC, audit logs, encryption; certifications: Not publicly stated

Deployment & Platforms

- Cloud only

- Web, API

Integrations & Ecosystem

- REST APIs

- SDKs for Python/Node.js

- Analytics and monitoring tools

- Vector DB connectors

Pricing Model

Usage-based subscription

Best-Fit Scenarios

- Enterprises prioritizing AI safety.

- LLM deployments requiring guardrails.

- Compliance-heavy industries.

9 — LlamaIndex

One-line verdict: Optimal for developers building knowledge-driven AI with flexible connectors and RAG pipelines.

Short description: LlamaIndex specializes in connecting LLMs to external knowledge sources with vector database integration.

Standout Capabilities

- RAG pipelines built-in.

- Vector database connectors.

- API-driven inference.

- Token usage observability.

- Open-source friendly.

AI-Specific Depth

- Model support: BYO / Open-source

- RAG / knowledge integration: Vector DB integration

- Evaluation: Prompt testing, offline evaluation

- Guardrails: N/A

- Observability: Token usage, latency

Pros

- Strong knowledge integration.

- Flexible model support.

- Developer-focused APIs.

Cons

- Enterprise-grade features limited.

- Requires developer expertise.

- Guardrails are minimal.

Security & Compliance

- Encryption, RBAC; certifications: Not publicly stated

Deployment & Platforms

- Cloud, self-hosted

- Linux, Windows, macOS

Integrations & Ecosystem

- Python SDK

- REST API

- Vector DB connectors

- Monitoring dashboards

Pricing Model

Open-source core; enterprise subscription available

Best-Fit Scenarios

- Knowledge-based AI apps.

- RAG workflows for developers.

- Teams needing vector DB integrations.

10 — Vertex AI LLMOps

One-line verdict: Best for enterprises leveraging cloud-native infrastructure for scalable LLM operations and monitoring.

Short description: Vertex AI LLMOps provides cloud-native model serving, orchestration, and monitoring with enterprise-grade integrations.

Standout Capabilities

- Multi-cloud orchestration.

- Model versioning and rollback.

- Observability dashboards.

- API-driven inference.

- Security and compliance tools.

AI-Specific Depth

- Model support: Hosted / BYO

- RAG / knowledge integration: Connectors via embeddings

- Evaluation: Regression tests, prompt evaluation

- Guardrails: Policy enforcement

- Observability: Latency, token, cost metrics

Pros

- Cloud-native scalability.

- Enterprise integration-ready.

- Strong observability and governance.

Cons

- Limited offline/self-hosted options.

- Cost scales with usage.

- Vendor lock-in risk.

Security & Compliance

- SSO, RBAC, audit logs, encryption, data residency controls; certifications: Not publicly stated

Deployment & Platforms

- Cloud only

- Web, Python, Java SDKs

Integrations & Ecosystem

- REST API

- SDKs

- Monitoring tools

- CI/CD pipelines

- Vector DB connectors

Pricing Model

Usage-based subscription

Best-Fit Scenarios

- Enterprise LLM deployments.

- Multi-model orchestration.

- High observability and compliance needs.

Comparison Table

| Tool Name | Best For | Deployment | Model Flexibility | Strength | Watch-Out | Public Rating |

|---|---|---|---|---|---|---|

| LangChain Enterprise | Enterprise LLM orchestration | Cloud/Self-hosted | BYO/Open-source | Observability | Complexity | N/A |

| Cohere Command | NLP-focused teams | Cloud | BYO/Proprietary | Managed NLP | Limited multimodal | N/A |

| OpenAI Enterprise API | Developers needing hosted LLMs | Cloud | Proprietary | Reliable models | Cost scales | N/A |

| MosaicML Composer | ML engineers deploying open-source | Cloud/Self-hosted | BYO/Open-source | Flexibility | Requires expertise | N/A |

| AI21 Studio | Generative NLP applications | Cloud | Proprietary/BYO | API throughput | Limited multimodal | N/A |

| Runway LLM | Creative multimodal workflows | Cloud | BYO/Proprietary | Multimodal support | Focused on creative | N/A |

| Replicate | Open-source developers | Cloud/Self-hosted | Open-source/BYO | Flexibility | Enterprise support limited | N/A |

| Anthropic Enterprise API | Safe LLM enterprise deployments | Cloud | Proprietary | Safety-first | Cost | N/A |

| LlamaIndex | Knowledge-driven AI | Cloud/Self-hosted | BYO/Open-source | RAG pipelines | Limited guardrails | N/A |

| Vertex AI LLMOps | Cloud-native enterprise LLM |

Scoring & Evaluation

| Tool | Core | Reliability/Eval | Guardrails | Integrations | Ease | Perf/Cost | Security/Admin | Support | Weighted Total |

|---|---|---|---|---|---|---|---|---|---|

| Vertex AI LLMOps | 9 | 8 | 8 | 9 | 8 | 8 | 9 | 8 | 8.45 |

| OpenAI Enterprise API | 9 | 8 | 8 | 9 | 9 | 7 | 8 | 8 | 8.35 |

| Anthropic Enterprise API | 8 | 8 | 9 | 8 | 8 | 7 | 8 | 8 | 8.05 |

| LangChain Enterprise | 8 | 8 | 7 | 9 | 7 | 8 | 8 | 7 | 7.95 |

| Cohere Command | 8 | 7 | 7 | 8 | 8 | 8 | 8 | 7 | 7.75 |

| MosaicML Composer | 8 | 7 | 6 | 8 | 6 | 8 | 7 | 7 | 7.25 |

| LlamaIndex | 7 | 7 | 6 | 9 | 7 | 7 | 6 | 8 | 7.20 |

| Replicate | 7 | 6 | 5 | 8 | 8 | 8 | 6 | 7 | 6.95 |

| AI21 Studio | 7 | 7 | 7 | 7 | 8 | 7 | 7 | 7 | 7.10 |

| Runway LLM | 7 | 6 | 6 | 7 | 8 | 7 | 6 | 7 | 6.75 |

Top 3 for Enterprise

- Vertex AI LLMOps

- OpenAI Enterprise API

- Anthropic Enterprise API

Top 3 for SMB

- OpenAI Enterprise API

- Replicate

- Cohere Command

Top 3 for Developers

- LangChain Enterprise

- LlamaIndex

- Replicate

Which Model Serving Platform Is Right for You?

Solo / Freelancer

Solo developers and freelancers usually need fast setup, simple APIs, predictable usage, and minimal platform maintenance. OpenAI Enterprise API, Replicate, and LlamaIndex are strong choices depending on whether the goal is hosted inference, open-source experimentation, or RAG-based application development.

SMB

Small and growing businesses should focus on ease of use, managed infrastructure, cost visibility, and integration simplicity. OpenAI Enterprise API, Cohere Command, and Replicate are practical options because they reduce infrastructure overhead while still supporting real production use cases.

Mid-Market

Mid-market teams often need stronger governance, monitoring, integrations, and deployment control. LangChain Enterprise, Vertex AI LLMOps, and Cohere Command are good fits because they support more structured workflows, observability, and team-based operations.

Enterprise

Large enterprises should prioritize security controls, auditability, multi-team governance, model evaluation, incident handling, and cost management. Vertex AI LLMOps, OpenAI Enterprise API, Anthropic Enterprise API, and LangChain Enterprise are better suited for complex production environments.

Regulated Industries

Finance, healthcare, insurance, public sector, and other regulated industries should evaluate platforms based on data retention, encryption, access control, audit logs, residency options, evaluation workflows, and human review support. Do not rely only on model quality. Governance and traceability are equally important.

Budget vs Premium

Budget-focused teams can start with Replicate, LlamaIndex, or open-source-based deployments. Premium buyers should evaluate Vertex AI LLMOps, OpenAI Enterprise API, Anthropic Enterprise API, and LangChain Enterprise for stronger enterprise controls, support, and operational maturity.

Build vs Buy

Build your own serving layer only when your team has strong ML infrastructure skills, strict deployment constraints, or highly custom latency and cost requirements. Buy a managed platform when speed, governance, monitoring, reliability, and support matter more than full infrastructure control.

Implementation Playbook

First 30 Days: Pilot and Success Metrics

- Select one or two high-value use cases.

- Define clear success metrics such as latency, accuracy, cost per request, uptime, and user satisfaction.

- Choose a limited model set for testing.

- Create a basic evaluation harness for prompts, regression tests, and expected outputs.

- Set up logging for requests, responses, latency, and cost.

- Identify security and privacy requirements before production rollout.

- Run the first pilot with internal users only.

First 60 Days: Security, Evaluation, and Rollout

- Add role-based access controls and admin permissions.

- Configure SSO, audit logs, and encryption where available.

- Add guardrails for unsafe outputs, prompt injection, sensitive data exposure, and policy violations.

- Build prompt and model version control.

- Introduce human review for high-risk workflows.

- Create incident handling steps for model failures or unsafe responses.

- Begin rollout to a controlled group of business users.

First 90 Days: Cost, Governance, and Scale

- Optimize model routing based on latency, quality, and cost.

- Review token usage, compute costs, and peak-load behavior.

- Create governance rules for model selection, prompt changes, data access, and production approvals.

- Expand monitoring dashboards for business and technical teams.

- Run red-team testing for jailbreaks, prompt injection, and data leakage.

- Standardize documentation for future model deployments.

- Scale to additional teams only after evaluation and safety controls are proven.

Common Mistakes and How to Avoid Them

- Deploying models without evaluation tests.

- Ignoring prompt injection risks in user-facing applications.

- Allowing unmanaged data retention without clear policy review.

- Failing to monitor latency, token usage, and cost per request.

- Choosing a platform only because it has strong demos.

- Over-automating sensitive workflows without human review.

- Not tracking prompt and model versions.

- Using one model for every task instead of routing intelligently.

- Forgetting rollback planning when model behavior changes.

- Not testing edge cases, adversarial prompts, and unsafe inputs.

- Ignoring vendor lock-in until migration becomes expensive.

- Weak access controls for production model endpoints.

- Treating observability as optional instead of foundational.

- Scaling before governance and incident handling are ready.

FAQs

1. What is a Model Serving Platform?

A Model Serving Platform helps teams deploy machine learning and AI models into production. It manages inference, APIs, scaling, monitoring, versioning, and operational controls so models can be used reliably in real applications.

2. Why do companies need model serving instead of just training models?

Training creates a model, but serving makes it usable in production. Model serving handles real-time requests, scaling, monitoring, security, rollback, and performance management.

3. Can Model Serving Platforms support BYO models?

Yes, many platforms support bring-your-own models, open-source models, or custom fine-tuned models. However, support varies by vendor, so buyers should verify model formats, deployment options, and runtime compatibility.

4. Do these platforms support self-hosting?

Some platforms support self-hosted or hybrid deployment, while others are cloud-only. Teams with strict compliance, residency, or infrastructure requirements should confirm deployment flexibility before shortlisting.

5. What is the role of evaluation in model serving?

Evaluation helps teams test model quality, reliability, hallucination risk, regressions, and unsafe outputs before and after deployment. Without evaluation, teams may not detect quality drops until users are affected.

6. What are guardrails in model serving?

Guardrails are controls that reduce unsafe, inaccurate, or policy-violating outputs. They may include content filters, prompt-injection defenses, data leakage checks, and human review workflows.

7. How do Model Serving Platforms help control cost?

They help monitor token usage, compute usage, request volume, latency, and model routing. Some platforms allow cheaper models for simple tasks and stronger models for complex tasks.

8. Are Model Serving Platforms suitable for regulated industries?

Yes, but only when security, audit logs, encryption, access controls, data retention, and governance workflows meet internal requirements. Certifications and compliance claims should always be verified directly.

9. What is model routing?

Model routing sends each request to the most suitable model based on cost, speed, quality, risk, or task type. It helps reduce waste while maintaining strong performance.

10. Can these platforms support RAG applications?

Many platforms support RAG workflows directly or through integrations with vector databases, document connectors, and embedding models. RAG support varies, so teams should test retrieval quality before production.

11. What is observability in model serving?

Observability means tracking how models behave in production. It includes traces, latency, errors, token usage, cost, request logs, output quality, and user feedback.

12. How hard is it to switch Model Serving Platforms?

Switching can be difficult if prompts, APIs, monitoring, data pipelines, and model formats are tightly coupled to one vendor. Abstraction layers, portable prompts, and open standards can reduce migration risk.

13. Are open-source serving platforms better than managed platforms?

Open-source platforms offer flexibility and control, but they require more engineering ownership. Managed platforms are easier to operate but may involve higher usage costs and more vendor dependency.

14. What should buyers test in a pilot?

Buyers should test latency, cost, reliability, output quality, security controls, evaluation workflows, guardrails, admin controls, and integration fit. A pilot should reflect real production conditions.

15. What alternatives exist to Model Serving Platforms?

Alternatives include direct API usage, custom Kubernetes deployments, cloud ML services, serverless inference, and fully managed AI application platforms. The right option depends on complexity, scale, and internal expertise.

Conclusion

Model Serving Platforms are now a core part of modern AI infrastructure because they help teams move from experimentation to reliable production deployment. The best platform depends on your model strategy, team skills, governance needs, deployment preferences, and budget. Enterprise teams may prioritize security, observability, and admin control, while developers may care more about flexibility, APIs, and open-source support. Start by shortlisting platforms that match your use case, run a focused pilot with clear evaluation and safety metrics, verify security and governance controls, and then scale only after cost, latency, and reliability are proven in real workflows.