Introduction

Data deduplication for model training helps AI teams find and remove duplicate, near-duplicate, repeated, overly similar, or low-value examples from training, evaluation, fine-tuning, and retrieval datasets. In simple words, these tools help teams avoid training models on the same information again and again, which can waste compute, increase bias, reduce generalization, and distort evaluation results.

This category matters because modern AI teams work with massive datasets: web text, documents, images, videos, support tickets, code, logs, transcripts, product data, and multimodal records. When duplicate data enters training or evaluation pipelines, models may memorize repeated examples, perform poorly on rare cases, and show inflated benchmark results.

Real-World Use Cases

- LLM training cleanup: Remove duplicate documents, repeated paragraphs, copied web pages, and near-identical text chunks before training.

- RAG dataset preparation: Deduplicate knowledge base documents before embedding and storing them in vector databases.

- Computer vision dataset curation: Detect duplicate images, near-duplicate frames, similar scenes, and repeated visual samples.

- Evaluation dataset quality: Remove overlap between training and test sets to avoid misleading performance scores.

- Fine-tuning data cleanup: Remove repeated customer conversations, prompts, responses, support tickets, and labeled examples.

- Cost reduction: Reduce storage, labeling, embedding, and training costs by keeping only useful unique samples.

- Governance and privacy review: Detect repeated sensitive records that may increase privacy exposure across AI pipelines.
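A minimal sketch of the evaluation-overlap check, assuming plain-text train and test examples, word tokenization with a simple regex, and an 8-word n-gram window. The window size, the 0.5 overlap threshold, and the function names are illustrative assumptions, not values taken from any particular tool.

```python
import re

def word_ngrams(text: str, n: int) -> set[tuple[str, ...]]:
    """Lowercase the text, split it into word tokens, and return its set of n-grams."""
    tokens = re.findall(r"\w+", text.lower())
    return {tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)}

def find_leaked(train: list[str], test: list[str], n: int = 8,
                threshold: float = 0.5) -> list[tuple[int, float]]:
    """Return (test_index, overlap_ratio) for test items whose n-grams
    overlap the training set at or above `threshold`."""
    train_grams: set[tuple[str, ...]] = set()
    for doc in train:
        train_grams |= word_ngrams(doc, n)
    leaked = []
    for i, doc in enumerate(test):
        grams = word_ngrams(doc, n)
        if not grams:
            continue  # shorter than n tokens: cannot be checked this way
        ratio = len(grams & train_grams) / len(grams)
        if ratio >= threshold:
            leaked.append((i, ratio))
    return leaked

train = ["The quick brown fox jumps over the lazy dog near the river bank today."]
test = [
    "the quick brown fox jumps over the lazy dog near the river bank today!",
    "A completely different sentence about model evaluation and held out data splits here.",
]
print(find_leaked(train, test))  # [(0, 1.0)]
```

Longer windows reduce false alarms from common phrases; shorter windows catch more partial overlap at the cost of more noise.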

Evaluation Criteria for Buyers

- Duplicate detection depth: Check support for exact duplicates, fuzzy duplicates, semantic duplicates, image similarity, text similarity, and near-duplicate clusters.

- Data type support: Evaluate text, documents, code, images, videos, audio transcripts, tabular data, and multimodal datasets.

- Scale and performance: Test whether the tool can process large datasets without excessive latency or compute cost.

- Embedding and similarity support: Look for vector search, clustering, MinHash, perceptual hashing, semantic similarity, and model-based matching.

- Training workflow fit: Confirm compatibility with LLM training, fine-tuning, RAG ingestion, computer vision, and evaluation pipelines.

- Human review controls: Check whether reviewers can inspect duplicate groups before deletion or merging.

- False positive management: Make sure useful variations are not wrongly removed as duplicates.

- False negative management: Confirm the tool can catch subtle near-duplicates, not only exact file matches.

- Governance and auditability: Look for reports, dataset lineage, decision logs, approvals, and reproducible deduplication runs.

- Integration depth: Review APIs, SDKs, export formats, notebook support, storage connectors, and ML pipeline compatibility.

- Security and privacy: Check RBAC, SSO, audit logs, encryption, retention settings, and deployment options.

- Cost control: Evaluate how much the tool reduces labeling, embedding, storage, and training cost.
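To make the exact versus near-duplicate distinction concrete, the sketch below compares two strings that differ only in casing and punctuation. Exact equality misses the pair, while Jaccard similarity over character 5-grams (shingles) scores it close to 1. The shingle size and any cutoff you apply are assumptions to tune: a lower cutoff catches more near-duplicates (fewer false negatives) but removes more legitimate variations (more false positives).

```python
# Minimal sketch: exact matching vs. character-shingle Jaccard similarity.
# k=5 is an illustrative assumption, not a recommended value.

def shingles(text: str, k: int = 5) -> set[str]:
    """Return the set of character k-grams after lowercasing and collapsing whitespace."""
    normalized = " ".join(text.lower().split())
    # Texts shorter than k yield a single shingle (the whole string).
    return {normalized[i:i + k] for i in range(max(len(normalized) - k + 1, 1))}

def jaccard(a: set[str], b: set[str]) -> float:
    """Size of the intersection divided by size of the union; two empty sets count as identical."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)

original = "Reset your password from the account settings page."
variant = "Reset your password from the Account Settings page!"
unrelated = "Invoices are emailed on the first business day of each month."

print(original == variant)  # False: exact matching misses the pair
print(round(jaccard(shingles(original), shingles(variant)), 2))
print(round(jaccard(shingles(original), shingles(unrelated)), 2))
```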

Best for: ML engineers, AI platform teams, data scientists, MLOps teams, data labeling teams, computer vision teams, LLM training teams, RAG teams, data governance teams, and enterprises preparing large datasets for model training or evaluation.

Not ideal for: very small datasets, one-time experiments with limited data, or teams that only need basic file-level duplicate removal. In simple cases, scripts, hashes, or spreadsheet cleanup may be enough.
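For those simple cases, exact deduplication can be a short script. This sketch assumes text records, treats two records as duplicates when they match after Unicode NFKC normalization, case folding, and whitespace collapsing, and keeps the first occurrence. Those normalization choices are assumptions; each one widens what counts as an "exact" match.

```python
import hashlib
import unicodedata

def normalize(text: str) -> str:
    """NFKC-normalize, case-fold, and collapse runs of whitespace."""
    text = unicodedata.normalize("NFKC", text).casefold()
    return " ".join(text.split())

def dedupe_exact(records: list[str]) -> tuple[list[str], list[int]]:
    """Keep the first occurrence of each normalized record; return kept records and dropped indices."""
    seen: set[str] = set()
    kept: list[str] = []
    dropped: list[int] = []
    for i, record in enumerate(records):
        digest = hashlib.sha256(normalize(record).encode("utf-8")).hexdigest()
        if digest in seen:
            dropped.append(i)
        else:
            seen.add(digest)
            kept.append(record)
    return kept, dropped

records = ["Hello  World", "hello world", "Goodbye"]
kept, dropped = dedupe_exact(records)
print(kept)     # ['Hello  World', 'Goodbye']
print(dropped)  # [1]
```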

What’s Changed in Data Deduplication for Model Training

- Deduplication is now model-quality work, not just storage cleanup. Duplicate data can increase memorization, distort evaluation, and make models look better than they really are.

- Near-duplicate detection matters more than exact matching. AI teams need to catch paraphrased documents, resized images, repeated frames, copied prompts, and semantically similar samples.

- RAG pipelines need deduplication before embedding. Duplicate documents increase vector database cost and can make retrieval results repetitive or noisy.

- LLM fine-tuning data needs stronger cleanup. Repeated examples can overweight certain behaviors, tones, labels, or policies during fine-tuning.

- Evaluation leakage is a major risk. If the same or similar examples appear in both training and test sets, evaluation results become unreliable.

- Multimodal deduplication is becoming common. Teams need to deduplicate images, videos, documents, text chunks, audio transcripts, and mixed data sources.

- Embedding-based clustering catches what hashing misses. Semantic similarity can identify reworded or paraphrased duplicates that exact hashes and lexical fuzzy matching do not detect.

- Human review remains important. Automated systems may remove useful variations, so high-risk deduplication decisions often need reviewer approval.

- Governance is now part of deduplication. Teams need reproducible runs, audit logs, dataset versions, and approval records.

- Cost optimization is a key driver. Removing repeated data reduces annotation cost, vector storage, training tokens, compute, and review workload.

- Privacy risk increases with duplication. Repeated sensitive records can increase exposure across prompts, embeddings, logs, and training files.

- Open-source and platform workflows are both growing. Developers often use libraries and notebooks, while enterprises prefer governed platforms with dashboards and review controls.
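The embedding-based approach can be sketched with cosine similarity. This assumes you already have one embedding vector per record from some embedding model (the toy 3-dimensional vectors below are hypothetical stand-ins) and uses a greedy rule: a record is dropped if it is at least `threshold` similar to a record already kept. The 0.95 threshold is an assumption, the result depends on input order, and the pairwise loop is O(n²), so large datasets usually need an approximate nearest-neighbor index instead.

```python
import math

def cosine(u: list[float], v: list[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector has zero length."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0 or norm_v == 0:
        return 0.0
    return dot / (norm_u * norm_v)

def semantic_dedupe(embeddings: list[list[float]],
                    threshold: float = 0.95) -> tuple[list[int], dict[int, int]]:
    """Greedy pass: keep index i unless it is >= threshold similar to an already-kept index."""
    kept: list[int] = []
    duplicate_of: dict[int, int] = {}
    for i, emb in enumerate(embeddings):
        match = next((k for k in kept if cosine(emb, embeddings[k]) >= threshold), None)
        if match is None:
            kept.append(i)
        else:
            duplicate_of[i] = match  # record which kept item it duplicates
    return kept, duplicate_of

# Hypothetical embeddings: records 0 and 1 are paraphrases, record 2 is unrelated.
vectors = [[1.0, 0.0, 0.0], [0.99, 0.05, 0.0], [0.0, 1.0, 0.0]]
print(semantic_dedupe(vectors))  # ([0, 2], {1: 0})
```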

Quick Buyer Checklist

- Does the tool detect exact duplicates and near-duplicates?

- Can it handle your data type: text, documents, images, videos, code, tabular data, or multimodal records?

- Does it support semantic similarity, embeddings, clustering, hashing, or fuzzy matching?

- Can it deduplicate before fine-tuning, RAG ingestion, vectorization, or evaluation?

- Does it help prevent training-test leakage?

- Can humans review duplicate groups before data is deleted or merged?

- Does it provide dataset reports, quality metrics, and audit trails?

- Can it integrate with labeling tools, storage systems, notebooks, and ML pipelines?

- Does it support hosted, self-hosted, open-source, or hybrid workflows?

- Are RBAC, audit logs, encryption, and retention controls available?

- Can it preserve useful variations while removing true duplicates?

- Does it reduce storage, labeling, embedding, and training cost?

- Can duplicate decisions be exported and reproduced later?

- Does it avoid vendor lock-in through open formats and APIs?
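The review and reproducibility questions in this checklist can be illustrated with a small sketch. It assumes you already have (id, id) match pairs from some detector: union-find merges them into duplicate groups, a hypothetical keep-the-smallest-id rule proposes a keeper, and the proposal is serialized as JSON together with the run configuration so reviewers can inspect, export, and reproduce it. The record layout and the `pending_review` status are illustrative, not a standard format.

```python
import json
from collections import defaultdict

def group_duplicates(ids: list[str], pairs: list[tuple[str, str]]) -> list[list[str]]:
    """Merge pairwise matches into groups with union-find; singletons are omitted.
    Every id that appears in `pairs` must also be listed in `ids`."""
    parent = {i: i for i in ids}

    def find(x: str) -> str:
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path halving keeps trees shallow
            x = parent[x]
        return x

    for a, b in pairs:
        root_a, root_b = find(a), find(b)
        if root_a != root_b:
            # Attach the larger root under the smaller one so roots are deterministic.
            parent[max(root_a, root_b)] = min(root_a, root_b)

    groups: dict[str, list[str]] = defaultdict(list)
    for i in ids:
        groups[find(i)].append(i)
    return sorted(sorted(g) for g in groups.values() if len(g) > 1)

def decision_record(groups: list[list[str]], config: dict) -> str:
    """Serialize proposed keep/drop decisions with the run config, using stable key order."""
    proposals = [{"keep": g[0], "drop": g[1:], "status": "pending_review"} for g in groups]
    return json.dumps({"config": config, "groups": proposals}, sort_keys=True, indent=2)

ids = ["doc-a", "doc-b", "doc-c", "doc-d", "doc-e", "doc-f"]
pairs = [("doc-a", "doc-b"), ("doc-c", "doc-b"), ("doc-d", "doc-e")]
groups = group_duplicates(ids, pairs)
print(groups)  # [['doc-a', 'doc-b', 'doc-c'], ['doc-d', 'doc-e']]
print(decision_record(groups, {"method": "jaccard", "threshold": 0.8}))
```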

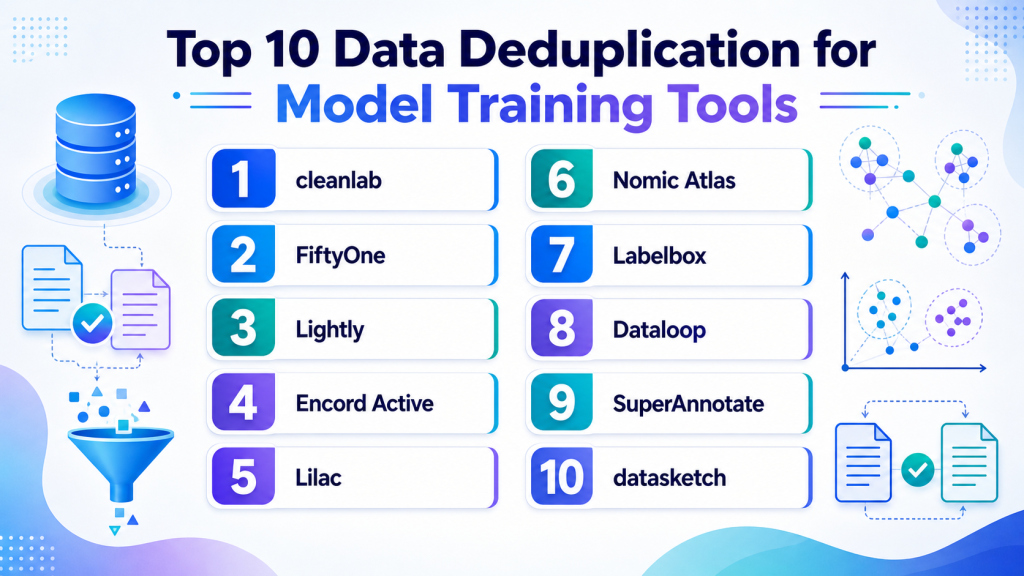

Top 10 Data Deduplication for Model Training Tools

1 — cleanlab

One-line verdict: Best for ML teams needing data quality checks, duplicate detection, label issue discovery, and cleaner training datasets.

Short description:

cleanlab helps teams find data quality problems such as label errors, outliers, duplicates, ambiguous examples, and low-confidence samples. It is useful for AI teams that want to improve training data quality before model development, fine-tuning, or evaluation.

Standout Capabilities

- Detects data quality issues that can weaken model performance.

- Useful for identifying duplicate or suspiciously similar samples.

- Helps uncover mislabeled, ambiguous, noisy, and low-value records.

- Supports data-centric AI workflows for improving datasets before training.

- Can help prioritize samples for human review.

- Useful for structured, text, and image-related workflows depending on setup.

- Developer-friendly for teams that want programmatic control.

- Helpful when deduplication is part of broader dataset cleaning.
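One signal where deduplication and label quality meet is the same input appearing more than once with different labels. The plain-Python sketch below illustrates that check on (text, label) pairs; it does not use cleanlab's API, and the case and whitespace normalization is an assumption. cleanlab's own checks typically work from model predictions and feature embeddings and cover a broader set of issues.

```python
from collections import defaultdict

def conflicting_duplicates(examples: list[tuple[str, str]]) -> dict[str, list[str]]:
    """Return normalized inputs that appear with more than one distinct label."""
    labels_by_text: dict[str, set[str]] = defaultdict(set)
    for text, label in examples:
        key = " ".join(text.lower().split())  # assumed normalization: case + whitespace
        labels_by_text[key].add(label)
    return {text: sorted(labels) for text, labels in labels_by_text.items() if len(labels) > 1}

examples = [
    ("Where is my order?", "shipping"),
    ("where is my  order?", "billing"),
    ("Cancel my plan", "billing"),
    ("cancel my plan", "billing"),
]
print(conflicting_duplicates(examples))  # {'where is my order?': ['billing', 'shipping']}
```

Duplicates with consistent labels (like the "cancel my plan" pair) are candidates for plain removal; duplicates with conflicting labels usually need a reviewer to decide which label is correct.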

AI-Specific Depth

- Model support: Model-agnostic and BYO model workflows may be supported depending on setup.

- RAG / knowledge integration: Varies / N/A; can support dataset cleanup before ingestion.

- Evaluation: Useful for improving evaluation datasets by detecting noisy or repeated examples.

- Guardrails: N/A for runtime prompt-injection defense; useful as a data quality guardrail.

- Observability: Data quality scores and issue reports may support dataset observability.

Pros

- Strong focus on data quality, not only duplicate matching.

- Useful for training data cleanup before modeling.

- Good fit for technical ML and data science teams.

Cons

- Requires understanding of datasets, model outputs, and quality signals.

- Not a full annotation or data governance platform by itself.

- Enterprise deployment and security details should be verified.

Security & Compliance

Security depends on deployment and plan. Buyers should verify SSO, RBAC, audit logs, encryption, retention controls, residency, and certifications directly. Certifications: Not publicly stated.

Deployment & Platforms

- Open-source Python library for developer and notebook workflows.

- Web or enterprise platform options may vary.

- Cloud, local, or enterprise deployment: Varies / N/A.

- Windows, macOS, and Linux support depends on setup.

Integrations & Ecosystem

cleanlab fits into ML pipelines where teams work with labels, predictions, embeddings, and dataset metadata. It can be used before annotation, after annotation, before training, or during model improvement loops.

- Native Python ecosystem support.

- Can work with model outputs and datasets.

- Notebook and ML pipeline workflows may be supported.

- Can complement labeling and review tools.

- Useful for dataset quality reporting.

- Enterprise integration details should be verified.

Pricing Model

Open-source and commercial options may be available. Exact enterprise pricing is not publicly stated.

Best-Fit Scenarios

- Cleaning noisy training datasets.

- Finding duplicate or low-value samples before model training.

- Improving model quality through data-centric workflows.

2 — FiftyOne

One-line verdict: Best for computer vision teams needing visual dataset exploration, duplicate detection, and model failure analysis.

Short description:

FiftyOne helps teams explore, visualize, filter, and curate image and video datasets. It is especially useful for computer vision teams that need to find duplicate images, near-duplicate frames, outliers, and model failure cases.

Standout Capabilities

- Strong visual dataset exploration for images and videos.

- Helps inspect labels, predictions, embeddings, and metadata.

- Useful for finding duplicate and near-duplicate visual samples.

- Can support similarity search and dataset slicing workflows.

- Helps identify outliers, rare cases, and repeated scenes.

- Useful for building cleaner evaluation and training datasets.

- Developer-friendly for notebook-based computer vision workflows.

- Strong fit for visual AI data curation.
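Near-duplicate image detection is often built on perceptual hashes. The sketch below implements average hashing (aHash), one simple perceptual hash, in plain Python. It assumes each image has already been converted to grayscale and downscaled to 8x8 (for example with an image library), and it is not FiftyOne's method. Small Hamming distances between hashes suggest visually similar images; the distance cutoff you use is an assumption to tune.

```python
def average_hash(pixels: list[list[int]]) -> int:
    """aHash: one bit per pixel, set when the pixel is brighter than the image mean."""
    flat = [p for row in pixels for p in row]
    mean = sum(flat) / len(flat)
    bits = 0
    for p in flat:
        bits = (bits << 1) | (1 if p > mean else 0)
    return bits

def hamming(a: int, b: int) -> int:
    """Number of differing bits between two hashes."""
    return bin(a ^ b).count("1")

# Hypothetical 8x8 grayscale images (values 0-255).
gradient = [[(r * 8 + c) * 4 for c in range(8)] for r in range(8)]
brighter = [[v + 3 for v in row] for row in gradient]   # small global brightness change
inverted = [[252 - v for v in row] for row in gradient]  # visually very different

h = average_hash(gradient)
print(hamming(h, average_hash(brighter)))  # 0
print(hamming(h, average_hash(inverted)))  # 64
```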

AI-Specific Depth

- Model support: BYO model and open-source workflows may be supported through integration.

- RAG / knowledge integration: N/A for most visual dataset workflows.

- Evaluation: Supports model prediction review, dataset slicing, and failure-case analysis.

- Guardrails: N/A for runtime AI guardrails; useful for dataset quality control.

- Observability: Dataset-level observability and visual model behavior analysis may be supported.

Pros

- Excellent for visual duplicate detection and dataset inspection.

- Strong fit for image and video model workflows.

- Useful for finding repeated frames, scenes, and visual clusters.

Cons

- Less focused on LLM text deduplication.

- Requires technical setup for advanced usage.

- Enterprise governance details should be verified.

Security & Compliance

Security depends on deployment and configuration. Buyers should verify SSO, RBAC, audit logs, encryption, retention controls, residency, and certifications directly. Certifications: Not publicly stated.

Deployment & Platforms

- Python SDK plus a browser-based app for dataset inspection.

- Local and cloud-style workflows vary by setup.

- Windows, macOS, and Linux support depends on environment.

- Self-hosted or enterprise options: Varies / N/A.

Integrations & Ecosystem

FiftyOne works well in ML engineering workflows where visual datasets, labels, predictions, and embeddings need to be inspected together.

- Native Python ecosystem support.

- Works with images, videos, labels, predictions, and embeddings.

- Supports dataset slicing and filtering.

- Can connect with labeling workflows through exports.

- Useful with notebooks and ML experiments.

- Enterprise ecosystem details should be verified.

Pricing Model

Open-source and enterprise options may be available. Exact enterprise pricing is not publicly stated.

Best-Fit Scenarios

- Removing duplicate images and video frames.

- Curating computer vision training datasets.

- Building cleaner visual evaluation datasets.

3 — Lightly

One-line verdict: Best for visual AI teams reducing duplicate frames, redundant images, and low-value visual data.

Short description:

Lightly focuses on visual data curation, active learning, and dataset selection. It helps computer vision teams select diverse, representative, and high-value images or frames while reducing duplicate and redundant visual data.

Standout Capabilities

- Strong focus on visual data selection and deduplication.

- Helps reduce redundant images and video frames before labeling.

- Useful for large unlabeled image and video datasets.

- Can support embedding-based sample selection.

- Helps prioritize diverse and useful data for annotation.

- Reduces repeated labeling of near-identical visual samples.

- Good fit for computer vision cost optimization.

- Useful when visual data volume is very high.
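Diversity-based selection is one way to avoid labeling near-identical frames. The sketch below uses greedy farthest-point selection (also called k-center greedy) over embedding vectors: each step picks the sample farthest from everything already selected, so near-duplicates of selected samples are picked last. It is a generic technique shown in plain Python, not Lightly's algorithm or API; the 2-D vectors and the budget are hypothetical.

```python
import math

def farthest_point_selection(embeddings: list[list[float]], budget: int,
                             start: int = 0) -> list[int]:
    """Greedily select up to `budget` indices that are spread out in embedding space."""
    if budget <= 0 or not embeddings:
        return []
    selected = [start]
    # Distance from each sample to its nearest already-selected sample.
    min_dist = [math.dist(e, embeddings[start]) for e in embeddings]
    while len(selected) < min(budget, len(embeddings)):
        next_idx = max(range(len(embeddings)), key=lambda i: min_dist[i])
        if min_dist[next_idx] == 0.0:
            break  # every remaining sample exactly duplicates a selected one
        selected.append(next_idx)
        for i, e in enumerate(embeddings):
            min_dist[i] = min(min_dist[i], math.dist(e, embeddings[next_idx]))
    return selected

# Hypothetical frame embeddings: (0, 1) and (2, 3) are near-duplicate pairs.
frames = [[0.0, 0.0], [0.0, 0.1], [10.0, 0.0], [10.0, 0.1], [0.0, 10.0]]
print(farthest_point_selection(frames, budget=3))  # [0, 3, 4]
```

With a budget of 3, one frame from each near-duplicate pair is chosen plus the isolated frame, which is the behavior that makes this family of methods useful before annotation.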

AI-Specific Depth

- Model support: BYO model and embedding workflows may be supported depending on setup.

- RAG / knowledge integration: N/A.

- Evaluation: Can help build better training and evaluation sets through curated sample selection.

- Guardrails: N/A for runtime guardrails; useful for dataset quality control.

- Observability: Dataset diversity and selection visibility may be available; exact metrics vary.

Pros

- Strong for reducing visual data redundancy.

- Helps lower labeling and storage costs.

- Useful for image and video-heavy workflows.

Cons

- Less ideal for general text or tabular deduplication.

- Requires integration into data and annotation pipelines.

- Enterprise details should be verified directly.

Security & Compliance

Buyers should verify SSO, RBAC, audit logs, encryption, retention controls, residency, and certifications directly. Certifications: Not publicly stated.

Deployment & Platforms

- Web and developer workflows may be available.

- Cloud or self-hosted options: Varies / N/A.

- Windows, macOS, and Linux support depends on implementation.

- Mobile platforms: Varies / N/A.

Integrations & Ecosystem

Lightly fits visual ML pipelines where teams need to reduce duplicate frames, select high-value samples, and connect curated data to annotation or training workflows.

- API or Python-based workflows may be available.

- Works with visual datasets and embeddings.

- Can help select samples before annotation.

- May integrate with labeling tools and storage systems.

- Useful for model training pipelines.

- Export and pipeline support should be tested.

Pricing Model

Commercial, tiered, or enterprise options may be available. Exact pricing is not publicly stated.

Best-Fit Scenarios

- Deduplicating large visual datasets.

- Selecting diverse image and video samples.

- Reducing annotation cost for computer vision projects.

4 — Encord Active

One-line verdict: Best for visual AI teams needing dataset quality analysis, duplicate discovery, and curation workflows.

Short description:

Encord Active helps computer vision teams analyze and curate visual datasets. It is useful for finding duplicates, outliers, low-quality samples, model failure cases, and data quality problems before training or evaluation.

Standout Capabilities

- Strong focus on visual dataset quality analysis.

- Helps identify duplicates, outliers, and data issues.

- Useful for model failure discovery and dataset debugging.

- Can support active learning and data curation workflows.

- Helps prioritize samples for annotation or review.

- Useful for image and video-heavy AI projects.

- Supports better training and evaluation dataset preparation.

- Good fit for production visual AI workflows.

AI-Specific Depth

- Model support: BYO model and prediction analysis may be supported depending on setup.

- RAG / knowledge integration: N/A.

- Evaluation: Supports dataset and model performance analysis for visual workflows.

- Guardrails: N/A for runtime guardrails; useful for data quality governance.

- Observability: Dataset quality and model behavior insights may be available.

Pros

- Strong fit for visual dataset debugging.

- Useful for duplicate and outlier discovery.

- Helps improve dataset quality before training.

Cons

- Less suitable for LLM-only or text-only deduplication.

- Works best with visual metadata and model predictions.

- Enterprise details should be verified directly.

Security & Compliance

Enterprise security features may be available, but buyers should verify SSO, RBAC, audit logs, encryption, retention, residency, and certifications directly. Certifications: Not publicly stated.

Deployment & Platforms

- Web-based workflows may be available.

- Cloud deployment.

- Self-hosted or hybrid: Varies / N/A.

- Desktop and mobile: Varies / N/A.

Integrations & Ecosystem

Encord Active fits into visual AI pipelines where data quality, annotation, evaluation, and model improvement need to be connected.

- May integrate with annotation workflows.

- Supports visual dataset analysis.

- Can use model predictions and metadata.

- Helps create curated datasets.

- Useful for model failure review.

- Export and API support should be tested directly.

Pricing Model

Typically subscription or enterprise-based. Exact pricing is not publicly stated.

Best-Fit Scenarios

- Visual duplicate discovery.

- Computer vision dataset cleanup.

- Building cleaner image and video evaluation sets.

5 — Lilac

One-line verdict: Best for LLM teams exploring, filtering, clustering, and deduplicating text datasets before training.

Short description:

Lilac is an open-source data exploration and curation tool often used for LLM and text-heavy datasets. It helps teams inspect large text datasets, find patterns, filter low-quality data, and identify similar or repeated content.

Standout Capabilities

- Useful for exploring and curating text-heavy datasets.

- Helps teams inspect patterns, clusters, and repeated content.

- Supports dataset filtering for LLM training preparation.

- Developer-friendly for AI teams working with large text collections.

- Can help identify low-quality, toxic, sensitive, or repetitive data depending on setup.

- Useful before fine-tuning or evaluation dataset creation.

- Good fit for LLM data analysis workflows.

- Helps teams understand dataset composition before training.
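Repeated content in text corpora often shows up as boilerplate lines (navigation text, cookie notices, signatures) copied across many documents. A minimal plain-Python sketch, not Lilac's API: count each distinct line once per document, flag lines that appear in at least `min_docs` documents, and strip them. The line-level granularity and the `min_docs` cutoff are assumptions; real corpora usually need a much higher cutoff than the toy value used here.

```python
from collections import Counter

def repeated_lines(docs: list[str], min_docs: int = 2) -> dict[str, int]:
    """Map each non-empty line to the number of documents it appears in, if >= min_docs."""
    counts: Counter = Counter()
    for doc in docs:
        # A set, so a line repeated within one document is counted once.
        counts.update({line.strip() for line in doc.splitlines() if line.strip()})
    return {line: n for line, n in counts.items() if n >= min_docs}

def strip_repeated(doc: str, repeated: dict[str, int]) -> str:
    """Drop lines flagged as repeated boilerplate, keeping the rest in order."""
    return "\n".join(line for line in doc.splitlines() if line.strip() not in repeated)

docs = ["Intro A\nCookie notice\nBody A", "Intro B\nCookie notice\nBody B", "Body C"]
boilerplate = repeated_lines(docs)
print(boilerplate)                           # {'Cookie notice': 2}
print(strip_repeated(docs[0], boilerplate))  # "Intro A" and "Body A" on two lines
```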

AI-Specific Depth

- Model support: Open-source and BYO workflows may be supported through implementation.

- RAG / knowledge integration: Can help inspect and filter text before RAG ingestion.

- Evaluation: Useful for preparing cleaner evaluation and fine-tuning datasets.

- Guardrails: Varies / N/A; can help identify problematic content before training.

- Observability: Dataset exploration and clustering visibility may be available.

Pros

- Strong fit for LLM dataset exploration.

- Useful for text filtering and duplicate review.

- Open-source-friendly for technical teams.

Cons

- Requires technical setup and workflow design.

- Not a full enterprise governance platform by itself.

- Security and compliance controls depend on deployment.

Security & Compliance

Security depends on deployment and configuration. SSO, RBAC, audit logs, encryption, retention controls, residency, and certifications should be verified against the user’s own setup. Certifications: Not publicly stated.

Deployment & Platforms

- Open-source and developer workflows.

- Local or self-hosted setup may be possible.

- Cloud deployment depends on implementation.

- Windows, macOS, and Linux support depends on environment.

Integrations & Ecosystem

Lilac fits well into LLM data preparation workflows where teams need to inspect, filter, cluster, and clean text before fine-tuning or evaluation.

- Open-source ecosystem support.

- Text dataset exploration.

- Filtering and clustering workflows.

- Can support local AI data preparation.

- May connect with notebooks and dataset tools.

- Enterprise integration requires custom setup.

Pricing Model

Open-source. Commercial support or managed options: Varies / N/A.

Best-Fit Scenarios

- LLM dataset exploration and deduplication.

- Filtering repeated or low-quality text samples.

- Preparing fine-tuning and evaluation datasets.

6 — Nomic Atlas

One-line verdict: Best for teams needing embedding-based dataset maps, semantic clusters, and duplicate discovery.

Short description:

Nomic Atlas helps teams visualize, search, and explore large datasets using embeddings and semantic maps. It is useful for identifying similar records, repeated clusters, near-duplicates, and dataset patterns before training or evaluation.

Standout Capabilities

- Embedding-based dataset exploration and visualization.

- Helps identify semantic clusters and similar examples.

- Useful for text, image, and embedding-based workflows depending on setup.

- Can support duplicate and near-duplicate discovery.

- Helps teams understand dataset composition.

- Useful for LLM and multimodal dataset review.

- Supports dataset search and filtering workflows.

- Helpful for spotting overrepresented groups or repetitive data.

AI-Specific Depth

- Model support: BYO embeddings and model-adjacent workflows may be supported.

- RAG / knowledge integration: Can help inspect documents before RAG ingestion.

- Evaluation: Useful for building diverse evaluation datasets and finding leakage patterns.

- Guardrails: Varies / N/A; can help review sensitive or repetitive clusters.

- Observability: Dataset maps and cluster visibility may support dataset observability.

Pros

- Strong for semantic duplicate discovery.

- Useful for dataset visualization and clustering.

- Good fit for LLM and embedding-heavy workflows.

Cons

- Deduplication decisions may still require human review.

- Advanced workflows require understanding embeddings.

- Security and deployment details should be verified.

Security & Compliance

Buyers should verify SSO, RBAC, audit logs, encryption, retention controls, residency, and certifications directly. Certifications: Not publicly stated.

Deployment & Platforms

- Web-based and developer workflows may be available.

- Cloud deployment: Varies / N/A.

- Self-hosted or private deployment: Varies / N/A.

- Platform support depends on implementation.

Integrations & Ecosystem

Nomic Atlas fits workflows where embeddings, dataset maps, and semantic search help teams inspect large datasets before model training or retrieval.

- Embedding workflow support may be available.

- Dataset visualization and search.

- Can support text and image exploration depending on setup.

- Useful for dataset curation and filtering.

- API or notebook workflows may be available.

- Integration details should be validated.

Pricing Model

Open-source, hosted, or enterprise options may be available. Exact pricing is not publicly stated.

Best-Fit Scenarios

- Semantic duplicate discovery in text datasets.

- Dataset map review before training.

- Finding repeated clusters in embedding spaces.

7 — Labelbox

One-line verdict: Best for teams connecting deduplication, data curation, annotation, and human review workflows.

Short description:

Labelbox is an AI data platform used for labeling, data curation, review, and dataset management. It can help teams organize and review selected datasets, especially when duplicate management is tied to annotation and model feedback workflows.

Standout Capabilities

- Supports data curation and labeling workflows.

- Useful across images, text, documents, and AI feedback workflows.

- Helps teams manage annotation and review processes.

- Can support dataset organization and quality workflows.

- Useful when duplicate review requires human validation.

- Good fit for large labeling operations.

- Can connect data curation with model improvement.

- Helps teams structure AI data operations.

AI-Specific Depth

- Model support: Varies / N/A; model-assisted workflows may be supported.

- RAG / knowledge integration: Varies / N/A; can support review of documents if configured.

- Evaluation: Human review and feedback workflows may support evaluation datasets.

- Guardrails: Workflow permissions and review controls may help; runtime guardrails vary.

- Observability: Labeling and quality analytics may be available; token metrics vary.

Pros

- Strong fit for annotation plus data curation.

- Useful when humans must review duplicate groups.

- Good for teams managing large AI data workflows.

Cons

- Not a specialist deduplication engine; deduplication is one part of a broader data platform.

- Advanced features may depend on plan.

- BYO deduplication workflows should be tested in a pilot.

Security & Compliance

Enterprise controls may be available, but buyers should verify SSO, RBAC, audit logs, encryption, retention controls, residency, and certifications directly. Certifications: Not publicly stated.

Deployment & Platforms

- Web-based platform.

- Cloud deployment.

- Self-hosted or hybrid: Varies / N/A.

- Desktop and mobile: Varies / N/A.

Integrations & Ecosystem

Labelbox can fit into AI pipelines where data curation, duplicate review, annotation, and retraining need to work together.

- API access may be available.

- Cloud storage integrations may be supported.

- Export options vary by data type.

- Human review workflows support dataset improvement.

- Can connect with model-assisted workflows.

- Useful for ML and AI data operations.

Pricing Model

Typically tiered, usage-based, or enterprise-based. Exact pricing is not publicly stated.

Best-Fit Scenarios

- Duplicate review before annotation.

- Human validation of near-duplicate clusters.

- AI data operations with labeling workflows.

8 — Dataloop

One-line verdict: Best for teams needing AI data operations, workflow automation, and curated dataset management.

Short description:

Dataloop provides data annotation, automation, dataset management, and AI data operations workflows. It is useful when deduplication is part of a larger pipeline involving selection, labeling, review, automation, and model improvement.

Standout Capabilities

- Supports annotation and AI data operations in one environment.

- Useful for workflow automation around selected datasets.

- Can support human-in-the-loop and model-in-the-loop processes.

- Helps manage datasets, tasks, reviews, and pipeline steps.

- Suitable for visual and multimodal AI workflows.

- Useful when duplicate review connects to labeling operations.

- Good fit for teams needing operational control.

- Helps connect curation, review, and retraining processes.

AI-Specific Depth

- Model support: Varies / N/A; model-in-the-loop workflows may be supported.

- RAG / knowledge integration: Varies / N/A.

- Evaluation: Review workflows may support evaluation pipelines.

- Guardrails: Workflow governance and permissions may help; runtime guardrails vary.

- Observability: Workflow and project analytics may be available.

Pros

- Strong for operational AI data workflows.

- Useful for automation and review pipelines.

- Supports teams scaling beyond manual dataset cleanup.

Cons

- May require implementation effort.

- Smaller teams may not need full workflow depth.

- Exact deduplication-specific capabilities should be tested.

Security & Compliance

Enterprise features may be available, but buyers should verify SSO, RBAC, audit logs, encryption, retention controls, residency, and certifications directly. Certifications: Not publicly stated.

Deployment & Platforms

- Web-based platform.

- Cloud deployment.

- Hybrid or private deployment: Varies / N/A.

- Desktop and mobile: Varies / N/A.

Integrations & Ecosystem

Dataloop is useful when deduplication connects to larger AI data operations, including annotation queues, review workflows, model outputs, and retraining pipelines.

- API and SDK capabilities may be available.

- Automation workflows may be supported.

- Dataset management features support pipeline operations.

- Cloud storage integrations may be available.

- Export formats vary by use case.

- Custom workflow support may be available.

Pricing Model

Typically subscription, usage-based, or enterprise-based. Exact pricing is not publicly stated.

Best-Fit Scenarios

- AI data operations with duplicate review.

- Workflow automation around curated datasets.

- Large labeling and review pipelines.

9 — SuperAnnotate

One-line verdict: Best for annotation teams needing multimodal review, dataset organization, and quality workflows.

Short description:

SuperAnnotate supports annotation, data management, and review workflows for AI teams. It is useful when data deduplication needs to connect with labeling, QA, human review, and multimodal dataset operations.

Standout Capabilities

- Supports visual and multimodal annotation workflows.

- Helps manage datasets, labeling projects, and review operations.

- Can support AI-assisted labeling and human review.

- Useful for prioritizing selected samples for annotation.

- Supports collaboration across annotators, reviewers, and managers.

- Helps maintain quality control in dataset workflows.

- Useful when duplicate decisions require reviewer input.

- Suitable for teams needing annotation depth and structure.

AI-Specific Depth

- Model support: Varies / N/A; AI-assisted workflows may be supported.

- RAG / knowledge integration: N/A for most use cases.

- Evaluation: Human review and QA workflows may support evaluation datasets.

- Guardrails: Workflow controls and reviewer permissions may help; runtime guardrails vary.

- Observability: Annotation and review analytics may be available.

Pros

- Strong for annotation operations and review.

- Useful when deduplication connects to labeling.

- Good fit for visual and multimodal datasets.

Cons

- Not a pure deduplication engine.

- Setup effort may be needed for complex workflows.

- Exact data curation features should be validated.

Security & Compliance

Security controls may include administrative features, but buyers should verify SSO, RBAC, audit logs, encryption, retention controls, residency, and certifications directly. Certifications: Not publicly stated.

Deployment & Platforms

- Web-based platform.

- Cloud deployment.

- Self-hosted or private deployment: Varies / N/A.

- Desktop and mobile: Varies / N/A.

Integrations & Ecosystem

SuperAnnotate can connect labeling, review, and dataset operations for teams that need structured human-in-the-loop workflows.

- API support may be available.

- Dataset import and export options may be supported.

- Cloud storage connections may be available.

- Review workflows support QA.

- AI-assisted annotation may be supported.

- Integration depth varies by plan.

Pricing Model

Typically tiered or enterprise-based. Exact pricing is not publicly stated.

Best-Fit Scenarios

- Duplicate review before annotation.

- Multimodal dataset quality workflows.

- Teams needing annotation plus dataset management.

10 — datasketch

One-line verdict: Best for developers needing open-source MinHash and similarity search for large-scale deduplication.

Short description:

datasketch is an open-source Python library commonly used for approximate similarity search with MinHash and locality-sensitive hashing (LSH). It is useful for developers building custom deduplication pipelines over large text and document collections, typically represented as sets of shingles.
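datasketch provides MinHash and MinHashLSH classes for this pattern. To show what happens underneath, the sketch below re-implements the idea in plain Python: each document becomes a set of character shingles, MinHash compresses that set into a fixed-length signature whose agreement rate estimates Jaccard similarity, and LSH banding buckets signatures so only likely near-duplicates are compared. It is an illustration, not datasketch's implementation; the BLAKE2b base hash, 128 permutations, and 32 bands of 4 rows are assumptions. With b bands of r rows, pairs above a Jaccard similarity of roughly (1/b)^(1/r), about 0.42 here, are likely to become candidates.

```python
import hashlib
import random
from collections import defaultdict
from itertools import combinations

MERSENNE_PRIME = (1 << 61) - 1

def shingles(text: str, k: int = 5) -> set[str]:
    """Character k-grams after lowercasing and collapsing whitespace."""
    t = " ".join(text.lower().split())
    return {t[i:i + k] for i in range(max(len(t) - k + 1, 1))}

def make_permutations(num_perm: int, seed: int = 1) -> list[tuple[int, int]]:
    """Random (a, b) pairs for hash functions h(x) = (a*x + b) mod p."""
    rng = random.Random(seed)
    return [(rng.randrange(1, MERSENNE_PRIME), rng.randrange(0, MERSENNE_PRIME))
            for _ in range(num_perm)]

def minhash(shingle_set: set[str], perms: list[tuple[int, int]]) -> list[int]:
    """Signature: for each hash function, the minimum hash value over all shingles."""
    base = [int.from_bytes(hashlib.blake2b(s.encode("utf-8"), digest_size=8).digest(), "little")
            for s in shingle_set]
    return [min((a * x + b) % MERSENNE_PRIME for x in base) for a, b in perms]

def estimated_jaccard(sig1: list[int], sig2: list[int]) -> float:
    """Fraction of signature positions that agree."""
    return sum(x == y for x, y in zip(sig1, sig2)) / len(sig1)

def lsh_candidates(signatures: dict[str, list[int]], bands: int,
                   rows: int) -> set[tuple[str, str]]:
    """Bucket each band of each signature; keys sharing any bucket become candidate pairs."""
    buckets: dict = defaultdict(set)
    for key, sig in signatures.items():
        if len(sig) != bands * rows:
            raise ValueError("bands * rows must equal the signature length")
        for band in range(bands):
            buckets[(band, tuple(sig[band * rows:(band + 1) * rows]))].add(key)
    pairs: set[tuple[str, str]] = set()
    for keys in buckets.values():
        pairs.update(combinations(sorted(keys), 2))
    return pairs

docs = {
    "a": "Reset your password from the account settings page.",
    "b": "Reset your password from the Account Settings page!",
    "c": "Invoices are emailed on the first business day of each month.",
}
perms = make_permutations(128)
sigs = {key: minhash(shingles(text), perms) for key, text in docs.items()}
candidates = lsh_candidates(sigs, bands=32, rows=4)
print(candidates)
print(round(estimated_jaccard(sigs["a"], sigs["b"]), 2))
```

Only candidate pairs need an exact similarity check or human review, which is what keeps this approach tractable on large corpora compared with comparing every pair.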

Standout Capabilities

- Open-source Python library for similarity search and deduplication workflows.

- Useful for MinHash and locality-sensitive hashing patterns.

- Can help detect near-duplicate documents and text samples.

- Developer-friendly for custom large-scale pipelines.

- Useful when teams need control over deduplication logic.

- Can be combined with ETL, storage, and ML workflows.

- Good fit for technical teams handling text-heavy datasets.

- Flexible for building internal preprocessing systems.

AI-Specific Depth

- Model support: Open-source and BYO workflows through custom implementation.

- RAG / knowledge integration: Can deduplicate documents before RAG ingestion.

- Evaluation: Custom evaluation is required to measure precision and recall.

- Guardrails: N/A for runtime guardrails; useful as a preprocessing data quality control.

- Observability: Requires custom logging, reporting, and monitoring.

Pros

- Lightweight and flexible for developers.

- Useful for near-duplicate text detection.

- Good for custom preprocessing pipelines.

Cons

- Requires engineering expertise.

- No built-in enterprise governance layer.

- Human review and dashboards require custom work.

Security & Compliance

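Security depends on the user's deployment. As a self-managed open-source library, datasketch does not itself provide SSO, RBAC, audit logs, encryption, retention controls, or data residency controls; these come from the environment it runs in. Certifications: Not publicly stated.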

Deployment & Platforms

- Python library.

- Local or self-managed deployment.

- Windows, macOS, and Linux, wherever a supported Python environment is available.

- Cloud deployment depends on user implementation.

Integrations & Ecosystem

datasketch is best for technical teams building custom deduplication pipelines where approximate similarity and near-duplicate detection are required.

- Python ecosystem support.

- ETL pipeline integration through custom code.

- Works with document shingles and similarity workflows.

- Can be combined with data lakes and storage systems.

- Useful for RAG preprocessing.

- Enterprise features require custom implementation.

Pricing Model

Open-source. Commercial support: Varies / N/A.

Best-Fit Scenarios

- Near-duplicate text detection.

- Custom document deduplication before RAG ingestion.

- Developer-built preprocessing pipelines.

Comparison Table

| Tool Name | Best For | Deployment | Model Flexibility | Strength | Watch-Out | Public Rating |
|---|---|---|---|---|---|---|
| cleanlab | Data quality and duplicate issue detection | Cloud / Local / Varies | BYO / Model-agnostic | Data quality scoring | Requires technical setup | N/A |
| FiftyOne | Visual dataset deduplication | Local / Cloud / Varies | Open-source / BYO | Visual dataset exploration | Best for technical teams | N/A |
| Lightly | Visual active selection and deduplication | Cloud / Varies | BYO / Embedding-based | Redundant sample reduction | Vision-focused | N/A |
| Encord Active | Visual dataset quality analysis | Cloud / Varies | BYO adjacent | Failure and duplicate discovery | Less text-focused | N/A |
| Lilac | LLM text dataset exploration | Self-hosted / Local / Varies | Open-source / BYO | Text dataset curation | Requires setup | N/A |
| Nomic Atlas | Embedding-based dataset maps | Cloud / Varies | BYO embeddings | Semantic duplicate discovery | Human review may be needed | N/A |
| Labelbox | Curation with annotation review | Cloud / Varies | Hosted / BYO adjacent | Human review workflows | Not a pure dedupe engine | N/A |
| Dataloop | AI data operations | Cloud / Hybrid / Varies | Hosted / BYO adjacent | Workflow automation | Can be complex | N/A |
| SuperAnnotate | Annotation and quality workflows | Cloud / Varies | Hosted / BYO adjacent | Multimodal review | Dedup depth varies | N/A |
| datasketch | Developer-built text dedupe | Self-hosted / Local | Open-source / BYO | MinHash similarity | Requires engineering | N/A |

Scoring & Evaluation

The scoring below is comparative, not absolute. It helps buyers compare tools on deduplication fit, AI training workflow support, dataset quality value, integration depth, usability, and operational readiness. Scores may shift with your data type, team skills, scale, and deployment needs. They also depend on whether you need exact duplicates, near-duplicates, semantic duplicates, or visual similarity. A high score does not make a tool the universal winner. Always test tools on your own data, review false positives and false negatives, and measure the impact on model quality.

| Tool | Core | Reliability/Eval | Guardrails | Integrations | Ease | Perf/Cost | Security/Admin | Support | Weighted Total |
|---|---|---|---|---|---|---|---|---|---|
| cleanlab | 9 | 8 | 6 | 8 | 7 | 9 | 7 | 8 | 7.95 |
| FiftyOne | 9 | 8 | 6 | 9 | 7 | 9 | 7 | 8 | 8.10 |
| Lightly | 8 | 8 | 6 | 8 | 7 | 9 | 7 | 7 | 7.75 |
| Encord Active | 8 | 8 | 6 | 8 | 8 | 8 | 7 | 8 | 7.70 |
| Lilac | 8 | 8 | 6 | 8 | 7 | 9 | 6 | 7 | 7.60 |
| Nomic Atlas | 8 | 8 | 6 | 8 | 8 | 8 | 7 | 7 | 7.65 |
| Labelbox | 8 | 8 | 7 | 8 | 8 | 8 | 8 | 8 | 7.95 |
| Dataloop | 8 | 8 | 7 | 9 | 7 | 8 | 7 | 7 | 7.85 |
| SuperAnnotate | 8 | 8 | 7 | 8 | 8 | 8 | 7 | 8 | 7.85 |
| datasketch | 7 | 7 | 5 | 8 | 6 | 9 | 5 | 7 | 6.95 |

Top 3 for Enterprise

- Labelbox

- Dataloop

- SuperAnnotate

Top 3 for SMB

- cleanlab

- Encord Active

- Lightly

Top 3 for Developers

- FiftyOne

- Lilac

- datasketch

Which Data Deduplication for Model Training Tool Is Right for You?

Solo / Freelancer

Solo developers should start with lightweight and flexible tools. datasketch is useful for custom text deduplication, Lilac can help with LLM dataset exploration, and FiftyOne is strong for visual dataset review. If you are cleaning a small dataset, simple hashes, scripts, or notebook workflows may be enough.
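For the small-dataset case, here is a minimal sketch of hash-based exact deduplication. It assumes records are plain strings and that Unicode normalization, lowercasing, and whitespace collapsing are acceptable for your data. They may not be, for example for code or case-sensitive identifiers.

```python
import hashlib
import unicodedata


def normalize(text: str) -> str:
    # Unicode-normalize, lowercase, and collapse whitespace so trivial
    # formatting differences do not hide exact duplicates.
    text = unicodedata.normalize("NFKC", text).lower()
    return " ".join(text.split())


def exact_dedup(records: list[str]) -> tuple[list[str], list[tuple[int, int]]]:
    """Keep the first occurrence of each normalized record.

    Returns the kept records and (duplicate_index, original_index) pairs,
    so removals can be reviewed instead of silently discarded.
    """
    first_seen: dict[str, int] = {}
    kept: list[str] = []
    dropped: list[tuple[int, int]] = []
    for i, rec in enumerate(records):
        key = hashlib.sha256(normalize(rec).encode("utf-8")).hexdigest()
        if key in first_seen:
            dropped.append((i, first_seen[key]))
        else:
            first_seen[key] = i
            kept.append(rec)
    return kept, dropped


rows = ["Hello  World", "hello world", "Goodbye world"]
kept, dropped = exact_dedup(rows)
print(kept)     # ['Hello  World', 'Goodbye world']
print(dropped)  # [(1, 0)]
```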

For more serious model training, use a tool that can handle near-duplicates, not just exact matches. This is especially important for text chunks, repeated support conversations, similar images, and evaluation datasets.
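To see why exact matching is not enough, consider two support messages that differ only by a trailing word. Any exact hash treats them as distinct records, while word-trigram Jaccard similarity scores them as near-duplicates. This is a minimal sketch. The trigram size and the 0.6 threshold are assumptions to tune, and the all-pairs loop is quadratic, so it only suits small datasets. MinHash with LSH, as in datasketch, is the usual way to scale this.

```python
from itertools import combinations


def word_shingles(text: str, n: int = 3) -> set[tuple[str, ...]]:
    # Overlapping word n-grams of the lowercased text.
    words = text.lower().split()
    return {tuple(words[i:i + n]) for i in range(max(len(words) - n + 1, 1))}


def jaccard(a: set, b: set) -> float:
    # Size of the intersection divided by size of the union.
    return len(a & b) / len(a | b) if (a or b) else 1.0


def near_duplicate_pairs(texts: list[str], threshold: float = 0.6) -> list[tuple[int, int, float]]:
    """Compare every pair of texts and return (i, j, score) at or above the threshold."""
    sets = [word_shingles(t) for t in texts]
    pairs = []
    for i, j in combinations(range(len(texts)), 2):
        score = jaccard(sets[i], sets[j])
        if score >= threshold:
            pairs.append((i, j, round(score, 2)))
    return pairs


texts = [
    "my order arrived damaged and i would like a refund please",
    "my order arrived damaged and i would like a refund",
    "how do i change the billing address on my account",
]
print(near_duplicate_pairs(texts))  # [(0, 1, 0.89)]
```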

SMB

SMBs should focus on quick setup, clear value, and cost reduction. cleanlab can help detect data quality issues, Lightly and Encord Active are useful for visual datasets, and Labelbox or SuperAnnotate can help when duplicate review connects to annotation workflows.

SMBs should avoid overly complex enterprise systems unless data volume, labeling cost, or compliance needs justify them. Start with one dataset, measure duplicate rate, remove obvious redundancy, and track model improvement.

Mid-Market

Mid-market teams usually need stronger workflow control, dataset versioning, review queues, and integration with labeling or training pipelines. cleanlab, FiftyOne, Labelbox, Dataloop, Encord Active, and Nomic Atlas may fit depending on whether the main data is text, visual, multimodal, or embedding-heavy.

At this stage, deduplication should become a repeatable pipeline step. It should happen before annotation, before embedding, before training, and before evaluation.

Enterprise

Enterprises should prioritize governance, auditability, security controls, repeatable runs, human approval, and integration with AI data operations. Labelbox, Dataloop, SuperAnnotate, cleanlab, and FiftyOne-style workflows may be strong candidates depending on data type and maturity.

Enterprise teams should test whether deduplication decisions are explainable and reversible. Removing data at scale without audit records can create compliance, quality, and reproducibility problems.
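One way to make removals explainable and reversible is an append-only decision log that is replayed to compute the current state. The sketch below assumes a JSON Lines file and a hypothetical `DedupDecision` schema. The field names, IDs, and method labels are illustrative and are not any platform's export format.

```python
import json
import os
import tempfile
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass
class DedupDecision:
    """Hypothetical record schema for one deduplication decision."""
    record_id: str
    action: str              # "remove" or "keep"
    cluster_id: str
    kept_record_id: str      # representative retained for the cluster
    method: str              # detector or review step behind the decision
    threshold: float
    reviewer: Optional[str]
    timestamp: str


def log_decision(path: str, decision: DedupDecision) -> None:
    # Append-only JSON Lines: earlier decisions are never overwritten.
    with open(path, "a", encoding="utf-8") as f:
        f.write(json.dumps(asdict(decision)) + "\n")


def removed_ids(path: str) -> set[str]:
    """Replay the log in order; a later "keep" reverses an earlier "remove"."""
    latest: dict[str, str] = {}
    with open(path, encoding="utf-8") as f:
        for line in f:
            entry = json.loads(line)
            latest[entry["record_id"]] = entry["action"]
    return {rid for rid, action in latest.items() if action == "remove"}


log_path = os.path.join(tempfile.mkdtemp(), "dedup_decisions.jsonl")
now = datetime.now(timezone.utc).isoformat()
log_decision(log_path, DedupDecision("doc-17", "remove", "c-3", "doc-4", "minhash", 0.8, None, now))
log_decision(log_path, DedupDecision("doc-18", "remove", "c-3", "doc-4", "minhash", 0.8, None, now))
# A reviewer restores doc-18; the original "remove" entry stays in the log for audit.
log_decision(log_path, DedupDecision("doc-18", "keep", "c-3", "doc-4", "manual-review", 0.8, "reviewer-a", now))
print(removed_ids(log_path))  # {'doc-17'}
```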

Regulated industries: finance/healthcare/public sector

Regulated teams should treat deduplication as part of data governance. Duplicate sensitive records can increase privacy exposure, while incorrect removal can damage training value or audit completeness.

Healthcare, finance, insurance, legal, and public sector teams should keep deduplication reports, reviewer decisions, version history, and approval trails. Human review is important when records are sensitive or legally relevant.

Budget vs premium

Budget-conscious teams can start with open-source tools such as datasketch, Lilac, and FiftyOne. These are useful when the team has engineering skills and needs local control.

Premium platforms make sense when teams need annotation workflows, dashboards, audit logs, managed operations, access controls, and scalable review processes. The best value often comes from reducing labeling, storage, and training cost.

Build vs buy

Build your own deduplication workflow when your logic is custom, your data format is narrow, and your engineering team can maintain the pipeline. Libraries like datasketch can be strong foundations.

Buy or adopt a platform when you need team collaboration, governance, dataset review, integration with labeling, and repeatable production workflows. Many teams combine both approaches: custom algorithms plus platform-based review and approval.

Implementation Playbook: 30 / 60 / 90 Days

30 Days: Pilot and Success Metrics

- Select one high-value dataset such as documents, images, support tickets, prompts, or video frames.

- Define duplicate types: exact duplicates, near-duplicates, semantic duplicates, repeated labels, or visual similarity.

- Create baseline metrics such as duplicate rate, storage cost, labeling cost, training time, and evaluation leakage risk.

- Run a pilot deduplication workflow on a sample dataset.

- Review duplicate clusters with humans before deletion.

- Measure false positives and false negatives.

- Test whether removing duplicates improves model quality or reduces cost.

- Document deduplication rules, thresholds, and approval steps.

- Hold out a small evaluation set and measure how much it overlaps with the training data.
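Reviewing duplicate clusters is easier than reviewing raw pairs, because matches are often transitive. The following is a minimal sketch that assumes a detector has already produced matched pairs of record IDs (the IDs and lengths here are hypothetical). It uses union-find to merge the pairs into clusters. It then proposes one record to keep per cluster under an assumed "keep the longest record" policy, leaving the final decision to a reviewer.

```python
from collections import defaultdict


def cluster_pairs(pairs: list[tuple[str, str]]) -> list[list[str]]:
    """Merge matched pairs into duplicate clusters with union-find."""
    parent: dict[str, str] = {}

    def find(x: str) -> str:
        parent.setdefault(x, x)
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path halving
            x = parent[x]
        return x

    for a, b in pairs:
        root_a, root_b = find(a), find(b)
        if root_a != root_b:
            parent[root_b] = root_a

    groups: dict[str, list[str]] = defaultdict(list)
    for x in list(parent):
        groups[find(x)].append(x)
    return [sorted(members) for members in groups.values()]


def review_queue(clusters: list[list[str]], lengths: dict[str, int]) -> list[dict]:
    # Hypothetical policy: propose keeping the longest record; a reviewer confirms.
    queue = []
    for members in clusters:
        keep = max(members, key=lambda rid: lengths[rid])
        queue.append({"keep": keep, "remove_candidates": [m for m in members if m != keep]})
    return queue


clusters = cluster_pairs([("r1", "r2"), ("r2", "r3"), ("r7", "r8")])
lengths = {"r1": 120, "r2": 180, "r3": 95, "r7": 40, "r8": 40}
print(clusters)  # [['r1', 'r2', 'r3'], ['r7', 'r8']]
print(review_queue(clusters, lengths))
```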

60 Days: Harden Security, Evaluation, and Rollout

- Add dataset versioning and reproducible deduplication runs.

- Create review workflows for high-risk duplicate groups.

- Add custom rules for domain-specific repetition.

- Expand deduplication to more sources and file types.

- Connect dedupe workflows to annotation, RAG ingestion, and training pipelines.

- Add red-team checks for evaluation leakage and prompt overlap.

- Add version control for prompts and other LLM fine-tuning data.

- Define incident handling for accidental data deletion or leakage.

- Build reports for data governance and AI platform teams.

90 Days: Optimize Cost, Latency, Governance, and Scale

- Automate deduplication before training, retrieval, labeling, and evaluation.

- Track storage savings, labeling savings, embedding cost reduction, and training cost reduction.

- Monitor whether deduplication improves model generalization.

- Add dashboards for duplicate rate, cluster types, approval status, and dataset quality.

- Standardize thresholds by data type and model workflow.

- Review privacy risks from repeated sensitive records.

- Confirm exportability of dedupe decisions and reports.

- Scale to additional teams, models, and data sources.

- Continue improving thresholds based on model performance and reviewer feedback.

Common Mistakes & How to Avoid Them

- Only removing exact duplicates: Use semantic, fuzzy, visual, and near-duplicate detection for better cleanup.

- Deleting useful variations: Review duplicate clusters before removing data that may represent meaningful diversity.

- Ignoring evaluation leakage: Check overlap between training and test sets to avoid inflated model results.

- Deduplicating after embedding: Clean data before vectorization to reduce storage cost and retrieval noise.

- No human review: Use reviewers for ambiguous clusters, sensitive records, and high-impact datasets.

- No dataset versioning: Keep records of what was removed, merged, retained, and approved.

- No false positive tracking: Measure when useful records are incorrectly flagged as duplicates.

- No false negative tracking: Test whether subtle duplicates are being missed.

- Ignoring multimodal data: Deduplicate text, images, videos, transcripts, and documents differently.

- Cost surprises: Monitor compute, storage, labeling, embedding, and reprocessing cost.

- Prompt injection exposure: For LLM workflows, check for repeated malicious or unsafe prompts before training or evaluation.

- Vendor lock-in: Keep dedupe reports, clusters, and cleaned datasets exportable.

- No governance process: Define who can approve data removal and how decisions are audited.

- Treating deduplication as one-time cleanup: Make it continuous across ingestion, annotation, training, retrieval, and evaluation.
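Images need different techniques than text. One lightweight option is a perceptual hash compared by Hamming distance. The sketch below implements average hash (aHash) and assumes the image has already been converted to grayscale and downscaled to 8x8. That resize step is omitted to keep the example dependency-free, and the synthetic gradient images are placeholders for real data.

```python
def average_hash(pixels: list[list[int]]) -> int:
    """aHash: one bit per pixel, set where the pixel is brighter than the mean."""
    flat = [p for row in pixels for p in row]
    mean = sum(flat) / len(flat)
    bits = 0
    for p in flat:
        bits = (bits << 1) | (1 if p > mean else 0)
    return bits


def hamming(a: int, b: int) -> int:
    # Number of differing bits between two hashes.
    return bin(a ^ b).count("1")


# Synthetic 8x8 grayscale gradient plus two variants.
image = [[(r * 8 + c) * 4 for c in range(8)] for r in range(8)]
brighter = [[min(p + 10, 255) for p in row] for row in image]
inverted = [[252 - p for p in row] for row in image]

print(hamming(average_hash(image), average_hash(brighter)))  # 0
print(hamming(average_hash(image), average_hash(inverted)))  # 64
```

A uniformly brightened copy keeps the same hash, while a genuinely different image lands far away. In practice, a small Hamming-distance threshold, tuned on your own data, separates near-duplicate frames from distinct ones.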

FAQs

1. What is data deduplication for model training?

It means finding and removing repeated, near-repeated, or overly similar data before model training. It helps improve quality and reduce unnecessary cost.

2. Why does duplicate data hurt model training?

Duplicate data can cause memorization, bias, inflated evaluation scores, and wasted compute. It can also overrepresent certain examples or behaviors.

3. What is the difference between exact and near-duplicate detection?

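Exact detection finds identical records, while near-duplicate detection finds content that is similar but not identical, such as lightly edited text or resized images. AI datasets usually need both: exact matching is cheap, but it misses anything that differs even slightly.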

4. Can deduplication help RAG systems?

Yes. Removing repeated documents before they are embedded and indexed reduces vector storage cost. It also keeps retrieval results from being filled with copies of the same passage.

5. Is deduplication useful for LLM fine-tuning?

Yes, repeated prompts, conversations, or labels can overweight certain patterns. Deduplication helps create cleaner fine-tuning data.

6. Can these tools work with BYO models?

Many workflows can use BYO embeddings, predictions, confidence scores, or similarity logic. Exact support depends on the tool and setup.
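With BYO embeddings, semantic duplicate detection can start as a cosine-similarity threshold over vectors from your own model. This is a brute-force sketch. The toy 3-dimensional vectors stand in for real model output, the 0.95 threshold is a placeholder, and at scale an approximate nearest-neighbor index would replace the pairwise loop.

```python
import math


def cosine(u: list[float], v: list[float]) -> float:
    # Cosine similarity; returns 0.0 if either vector is all zeros.
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def semantic_duplicates(embeddings: dict[str, list[float]],
                        threshold: float = 0.95) -> list[tuple[str, str, float]]:
    """Brute-force O(n^2) scan; flags pairs at or above the cosine threshold."""
    ids = list(embeddings)
    flagged = []
    for i in range(len(ids)):
        for j in range(i + 1, len(ids)):
            score = cosine(embeddings[ids[i]], embeddings[ids[j]])
            if score >= threshold:
                flagged.append((ids[i], ids[j], round(score, 3)))
    return flagged


# Toy vectors standing in for output from your own embedding model.
vectors = {
    "q1": [0.90, 0.10, 0.00],
    "q2": [0.88, 0.12, 0.01],
    "q3": [0.00, 0.20, 0.98],
}
print(semantic_duplicates(vectors))
```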

7. Do deduplication tools support self-hosting?

Some tools are open-source or self-hosted, while others are cloud-based or enterprise platforms. Deployment should be verified before selection.

8. How does deduplication reduce cost?

It reduces storage, labeling, embedding, training tokens, compute, and human review workload. Cost savings depend on dataset size and duplicate rate.

9. Can deduplication remove useful data by mistake?

Yes, especially when near-duplicates represent real variation. Human review and careful thresholds help avoid over-removal.

10. How do teams evaluate deduplication quality?

Track false positives, false negatives, duplicate clusters, model performance, evaluation leakage, and cost savings. Use real datasets for testing.
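False positives and false negatives can be counted once reviewers have labeled a sample. The sketch below assumes the reviewers marked every true duplicate pair in that sample, which is what makes missed duplicates countable. It compares the detector's flagged pairs against those confirmed pairs, and the pair IDs are hypothetical.

```python
def dedup_quality(flagged: set[tuple[str, str]],
                  confirmed: set[tuple[str, str]]) -> dict[str, float]:
    """Score detector output against pairs reviewers confirmed as true duplicates."""

    def normalize(pairs: set[tuple[str, str]]) -> set[tuple[str, ...]]:
        # Treat (a, b) and (b, a) as the same pair.
        return {tuple(sorted(p)) for p in pairs}

    found, truth = normalize(flagged), normalize(confirmed)
    tp = len(found & truth)
    fp = len(found - truth)  # useful records wrongly flagged as duplicates
    fn = len(truth - found)  # true duplicates the detector missed
    precision = tp / (tp + fp) if found else 0.0
    recall = tp / (tp + fn) if truth else 0.0
    return {"tp": tp, "fp": fp, "fn": fn,
            "precision": round(precision, 3), "recall": round(recall, 3)}


flagged = {("a", "b"), ("c", "d"), ("e", "f")}
confirmed = {("b", "a"), ("c", "d"), ("g", "h")}
print(dedup_quality(flagged, confirmed))
# {'tp': 2, 'fp': 1, 'fn': 1, 'precision': 0.667, 'recall': 0.667}
```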

11. Is deduplication important for evaluation datasets?

Yes. Training-test overlap can make model results look better than they are, so removing evaluation items that also appear in training data makes results more trustworthy.
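A common heuristic for detecting training-test overlap is word n-gram matching: flag any evaluation item that shares a long n-gram with the training corpus. This is a minimal sketch. The default n of 8 and the punctuation stripping are assumptions, the example uses n=5 only because its sentences are short, and items shorter than n words are never flagged.

```python
import re


def word_ngrams(text: str, n: int) -> set[tuple[str, ...]]:
    # Lowercase and drop punctuation so formatting differences do not hide overlap.
    words = re.findall(r"\w+", text.lower())
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def contaminated(eval_items: list[str], train_docs: list[str], n: int = 8) -> list[int]:
    """Indices of evaluation items sharing at least one word n-gram with training text."""
    train_grams: set[tuple[str, ...]] = set()
    for doc in train_docs:
        train_grams |= word_ngrams(doc, n)
    return [i for i, item in enumerate(eval_items) if word_ngrams(item, n) & train_grams]


train_docs = ["The refund window for annual plans is thirty days from purchase."]
eval_items = [
    "Q: What is the refund window for annual plans? A: Thirty days.",
    "Q: Which regions support data residency? A: EU and US.",
]
print(contaminated(eval_items, train_docs, n=5))  # [0]
```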

12. What are guardrails in deduplication workflows?

Guardrails include approval rules, retention policies, review queues, deletion controls, audit logs, and safe handling of sensitive records.

13. What alternatives exist to deduplication platforms?

Alternatives include file hashes, database queries, custom scripts, MinHash libraries, embedding search, manual review, and ETL-based cleanup.

14. How can teams switch tools later?

Keep cleaned datasets, duplicate reports, thresholds, and decisions exportable. Avoid storing deduplication logic only inside one vendor system.

15. How should teams start?

Start with one high-value dataset, measure duplicate rate, test tools, review clusters, and compare model results before scaling. Keep the workflow repeatable.

Conclusion

Data deduplication for model training is essential because repeated or near-repeated data can increase cost, distort evaluation, reduce diversity, and weaken model quality. The best tool depends on data type, scale, workflow maturity, and whether you need text deduplication, visual similarity, embedding-based clustering, annotation review, or enterprise governance. Developer teams may prefer datasketch, Lilac, or FiftyOne, while AI data teams may choose cleanlab, Lightly, Encord Active, Labelbox, Dataloop, SuperAnnotate, or Nomic Atlas depending on workflow needs.

Next steps:

- Shortlist: Pick 3 tools based on data type, duplicate type, deployment needs, and pipeline fit.

- Pilot: Test with real datasets, duplicate clusters, human review, and model quality metrics.

- Verify and scale: Confirm cost savings, evaluation quality, governance, exportability, and workflow stability before rollout.