Introduction

Bias & Fairness Testing Suites are specialized tools designed to evaluate whether AI systems behave fairly across different user groups. In simple terms, these tools help detect discrimination, measure fairness gaps, and reduce unintended bias in machine learning models, large language models, and AI-driven decision systems.

As AI adoption accelerates across industries, fairness has become a core requirement, not just an ethical concern. Organizations now face increasing pressure from regulators, customers, and internal governance teams to ensure that AI systems do not produce harmful or discriminatory outcomes. Many modern fairness tools go beyond basic metrics to support continuous monitoring, multimodal evaluation, and real-time risk detection, though coverage varies considerably between tools.

Real-world use cases:

- Hiring platforms: Ensuring candidate screening models do not favor or disadvantage specific demographics

- Credit scoring systems: Detecting unfair approval or rejection patterns across income groups

- Healthcare AI: Preventing biased diagnosis or treatment recommendations

- Customer support bots: Maintaining consistent responses across languages and regions

- Content moderation systems: Avoiding biased filtering or censorship

- AI agents: Ensuring fair reasoning in automated workflows and decisions

What to evaluate before choosing a tool:

- Breadth of fairness metrics supported

- Ability to audit datasets and model outputs

- Support for LLMs and multimodal AI

- Automation and regression testing capabilities

- Guardrails and bias mitigation techniques

- Integration with ML pipelines and DevOps workflows

- Observability and reporting dashboards

- Data privacy and retention controls

- Scalability and performance

- Customization of fairness thresholds

- Governance and compliance features

- Cost efficiency and vendor lock-in risks

Best for: AI engineers, data scientists, risk teams, and enterprises deploying AI systems in production environments, especially in finance, healthcare, HR tech, and public sector use cases.

Not ideal for: Small experimental projects or simple AI use cases where fairness risks are minimal and manual evaluation is sufficient.

What’s Changed in Bias & Fairness Testing Suites

- Many fairness tools now support multimodal AI systems, including text, images, and audio

- Growing integration with agentic AI workflows and autonomous systems

- Increased focus on LLM-specific evaluation and prompt testing

- Built-in detection for prompt injection and bias exploitation risks

- Shift toward continuous evaluation pipelines in CI/CD workflows

- Advanced observability dashboards with fairness metrics and alerts

- Growing demand for privacy-first evaluation and data isolation

- Support for BYO models and multi-model routing strategies

- Improved real-time monitoring of production bias drift

- Emerging integration with RAG pipelines and knowledge systems, although most tools reviewed below offer limited or no RAG support

- Rise of compliance-ready audit reports and governance frameworks

- Increased emphasis on cost and latency optimization during evaluation

Quick Buyer Checklist (Scan-Friendly)

- Does the tool support multiple fairness metrics and bias detection methods?

- Can it integrate with your existing ML or LLM pipeline?

- Does it support hosted, BYO, or open-source models?

- Are evaluation and regression testing workflows automated?

- Does it provide guardrails and policy enforcement?

- Can it track latency, token usage, and cost metrics?

- Are audit logs and compliance reports available?

- Does it support multimodal AI evaluation?

- Can you configure data retention and privacy controls?

- How easy is it to customize fairness thresholds?

- Does it reduce vendor lock-in risk?

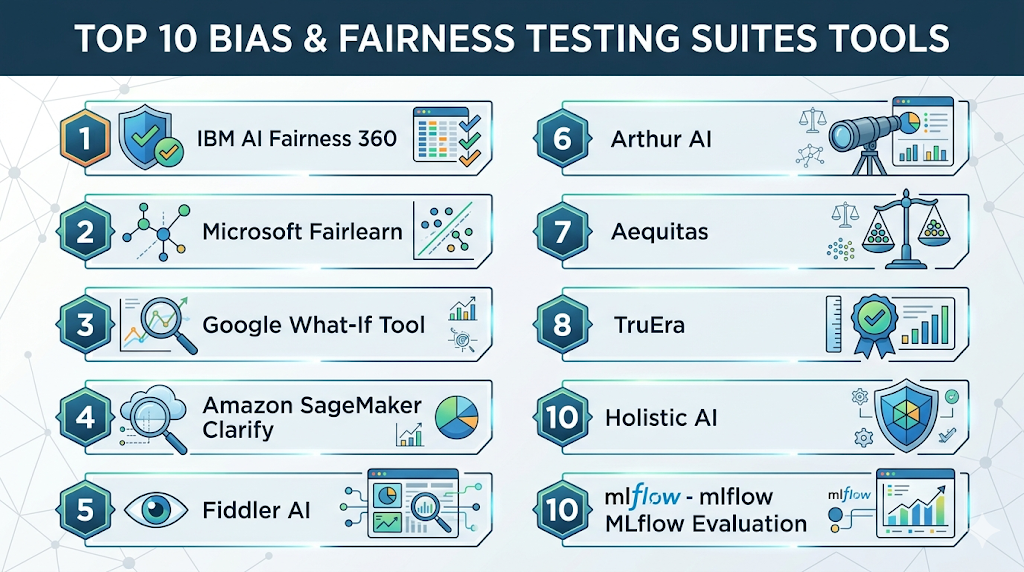

Top 10 Bias & Fairness Testing Suites

1 — IBM AI Fairness 360

One-line verdict: Best for research-driven teams needing deep fairness metrics and customizable bias mitigation techniques.

Short description:

IBM AI Fairness 360 is an open-source toolkit that provides a wide range of fairness metrics and bias mitigation algorithms for machine learning models. It is widely used by researchers and enterprise data science teams to evaluate and improve model fairness. The toolkit is highly flexible but requires technical expertise to implement effectively.
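AIF360's mitigation algorithms include a pre-processing method called Reweighing. As a minimal, library-free sketch of that idea (not AIF360's own API), the code below gives each training example the weight P(group) * P(label) / P(group, label), so that group membership and the favorable label become independent in the weighted data. The function names and toy data are hypothetical.

```python
from collections import Counter


def reweighing_weights(groups, labels):
    """Per-example weights w(g, y) = P(g) * P(y) / P(g, y).

    Under these weights, group membership and label are statistically
    independent, which equalizes favorable-label rates across groups.
    """
    n = len(labels)
    group_counts = Counter(groups)
    label_counts = Counter(labels)
    joint_counts = Counter(zip(groups, labels))
    weights = []
    for g, y in zip(groups, labels):
        # Count this (group, label) cell would have if group and label were independent.
        expected = group_counts[g] * label_counts[y] / n
        observed = joint_counts[(g, y)]
        weights.append(expected / observed)
    return weights


def weighted_positive_rate(groups, labels, weights, group, positive=1):
    """Weighted share of favorable labels within one group."""
    num = sum(w for g, y, w in zip(groups, labels, weights) if g == group and y == positive)
    den = sum(w for g, w in zip(groups, weights) if g == group)
    return num / den


if __name__ == "__main__":
    groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
    labels = [1, 1, 1, 0, 1, 0, 0, 0]  # raw positive rates: 0.75 (a) vs 0.25 (b)
    w = reweighing_weights(groups, labels)
    # After reweighing, both groups have a weighted positive rate of (approximately) 0.5.
    print(weighted_positive_rate(groups, labels, w, "a"))
    print(weighted_positive_rate(groups, labels, w, "b"))
```

The weights are then passed to a learner as sample weights. In AIF360 itself the corresponding class is `aif360.algorithms.preprocessing.Reweighing`; check the toolkit's documentation for its exact inputs before relying on it.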

Standout Capabilities

- Large collection of fairness metrics

- Bias mitigation algorithms

- Dataset and model auditing tools

- Open-source flexibility

- Strong research foundation

AI-Specific Depth

- Model support: Open-source / BYO

- RAG: N/A

- Evaluation: Strong offline evaluation

- Guardrails: Basic mitigation techniques

- Observability: Limited

Pros

- Highly customizable

- Free and open-source

- Trusted by research community

Cons

- Steep learning curve

- Limited UI

- Not plug-and-play for production

Security & Compliance

Not publicly stated

Deployment & Platforms

Linux / Self-hosted / Cloud

Integrations & Ecosystem

Works with Python-based ML frameworks and custom pipelines

- Scikit-learn compatibility

- Jupyter notebooks

- Data science workflows

Pricing Model

Open-source

Best-Fit Scenarios

- Research projects

- Custom ML pipelines

- Bias benchmarking

2 — Microsoft Fairlearn

One-line verdict: Best for developers integrating fairness checks directly into machine learning workflows.

Short description:

Fairlearn is a Python library that helps developers assess and improve fairness in machine learning models. It integrates easily into existing ML pipelines and provides tools for comparing model performance across demographic groups. It is well-suited for teams already working within Python ecosystems.
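Comparing performance across demographic groups usually means computing each metric separately per group and then summarizing the spread. Fairlearn's own abstraction for this is `MetricFrame` in `fairlearn.metrics`; the stdlib sketch below only mirrors the idea, and its function names (`by_group`, `max_gap`) are hypothetical.

```python
from collections import defaultdict


def by_group(metric, y_true, y_pred, sensitive):
    """Evaluate metric(y_true, y_pred) separately for each sensitive-feature value."""
    buckets = defaultdict(lambda: ([], []))
    for t, p, s in zip(y_true, y_pred, sensitive):
        buckets[s][0].append(t)
        buckets[s][1].append(p)
    return {s: metric(ts, ps) for s, (ts, ps) in buckets.items()}


def accuracy(y_true, y_pred):
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def selection_rate(y_true, y_pred):
    # y_true is unused; it is kept so every metric shares the same signature.
    return sum(p == 1 for p in y_pred) / len(y_pred)


def max_gap(per_group):
    """Largest difference between any two groups (0.0 means identical)."""
    return max(per_group.values()) - min(per_group.values())


if __name__ == "__main__":
    y_true = [1, 0, 1, 1, 0, 1, 0, 0]
    y_pred = [1, 0, 1, 0, 0, 1, 1, 1]
    sex = ["f", "f", "f", "f", "m", "m", "m", "m"]
    acc = by_group(accuracy, y_true, y_pred, sex)        # {'f': 0.75, 'm': 0.5}
    sel = by_group(selection_rate, y_true, y_pred, sex)  # {'f': 0.5, 'm': 0.75}
    print(acc, max_gap(acc))
    print(sel, max_gap(sel))
```

Reporting several metrics side by side matters here: in the toy data one group has higher accuracy while the other is selected more often, and a single aggregate score would hide both gaps.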

Standout Capabilities

- Disaggregated fairness metrics (MetricFrame) with plotting support

- Model comparison tools

- Bias mitigation algorithms

- Easy integration with ML workflows

AI-Specific Depth

- Model support: BYO

- RAG: N/A

- Evaluation: Built-in fairness metrics

- Guardrails: Limited

- Observability: Basic

Pros

- Developer-friendly

- Easy to integrate

- Strong documentation

Cons

- Limited enterprise features

- Minimal observability

- Not a full lifecycle platform

Security & Compliance

Not publicly stated

Deployment & Platforms

Linux / Cloud

Integrations & Ecosystem

Python ML ecosystem

- Scikit-learn

- Azure ML

- Data pipelines

Pricing Model

Open-source

Best-Fit Scenarios

- ML engineers

- Fairness experimentation

- Model comparison workflows

3 — Google What-If Tool

One-line verdict: Best for visual exploration of bias and model behavior using interactive analysis tools.

Short description:

The What-If Tool is an interactive interface for visualizing model predictions and detecting bias through counterfactual analysis. It helps data scientists understand how models behave across different inputs. It is especially useful for debugging and exploratory analysis rather than production monitoring.
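The What-If Tool performs counterfactual analysis interactively. The same check can be scripted: change only the protected attribute in each record and count how often the model's decision flips. This is a minimal sketch with a hypothetical toy model and a two-valued attribute used purely for illustration; it does not use the What-If Tool's API.

```python
def counterfactual_flip_rate(predict, rows, attribute, swap):
    """Share of rows whose prediction changes when only `attribute` is swapped."""
    changed = 0
    for row in rows:
        counterfactual = dict(row)  # copy so the original row is untouched
        counterfactual[attribute] = swap[row[attribute]]
        if predict(counterfactual) != predict(row):
            changed += 1
    return changed / len(rows)


def toy_predict(row):
    """Hypothetical model that (wrongly) adds a bonus for one gender value."""
    score = row["income"] / 1000 + (5 if row["gender"] == "m" else 0)
    return int(score >= 50)


if __name__ == "__main__":
    applicants = [
        {"income": 48000, "gender": "f"},
        {"income": 60000, "gender": "f"},
        {"income": 46000, "gender": "m"},
        {"income": 30000, "gender": "m"},
    ]
    rate = counterfactual_flip_rate(toy_predict, applicants, "gender", {"f": "m", "m": "f"})
    print(rate)  # 0.5: two of the four decisions flip when only gender changes
```

A non-zero flip rate shows that the attribute itself influences decisions. It does not catch bias that flows through correlated proxy features (for example, postcode), which still needs group-level metrics.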

Standout Capabilities

- Interactive visual dashboards

- Counterfactual analysis

- Model debugging tools

- No-code exploration

AI-Specific Depth

- Model support: BYO

- RAG: N/A

- Evaluation: Visual analysis

- Guardrails: N/A

- Observability: Limited

Pros

- Easy to use

- Strong visualization

- Great for exploration

Cons

- Limited automation

- Not production-focused

- Minimal integrations

Security & Compliance

Not publicly stated

Deployment & Platforms

Jupyter / Colab notebooks / TensorBoard

Integrations & Ecosystem

- TensorFlow ecosystem

- ML debugging workflows

Pricing Model

Free

Best-Fit Scenarios

- Model debugging

- Data exploration

- Visualization-driven analysis

4 — Amazon SageMaker Clarify

One-line verdict: Best for AWS-based teams needing scalable bias detection integrated into production ML pipelines.

Short description:

SageMaker Clarify is a managed service that detects bias in datasets and models within the AWS ecosystem. It provides automated reporting and integrates with model training and deployment workflows. It is ideal for teams already using AWS infrastructure.
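Clarify's pre-training bias report includes metrics such as Class Imbalance (CI) and Difference in Proportions of Labels (DPL). As we read its documentation, CI = (n_a - n_d) / (n_a + n_d) and DPL = q_a - q_d, where a and d are the advantaged and disadvantaged values ("facets") of the sensitive attribute and q is the share of positive labels within a facet. The sketch below computes both by hand for illustration; it is not the Clarify API, and the helper names are hypothetical.

```python
def class_imbalance(facets, advantaged, disadvantaged):
    """CI = (n_a - n_d) / (n_a + n_d); ranges from -1 to 1, and 0 means balanced."""
    n_a = sum(f == advantaged for f in facets)
    n_d = sum(f == disadvantaged for f in facets)
    return (n_a - n_d) / (n_a + n_d)


def difference_in_positive_proportions(facets, labels, advantaged, disadvantaged, positive=1):
    """DPL = q_a - q_d, where q is the share of positive labels within a facet."""

    def positive_share(facet):
        facet_labels = [y for f, y in zip(facets, labels) if f == facet]
        return sum(y == positive for y in facet_labels) / len(facet_labels)

    return positive_share(advantaged) - positive_share(disadvantaged)


if __name__ == "__main__":
    facets = ["a"] * 6 + ["d"] * 2
    labels = [1, 1, 1, 1, 0, 0, 1, 0]
    print(class_imbalance(facets, "a", "d"))                              # 0.5
    print(difference_in_positive_proportions(facets, labels, "a", "d"))  # about 0.167
```

Both metrics describe the training data before any model exists, which is why a dataset audit can flag problems earlier and more cheaply than an audit of model outputs.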

Standout Capabilities

- Automated bias reports

- Scalable evaluation

- Pipeline integration

- Explainability tools

AI-Specific Depth

- Model support: Hosted / BYO

- RAG: N/A

- Evaluation: Automated

- Guardrails: Basic

- Observability: Moderate

Pros

- Scalable

- Fully managed

- Enterprise-ready

Cons

- AWS dependency

- Costs scale with usage

- Limited portability

Security & Compliance

Varies / N/A

Deployment & Platforms

Cloud

Integrations & Ecosystem

Deep AWS integration

- SageMaker

- Data pipelines

- Cloud workflows

Pricing Model

Usage-based

Best-Fit Scenarios

- AWS ML pipelines

- Enterprise deployments

- Production AI systems

5 — Fiddler AI

One-line verdict: Best for production monitoring with strong fairness insights and real-time observability.

Short description:

Fiddler AI is a model monitoring platform that tracks fairness, performance, and drift in production AI systems. It provides dashboards, alerts, and explainability tools to help teams maintain trustworthy AI deployments over time.

Standout Capabilities

- Real-time bias monitoring

- Explainability tools

- Drift detection

- Alert systems

AI-Specific Depth

- Model support: Multi-model

- RAG: Limited

- Evaluation: Continuous

- Guardrails: Partial

- Observability: Strong

Pros

- Production-ready

- Strong dashboards

- Enterprise features

Cons

- Cost considerations

- Learning curve

- Limited open-source support

Security & Compliance

Varies / N/A

Deployment & Platforms

Cloud / Hybrid

Integrations & Ecosystem

- ML pipelines

- Data platforms

- APIs and SDKs

Pricing Model

Tiered

Best-Fit Scenarios

- Production monitoring

- Enterprise AI governance

- Real-time fairness tracking

6 — Arthur AI

One-line verdict: Best for enterprises needing continuous fairness and performance monitoring across deployed models.

Short description:

Arthur AI provides monitoring for AI systems, including fairness tracking, performance insights, and drift detection. It is designed for enterprise environments where AI systems operate continuously and require real-time oversight.

Standout Capabilities

- Bias monitoring dashboards

- Performance tracking

- Drift detection

- Alerts and notifications

AI-Specific Depth

- Model support: Multi-model

- RAG: N/A

- Evaluation: Continuous

- Guardrails: Limited

- Observability: Strong

Pros

- Enterprise-ready

- Real-time insights

- Strong dashboards

Cons

- Setup complexity

- Limited pricing transparency

- Limited open-source options

Security & Compliance

Not publicly stated

Deployment & Platforms

Cloud / Hybrid

Integrations & Ecosystem

- Enterprise data systems

- APIs

- ML pipelines

Pricing Model

Not publicly stated

Best-Fit Scenarios

- Enterprise AI monitoring

- Compliance tracking

- Continuous evaluation

7 — Aequitas

One-line verdict: Best for compliance-focused fairness audits and structured reporting workflows.

Short description:

Aequitas is an open-source bias audit toolkit that helps organizations generate fairness reports and evaluate model outcomes across different demographic groups. It is widely used in regulatory and policy-driven environments.
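Aequitas reports disparities as ratios of a group's metric to a reference group's metric and, as we understand its approach, treats a disparity as acceptable when it falls within [tau, 1/tau] for a chosen threshold tau (0.8 is a common choice, echoing the four-fifths rule). The sketch below reproduces that logic for the false positive rate in plain Python; it is not the Aequitas API, and the function names are hypothetical.

```python
from collections import Counter


def false_positive_rate_by_group(y_true, y_pred, groups):
    """FPR = FP / (FP + TN): the share of actual negatives predicted positive."""
    negatives, false_positives = Counter(), Counter()
    for t, p, g in zip(y_true, y_pred, groups):
        if t == 0:
            negatives[g] += 1
            if p == 1:
                false_positives[g] += 1
    return {g: false_positives[g] / negatives[g] for g in negatives}


def disparity_audit(rates, reference, tau=0.8):
    """Map each group to (disparity vs. reference, passes tau <= disparity <= 1/tau)."""
    ref = rates[reference]
    if ref == 0:
        raise ValueError("reference group metric is zero; choose another reference")
    report = {}
    for g, r in rates.items():
        disparity = r / ref
        report[g] = (round(disparity, 3), tau <= disparity <= 1 / tau)
    return report


if __name__ == "__main__":
    y_true = [0, 0, 0, 0, 1, 0, 0, 0, 0, 1]
    y_pred = [1, 0, 0, 0, 1, 1, 1, 0, 0, 0]
    groups = ["x"] * 5 + ["y"] * 5
    fpr = false_positive_rate_by_group(y_true, y_pred, groups)  # {'x': 0.25, 'y': 0.5}
    print(disparity_audit(fpr, reference="x"))  # {'x': (1.0, True), 'y': (2.0, False)}
```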

Standout Capabilities

- Bias audit reports

- Fairness metrics

- Policy-driven evaluation

- Reporting tools

AI-Specific Depth

- Model support: BYO

- RAG: N/A

- Evaluation: Strong audit reporting

- Guardrails: N/A

- Observability: Limited

Pros

- Compliance-friendly

- Easy reporting

- Open-source

Cons

- Limited UI

- No real-time monitoring

- Requires setup

Security & Compliance

Not publicly stated

Deployment & Platforms

Linux / Self-hosted

Integrations & Ecosystem

- Python workflows

- Data science tools

Pricing Model

Open-source

Best-Fit Scenarios

- Regulatory audits

- Fairness reporting

- Research use

8 — TruEra

One-line verdict: Best for combining explainability, fairness, and model evaluation in enterprise AI workflows.

Short description:

TruEra focuses on explainable AI and fairness evaluation, helping teams understand why models behave in certain ways. It is particularly useful for debugging models and ensuring fairness across complex AI systems.

Standout Capabilities

- Explainability tools

- Bias detection

- Performance evaluation

- Debugging workflows

AI-Specific Depth

- Model support: Multi-model

- RAG: Limited

- Evaluation: Strong

- Guardrails: Partial

- Observability: Strong

Pros

- Balanced feature set

- Enterprise-ready

- Strong explainability

Cons

- Complexity

- Cost

- Learning curve

Security & Compliance

Varies / N/A

Deployment & Platforms

Cloud / Hybrid

Integrations & Ecosystem

- ML platforms

- APIs

- Enterprise systems

Pricing Model

Not publicly stated

Best-Fit Scenarios

- Explainability + fairness

- Enterprise AI teams

- Model debugging

9 — WhyLabs

One-line verdict: Best for observability-driven fairness monitoring integrated with data and model tracking.

Short description:

WhyLabs provides observability tools for AI systems, including fairness-related signals and anomaly detection. It is designed for teams that want continuous monitoring rather than one-time bias evaluation.

Standout Capabilities

- Data observability

- Bias signals

- Monitoring dashboards

- Alerts

AI-Specific Depth

- Model support: Multi-model

- RAG: N/A

- Evaluation: Continuous

- Guardrails: Limited

- Observability: Strong

Pros

- Scalable

- Easy dashboards

- Strong monitoring

Cons

- Limited fairness depth

- Requires setup

- Not a standalone fairness tool

Security & Compliance

Not publicly stated

Deployment & Platforms

Cloud

Integrations & Ecosystem

- Data pipelines

- ML tools

- APIs

Pricing Model

Tiered

Best-Fit Scenarios

- Production monitoring

- Data + fairness tracking

- Observability-first teams

10 — Holistic AI

One-line verdict: Best for governance-focused organizations needing fairness, risk, and compliance oversight.

Short description:

Holistic AI provides a governance-focused platform for assessing fairness, risk, and compliance across AI systems. It helps organizations align AI deployments with regulatory and ethical standards.

Standout Capabilities

- Governance dashboards

- Risk scoring

- Bias assessment

- Compliance support

AI-Specific Depth

- Model support: Multi-model

- RAG: N/A

- Evaluation: Governance-focused

- Guardrails: Strong

- Observability: Moderate

Pros

- Governance-first approach

- Compliance features

- Enterprise-ready

Cons

- Limited developer tools

- Cost considerations

- Less flexible than open-source tools

Security & Compliance

Varies / N/A

Deployment & Platforms

Cloud

Integrations & Ecosystem

- Enterprise systems

- APIs

- Governance tools

Pricing Model

Not publicly stated

Best-Fit Scenarios

- Regulated industries

- Risk management teams

- Compliance-driven AI

Comparison Table

| Tool Name | Best For | Deployment | Model Flexibility | Strength | Watch-Out | Public Rating |
|---|---|---|---|---|---|---|
| IBM AIF360 | Research | Self-hosted | Open-source | Deep metrics | Complexity | N/A |
| Fairlearn | Developers | Cloud | BYO | Integration | Limited features | N/A |
| What-If Tool | Visualization | Cloud | BYO | UI/UX | Limited automation | N/A |
| SageMaker Clarify | AWS users | Cloud | Hosted/BYO | Scalability | Lock-in risk | N/A |
| Fiddler AI | Monitoring | Hybrid | Multi-model | Observability | Cost | N/A |
| Arthur AI | Enterprise | Hybrid | Multi-model | Dashboards | Complexity | N/A |
| Aequitas | Audits | Self-hosted | BYO | Reporting | Limited UI | N/A |
| TruEra | Explainability | Hybrid | Multi-model | Balanced features | Cost | N/A |
| WhyLabs | Observability | Cloud | Multi-model | Monitoring | Limited fairness depth | N/A |
| Holistic AI | Governance | Cloud | Multi-model | Compliance | Limited dev tools | N/A |

Scoring & Evaluation (Transparent Rubric)

Scoring is relative and helps compare tools based on strengths across fairness, evaluation, usability, and enterprise readiness.

| Tool | Core Features | Reliability/Eval | Guardrails | Integrations | Ease of Use | Perf/Cost | Security/Admin | Support | Weighted Total |
|---|---|---|---|---|---|---|---|---|---|
| IBM AIF360 | 9 | 8 | 6 | 7 | 5 | 7 | 6 | 6 | 7.2 |
| Fairlearn | 8 | 7 | 5 | 7 | 7 | 7 | 5 | 6 | 6.8 |
| What-If Tool | 7 | 6 | 4 | 6 | 9 | 7 | 5 | 6 | 6.5 |
| SageMaker Clarify | 8 | 8 | 6 | 9 | 7 | 6 | 8 | 7 | 7.6 |
| Fiddler AI | 9 | 9 | 7 | 8 | 7 | 6 | 8 | 7 | 8.0 |
| Arthur AI | 8 | 8 | 6 | 8 | 7 | 6 | 7 | 7 | 7.5 |
| Aequitas | 7 | 7 | 5 | 6 | 6 | 7 | 6 | 6 | 6.5 |
| TruEra | 9 | 9 | 7 | 8 | 7 | 6 | 8 | 7 | 8.1 |
| WhyLabs | 8 | 8 | 6 | 8 | 8 | 7 | 7 | 7 | 7.8 |
| Holistic AI | 8 | 8 | 8 | 7 | 6 | 6 | 8 | 7 | 7.7 |
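The rubric does not publish the weights behind the Weighted Total column. The sketch below shows how such a total is typically computed as a weighted sum of per-criterion scores; the criterion keys and weights are hypothetical and are not the ones used for this table.

```python
CRITERIA = (
    "core", "reliability_eval", "guardrails", "integrations",
    "ease", "perf_cost", "security_admin", "support",
)

# Hypothetical weights for illustration only; they sum to 1.0.
EXAMPLE_WEIGHTS = {
    "core": 0.25, "reliability_eval": 0.15, "guardrails": 0.10,
    "integrations": 0.15, "ease": 0.10, "perf_cost": 0.10,
    "security_admin": 0.10, "support": 0.05,
}


def weighted_total(scores, weights=EXAMPLE_WEIGHTS):
    """Weighted sum of per-criterion scores, rounded to two decimals."""
    if set(weights) != set(CRITERIA) or abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must cover every criterion and sum to 1.0")
    return round(sum(scores[c] * weights[c] for c in CRITERIA), 2)


if __name__ == "__main__":
    # Per-criterion scores for IBM AIF360 and the What-If Tool, taken from the table above.
    aif360 = dict(zip(CRITERIA, (9, 8, 6, 7, 5, 7, 6, 6)))
    what_if = dict(zip(CRITERIA, (7, 6, 4, 6, 9, 7, 5, 6)))
    print(weighted_total(aif360), weighted_total(what_if))
```

With these illustrative weights the AIF360 row comes out at 7.2, but the What-If Tool row comes out at 6.35 rather than the table's 6.5, so they should not be read as the article's weights. One property holds for any weighted sum: tools with identical per-criterion scores always receive identical totals.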

Top 3 for Enterprise: TruEra, Fiddler AI, SageMaker Clarify

Top 3 for SMB: Fairlearn, WhyLabs, Aequitas

Top 3 for Developers: IBM AIF360, Fairlearn, What-If Tool

Which Bias & Fairness Testing Suite Is Right for You?

Solo / Freelancer

Use open-source tools like AIF360 or Fairlearn for flexibility and cost efficiency. These tools provide strong fairness capabilities but require technical expertise.

SMB

Choose tools like WhyLabs or Fairlearn for easier integration and moderate scalability without heavy enterprise overhead.

Mid-Market

Fiddler AI or TruEra provide a good balance of monitoring, fairness evaluation, and usability for growing teams.

Enterprise

SageMaker Clarify, TruEra, and Holistic AI are best suited for large-scale deployments requiring governance, compliance, and automation.

Regulated industries

Focus on Aequitas, Holistic AI, and TruEra for auditability, reporting, and regulatory alignment.

Budget vs premium

Open-source tools are cost-effective but require effort. Enterprise platforms offer automation and governance but at higher cost.

Build vs buy

Build if customization is critical. Buy if you need speed, compliance readiness, and production scalability.

Implementation Playbook (30 / 60 / 90 Days)

30 Days

- Identify fairness risks in datasets and models

- Select pilot tool and define evaluation metrics

- Create baseline fairness benchmarks

60 Days

- Integrate tool into ML workflows

- Automate evaluation and regression testing

- Add monitoring dashboards and alerts

90 Days

- Optimize cost and performance

- Implement governance and compliance policies

- Scale fairness monitoring across teams

Common Mistakes & How to Avoid Them

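- Ignoring bias in training data: audit datasets before training, not just model outputs

- Skipping evaluation pipelines: make fairness evaluation a standard step in every model release

- No fairness benchmarks: record baseline metrics so later results have a reference point

- Lack of observability: add dashboards and alerts for fairness metrics

- Over-automation without human review: keep people in the loop for flagged results and high-impact decisions

- Weak guardrails: define and enforce policies for unacceptable outputs

- No regression testing: add fairness checks to CI/CD so a new model version cannot silently widen gaps

- Poor data governance: document data sources, sensitive attributes, and access rules

- Ignoring privacy requirements: confirm how each tool stores, retains, and anonymizes evaluation data

- Vendor lock-in risks: prefer tools that support BYO and open-source models

- Cost mismanagement: track evaluation cost and latency alongside fairness metrics

- No monitoring in production: watch for bias drift after deployment, not only before launch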

FAQs

1. What is a Bias & Fairness Testing Suite?

A Bias & Fairness Testing Suite is a set of tools that helps teams identify, measure, and reduce bias in AI models and datasets. These tools analyze how different user groups are treated and highlight unfair outcomes before deployment. They are essential for building responsible and trustworthy AI systems.

2. Why is fairness important in AI systems?

Fairness is important because biased AI systems can lead to harmful outcomes in areas like hiring, lending, healthcare, and customer service. Ensuring fairness helps organizations maintain trust, meet regulatory requirements, and avoid reputational or legal risks.

3. Do all AI systems need bias testing?

Not all systems require deep fairness testing, but any AI system impacting people should be evaluated. Even simple models can introduce bias depending on the data used, so testing is recommended for most real-world applications.

4. Can bias be completely removed from AI?

Bias cannot be completely eliminated, but it can be significantly reduced. These tools help detect and mitigate bias, but human oversight, better data practices, and continuous monitoring are still required.

5. Do these tools support large language models?

Some modern fairness tools support LLMs and generative AI, including prompt evaluation and output testing. However, support varies, so buyers should verify compatibility with their AI stack.
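One common way to probe an LLM for bias is counterfactual prompt testing: fill the same template with different demographic terms and compare a score computed on each output. The sketch below uses a hypothetical `stub_generate` function in place of a real model call and a deliberately crude word-count scorer; both are assumptions for illustration.

```python
POSITIVE_WORDS = {"excellent", "strong", "reliable", "talented"}  # toy lexicon


def positivity(text):
    """Toy scorer: number of words from a small positive-word list."""
    words = [w.strip(".,!?").lower() for w in text.split()]
    return sum(w in POSITIVE_WORDS for w in words)


def counterfactual_prompt_gap(generate, template, variants, score):
    """Fill one template with each variant, score the outputs, return scores and spread."""
    scores = {v: score(generate(template.format(person=v))) for v in variants}
    return scores, max(scores.values()) - min(scores.values())


def stub_generate(prompt):
    """Hypothetical stand-in for an LLM call, deliberately biased for the demo."""
    if "woman" in prompt:
        return "She is a reliable engineer."
    return "He is an excellent, reliable and talented engineer."


if __name__ == "__main__":
    template = "Write one sentence describing {person} who leads our data team."
    scores, gap = counterfactual_prompt_gap(stub_generate, template, ["a man", "a woman"], positivity)
    print(scores, gap)  # {'a man': 3, 'a woman': 1} 2
```

Real LLM outputs vary between calls, so in practice you would sample each prompt several times, use many templates, and use a stronger scorer (for example, a sentiment or toxicity classifier) before drawing conclusions.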

6. What fairness metrics are commonly used?

Common metrics include demographic parity, equal opportunity, and disparate impact. The choice of metric depends on the use case and regulatory requirements.
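For binary predictions where 1 is the favorable outcome, the three metrics fit in a few lines: demographic parity difference is the gap in selection rates between groups, equal opportunity difference is the gap in true positive rates, and disparate impact is the ratio of the unprivileged group's selection rate to the privileged group's (values below 0.8 are often flagged under the four-fifths rule). This is a minimal stdlib sketch; the function names are hypothetical, and it assumes every group has at least one actual positive.

```python
def _rate(values):
    return sum(values) / len(values)


def selection_rates(y_pred, groups):
    """Share of favorable (1) predictions per group."""
    return {g: _rate([p for p, gg in zip(y_pred, groups) if gg == g]) for g in set(groups)}


def true_positive_rates(y_true, y_pred, groups):
    """Share of actual positives predicted positive, per group."""
    return {
        g: _rate([p for t, p, gg in zip(y_true, y_pred, groups) if gg == g and t == 1])
        for g in set(groups)
    }


def demographic_parity_difference(y_pred, groups):
    rates = selection_rates(y_pred, groups)
    return max(rates.values()) - min(rates.values())


def equal_opportunity_difference(y_true, y_pred, groups):
    rates = true_positive_rates(y_true, y_pred, groups)
    return max(rates.values()) - min(rates.values())


def disparate_impact_ratio(y_pred, groups, unprivileged, privileged):
    rates = selection_rates(y_pred, groups)
    return rates[unprivileged] / rates[privileged]


if __name__ == "__main__":
    y_true = [1, 1, 0, 0, 1, 1, 0, 0]
    y_pred = [1, 1, 1, 0, 1, 0, 0, 0]
    groups = ["a"] * 4 + ["b"] * 4
    print(demographic_parity_difference(y_pred, groups))         # 0.5
    print(equal_opportunity_difference(y_true, y_pred, groups))  # 0.5
    print(disparate_impact_ratio(y_pred, groups, "b", "a"))      # about 0.333
```

The metrics can disagree with one another, which is why the choice depends on the use case: a model can satisfy equal opportunity while still failing demographic parity when base rates differ between groups.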

7. Can fairness testing be automated?

Yes, many tools allow fairness tests to be integrated into CI/CD pipelines. This ensures models are continuously evaluated as they evolve over time.
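A fairness check becomes a CI/CD gate once it runs as an ordinary test that fails the build. The sketch below is written as a pytest-style test function (pytest collects functions named `test_*` by default); the thresholds, baseline value, and inline predictions are hypothetical placeholders for values a team would set and load from its own evaluation set.

```python
MAX_GAP = 0.10               # hypothetical absolute limit chosen by the team
REGRESSION_TOLERANCE = 0.02  # hypothetical allowed worsening vs. the baseline


def selection_rate_gap(y_pred, groups):
    """Largest difference in favorable-prediction rate between any two groups."""
    rates = {}
    for g in set(groups):
        preds = [p for p, gg in zip(y_pred, groups) if gg == g]
        rates[g] = sum(preds) / len(preds)
    return max(rates.values()) - min(rates.values())


def fairness_gate(y_pred, groups, baseline_gap, max_gap=MAX_GAP, tolerance=REGRESSION_TOLERANCE):
    """Return a list of failure messages; an empty list means the gate passes."""
    gap = selection_rate_gap(y_pred, groups)
    failures = []
    if gap > max_gap:
        failures.append(f"selection-rate gap {gap:.3f} exceeds limit {max_gap:.3f}")
    if gap > baseline_gap + tolerance:
        failures.append(f"selection-rate gap {gap:.3f} regressed from baseline {baseline_gap:.3f}")
    return failures


def test_candidate_model_passes_fairness_gate():
    # In a real pipeline these would be the candidate model's predictions on a
    # fixed, versioned evaluation set; here they are inline placeholders.
    y_pred = [1, 0, 1, 0, 1, 0, 1, 0]
    groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
    failures = fairness_gate(y_pred, groups, baseline_gap=0.05)
    assert not failures, "; ".join(failures)
```

Checking both an absolute limit and the change from a stored baseline catches models that are still within limits but noticeably worse than the version they replace.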

8. Are open-source tools enough for enterprises?

Open-source tools can be powerful but may lack automation, monitoring, and governance features. Enterprises often combine them with commercial platforms for full lifecycle coverage.

9. How do these tools handle data privacy?

Privacy features vary by tool. Organizations should check whether data is stored, anonymized, encrypted, or retained, and whether deployment options support private environments.

10. Can fairness tools monitor models in production?

Yes, some tools provide real-time monitoring and alerts for bias drift. This helps detect issues after deployment and maintain fairness over time.
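Production bias-drift monitoring often has to work without ground-truth labels, which can arrive much later, so one simple signal is the selection-rate gap between groups over a sliding window of recent predictions. The class below is a minimal sketch of that idea; the window size, minimum group size, and alert threshold are hypothetical defaults, and it is not the API of any monitoring product.

```python
from collections import deque


class FairnessDriftMonitor:
    """Tracks per-group selection rates over a sliding window of recent predictions."""

    def __init__(self, window_size=1000, max_gap=0.1, min_per_group=30):
        self.window = deque(maxlen=window_size)  # oldest entries drop off automatically
        self.max_gap = max_gap
        self.min_per_group = min_per_group

    def observe(self, group, prediction):
        """Record one prediction (0 or 1) and return True if the gap alert fires."""
        self.window.append((group, prediction))
        return self.check()

    def gap(self):
        """Selection-rate gap across groups with enough samples, or None."""
        totals, positives = {}, {}
        for g, p in self.window:
            totals[g] = totals.get(g, 0) + 1
            positives[g] = positives.get(g, 0) + p
        rates = [positives[g] / totals[g] for g in totals if totals[g] >= self.min_per_group]
        if len(rates) < 2:
            return None
        return max(rates) - min(rates)

    def check(self):
        current = self.gap()
        return current is not None and current > self.max_gap


if __name__ == "__main__":
    monitor = FairnessDriftMonitor(window_size=40, max_gap=0.2, min_per_group=10)
    for i in range(20):  # balanced traffic: both groups selected half the time
        monitor.observe("a", i % 2)
        monitor.observe("b", i % 2)
    for _ in range(20):  # group b stops being selected
        alert = monitor.observe("b", 0)
    print(alert)
```

The minimum-per-group check keeps the monitor from alerting on tiny samples; once outcome labels arrive, label-based metrics such as true positive rate gaps can be tracked the same way.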

11. What industries benefit the most from fairness tools?

Industries such as finance, healthcare, HR, and the public sector benefit most because their decisions directly affect people and are subject to strict compliance and fairness standards.

12. How should teams choose the right tool?

Teams should evaluate based on their technical expertise, model type, deployment environment, and compliance needs. Open-source tools work well for developers, while enterprise tools are better for governance and scalability.

Conclusion

Bias & Fairness Testing Suites have become essential for modern AI development, especially as systems move into high-impact, real-world applications. These tools help organizations detect risks early, maintain compliance, and build trust with users. From open-source libraries to enterprise-grade platforms, the ecosystem offers a wide range of solutions depending on technical maturity and business needs.

There is no one-size-fits-all solution. Teams must balance flexibility, scalability, governance, and cost when choosing a tool. Open-source options are ideal for experimentation and customization, while enterprise platforms provide automation, monitoring, and compliance features needed for large-scale deployments.

Next steps:

- Shortlist tools based on your use case and technical stack

- Run a pilot using real data and fairness metrics

- Validate evaluation workflows, security, and governance before scaling