Introduction

Confidential Computing for AI Workloads refers to platforms and hardware that protect sensitive data while AI models train, run inference, or interact with external systems. By keeping data encrypted and isolated while it is being processed, these tools limit exposure to cloud operators, administrators, and compromised infrastructure. They matter more as enterprises increasingly rely on AI to process proprietary, personal, or regulated datasets.

Why it matters

- Protects AI workloads from unauthorized access, even during computation.

- Maintains compliance with privacy regulations like GDPR, HIPAA, or sector-specific mandates.

- Secures multi-tenant cloud deployments and outsourced AI processing.

- Reduces risk of IP theft or corporate espionage through AI pipelines.

- Supports trusted AI operations in regulated industries.

- Enhances enterprise confidence in cloud-based AI adoption.

Real-world use cases

- Healthcare: Training AI on patient data without exposing sensitive information.

- Finance: Processing credit and transaction datasets securely in cloud AI.

- Government & defense: Running classified AI models in encrypted enclaves.

- Enterprise AI platforms: Multi-tenant confidential AI deployments.

- Pharma & biotech: Securing IP in drug discovery AI workloads.

- Cloud AI services: Ensuring tenants’ AI data remains private and encrypted.

Evaluation criteria for buyers

- Type of secure enclave or TEE supported (Intel SGX, AMD SEV, AWS Nitro Enclaves).

- Integration with AI frameworks (TensorFlow, PyTorch, JAX).

- Real-time encryption with low latency.

- Multi-cloud and hybrid support.

- Compliance reporting and audit logging.

- Policy enforcement for secure data handling.

- Guardrails for prompt injection or unsafe model outputs.

- Scalability for large AI workloads.

- Support for multi-modal workloads (text, image, audio).

- Ease of deployment and monitoring.

- Observability metrics for performance and cost.

- Vendor support and ecosystem integration.
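When checking the first criterion, it helps to see what a given host actually exposes. The following is a minimal sketch, assuming a Linux host: it looks for the `sgx`, `sev`, and `sev_es` CPU flags in `/proc/cpuinfo` text and for a few commonly used TEE device nodes. The function names and the lists of flags and devices are illustrative assumptions and are not exhaustive. A missing hint does not prove that a TEE is unavailable, so confirm results against vendor documentation.

```python
from pathlib import Path

# CPU flags as they may appear in /proc/cpuinfo (illustrative, not exhaustive).
TEE_CPU_FLAGS = {
    "sgx": "Intel SGX",
    "sev": "AMD SEV",
    "sev_es": "AMD SEV-ES",
}

# Device nodes that Linux kernels with TEE support commonly expose
# (assumption: this list is a starting point, not a complete inventory).
TEE_DEVICE_NODES = {
    "/dev/sgx_enclave": "Intel SGX",
    "/dev/sev-guest": "AMD SEV-SNP guest",
    "/dev/tdx_guest": "Intel TDX guest",
}


def cpu_flag_hints(cpuinfo_text: str) -> set[str]:
    """Return TEE labels whose CPU flag appears in the given cpuinfo text."""
    flags: set[str] = set()
    for line in cpuinfo_text.splitlines():
        if line.startswith("flags"):
            _, _, value = line.partition(":")
            flags.update(value.split())
    return {label for flag, label in TEE_CPU_FLAGS.items() if flag in flags}


def device_hints(root: str = "/") -> set[str]:
    """Return TEE labels whose device node exists under the given root."""
    return {
        label
        for node, label in TEE_DEVICE_NODES.items()
        if Path(root, node.lstrip("/")).exists()
    }


def detect_tee_hints() -> set[str]:
    """Combine CPU-flag and device-node hints for the current host."""
    cpuinfo = Path("/proc/cpuinfo")
    text = cpuinfo.read_text() if cpuinfo.exists() else ""
    return cpu_flag_hints(text) | device_hints()
```

Calling `detect_tee_hints()` on a candidate machine returns a set of labels such as `{"Intel SGX"}`, or an empty set when no hint is found.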

Best for: AI engineers, security and compliance teams, enterprises processing sensitive or regulated datasets, and multi-cloud AI workloads.

Not ideal for: Small-scale experimentation, low-sensitivity AI workloads, or on-prem LLMs without confidential data requirements.

What’s Changed in Confidential Computing for AI Workloads

- Integration with agentic workflows and tool-calling LLM pipelines.

- Real-time monitoring for AI outputs in encrypted enclaves.

- Expanded support for multimodal AI workloads (text, image, audio).

- Guardrails for prompt-injection and policy enforcement.

- Enterprise privacy enhancements including data residency and retention controls.

- Cost and latency optimization for encrypted computations.

- Observability improvements for tracing, token/cost metrics, and model performance.

- Integration with CI/CD and MLOps pipelines for continuous secure deployment.

- Support for multi-cloud and hybrid AI workloads.

- Enhanced governance and compliance reporting for audit readiness.

- Automated remediation workflows for detected vulnerabilities in confidential AI workloads.

Quick Buyer Checklist (Scan-Friendly)

- Type of TEE or secure enclave supported

- Integration with AI frameworks (PyTorch, TensorFlow, etc.)

- Real-time encrypted computations without high latency

- Multi-cloud/hybrid deployment capability

- Compliance reporting and audit logs

- Automated policy enforcement and guardrails

- Observability metrics for token usage, latency, and cost

- Integration with CI/CD pipelines

- Red-teaming and security evaluation capabilities

- Scalability for large AI workloads

- Vendor support, SDKs, and APIs

- Protection for multi-modal AI workloads

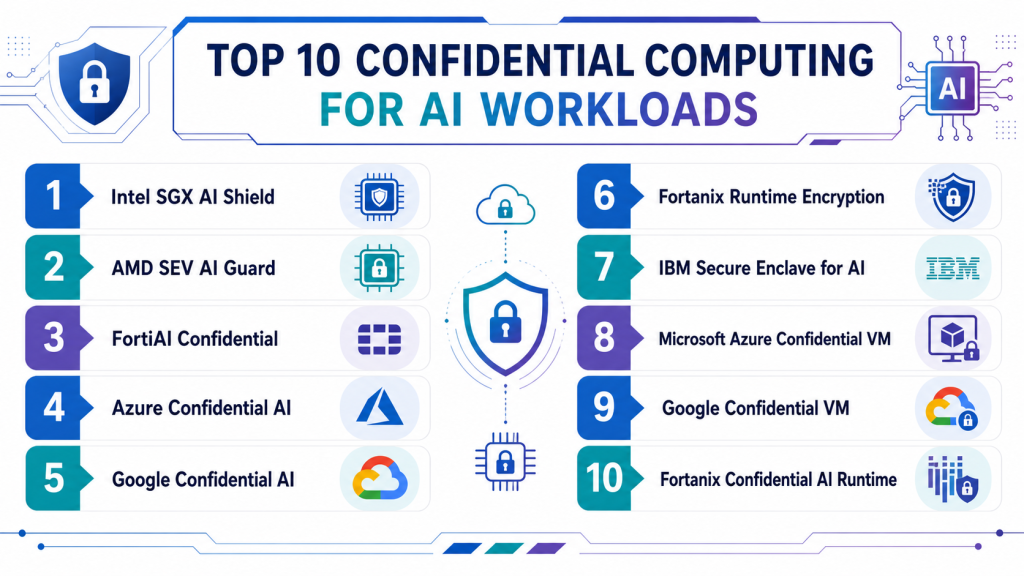

Top 10 Confidential Computing for AI Workloads Tools

1 — Intel SGX AI Shield

One-line verdict: Enterprise-grade platform using Intel SGX enclaves to protect AI workloads with low-latency encryption.

Short description:

Intel SGX AI Shield leverages secure enclaves to protect AI model computations in memory. It ensures that sensitive data remains encrypted during training and inference. Integration with popular AI frameworks allows seamless deployment. Enterprise teams can monitor usage, enforce policies, and maintain compliance while processing critical data.

Standout Capabilities

- Intel SGX-based secure enclaves

- Real-time encryption of AI computations

- Integration with TensorFlow and PyTorch

- Policy enforcement and audit logging

- Multi-tenant AI support

AI-Specific Depth

- Model support: Proprietary / BYO

- RAG / knowledge integration: N/A

- Evaluation: Human review, regression tests

- Guardrails: Policy enforcement, prompt injection detection

- Observability: Latency, token/cost metrics

Pros

- Hardware-level encryption

- Low-latency processing

- Enterprise-compliant monitoring

Cons

- Requires SGX-compatible hardware

- Premium cost

- Integration complexity

Security & Compliance

SSO/RBAC, audit logs, encryption. Certifications: Not publicly stated

Deployment & Platforms

- Cloud / Hybrid / On-prem

- Web / Linux / Windows

Integrations & Ecosystem

APIs, SDKs, CI/CD hooks, dashboards, alerts

Pricing Model

Tiered enterprise licensing. Not publicly stated

Best-Fit Scenarios

- Regulated healthcare AI

- Multi-cloud financial AI pipelines

- Enterprise-scale confidential LLMs

2 — AMD SEV AI Guard

One-line verdict: Platform leveraging AMD SEV encrypted virtual machines for confidential AI model execution across hybrid and cloud environments.

Short description:

AMD SEV AI Guard protects AI workloads using memory encryption at the CPU level. It supports confidential execution of AI models while maintaining compliance. Teams can integrate it into CI/CD and MLOps pipelines. Multi-cloud deployment and observability dashboards ensure enterprise security and auditing capabilities.

Standout Capabilities

- AMD SEV encrypted virtual machines

- Memory encryption for AI computations

- Multi-cloud and hybrid support

- Policy enforcement and compliance reporting

- Integration with TensorFlow and PyTorch

AI-Specific Depth

- Model support: BYO / Proprietary

- RAG / knowledge integration: N/A

- Evaluation: Regression, human review

- Guardrails: Policy enforcement, misuse detection

- Observability: Latency, token, and cost metrics

Pros

- CPU-level encryption

- Multi-cloud ready

- CI/CD integration

Cons

- Hardware-specific

- Premium pricing

- Learning curve for teams

Security & Compliance

Not publicly stated

Deployment & Platforms

- Cloud / Hybrid / On-prem

- Web / Linux / Windows

Integrations & Ecosystem

APIs, SDKs, dashboards, CI/CD hooks

Pricing Model

Enterprise subscription. Not publicly stated

Best-Fit Scenarios

- Confidential AI workloads in finance or healthcare

- Multi-cloud enterprise deployments

- Hybrid AI model operations

3 — FortiAI Confidential

One-line verdict: Enterprise-grade platform for secure AI model execution and confidential data handling in hybrid environments.

Short description:

FortiAI Confidential enables organizations to run AI workloads in secure enclaves while keeping data encrypted in use. It supports multi-cloud and hybrid deployments, protecting sensitive datasets during training and inference. The platform integrates with MLOps pipelines to enforce policies and provide audit-ready reporting. Security teams can monitor performance and compliance across all AI models.

Standout Capabilities

- Real-time encrypted computation

- Multi-cloud and hybrid environment support

- Policy enforcement for secure AI operations

- Integration with MLOps pipelines

- Audit-ready compliance reporting

AI-Specific Depth

- Model support: Proprietary / BYO

- RAG / knowledge integration: N/A

- Evaluation: Regression tests, human review

- Guardrails: Policy enforcement, prompt injection detection

- Observability: Latency, token usage, cost metrics

Pros

- Protects data during computation

- Enterprise-ready dashboards and reports

- Multi-cloud capable

Cons

- Hardware and deployment complexity

- Premium pricing

- Learning curve for teams

Security & Compliance

SSO/RBAC, audit logs, encryption. Certifications: Not publicly stated

Deployment & Platforms

- Cloud / Hybrid / On-prem

- Web / Linux / Windows

Integrations & Ecosystem

APIs, SDKs, dashboards, CI/CD hooks, alerting

Pricing Model

Tiered enterprise subscription. Not publicly stated

Best-Fit Scenarios

- Multi-cloud AI deployments

- Regulated healthcare and finance workloads

- Enterprise-scale confidential AI models

4 — Azure Confidential AI

One-line verdict: Cloud-based confidential computing solution for AI with automated policy enforcement and monitoring.

Short description:

Azure Confidential AI leverages secure enclaves to protect AI workloads in the cloud. It provides automated encryption, policy enforcement, and compliance dashboards for enterprise customers. Integration with Azure ML and MLOps allows seamless deployment of confidential AI models. Ideal for organizations processing regulated or sensitive data across cloud environments.

Standout Capabilities

- Hardware-backed confidential computing

- Integration with Azure ML pipelines

- Real-time policy enforcement

- Compliance reporting dashboards

- Scalable cloud deployment

AI-Specific Depth

- Model support: Proprietary / BYO / Azure-hosted

- RAG / knowledge integration: N/A

- Evaluation: Regression, human-in-the-loop

- Guardrails: Policy enforcement, prompt injection defense

- Observability: Metrics dashboards, latency, token usage

Pros

- Cloud-native confidential AI

- Automated compliance reporting

- Seamless integration with Azure services

Cons

- Cloud-only (Azure), with no on-prem deployment

- Premium subscription cost

Security & Compliance

SSO/RBAC, audit logs, encryption. Certifications: Not publicly stated

Deployment & Platforms

- Cloud (Azure)

- Web / Linux / Windows

Integrations & Ecosystem

APIs, Azure ML SDK, CI/CD hooks, dashboards, alerts

Pricing Model

Subscription-based. Not publicly stated

Best-Fit Scenarios

- Cloud AI deployments

- Regulated enterprise workloads

- Azure-native AI pipelines

5 — Google Confidential AI

One-line verdict: Cloud AI platform using confidential VMs to protect sensitive LLM workloads and model training.

Short description:

Google Confidential AI allows AI workloads to run inside confidential virtual machines with encryption in use. It supports secure training, inference, and multi-cloud hybrid deployments. Policy enforcement, monitoring, and compliance reporting are built in. Enterprises can secure LLMs, proprietary datasets, and sensitive AI models with minimal performance impact.

Standout Capabilities

- Confidential VM support for AI workloads

- Integration with TensorFlow and Vertex AI

- Policy enforcement and monitoring

- Audit-ready dashboards

- Multi-cloud and hybrid deployment support

AI-Specific Depth

- Model support: Proprietary / BYO / Google-hosted

- RAG / knowledge integration: N/A

- Evaluation: Regression tests, human review

- Guardrails: Policy enforcement, prompt injection mitigation

- Observability: Token usage, latency, dashboards

Pros

- Cloud-native confidential computing

- Enterprise-ready dashboards

- Seamless AI framework integration

Cons

- Cloud-only (GCP), with no on-prem deployment

- Premium pricing

Security & Compliance

SSO/RBAC, audit logs, encryption. Certifications: Not publicly stated

Deployment & Platforms

- Cloud (GCP)

- Web / Linux / Windows

Integrations & Ecosystem

APIs, Vertex AI SDK, CI/CD hooks, dashboards

Pricing Model

Tiered enterprise subscription. Not publicly stated

Best-Fit Scenarios

- Confidential LLM training

- Multi-cloud enterprise deployments

- Regulated AI workloads

6 — Fortanix Runtime Encryption

One-line verdict: Protects AI workloads in memory using hardware-secured enclaves and policy-driven encryption.

Short description:

Fortanix Runtime Encryption secures AI computations by encrypting data in memory while models are executed. It supports multi-cloud and on-prem deployments, with integration into CI/CD and MLOps workflows. Real-time monitoring and policy enforcement prevent data leakage. Ideal for enterprises with strict compliance and sensitive AI models.

Standout Capabilities

- Memory-level encryption for AI workloads

- Multi-cloud and hybrid support

- Policy-driven enforcement

- Integration with pipelines

- Audit-ready dashboards

AI-Specific Depth

- Model support: Proprietary / BYO

- RAG / knowledge integration: N/A

- Evaluation: Regression, human-in-the-loop

- Guardrails: Policy enforcement

- Observability: Metrics, latency, token usage

Pros

- Real-time in-memory data protection

- Multi-cloud capability

- Compliance-ready

Cons

- Hardware dependency

- Premium pricing

- Integration complexity

Security & Compliance

SSO/RBAC, audit logs, encryption. Certifications: Not publicly stated

Deployment & Platforms

- Cloud / On-prem / Hybrid

- Web / Linux / Windows

Integrations & Ecosystem

APIs, SDKs, CI/CD hooks, dashboards

Pricing Model

Enterprise subscription. Not publicly stated

Best-Fit Scenarios

- Regulated AI workloads

- Multi-cloud deployments

- LLM training and inference

7 — IBM Secure Enclave for AI

One-line verdict: Confidential computing solution to protect AI workloads with hardware-based encryption and secure enclaves.

Short description:

IBM Secure Enclave for AI provides hardware-backed confidential execution for AI models. It supports both cloud and hybrid deployments, integrating with enterprise MLOps pipelines. Automated monitoring, policy enforcement, and audit dashboards allow teams to maintain compliance while executing sensitive AI workloads.

Standout Capabilities

- Hardware-secured enclaves

- Multi-cloud and hybrid support

- CI/CD integration

- Automated monitoring and reporting

- Policy enforcement

AI-Specific Depth

- Model support: Proprietary / BYO

- RAG / knowledge integration: N/A

- Evaluation: Regression, human review

- Guardrails: Policy enforcement

- Observability: Latency, token usage, dashboards

Pros

- Enterprise-grade secure execution

- Compliance-ready

- Multi-cloud capable

Cons

- Premium pricing

- Hardware requirements

- Integration complexity

Security & Compliance

SSO/RBAC, audit logs, encryption. Certifications: Not publicly stated

Deployment & Platforms

- Cloud / On-prem / Hybrid

- Web / Linux / Windows

Integrations & Ecosystem

APIs, SDKs, dashboards, CI/CD hooks

Pricing Model

Tiered subscription. Not publicly stated

Best-Fit Scenarios

- Confidential LLM inference

- Enterprise AI pipelines

- Hybrid AI deployments

8 — Microsoft Azure Confidential VM

One-line verdict: Cloud-based confidential AI workloads with encryption-in-use and real-time policy enforcement.

Short description:

Azure Confidential VM allows AI workloads to execute in a fully encrypted environment. It supports real-time monitoring, policy enforcement, and compliance reporting. Integration with Azure ML and MLOps ensures enterprise AI workloads remain confidential. Ideal for regulated, Azure-based deployments.

Standout Capabilities

- Confidential VM execution

- Real-time monitoring and alerts

- Policy enforcement

- CI/CD integration

- Audit-ready dashboards

AI-Specific Depth

- Model support: BYO / Azure-hosted

- RAG / knowledge integration: N/A

- Evaluation: Regression tests, human review

- Guardrails: Policy enforcement

- Observability: Metrics dashboards, latency, token usage

Pros

- Cloud-native confidential computing

- Enterprise-ready

- Integration with Azure services

Cons

- Cloud-only (Azure), with no on-prem deployment

- Premium pricing

Security & Compliance

Not publicly stated

Deployment & Platforms

- Cloud (Azure)

- Web / Linux / Windows

Integrations & Ecosystem

APIs, Azure ML SDK, CI/CD hooks, dashboards

Pricing Model

Enterprise subscription. Not publicly stated

Best-Fit Scenarios

- Cloud-based confidential AI workloads

- Regulated industries

- Azure-native AI pipelines

9 — Google Confidential VM

One-line verdict: Cloud AI platform using confidential virtual machines to protect sensitive AI training and inference.

Short description:

Google Confidential VM allows AI workloads to run inside hardware-secured VMs with encryption-in-use. It supports secure LLM training and inference. Integration with Vertex AI and TensorFlow pipelines provides enterprise-ready monitoring, dashboards, and policy enforcement. Multi-cloud and hybrid deployments ensure protection for sensitive data.

Standout Capabilities

- Hardware-secured confidential VMs

- Multi-cloud and hybrid deployment support

- Real-time monitoring

- Policy enforcement and compliance dashboards

- Integration with AI frameworks

AI-Specific Depth

- Model support: Proprietary / BYO / Multi-model

- RAG / knowledge integration: N/A

- Evaluation: Regression, human review

- Guardrails: Policy enforcement

- Observability: Latency, token, cost metrics

Pros

- Secure training and inference

- Cloud-native dashboards

- Multi-cloud capable

Cons

- Cloud-only (GCP), with no on-prem deployment

- Premium cost

Security & Compliance

SSO/RBAC, audit logs, encryption. Certifications: Not publicly stated

Deployment & Platforms

- Cloud (GCP)

- Web / Linux / Windows

Integrations & Ecosystem

APIs, Vertex AI SDK, dashboards, CI/CD hooks

Pricing Model

Enterprise subscription. Not publicly stated

Best-Fit Scenarios

- LLM training and inference

- Regulated enterprise workloads

- Multi-cloud AI deployments

10 — Fortanix Confidential AI Runtime

One-line verdict: Confidential computing platform for AI workloads with in-memory encryption, policy enforcement, and observability.

Short description:

Fortanix Confidential AI Runtime encrypts AI model data in memory while running workloads. It supports multi-cloud and hybrid deployments, providing automated policy enforcement, monitoring, and audit-ready reporting. Integration with CI/CD and MLOps ensures secure deployment of sensitive AI workloads. Ideal for enterprises with regulated data and LLMs handling confidential information.

Standout Capabilities

- In-memory encryption for AI workloads

- Policy enforcement and automated remediation

- Multi-cloud and hybrid support

- CI/CD and MLOps integration

- Audit-ready dashboards

AI-Specific Depth

- Model support: Proprietary / BYO

- RAG / knowledge integration: N/A

- Evaluation: Regression, human review

- Guardrails: Policy enforcement, prompt injection mitigation

- Observability: Latency, token usage, cost metrics

Pros

- Protects AI data in use

- Enterprise-ready dashboards

- Multi-cloud deployment support

Cons

- Premium pricing

- Setup complexity

- Hardware dependency

Security & Compliance

SSO/RBAC, audit logs, encryption. Certifications: Not publicly stated

Deployment & Platforms

- Cloud / Hybrid / On-prem

- Web / Linux / Windows

Integrations & Ecosystem

APIs, SDKs, dashboards, CI/CD hooks

Pricing Model

Tiered enterprise subscription. Not publicly stated

Best-Fit Scenarios

- Enterprise AI workloads

- Regulated industries

- LLMs handling confidential data

Comparison Table

| Tool Name | Best For | Deployment | Model Flexibility | Strength | Watch-Out | Public Rating |
|---|---|---|---|---|---|---|
| Intel SGX AI Shield | Enterprise LLM security | Cloud / Hybrid | Proprietary / BYO | Hardware-level encryption | Requires SGX hardware | N/A |
| AMD SEV AI Guard | Hybrid & cloud AI workloads | Cloud / Hybrid | Proprietary / BYO | Memory encryption | Hardware-specific | N/A |
| FortiAI Confidential | Multi-cloud enterprise AI | Cloud / Hybrid | Proprietary / BYO | Real-time encrypted computation | Premium pricing | N/A |
| Azure Confidential AI | Cloud enterprise workloads | Cloud | Proprietary / Azure-hosted | Automated policy enforcement | Cloud-only | N/A |
| Google Confidential VM | Multi-cloud confidential LLMs | Cloud | Proprietary / BYO / Multi-model | Secure VM execution | Cloud-only | N/A |
| Fortanix Runtime Encryption | Hybrid & on-prem AI workloads | Cloud / Hybrid / On-prem | Proprietary / BYO | Memory-level encryption | Hardware dependency | N/A |
| IBM Secure Enclave for AI | Enterprise confidential AI | Cloud / Hybrid / On-prem | Proprietary / BYO | Hardware-based secure enclave | Premium pricing | N/A |
| Microsoft Azure Confidential VM | Cloud AI workloads | Cloud | BYO / Azure-hosted | Encryption in-use | Cloud-only | N/A |
| SafePrompt | Regulated AI environments | Cloud / Hybrid | Proprietary / BYO / Multi-model | Automated masking | Setup complexity | N/A |
| Fortanix Confidential AI Runtime | Enterprise confidential AI | Cloud / Hybrid / On-prem | Proprietary / BYO | In-memory encryption | Premium pricing | N/A |

Scoring & Evaluation (Transparent Rubric)

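Scoring is comparative. Each tool is rated from 0 to 10 on core features, reliability and evaluation, guardrails, integrations, ease of use, performance and cost, security and administration, and support. The weighted total (0 to 10) combines these ratings to reflect enterprise relevance.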

| Tool Name | Core | Reliability/Eval | Guardrails | Integrations | Ease | Perf/Cost | Security/Admin | Support | Weighted Total |
|---|---|---|---|---|---|---|---|---|---|
| Intel SGX AI Shield | 9 | 9 | 9 | 8 | 8 | 8 | 9 | 8 | 8.5 |
| AMD SEV AI Guard | 8 | 8 | 8 | 8 | 7 | 8 | 8 | 7 | 7.8 |
| FortiAI Confidential | 9 | 8 | 8 | 8 | 7 | 8 | 8 | 7 | 8.0 |
| Azure Confidential AI | 8 | 8 | 8 | 7 | 7 | 7 | 8 | 7 | 7.5 |
| Google Confidential VM | 9 | 9 | 9 | 8 | 8 | 8 | 9 | 8 | 8.5 |
| Fortanix Runtime Encryption | 8 | 8 | 8 | 7 | 7 | 7 | 8 | 7 | 7.5 |
| IBM Secure Enclave for AI | 9 | 8 | 9 | 8 | 8 | 8 | 9 | 8 | 8.3 |
| Microsoft Azure Confidential VM | 8 | 8 | 8 | 7 | 7 | 7 | 8 | 7 | 7.5 |
| SafePrompt | 8 | 8 | 8 | 7 | 7 | 7 | 8 | 7 | 7.5 |
| Fortanix Confidential AI Runtime | 9 | 9 | 9 | 8 | 8 | 8 | 9 | 8 | 8.5 |
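The rubric does not state the weights behind the totals, so they cannot be reproduced exactly. As a minimal sketch, assuming equal weights across the eight criteria (an assumption, not the method used for the table), a weighted total can be computed as below. The names `CRITERIA`, `WEIGHTS`, and `weighted_total` are hypothetical; replace the weights with values that reflect your own priorities.

```python
CRITERIA = [
    "core",
    "reliability_eval",
    "guardrails",
    "integrations",
    "ease",
    "perf_cost",
    "security_admin",
    "support",
]

# Assumption: equal weights. The table's real weights are not stated.
WEIGHTS = {name: 1.0 for name in CRITERIA}


def weighted_total(scores: dict[str, float],
                   weights: dict[str, float] = WEIGHTS) -> float:
    """Weighted average of per-criterion scores on the same 0-10 scale."""
    if set(scores) != set(weights):
        raise ValueError("scores and weights must cover the same criteria")
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    return sum(scores[name] * weights[name] for name in weights) / total_weight


# Scores copied from the Intel SGX AI Shield row of the table.
intel_sgx_ai_shield = dict(zip(CRITERIA, [9, 9, 9, 8, 8, 8, 9, 8]))
print(round(weighted_total(intel_sgx_ai_shield), 1))  # prints 8.5
```

Passing a different `weights` dictionary, for example one that doubles `security_admin`, shifts the ranking toward the criteria a regulated team cares about most.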

Top 3 for Enterprise: Intel SGX AI Shield, Google Confidential VM, Fortanix Confidential AI Runtime

Top 3 for SMB: Azure Confidential AI, Fortanix Runtime Encryption, SafePrompt

Top 3 for Developers: FortiAI Confidential, AMD SEV AI Guard, IBM Secure Enclave for AI

Which Confidential Computing for AI Workloads Tool Is Right for You?

Solo / Freelancer

For small-scale experiments or testing with sensitive datasets, lightweight frameworks or BYO confidential computing solutions are sufficient. Open-source runtimes or single-cloud solutions like SafePrompt or Fortanix Runtime Encryption (trial/demo) allow you to experiment without heavy enterprise overhead.

SMB

Small and midsize businesses benefit from tools that balance security, compliance, and cost. Azure Confidential AI, AMD SEV AI Guard, or FortiAI Confidential provide encrypted computation, policy enforcement, and audit-ready dashboards while remaining manageable for smaller teams.

Mid-Market

Organizations scaling AI workloads across hybrid or multi-cloud environments need automated monitoring, guardrails, and integrated compliance features. Platforms like FortiAI Confidential, IBM Secure Enclave for AI, and Fortanix Confidential AI Runtime are well-suited for these scenarios.

Enterprise

Large enterprises handling multiple sensitive AI workloads require full-featured platforms with hardware-backed enclaves, CI/CD integration, real-time monitoring, and audit-ready reporting. Intel SGX AI Shield, Google Confidential VM, and Fortanix Confidential AI Runtime offer comprehensive enterprise-grade confidentiality and governance.

Regulated industries (finance/healthcare/public sector)

Organizations in highly regulated sectors must prioritize tools with audit-ready dashboards, compliance reporting, and automated policy enforcement. Confidential computing platforms with multi-cloud support, such as Intel SGX AI Shield, Azure Confidential AI, or Fortanix Confidential AI Runtime, are recommended.

Budget vs premium

- Budget-conscious: Open-source or BYO tools, or lightweight runtimes for pilot projects.

- Premium: Full enterprise platforms offering multi-cloud, hybrid deployment, automated guardrails, and full compliance dashboards.

Build vs buy (when to DIY)

- DIY/Build: Suitable for testing or internal small-scale confidential AI workloads, using open-source runtimes or BYO solutions.

- Buy: Recommended for production, enterprise-scale workloads with regulatory compliance needs, leveraging Intel SGX AI Shield, Fortanix Confidential AI Runtime, or Google Confidential VM.

Implementation Playbook

30 Days – Pilot & Metrics

- Identify high-risk AI workloads to test in secure enclaves

- Deploy monitoring on pilot workloads

- Establish baseline metrics: detection accuracy, latency, false positives

- Human validation for edge cases

- Collect feedback to refine policies and integration
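One way to establish the latency baseline in the pilot is to time the same requests with and without the TEE and compare percentiles. The following is a minimal sketch, assuming you already have two lists of per-request latencies in milliseconds. The function names are illustrative, and the nearest-rank percentile is one simple choice among several.

```python
import math


def percentile(samples: list[float], pct: float) -> float:
    """Nearest-rank percentile of a non-empty list of samples."""
    if not samples:
        raise ValueError("samples must not be empty")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in the range (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(pct / 100 * len(ordered))
    return ordered[rank - 1]


def overhead_report(baseline_ms: list[float],
                    enclave_ms: list[float],
                    percentiles: tuple[int, ...] = (50, 95)) -> dict:
    """Compare latency percentiles between a plain run and a TEE run."""
    report = {}
    for pct in percentiles:
        base = percentile(baseline_ms, pct)
        tee = percentile(enclave_ms, pct)
        report[f"p{pct}"] = {
            "baseline_ms": base,
            "enclave_ms": tee,
            # Relative slowdown of the TEE run, in percent.
            "overhead_pct": (tee - base) / base * 100,
        }
    return report


# Made-up sample numbers for illustration only, not measurements.
baseline = [10.0, 12.0, 11.0, 13.0, 20.0]
enclave = [11.0, 13.2, 12.1, 14.3, 22.0]
for name, row in overhead_report(baseline, enclave).items():
    print(name, row)
```

Tracking the tail (p95) alongside the median matters because encryption overhead often shows up unevenly across requests.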

60 Days – Harden & Expand

- Integrate confidential computing into CI/CD and MLOps pipelines

- Configure dashboards, alerts, and automated policy enforcement

- Expand coverage to additional AI models and hybrid environments

- Begin compliance-ready reporting

- Train security and AI teams on monitoring and remediation

90 Days – Optimize & Scale

- Automate real-time monitoring for all AI workloads

- Fine-tune guardrails, policies, and remediation rules

- Integrate incident response and red-teaming exercises

- Optimize latency, throughput, and resource usage

- Establish enterprise-wide governance and continuous evaluation

AI-specific tasks: Red-teaming, evaluation harness, prompt/version control, incident handling, multi-tenant monitoring

Common Mistakes & How to Avoid Them

- Ignoring multi-modal workloads (text, image, audio)

- Skipping CI/CD integration

- No continuous monitoring of deployed AI workloads

- Poorly configured guardrails or policies

- Lack of human-in-the-loop verification

- Ignoring latency and cost impact

- Insufficient observability dashboards

- Not monitoring hybrid or multi-cloud workloads

- Missing audit logs for compliance

- Vendor lock-in without API abstraction

- Over-automation without testing

- Underestimating prompt-injection vulnerabilities

- Not tracking model versions or sensitive data

- No periodic policy or guardrail review
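To make the "API abstraction" point concrete, here is a minimal sketch of a provider-neutral interface. Everything in it is hypothetical: `ConfidentialRuntime`, `InferenceResult`, `FakeRuntime`, and the model name are illustrative and are not the API of any product listed in this article. Application code depends only on the interface, so switching vendors means writing a new adapter rather than changing callers.

```python
from dataclasses import dataclass
from typing import Protocol


@dataclass(frozen=True)
class InferenceResult:
    output: str
    latency_ms: float


class ConfidentialRuntime(Protocol):
    """Hypothetical interface each vendor adapter would implement."""

    def tee_type(self) -> str: ...

    def run_inference(self, model_id: str, prompt: str) -> InferenceResult: ...


class FakeRuntime:
    """In-memory stand-in for tests; performs no real encryption."""

    def tee_type(self) -> str:
        return "fake"

    def run_inference(self, model_id: str, prompt: str) -> InferenceResult:
        return InferenceResult(output=f"[{model_id}] {prompt.upper()}",
                               latency_ms=0.0)


def summarize(runtime: ConfidentialRuntime, text: str) -> str:
    """Application code that knows only the interface, not the vendor."""
    result = runtime.run_inference("summarizer-v1", text)
    return result.output


print(summarize(FakeRuntime(), "hello"))  # prints [summarizer-v1] HELLO
```

A fake implementation like this also lets teams test pipelines in CI without TEE hardware, leaving vendor-specific behavior to the adapters.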

FAQs

1. What workloads benefit from confidential computing?

AI workloads processing sensitive data, IP, or regulated datasets.

2. Can these tools integrate with CI/CD pipelines?

Yes, most enterprise solutions support automated integration for monitoring and enforcement.

3. Do they work with BYO models?

Yes, both proprietary and BYO models are supported.

4. Are they suitable for SMBs?

Some lighter-weight implementations can support SMB AI operations, but full enterprise features are optimized for large deployments.

5. Can they prevent prompt injection risks?

Partly. Guardrails and policy enforcement reduce the risk of unsafe prompts leaking sensitive data, but they do not eliminate it.

6. What observability metrics are available?

Dashboards track latency, token/cost metrics, and real-time detection alerts.

7. How frequently should workloads be evaluated?

Continuous monitoring is recommended for production AI workloads.

8. Are multi-cloud workloads supported?

Yes, hybrid and multi-cloud AI workloads are supported in most platforms.

9. Can these tools generate compliance reports?

Yes, dashboards and logs provide audit-ready evidence.

10. How is pricing structured?

Varies: subscription, tiered enterprise, or usage-based.

11. Do they affect model performance?

Yes, to some degree. Protecting data in use adds overhead, but optimized tools keep the impact on latency and throughput small.

12. Are these tools developer-friendly?

Yes, APIs and SDKs allow integration with CI/CD and MLOps pipelines.

Conclusion

Confidential Computing for AI Workloads protects sensitive data during model training and inference, ensuring enterprise compliance and security. Selecting the right platform depends on scale, regulatory requirements, and deployment complexity. SMBs may leverage lighter-weight solutions, while enterprises benefit from full-featured platforms with secure enclaves, audit-ready dashboards, and automated policy enforcement. Implementing these platforms requires a phased approach: pilot, integrate, and scale. Key next steps include shortlisting suitable platforms, piloting on critical workloads, verifying detection and compliance features, and scaling deployment across all AI systems to maintain a secure and trustworthy AI environment.