Introduction

LLM Evaluation Harnesses are tools designed to systematically test, measure, and validate the performance of large language models (LLMs) across different tasks, datasets, and real-world scenarios. Instead of relying on subjective outputs or ad hoc testing, these platforms provide structured evaluation pipelines—helping teams quantify accuracy, reliability, safety, and cost efficiency.

As AI systems become embedded in production workflows—from customer support bots to autonomous agents—evaluation is no longer optional. Organizations now need continuous validation to detect hallucinations, monitor regressions, and ensure models behave as expected under changing inputs and prompts.

Common use cases include:

- Benchmarking models before deployment

- Regression testing after prompt or model updates

- Comparing multiple LLMs for cost-performance tradeoffs

- Evaluating RAG pipelines and retrieval quality

- Red-teaming models for safety and prompt injection risks

When choosing an evaluation harness, buyers should assess criteria such as dataset flexibility, evaluation metrics, automation capabilities, integration with pipelines, support for multi-model testing, observability, scalability, cost tracking, and governance controls.

Best for: AI engineers, ML teams, CTOs, and enterprises deploying LLM-powered products at scale, especially in regulated or high-stakes environments.

Not ideal for: Small teams experimenting casually with LLMs, or use cases where manual testing is sufficient and production reliability is not critical.

What’s Changed in LLM Evaluation Harnesses

- Shift from static benchmarks to continuous evaluation pipelines integrated into CI/CD workflows

- Rise of agent evaluation (multi-step reasoning, tool use, and planning validation)

- Built-in hallucination detection and factuality scoring becoming standard

- Increased focus on prompt injection and jailbreak resistance testing

- Multimodal evaluation (text, image, audio inputs) gaining importance

- Model routing evaluation across multiple providers (cost vs quality tradeoffs)

- Real-time observability with token usage, latency, and error tracking

- Synthetic dataset generation for scalable testing

- Stronger enterprise controls: audit logs, role-based access, and governance layers

- Evaluation tied to business KPIs (conversion, support resolution, etc.)

Quick Buyer Checklist (Scan-Friendly)

- Clear data privacy and retention policies

- Support for multiple models (hosted + BYO)

- Ability to test RAG pipelines and retrieval quality

- Built-in evaluation metrics (accuracy, toxicity, hallucination)

- Guardrails and adversarial testing features

- Cost and latency tracking across experiments

- Integration with CI/CD pipelines

- Audit logs and admin controls

- Support for custom datasets and scenarios

- Low vendor lock-in with exportable results

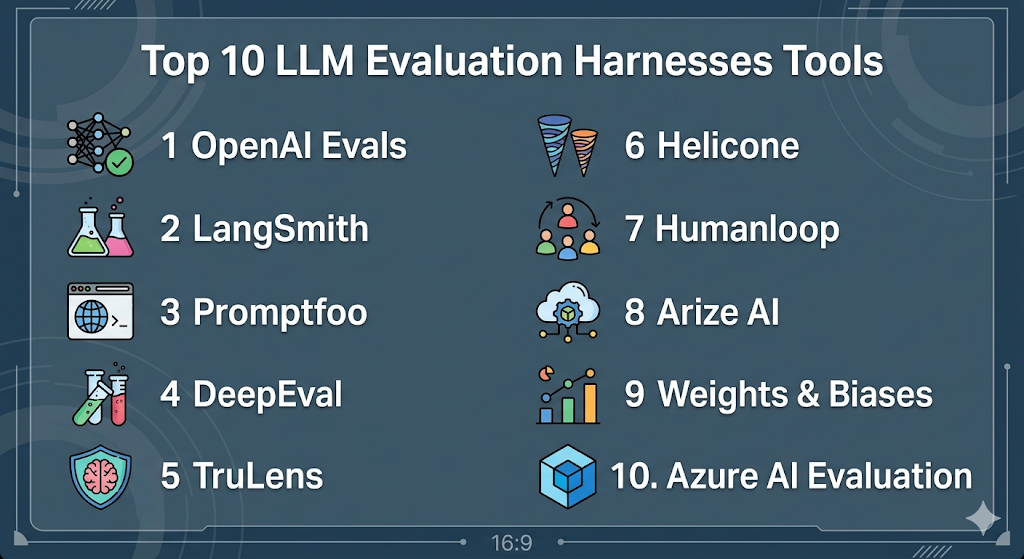

Top 10 LLM Evaluation Harnesses Tools

1 — OpenAI Evals

One-line verdict: Best for developers seeking flexible, code-first evaluation pipelines tightly integrated with OpenAI models.

Short description:

A framework designed for evaluating LLM outputs using structured datasets and test cases. Widely used by developers for regression testing and benchmarking model performance.

Standout Capabilities

- Code-based evaluation workflows for flexibility and automation

- Supports custom benchmarks and datasets

- Regression testing across model versions and prompts

- Community-driven evaluation templates

- Integration with model APIs for seamless testing

AI-Specific Depth

- Model support: Proprietary + BYO model (via API integration)

- RAG / knowledge integration: Limited / custom implementation required

- Evaluation: Prompt tests, regression, dataset-based evaluation

- Guardrails: Basic / custom logic required

- Observability: Limited built-in metrics

Pros

- Highly customizable evaluation workflows

- Strong developer community support

- Ideal for CI/CD integration

Cons

- Requires engineering effort to set up

- Limited UI for non-technical users

- Minimal built-in guardrails

Security & Compliance

Not publicly stated

Deployment & Platforms

- Platforms: Web, CLI

- Deployment: Cloud / Local

Integrations & Ecosystem

Supports integration with APIs and developer tooling, making it easy to embed evaluation into ML pipelines.

- API-based workflows

- Python SDK

- CI/CD tools

- Custom datasets

Pricing Model

Not publicly stated

Best-Fit Scenarios

- Model regression testing pipelines

- Benchmarking prompt changes

- Developer-driven evaluation workflows

2 — LangSmith

One-line verdict: Best for teams building LLM apps who need observability combined with evaluation workflows.

Short description:

A platform focused on tracing, debugging, and evaluating LLM applications, particularly those built with orchestration frameworks.

Standout Capabilities

- End-to-end tracing of LLM calls

- Built-in evaluation workflows for prompts and chains

- Dataset management for testing

- Visual debugging interface

- Supports agent workflows

AI-Specific Depth

- Model support: Multi-model

- RAG / knowledge integration: Strong support

- Evaluation: Prompt tests, regression, human feedback

- Guardrails: Basic safety checks

- Observability: Detailed tracing and metrics

Pros

- Combines observability with evaluation

- Strong ecosystem integration

- Easy debugging for complex workflows

Cons

- Best suited for specific frameworks

- Some features may require setup effort

- Limited standalone benchmarking capabilities

Security & Compliance

Not publicly stated

Deployment & Platforms

- Platforms: Web

- Deployment: Cloud

Integrations & Ecosystem

Works closely with LLM orchestration tools and APIs, enabling full lifecycle visibility.

- SDKs

- API integrations

- Dataset tools

- Workflow tracing

Pricing Model

Tiered / usage-based

Best-Fit Scenarios

- Debugging LLM pipelines

- Evaluating agent workflows

- Monitoring production systems

3 — Promptfoo

One-line verdict: Best lightweight tool for prompt testing and quick evaluation across multiple LLM providers.

Short description:

An open-source tool designed to test prompts against different models and compare outputs quickly and efficiently.

Standout Capabilities

- CLI-based prompt testing

- Multi-model comparison

- Custom assertions and scoring

- Simple configuration setup

- Local execution support

AI-Specific Depth

- Model support: Multi-model / BYO

- RAG / knowledge integration: Limited

- Evaluation: Prompt testing, assertions

- Guardrails: Custom rules

- Observability: Basic

Pros

- Easy to get started

- Lightweight and fast

- Open-source flexibility

Cons

- Limited enterprise features

- Minimal observability

- Not designed for large-scale pipelines

Security & Compliance

Not publicly stated

Deployment & Platforms

- Platforms: CLI

- Deployment: Local / Cloud

Integrations & Ecosystem

Focused on developer workflows with minimal overhead.

- CLI tools

- Config-based testing

- API support

Pricing Model

Open-source

Best-Fit Scenarios

- Prompt experimentation

- Quick model comparisons

- Developer testing workflows

4 — DeepEval

One-line verdict: Best for teams needing automated evaluation metrics like hallucination detection and answer relevance.

Short description:

A specialized evaluation framework focused on measuring LLM output quality using predefined and custom metrics.

Standout Capabilities

- Built-in hallucination detection

- Answer relevance scoring

- Dataset-based evaluation

- Automated scoring pipelines

- Python-based workflows

AI-Specific Depth

- Model support: Multi-model

- RAG / knowledge integration: Supported

- Evaluation: Automated metrics, regression testing

- Guardrails: Limited

- Observability: Basic

Pros

- Strong focus on evaluation quality

- Easy integration with Python workflows

- Useful for RAG validation

Cons

- Limited UI

- Requires technical setup

- Narrow focus compared to full platforms

Security & Compliance

Not publicly stated

Deployment & Platforms

- Platforms: Python

- Deployment: Local / Cloud

Integrations & Ecosystem

Integrates well with ML pipelines and evaluation workflows.

- Python SDK

- Dataset integration

- API workflows

Pricing Model

Varies / N/A

Best-Fit Scenarios

- RAG evaluation

- Quality scoring

- Automated testing pipelines

5 — TruLens

One-line verdict: Best for evaluating and monitoring LLM applications with strong feedback and scoring mechanisms.

Short description:

An open-source tool designed for tracking, evaluating, and improving LLM outputs with feedback loops.

Standout Capabilities

- Feedback-based evaluation

- LLM output tracking

- Custom scoring metrics

- RAG evaluation tools

- Visualization dashboards

AI-Specific Depth

- Model support: Multi-model

- RAG / knowledge integration: Strong

- Evaluation: Feedback-based scoring

- Guardrails: Limited

- Observability: Good

Pros

- Strong evaluation flexibility

- Open-source

- Good for RAG workflows

Cons

- Setup complexity

- Limited enterprise features

- UI may be basic

Security & Compliance

Not publicly stated

Deployment & Platforms

- Platforms: Web / Python

- Deployment: Local / Cloud

Integrations & Ecosystem

Supports integration with modern LLM stacks and pipelines.

- APIs

- Python SDK

- RAG tools

Pricing Model

Open-source

Best-Fit Scenarios

- Feedback-driven evaluation

- RAG system validation

- Continuous monitoring

6 — Helicone

One-line verdict: Best for teams needing lightweight observability with basic evaluation insights for production LLM APIs.

Short description:

Helicone is primarily an LLM observability platform that also enables evaluation workflows by tracking requests, responses, and performance metrics. It’s widely used to monitor production AI systems and identify issues in real time.

Standout Capabilities

- Request-level logging for every LLM interaction with detailed metadata

- Built-in dashboards for latency, token usage, and cost tracking

- Replay and debugging capabilities for failed or low-quality outputs

- Supports multi-provider tracking in a single interface

- Lightweight proxy-based integration requiring minimal code changes

- Enables basic evaluation through log analysis and scoring

- Real-time monitoring for production systems

AI-Specific Depth

- Model support: Multi-model / BYO

- RAG / knowledge integration: Limited

- Evaluation: Log-based analysis, basic scoring

- Guardrails: Limited

- Observability: Strong (core strength)

Pros

- Extremely easy to integrate using proxy approach

- Strong visibility into cost and latency

- Useful for production monitoring at scale

Cons

- Not a full-featured evaluation harness

- Limited built-in evaluation metrics

- Requires external tools for deeper analysis

Security & Compliance

Not publicly stated

Deployment & Platforms

- Platforms: Web

- Deployment: Cloud

Integrations & Ecosystem

Designed to plug into existing LLM pipelines without heavy setup, making it ideal for teams already in production.

- API proxy integration

- Supports major LLM providers

- Logging pipelines

- Observability dashboards

- Developer tooling

Pricing Model

Usage-based / tiered

Best-Fit Scenarios

- Monitoring production LLM APIs

- Tracking cost and latency metrics

- Debugging real-world failures

7 — Humanloop

One-line verdict: Best for product teams combining prompt management, evaluation, and human feedback loops.

Short description:

Humanloop provides a collaborative platform for prompt engineering, evaluation, and continuous improvement using human-in-the-loop feedback systems.

Standout Capabilities

- Prompt versioning and experimentation workflows

- Built-in human review and annotation tools

- Dataset management for evaluation pipelines

- Feedback loops for improving model outputs

- UI-driven evaluation workflows for non-technical users

- Supports iterative prompt refinement

- Combines product and ML workflows

AI-Specific Depth

- Model support: Multi-model

- RAG / knowledge integration: Supported

- Evaluation: Human feedback, regression testing

- Guardrails: Basic

- Observability: Moderate

Pros

- Strong collaboration between product and engineering teams

- Human-in-the-loop evaluation improves quality

- Easy-to-use interface

Cons

- Limited deep technical evaluation metrics

- May not scale easily for large enterprises

- Some workflows require manual effort

Security & Compliance

Not publicly stated

Deployment & Platforms

- Platforms: Web

- Deployment: Cloud

Integrations & Ecosystem

Focuses on bridging human feedback with model evaluation workflows.

- APIs

- Prompt management tools

- Dataset pipelines

- Annotation systems

- Model integrations

Pricing Model

Tiered / usage-based

Best-Fit Scenarios

- Prompt optimization workflows

- Human-reviewed evaluation pipelines

- Product-focused AI teams

8 — Arize AI

One-line verdict: Best enterprise-grade platform for monitoring, evaluating, and debugging production AI systems at scale.

Short description:

Arize AI is a comprehensive ML observability and evaluation platform that helps organizations track performance, detect issues, and continuously improve models in production environments.

Standout Capabilities

- End-to-end model observability with drift detection

- Advanced evaluation metrics and analytics

- Root cause analysis for model failures

- Strong support for production monitoring

- Scalable infrastructure for enterprise workloads

- Visualization dashboards for performance tracking

- Integration with ML pipelines and data systems

AI-Specific Depth

- Model support: Multi-model

- RAG / knowledge integration: Supported

- Evaluation: Advanced metrics, regression testing

- Guardrails: Limited / custom

- Observability: Strong (core strength)

Pros

- Enterprise-grade scalability

- Deep insights into model behavior

- Strong monitoring capabilities

Cons

- Complex setup

- Higher cost compared to smaller tools

- May be overkill for small teams

Security & Compliance

- SSO/SAML: Supported

- RBAC: Supported

- Audit logs: Supported

- Encryption: Supported

- Certifications: Not publicly stated

Deployment & Platforms

- Platforms: Web

- Deployment: Cloud / Hybrid

Integrations & Ecosystem

Built for integration into large-scale ML systems and enterprise workflows.

- APIs

- Data pipelines

- ML platforms

- Monitoring tools

- Analytics systems

Pricing Model

Enterprise / tiered

Best-Fit Scenarios

- Large-scale production AI systems

- Continuous monitoring and evaluation

- Enterprise ML operations

9 — Weights & Biases (W&B)

One-line verdict: Best for ML teams needing experiment tracking combined with evaluation and model performance insights.

Short description:

Weights & Biases is a popular ML platform that enables experiment tracking, model evaluation, and collaboration across teams building AI systems.

Standout Capabilities

- Experiment tracking for model training and evaluation

- Visualization dashboards for performance metrics

- Dataset versioning and management

- Collaboration tools for ML teams

- Supports large-scale experimentation workflows

- Integration with training pipelines

- Strong community adoption

AI-Specific Depth

- Model support: Multi-model

- RAG / knowledge integration: Limited

- Evaluation: Experiment tracking, metrics

- Guardrails: N/A

- Observability: Strong for experiments

Pros

- Widely adopted and well-supported

- Excellent visualization tools

- Strong collaboration features

Cons

- Not a dedicated LLM evaluation harness

- Limited guardrail capabilities

- Requires integration effort

Security & Compliance

Not publicly stated

Deployment & Platforms

- Platforms: Web

- Deployment: Cloud / Self-hosted

Integrations & Ecosystem

Deeply integrated into ML development workflows and tooling.

- Python SDK

- ML frameworks

- Data pipelines

- Experiment tracking tools

- APIs

Pricing Model

Tiered / usage-based

Best-Fit Scenarios

- Experiment tracking

- Model evaluation during training

- Collaborative ML workflows

10 — Azure AI Evaluation

One-line verdict: Best for enterprises already in Microsoft ecosystem needing integrated evaluation and governance.

Short description:

Azure AI Evaluation provides tools for testing, benchmarking, and validating AI models within the broader Azure ecosystem, focusing on enterprise use cases.

Standout Capabilities

- Integrated evaluation within cloud AI workflows

- Support for enterprise governance and compliance

- Built-in benchmarking tools

- Scalable infrastructure

- Integration with cloud services and pipelines

- Supports production deployment workflows

- Security-focused architecture

AI-Specific Depth

- Model support: Proprietary + BYO

- RAG / knowledge integration: Supported

- Evaluation: Benchmarking, regression testing

- Guardrails: Supported

- Observability: Moderate

Pros

- Strong enterprise integration

- Built-in governance features

- Scalable infrastructure

Cons

- Ecosystem dependency

- Limited flexibility outside platform

- Learning curve for new users

Security & Compliance

- SSO/SAML: Supported

- RBAC: Supported

- Audit logs: Supported

- Encryption: Supported

- Certifications: Not publicly stated

Deployment & Platforms

- Platforms: Web

- Deployment: Cloud

Integrations & Ecosystem

Deep integration with enterprise cloud and AI services.

- Cloud services

- APIs

- Data pipelines

- Enterprise systems

- DevOps tools

Pricing Model

Usage-based / enterprise

Best-Fit Scenarios

- Enterprise AI deployments

- Regulated environments

- Cloud-native AI systems

Comparison Table (Top 10)

| Tool Name | Best For | Deployment | Model Flexibility | Strength | Watch-Out | Public Rating |

|---|---|---|---|---|---|---|

| LangSmith | End-to-end LLM debugging & eval | Cloud / Hybrid | Multi-model / BYO | Deep tracing + evaluation | Paid scaling complexity | N/A |

| Weights & Biases (W&B) | Experiment tracking + eval | Cloud / Self-hosted | Multi-model / BYO | Strong experiment tracking | Learning curve | N/A |

| Promptfoo | CLI-based automated evaluation | Local / CI pipelines | Multi-model / BYO | Dev-first evaluation automation | Limited UI | N/A |

| DeepEval | Open-source evaluation testing | Local / Self-hosted | Open-source / BYO | Lightweight + extensible | Limited enterprise features | N/A |

| OpenAI Evals | Research-grade model evaluation | Local / Cloud | OpenAI models primarily | Benchmark-style evaluations | Narrow model support | N/A |

| TruLens | Explainability + evaluation | Local / Cloud | Multi-model / BYO | Feedback + interpretability | Setup complexity | N/A |

| Ragas | RAG-specific evaluation | Local / Python | Open-source / BYO | RAG metric specialization | Limited beyond RAG | N/A |

| MLflow | Experiment + evaluation tracking | Cloud / Self-hosted | Multi-model / BYO | Mature ML lifecycle tool | Not LLM-native | N/A |

| Helicone | Observability + lightweight eval | Cloud / Proxy-based | Multi-model | Real-time monitoring | Limited deep eval capabilities | N/A |

| Arize AI | Enterprise observability + eval | Cloud / Hybrid | Multi-model / BYO | Production monitoring + eval | Enterprise complexity | N/A |

Scoring & Evaluation (Transparent Rubric)

Scoring below is comparative, not absolute. Each tool is evaluated across core capabilities, reliability, safety, ecosystem, usability, performance, security, and support. Scores reflect practical usage patterns across teams rather than theoretical capability.

| Tool | Core | Reliability/Eval | Guardrails | Integrations | Ease | Perf/Cost | Security/Admin | Support | Weighted Total |

|---|---|---|---|---|---|---|---|---|---|

| LangSmith | 9 | 9 | 8 | 9 | 8 | 8 | 8 | 8 | 8.6 |

| W&B | 9 | 8 | 7 | 9 | 7 | 7 | 8 | 9 | 8.1 |

| Promptfoo | 7 | 8 | 6 | 7 | 9 | 9 | 6 | 7 | 7.7 |

| DeepEval | 7 | 7 | 6 | 6 | 8 | 9 | 6 | 7 | 7.3 |

| OpenAI Evals | 8 | 8 | 6 | 6 | 7 | 7 | 6 | 7 | 7.4 |

| TruLens | 8 | 9 | 7 | 7 | 6 | 7 | 7 | 7 | 7.6 |

| Ragas | 7 | 8 | 6 | 6 | 8 | 9 | 6 | 7 | 7.5 |

| MLflow | 8 | 7 | 6 | 9 | 6 | 7 | 8 | 9 | 7.8 |

| Helicone | 7 | 7 | 6 | 8 | 9 | 9 | 6 | 7 | 7.6 |

| Arize AI | 9 | 9 | 8 | 8 | 6 | 7 | 9 | 8 | 8.4 |

Top 3 for Enterprise

- Arize AI

- LangSmith

- Weights & Biases

Top 3 for SMB

- LangSmith

- Promptfoo

- Helicone

Top 3 for Developers

- Promptfoo

- DeepEval

- Ragas

Which LLM Evaluation Harness Is Right for You?

Solo / Freelancer

Lightweight tools like Promptfoo are ideal for quick testing without heavy setup.

SMB

TruLens or DeepEval provide balance between usability and functionality.

Mid-Market

LangSmith offers strong observability and evaluation combined.

Enterprise

Arize AI and Azure AI Evaluation provide governance, scalability, and security.

Regulated industries

Choose platforms with audit logs, data controls, and compliance support.

Budget vs premium

Open-source tools offer flexibility; enterprise tools offer reliability and support.

Build vs buy

DIY if you have ML expertise; otherwise, use managed platforms.

Implementation Playbook (30 / 60 / 90 Days)

30 Days:

- Define evaluation metrics

- Build pilot datasets

- Run baseline evaluations

60 Days:

- Add automated testing pipelines

- Implement guardrails

- Integrate observability tools

90 Days:

- Optimize cost and latency

- Scale evaluation coverage

- Add governance and audit systems

Common Mistakes & How to Avoid Them

- Ignoring hallucination risks

- Not testing edge cases

- Lack of evaluation datasets

- Overlooking prompt injection attacks

- No cost monitoring

- Poor observability

- Over-automation without review

- Vendor lock-in

- Weak governance controls

FAQs

1. What is an LLM evaluation harness?

A framework that helps test and measure LLM performance systematically using datasets and metrics.

2. Why is evaluation important?

It ensures reliability, accuracy, and safety before deploying models in production.

3. Can I use multiple models?

Yes, most tools support multi-model evaluation.

4. Do these tools support RAG?

Some do, especially those focused on application-level evaluation.

5. Are open-source tools enough?

For small teams, yes. Enterprises often need additional features.

6. What about privacy?

Varies by tool; always verify data handling policies.

7. Can I automate evaluation?

Yes, many tools support CI/CD integration.

8. Do I need coding skills?

Some tools require technical expertise.

9. How do I measure hallucinations?

Using specialized evaluation metrics and datasets.

10. Are these tools expensive?

Pricing varies; open-source options are available.

11. Can I switch tools later?

Yes, but migration effort varies.

12. What’s the biggest risk?

Lack of proper evaluation leading to unreliable AI systems.

Conclusion

LLM evaluation harnesses have become essential for building reliable, scalable AI systems, but the right choice depends heavily on your team’s technical depth, deployment scale, and need for observability or governance; start by shortlisting a few tools, run a focused pilot with real use cases, and only then scale after validating evaluation quality, security controls, and cost efficiency.