Introduction

Tool-calling middleware for AI agents acts as the bridge between large language models and external tools, APIs, and systems. Instead of generating static responses, modern AI agents can dynamically invoke functions, query databases, trigger workflows, or interact with enterprise systems. This middleware layer standardizes how agents discover, select, and execute tools safely and reliably.

This category has become critical as AI systems shift toward agentic workflows—where models plan, reason, and take actions autonomously. Organizations now expect AI to integrate deeply into business processes like customer support, data analysis, DevOps automation, and internal knowledge retrieval.
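The discover, select, and execute cycle described above can be sketched in a few lines. This is a minimal, library-agnostic sketch, not any vendor's API. The `ToolRegistry` class, the `get_order_status` tool, and the `{"name", "arguments"}` call shape are all hypothetical. Tool selection happens inside the model, so the model's choice is simulated here as a plain dict.

```python
import json
from typing import Any, Callable, Dict


class ToolRegistry:
    """Hypothetical registry: maps tool names to callables plus descriptions."""

    def __init__(self) -> None:
        self._tools: Dict[str, Callable[..., Any]] = {}
        self._descriptions: Dict[str, str] = {}

    def register(self, name: str, description: str):
        """Decorator that adds a function to the registry (discovery)."""
        def wrap(fn: Callable[..., Any]) -> Callable[..., Any]:
            self._tools[name] = fn
            self._descriptions[name] = description
            return fn
        return wrap

    def catalog(self) -> Dict[str, str]:
        """What the middleware would advertise to the model."""
        return dict(self._descriptions)

    def execute(self, tool_call: Dict[str, str]) -> Any:
        """Run a model-proposed call shaped like {"name": ..., "arguments": "<json>"}."""
        name = tool_call["name"]
        if name not in self._tools:
            raise KeyError(f"unknown tool: {name}")
        args = json.loads(tool_call["arguments"])
        return self._tools[name](**args)


registry = ToolRegistry()


@registry.register("get_order_status", "Look up the status of an order by ID.")
def get_order_status(order_id: str) -> str:
    # Stand-in for a real API or database lookup.
    return {"A100": "shipped"}.get(order_id, "not found")


# Simulated model output choosing a tool (in practice the LLM produces this).
proposed = {"name": "get_order_status", "arguments": '{"order_id": "A100"}'}
print(registry.execute(proposed))  # shipped
```

Real middleware adds a good deal around `execute`, such as validation, permissions, and logging. The core contract stays the same: the model proposes a name plus arguments, and the middleware decides whether and how to run it.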

Real-world use cases include:

- Automating multi-step business workflows

- Connecting AI agents to APIs and databases

- Enabling real-time decision systems

- Building autonomous copilots for operations and engineering

- Orchestrating multi-agent collaboration

When evaluating these platforms, buyers should consider:

- Tool/function calling reliability

- Model compatibility (open vs proprietary)

- Latency and cost efficiency

- Observability and debugging

- Security and guardrails

- Integration flexibility

- Evaluation and testing capabilities

- Vendor lock-in risks

- Scalability and deployment options

- Governance and auditability

Best for: AI engineers, platform teams, CTOs, and enterprises building production-grade AI agents with real-world integrations.

Not ideal for: Simple chatbot use cases or teams that only need basic prompt-response systems without external tool execution.

What’s Changed in Tool-Calling Middleware for Agents

- Shift from single-agent systems to multi-agent orchestration

- Native support for structured tool/function calling APIs

- Increased adoption of multimodal tool inputs (text, image, audio)

- Stronger guardrails against prompt injection and unsafe tool execution

- Built-in evaluation frameworks for reliability and regression testing

- Model routing across multiple LLM providers

- Improved observability with tracing and execution logs

- Cost-aware execution and dynamic tool selection

- Better support for private and on-prem deployments

- Standardization efforts such as JSON Schema tool definitions and agent protocols like the Model Context Protocol (MCP)

- Growing need for governance, audit logs, and compliance controls
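Most of these standardization efforts share one element: the tool schema, which consists of a name, a description, and a JSON Schema for the parameters. The hypothetical helper below, `function_to_schema`, builds such a definition from a Python function's type hints using only the standard library. The outer envelope varies by provider and protocol (for example, `parameters` versus `input_schema`), so the exact output shape here is an assumption.

```python
import inspect
import json
from typing import get_type_hints

# Assumed mapping from Python annotations to JSON Schema type names.
_JSON_TYPES = {str: "string", int: "integer", float: "number", bool: "boolean"}


def function_to_schema(fn) -> dict:
    """Build a JSON Schema tool definition from a function's signature and docstring."""
    hints = get_type_hints(fn)
    properties, required = {}, []
    for name, param in inspect.signature(fn).parameters.items():
        # Unannotated or unknown types fall back to "string" (a simplification).
        properties[name] = {"type": _JSON_TYPES.get(hints.get(name), "string")}
        if param.default is inspect.Parameter.empty:
            required.append(name)  # parameters without defaults are required
    return {
        "name": fn.__name__,
        "description": inspect.getdoc(fn) or "",
        "parameters": {"type": "object", "properties": properties, "required": required},
    }


def search_tickets(query: str, limit: int = 5) -> list:
    """Search support tickets by keyword."""
    return []


print(json.dumps(function_to_schema(search_tickets), indent=2))
```

Generating the schema from code keeps the tool's contract and implementation in sync. That is one practical way to reduce drift when the same tool is exposed through more than one framework.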

Quick Buyer Checklist

- Does it support secure tool execution with permission controls?

- Can you use your own models (BYO) or open-source LLMs?

- Does it integrate with vector databases or RAG pipelines?

- Are evaluation and testing tools available?

- Does it include guardrails against prompt injection?

- How strong is observability (logs, traces, debugging)?

- Can it optimize latency and cost dynamically?

- Are audit logs and admin controls available?

- Does it support cloud, self-hosted, or hybrid deployment?

- What is the level of vendor lock-in?
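The first and eighth checklist items, permission controls and audit logs, can be illustrated together. This is a minimal sketch under assumed names: `GuardedExecutor`, the roles, and the `read_invoice` / `issue_refund` tools are hypothetical. It shows role-based allowlisting, where every attempt (allowed or denied) is recorded before anything runs.

```python
from datetime import datetime, timezone
from typing import Any, Callable, Dict, List, Set


class GuardedExecutor:
    """Runs tools only if the caller's role allows them, and logs every attempt."""

    def __init__(self, tools: Dict[str, Callable[..., Any]], grants: Dict[str, Set[str]]):
        self.tools = tools    # tool name -> callable
        self.grants = grants  # role -> allowed tool names (hypothetical policy)
        self.audit_log: List[dict] = []

    def run(self, role: str, tool: str, **kwargs: Any) -> Any:
        allowed = tool in self.grants.get(role, set())
        # Log before executing so denied attempts are also auditable.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "role": role, "tool": tool, "args": kwargs, "allowed": allowed,
        })
        if not allowed:
            raise PermissionError(f"role {role!r} may not call {tool!r}")
        return self.tools[tool](**kwargs)


executor = GuardedExecutor(
    tools={
        "read_invoice": lambda invoice_id: f"invoice {invoice_id}",
        "issue_refund": lambda invoice_id, amount: f"refunded {amount}",
    },
    grants={
        "support_agent": {"read_invoice"},
        "billing_agent": {"read_invoice", "issue_refund"},
    },
)

print(executor.run("support_agent", "read_invoice", invoice_id="INV-7"))  # invoice INV-7
try:
    executor.run("support_agent", "issue_refund", invoice_id="INV-7", amount=20)
except PermissionError as err:
    print("blocked:", err)
print(len(executor.audit_log))  # 2
```

When comparing platforms, the useful question is where this check lives. It may be built in, configurable per agent, or left entirely to your own code.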

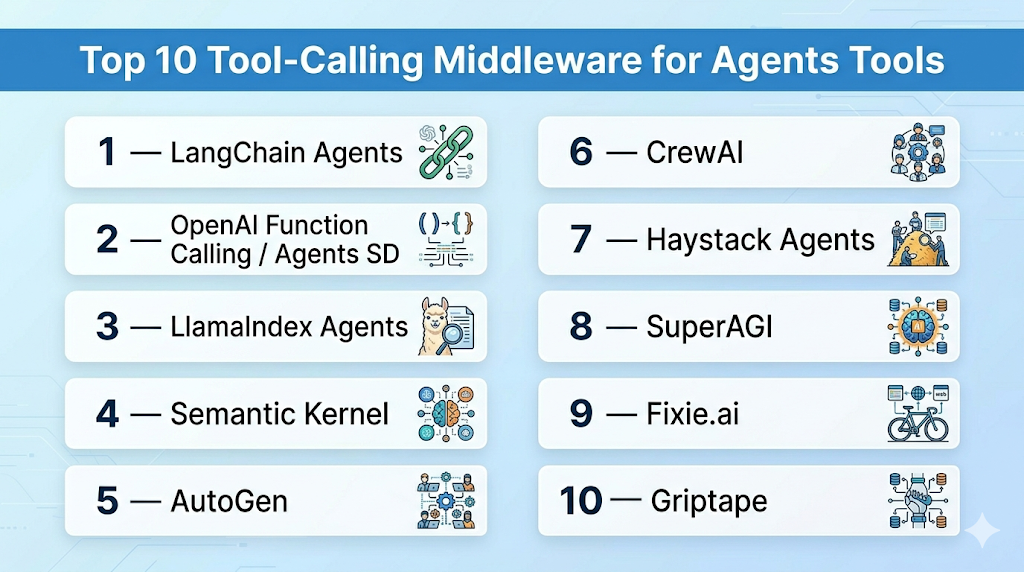

Top 10 Tool-Calling Middleware Tools for Agents

1 — LangChain Agents

One-line verdict: Best for developers building flexible, customizable agent workflows with extensive tool integrations.

Short description:

LangChain Agents provide a modular framework to connect LLMs with tools, APIs, and workflows. Widely used by developers for building agent-based systems.
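Agent frameworks such as LangChain run a loop at their core: ask the model, execute any tool it requests, feed the result back, and repeat until the model produces a final answer. The sketch below is a framework-agnostic version of that loop and is not LangChain's API. `scripted_model` is a hypothetical stand-in for an LLM call so the example runs offline.

```python
import json
from typing import Callable, Dict, List


def scripted_model(messages: List[dict]) -> dict:
    """Hypothetical LLM stand-in: returns either a tool call or a final answer."""
    tool_results = [m for m in messages if m["role"] == "tool"]
    if not tool_results:
        return {"tool": "add", "arguments": json.dumps({"a": 2, "b": 3})}
    if len(tool_results) == 1:
        prev = int(tool_results[0]["content"])
        return {"tool": "multiply", "arguments": json.dumps({"a": prev, "b": 4})}
    return {"final": f"The result is {tool_results[-1]['content']}"}


TOOLS: Dict[str, Callable[..., int]] = {
    "add": lambda a, b: a + b,
    "multiply": lambda a, b: a * b,
}


def run_agent(question: str, model=scripted_model, max_steps: int = 5) -> str:
    """Plan-act-observe loop with a step limit to prevent runaway execution."""
    messages = [{"role": "user", "content": question}]
    for _ in range(max_steps):
        decision = model(messages)
        if "final" in decision:
            return decision["final"]
        result = TOOLS[decision["tool"]](**json.loads(decision["arguments"]))
        messages.append({"role": "tool", "name": decision["tool"], "content": str(result)})
    raise RuntimeError("agent did not finish within max_steps")


print(run_agent("What is (2 + 3) * 4?"))  # The result is 20
```

The `max_steps` cap is a simple safeguard against loops that never converge. Production frameworks typically expose a similar limit.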

Standout Capabilities

- Extensive tool integration ecosystem

- Flexible agent planning and execution

- Built-in memory and context handling

- Supports chains and multi-step workflows

- Strong community and ecosystem

- Works with multiple LLM providers

AI-Specific Depth

- Model support: Multi-model routing, BYO model

- RAG / knowledge integration: Strong support with vector DBs

- Evaluation: Basic; extended via ecosystem tools

- Guardrails: Varies / N/A

- Observability: Available via integrations

Pros

- Highly flexible and customizable

- Large ecosystem and community support

- Works with most major LLMs

Cons

- Can become complex at scale

- Requires engineering effort

- Native guardrails limited

Security & Compliance

Not publicly stated

Deployment & Platforms

- Python/JavaScript

- Cloud/Self-hosted

Integrations & Ecosystem

Strong ecosystem with APIs, SDKs, and connectors:

- Vector databases

- LLM providers

- APIs and custom tools

- Data sources

Pricing Model

Open-source with optional enterprise tooling

Best-Fit Scenarios

- Building custom AI agents

- Prototyping agent workflows

- Developer-focused experimentation

2 — OpenAI Function Calling / Agents SDK

One-line verdict: Best for teams needing reliable, structured tool-calling tightly integrated with proprietary models.

Short description:

OpenAI's API supports structured function (tool) calling, and the Agents SDK adds agent orchestration on top. Both are tightly integrated with OpenAI's models, enabling reliable tool execution.
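A function-calling round trip has three parts: declare the tool, read the model's requested call, and send the result back. The sketch below is modeled on the Chat Completions `tools` / `tool_calls` / `"role": "tool"` message shapes. The Responses API and the Agents SDK use different structures, so verify field names against current OpenAI documentation. The model response is mocked, so no network call or API key is involved, and `get_weather` is a hypothetical local function.

```python
import json

# Tool definition in the Chat Completions "tools" format (JSON Schema parameters).
TOOLS = [{
    "type": "function",
    "function": {
        "name": "get_weather",
        "description": "Get the current temperature for a city.",
        "parameters": {
            "type": "object",
            "properties": {"city": {"type": "string"}},
            "required": ["city"],
        },
    },
}]


def get_weather(city: str) -> dict:
    # Hypothetical local implementation; a real tool would call a weather API.
    return {"city": city, "temp_c": 18}


# Mocked assistant message requesting a tool call.
assistant_msg = {
    "role": "assistant",
    "content": None,
    "tool_calls": [{
        "id": "call_1",
        "type": "function",
        "function": {"name": "get_weather", "arguments": '{"city": "Paris"}'},
    }],
}


def handle_tool_calls(message: dict) -> list:
    """Execute each requested call and build the 'tool' messages to send back."""
    registry = {"get_weather": get_weather}
    replies = []
    for call in message.get("tool_calls") or []:
        fn = registry[call["function"]["name"]]
        args = json.loads(call["function"]["arguments"])  # arguments arrive as a JSON string
        replies.append({
            "role": "tool",
            "tool_call_id": call["id"],  # links the result to the specific request
            "content": json.dumps(fn(**args)),
        })
    return replies


print(handle_tool_calls(assistant_msg))
```

Note that the arguments arrive as a JSON string, not a parsed object. Parsing and validating them is the application's job.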

Standout Capabilities

- Native function calling support

- High reliability in tool execution

- Tight integration with models

- Structured JSON outputs

- Simplified developer experience

AI-Specific Depth

- Model support: Proprietary

- RAG / knowledge integration: Basic / via APIs

- Evaluation: Limited native tools

- Guardrails: Built-in safety layers

- Observability: Basic

Pros

- Reliable tool execution

- Easy to implement

- Strong model performance

Cons

- Vendor lock-in risk

- Limited customization

- Less control vs open frameworks

Security & Compliance

Not publicly stated

Deployment & Platforms

- Cloud-based

Integrations & Ecosystem

- APIs

- SDKs

- External tools

- Function schemas

Pricing Model

Usage-based

Best-Fit Scenarios

- Production-grade assistants

- API-driven workflows

- Fast deployment use cases

3 — LlamaIndex Agents

One-line verdict: Best for data-centric agent workflows with strong retrieval and knowledge integration.

Short description:

LlamaIndex focuses on connecting LLMs with structured and unstructured data sources, enabling tool calling within data pipelines.
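Data-centric frameworks often expose retrieval itself as a tool that the agent can call. The sketch below is not LlamaIndex's API. It uses simple keyword overlap in place of the embedding similarity a vector store would compute, and the documents and the `search_knowledge_base` name are hypothetical.

```python
import re
from collections import Counter
from typing import List

# Tiny in-memory corpus (hypothetical content).
DOCS = {
    "vpn.md": "To reset your VPN password, open the self-service portal and choose Reset.",
    "expenses.md": "Submit expense reports within 30 days using the finance portal.",
    "onboarding.md": "New hires receive laptop and VPN access on their first day.",
}


def _tokens(text: str) -> Counter:
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


# "Index" built once up front, as a real pipeline would do at ingestion time.
INDEX = {doc_id: _tokens(text) for doc_id, text in DOCS.items()}


def search_knowledge_base(query: str, top_k: int = 2) -> List[str]:
    """Tool the agent can call: rank documents by overlapping query terms."""
    q = _tokens(query)
    scored = []
    for doc_id, counts in INDEX.items():
        score = sum(min(q[t], counts[t]) for t in q)
        if score > 0:
            scored.append((score, doc_id))
    scored.sort(key=lambda pair: (-pair[0], pair[1]))  # best score first, ties by id
    return [DOCS[doc_id] for _, doc_id in scored[:top_k]]


for passage in search_knowledge_base("How do I reset my VPN password?"):
    print(passage)
```

Because retrieval is just another tool, the agent decides when to look something up rather than receiving context on every turn.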

Standout Capabilities

- Strong RAG integration

- Data connectors and indexing

- Agent workflows with tools

- Flexible data pipelines

- Multi-source querying

AI-Specific Depth

- Model support: Multi-model / BYO

- RAG / knowledge integration: Strong

- Evaluation: Basic

- Guardrails: Varies / N/A

- Observability: Limited

Pros

- Excellent for data-heavy use cases

- Easy integration with databases

- Flexible architecture

Cons

- Less focus on orchestration

- Limited guardrails

- Requires setup effort

Security & Compliance

Not publicly stated

Deployment & Platforms

- Cloud/Self-hosted

Integrations & Ecosystem

- Databases

- APIs

- Vector stores

- Data pipelines

Pricing Model

Open-source + enterprise options

Best-Fit Scenarios

- Knowledge assistants

- Data retrieval agents

- Internal enterprise tools

4 — Semantic Kernel

One-line verdict: Best for enterprise developers integrating AI agents into structured application workflows.

Short description:

Semantic Kernel provides orchestration and tool-calling capabilities with strong integration into enterprise ecosystems.
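A plugin-based architecture groups related functions under a named plugin and exposes them to the model by qualified name. The sketch below illustrates that idea only and is not Semantic Kernel's actual API. `Plugin`, `PluginHost`, the `HR` plugin, and the dotted naming scheme are hypothetical.

```python
from typing import Any, Callable, Dict, List


class Plugin:
    """A named group of related functions."""

    def __init__(self, name: str):
        self.name = name
        self.functions: Dict[str, Callable[..., Any]] = {}

    def function(self, fn: Callable[..., Any]) -> Callable[..., Any]:
        """Decorator that registers a function on this plugin."""
        self.functions[fn.__name__] = fn
        return fn


class PluginHost:
    """Hypothetical orchestrator that holds plugins and dispatches qualified calls."""

    def __init__(self):
        self.plugins: Dict[str, Plugin] = {}

    def add_plugin(self, plugin: Plugin) -> None:
        self.plugins[plugin.name] = plugin

    def list_functions(self) -> List[str]:
        # Qualified names such as "HR.vacation_balance" avoid collisions across plugins.
        return sorted(f"{p.name}.{fn}" for p in self.plugins.values() for fn in p.functions)

    def invoke(self, qualified_name: str, **kwargs: Any) -> Any:
        plugin_name, fn_name = qualified_name.split(".", 1)
        return self.plugins[plugin_name].functions[fn_name](**kwargs)


hr = Plugin("HR")


@hr.function
def vacation_balance(employee_id: str) -> int:
    return {"E1": 12}.get(employee_id, 0)


host = PluginHost()
host.add_plugin(hr)
print(host.list_functions())                                 # ['HR.vacation_balance']
print(host.invoke("HR.vacation_balance", employee_id="E1"))  # 12
```

Grouping by plugin also gives a natural unit for enabling, disabling, or permissioning a set of capabilities at once.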

Standout Capabilities

- Plugin-based architecture

- Strong orchestration support

- Enterprise integration focus

- Supports multiple languages (C#, Python, and Java)

- Memory and planning features

AI-Specific Depth

- Model support: Multi-model

- RAG / knowledge integration: Supported

- Evaluation: Limited

- Guardrails: Varies / N/A

- Observability: Basic

Pros

- Enterprise-ready design

- Structured workflows

- Flexible plugins

Cons

- Learning curve

- Limited evaluation tools

- Evolving ecosystem

Security & Compliance

Not publicly stated

Deployment & Platforms

- Cloud/Self-hosted

Integrations & Ecosystem

- APIs

- Plugins

- Enterprise systems

- SDKs

Pricing Model

Open-source

Best-Fit Scenarios

- Enterprise applications

- Workflow automation

- Internal tools

5 — AutoGen

One-line verdict: Best for multi-agent collaboration with automated tool usage and conversation-driven workflows.

Short description:

AutoGen enables multiple agents to collaborate, communicate, and invoke tools dynamically.
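Conversation-driven multi-agent execution comes down to agents taking turns on a shared transcript until a termination condition is met. The sketch below is not AutoGen's API. `Agent`, `run_chat`, and the scripted reply functions are hypothetical stand-ins for LLM-backed agents. The coder's deterministic word count plays the role of a tool call.

```python
from typing import Callable, List, Tuple

Message = Tuple[str, str]  # (speaker, text)


class Agent:
    """Hypothetical conversational agent: a name plus a reply function over the transcript."""

    def __init__(self, name: str, reply: Callable[[List[Message]], str]):
        self.name = name
        self.reply = reply


def run_chat(a: Agent, b: Agent, opening: str, max_turns: int = 6) -> List[Message]:
    """Alternate turns (b first) until a message contains TERMINATE or max_turns is hit."""
    transcript: List[Message] = [(a.name, opening)]
    speakers = [b, a]
    for turn in range(max_turns):
        speaker = speakers[turn % 2]
        text = speaker.reply(transcript)
        transcript.append((speaker.name, text))
        if "TERMINATE" in text:
            break
    return transcript


def coder_reply(transcript: List[Message]) -> str:
    task = transcript[-1][1]
    return f"word_count={len(task.split())}"  # stands in for a tool call


def reviewer_reply(transcript: List[Message]) -> str:
    last = transcript[-1][1]
    return "Looks correct. TERMINATE" if last.startswith("word_count=") else "Please retry."


reviewer = Agent("reviewer", reviewer_reply)
coder = Agent("coder", coder_reply)
log = run_chat(reviewer, coder, "count the words in this sentence")
for speaker, text in log:
    print(f"{speaker}: {text}")
```

An explicit termination signal plus a turn cap is what keeps such conversations from running indefinitely. It is also a common source of the production complexity noted under Cons.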

Standout Capabilities

- Multi-agent coordination

- Conversation-driven execution

- Tool integration

- Flexible agent roles

- Autonomous workflows

AI-Specific Depth

- Model support: Multi-model

- RAG / knowledge integration: Supported

- Evaluation: Limited

- Guardrails: Varies / N/A

- Observability: Limited

Pros

- Strong multi-agent support

- Flexible workflows

- Research-friendly

Cons

- Complexity in production

- Limited guardrails

- Observability gaps

Security & Compliance

Not publicly stated

Deployment & Platforms

- Cloud/Self-hosted

Integrations & Ecosystem

- APIs

- Tools

- LLM providers

- Custom workflows

Pricing Model

Open-source

Best-Fit Scenarios

- Multi-agent systems

- Research prototypes

- Complex workflows

6 — CrewAI

One-line verdict: Best for structured team-based agent workflows with defined roles and tool usage.

Short description:

CrewAI organizes agents into teams (“crews”) with roles, tasks, and tools.
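The crew model of roles, tasks, and per-agent tools can be approximated as a sequential task list in which each task's output feeds the next. The sketch below is not CrewAI's API. `CrewAgent`, `CrewTask`, `run_crew`, and the lambda tools are hypothetical, and each task is simplified to use exactly one tool.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class CrewAgent:
    role: str
    tools: Dict[str, Callable[[str], str]] = field(default_factory=dict)


@dataclass
class CrewTask:
    description: str
    agent: CrewAgent
    tool: str  # which of the agent's tools this task uses (simplification)


def run_crew(tasks: List[CrewTask], initial_input: str) -> str:
    """Run tasks in order; each task's output becomes the next task's input."""
    context = initial_input
    for task in tasks:
        # Agents may only use the tools assigned to their role.
        if task.tool not in task.agent.tools:
            raise ValueError(f"{task.agent.role} has no tool {task.tool!r}")
        context = task.agent.tools[task.tool](context)
        print(f"[{task.agent.role}] {task.description} -> {context}")
    return context


researcher = CrewAgent("Researcher", {"search": lambda q: f"3 sources on {q}"})
writer = CrewAgent("Writer", {"summarize": lambda notes: f"Summary of {notes}"})

result = run_crew(
    [CrewTask("Find sources", researcher, "search"),
     CrewTask("Write brief", writer, "summarize")],
    "agent middleware",
)
```

Binding tools to roles rather than to the whole system is what makes this style easy to reason about. Each role has a small, explicit set of capabilities.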

Standout Capabilities

- Role-based agent design

- Task orchestration

- Tool integration

- Simple abstractions

- Workflow structuring

AI-Specific Depth

- Model support: Multi-model

- RAG / knowledge integration: Supported

- Evaluation: Limited

- Guardrails: Varies / N/A

- Observability: Basic

Pros

- Easy to understand model

- Structured workflows

- Good for teams

Cons

- Limited advanced features

- Early-stage ecosystem

- Basic observability

Security & Compliance

Not publicly stated

Deployment & Platforms

- Cloud/Self-hosted

Integrations & Ecosystem

- APIs

- Tools

- LLMs

- Workflows

Pricing Model

Varies / N/A

Best-Fit Scenarios

- Team-based agents

- Workflow automation

- Simple orchestration

7 — Haystack Agents

One-line verdict: Best for search and RAG-driven agents with integrated pipelines and tools.

Short description:

Haystack provides pipelines for search, retrieval, and agent-based execution.
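Pipeline-oriented frameworks compose small components (retriever, prompt builder, generator) that pass data forward. The sketch below is a simplified linear version in which components share a state dict. It is not Haystack's `Pipeline` API, which connects named component inputs and outputs explicitly. `SimplePipeline`, the documents, and `fake_generator` (a stand-in for an LLM) are hypothetical.

```python
from typing import Any, Callable, Dict, List, Tuple

Component = Callable[[Dict[str, Any]], Dict[str, Any]]


class SimplePipeline:
    """Hypothetical linear pipeline: each component reads and updates a shared state dict."""

    def __init__(self):
        self.steps: List[Tuple[str, Component]] = []

    def add(self, name: str, component: Component) -> "SimplePipeline":
        self.steps.append((name, component))
        return self  # allow chaining

    def run(self, data: Dict[str, Any]) -> Dict[str, Any]:
        state = dict(data)
        for _, component in self.steps:
            state.update(component(state))
        return state


DOCS = ["Haystack-style pipelines chain retrievers and generators.",
        "Invoices are due in 30 days."]


def retriever(state: Dict[str, Any]) -> Dict[str, Any]:
    terms = set(state["query"].lower().split())
    return {"documents": [d for d in DOCS if terms & set(d.lower().rstrip(".").split())]}


def prompt_builder(state: Dict[str, Any]) -> Dict[str, Any]:
    context = "\n".join(state["documents"])
    return {"prompt": f"Context:\n{context}\n\nQuestion: {state['query']}"}


def fake_generator(state: Dict[str, Any]) -> Dict[str, Any]:
    # Stand-in for an LLM call; reports how much context it received.
    return {"answer": f"(answer based on {len(state['documents'])} document(s))"}


pipe = (SimplePipeline()
        .add("retriever", retriever)
        .add("prompt_builder", prompt_builder)
        .add("generator", fake_generator))
out = pipe.run({"query": "When are invoices due"})
print(out["answer"])  # (answer based on 1 document(s))
```

Because each stage is a separate component, you can swap the retriever or generator without touching the rest. This is the main appeal of the modular design noted above.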

Standout Capabilities

- RAG pipelines

- Tool integration

- Search optimization

- Modular design

- Open-source ecosystem

AI-Specific Depth

- Model support: Multi-model

- RAG / knowledge integration: Strong

- Evaluation: Basic

- Guardrails: Varies / N/A

- Observability: Limited

Pros

- Strong search capabilities

- Modular pipelines

- Open-source

Cons

- Less focus on orchestration

- Limited guardrails

- Requires setup

Security & Compliance

Not publicly stated

Deployment & Platforms

- Cloud/Self-hosted

Integrations & Ecosystem

- Search engines

- APIs

- Databases

- LLMs

Pricing Model

Open-source

Best-Fit Scenarios

- Search agents

- Knowledge systems

- RAG workflows

8 — SuperAGI

One-line verdict: Best for autonomous agent systems with built-in tooling and monitoring.

Short description:

SuperAGI focuses on autonomous agents with integrated tools and observability.
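Monitoring and task-execution tracking start with recording each tool call as a span with its name, arguments, status, and duration. The sketch below is a hypothetical `traced` decorator that stores spans in an in-memory list. It is not SuperAGI's implementation, and a production system would export the spans to a tracing backend instead.

```python
import functools
import time
from typing import Any, Callable, Dict, List

TRACE: List[Dict[str, Any]] = []  # in-memory span store (illustrative only)


def traced(fn: Callable[..., Any]) -> Callable[..., Any]:
    """Record name, arguments, status, and duration for every call to fn."""
    @functools.wraps(fn)
    def wrapper(*args: Any, **kwargs: Any) -> Any:
        start = time.perf_counter()
        span = {"tool": fn.__name__, "args": args, "kwargs": kwargs}
        try:
            result = fn(*args, **kwargs)
            span["status"] = "ok"
            return result
        except Exception as exc:
            span["status"] = f"error: {exc}"
            raise  # tracing must not swallow failures
        finally:
            span["duration_ms"] = round((time.perf_counter() - start) * 1000, 3)
            TRACE.append(span)
    return wrapper


@traced
def fetch_metric(name: str) -> float:
    if name != "cpu":
        raise ValueError(f"unknown metric {name}")
    return 0.42


fetch_metric("cpu")
try:
    fetch_metric("disk")
except ValueError:
    pass

for span in TRACE:
    print(span["tool"], span["status"], span["duration_ms"])
```

Failed calls are recorded as well as successful ones, which is usually what you need when debugging an agent that silently went off course.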

Standout Capabilities

- Autonomous agent loops

- Built-in tools

- Monitoring dashboards

- Task execution tracking

- Plugin ecosystem

AI-Specific Depth

- Model support: Multi-model

- RAG / knowledge integration: Supported

- Evaluation: Limited

- Guardrails: Varies / N/A

- Observability: Strong

Pros

- Built-in observability

- Autonomous workflows

- Integrated tools

Cons

- Early-stage maturity

- Limited enterprise features

- Guardrails evolving

Security & Compliance

Not publicly stated

Deployment & Platforms

- Cloud/Self-hosted

Integrations & Ecosystem

- Plugins

- APIs

- Tools

- LLM providers

Pricing Model

Varies / N/A

Best-Fit Scenarios

- Autonomous agents

- Monitoring-heavy systems

- Experimentation

9 — Fixie.ai

One-line verdict: Best for building tool-using AI agents with strong execution environments.

Short description:

Fixie provides infrastructure for deploying agents that interact with tools and APIs.
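A tool execution environment must keep a slow or failing tool from stalling the whole agent. The sketch below, built on a hypothetical `run_with_timeout` helper, enforces a deadline with `concurrent.futures` and returns a structured result instead of raising. This is a simplification and not Fixie's mechanism: Python threads cannot be forcibly stopped, so hosted platforms typically isolate tools in separate processes or sandboxes.

```python
import concurrent.futures as cf
import time
from typing import Any, Callable

_POOL = cf.ThreadPoolExecutor(max_workers=4)  # shared worker pool


def run_with_timeout(fn: Callable[..., Any], timeout_s: float, *args: Any, **kwargs: Any) -> dict:
    """Run a tool call with a deadline and return {"ok": ..., "value"/"error": ...}."""
    future = _POOL.submit(fn, *args, **kwargs)
    try:
        return {"ok": True, "value": future.result(timeout=timeout_s)}
    except cf.TimeoutError:
        future.cancel()  # only prevents a queued call from starting; a running thread continues
        return {"ok": False, "error": f"timed out after {timeout_s}s"}
    except Exception as exc:
        return {"ok": False, "error": repr(exc)}


def slow_api(delay: float) -> str:
    time.sleep(delay)
    return "done"


print(run_with_timeout(slow_api, 1.0, 0.01))  # {'ok': True, 'value': 'done'}
print(run_with_timeout(slow_api, 0.05, 0.5))  # {'ok': False, 'error': 'timed out after 0.05s'}
```

Returning an error object rather than raising lets the agent tell the model that the tool failed, so the model can choose another path.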

Standout Capabilities

- Tool execution environments

- API integrations

- Agent hosting

- Scalable infrastructure

- Developer-focused

AI-Specific Depth

- Model support: Multi-model

- RAG / knowledge integration: Limited

- Evaluation: Limited

- Guardrails: Varies / N/A

- Observability: Basic

Pros

- Strong execution layer

- Developer-friendly

- Scalable

Cons

- Limited ecosystem

- Early-stage

- Less documentation

Security & Compliance

Not publicly stated

Deployment & Platforms

- Cloud

Integrations & Ecosystem

- APIs

- Tools

- SDKs

- Hosting

Pricing Model

Not publicly stated

Best-Fit Scenarios

- Tool execution agents

- API-heavy workflows

- Developer builds

10 — Griptape

One-line verdict: Best for structured agent pipelines with strong control over tool usage and execution.

Short description:

Griptape provides structured pipelines and agents with controlled tool execution.
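Controlled tool execution usually means checking model-proposed arguments before anything runs. The sketch below validates arguments against a small, hypothetical subset of JSON Schema (type, required, enum). It is not Griptape's API, and a full implementation would use a complete JSON Schema validator. `validate_args` and `RESTART_SCHEMA` are illustrative names.

```python
from typing import Any, Dict, List

# Hypothetical subset of JSON Schema types mapped to Python types.
_PY_TYPES = {"string": str, "integer": int, "number": (int, float), "boolean": bool}


def validate_args(schema: Dict[str, Any], args: Dict[str, Any]) -> List[str]:
    """Return a list of problems; an empty list means the call may proceed."""
    errors = []
    props = schema.get("properties", {})
    for name in schema.get("required", []):
        if name not in args:
            errors.append(f"missing required argument: {name}")
    for name, value in args.items():
        if name not in props:
            errors.append(f"unexpected argument: {name}")
            continue
        spec = props[name]
        expected = _PY_TYPES.get(spec.get("type"))
        # bool is a subclass of int in Python, so reject it explicitly for non-boolean types.
        is_bad_bool = isinstance(value, bool) and spec.get("type") != "boolean"
        if expected and (not isinstance(value, expected) or is_bad_bool):
            errors.append(f"{name}: expected {spec['type']}")
        elif "enum" in spec and value not in spec["enum"]:
            errors.append(f"{name}: must be one of {spec['enum']}")
    return errors


RESTART_SCHEMA = {
    "properties": {
        "service": {"type": "string", "enum": ["web", "worker"]},
        "delay_s": {"type": "integer"},
    },
    "required": ["service"],
}

print(validate_args(RESTART_SCHEMA, {"service": "web", "delay_s": 5}))  # []
print(validate_args(RESTART_SCHEMA, {"service": "db", "force": True}))
# ["service: must be one of ['web', 'worker']", 'unexpected argument: force']
```

Rejecting unexpected arguments, not just wrong types, closes off a common path for a manipulated model to slip extra parameters into a sensitive call.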

Standout Capabilities

- Pipeline architecture

- Tool abstraction

- Controlled execution

- Modular design

- Security focus

AI-Specific Depth

- Model support: Multi-model

- RAG / knowledge integration: Supported

- Evaluation: Limited

- Guardrails: Basic

- Observability: Basic

Pros

- Structured pipelines

- Control over tools

- Modular

Cons

- Smaller ecosystem

- Limited evaluation tools

- Less community support

Security & Compliance

Not publicly stated

Deployment & Platforms

- Cloud/Self-hosted

Integrations & Ecosystem

- APIs

- Tools

- SDKs

- Pipelines

Pricing Model

Open-source

Best-Fit Scenarios

- Controlled workflows

- Secure environments

- Modular pipelines

Comparison Table

| Tool Name | Best For | Deployment | Model Flexibility | Strength | Watch-Out | Public Rating |
|---|---|---|---|---|---|---|
| LangChain Agents | Developers | Hybrid | Multi-model | Flexibility | Complexity | N/A |
| OpenAI Agents SDK | Production apps | Cloud | Proprietary | Reliability | Lock-in | N/A |
| LlamaIndex Agents | Data workflows | Hybrid | Multi-model | RAG strength | Orchestration limits | N/A |
| Semantic Kernel | Enterprise apps | Hybrid | Multi-model | Structure | Learning curve | N/A |
| AutoGen | Multi-agent systems | Hybrid | Multi-model | Collaboration | Complexity | N/A |
| CrewAI | Team workflows | Hybrid | Multi-model | Simplicity | Early stage | N/A |
| Haystack Agents | Search/RAG | Hybrid | Multi-model | Search pipelines | Setup effort | N/A |
| SuperAGI | Autonomous agents | Hybrid | Multi-model | Observability | Maturity | N/A |
| Fixie.ai | Tool execution | Cloud | Multi-model | Execution infra | Ecosystem | N/A |
| Griptape | Structured pipelines | Hybrid | Multi-model | Control | Smaller ecosystem | N/A |

Scoring & Evaluation (Transparent Rubric)

Scores are comparative and based on relative strengths across key enterprise and developer needs.

| Tool | Core | Reliability/Eval | Guardrails | Integrations | Ease | Perf/Cost | Security/Admin | Support | Weighted Total |
|---|---|---|---|---|---|---|---|---|---|
| LangChain | 9 | 7 | 6 | 9 | 7 | 7 | 6 | 9 | 7.8 |
| OpenAI SDK | 8 | 8 | 7 | 7 | 9 | 8 | 7 | 7 | 7.9 |
| LlamaIndex | 8 | 7 | 6 | 8 | 7 | 7 | 6 | 8 | 7.4 |
| Semantic Kernel | 8 | 7 | 6 | 8 | 6 | 7 | 7 | 7 | 7.2 |
| AutoGen | 8 | 6 | 5 | 7 | 6 | 6 | 6 | 7 | 6.8 |
| CrewAI | 7 | 6 | 5 | 7 | 8 | 6 | 6 | 6 | 6.7 |
| Haystack | 7 | 6 | 5 | 8 | 6 | 7 | 6 | 7 | 6.8 |
| SuperAGI | 7 | 6 | 5 | 7 | 6 | 6 | 6 | 6 | 6.5 |
| Fixie | 7 | 6 | 5 | 6 | 7 | 7 | 6 | 6 | 6.5 |
| Griptape | 7 | 6 | 6 | 6 | 6 | 6 | 6 | 6 | 6.4 |
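A weighted total is a weighted average of the criterion scores. The sketch below shows the arithmetic so you can re-rank the tools with your own priorities. The weights are hypothetical, chosen for a security-sensitive team. They are not the weights behind the table above, so the results will not necessarily match its Weighted Total column. LangChain's row is used as input.

```python
from typing import Dict

CRITERIA = ["core", "reliability_eval", "guardrails", "integrations",
            "ease", "perf_cost", "security_admin", "support"]


def weighted_total(scores: Dict[str, float], weights: Dict[str, float]) -> float:
    """Weighted average of criterion scores; weights need not sum to 1."""
    total_weight = sum(weights[c] for c in CRITERIA)
    return round(sum(scores[c] * weights[c] for c in CRITERIA) / total_weight, 2)


# Hypothetical weights: double weight on core, reliability, guardrails, and security.
weights = {"core": 2, "reliability_eval": 2, "guardrails": 2, "integrations": 1,
           "ease": 1, "perf_cost": 1, "security_admin": 2, "support": 1}

langchain = dict(zip(CRITERIA, [9, 7, 6, 9, 7, 7, 6, 9]))  # scores from the table
print(weighted_total(langchain, weights))                  # 7.33
print(weighted_total(langchain, {c: 1 for c in CRITERIA}))  # 7.5 (equal weights)
```

Shifting weight toward guardrails and security pulls LangChain's score down from its equal-weight average. This shows how much a ranking depends on the weighting you choose.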

Top 3 for Enterprise: Semantic Kernel, OpenAI Agents SDK, LangChain

Top 3 for SMB: CrewAI, LangChain, LlamaIndex

Top 3 for Developers: LangChain, AutoGen, LlamaIndex

Which Tool-Calling Middleware Is Right for You?

Solo / Freelancer

Use LangChain or CrewAI for flexibility and simplicity. Avoid heavy enterprise tools.

SMB

LlamaIndex or CrewAI provide balance between power and usability.

Mid-Market

Semantic Kernel or LangChain with observability layers.

Enterprise

OpenAI Agents SDK or Semantic Kernel with governance and security layers.

Regulated industries

Prefer controlled environments like Semantic Kernel or Griptape.

Budget vs premium

- Budget: Open-source tools

- Premium: Managed platforms

Build vs buy

Build if customization is critical; buy if speed matters.

Implementation Playbook (30 / 60 / 90 Days)

30 Days

- Define use cases

- Build pilot agent

- Set evaluation metrics

60 Days

- Add guardrails

- Implement monitoring

- Conduct testing

90 Days

- Optimize cost/latency

- Scale deployment

- Add governance
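The "set evaluation metrics" and "conduct testing" steps can start as a small regression suite. Each case pairs a request with the tool the agent should choose, and the suite fails a release if accuracy drops. In this sketch, `keyword_router` is a hypothetical stand-in for the agent's real (model-driven) tool choice, and the test cases and the 0.9 threshold are illustrative assumptions.

```python
from typing import Callable, Dict, List

# Hypothetical regression cases: user request -> tool the agent should pick.
CASES: List[Dict[str, str]] = [
    {"input": "Where is my order A100?", "expected_tool": "get_order_status"},
    {"input": "Refund invoice INV-7", "expected_tool": "issue_refund"},
    {"input": "Reset my VPN password", "expected_tool": "search_knowledge_base"},
]


def keyword_router(text: str) -> str:
    """Stand-in for the agent's tool choice; in practice this is the model's decision."""
    lowered = text.lower()
    if "order" in lowered:
        return "get_order_status"
    if "refund" in lowered:
        return "issue_refund"
    return "search_knowledge_base"


def evaluate(select_tool: Callable[[str], str], cases: List[Dict[str, str]]) -> Dict[str, object]:
    """Tool-selection accuracy plus the failing cases for debugging."""
    failures = [c for c in cases if select_tool(c["input"]) != c["expected_tool"]]
    return {"accuracy": round(1 - len(failures) / len(cases), 3), "failures": failures}


report = evaluate(keyword_router, CASES)
print(report["accuracy"])  # 1.0

MIN_ACCURACY = 0.9  # hypothetical release gate
if report["accuracy"] < MIN_ACCURACY:
    raise SystemExit(f"tool-selection accuracy {report['accuracy']} below {MIN_ACCURACY}")
```

Running the same suite after every prompt, model, or tool-schema change turns "conduct testing" into a repeatable check rather than a one-off review.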

Common Mistakes & How to Avoid Them

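- Ignoring prompt injection risks: add guardrails and restrict which tools an agent may call

- No evaluation framework: set evaluation metrics during the pilot and run regression tests on every change

- Poor observability: implement tracing and execution logs before scaling

- Over-automation: keep a human in the loop for high-impact actions

- Vendor lock-in: favor platforms with BYO-model support and portable tool schemas

- Weak guardrails: enforce permission controls on tool execution

- No cost tracking: monitor cost and latency per tool call and per agent run

- Lack of governance: enable audit logs and admin controls

- Poor tool design: give tools clear names, descriptions, and typed parameters

- No fallback strategies: define retries and fallback paths for failed tool calls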
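A fallback strategy can be as simple as retrying the primary tool with exponential backoff, then trying alternatives in order. This is a minimal sketch: `call_with_fallback`, the flaky pricing API, and the cached-price fallback are hypothetical, and the retry count and delays are illustrative.

```python
import time
from typing import Any, Callable, Sequence


def call_with_fallback(primary: Callable[[], Any], fallbacks: Sequence[Callable[[], Any]],
                       retries: int = 2, base_delay_s: float = 0.1) -> Any:
    """Retry the primary tool with exponential backoff, then try each fallback once."""
    last_error: Exception = RuntimeError("no attempts made")
    for attempt in range(retries + 1):
        try:
            return primary()
        except Exception as exc:
            last_error = exc
            if attempt < retries:
                time.sleep(base_delay_s * (2 ** attempt))  # 0.1s, 0.2s, ...
    for fallback in fallbacks:
        try:
            return fallback()
        except Exception as exc:
            last_error = exc
    raise RuntimeError("all tool attempts failed") from last_error


calls = {"primary": 0}


def flaky_pricing_api() -> dict:
    calls["primary"] += 1
    raise ConnectionError("pricing API unavailable")


def cached_prices() -> dict:
    return {"sku-1": 9.99, "source": "cache"}


result = call_with_fallback(flaky_pricing_api, [cached_prices], retries=2, base_delay_s=0.01)
print(result)            # {'sku-1': 9.99, 'source': 'cache'}
print(calls["primary"])  # 3
```

Marking the fallback result's source (here `"source": "cache"`) lets the agent, or the user, know the answer may be stale.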

FAQs

1. What is tool-calling middleware?

It connects AI agents to external tools and APIs.

2. Why is it important?

It enables agents to take real actions, not just generate text.

3. Can I use my own models?

Yes, most tools support BYO models.

4. Is it secure?

Depends on implementation and guardrails.

5. What about costs?

Varies based on usage and infrastructure.

6. Do I need RAG?

Only for knowledge-heavy applications.

7. Can I self-host?

Many tools support self-hosting.

8. How do I evaluate performance?

Use evaluation frameworks with test cases and metrics such as tool-selection accuracy, task success rate, latency, and cost.

9. What are guardrails?

Controls to prevent unsafe behavior.

10. Can I switch tools later?

Yes, but migration effort varies.

11. Are these tools production-ready?

Some are, others are still evolving.

12. What alternatives exist?

Custom-built systems or simpler APIs.

Conclusion

Tool-calling middleware is essential for building AI agents that can interact with real systems and automate complex workflows. The best choice depends on your specific needs—whether it’s flexibility, enterprise control, or ease of use. Start by shortlisting a few tools, test them with a pilot, validate security and performance, and then scale based on what works best for your environment.