Introduction

AI Inference API Management Platforms sit between your applications and AI models, acting as a control layer that manages how requests are routed, monitored, secured, and optimized. Instead of calling individual model APIs directly, teams use these platforms to standardize access, enforce policies, and control performance and costs across multiple models.

These platforms matter now because modern AI systems are no longer single-model pipelines. They involve multi-model orchestration, real-time decision routing, agent workflows, and strict governance requirements. Without a centralized inference layer, costs spiral, latency becomes unpredictable, and security risks increase.

Real-world use cases include:

- Multi-model routing for customer support agents based on query complexity

- Real-time fraud detection pipelines with dynamic model selection

- Enterprise copilots that switch models for cost vs accuracy trade-offs

- AI-powered internal tools with strict audit and compliance requirements

- High-volume generative AI APIs with latency optimization across regions
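
The first use case, routing by query complexity, can be sketched in a few lines. This is only an illustration under stated assumptions, not any platform's actual routing logic: the model names, the keyword list, the `estimate_complexity` heuristic, and the threshold are all hypothetical.

```python
# Hypothetical model identifiers; substitute whatever your platform exposes.
CHEAP_MODEL = "small-fast-model"
STRONG_MODEL = "large-accurate-model"

# Words that (by assumption) hint at a multi-step or analytical request.
REASONING_HINTS = ("why", "compare", "explain", "troubleshoot", "step by step")


def estimate_complexity(query: str) -> float:
    """Crude 0..1 score from query length and reasoning keywords (illustrative only)."""
    text = query.lower()
    length_score = min(len(text.split()) / 50, 1.0)
    hint_score = 1.0 if any(hint in text for hint in REASONING_HINTS) else 0.0
    return 0.5 * length_score + 0.5 * hint_score


def choose_model(query: str, threshold: float = 0.4) -> str:
    """Send simple queries to the cheap model and complex ones to the strong model."""
    return STRONG_MODEL if estimate_complexity(query) >= threshold else CHEAP_MODEL
```

A production router would more likely use a trained classifier or platform-provided rules, but the shape is the same: score the request, then pick a model.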

What to evaluate:

- Model routing flexibility

- Cost optimization controls

- Latency management

- Observability and tracing

- Security and policy enforcement

- Multi-model support (open + proprietary)

- Rate limiting and traffic shaping

- Evaluation and testing support

- Vendor lock-in risk

- Deployment flexibility

Best for: AI engineers, platform teams, and CTOs building scalable, multi-model AI systems, from startups to large enterprises.

Not ideal for: Small projects using a single model with low traffic, where direct API integration is simpler and more cost-effective.

What’s Changed in AI Inference API Management Platforms

- Shift from static routing to dynamic, context-aware model selection

- Native support for agent workflows and tool-calling pipelines

- Built-in cost optimization (auto-switch to cheaper models when possible)

- Latency-aware routing across regions and providers

- Integrated evaluation loops for production monitoring

- Stronger guardrails against prompt injection and misuse

- Unified observability across all model calls and pipelines

- BYO model support alongside hosted APIs

- Fine-grained access control and audit logging

- Increased demand for hybrid and self-hosted deployments

- Policy-driven inference governance (who can call what model)

- Multi-modal routing (text, image, audio in a single pipeline)
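
Policy-driven governance ("who can call what model") is, at its core, an allowlist check performed before a request reaches any provider. The sketch below assumes a simple role-to-models mapping; the role names, model names, and the `InferencePolicy` class are hypothetical and do not mirror any vendor's policy format.

```python
from dataclasses import dataclass, field


@dataclass
class InferencePolicy:
    """Maps a caller role to the set of models that role may call."""
    allowed: dict[str, set[str]] = field(default_factory=dict)

    def is_allowed(self, role: str, model: str) -> bool:
        return model in self.allowed.get(role, set())


def authorize(policy: InferencePolicy, role: str, model: str) -> None:
    """Raise before the request is forwarded if the policy denies it."""
    if not policy.is_allowed(role, model):
        raise PermissionError(f"role {role!r} may not call model {model!r}")


# Hypothetical policy: the support bot is limited to the cheaper model.
policy = InferencePolicy(allowed={
    "support-bot": {"small-fast-model"},
    "analyst": {"small-fast-model", "large-accurate-model"},
})
```

Unknown roles are denied by default, which is usually the safer choice for a governance layer.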

Quick Buyer Checklist (Scan-Friendly)

- Does it support multiple models (OpenAI, open-source, custom)?

- Can you route requests dynamically based on logic or cost?

- Are data retention and privacy controls configurable?

- Does it provide evaluation and testing pipelines?

- Are guardrails and policy enforcement built-in?

- Can you monitor latency, tokens, and cost in real time?

- Does it integrate with your existing stack (APIs, SDKs)?

- Is there support for hybrid or self-hosted deployment?

- Are audit logs and admin controls available?

- How hard is it to switch vendors later (lock-in risk)?
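
To make the "tokens and cost" checklist item concrete, here is a minimal sketch of per-request cost accounting. The prices are placeholders chosen for easy arithmetic, not real provider rates, and the `Price` and `request_cost` names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Price:
    """USD per one million tokens; the figures used below are placeholders."""
    input_per_million: float
    output_per_million: float


def request_cost(price: Price, input_tokens: int, output_tokens: int) -> float:
    """Cost of one request given its input and output token counts."""
    total = (input_tokens * price.input_per_million
             + output_tokens * price.output_per_million)
    return total / 1_000_000


# Placeholder price table keyed by hypothetical model names.
PRICES = {
    "small-fast-model": Price(0.50, 1.50),
    "large-accurate-model": Price(5.00, 15.00),
}
```

With these placeholder prices, a request with 1,000 input tokens and 500 output tokens costs ten times more on the larger model, which is the kind of gap that cost-aware routing tries to exploit.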

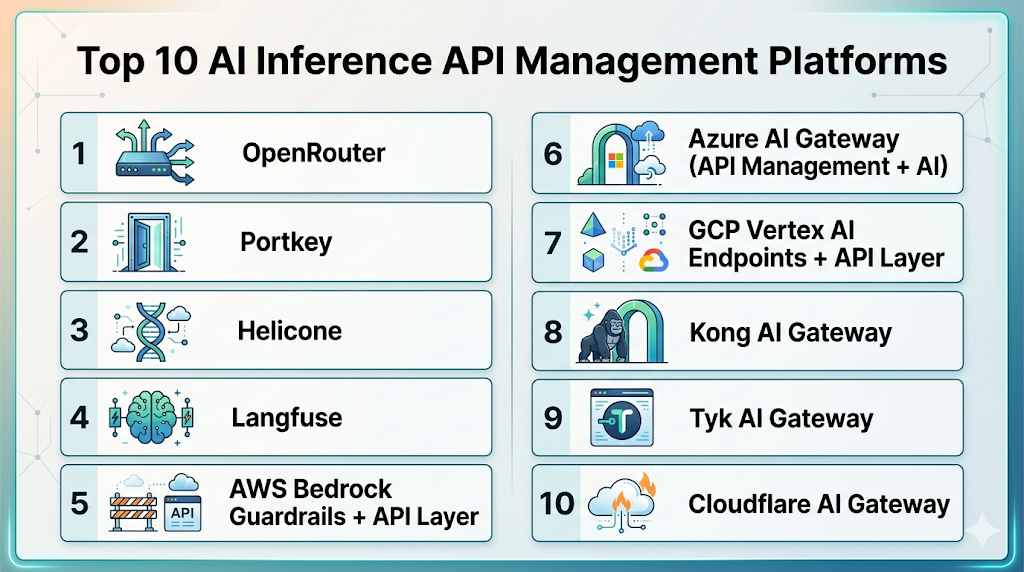

Top 10 AI Inference API Management Platforms

1 — OpenRouter

One-line verdict: Best for developers needing simple multi-model routing with cost-aware API abstraction.

Short description:

OpenRouter provides a unified API layer that allows developers to access multiple AI models through a single endpoint, simplifying routing and cost optimization across providers.

Standout Capabilities

- Unified API across multiple LLM providers

- Automatic fallback between models

- Cost-aware routing logic

- Transparent pricing abstraction

- Fast setup with minimal configuration

- Broad model compatibility

- Lightweight and developer-friendly
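
Automatic fallback can be pictured as trying an ordered list of models until one succeeds. The sketch below is a client-side illustration of that idea, not OpenRouter's implementation; `call_model` stands in for whatever client function actually sends the request, and the model names in the usage note are hypothetical.

```python
from typing import Callable, Sequence


def complete_with_fallback(
    prompt: str,
    models: Sequence[str],
    call_model: Callable[[str, str], str],
) -> tuple[str, str]:
    """Try each model in order; return (model_used, response) from the first success."""
    errors: list[str] = []
    for model in models:
        try:
            return model, call_model(model, prompt)
        except Exception as exc:  # a real client would catch narrower error types
            errors.append(f"{model}: {exc}")
    raise RuntimeError("all models failed: " + "; ".join(errors))
```

Calling `complete_with_fallback("hi", ["primary-model", "backup-model"], call_model)` returns the backup's answer only if the primary raises, and reports every failure if none succeed.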

AI-Specific Depth

- Model support: Multi-model routing (proprietary + open-source)

- RAG / knowledge integration: N/A

- Evaluation: Limited

- Guardrails: Basic

- Observability: Basic usage metrics

Pros

- Extremely easy to integrate

- Reduces vendor lock-in

- Good for rapid prototyping

Cons

- Limited enterprise features

- Basic observability

- Minimal governance controls

Security & Compliance

Not publicly stated

Deployment & Platforms

- Web

- Cloud

Integrations & Ecosystem

Offers API-first integration with SDK compatibility for common programming languages.

- REST APIs

- SDKs

- Compatible with LLM frameworks

- Developer tools

Pricing Model

Usage-based

Best-Fit Scenarios

- Multi-model experimentation

- Cost optimization prototypes

- Developer-focused AI apps

2 — Portkey

One-line verdict: Best for teams needing a production-grade AI gateway with observability, governance, and routing.

Short description:

Portkey acts as a full AI gateway, offering routing, logging, monitoring, and policy enforcement for AI inference APIs in production systems.

Standout Capabilities

- Centralized AI gateway

- Advanced logging and tracing

- Policy-based routing

- Multi-provider support

- Prompt management features

- Cost monitoring dashboards

- Rate limiting and retries
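
Retries are typically paired with exponential backoff so that a struggling provider is not hammered. The following is a generic sketch of that pattern, not Portkey's configuration or API; the function name, defaults, and jitter range are assumptions.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def retry_with_backoff(
    operation: Callable[[], T],
    max_attempts: int = 4,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Retry a failing call, doubling the wait each time and adding random jitter."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    attempt = 1
    while True:
        try:
            return operation()
        except Exception:  # a real client would retry only on transient errors
            if attempt == max_attempts:
                raise
            delay = base_delay * 2 ** (attempt - 1)
            sleep(delay + random.uniform(0, delay / 2))
            attempt += 1
```

The `sleep` parameter is injectable so the behavior can be tested without actually waiting.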

AI-Specific Depth

- Model support: Multi-model + BYO

- RAG / knowledge integration: Limited

- Evaluation: Yes (basic testing workflows)

- Guardrails: Yes

- Observability: Strong

Pros

- Enterprise-ready features

- Strong observability

- Flexible routing

Cons

- Setup complexity

- Learning curve

- Pricing not transparent

Security & Compliance

- RBAC

- Audit logs

- Encryption

- Certifications: Not publicly stated

Deployment & Platforms

- Cloud

- Hybrid

Integrations & Ecosystem

Supports integration with major AI providers and developer tooling ecosystems.

- APIs

- SDKs

- Logging tools

- Cloud platforms

Pricing Model

Tiered + usage-based

Best-Fit Scenarios

- Production AI systems

- Enterprise governance

- Multi-team environments

3 — Helicone

One-line verdict: Best for teams prioritizing observability and debugging of AI API calls at scale.

Short description:

Helicone focuses on logging, monitoring, and analyzing AI inference requests, helping teams understand performance, cost, and reliability.

Standout Capabilities

- Detailed request logging

- Cost tracking per request

- Latency monitoring

- Debugging tools

- Open-source components

- Simple integration layer

- Analytics dashboards
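
Request logging and latency monitoring boil down to wrapping each model call and recording what happened. The sketch below is a hand-rolled illustration, not Helicone's SDK or data model; `RequestLog`, `logged_call`, and the in-memory `sink` list are hypothetical.

```python
import time
from dataclasses import dataclass
from typing import Callable


@dataclass
class RequestLog:
    model: str
    latency_ms: float
    prompt_chars: int
    response_chars: int
    ok: bool


def logged_call(
    model: str,
    prompt: str,
    call_model: Callable[[str, str], str],
    sink: list[RequestLog],
) -> str:
    """Call the model and append one log record, whether the call succeeds or fails."""
    start = time.perf_counter()
    response, ok = "", False
    try:
        response = call_model(model, prompt)
        ok = True
        return response
    finally:
        latency_ms = (time.perf_counter() - start) * 1000
        sink.append(RequestLog(model, latency_ms, len(prompt), len(response), ok))
```

Character counts stand in for token counts here; a real setup would record the token usage reported by the provider.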

AI-Specific Depth

- Model support: Multi-model

- RAG / knowledge integration: N/A

- Evaluation: Limited

- Guardrails: Limited

- Observability: Strong

Pros

- Excellent observability

- Easy integration

- Developer-friendly

Cons

- Not a full gateway

- Limited routing features

- Minimal guardrails

Security & Compliance

Not publicly stated

Deployment & Platforms

- Cloud

- Self-hosted

Integrations & Ecosystem

Integrates with popular AI APIs and monitoring tools.

- APIs

- SDKs

- Logging pipelines

- Analytics tools

Pricing Model

Freemium + usage

Best-Fit Scenarios

- Debugging AI pipelines

- Monitoring costs

- Improving performance

4 — Langfuse

One-line verdict: Best for teams combining observability with evaluation and prompt tracking.

Short description:

Langfuse provides observability and evaluation tooling for LLM applications, helping teams track prompts, outputs, and performance.

Standout Capabilities

- Prompt tracking

- Evaluation workflows

- Observability dashboards

- Version control for prompts

- Open-source option

- Feedback loops

- Dataset creation
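
An evaluation workflow over a dataset can be as simple as running each input through the model and scoring the output. The sketch below uses exact-match scoring as an assumed metric; it does not use the Langfuse SDK, and `exact_match_rate` and the `Dataset` alias are hypothetical names.

```python
from typing import Callable

Dataset = list[tuple[str, str]]  # (input, expected output) pairs


def exact_match_rate(dataset: Dataset, generate: Callable[[str], str]) -> float:
    """Fraction of items whose normalized output equals the expected answer."""
    if not dataset:
        raise ValueError("dataset is empty")
    hits = sum(
        generate(question).strip().lower() == expected.strip().lower()
        for question, expected in dataset
    )
    return hits / len(dataset)
```

Exact match suits short factual answers; free-form outputs usually need rubric-based or model-graded scoring instead.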

AI-Specific Depth

- Model support: Multi-model

- RAG / knowledge integration: Yes

- Evaluation: Strong

- Guardrails: Limited

- Observability: Strong

Pros

- Combines eval + observability

- Open-source flexibility

- Good developer tooling

Cons

- Not a full routing platform

- Requires setup effort

- Limited guardrails

Security & Compliance

Not publicly stated

Deployment & Platforms

- Cloud

- Self-hosted

Integrations & Ecosystem

Works well with LLM frameworks and data pipelines.

- APIs

- SDKs

- Vector DBs

- Dev tools

Pricing Model

Open-source + enterprise

Best-Fit Scenarios

- Evaluation pipelines

- Prompt management

- AI quality monitoring

5 — AWS Bedrock Guardrails + API Layer

One-line verdict: Best for enterprises deeply invested in AWS needing secure and scalable inference management.

Short description:

AWS provides inference management through Bedrock APIs combined with guardrails, monitoring, and enterprise-grade infrastructure.

Standout Capabilities

- Native AWS integration

- Managed model access

- Guardrails and policy enforcement

- Scalable infrastructure

- IAM-based access control

- Monitoring via AWS tools

- Multi-model access

AI-Specific Depth

- Model support: Multi-model

- RAG / knowledge integration: Yes

- Evaluation: Limited

- Guardrails: Strong

- Observability: Strong

Pros

- Enterprise-grade security

- Scalable infrastructure

- Deep AWS integration

Cons

- Vendor lock-in

- Complex setup

- Cost visibility challenges

Security & Compliance

- IAM

- Encryption

- Audit logs

- Certifications: Not publicly stated

Deployment & Platforms

- Cloud

Integrations & Ecosystem

Strong ecosystem within AWS services.

- AWS services

- APIs

- SDKs

- Data pipelines

Pricing Model

Usage-based

Best-Fit Scenarios

- Enterprise AI systems

- Regulated workloads

- AWS-native applications

6 — Azure AI Gateway (API Management + AI)

One-line verdict: Best for enterprises needing policy-driven AI API management within the Microsoft ecosystem.

Short description:

Azure integrates AI inference with API Management, allowing teams to enforce policies, monitor usage, and manage multi-model deployments.

Standout Capabilities

- API gateway integration

- Policy enforcement

- Enterprise security

- Multi-model access

- Monitoring tools

- RBAC controls

- Scalable deployment

AI-Specific Depth

- Model support: Multi-model

- RAG / knowledge integration: Yes

- Evaluation: Limited

- Guardrails: Strong

- Observability: Strong

Pros

- Enterprise-ready

- Strong governance

- Deep Microsoft integration

Cons

- Complex configuration

- Azure dependency

- Cost complexity

Security & Compliance

- RBAC

- Audit logs

- Encryption

- Certifications: Not publicly stated

Deployment & Platforms

- Cloud

Integrations & Ecosystem

Integrates across the Microsoft ecosystem.

- Azure services

- APIs

- SDKs

- DevOps tools

Pricing Model

Usage-based

Best-Fit Scenarios

- Microsoft-centric organizations

- Enterprise governance

- Large-scale deployments

7 — GCP Vertex AI Endpoints + API Layer

One-line verdict: Best for teams needing scalable inference endpoints with integrated model lifecycle management.

Short description:

Vertex AI provides managed endpoints for deploying and serving models with monitoring and scaling capabilities.

Standout Capabilities

- Managed endpoints

- Auto-scaling

- Monitoring tools

- Model versioning

- Integration with pipelines

- Multi-model deployment

- Data integration

AI-Specific Depth

- Model support: Multi-model + BYO

- RAG / knowledge integration: Yes

- Evaluation: Limited

- Guardrails: Limited

- Observability: Strong

Pros

- Scalable infrastructure

- Good ML integration

- Flexible deployment

Cons

- Complex setup

- Limited guardrails

- GCP dependency

Security & Compliance

- IAM

- Encryption

- Audit logs

- Certifications: Not publicly stated

Deployment & Platforms

- Cloud

Integrations & Ecosystem

Strong ML ecosystem integration.

- GCP services

- APIs

- SDKs

- Data pipelines

Pricing Model

Usage-based

Best-Fit Scenarios

- ML-heavy workflows

- Scalable inference

- Data-integrated AI systems

8 — Kong AI Gateway

One-line verdict: Best for organizations extending API gateway infrastructure to manage AI inference traffic.

Short description:

Kong extends traditional API gateway capabilities to AI workloads, offering routing, security, and traffic control.

Standout Capabilities

- API gateway foundation

- Traffic control

- Rate limiting

- Plugin architecture

- Security policies

- Scalable routing

- Observability tools
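
Rate limiting at a gateway is commonly implemented with a token bucket, which permits short bursts while capping the sustained request rate. The sketch below illustrates that algorithm in general; it is not Kong's plugin code, and the class and parameter names are assumptions.

```python
import time
from typing import Callable


class TokenBucket:
    """Allow bursts up to `capacity` requests, refilled at `rate` requests per second."""

    def __init__(self, rate: float, capacity: int,
                 clock: Callable[[], float] = time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self.clock = clock
        self.tokens = float(capacity)
        self.updated = clock()

    def allow(self) -> bool:
        """Refill based on elapsed time, then spend one token if available."""
        now = self.clock()
        self.tokens = min(self.capacity, self.tokens + (now - self.updated) * self.rate)
        self.updated = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False
```

For AI traffic the same structure can meter tokens consumed rather than requests, by spending a variable amount per call.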

AI-Specific Depth

- Model support: BYO + multi-model

- RAG / knowledge integration: N/A

- Evaluation: N/A

- Guardrails: Yes

- Observability: Strong

Pros

- Mature gateway tech

- Highly customizable

- Strong performance

Cons

- Not AI-native

- Requires configuration

- Limited evaluation tools

Security & Compliance

- RBAC

- Encryption

- Audit logs

- Certifications: Not publicly stated

Deployment & Platforms

- Cloud

- Self-hosted

Integrations & Ecosystem

Extensive API ecosystem.

- APIs

- Plugins

- Dev tools

- Cloud integrations

Pricing Model

Open-core + enterprise

Best-Fit Scenarios

- API-heavy organizations

- Custom AI routing

- Hybrid deployments

9 — Tyk AI Gateway

One-line verdict: Best for teams wanting an open-source API gateway with AI traffic management capabilities.

Short description:

Tyk provides API management extended to AI inference use cases with strong customization and deployment flexibility.

Standout Capabilities

- Open-source gateway

- Traffic control

- Policy enforcement

- Analytics

- Hybrid deployment

- Custom plugins

- API lifecycle management

AI-Specific Depth

- Model support: BYO

- RAG / knowledge integration: N/A

- Evaluation: N/A

- Guardrails: Yes

- Observability: Moderate

Pros

- Flexible deployment

- Open-source option

- Strong API controls

Cons

- Not AI-native

- Limited evaluation

- Requires setup

Security & Compliance

- RBAC

- Audit logs

- Encryption

- Certifications: Not publicly stated

Deployment & Platforms

- Cloud

- Self-hosted

- Hybrid

Integrations & Ecosystem

Works across API ecosystems.

- APIs

- Plugins

- Dev tools

- Cloud services

Pricing Model

Open-source + enterprise

Best-Fit Scenarios

- Custom deployments

- Hybrid environments

- API-first teams

10 — Cloudflare AI Gateway

One-line verdict: Best for edge-based AI inference routing with global performance optimization.

Short description:

Cloudflare AI Gateway provides routing, caching, and monitoring for AI APIs at the edge, improving latency and reliability.

Standout Capabilities

- Edge routing

- Global latency optimization

- Caching for AI responses

- Observability tools

- Rate limiting

- Security features

- Easy integration
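
Caching AI responses means returning a stored answer when an identical request arrives again within some time window. The sketch below shows an exact-match cache with a time-to-live; it is a local illustration rather than Cloudflare's implementation, and the `ResponseCache` class and its parameters are hypothetical.

```python
import hashlib
import json
import time
from typing import Callable


class ResponseCache:
    """Exact-match cache: identical (model, prompt) pairs reuse a stored response."""

    def __init__(self, ttl_seconds: float,
                 clock: Callable[[], float] = time.monotonic):
        self.ttl_seconds = ttl_seconds
        self.clock = clock
        self._entries: dict[str, tuple[float, str]] = {}

    @staticmethod
    def _key(model: str, prompt: str) -> str:
        payload = json.dumps([model, prompt]).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()

    def get_or_call(self, model: str, prompt: str,
                    call_model: Callable[[str, str], str]) -> str:
        key = self._key(model, prompt)
        cached = self._entries.get(key)
        if cached is not None and self.clock() - cached[0] < self.ttl_seconds:
            return cached[1]  # cache hit: no provider call is made
        response = call_model(model, prompt)
        self._entries[key] = (self.clock(), response)
        return response
```

Exact-match caching helps most with repeated, deterministic prompts; it does little for conversational traffic where every request differs.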

AI-Specific Depth

- Model support: Multi-model

- RAG / knowledge integration: N/A

- Evaluation: Limited

- Guardrails: Moderate

- Observability: Strong

Pros

- Excellent performance

- Easy to deploy

- Strong global network

Cons

- Limited evaluation tools

- Not a full LLMOps platform

- Feature depth varies

Security & Compliance

- Encryption

- Access controls

- Audit logs

- Certifications: Not publicly stated

Deployment & Platforms

- Cloud

Integrations & Ecosystem

Integrates with edge and API systems.

- APIs

- Edge functions

- Dev tools

- Cloud services

Pricing Model

Usage-based

Best-Fit Scenarios

- Low-latency AI apps

- Global deployments

- High-traffic systems

Comparison Table (Top 10)

| Tool Name | Best For | Deployment | Model Flexibility | Strength | Watch-Out | Public Rating |
|---|---|---|---|---|---|---|
| OpenRouter | Developers | Cloud | Multi-model | Simplicity | Limited enterprise features | N/A |
| Portkey | Enterprises | Cloud/Hybrid | Multi-model/BYO | Full gateway | Complexity | N/A |
| Helicone | Observability | Cloud/Self-hosted | Multi-model | Logging | Limited routing | N/A |
| Langfuse | Eval + tracking | Cloud/Self-hosted | Multi-model | Evaluation | Not a gateway | N/A |
| AWS Bedrock | Enterprise | Cloud | Multi-model | Security | Lock-in | N/A |
| Azure AI Gateway | Enterprise | Cloud | Multi-model | Governance | Complexity | N/A |
| GCP Vertex AI | ML teams | Cloud | Multi-model/BYO | Scalability | Setup complexity | N/A |
| Kong AI Gateway | API teams | Cloud/Self-hosted | Multi-model/BYO | Flexibility | Not AI-native | N/A |
| Tyk AI Gateway | Open-source users | Cloud/Self-hosted/Hybrid | BYO | Customization | Setup effort | N/A |
| Cloudflare AI Gateway | Edge apps | Cloud | Multi-model | Performance | Limited eval | N/A |

Scoring & Evaluation (Transparent Rubric)

Scores are comparative, not absolute. They reflect relative strengths across features, evaluation, guardrails, integrations, usability, performance, security, and community.

| Tool | Core | Reliability | Guardrails | Integrations | Ease | Perf/Cost | Security | Support | Total |
|---|---|---|---|---|---|---|---|---|---|
| OpenRouter | 7 | 6 | 5 | 7 | 9 | 8 | 5 | 6 | 7.0 |
| Portkey | 9 | 8 | 8 | 8 | 7 | 8 | 8 | 7 | 8.2 |
| Helicone | 7 | 7 | 5 | 7 | 8 | 8 | 6 | 7 | 7.3 |
| Langfuse | 8 | 8 | 6 | 8 | 7 | 7 | 6 | 7 | 7.6 |
| AWS Bedrock | 9 | 8 | 9 | 9 | 6 | 7 | 9 | 8 | 8.4 |
| Azure AI | 9 | 8 | 9 | 9 | 6 | 7 | 9 | 8 | 8.4 |
| GCP Vertex | 8 | 7 | 6 | 9 | 6 | 8 | 8 | 7 | 7.7 |
| Kong | 8 | 7 | 8 | 9 | 6 | 8 | 8 | 7 | 7.8 |
| Tyk | 7 | 6 | 7 | 8 | 6 | 7 | 7 | 6 | 7.0 |
| Cloudflare | 8 | 7 | 7 | 8 | 8 | 9 | 8 | 7 | 8.0 |

Top 3 for Enterprise

- AWS Bedrock

- Azure AI Gateway

- Portkey

Top 3 for SMB

- OpenRouter

- Cloudflare AI Gateway

- Langfuse

Top 3 for Developers

- OpenRouter

- Helicone

- Langfuse

Which AI Inference API Management Platform Is Right for You?

Solo / Freelancer

Choose OpenRouter or Helicone for simplicity and fast setup without heavy infrastructure.

SMB

Cloudflare AI Gateway or Langfuse offers a balance of performance, monitoring, and cost control.

Mid-Market

Portkey or Kong provides flexibility with stronger governance and routing capabilities.

Enterprise

AWS Bedrock, Azure AI Gateway, or GCP Vertex AI offer full-scale infrastructure, security, and compliance.

Regulated industries

Prefer AWS or Azure for stronger governance, auditability, and enterprise controls.

Budget vs premium

- Budget: OpenRouter, Tyk

- Premium: AWS, Azure, Portkey

Build vs buy

Build if you need full customization and control; buy if speed, reliability, and compliance matter more.

Implementation Playbook (30 / 60 / 90 Days)

30 days

- Select 2–3 platforms

- Define success metrics (latency, cost, accuracy)

- Run pilot with real workloads

60 days

- Implement guardrails

- Add evaluation pipelines

- Deploy monitoring dashboards

90 days

- Optimize routing strategies

- Reduce costs via model switching

- Scale across teams and use cases
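
For the 30-day step of defining success metrics, latency is usually tracked as a percentile rather than an average, since a few slow requests dominate user experience. The sketch below computes a nearest-rank percentile from pilot samples; the function name and the choice of the nearest-rank method are assumptions.

```python
import math
from typing import Sequence


def percentile(samples: Sequence[float], pct: float) -> float:
    """Nearest-rank percentile: the smallest sample with at least pct% at or below it."""
    if not samples:
        raise ValueError("no samples")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[rank - 1]
```

Comparing the 50th and 95th percentiles for each candidate platform during the pilot gives a clearer picture than a single mean latency figure.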

Common Mistakes & How to Avoid Them

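- No evaluation pipeline: add evaluation and testing before scaling, so regressions surface when models or prompts change.

- Ignoring prompt injection risks: enforce guardrails at the gateway instead of relying on each application to filter input.

- Poor cost monitoring: track tokens and cost per request, per model, and per team from the start.

- Over-reliance on one model: keep at least one alternative model tested and ready to route to.

- Lack of observability: log and trace every model call through a single layer.

- No fallback strategies: configure automatic fallback for provider errors and timeouts.

- Weak access controls: use RBAC and scoped API keys to define who can call which model.

- Ignoring latency: measure latency per region and provider, and route accordingly.

- Vendor lock-in: prefer platforms with standard APIs and multi-model routing.

- No audit logs: enable audit logging early, so compliance reviews have a record to draw on.

- Over-automation: check automated routing and model-switching rules against evaluation results before trusting them.

- Missing governance policies: define inference policies up front and enforce them centrally.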

FAQs

- What is an AI inference API management platform?

It acts as a control layer between your application and AI models, managing routing, monitoring, security, and cost optimization across multiple model providers.

- When should I start using one?

You should consider it once you are using multiple models, handling high traffic, or needing better control over cost, latency, and reliability.

- Can these platforms reduce AI costs?

Yes, many platforms offer smart routing and fallback mechanisms that automatically switch to lower-cost models when appropriate.

- Do they support both proprietary and open-source models?

Most modern platforms support a mix of hosted proprietary models and bring-your-own (BYO) open-source models.

- Are these platforms suitable for small projects?

Not always. For simple or low-scale applications, direct API integration is often more practical and cost-effective.

- How do they improve performance and latency?

They optimize request routing based on factors like region, model speed, and workload, ensuring faster and more consistent responses.

- Do they include security and access controls?

Many platforms offer features like role-based access control (RBAC), API keys, audit logs, and encryption, though depth varies.

- Can I self-host these platforms?

Some tools provide self-hosted or hybrid deployment options, while others are fully cloud-based.

- Do they support evaluation and testing of AI outputs?

Some platforms include built-in evaluation tools, while others require integration with external evaluation frameworks.

- What is the risk of vendor lock-in?

It depends on the platform. Tools that support multi-model routing and standard APIs generally reduce lock-in risk.

- How difficult is it to switch between models?

With the right platform, switching models can be done with minimal code changes through configuration or routing rules.

- Do these platforms support multimodal AI (text, image, audio)?

Increasingly yes, but support levels vary depending on the platform and underlying model providers.
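
Switching models through configuration rather than code usually means the application refers to a stable alias, and a routing config maps that alias to a concrete model. The sketch below assumes a JSON config with a `routes` key; the config shape, alias, and model names are all hypothetical.

```python
import json

# Hypothetical routing config; in practice this might live in a file or admin console.
CONFIG_V1 = '{"routes": {"support-chat": "small-fast-model"}}'
CONFIG_V2 = '{"routes": {"support-chat": "large-accurate-model"}}'


def resolve_model(config_text: str, alias: str) -> str:
    """Translate an application-facing alias into the concrete model name."""
    routes = json.loads(config_text)["routes"]
    if alias not in routes:
        raise KeyError(f"no route configured for alias {alias!r}")
    return routes[alias]
```

The application keeps requesting `"support-chat"`; moving from `CONFIG_V1` to `CONFIG_V2` changes the model without touching application code.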

Conclusion

AI inference API management platforms are becoming essential for teams building scalable, reliable, and cost-efficient AI systems. As applications grow more complex with multiple models and real-time decision-making, these platforms provide the control layer needed to manage performance, enforce security, and optimize costs. The right choice depends on your scale, technical needs, and infrastructure maturity, so start with a focused pilot, validate outcomes, and scale gradually with strong evaluation and governance in place.