Introduction

Reinforcement Learning from Human Feedback (RLHF) and Reinforcement Learning from AI Feedback (RLAIF) training platforms are specialized tools designed to improve AI model behavior through feedback loops. In simple terms, these platforms help align AI systems with human preferences, safety expectations, and real-world performance goals by using labeled data, rankings, or automated feedback signals.

As AI systems become more autonomous—especially with the rise of AI agents and multimodal applications—alignment is no longer optional. Raw models often produce inconsistent, biased, or unsafe outputs. RLHF and RLAIF platforms address this by enabling structured training pipelines where models learn from curated feedback and evaluation signals.
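
To make "learning from rankings" concrete, here is a minimal sketch of the pairwise preference loss commonly used to train a reward model (a Bradley-Terry formulation). It is an illustration only: the article does not attribute this loss to any platform listed below, and the function name and example scores are assumptions.

```python
import math


def preference_loss(reward_chosen: float, reward_rejected: float) -> float:
    """Return -log(sigmoid(r_chosen - r_rejected)) for one ranked pair.

    The loss is small when the reward model scores the preferred response
    well above the rejected one, and large when it gets the order wrong.
    """
    margin = reward_chosen - reward_rejected
    # -log(sigmoid(m)) == log(1 + exp(-m)); branch to avoid overflow in exp().
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))


# Hypothetical reward-model scores for (chosen, rejected) responses.
pairs = [(2.0, 0.5), (0.1, 1.3)]
losses = [preference_loss(chosen, rejected) for chosen, rejected in pairs]
mean_loss = sum(losses) / len(losses)
print(f"per-pair losses: {losses}, mean: {mean_loss:.4f}")
```

The first pair agrees with the annotator's ranking and gets a low loss; the second disagrees and gets a higher one, which is the signal that pushes the reward model toward the feedback.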

Real-world use cases include:

- Aligning chatbots and copilots with company policies

- Reducing hallucinations and improving response accuracy

- Training domain-specific assistants (legal, healthcare, finance)

- Improving AI agent decision-making and tool usage

- Moderating outputs for safety and compliance

- Iteratively improving model performance through feedback loops

What to evaluate:

- Feedback collection methods (human vs AI vs hybrid)

- Annotation workflows and labeling quality controls

- Support for reward modeling and policy optimization

- Evaluation and benchmarking capabilities

- Guardrails and safety enforcement

- Integration with training pipelines (LLMs, multimodal models)

- Observability (feedback quality, model performance metrics)

- Scalability and cost efficiency

- Security and data governance

- Ease of use and collaboration features

Best for: AI engineers, ML teams, and enterprises building aligned AI systems, especially those deploying AI agents or operating in regulated environments.

Not ideal for: Teams that only need basic prompt tuning or lightweight customization without structured feedback pipelines; simpler fine-tuning approaches may suffice in those cases.

What’s Changed in RLHF / RLAIF Training Platforms

- Shift from purely human feedback (RLHF) toward AI-generated feedback (RLAIF) and hybrid human + AI pipelines

- Integration with agentic workflows and tool-calling systems

- Support for multimodal feedback (text, image, audio)

- Built-in evaluation pipelines to reduce hallucinations and bias

- Stronger guardrails against prompt injection and unsafe outputs

- Increased demand for privacy-first feedback pipelines

- Emergence of automated feedback generation using synthetic data

- Improved observability (reward signals, performance tracking)

- Expansion of bring-your-own (BYO) model support and custom training loops

- Growth of real-time feedback systems for continuous learning

- Standardization of governance workflows and auditability

- Focus on cost-efficient training using smaller feedback datasets
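
One way a hybrid human + AI feedback pipeline can work is to accept AI-generated preference labels only when the AI judge is confident, and send the rest to human annotators. The sketch below assumes a hypothetical `AIJudgment` record and an arbitrary 0.8 confidence threshold; neither comes from any specific platform.

```python
from dataclasses import dataclass


@dataclass
class AIJudgment:
    item_id: str
    preferred: str      # "a" or "b": which response the AI judge preferred
    confidence: float   # judge's self-reported confidence in [0, 1]


def route_feedback(judgments, threshold=0.8):
    """Split AI judgments into auto-accepted labels and a human review queue."""
    auto_accepted, human_review = [], []
    for judgment in judgments:
        if judgment.confidence >= threshold:
            auto_accepted.append(judgment)
        else:
            human_review.append(judgment)
    return auto_accepted, human_review


# Hypothetical judgments from an AI labeler.
judgments = [
    AIJudgment("ex-1", "a", 0.95),
    AIJudgment("ex-2", "b", 0.55),
    AIJudgment("ex-3", "a", 0.80),
]
auto, review = route_feedback(judgments)
print("auto-accepted:", [j.item_id for j in auto])
print("needs human review:", [j.item_id for j in review])
```

Raising the threshold shifts more items to human review, trading cost for label quality.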

Quick Buyer Checklist (Scan-Friendly)

- Does it support RLHF, RLAIF, or both?

- Can you collect human and/or AI-generated feedback?

- Are there built-in evaluation and benchmarking tools?

- Does it include guardrails and safety enforcement?

- Can you integrate with LLMs and multimodal models?

- Is data privacy and retention control clearly defined?

- Are observability tools available (metrics, reward tracking)?

- Can you deploy in cloud, self-hosted, or hybrid environments?

- Are there annotation workflows and quality controls?

- Does it support scalable feedback pipelines?

- What is the cost structure (human labeling vs automation)?

- Is there a risk of vendor lock-in?

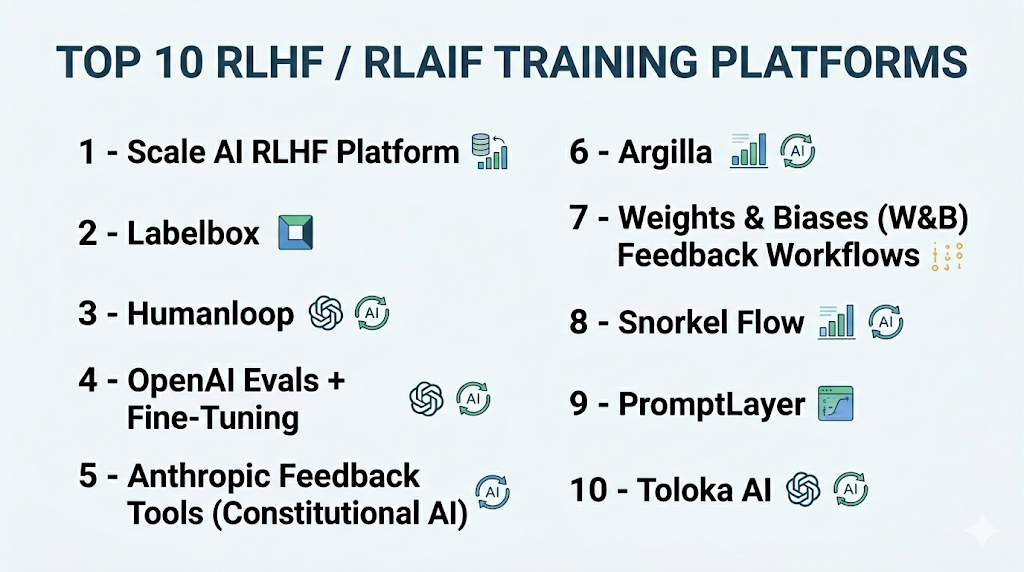

Top 10 RLHF / RLAIF Training Platforms

#1 — Scale AI RLHF Platform

One-line verdict: Best for enterprise-grade RLHF pipelines with high-quality human labeling and scalable feedback systems.

Short description:

A platform offering large-scale human feedback and data labeling services for training and aligning AI models.

Standout Capabilities

- Large human annotation workforce

- High-quality labeling pipelines

- Custom RLHF workflows

- Scalable infrastructure

- Enterprise-grade data handling

AI-Specific Depth

- Model support: BYO + proprietary integrations

- RAG / knowledge integration: N/A

- Evaluation: Human evaluation pipelines

- Guardrails: Policy-based moderation workflows

- Observability: Feedback and quality metrics

Pros

- High-quality human feedback

- Scalable labeling

- Enterprise-ready

Cons

- Cost can be high

- Requires integration effort

- Less developer-focused

Security & Compliance

RBAC, data controls; certifications: Not publicly stated

Deployment & Platforms

Cloud

Integrations & Ecosystem

- APIs

- Data pipelines

- ML platforms

- Annotation tools

Pricing Model

Usage-based (human labeling + services)

Best-Fit Scenarios

- Enterprise AI alignment

- Large-scale RLHF projects

- Regulated environments

#2 — Labelbox

One-line verdict: Best for teams needing flexible annotation workflows combined with RLHF-style feedback pipelines.

Short description:

A data-centric AI platform that supports annotation, feedback collection, and model improvement workflows.

Standout Capabilities

- Custom annotation workflows

- Collaboration tools

- Data management

- Model-assisted labeling

AI-Specific Depth

- Model support: BYO

- RAG / knowledge integration: N/A

- Evaluation: Annotation-based evaluation

- Guardrails: Limited

- Observability: Data and labeling metrics

Pros

- Flexible workflows

- Good UI

- Strong collaboration

Cons

- Not RLHF-native

- Limited guardrails

- Requires setup

Deployment & Platforms

Cloud

Integrations & Ecosystem

- APIs

- SDKs

- ML tools

- Data pipelines

Pricing Model

Tiered

Best-Fit Scenarios

- Annotation workflows

- Mid-scale RLHF

- Data-centric teams

#3 — Humanloop

One-line verdict: Best for integrating human feedback directly into LLM development and evaluation pipelines.

Short description:

A platform focused on prompt engineering, evaluation, and feedback loops for LLM applications.

Standout Capabilities

- Feedback-driven iteration

- Prompt evaluation tools

- Human-in-the-loop workflows

- Experiment tracking

AI-Specific Depth

- Model support: Multi-model

- RAG / knowledge integration: Compatible

- Evaluation: Strong

- Guardrails: Limited

- Observability: Prompt and feedback metrics

Pros

- Developer-friendly

- Strong evaluation tools

- Fast iteration

Cons

- Limited large-scale RLHF

- Guardrails not extensive

- Enterprise features vary

Deployment & Platforms

Cloud

Integrations & Ecosystem

- APIs

- LLM providers

- Dev tools

Pricing Model

Varies / N/A

Best-Fit Scenarios

- Prompt optimization

- Feedback-driven apps

- LLM experimentation

#4 — OpenAI Evals + Fine-Tuning

One-line verdict: Best for structured evaluation and alignment within hosted model ecosystems.

Short description:

A set of tools for evaluating and improving model outputs through structured feedback.

Standout Capabilities

- Evaluation frameworks

- Feedback loops

- Integration with hosted models

- Prompt testing

AI-Specific Depth

- Model support: Proprietary

- RAG / knowledge integration: Compatible

- Evaluation: Strong

- Guardrails: Built-in policies

- Observability: Metrics available

Pros

- Easy integration

- Strong evaluation

- Reliable infrastructure

Cons

- Limited BYO flexibility

- Vendor lock-in risk

- Less control

Deployment & Platforms

Cloud

Integrations & Ecosystem

- APIs

- SDKs

- Dev tools

Pricing Model

Usage-based

Best-Fit Scenarios

- Hosted AI systems

- Evaluation workflows

- Rapid deployment

#5 — Anthropic Feedback Tools (Constitutional AI)

One-line verdict: Best for AI-driven feedback systems with strong safety and alignment focus.

Short description:

A framework leveraging AI-generated feedback for alignment and safety improvements.

Standout Capabilities

- AI-generated feedback loops

- Safety-focused training

- Constitutional AI approach

- Reduced human labeling

AI-Specific Depth

- Model support: Proprietary

- RAG / knowledge integration: N/A

- Evaluation: AI-driven

- Guardrails: Strong

- Observability: Limited

Pros

- Reduced reliance on human labeling

- Strong safety focus

- Scalable

Cons

- Limited transparency

- Less customization

- Proprietary ecosystem

Deployment & Platforms

Cloud

Integrations & Ecosystem

- APIs

- LLM tools

Pricing Model

Varies / N/A

Best-Fit Scenarios

- Safety-critical systems

- AI alignment

- Scalable feedback loops

#6 — Argilla

One-line verdict: Best open-source platform for human feedback and dataset curation in RLHF workflows.

Short description:

An open-source tool for collecting, annotating, and managing feedback datasets.

Standout Capabilities

- Open-source

- Feedback dataset management

- Annotation UI

- Integration with ML pipelines

AI-Specific Depth

- Model support: BYO + open-source

- RAG / knowledge integration: Compatible

- Evaluation: Manual

- Guardrails: N/A

- Observability: Basic

Pros

- Open-source flexibility

- Easy integration

- Good for customization

Cons

- Limited enterprise features

- Requires setup

- No advanced guardrails

Deployment & Platforms

Self-hosted, cloud

Integrations & Ecosystem

- Python SDK

- ML pipelines

- APIs

Pricing Model

Open-source

Best-Fit Scenarios

- Open-source RLHF

- Data labeling

- Custom workflows

#7 — Weights & Biases (W&B) Feedback Workflows

One-line verdict: Best for tracking experiments and integrating feedback into model evaluation pipelines.

Short description:

A platform for experiment tracking and model evaluation with support for feedback loops.

Standout Capabilities

- Experiment tracking

- Visualization tools

- Feedback integration

- Collaboration

AI-Specific Depth

- Model support: BYO

- RAG / knowledge integration: Compatible

- Evaluation: Strong

- Guardrails: N/A

- Observability: Advanced

Pros

- Strong observability

- Good integrations

- Enterprise-ready

Cons

- Not RLHF-native

- Requires setup

- Pricing varies

Deployment & Platforms

Cloud, self-hosted

Integrations & Ecosystem

- APIs

- ML frameworks

- CI/CD

Pricing Model

Tiered

Best-Fit Scenarios

- Experiment tracking

- Evaluation pipelines

- ML ops

#8 — Snorkel Flow

One-line verdict: Best for programmatic labeling and weak supervision in feedback-driven training pipelines.

Short description:

A platform that enables data labeling using programmatic rules instead of manual annotation.

Standout Capabilities

- Weak supervision

- Programmatic labeling

- Data-centric AI workflows

- Scalable labeling

AI-Specific Depth

- Model support: BYO

- RAG / knowledge integration: N/A

- Evaluation: Strong

- Guardrails: N/A

- Observability: Metrics

Pros

- Reduces manual labeling

- Scalable

- Efficient

Cons

- Requires expertise

- Not RLHF-specific

- Setup complexity

Deployment & Platforms

Cloud

Integrations & Ecosystem

- APIs

- Data pipelines

- ML tools

Pricing Model

Varies / N/A

Best-Fit Scenarios

- Data labeling

- Weak supervision

- Large datasets

#9 — PromptLayer

One-line verdict: Best for tracking prompts and collecting feedback in LLM applications.

Short description:

A tool focused on prompt tracking, evaluation, and feedback collection.

Standout Capabilities

- Prompt tracking

- Feedback collection

- Logging

- Evaluation

AI-Specific Depth

- Model support: Multi-model

- RAG / knowledge integration: Compatible

- Evaluation: Basic

- Guardrails: Limited

- Observability: Logs and metrics

Pros

- Simple to use

- Good for debugging

- Fast integration

Cons

- Limited RLHF features

- Not scalable for large pipelines

- Basic evaluation

Deployment & Platforms

Cloud

Integrations & Ecosystem

- APIs

- SDKs

- LLM tools

Pricing Model

Tiered

Best-Fit Scenarios

- Prompt tracking

- Small-scale feedback

- Debugging

#10 — Toloka AI

One-line verdict: Best for scalable human feedback collection with crowdsourced annotation pipelines.

Short description:

A platform offering human-in-the-loop data labeling and feedback services.

Standout Capabilities

- Crowdsourced workforce

- Scalable annotation

- Quality control

- Data labeling pipelines

AI-Specific Depth

- Model support: BYO

- RAG / knowledge integration: N/A

- Evaluation: Human evaluation

- Guardrails: Policy-based workflows

- Observability: Quality metrics

Pros

- Scalable workforce

- Cost flexibility

- Human feedback quality

Cons

- Quality varies

- Requires management

- Not developer-first

Deployment & Platforms

Cloud

Integrations & Ecosystem

- APIs

- Data pipelines

- ML tools

Pricing Model

Usage-based

Best-Fit Scenarios

- Large-scale labeling

- RLHF pipelines

- Data annotation

Comparison Table (Top 10)

| Tool Name | Best For | Deployment | Model Flexibility | Strength | Watch-Out | Public Rating |
|---|---|---|---|---|---|---|
| Scale AI | Enterprise RLHF | Cloud | BYO | High-quality labeling | Cost | N/A |
| Labelbox | Annotation | Cloud | BYO | Flexibility | Not RLHF-native | N/A |
| Humanloop | Feedback loops | Cloud | Multi-model | Iteration speed | Limited scale | N/A |
| OpenAI Evals | Evaluation | Cloud | Proprietary | Reliability | Lock-in | N/A |
| Anthropic Tools | Safety | Cloud | Proprietary | Guardrails | Transparency | N/A |
| Argilla | Open-source | Hybrid | BYO | Flexibility | Setup | N/A |
| W&B | Tracking | Hybrid | BYO | Observability | Not RLHF-native | N/A |
| Snorkel | Labeling | Cloud | BYO | Automation | Complexity | N/A |
| PromptLayer | Prompt tracking | Cloud | Multi-model | Simplicity | Limited features | N/A |
| Toloka | Crowdsourcing | Cloud | BYO | Scale | Quality variance | N/A |

Scoring & Evaluation (Transparent Rubric)

Scoring is comparative and reflects relative strengths across key criteria, not absolute rankings.

| Tool | Core | Reliability/Eval | Guardrails | Integrations | Ease | Perf/Cost | Security/Admin | Support | Weighted Total |
|---|---|---|---|---|---|---|---|---|---|
| Scale AI | 9 | 8 | 7 | 8 | 6 | 7 | 8 | 7 | 7.8 |
| Labelbox | 7 | 6 | 5 | 7 | 7 | 6 | 7 | 7 | 6.6 |
| Humanloop | 8 | 8 | 5 | 8 | 8 | 7 | 6 | 7 | 7.4 |
| OpenAI Evals | 8 | 9 | 7 | 8 | 9 | 7 | 7 | 8 | 8.0 |
| Anthropic Tools | 8 | 8 | 9 | 7 | 7 | 7 | 7 | 7 | 7.8 |
| Argilla | 7 | 6 | 4 | 7 | 7 | 8 | 6 | 6 | 6.6 |
| W&B | 8 | 8 | 5 | 9 | 7 | 7 | 8 | 8 | 7.7 |
| Snorkel | 7 | 7 | 5 | 7 | 6 | 8 | 7 | 6 | 6.9 |
| PromptLayer | 6 | 6 | 4 | 6 | 8 | 7 | 5 | 6 | 6.2 |
| Toloka | 7 | 7 | 6 | 6 | 6 | 7 | 7 | 6 | 6.8 |

Top 3 for Enterprise: Scale AI, OpenAI Evals, Anthropic Tools

Top 3 for SMB: Humanloop, Labelbox, Argilla

Top 3 for Developers: Humanloop, Argilla, PromptLayer
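
To re-rank the tools for your own priorities, you can recompute a weighted total from the per-criterion scores. The sketch below is an illustration under stated assumptions: the article does not publish its weights, so the `WEIGHTS` values here are hypothetical, and totals computed with them need not match the Weighted Total column.

```python
# Hypothetical weights (not published in this article); they sum to 1.0.
WEIGHTS = {
    "core": 0.25,
    "reliability_eval": 0.15,
    "guardrails": 0.10,
    "integrations": 0.15,
    "ease": 0.10,
    "perf_cost": 0.10,
    "security_admin": 0.10,
    "support": 0.05,
}


def weighted_total(scores, weights=WEIGHTS):
    """Weighted average of criterion scores, normalized by the weight sum."""
    if set(scores) != set(weights):
        raise ValueError("scores and weights must cover the same criteria")
    total_weight = sum(weights.values())
    return sum(scores[name] * weights[name] for name in weights) / total_weight


# Criterion scores copied from the Scale AI row of the scoring table.
scale_ai = {
    "core": 9, "reliability_eval": 8, "guardrails": 7, "integrations": 8,
    "ease": 6, "perf_cost": 7, "security_admin": 8, "support": 7,
}
print(round(weighted_total(scale_ai), 2))
```

A team in a regulated industry might, for example, raise the `guardrails` and `security_admin` weights and lower `ease` before comparing totals.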

Which RLHF / RLAIF Training Platform Is Right for You?

Solo / Freelancer

Use lightweight tools like PromptLayer or Argilla for simple feedback loops and experimentation.

SMB

Humanloop or Labelbox provide a balance of usability and capability for growing teams.

Mid-Market

Combine W&B with Argilla or Snorkel for scalable feedback and evaluation pipelines.

Enterprise

Scale AI or OpenAI-based systems offer robust, scalable RLHF pipelines.

Regulated industries (finance/healthcare/public sector)

Prioritize platforms with strong governance, auditability, and private deployment options.

Budget vs premium

Open-source tools reduce costs, while managed platforms offer scalability and convenience.

Build vs buy (when to DIY)

Build if you need full control over feedback loops; buy if speed and scalability are critical.

Implementation Playbook (30 / 60 / 90 Days)

30 Days

- Define alignment goals and metrics

- Select tools and run pilot

- Collect initial feedback datasets

60 Days

- Build evaluation pipelines

- Add guardrails and safety checks

- Start integrating into production

90 Days

- Optimize feedback loops

- Scale training pipelines

- Implement governance and monitoring
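
For the "collect initial feedback datasets" step, it helps to settle on a record format early so that human and AI feedback can be stored and audited together. The schema below is a minimal sketch; the `PreferenceRecord` fields and the JSONL layout are assumptions for illustration, not a format required by any platform in this list.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class PreferenceRecord:
    prompt: str
    response_a: str
    response_b: str
    preferred: str       # "a" or "b"
    source: str          # "human" or "ai"
    annotator_id: str    # who (or which judge model) produced the label

    def __post_init__(self):
        # Reject malformed labels at creation time rather than at training time.
        if self.preferred not in ("a", "b"):
            raise ValueError("preferred must be 'a' or 'b'")
        if self.source not in ("human", "ai"):
            raise ValueError("source must be 'human' or 'ai'")


def to_jsonl(records):
    """Serialize records as one JSON object per line."""
    return "\n".join(json.dumps(asdict(r), sort_keys=True) for r in records)


# Hypothetical pilot data.
records = [
    PreferenceRecord("Summarize the policy.", "Short summary.", "Long summary.",
                     "a", "human", "annotator-01"),
    PreferenceRecord("Explain the refund rule.", "Answer one.", "Answer two.",
                     "b", "ai", "judge-model-v1"),
]
print(to_jsonl(records))
```

Keeping `source` and `annotator_id` on every record makes it possible to compare human and AI feedback quality later and supports the auditability goals in the 90-day phase.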

Common Mistakes & How to Avoid Them

- Ignoring evaluation metrics

- Over-relying on synthetic feedback

- Poor-quality human labeling

- Lack of guardrails

- Weak observability

- Cost overruns

- No version control

- Data privacy issues

- Over-automation

- Vendor lock-in

- Inconsistent feedback quality

- Lack of testing
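
Several of these mistakes (poor-quality human labeling, inconsistent feedback quality) can be caught early by measuring how often annotators agree. One standard measure is Cohen's kappa, which corrects raw agreement for agreement expected by chance. The sketch below is a plain-Python illustration with made-up labels, not a feature of any listed platform.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators labeling the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("need two non-empty label lists of equal length")
    n = len(labels_a)
    # Fraction of items where both annotators chose the same label.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Agreement expected by chance, from each annotator's label frequencies.
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    expected = sum(freq_a[label] * freq_b[label] for label in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used one identical label throughout
    return (observed - expected) / (1 - expected)


# Hypothetical preference labels ("a" or "b") from two annotators.
annotator_1 = ["a", "a", "b", "b"]
annotator_2 = ["a", "b", "b", "b"]
print(cohens_kappa(annotator_1, annotator_2))
```

A kappa near 1 indicates strong agreement, while values near 0 mean the annotators agree no more than chance would predict, which usually points to unclear labeling guidelines.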

FAQs

1. What is RLHF?

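RLHF (Reinforcement Learning from Human Feedback) collects human judgments of model outputs, such as rankings or ratings, typically trains a reward model on them, and then optimizes the model against that reward so it produces better and safer outputs.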

2. What is RLAIF?

RLAIF (Reinforcement Learning from AI Feedback) follows the same loop but replaces some or all of the human labeling with feedback generated by an AI model.

3. Which is better: RLHF or RLAIF?

It depends. Human feedback is generally higher quality, while AI feedback scales faster; hybrid approaches combine the two.

4. Do I need human annotators?

Not always, but they improve quality significantly.

5. Can I automate feedback?

Yes, using RLAIF or hybrid approaches.

6. Is RLHF expensive?

It can be, especially with human labeling.

7. Can I self-host these platforms?

Some tools support it; others are cloud-only.

8. Are evaluation tools included?

Varies by platform.

9. What are guardrails?

Mechanisms that prevent unsafe or incorrect outputs.

10. Can I use my own models?

Most platforms support BYO models.

11. How do I reduce costs?

Use hybrid feedback and optimize pipelines.

12. What are alternatives?

Prompt engineering, fine-tuning, or RAG-based approaches.

Conclusion

RLHF and RLAIF training platforms play a critical role in aligning modern AI systems with real-world expectations, helping teams improve accuracy, safety, and reliability. The right platform depends on your scale, budget, and need for human versus automated feedback. The best approach is to shortlist a few tools, run a pilot with real feedback data, and validate evaluation, guardrails, and performance before