Introduction

Model fine-tuning platforms help teams customize pre-trained AI models to perform better on specific tasks, datasets, or domains. Instead of relying on generic outputs, fine-tuning enables organizations to train models on proprietary data, improving accuracy, tone, and relevance.

As AI adoption matures, fine-tuning has become a key capability for building competitive AI systems. From enterprise copilots to domain-specific assistants, companies now require models that understand their unique workflows and data.

Common real-world use cases include:

- Customer support chatbots trained on internal knowledge

- Domain-specific AI assistants (legal, finance, healthcare)

- Personalized content generation systems

- Internal knowledge copilots

- AI agents tailored for workflows

- Multilingual or localized AI applications

Key evaluation criteria:

- Model flexibility (open vs proprietary)

- Fine-tuning techniques (full, LoRA, adapters)

- Dataset management and versioning

- Evaluation and testing capabilities

- Guardrails and safety alignment

- Deployment and scalability

- Cost efficiency

- Observability and monitoring

- Integration with existing tools

- Security and governance
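To make the full-versus-LoRA distinction concrete, the sketch below counts trainable parameters for a single weight matrix. In LoRA, a frozen d×k matrix W is adapted by adding a low-rank product BA, where B is d×r and A is r×k, so only r(d + k) values are trained instead of dk. The 4096×4096 shape and rank 8 are illustrative assumptions, not settings from any particular platform.

```python
def full_finetune_params(d: int, k: int) -> int:
    """Trainable parameters when every entry of a d x k weight matrix is updated."""
    return d * k


def lora_params(d: int, k: int, r: int) -> int:
    """Trainable parameters for a rank-r LoRA update B @ A (B: d x r, A: r x k)."""
    return r * (d + k)


if __name__ == "__main__":
    d, k, r = 4096, 4096, 8  # illustrative layer shape and rank (assumptions)
    full = full_finetune_params(d, k)
    lora = lora_params(d, k, r)
    print(f"full: {full:,}  lora: {lora:,}  share: {lora / full:.4%}")
```

For this layer, LoRA trains 65,536 parameters versus 16,777,216 for full fine-tuning, roughly 0.4%, which is one reason parameter-efficient methods are cheaper to train and store.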

Best for: AI engineers, ML teams, startups building AI products, and enterprises requiring domain-specific intelligence.

Not ideal for: simple use cases where prompt engineering is enough, or teams without datasets or ML expertise.

What’s Changed in Model Fine-Tuning Platforms

- Rise of parameter-efficient tuning (LoRA, adapters)

- Integration with agent-based workflows

- Built-in evaluation pipelines

- Multimodal fine-tuning support

- Dataset versioning and lineage tracking

- Focus on hallucination reduction

- Embedded guardrails and safety alignment

- Cost optimization through smaller models

- Hybrid RAG + fine-tuning architectures

- Continuous fine-tuning workflows

- Improved observability tools

- Stronger enterprise privacy controls
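One common way to split the work in a hybrid RAG + fine-tuning setup: the fine-tuned model handles tone and output format, while retrieval supplies facts that change too often to bake into model weights. The minimal sketch below assumes a word-overlap retriever as a stand-in for embedding search; the function names are hypothetical, and the assembled prompt would be sent to whichever fine-tuned model you deploy.

```python
import re
from typing import List, Set


def tokenize(text: str) -> Set[str]:
    """Lowercased word set; a crude stand-in for embeddings (assumption)."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, docs: List[str], top_k: int = 2) -> List[str]:
    """Rank documents by word overlap with the query and keep the top_k.

    sorted() is stable, so ties keep their original document order.
    """
    q = tokenize(query)
    ranked = sorted(docs, key=lambda d: len(q & tokenize(d)), reverse=True)
    return ranked[:top_k]


def build_prompt(query: str, docs: List[str], top_k: int = 2) -> str:
    """Prepend retrieved context so the fine-tuned model answers from current facts."""
    context = "\n".join(f"- {d}" for d in retrieve(query, docs, top_k))
    return f"Context:\n{context}\n\nQuestion: {query}"
```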

Quick Buyer Checklist (Scan-Friendly)

- Does it support your required base models?

- Can you use LoRA or adapter-based tuning?

- Are datasets versioned and tracked?

- Does it include evaluation tools?

- Are guardrails built in or external?

- Can it scale to production workloads?

- Are costs predictable and controllable?

- Does it integrate with your stack?

- Are audit logs and permissions available?

- Is vendor lock-in a concern?

- Does it support hybrid RAG workflows?
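Platforms track datasets in different ways. As a platform-neutral sketch of what "versioned and tracked" can mean, the function below derives a version ID from the dataset's content with SHA-256, so any edit to the data produces a new ID that can be recorded next to each training run. The function name and the 12-character truncation are illustrative choices, not a standard.

```python
import hashlib
import json
from typing import Dict, List


def dataset_version(records: List[Dict]) -> str:
    """Return a short, deterministic version ID for a list of training records.

    Each record is serialized with sorted keys, so key order does not change
    the hash; record order does, because it is part of the dataset.
    """
    h = hashlib.sha256()
    for rec in records:
        line = json.dumps(rec, sort_keys=True, ensure_ascii=False)
        h.update(line.encode("utf-8"))
        h.update(b"\n")  # separator so record boundaries affect the hash
    return h.hexdigest()[:12]
```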

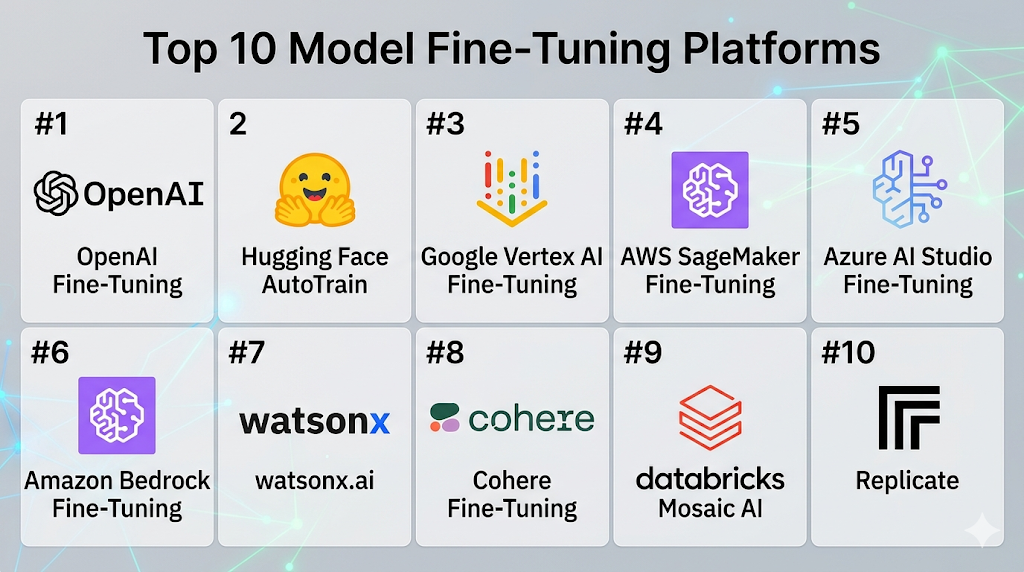

Top 10 Model Fine-Tuning Platforms

#1 — OpenAI Fine-Tuning

One-line verdict: Best for fast, reliable fine-tuning with simple API-driven deployment.

Short description:

Managed fine-tuning for proprietary models with seamless API integration for production use.

Standout Capabilities

- API-based fine-tuning workflow

- Structured dataset ingestion

- Quick deployment

- Stable performance

- Scalable infrastructure

- Strong developer ecosystem
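"Structured dataset ingestion" here means uploading training examples as JSONL. For OpenAI's chat models, each line is a JSON object with a `messages` list of role/content pairs. The sketch below builds and sanity-checks such lines locally before upload; the helper names are hypothetical, and the checks are a simplified subset of what the service itself validates.

```python
import json
from typing import List

# Simplification (assumption): only these roles are accepted here; the real
# format also allows other fields, for example for tool calling.
ALLOWED_ROLES = {"system", "user", "assistant"}


def to_jsonl_line(system: str, user: str, assistant: str) -> str:
    """Build one training example in the chat "messages" format."""
    record = {"messages": [
        {"role": "system", "content": system},
        {"role": "user", "content": user},
        {"role": "assistant", "content": assistant},
    ]}
    return json.dumps(record, ensure_ascii=False)


def validate_line(line: str) -> List[str]:
    """Return the problems found in one JSONL line; an empty list means it looks OK."""
    try:
        record = json.loads(line)
    except json.JSONDecodeError as exc:
        return [f"invalid JSON: {exc}"]
    messages = record.get("messages") if isinstance(record, dict) else None
    if not isinstance(messages, list) or not messages:
        return ["missing or empty 'messages' list"]
    problems = []
    for i, msg in enumerate(messages):
        if not isinstance(msg, dict):
            problems.append(f"message {i} is not an object")
            continue
        if msg.get("role") not in ALLOWED_ROLES:
            problems.append(f"message {i} has unexpected role {msg.get('role')!r}")
        if not isinstance(msg.get("content"), str):
            problems.append(f"message {i} has non-string content")
    # The assistant turns are what the model learns to produce.
    if not any(isinstance(m, dict) and m.get("role") == "assistant" for m in messages):
        problems.append("no assistant message to learn from")
    return problems
```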

AI-Specific Depth

- Model support: Proprietary

- RAG / knowledge integration: External

- Evaluation: Basic

- Guardrails: Built-in

- Observability: Usage metrics

Pros

- Easy to use

- Reliable outputs

- Fast deployment

Cons

- Limited customization

- Vendor lock-in

- Less control over internals

Security & Compliance

Not publicly stated

Deployment & Platforms

- Cloud

Integrations & Ecosystem

- APIs

- SDKs

- Backend services

- AI pipelines

Pricing Model

Usage-based

Best-Fit Scenarios

- Chatbots

- SaaS AI features

- API-based apps

#2 — Hugging Face AutoTrain

One-line verdict: Best no-code solution for fine-tuning open-source models quickly.

Short description:

A beginner-friendly platform enabling quick fine-tuning with minimal ML expertise.

Standout Capabilities

- No-code interface

- Dataset preprocessing

- Open model access

- Quick experimentation

- Hugging Face ecosystem
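AutoTrain handles preprocessing through its own interface, which this sketch does not reproduce. It only illustrates, in generic Python, what dataset preprocessing typically involves before fine-tuning: dropping empty rows, removing exact duplicates, and holding out a fixed validation split so metrics are measured on unseen data. Function names and defaults are hypothetical.

```python
import random
from typing import List, Tuple


def clean(rows: List[str]) -> List[str]:
    """Strip whitespace, drop empty rows, and remove exact duplicates (keeping order)."""
    seen = set()
    out = []
    for row in rows:
        row = row.strip()
        if row and row not in seen:
            seen.add(row)
            out.append(row)
    return out


def train_val_split(rows: List[str], val_fraction: float = 0.1,
                    seed: int = 42) -> Tuple[List[str], List[str]]:
    """Shuffle a copy of rows with a fixed seed and hold out a validation set."""
    if not 0 < val_fraction < 1:
        raise ValueError("val_fraction must be between 0 and 1")
    shuffled = list(rows)
    random.Random(seed).shuffle(shuffled)  # fixed seed makes the split reproducible
    n_val = max(1, int(len(shuffled) * val_fraction))
    return shuffled[n_val:], shuffled[:n_val]
```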

AI-Specific Depth

- Model support: Open-source

- RAG / knowledge integration: External

- Evaluation: Built-in

- Guardrails: External

- Observability: Basic

Pros

- Easy onboarding

- Open ecosystem

- Fast iteration

Cons

- Limited advanced control

- Scaling challenges

- Deployment setup required

Security & Compliance

Not publicly stated

Deployment & Platforms

- Cloud / Local

Integrations & Ecosystem

- Hugging Face Hub

- Transformers

- Datasets

- ML tools

Pricing Model

Tiered

Best-Fit Scenarios

- Beginners

- Prototyping

- Open-source workflows

#3 — Google Vertex AI Fine-Tuning

One-line verdict: Best for enterprise-scale fine-tuning with integrated ML pipelines.

Short description:

A comprehensive ML platform supporting training, deployment, and monitoring.

Standout Capabilities

- End-to-end ML lifecycle

- Scalable infrastructure

- Integrated pipelines

- Data management

- Enterprise readiness

AI-Specific Depth

- Model support: Multi-model

- RAG / knowledge integration: Supported

- Evaluation: Built-in

- Guardrails: Policy-based

- Observability: Advanced

Pros

- Highly scalable

- Strong ecosystem

- Enterprise-grade

Cons

- Complex setup

- Learning curve

- Cost management needed

Security & Compliance

Not publicly stated

Deployment & Platforms

- Cloud

Integrations & Ecosystem

- Data pipelines

- ML tools

- APIs

- Cloud services

Pricing Model

Usage-based

Best-Fit Scenarios

- Enterprise AI

- Large-scale ML

- Production systems

#4 — AWS SageMaker Fine-Tuning

One-line verdict: Best for flexible and customizable fine-tuning pipelines at scale.

Short description:

A robust ML platform for building and deploying custom fine-tuned models.

Standout Capabilities

- Custom pipelines

- Scalable compute

- Model registry

- Experiment tracking

- Deployment integration
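SageMaker has its own Model Registry, and the sketch below is not its API. It is a hypothetical, in-memory illustration of the idea behind a model registry: every fine-tuned artifact gets a version number, one version is marked as production, and rolling back means pointing production at the previously promoted version.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class ModelRegistry:
    """Hypothetical in-memory registry: numbered versions plus a production pointer."""
    versions: Dict[int, str] = field(default_factory=dict)  # version -> artifact location
    history: List[int] = field(default_factory=list)        # promoted versions, oldest first

    def register(self, artifact: str) -> int:
        """Record a new fine-tuned artifact and return its version number."""
        version = len(self.versions) + 1
        self.versions[version] = artifact
        return version

    def promote(self, version: int) -> None:
        """Make a registered version the production model."""
        if version not in self.versions:
            raise KeyError(f"unknown version {version}")
        self.history.append(version)

    @property
    def production(self) -> Optional[int]:
        return self.history[-1] if self.history else None

    def rollback(self) -> int:
        """Point production back at the previously promoted version."""
        if len(self.history) < 2:
            raise RuntimeError("no earlier production version to roll back to")
        self.history.pop()
        return self.history[-1]
```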

AI-Specific Depth

- Model support: Multi-model

- RAG / knowledge integration: Supported

- Evaluation: Built-in

- Guardrails: External

- Observability: Advanced

Pros

- Highly flexible

- Scalable infrastructure

- Production-ready

Cons

- Complex setup

- Requires expertise

- Cost control needed

Security & Compliance

Not publicly stated

Deployment & Platforms

- Cloud

Integrations & Ecosystem

- AWS ecosystem

- APIs

- Data services

- ML pipelines

Pricing Model

Usage-based

Best-Fit Scenarios

- Enterprise ML

- Custom workflows

- Large-scale training

#5 — Azure AI Studio Fine-Tuning

One-line verdict: Best for enterprises already using the Microsoft ecosystem for AI deployment.

Short description:

A platform for building and fine-tuning AI models with enterprise integrations.

Standout Capabilities

- Enterprise integration

- Secure deployment

- Monitoring tools

- Scalable training

- Workflow orchestration

AI-Specific Depth

- Model support: Multi-model

- RAG / knowledge integration: Supported

- Evaluation: Built-in

- Guardrails: Policy controls

- Observability: Advanced
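Managed guardrails such as Azure's content filters are configured in the platform rather than written by hand, and this sketch does not reproduce them. It only shows the general shape of a policy check applied to model output before it reaches a user. The two rules (an email-address pattern and a blocked phrase) are hypothetical examples; pattern matching alone is a weak guardrail.

```python
import re
from dataclasses import dataclass
from typing import List

# Hypothetical policy rules for illustration only.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
BLOCKED_PHRASES = ("internal use only",)


@dataclass
class Verdict:
    allowed: bool
    reasons: List[str]


def check_output(text: str) -> Verdict:
    """Apply each policy rule to a model response and collect any violations."""
    reasons = []
    if EMAIL.search(text):
        reasons.append("contains an email address")
    lowered = text.lower()
    for phrase in BLOCKED_PHRASES:
        if phrase in lowered:
            reasons.append(f"contains blocked phrase {phrase!r}")
    return Verdict(allowed=not reasons, reasons=reasons)
```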

Pros

- Strong enterprise fit

- Secure environment

- Scalable

Cons

- Ecosystem dependency

- Complexity

- Pricing variability

Security & Compliance

Not publicly stated

Deployment & Platforms

- Cloud

Integrations & Ecosystem

- Microsoft tools

- APIs

- Enterprise systems

Pricing Model

Usage-based

Best-Fit Scenarios

- Enterprise apps

- Secure deployments

- Microsoft environments

#6 — Amazon Bedrock Fine-Tuning

One-line verdict: Best for managed fine-tuning across multiple foundation models.

Short description:

A managed service providing access to multiple foundation models, with fine-tuning available for a subset of them.

Standout Capabilities

- Multi-model access

- Managed infrastructure

- Secure environment

- Integration with AWS

- Simplified workflows
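Bedrock exposes its models through its own runtime API, which is not reproduced here. Whatever platform you choose, keeping application code behind a thin, provider-neutral interface makes it cheaper to compare models or switch later. In this hypothetical sketch, backends are plain Python functions standing in for real SDK calls.

```python
from typing import Callable, Dict

# A "backend" is any function that takes a prompt and returns text (assumption).
Backend = Callable[[str], str]


class ModelRouter:
    """Route generation requests to named model backends behind one interface."""

    def __init__(self) -> None:
        self._backends: Dict[str, Backend] = {}

    def add(self, name: str, backend: Backend) -> None:
        self._backends[name] = backend

    def generate(self, model: str, prompt: str) -> str:
        try:
            backend = self._backends[model]
        except KeyError:
            raise ValueError(f"no backend registered for {model!r}") from None
        return backend(prompt)
```

Application code calls `generate("some-model", prompt)` everywhere, so swapping a provider means changing one registration rather than every call site.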

AI-Specific Depth

- Model support: Multi-model

- RAG / knowledge integration: Supported

- Evaluation: Basic

- Guardrails: Built-in

- Observability: Available

Pros

- Flexible model choice

- Managed setup

- Secure

Cons

- Limited customization

- Ecosystem dependency

- Learning curve

Security & Compliance

Not publicly stated

Deployment & Platforms

- Cloud

Integrations & Ecosystem

- AWS tools

- APIs

- Data services

Pricing Model

Usage-based

Best-Fit Scenarios

- Multi-model strategies

- Enterprise AI

- Cloud-native apps

#7 — IBM watsonx.ai

One-line verdict: Best for regulated industries needing governance-heavy fine-tuning workflows.

Short description:

An enterprise AI platform focused on governance, compliance, and model customization.

Standout Capabilities

- Governance tools

- Data control

- Enterprise workflows

- Model customization

- Compliance focus
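Governance tooling like watsonx's is proprietary; the sketch below only illustrates one generic technique behind tamper-evident audit logs: hash chaining, where each entry stores the hash of the previous one, so editing an earlier entry breaks verification. The class and field names are hypothetical, and a production system would also need durable storage and access controls.

```python
import hashlib
import json
import time
from typing import Any, Dict, List

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


class AuditLog:
    """Append-only, hash-chained log of actions such as training and deployment."""

    def __init__(self) -> None:
        self.entries: List[Dict[str, Any]] = []

    @staticmethod
    def _digest(body: Dict[str, Any]) -> str:
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()

    def append(self, actor: str, action: str, target: str) -> Dict[str, Any]:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        body = {"ts": time.time(), "actor": actor, "action": action,
                "target": target, "prev": prev}
        entry = {**body, "hash": self._digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash and check each entry links to its predecessor."""
        prev = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev"] != prev or self._digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True
```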

AI-Specific Depth

- Model support: Multi-model

- RAG / knowledge integration: Supported

- Evaluation: Built-in

- Guardrails: Strong

- Observability: Advanced

Pros

- Strong governance

- Enterprise focus

- Secure

Cons

- Complex

- Slower setup

- Less developer-friendly

Security & Compliance

Not publicly stated

Deployment & Platforms

- Cloud / Hybrid

Integrations & Ecosystem

- Enterprise systems

- APIs

- Data platforms

Pricing Model

Not publicly stated

Best-Fit Scenarios

- Regulated industries

- Enterprise AI

- Compliance-heavy use cases

#8 — Cohere Fine-Tuning

One-line verdict: Best for NLP-focused fine-tuning with enterprise-friendly APIs.

Short description:

A platform focused on language model customization for enterprise applications.

Standout Capabilities

- NLP specialization

- API-first design

- Custom model tuning

- Enterprise support

- Scalable infrastructure

AI-Specific Depth

- Model support: Proprietary

- RAG / knowledge integration: External

- Evaluation: Basic

- Guardrails: Built-in

- Observability: Available

Pros

- Strong NLP performance

- Easy integration

- Developer-friendly

Cons

- Limited model variety

- Less ecosystem depth

- Customization limits

Security & Compliance

Not publicly stated

Deployment & Platforms

- Cloud

Integrations & Ecosystem

- APIs

- SDKs

- Enterprise apps

Pricing Model

Usage-based

Best-Fit Scenarios

- NLP apps

- Chatbots

- Enterprise AI

#9 — Databricks Mosaic AI

One-line verdict: Best for data-centric fine-tuning integrated with analytics and lakehouse architecture.

Short description:

A platform combining data engineering and model fine-tuning in one ecosystem.

Standout Capabilities

- Data + AI integration

- Lakehouse architecture

- Custom model training

- Scalable pipelines

- Enterprise analytics

AI-Specific Depth

- Model support: Open + proprietary

- RAG / knowledge integration: Strong

- Evaluation: Built-in

- Guardrails: External

- Observability: Advanced

Pros

- Strong data integration

- Scalable pipelines

- Enterprise-ready

Cons

- Complex setup

- Learning curve

- Cost considerations

Security & Compliance

Not publicly stated

Deployment & Platforms

- Cloud

Integrations & Ecosystem

- Data pipelines

- ML tools

- APIs

Pricing Model

Usage-based

Best-Fit Scenarios

- Data-heavy AI

- Enterprise analytics

- ML pipelines

#10 — Replicate

One-line verdict: Best for developers experimenting with fine-tuned models via simple APIs.

Short description:

A platform for running and fine-tuning models with easy deployment and sharing.

Standout Capabilities

- Simple API usage

- Model hosting

- Community models

- Fast experimentation

- Easy deployment

AI-Specific Depth

- Model support: Open-source

- RAG / knowledge integration: External

- Evaluation: Limited

- Guardrails: External

- Observability: Basic

Pros

- Easy to start

- Flexible

- Developer-friendly

Cons

- Limited enterprise features

- Basic monitoring

- Scaling challenges

Security & Compliance

Not publicly stated

Deployment & Platforms

- Cloud

Integrations & Ecosystem

- APIs

- Developer tools

- Model hosting

Pricing Model

Usage-based

Best-Fit Scenarios

- Prototyping

- Experiments

- Indie developers

Comparison Table (Top 10)

| Tool | Best For | Deployment | Model Flexibility | Strength | Watch-Out | Public Rating |
|---|---|---|---|---|---|---|
| OpenAI | API apps | Cloud | Proprietary | Ease | Lock-in | N/A |
| Hugging Face | Open-source | Cloud | Open | Flexibility | Scaling | N/A |
| Vertex AI | Enterprise | Cloud | Multi | Scalability | Complexity | N/A |
| SageMaker | Custom ML | Cloud | Multi | Control | Cost | N/A |
| Azure AI | Enterprise | Cloud | Multi | Integration | Lock-in | N/A |
| Bedrock | Multi-model | Cloud | Multi | Choice | Limited customization | N/A |
| IBM watsonx | Governance | Cloud / Hybrid | Multi | Compliance | Complexity | N/A |
| Cohere | NLP apps | Cloud | Proprietary | Simplicity | Customization limits | N/A |
| Databricks | Data AI | Cloud | Multi | Data integration | Complexity | N/A |
| Replicate | Devs | Cloud | Open | Ease | Scaling | N/A |

Scoring & Evaluation (Transparent Rubric)

| Tool | Core | Reliability | Guardrails | Integrations | Ease | Perf/Cost | Security | Support | Weighted Total |
|---|---|---|---|---|---|---|---|---|---|
| OpenAI | 9 | 9 | 8 | 9 | 10 | 8 | 8 | 9 | 8.9 |
| Hugging Face | 9 | 8 | 7 | 9 | 9 | 8 | 7 | 9 | 8.5 |
| Vertex AI | 9 | 9 | 8 | 10 | 7 | 9 | 9 | 9 | 8.9 |
| SageMaker | 9 | 9 | 7 | 10 | 6 | 9 | 9 | 9 | 8.7 |
| Azure AI | 9 | 9 | 8 | 10 | 7 | 8 | 9 | 9 | 8.8 |
| Bedrock | 8 | 8 | 8 | 9 | 8 | 8 | 9 | 8 | 8.4 |
| IBM watsonx | 8 | 9 | 9 | 8 | 6 | 7 | 10 | 8 | 8.3 |
| Cohere | 8 | 8 | 7 | 8 | 9 | 8 | 8 | 8 | 8.2 |
| Databricks | 9 | 9 | 7 | 10 | 6 | 9 | 9 | 8 | 8.6 |
| Replicate | 7 | 7 | 6 | 7 | 9 | 7 | 7 | 7 | 7.4 |
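The rubric reports a weighted total without listing the weights. To apply the same kind of scoring to your own shortlist, a weighted average is enough. The weights in this sketch are hypothetical placeholders, so its results will not necessarily match the table.

```python
from typing import Dict

# HYPOTHETICAL weights: the rubric above does not publish its own.
WEIGHTS: Dict[str, float] = {
    "core": 0.25, "reliability": 0.15, "guardrails": 0.10, "integrations": 0.10,
    "ease": 0.10, "perf_cost": 0.10, "security": 0.10, "support": 0.10,
}


def weighted_total(scores: Dict[str, float], weights: Dict[str, float] = WEIGHTS) -> float:
    """Weighted average of 0-10 criterion scores, rounded to two decimals."""
    if set(scores) != set(weights):
        raise ValueError("scores and weights must cover the same criteria")
    total_weight = sum(weights.values())  # normalizing allows weights that don't sum to 1
    return round(sum(scores[c] * w for c, w in weights.items()) / total_weight, 2)
```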

Top 3 for Enterprise: Vertex AI, Azure AI, SageMaker

Top 3 for SMB: OpenAI, Hugging Face, Cohere

Top 3 for Developers: Hugging Face, OpenAI, Replicate

Which Model Fine-Tuning Platform Is Right for You?

Solo / Freelancer

Use Hugging Face or Replicate for flexibility and ease.

SMB

OpenAI and Cohere offer a strong balance of ease of use and capability.

Mid-Market

SageMaker or Databricks for scaling.

Enterprise

Vertex AI, Azure AI, or IBM watsonx.

Regulated industries

IBM watsonx or Azure AI preferred.

Budget vs premium

- Budget: Hugging Face

- Premium: Vertex AI, Azure AI

Build vs buy

Build if control is critical; buy for speed and simplicity.

Implementation Playbook (30 / 60 / 90 Days)

30 Days

- Define use case

- Prepare datasets

- Run pilot fine-tuning

- Set success metrics

60 Days

- Add evaluation pipeline

- Improve dataset quality

- Deploy staging model

- Add guardrails
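A minimal evaluation step for the 60-day milestone might score a candidate model on held-out examples and promote it only if it does not regress against the current baseline. Exact match, assumed here, suits short, well-defined answers; open-ended generation usually needs other metrics or human review. The models are plain functions standing in for real endpoints, and all names are hypothetical.

```python
from typing import Callable, List, Tuple

Example = Tuple[str, str]        # (prompt, expected answer)
Model = Callable[[str], str]     # stand-in for a deployed model endpoint


def exact_match_accuracy(model: Model, examples: List[Example]) -> float:
    """Share of examples where the normalized output equals the expected answer."""
    if not examples:
        raise ValueError("need at least one evaluation example")
    hits = sum(
        model(prompt).strip().lower() == expected.strip().lower()
        for prompt, expected in examples
    )
    return hits / len(examples)


def passes_gate(candidate: Model, baseline: Model, examples: List[Example],
                min_gain: float = 0.0) -> bool:
    """Allow promotion only if the candidate matches or beats the baseline by min_gain."""
    return (exact_match_accuracy(candidate, examples)
            >= exact_match_accuracy(baseline, examples) + min_gain)
```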

90 Days

- Optimize costs

- Scale deployment

- Add monitoring

- Implement governance
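For the 90-day cost work, a back-of-the-envelope estimate is often enough to compare options. Token-billed fine-tuning services charge roughly for the training tokens processed across all epochs, while GPU-based platforms charge for compute time; the sketch covers both cases. All prices are hypothetical placeholders, so substitute your provider's current rates.

```python
def token_training_cost(dataset_tokens: int, epochs: int,
                        price_per_million_tokens: float) -> float:
    """Token-billed estimate: each epoch processes the full dataset once."""
    billed_tokens = dataset_tokens * epochs
    return billed_tokens / 1_000_000 * price_per_million_tokens


def gpu_training_cost(hours: float, gpus: int, price_per_gpu_hour: float) -> float:
    """Compute-billed estimate: wall-clock hours times GPUs times hourly rate."""
    return hours * gpus * price_per_gpu_hour
```

For example, a 2,000,000-token dataset trained for 3 epochs at a hypothetical $8 per million tokens comes to $48, while 10 hours on 4 GPUs at a hypothetical $2.50 per GPU-hour comes to $100.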

Common Mistakes & How to Avoid Them

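- Poor dataset quality: clean, deduplicate, and spot-check examples before training.

- No evaluation process: hold out a test set and compare every candidate against a baseline.

- Ignoring hallucinations: test for factual errors explicitly, and pair fine-tuning with RAG when answers depend on changing facts.

- Overfitting: watch validation loss and stop training when it stops improving.

- No monitoring: track output quality, latency, and cost in production.

- Weak guardrails: add input and output policy checks before launch.

- Cost overruns: estimate training and inference costs up front, and prefer smaller or parameter-efficient models where they suffice.

- No version control: version datasets, configurations, and model artifacts together.

- Vendor lock-in: keep training data in portable formats and isolate provider-specific code.

- Lack of human review: have domain experts review output samples before and after deployment.

- Missing audit logs: record who trained, approved, and deployed each model.

- No rollback plan: keep the previous production model ready to restore.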
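Overfitting usually shows up as validation loss rising while training loss keeps falling. A common guard is early stopping: keep the checkpoint with the best validation loss and stop once it has not improved for a set number of epochs. The helper below is a hypothetical sketch over a list of per-epoch validation losses; many training frameworks ship an equivalent callback.

```python
from typing import List, Optional


def early_stopping_epoch(val_losses: List[float], patience: int = 2,
                         min_delta: float = 0.0) -> Optional[int]:
    """Return the 0-based epoch whose checkpoint to keep once validation loss has
    not improved by more than min_delta for `patience` consecutive epochs,
    or None if training should continue."""
    best_loss = float("inf")
    best_epoch: Optional[int] = None
    epochs_without_improvement = 0
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss - min_delta:
            best_loss, best_epoch = loss, epoch
            epochs_without_improvement = 0
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                return best_epoch
    return None
```

With validation losses of 1.0, 0.8, 0.7, 0.72, and 0.75 and a patience of 2, the helper returns 2: keep the epoch-2 checkpoint and stop.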

FAQs

What is model fine-tuning?

Further training a pre-trained model on your own examples so it performs better on specific tasks.

Is it always necessary?

No, simple tasks may only need prompting.

Is it expensive?

It varies with compute, dataset size, and the number of training runs.

Can beginners use it?

Yes, with no-code tools.

Is it secure?

Depends on platform and setup.

Can I use my own data?

Yes, that’s the main purpose.

Can I combine it with RAG?

Yes, and the combination is often recommended.

Does it reduce hallucinations?

It can, but not completely.

Is open-source better?

Depends on control vs convenience.

Can I switch platforms later?

Possible but may require rework.

Do I need ML expertise?

Helpful but not always required.

What’s the biggest risk?

Poor data leading to poor models.

Conclusion

Model fine-tuning platforms are essential for turning generic AI into highly specialized systems. There is no single “best” platform—your choice depends on your scale, data, and technical expertise.

Next steps:

- Shortlist 2–3 platforms

- Run pilot fine-tuning

- Validate evaluation, cost, and security before scaling