Introduction

Open-source model hub platforms are centralized ecosystems where developers, researchers, and enterprises can discover, share, fine-tune, and deploy machine learning and large language models. These platforms act as the backbone of modern AI development, enabling teams to move faster by reusing pre-trained models instead of building everything from scratch.

In practical terms, they simplify access to thousands of models across natural language processing, vision, audio, and multimodal AI. They also provide tooling for versioning, fine-tuning, evaluation, and deployment workflows.

These platforms are becoming essential because AI systems are increasingly modular. Instead of relying on a single monolithic model, organizations now combine multiple specialized models and route each request to the most suitable one based on task requirements.
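The routing idea can be sketched in a few lines. This is a minimal illustration rather than any platform's API: the `ModelRouter` class, the task names, and the stand-in model functions are all hypothetical.

```python
from typing import Callable, Dict

# A "model" here is any callable that maps an input string to an output string.
Model = Callable[[str], str]


class ModelRouter:
    """Dispatch each request to the model registered for its task."""

    def __init__(self, default: Model) -> None:
        self._routes: Dict[str, Model] = {}
        self._default = default

    def register(self, task: str, model: Model) -> None:
        self._routes[task] = model

    def route(self, task: str, payload: str) -> str:
        # Fall back to the default model when no specialist is registered.
        model = self._routes.get(task, self._default)
        return model(payload)


# Stand-in "models"; in practice these would wrap real inference calls.
def summarizer(text: str) -> str:
    return "summary: " + text[:20]


def classifier(text: str) -> str:
    return "label: " + ("question" if text.endswith("?") else "statement")


def general_model(text: str) -> str:
    return "general: " + text


router = ModelRouter(default=general_model)
router.register("summarize", summarizer)
router.register("classify", classifier)
```

Calling `router.route("classify", "Is this routed?")` reaches the classifier, while an unregistered task such as `"translate"` falls through to the general model.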

Common real-world use cases include:

- Building enterprise AI copilots using pre-trained models

- Fine-tuning domain-specific LLMs for customer support

- Deploying multimodal AI pipelines (text, image, audio)

- Running research experiments with reproducible models

- Hosting internal model registries for AI teams

- Powering edge or cloud inference applications

Key evaluation criteria include:

- Model diversity and ecosystem size

- Fine-tuning and deployment support

- Versioning and reproducibility

- Integration with MLOps pipelines

- Security and access control

- Dataset and training tooling

- Evaluation and benchmarking support

- Community activity and governance model

- Enterprise readiness and scalability

- Multi-framework compatibility

Best for: AI engineers, ML researchers, enterprise AI teams, and startups building production-grade AI systems.

Not ideal for: users looking for turnkey AI applications without engineering involvement or teams without ML infrastructure maturity.

What’s Changed in Open-Source Model Hub Platforms

- Shift toward multimodal model ecosystems (text, image, audio, video)

- Strong adoption of fine-tuning pipelines directly within hubs

- Increased focus on model governance and safe publishing

- Built-in evaluation benchmarks becoming standard

- Growth of agent-ready model formats

- Improved integration with vector databases and RAG systems

- Expansion of edge-compatible lightweight models

- Model routing and orchestration support emerging

- Strong emphasis on reproducibility and experiment tracking

- Enterprise-grade access control and private model registries

- Open-weight models competing with proprietary APIs

- Growing ecosystem of inference optimization tools

Quick Buyer Checklist (Scan-Friendly)

- Does the platform support both open and private model hosting?

- How strong is the fine-tuning and training workflow support?

- Are models versioned and reproducible?

- Does it integrate with MLOps pipelines?

- Can it support both cloud and local deployment?

- Are evaluation tools available for benchmarking models?

- How strong are access control and governance?

- Does it support multimodal models?

- What is the risk of vendor lock-in?

- Are datasets and training workflows integrated?

- Does it support model conversion and interoperability?

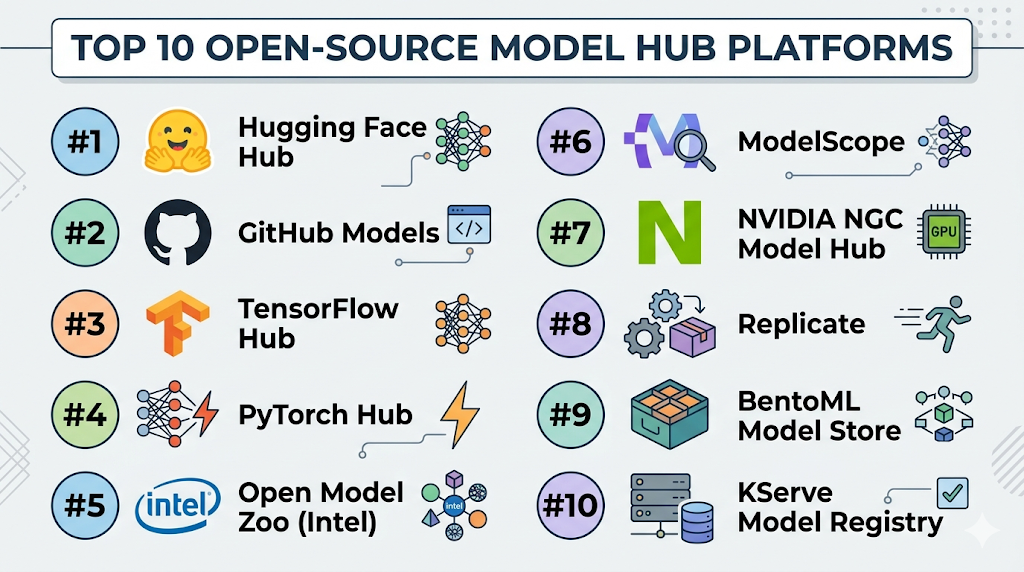

Top 10 Open-Source Model Hub Platforms (Updated)

#1 — Hugging Face Hub

One-line verdict: Best all-in-one ecosystem for discovering, sharing, and deploying open-source AI models.

Short description:

A widely adopted model hub for machine learning models across NLP, vision, and multimodal domains. Used by researchers, startups, and enterprises.

Standout Capabilities

- Massive open model ecosystem

- Built-in model versioning

- Fine-tuning and training tools

- Dataset hosting and sharing

- Inference API support

- Strong community contributions

- Multimodal model support

- Integration with major ML frameworks
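As a rough sketch of how built-in versioning supports reproducibility, the snippet below pins a file download to a fixed revision using `hf_hub_download` from the `huggingface_hub` package. The helper names `pinned_download_args` and `fetch_pinned_file` are illustrative, and the repository and file you pass in are your own choice; nothing here is prescribed by the platform.

```python
from typing import Dict


def pinned_download_args(repo_id: str, filename: str, revision: str) -> Dict[str, str]:
    """Build keyword arguments that pin a download to one revision."""
    if not revision:
        raise ValueError("pin a branch, tag, or commit hash for reproducibility")
    return {"repo_id": repo_id, "filename": filename, "revision": revision}


def fetch_pinned_file(repo_id: str, filename: str, revision: str) -> str:
    """Download one file at a fixed revision and return its local cache path."""
    # Imported lazily so the helper above works without the package installed.
    # Requires `pip install huggingface_hub` and network access when called.
    from huggingface_hub import hf_hub_download

    return hf_hub_download(**pinned_download_args(repo_id, filename, revision))
```

A branch name such as `main` moves over time, so passing a tag or full commit hash as `revision` gives a stricter pin.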

AI-Specific Depth

- Model support: Open-source + proprietary integrations

- RAG / knowledge integration: Supported via ecosystem tools

- Evaluation: Model benchmarking and community evals

- Guardrails: External tooling required

- Observability: Inference logs and monitoring available

Pros

- Largest open-source model ecosystem

- Strong community support

- Easy experimentation and deployment

Cons

- Governance complexity at scale

- Not fully enterprise-controlled by default

- Requires external guardrail setup

Security & Compliance

- Private model repositories supported

- Access control features available

- Enterprise governance options available (details vary)

Deployment & Platforms

- Cloud

- Self-hosted options via ecosystem tools

Integrations & Ecosystem

- PyTorch, TensorFlow

- Inference APIs

- Vector databases

- MLOps pipelines

- Fine-tuning frameworks

Pricing Model

Open-source + enterprise tiers (details vary)

Best-Fit Scenarios

- Model experimentation

- Enterprise AI pipelines

- Research and benchmarking

#2 — GitHub Models

One-line verdict: Best for integrating open-source models directly into developer workflows.

Short description:

A model distribution layer integrated into developer ecosystems for easier access to AI models.

Standout Capabilities

- Seamless developer integration

- Model hosting within code workflows

- Version-controlled AI assets

- API-based model access

- Strong developer ecosystem alignment

- CI/CD compatibility

AI-Specific Depth

- Model support: Open-source + hosted models

- RAG / knowledge integration: External

- Evaluation: Not fully built-in

- Guardrails: External

- Observability: Basic usage metrics

Pros

- Strong developer experience

- Easy integration into software projects

- Version-controlled models

Cons

- Limited AI-native tooling

- Not deeply specialized for ML workflows

- Requires external evaluation systems

Security & Compliance

- Enterprise access controls available

- Repository-level permissions

Deployment & Platforms

- Cloud-based

Integrations & Ecosystem

- GitHub Actions

- CI/CD pipelines

- Developer APIs

- Open-source repositories

Pricing Model

Varies

Best-Fit Scenarios

- Developer-centric AI applications

- CI/CD-integrated AI workflows

- Rapid prototyping

#3 — TensorFlow Hub

One-line verdict: Best for reusable TensorFlow model components and modular ML development.

Short description:

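A repository of reusable machine learning modules designed for TensorFlow-based workflows. Its model catalog has been migrated to Kaggle Models, while the tensorflow_hub library remains the way to load those modules.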

Standout Capabilities

- Pre-trained model modules

- Reusable ML components

- Strong TensorFlow integration

- Image and NLP model support

- Lightweight deployment options

- Transfer learning support

AI-Specific Depth

- Model support: TensorFlow models

- RAG / knowledge integration: External

- Evaluation: Not built-in

- Guardrails: Not built-in

- Observability: Limited

Pros

- Strong TensorFlow ecosystem alignment

- Easy transfer learning

- Stable production usage

Cons

- Limited LLM-native tooling

- Framework dependency

- Less flexible than modern hubs

Security & Compliance

Not publicly stated

Deployment & Platforms

- Cloud

- Edge via TensorFlow ecosystem

Integrations & Ecosystem

- TensorFlow

- TFLite

- ML pipelines

- Cloud ML systems

Pricing Model

Open-source

Best-Fit Scenarios

- TensorFlow-based AI systems

- Research prototyping

- Image and NLP workflows

#4 — PyTorch Hub

One-line verdict: Best for PyTorch-native model sharing and research workflows.

Short description:

A model repository integrated with PyTorch for fast experimentation and reproducible research.

Standout Capabilities

- Native PyTorch integration

- Research-focused model sharing

- Easy model loading APIs

- Fast prototyping support

- Open ecosystem compatibility

- Lightweight usage model
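The loading API can be illustrated with `torch.hub.load`, which fetches a repository's `hubconf.py` entry point and builds the model. This sketch assumes the `pytorch/vision` repository at tag `v0.10.0` and its `resnet18` entry point; the helper names are hypothetical, and newer PyTorch versions may ask you to confirm that the repository is trusted.

```python
def hub_ref(repo: str, ref: str) -> str:
    """Return an 'owner/repo:ref' string so the hub code is pinned to a tag or branch."""
    return f"{repo}:{ref}"


def load_resnet18(ref: str = "v0.10.0"):
    """Load a pretrained ResNet-18 through torch.hub from a pinned torchvision tag."""
    # Imported lazily; requires `pip install torch` and network access on first call.
    import torch

    model = torch.hub.load(hub_ref("pytorch/vision", ref), "resnet18", pretrained=True)
    model.eval()  # switch to inference mode
    return model
```

Pinning the tag in the repository reference keeps the model definition stable even if the default branch changes.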

AI-Specific Depth

- Model support: PyTorch models

- RAG / knowledge integration: External

- Evaluation: External tooling

- Guardrails: Not built-in

- Observability: Minimal

Pros

- Excellent for research workflows

- Simple model usage

- Strong PyTorch ecosystem

Cons

- Not enterprise-focused

- Limited governance features

- Minimal production tooling

Security & Compliance

Not publicly stated

Deployment & Platforms

- Cloud

- Local environments

Integrations & Ecosystem

- PyTorch ecosystem

- Research notebooks

- ML pipelines

Pricing Model

Open-source

Best-Fit Scenarios

- Academic research

- AI prototyping

- PyTorch model sharing

#5 — Open Model Zoo (Intel)

One-line verdict: Best for optimized AI models for edge and Intel hardware ecosystems.

Short description:

A collection of optimized deep learning models for performance-focused inference.

Standout Capabilities

- Hardware-optimized models

- Edge AI focus

- Pre-trained model collection

- Performance tuning support

- Computer vision specialization

AI-Specific Depth

- Model support: Open-source optimized models

- RAG / knowledge integration: External

- Evaluation: Limited

- Guardrails: Not built-in

- Observability: Basic

Pros

- Strong edge optimization

- Efficient inference performance

- Hardware-aligned models

Cons

- Limited LLM support

- Narrow ecosystem focus

- Less flexible than modern hubs

Security & Compliance

Not publicly stated

Deployment & Platforms

- Edge devices

- Intel hardware ecosystems

Integrations & Ecosystem

- OpenVINO

- Edge AI pipelines

- Computer vision tools

Pricing Model

Open-source

Best-Fit Scenarios

- Edge AI applications

- Industrial AI systems

- Computer vision workloads

#6 — ModelScope

One-line verdict: Best alternative model ecosystem for multilingual and multimodal AI development.

Short description:

A growing model hub offering a wide range of AI models across languages and modalities.

Standout Capabilities

- Multilingual model support

- Large model repository

- Multimodal AI focus

- Research-driven ecosystem

- Fine-tuning support

- Open collaboration model

AI-Specific Depth

- Model support: Open-source + research models

- RAG / knowledge integration: Supported externally

- Evaluation: Limited built-in tools

- Guardrails: Not built-in

- Observability: Basic

Pros

- Strong multilingual support

- Broad model coverage

- Active research ecosystem

Cons

- Smaller global adoption

- Limited enterprise tooling

- Tooling still evolving

Security & Compliance

Not publicly stated

Deployment & Platforms

- Cloud

- Research environments

Integrations & Ecosystem

- ML frameworks

- Dataset tools

- Fine-tuning pipelines

Pricing Model

Open-source

Best-Fit Scenarios

- Multilingual AI systems

- Research experimentation

- Multimodal applications

#7 — NVIDIA NGC Model Hub

One-line verdict: Best enterprise-grade model hub optimized for GPU-accelerated AI workloads.

Short description:

A curated catalog of optimized AI models designed for high-performance GPU environments.

Standout Capabilities

- GPU-optimized models

- Enterprise AI focus

- Pre-trained AI pipelines

- High-performance inference

- Containerized model deployment

AI-Specific Depth

- Model support: Optimized AI models

- RAG / knowledge integration: External

- Evaluation: Limited

- Guardrails: Not built-in

- Observability: GPU metrics available

Pros

- Excellent performance optimization

- Enterprise-grade reliability

- Strong GPU integration

Cons

- Hardware dependency

- Less open flexibility

- Ecosystem lock-in concerns

Security & Compliance

Not publicly stated

Deployment & Platforms

- GPU cloud

- Enterprise systems

Integrations & Ecosystem

- CUDA ecosystem

- AI containers

- ML pipelines

Pricing Model

Varies

Best-Fit Scenarios

- High-performance AI workloads

- Enterprise GPU deployments

- Research training pipelines

#8 — Replicate

One-line verdict: Best for running and sharing open-source models via simple API-based deployment.

Short description:

A platform that enables easy deployment and execution of machine learning models through APIs.

Standout Capabilities

- API-based model execution

- Simple deployment workflow

- Model sharing system

- Scalable inference execution

- Developer-friendly interface
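API-based execution might look like the sketch below, which uses `replicate.run` from the `replicate` Python client. The `build_input` and `run_model` helpers are illustrative, the accepted input keys depend on the model you choose, and the call assumes a `REPLICATE_API_TOKEN` environment variable is set.

```python
from typing import Any, Dict


def build_input(prompt: str, **options: Any) -> Dict[str, Any]:
    """Assemble the input payload; accepted keys depend on the chosen model."""
    if not prompt.strip():
        raise ValueError("prompt must not be empty")
    return {"prompt": prompt, **options}


def run_model(model_ref: str, prompt: str, **options: Any) -> Any:
    """Run a hosted model by reference, for example 'owner/name:version'."""
    # Lazy import; requires `pip install replicate` and REPLICATE_API_TOKEN set.
    import replicate

    return replicate.run(model_ref, input=build_input(prompt, **options))
```

Keeping payload construction separate from the network call makes the input easy to validate and test before any usage-based cost is incurred.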

AI-Specific Depth

- Model support: Open-source models

- RAG / knowledge integration: External

- Evaluation: Not built-in

- Guardrails: External

- Observability: Basic logs

Pros

- Extremely easy deployment

- Fast prototyping

- API-first design

Cons

- Limited enterprise control

- External dependency

- Cost scaling concerns

Security & Compliance

Not publicly stated

Deployment & Platforms

- Cloud-based

Integrations & Ecosystem

- APIs

- ML workflows

- Developer tools

Pricing Model

Usage-based (varies)

Best-Fit Scenarios

- Rapid prototyping

- API-based AI apps

- Lightweight model deployment

#9 — BentoML Model Store

One-line verdict: Best for production-ready model packaging and deployment workflows.

Short description:

A model serving platform designed to standardize deployment pipelines.

Standout Capabilities

- Production model packaging

- Deployment automation

- API generation

- Scalable inference serving

- MLOps integration

AI-Specific Depth

- Model support: Multi-framework

- RAG / knowledge integration: External

- Evaluation: External tools

- Guardrails: External

- Observability: Deployment logs

Pros

- Strong production focus

- Scalable deployment system

- Flexible model support

Cons

- Requires engineering setup

- Not a pure model repository

- Learning curve

Security & Compliance

Not publicly stated

Deployment & Platforms

- Cloud

- Self-hosted

Integrations & Ecosystem

- Kubernetes

- MLOps tools

- CI/CD pipelines

Pricing Model

Open-source + enterprise

Best-Fit Scenarios

- Production ML systems

- Enterprise AI deployment

- Scalable inference pipelines

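#10 — KServe

One-line verdict: Best Kubernetes-native model serving system for enterprise AI workloads.

Short description:

A cloud-native platform for deploying and managing machine learning models at scale. It serves models loaded from external storage rather than acting as a model catalog, so teams typically keep model artifacts in a separate store or registry.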

Standout Capabilities

- Kubernetes-native architecture

- Scalable model serving

- Multi-framework support

- Autoscaling inference systems

- Enterprise deployment focus
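To make the Kubernetes-native design more concrete, here is a sketch that builds an `InferenceService` manifest (API group `serving.kserve.io/v1beta1`) as a Python dictionary. The service name and bucket path are hypothetical, and the replica bounds are arbitrary example values.

```python
import json
from typing import Any, Dict


def inference_service(name: str, model_format: str, storage_uri: str,
                      min_replicas: int = 1, max_replicas: int = 3) -> Dict[str, Any]:
    """Build a KServe v1beta1 InferenceService manifest as a plain dict."""
    return {
        "apiVersion": "serving.kserve.io/v1beta1",
        "kind": "InferenceService",
        "metadata": {"name": name},
        "spec": {
            "predictor": {
                # Autoscaling bounds for the predictor component.
                "minReplicas": min_replicas,
                "maxReplicas": max_replicas,
                "model": {
                    "modelFormat": {"name": model_format},
                    "storageUri": storage_uri,
                },
            }
        },
    }


# Hypothetical model name and bucket path, for illustration only.
manifest = inference_service(
    "demo-classifier", "sklearn", "s3://example-bucket/models/demo/v1"
)
# kubectl accepts JSON manifests as well as YAML.
manifest_json = json.dumps(manifest, indent=2)
```

Generating the manifest in code is one way to keep the model format and storage location under version control alongside the rest of a deployment pipeline.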

AI-Specific Depth

- Model support: Multi-framework

- RAG / knowledge integration: External

- Evaluation: External

- Guardrails: External

- Observability: Kubernetes monitoring

Pros

- Highly scalable architecture

- Enterprise-ready design

- Strong cloud-native support

Cons

- Complex setup

- Requires Kubernetes expertise

- Heavy infrastructure needs

Security & Compliance

Not publicly stated

Deployment & Platforms

- Cloud

- Kubernetes clusters

Integrations & Ecosystem

- Kubernetes

- MLOps pipelines

- Cloud-native tools

Pricing Model

Open-source

Best-Fit Scenarios

- Enterprise AI deployment

- Large-scale inference systems

- Cloud-native ML infrastructure

Comparison Table (Top 10)

| Tool Name | Best For | Deployment | Model Flexibility | Strength | Watch-Out | Public Rating |
|---|---|---|---|---|---|---|
| Hugging Face Hub | AI ecosystems | Cloud | Open + proprietary | Largest ecosystem | Governance complexity | N/A |
| GitHub Models | Developers | Cloud | Open + hosted | Dev integration | Limited ML tooling | N/A |
| TensorFlow Hub | TensorFlow workflows | Hybrid | TensorFlow models | Modular reuse | LLM limitations | N/A |
| PyTorch Hub | Research | Cloud/local | PyTorch | Simplicity | No enterprise features | N/A |
| Open Model Zoo | Edge AI | Edge | Optimized models | Performance | Narrow focus | N/A |
| ModelScope | Multilingual AI | Cloud | Open models | Multilingual support | Smaller ecosystem | N/A |
| NVIDIA NGC | GPU enterprise AI | Cloud | Optimized models | Performance | Hardware lock-in | N/A |
| Replicate | API deployment | Cloud | Open models | Simplicity | Cost scaling | N/A |
| BentoML | MLOps deployment | Hybrid | Multi-framework | Production readiness | Setup complexity | N/A |
| KServe | Enterprise Kubernetes AI | Cloud/self-hosted | Multi-framework | Scalability | Infrastructure complexity | N/A |

Scoring & Evaluation (Transparent Rubric)

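Each platform is scored out of 10 on eight criteria (core capability, reliability, guardrails, integrations, ease of use, performance/cost, security, and support), reflecting enterprise readiness, ecosystem maturity, and deployment flexibility. Scores are comparative and meant to guide selection, not to represent absolute performance.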

| Tool | Core | Reliability | Guardrails | Integrations | Ease | Perf/Cost | Security | Support | Weighted Total |
|---|---|---|---|---|---|---|---|---|---|
| Hugging Face Hub | 10 | 9 | 7 | 10 | 9 | 8 | 8 | 10 | 9.0 |
| GitHub Models | 8 | 8 | 6 | 9 | 9 | 7 | 8 | 8 | 8.0 |
| TensorFlow Hub | 8 | 8 | 6 | 9 | 8 | 8 | 8 | 8 | 7.9 |
| PyTorch Hub | 8 | 8 | 6 | 8 | 9 | 8 | 7 | 8 | 7.8 |
| Open Model Zoo | 7 | 8 | 5 | 7 | 7 | 9 | 8 | 7 | 7.4 |
| ModelScope | 8 | 7 | 6 | 8 | 8 | 7 | 7 | 7 | 7.6 |
| NVIDIA NGC | 9 | 9 | 7 | 9 | 7 | 10 | 8 | 9 | 8.6 |
| Replicate | 8 | 8 | 6 | 8 | 10 | 7 | 7 | 8 | 7.9 |
| BentoML | 9 | 9 | 7 | 9 | 7 | 9 | 8 | 8 | 8.3 |
| KServe | 9 | 9 | 7 | 9 | 6 | 10 | 9 | 8 | 8.4 |
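The table does not list the weights behind its Weighted Total column, so the sketch below only shows how such a total could be computed. The weights are assumptions chosen for illustration and will not reproduce the published totals exactly; replace them with weights that reflect your own priorities.

```python
from typing import Dict

# Hypothetical weights in percentage points; they sum to 100.
WEIGHTS: Dict[str, int] = {
    "Core": 25, "Reliability": 15, "Guardrails": 10, "Integrations": 15,
    "Ease": 10, "Perf/Cost": 10, "Security": 10, "Support": 5,
}


def weighted_total(scores: Dict[str, int], weights: Dict[str, int] = WEIGHTS) -> float:
    """Return the weighted average of per-criterion scores."""
    if set(scores) != set(weights):
        raise ValueError("scores and weights must cover the same criteria")
    total_weight = sum(weights.values())
    return sum(scores[name] * weights[name] for name in weights) / total_weight


# Per-criterion scores copied from the Hugging Face Hub row of the table.
hugging_face = {
    "Core": 10, "Reliability": 9, "Guardrails": 7, "Integrations": 10,
    "Ease": 9, "Perf/Cost": 8, "Security": 8, "Support": 10,
}
print(round(weighted_total(hugging_face), 2))
```

Re-running the calculation with different weights, for example a heavier Security weight for regulated industries, is a quick way to see how sensitive the ranking is to the rubric.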

Top 3 for Enterprise: Hugging Face Hub, KServe, NVIDIA NGC

Top 3 for SMB: Replicate, Hugging Face Hub, GitHub Models

Top 3 for Developers: Hugging Face Hub, PyTorch Hub, Replicate

Which Open-Source Model Hub Platform Is Right for You?

Solo / Freelancer

Lightweight ecosystems such as Replicate or Hugging Face Hub are the best choices for fast experimentation.

SMB

GitHub Models and Hugging Face Hub provide a strong balance between ease of use and capability.

Mid-Market

BentoML and Hugging Face Hub support scalable deployment workflows.

Enterprise

KServe, NVIDIA NGC, and Hugging Face Hub are best for governance and scale.

Regulated industries

KServe and BentoML offer stronger control and self-hosting capabilities.

Budget vs premium

- Budget: PyTorch Hub, TensorFlow Hub

- Premium: NVIDIA NGC, KServe

Build vs buy (when to DIY)

Build custom model hubs when you need deep compliance or proprietary workflows; otherwise adopt existing ecosystems for speed and scalability.

Implementation Playbook (30 / 60 / 90 Days)

30 Days

- Identify model requirements

- Test multiple hubs

- Benchmark model performance

- Evaluate integration complexity
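For the benchmarking step, a small harness like the one below can compare candidates on latency before any deeper quality evaluation. It is a sketch under simple assumptions: each candidate is wrapped as a Python callable, and the two lambda "models" are placeholders for real inference calls.

```python
import statistics
import time
from typing import Callable, Dict, List


def benchmark(models: Dict[str, Callable[[str], str]], prompts: List[str],
              repeats: int = 3) -> Dict[str, float]:
    """Return the median per-call latency in milliseconds for each candidate."""
    results: Dict[str, float] = {}
    for name, model in models.items():
        timings = []
        for _ in range(repeats):
            for prompt in prompts:
                start = time.perf_counter()
                model(prompt)
                timings.append((time.perf_counter() - start) * 1000.0)
        results[name] = statistics.median(timings)
    return results


# Stand-in candidates; real ones would call models pulled from each hub.
candidates = {
    "hub_a_model": lambda text: text.upper(),
    "hub_b_model": lambda text: text[::-1],
}
latencies = benchmark(candidates, ["hello", "model hubs"])
```

The median is used instead of the mean so that a single slow call, such as a cold start, does not dominate the comparison.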

60 Days

- Integrate model registry

- Establish versioning system

- Add evaluation workflows

- Secure access control
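One way to think about the versioning step is as an append-only registry that records a checksum for every artifact. The in-memory `ModelRegistry` below is a hypothetical sketch of that idea, not the interface of any platform listed here; a real deployment would persist entries and store artifacts externally.

```python
import hashlib
from dataclasses import dataclass
from typing import Dict, Tuple


@dataclass(frozen=True)
class ModelVersion:
    name: str
    version: str
    sha256: str  # checksum of the stored artifact bytes


class ModelRegistry:
    """In-memory registry keyed by (name, version); versions are immutable."""

    def __init__(self) -> None:
        self._entries: Dict[Tuple[str, str], ModelVersion] = {}

    def register(self, name: str, version: str, artifact: bytes) -> ModelVersion:
        key = (name, version)
        if key in self._entries:
            raise ValueError(f"{name}:{version} already exists; publish a new version")
        entry = ModelVersion(name, version, hashlib.sha256(artifact).hexdigest())
        self._entries[key] = entry
        return entry

    def verify(self, name: str, version: str, artifact: bytes) -> bool:
        """Check that artifact bytes match the checksum recorded at registration."""
        entry = self._entries[(name, version)]
        return hashlib.sha256(artifact).hexdigest() == entry.sha256


registry = ModelRegistry()
registry.register("support-bot", "1.0.0", b"fake-weights-v1")
```

Refusing to overwrite an existing version and verifying checksums at load time are what make a deployment traceable to exact model bytes.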

90 Days

- Scale across teams

- Optimize deployment pipelines

- Add governance and audit systems

- Implement cost optimization

Common Mistakes & How to Avoid Them

- Using models without version control

- Ignoring evaluation benchmarks

- Poor dataset governance

- Over-reliance on a single model source

- Lack of deployment standardization

- Not tracking model drift

- Ignoring access control policies

- No fallback model strategy

- Weak integration with CI/CD

- Missing observability layer

- Vendor lock-in without abstraction

- Underestimating infrastructure needs
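Two of these mistakes, having no fallback model and relying on a single source, can be mitigated with a thin abstraction over model calls. The sketch below is illustrative only: `call_with_fallback` and the two stand-in models are hypothetical, and the primary model simulates an outage.

```python
from typing import Callable, List, Sequence, Tuple

Model = Callable[[str], str]


def call_with_fallback(models: Sequence[Tuple[str, Model]], prompt: str) -> Tuple[str, str]:
    """Try each (name, model) in order; return the first successful (name, output)."""
    errors: List[str] = []
    for name, model in models:
        try:
            return name, model(prompt)
        except Exception as exc:  # a real system would catch narrower error types
            errors.append(f"{name}: {exc}")
    raise RuntimeError("all models failed: " + "; ".join(errors))


def primary(prompt: str) -> str:
    raise TimeoutError("primary endpoint timed out")  # simulated outage


def backup(prompt: str) -> str:
    return "backup answer to: " + prompt


served_by, answer = call_with_fallback(
    [("primary", primary), ("backup", backup)], "ping"
)
```

Because callers depend only on the callable interface, the primary and backup models can come from different hubs, which also limits vendor lock-in.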

FAQs

What is an open-source model hub platform?

It is a system that hosts, shares, and manages machine learning models for reuse and deployment.

Why are these platforms important?

They accelerate AI development by letting teams reuse pre-trained models instead of training from scratch.

Can these platforms be used for production?

Yes, many are designed for production AI systems with scaling support.

Do they support fine-tuning?

Most modern hubs include or integrate fine-tuning workflows.

Are they free to use?

Many are open-source, but enterprise features may be paid.

Can I host private models?

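Several do. Hugging Face Hub supports private repositories, and self-hosted options such as BentoML and KServe keep models inside your own infrastructure.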

Do they support multimodal models?

Increasingly, yes, especially in newer ecosystems.

Can they integrate with cloud systems?

Yes, most integrate with major cloud and MLOps tools.

What is vendor lock-in risk?

It is the risk that dependence on a single ecosystem makes switching to another one difficult.

Are they secure?

Security depends on configuration and platform; enterprise controls vary.

Can I use multiple hubs together?

Yes, hybrid setups are common in production environments.

What is the biggest limitation?

Complexity increases as ecosystems scale and diversify.

Conclusion

Open-source model hub platforms are foundational to modern AI development. They enable teams to discover, customize, and deploy models at scale while maintaining flexibility across frameworks and infrastructures.

Choosing the right platform depends on your team’s maturity, deployment needs, and governance requirements. Some platforms prioritize ease of use, while others focus on enterprise-scale control or research flexibility.

Next steps:

- Shortlist platforms based on your use case

- Run small-scale benchmarking experiments

- Validate governance and deployment requirements before scaling