Introduction

LLMOps platforms are tools and systems designed to help teams build, deploy, monitor, and maintain applications powered by large language models (LLMs). They act as the operational backbone of AI systems—similar to how DevOps supports traditional software—handling everything from prompt management and evaluation to observability, cost tracking, and governance.

As AI systems evolve beyond simple chatbots into complex, multi-step agent workflows, managing reliability, safety, and cost becomes significantly more challenging. LLMOps platforms address these challenges by providing structured ways to test outputs, monitor performance, and enforce guardrails in production environments.

Common use cases include:

- Monitoring and debugging LLM outputs in production

- Managing prompts, versions, and experiments

- Evaluating model reliability and reducing hallucinations

- Tracking token usage and optimizing costs

- Building and maintaining RAG pipelines

- Enforcing guardrails and compliance policies

What to evaluate when choosing an LLMOps platform:

- Prompt management and version control

- Evaluation and testing frameworks

- Observability (logs, traces, metrics)

- Cost tracking and optimization tools

- Guardrails and safety controls

- Integration with LLM providers and vector databases

- Support for agent workflows

- Deployment flexibility (cloud vs self-hosted)

- Role-based access and governance

- Ease of integration with existing stacks

Best for: AI engineers, ML teams, and product teams building production-grade AI systems—especially in SaaS, fintech, healthcare, and enterprise IT.

Not ideal for: Teams building simple prototypes or one-off AI features where basic API usage and logging are sufficient.

What’s Changed in LLMOps Platforms

- Agent observability is now standard, enabling tracking of multi-step reasoning and tool usage

- Built-in evaluation pipelines support regression testing for prompts and outputs

- Real-time guardrails help detect prompt injection and unsafe behavior

- Native RAG monitoring tracks retrieval quality and grounding accuracy

- Multi-model orchestration enables routing across providers for cost and performance optimization

- Token-level cost visibility provides granular spend tracking

- Privacy-first features include data masking, retention controls, and regional handling

- Prompt versioning behaves like code with structured workflows

- Human-in-the-loop evaluation is integrated into pipelines

- Latency optimization tools such as caching and batching are widely supported

- Governance dashboards provide audit logs and compliance visibility

- Integration with agent frameworks is increasingly common

Quick Buyer Checklist (Scan-Friendly)

- Do you get full visibility into prompts, responses, and execution traces?

- Can you evaluate outputs systematically (offline and real-time)?

- Are guardrails built-in or dependent on external tools?

- Does it support multiple LLM providers or BYO models?

- Can you track and control token usage and costs?

- Does it integrate with your RAG stack or vector database?

- Are there strong access controls (RBAC, audit logs)?

- Can you version and manage prompts effectively?

- Is agent workflow support available?

- How easy is it to switch providers and avoid lock-in?

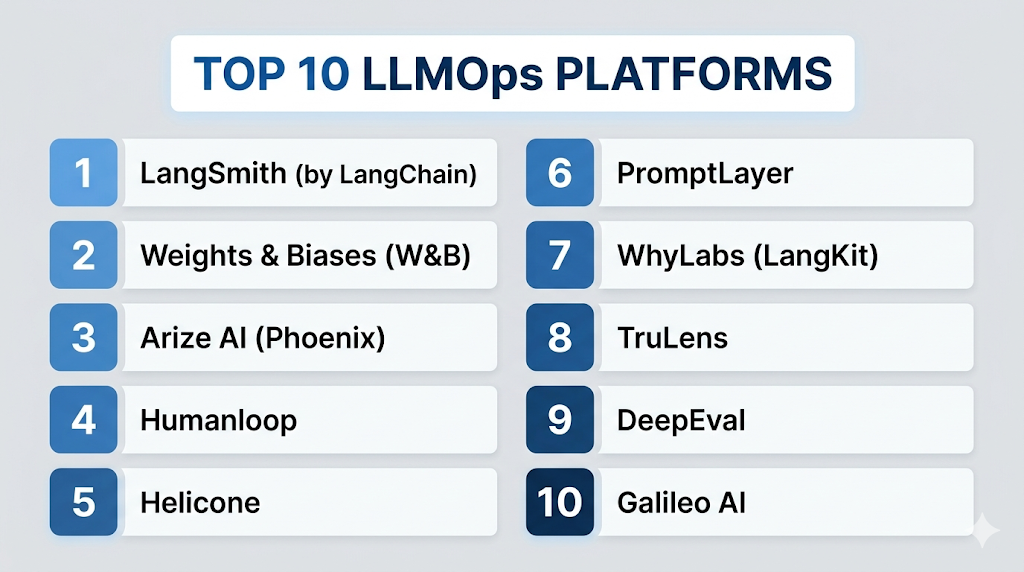

Top 10 LLMOps Platforms

#1 — LangSmith (by LangChain)

One-line verdict: Best for developers building complex LLM applications with deep tracing and debugging capabilities.

Short description:

LangSmith is an observability and evaluation platform designed for LLM applications, especially those built with LangChain. It helps teams trace execution, debug issues, and evaluate outputs.

Standout Capabilities

- Detailed execution tracing for chains and agents

- Prompt debugging and visualization

- Dataset-based evaluation workflows

- Experiment tracking and comparison

- Real-time monitoring of LLM calls

- Feedback collection pipelines

- Strong developer tooling

AI-Specific Depth

- Model support: Multi-model via integrations

- RAG / knowledge integration: Strong (via LangChain ecosystem)

- Evaluation: Dataset testing, regression, human feedback

- Guardrails: Limited native; relies on integrations

- Observability: Deep tracing, logs, metrics

Pros

- Excellent debugging and tracing

- Strong integration ecosystem

- Developer-friendly workflows

Cons

- Best suited for LangChain users

- Limited built-in guardrails

- Learning curve for new users

Security & Compliance

Encryption and access controls available. Certifications: Not publicly stated.

Deployment & Platforms

Web, Cloud

Integrations & Ecosystem

LangSmith integrates tightly with modern AI development stacks.

- LangChain

- APIs and SDKs

- Vector databases

- Custom pipelines

- Agent frameworks

Pricing Model

Usage-based / tiered

Best-Fit Scenarios

- Debugging agent workflows

- RAG-based applications

- Prompt experimentation and testing

#2 — Weights & Biases (W&B)

One-line verdict: Best for ML teams needing robust experiment tracking and scalable evaluation workflows.

Short description:

Weights & Biases is a mature ML operations platform that extends into LLM observability, evaluation, and experiment tracking.

Standout Capabilities

- Experiment tracking across models and prompts

- Dataset versioning

- Evaluation dashboards

- Collaboration tools

- Scalable infrastructure

- Visualization of experiments

AI-Specific Depth

- Model support: Multi-model

- RAG / knowledge integration: Varies / N/A

- Evaluation: Strong support for benchmarking and testing

- Guardrails: N/A

- Observability: Strong metrics and tracking

Pros

- Mature ML ecosystem

- Strong evaluation capabilities

- Excellent collaboration features

Cons

- Less LLM-native compared to newer tools

- Guardrails not built-in

- Setup complexity

Security & Compliance

RBAC and encryption supported. Certifications: Not publicly stated.

Deployment & Platforms

Cloud / Self-hosted

Integrations & Ecosystem

- ML frameworks

- APIs and SDKs

- Data pipelines

- Experiment tracking tools

- Visualization systems

Pricing Model

Tiered / enterprise

Best-Fit Scenarios

- ML-heavy teams

- Experiment tracking

- Benchmarking LLM performance

#3 — Arize AI (Phoenix)

One-line verdict: Best for production monitoring and diagnosing LLM performance issues at scale.

Short description:

Arize AI provides observability and evaluation tools for monitoring LLM applications in production environments.

Standout Capabilities

- LLM tracing and monitoring

- Data drift detection

- Root cause analysis tools

- Evaluation dashboards

- Performance insights

AI-Specific Depth

- Model support: Multi-model

- RAG / knowledge integration: Supported

- Evaluation: Strong

- Guardrails: Limited

- Observability: Strong

Pros

- Strong production monitoring

- Good debugging capabilities

- Enterprise-ready features

Cons

- Less focus on prompt workflows

- Guardrails limited

- Learning curve

Security & Compliance

Access controls and encryption supported. Certifications: Not publicly stated.

Deployment & Platforms

Cloud

Integrations & Ecosystem

- APIs

- Data monitoring systems

- ML pipelines

- Analytics tools

- Custom integrations

Pricing Model

Not publicly stated

Best-Fit Scenarios

- Production AI monitoring

- Performance debugging

- Enterprise AI systems

#4 — Humanloop

One-line verdict: Best for teams focused on prompt management and human-in-the-loop evaluation workflows.

Short description:

Humanloop enables teams to test, evaluate, and improve prompts using structured feedback loops.

Standout Capabilities

- Prompt testing workflows

- Human feedback integration

- Experiment tracking

- Evaluation dashboards

- Iteration tools

AI-Specific Depth

- Model support: Multi-model

- RAG / knowledge integration: N/A

- Evaluation: Strong

- Guardrails: N/A

- Observability: Moderate

Pros

- Strong prompt workflows

- Human feedback integration

- Easy experimentation

Cons

- Limited observability

- Smaller ecosystem

- Less enterprise tooling

Security & Compliance

Not publicly stated

Deployment & Platforms

Cloud

Integrations & Ecosystem

- APIs

- SDKs

- Feedback systems

- Prompt tools

- AI pipelines

Pricing Model

Not publicly stated

Best-Fit Scenarios

- Prompt iteration

- Feedback-driven applications

- AI UX testing

#5 — Helicone

One-line verdict: Best for lightweight cost tracking and observability for LLM API usage.

Short description:

Helicone provides logging, monitoring, and analytics for LLM API usage with a focus on simplicity.

Standout Capabilities

- API logging

- Cost tracking dashboards

- Request/response monitoring

- Lightweight integration

- Open-source friendly

AI-Specific Depth

- Model support: Multi-model

- RAG / knowledge integration: N/A

- Evaluation: Basic

- Guardrails: N/A

- Observability: Strong

Pros

- Simple setup

- Cost visibility

- Lightweight

Cons

- Limited evaluation tools

- No guardrails

- Basic feature set

Security & Compliance

Not publicly stated

Deployment & Platforms

Cloud / Self-hosted

Integrations & Ecosystem

- APIs

- SDKs

- Logging tools

- Analytics systems

- Developer tools

Pricing Model

Freemium

Best-Fit Scenarios

- Cost monitoring

- Startup environments

- API-level observability

#6 — PromptLayer

One-line verdict: Best for prompt tracking and versioning with minimal setup overhead.

Short description:

PromptLayer tracks prompts, responses, and usage across LLM applications, helping teams manage prompt workflows.

Standout Capabilities

- Prompt logging

- Version control

- Usage tracking

- Lightweight integration

- Simple dashboards

AI-Specific Depth

- Model support: Multi-model

- RAG / knowledge integration: N/A

- Evaluation: Basic

- Guardrails: N/A

- Observability: Moderate

Pros

- Easy to use

- Quick integration

- Lightweight

Cons

- Limited advanced features

- Basic evaluation

- Not enterprise-grade

Security & Compliance

Not publicly stated

Deployment & Platforms

Cloud

Integrations & Ecosystem

- APIs

- SDKs

- Prompt tools

- Logging systems

- AI pipelines

Pricing Model

Usage-based

Best-Fit Scenarios

- Prompt tracking

- Early-stage applications

- Lightweight monitoring

#7 — WhyLabs (LangKit)

One-line verdict: Best for monitoring data quality and ensuring LLM reliability in production systems.

Short description:

WhyLabs focuses on monitoring data and model behavior, including LLM-specific metrics for reliability.

Standout Capabilities

- Data quality monitoring

- LLM performance tracking

- Drift detection

- Evaluation tools

- Monitoring dashboards

AI-Specific Depth

- Model support: Multi-model

- RAG / knowledge integration: Supported

- Evaluation: Strong

- Guardrails: Limited

- Observability: Strong

Pros

- Strong reliability focus

- Enterprise-ready

- Good monitoring tools

Cons

- Less prompt tooling

- Complex setup

- Limited guardrails

Security & Compliance

Not publicly stated

Deployment & Platforms

Cloud

Integrations & Ecosystem

- Data pipelines

- APIs

- ML systems

- Monitoring tools

- Analytics platforms

Pricing Model

Not publicly stated

Best-Fit Scenarios

- Data monitoring

- Reliability tracking

- Enterprise use cases

#8 — TruLens

One-line verdict: Best for evaluating LLM outputs and improving RAG systems with feedback loops.

Short description:

TruLens provides evaluation tools for LLM applications, particularly for RAG pipelines and feedback systems.

Standout Capabilities

- Evaluation metrics

- Feedback tracking

- RAG evaluation tools

- Open-source flexibility

- Experiment tracking

AI-Specific Depth

- Model support: Multi-model

- RAG / knowledge integration: Strong

- Evaluation: Strong

- Guardrails: N/A

- Observability: Moderate

Pros

- Strong evaluation capabilities

- RAG-focused

- Open-source

Cons

- Limited observability

- No guardrails

- Smaller ecosystem

Security & Compliance

Not publicly stated

Deployment & Platforms

Cloud / Self-hosted

Integrations & Ecosystem

- APIs

- SDKs

- RAG frameworks

- Feedback tools

- AI pipelines

Pricing Model

Open-source

Best-Fit Scenarios

- RAG evaluation

- Research projects

- Feedback loops

#9 — DeepEval

One-line verdict: Best for automated testing and benchmarking of LLM applications in development workflows.

Short description:

DeepEval focuses on evaluating LLM outputs using automated testing and benchmarking techniques.

Standout Capabilities

- Automated evaluation tests

- Benchmarking tools

- CI/CD integration

- Testing workflows

- Developer-focused design

AI-Specific Depth

- Model support: Multi-model

- RAG / knowledge integration: Supported

- Evaluation: Strong

- Guardrails: N/A

- Observability: Basic

Pros

- Strong testing tools

- Developer-friendly

- Automation support

Cons

- Limited observability

- No guardrails

- Early-stage ecosystem

Security & Compliance

Not publicly stated

Deployment & Platforms

Varies / N/A

Integrations & Ecosystem

- APIs

- CI/CD systems

- Testing frameworks

- Developer tools

- AI pipelines

Pricing Model

Open-source / tiered

Best-Fit Scenarios

- Testing pipelines

- CI/CD integration

- Benchmarking

#10 — Galileo AI

One-line verdict: Best for end-to-end LLM observability with enterprise-focused evaluation and debugging tools.

Short description:

Galileo AI provides monitoring, evaluation, and debugging tools for LLM systems with a focus on enterprise use cases.

Standout Capabilities

- End-to-end observability

- Evaluation pipelines

- Debugging tools

- Performance monitoring

- Enterprise dashboards

AI-Specific Depth

- Model support: Multi-model

- RAG / knowledge integration: Supported

- Evaluation: Strong

- Guardrails: Moderate

- Observability: Strong

Pros

- Full observability stack

- Strong evaluation features

- Enterprise capabilities

Cons

- Smaller ecosystem

- Pricing not transparent

- Less community adoption

Security & Compliance

Not publicly stated

Deployment & Platforms

Cloud

Integrations & Ecosystem

- APIs

- SDKs

- Monitoring tools

- AI pipelines

- Analytics systems

Pricing Model

Not publicly stated

Best-Fit Scenarios

- Enterprise monitoring

- Debugging workflows

- Evaluation pipelines

Comparison Table (Top 10)

| Tool Name | Best For | Deployment | Model Flexibility | Strength | Watch-Out | Public Rating |

|---|---|---|---|---|---|---|

| LangSmith | Developers | Cloud | Multi-model | Deep tracing | LangChain dependency | N/A |

| W&B | ML teams | Hybrid | Multi-model | Experiment tracking | Complexity | N/A |

| Arize AI | Enterprise | Cloud | Multi-model | Monitoring | Learning curve | N/A |

| Humanloop | Prompt ops | Cloud | Multi-model | Feedback loops | Limited observability | N/A |

| Helicone | Startups | Hybrid | Multi-model | Cost tracking | Basic features | N/A |

| PromptLayer | Early-stage | Cloud | Multi-model | Simplicity | Limited depth | N/A |

| WhyLabs | Enterprise | Cloud | Multi-model | Data monitoring | Setup complexity | N/A |

| TruLens | RAG systems | Hybrid | Multi-model | Evaluation | Limited observability | N/A |

| DeepEval | Developers | N/A | Multi-model | Testing | Early-stage | N/A |

| Galileo AI | Enterprise | Cloud | Multi-model | Observability | Smaller ecosystem | N/A |

Scoring & Evaluation (Transparent Rubric)

The following scores are comparative and reflect how each platform performs across key LLMOps capabilities. These are not absolute ratings but a structured way to evaluate trade-offs based on features, usability, and enterprise readiness.

| Tool | Core | Reliability/Eval | Guardrails | Integrations | Ease | Perf/Cost | Security/Admin | Support | Weighted Total |

|---|---|---|---|---|---|---|---|---|---|

| LangSmith | 9 | 8 | 6 | 9 | 8 | 7 | 7 | 8 | 8.0 |

| W&B | 8 | 9 | 5 | 8 | 7 | 7 | 8 | 9 | 7.9 |

| Arize AI | 8 | 8 | 6 | 8 | 7 | 7 | 8 | 8 | 7.8 |

| Humanloop | 7 | 8 | 5 | 7 | 8 | 7 | 7 | 7 | 7.3 |

| Helicone | 6 | 6 | 4 | 7 | 9 | 8 | 6 | 7 | 6.9 |

| PromptLayer | 6 | 6 | 4 | 6 | 9 | 7 | 6 | 6 | 6.6 |

| WhyLabs | 8 | 8 | 6 | 7 | 6 | 7 | 8 | 7 | 7.5 |

| TruLens | 7 | 8 | 4 | 6 | 7 | 7 | 6 | 6 | 6.9 |

| DeepEval | 7 | 9 | 4 | 6 | 7 | 7 | 6 | 6 | 7.1 |

| Galileo AI | 8 | 8 | 7 | 7 | 7 | 7 | 8 | 7 | 7.7 |

Top 3 for Enterprise: Arize AI, WhyLabs, Galileo AI

Top 3 for SMB: LangSmith, Humanloop, Helicone

Top 3 for Developers: LangSmith, DeepEval, TruLens

Which LLMOps Platform Is Right for You?

Solo / Freelancer

Choose Helicone or PromptLayer for simplicity and fast setup. These tools provide basic observability without heavy infrastructure requirements.

SMB

LangSmith and Humanloop offer a strong balance between usability and advanced capabilities, making them suitable for growing teams.

Mid-Market

Arize AI and WhyLabs provide better monitoring, evaluation, and scalability for teams managing production workloads.

Enterprise

Galileo AI, Arize AI, and WhyLabs deliver full observability, governance, and reliability needed for large-scale deployments.

Regulated industries (finance/healthcare/public sector)

WhyLabs and Arize AI are strong choices due to their focus on monitoring, reliability, and compliance-oriented features.

Budget vs premium

- Budget: Helicone, TruLens, DeepEval

- Premium: Arize AI, Galileo AI, WhyLabs

Build vs buy (when to DIY)

Build your own stack if you need full control and have strong ML engineering resources. Otherwise, LLMOps platforms significantly reduce complexity and accelerate deployment.

Implementation Playbook (30 / 60 / 90 Days)

30 Days

- Identify high-impact AI use cases

- Define success metrics (accuracy, latency, cost)

- Set up logging and observability

- Create initial evaluation datasets

- Prototype prompt workflows

60 Days

- Implement evaluation pipelines

- Add guardrails and safety checks

- Integrate cost monitoring and alerts

- Introduce prompt version control

- Roll out to a limited user group

90 Days

- Optimize latency and cost efficiency

- Expand monitoring and observability

- Add governance and audit logs

- Scale across teams and workflows

- Establish incident response processes

Common Mistakes & How to Avoid Them

- Ignoring prompt injection risks

- Not implementing evaluation frameworks

- Poor data retention and privacy handling

- Lack of observability into LLM behavior

- Unexpected cost overruns due to poor tracking

- Over-automation without human validation

- Vendor lock-in without abstraction layers

- No prompt version control

- Weak or missing guardrails

- No monitoring of hallucinations

- Ignoring latency and performance issues

- Poor integration planning

- Lack of incident response strategy

FAQs

What is LLMOps?

LLMOps is the practice of managing, monitoring, and optimizing applications powered by large language models.

Do I need LLMOps for small projects?

Not necessarily. Basic logging may be sufficient for simple or experimental use cases.

What is evaluation in LLMOps?

Evaluation involves testing outputs for accuracy, consistency, and reliability using structured datasets and metrics.

Are these platforms expensive?

Pricing varies. Many tools offer usage-based, freemium, or enterprise pricing models.

Can I use multiple models?

Yes, most LLMOps platforms support multi-model workflows.

What are guardrails?

Guardrails are mechanisms that prevent unsafe, biased, or incorrect outputs.

Is self-hosting possible?

Some platforms support self-hosting or hybrid deployments, while others are cloud-only.

How do I reduce hallucinations?

Use evaluation frameworks, RAG systems, and guardrails to improve reliability.

What is observability?

Observability refers to tracking logs, metrics, and traces of LLM behavior in production.

Can I switch platforms later?

Yes, but using abstraction layers can make switching easier.

Are open-source tools viable?

Yes, especially for teams prioritizing flexibility and cost control.

Do I need RAG support?

If your application relies on external or proprietary data, RAG support is important.

Conclusion

LLMOps platforms have become essential for building reliable, scalable, and efficient AI systems. They provide the structure needed to manage complexity, reduce risk, and optimize performance across the entire lifecycle of LLM applications.

There is no single “best” platform. The right choice depends on your team size, technical maturity, and specific use case—whether it’s debugging workflows, monitoring production systems, or optimizing costs.

Next steps:

- Shortlist two to three platforms based on your requirements

- Run a pilot using real-world workloads

- Validate evaluation, security, and cost controls before scaling